Multiprocessors

Multiprocessors. Politecnico di Milano SeminarRoom , Bld 20 30 November , 2017. Antonio Miele Marco Santambrogio Politecnico di Milano. Outline. Multiprocessors Flynn taxonomy SIMD architectures Vector architectures MIMD architectures A real life example What ’ s next.

Multiprocessors

E N D

Presentation Transcript

Multiprocessors Politecnico di Milano SeminarRoom, Bld 20 30 November, 2017 Antonio Miele Marco Santambrogio Politecnico di Milano

Outline • Multiprocessors • Flynn taxonomy • SIMD architectures • Vector architectures • MIMD architectures • A real life example • What’s next

Supercomputers Definition of a supercomputer: • Fastest machine in world at given task • A device to turn a compute-bound problem into an I/O bound problem • Any machine costing $30M+ • Any machine designed by Seymour Cray CDC6600 (Cray, 1964) regarded as first supercomputer

The Cray XD1 example • The XD1 uses AMD Opteron 64-bit CPUs • and it incorporates Xilinx Virtex-II FPGAs • Performance gains from FPGA • RC5 Cipher Breaking • 1000x faster than 2.4 GHz P4 • Elliptic Curve Cryptography • 895-1300x faster than 1 GHz P3 • Vehicular Traffic Simulation • 300x faster on XC2V6000 than 1.7 GHz Xeon • 650xfaster on XC2VP100 than 1.7 GHz Xeon • Smith Waterman DNA matching • 28x faster than 2.4 GHz Opteron

Supercomputer Applications • Typical application areas • Military research (nuclear weapons, cryptography) • Scientific research • Weather forecasting • Oil exploration • Industrial design (car crash simulation) • All involve huge computations on large data sets • In 70s-80s, Supercomputer Vector Machine

Parallel Architectures • Definition: “A parallel computer is a collection of processing elements that cooperates and communicate to solve large problems fast” • Almasi and Gottlieb, Highly Parallel Computing, 1989 • The aim is to replicate processors to add performance vs design a faster processor. • Parallel architecture extends traditional computer architecture with a communication architecture • abstractions (HW/SW interface) • different structures to realize abstraction efficiently

Beyond ILP • ILP architectures (superscalar, VLIW...): • Support fine-grained, instruction-level parallelism; • Fail to support large-scale parallel systems; • Multiple-issue CPUs are very complex, and returns (as far as extracting greater parallelism) are diminishing extracting parallelism at higher levels becomes more and more attractive. • A further step: process- and thread-level parallel architectures. • To achieve ever greater performance:connect multiple microprocessors in a complex system.

Beyond ILP • Most recent microprocessor chips are multiprocessor on-chip: Intel i5, i7, IBM Power 8, Sun Niagara • Major difficulty in exploiting parallelism in multiprocessors: suitable software being (at least partially) overcome, in particular, for servers and for embedded applications which exhibit natural parallelism without the need of rewriting large software chunks

Flynn Taxonomy (1966) • SISD - Single Instruction Single Data • Uniprocessor systems • MISD - Multiple Instruction Single Data • No practical configuration and no commercial systems • SIMD - Single Instruction Multiple Data • Simple programming model, low overhead, flexibility, custom integrated circuits • MIMD - Multiple Instruction Multiple Data • Scalable, fault tolerant, off-the-shelf micros

SISD A serial (non-parallel) computer Single instruction: only one instruction stream is being acted on by the CPU during any one clock cycle Single data: only one data stream is being used as input during any one clock cycle Deterministic execution This is the oldest and even today, the most common type of computer

SIMD A type of parallel computer Single instruction: all processing units execute the same instruction at any given clock cycle Multiple data: each processing unit can operate on a different data element Best suited for specialized problems characterized by a high degree of regularity, such as graphics/image processing

MISD A single data stream is fed into multiple processing units. Each processing unit operates on the data independently via independent instruction streams.

MIMD Nowadays, the most common type of parallel computer Multiple Instruction: every processor may be executing a different instruction stream Multiple Data: every processor may be working with a different data stream Execution can be synchronous or asynchronous, deterministic or non-deterministic

Which kind of multiprocessors? Many of the early multiprocessors were SIMD – SIMD model received great attention in the ’80’s, today is applied only in very specific instances (vector processors, multimedia instructions); MIMD has emerged as architecture of choice for general-purpose multiprocessors Lets see these architectures more in details..

SIMD - Single Instruction Multiple Data Same instruction executed by multiple processors using different data streams. Each processor has its own data memory. Single instruction memory and control processor to fetch and dispatch instructions Processors are typically special-purpose. Simple programming model.

SIMDArchitecture PE PE PE PE PE PE PE Mem Mem Mem Mem Mem Mem Mem Array Controller Inter-PE Connection Network PE Control Mem Data • Only requires one controller for whole array • Only requires storage for one copy of program • All computations fully synchronized Central controller broadcasts instructions to multiple processing elements (PEs)

Alternative Model: Vector Processing SCALAR (1 operation) VECTOR (N operations) v2 v1 r2 r1 + + v3 r3 vector length add.vv v3, v1, v2 add r3, r1, r2 Vector processors have high-level operations that work on linear arrays of numbers: "vectors" 25

Vector Supercomputers Epitomized by Cray-1, 1976: Scalar Unit + Vector Extensions • Load/Store Architecture • Vector Registers • Vector Instructions • Hardwired Control • Highly Pipelined Functional Units • Interleaved Memory System • No Data Caches • No Virtual Memory

Vector programming model Scalar Registers Vector Registers v15 r15 r0 v0 [0] [1] [2] [VLRMAX-1] Vector Length Register • VLR v1 Vector Arithmetic Instructions ADDV v3, v1, v2 v2 v3 + + + + + + [0] [1] [VLR-1] Vector Load and Store Instructions LV v1, r1, r2 Vector Register v1 Memory Base, r1 Stride, r2

Vector Arithmetic Execution • Use deep pipeline (=> fast clock) to execute element operations • Simplifies control of deep pipeline because elements in vector are independent (=> no hazards!) V1 V2 V3 Six stage multiply pipeline V3 <- v1 * v2

Vector Instruction Execution Execution using one pipelined functional unit Execution using four pipelined functional units A[24] A[27] A[25] A[26] A[6] B[24] B[26] B[25] B[27] B[6] A[20] A[23] A[21] A[22] A[5] B[20] B[22] B[23] B[21] B[5] A[18] A[17] A[16] A[19] A[4] B[18] B[19] B[17] B[16] B[4] A[15] A[13] A[12] A[14] A[3] B[15] B[14] B[13] B[12] B[3] C[11] C[10] C[8] C[2] C[9] C[7] C[1] C[4] C[6] C[5] C[3] C[2] C[1] C[0] C[0] ADDV C,A,B

Vector Unit Structure Functional Unit Lane Vector Registers Elements 0, 4, 8, … Elements 1, 5, 9, … Elements 2, 6, 10, … Elements 3, 7, 11, … Memory Subsystem

T0 Vector Microprocessor (UCB/ICSI, 1995) Vector register elements striped over lanes [24] [25] [26] [27] [28] [29] [30] [31] [16] [17] [18] [19] [20] [21] [22] [23] [8] [9] [10] [11] [12] [13] [14] [15] [0] [1] [2] [3] [4] [5] [6] [7] Lane

Vector Applications Limited to scientific computing? • Multimedia Processing (compress., graphics, audio synth, image proc.) • Standard benchmark kernels (Matrix Multiply, FFT, Convolution, Sort) • Lossy Compression (JPEG, MPEG video and audio) • Lossless Compression (Zero removal, RLE, Differencing, LZW) • Cryptography (RSA, DES/IDEA, SHA/MD5) • Speech and handwriting recognition • Operating systems/Networking (memcpy, memset, parity, checksum) • Databases (hash/join, data mining, image/video serving) • Language run-time support (stdlib, garbage collection) • even SPECint95

MIMD - Multiple Instruction Multiple Data • Each processor fetches its own instructions and operates on its own data. • Processors are often off-the-shelf microprocessors. • Scalable to a variable number of processor nodes. • Flexible: • single-user machines focusing on high-performance for one specific application, • multi-programmed machines running many tasks simultaneously, • some combination of these functions. • Cost/performance advantages due to the use of off-the-shelf microprocessors. • Fault tolerance issues.

Why MIMD? MIMDs are flexible – they can function as single-user machines for high performances on one application, as multiprogrammed multiprocessors running many tasks simultaneously, or as some combination of such functions; Can be built starting from standard CPUs (such is the present case nearly for all multiprocessors!).

MIMD • To exploit a MIMD with n processors • at least n threads or processes to execute • independent threads typically identified by the programmer or created by the compiler. • Parallelism is contained in the threads • thread-level parallelism. • Thread: from a large, independent process to parallel iterations of a loop. Important: parallelism is identified by the software (not by hardware as in superscalar CPUs!)... keep this in mind, we'll use it!

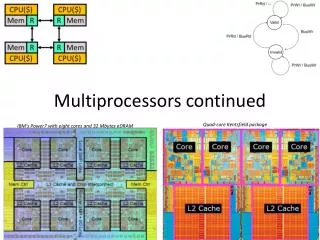

MIMD Machines Existing MIMD machines fall into 2 classes, depending on the number of processors involved, which in turn dictates a memory organization and interconnection strategy. Centralized shared-memory architectures • at most few dozen processor chips (< 100 cores) • Large caches, single memory multiple banks • Often called symmetric multiprocessors (SMP) and the style of architecture called Uniform Memory Access (UMA) Distributed memory architectures • To support large processor counts • Requires high-bandwidth interconnect • Disadvantage: data communication among processors

Key issues to design multiprocessors How many processors? How powerful are processors? How do parallel processors share data? Where to place the physical memory? How do parallel processors cooperate and coordinate? What type of interconnection topology? How to program processors? How to maintain cache coherency? How to maintain memory consistency? How to evaluate system performance?

One core • A 64-bit Power Architecture core • Two-issue superscalar execution • Two-way multithreaded core • In-order execution • Cache • 32 KB instruction and a 32 KB data Level 1 cache • 512 KB Level 2 cache • The size of a cache line is 128 bytes • One core to rule them all

Cell: PS3 • Cell is a heterogeneous chip multiprocessor • One 64-bit Power core • 8 specialized co-processors • based on a novel single-instruction multiple-data (SIMD) architecture called SPU (Synergistic Processor Unit)

Xenon: XBOX360 • Three symmetrical cores • each two way SMT-capable and clocked at 3.2 GHz • SIMD: VMX128 extension for each core • 1 MB L2 cache (lockable by the GPU) running at half-speed (1.6 GHz) with a 256-bit bus

Microsoft vision • Microsoft envisions a procedurally rendered game as having at least two primary components: • Host thread: a game's host thread will contain the main thread of execution for the game • Data generation thread: where the actual procedural synthesis of object geometry takes place • These two threads could run on the same PPE, or they could run on two separate PPEs. • In addition to the these two threads, the game could make use of separate threads for handling physics, artificial intelligence, player input, etc.

Questions RISK more than others think is safe, CARE more than others think is wise, DREAM more than other think is practical, EXPECT more than others think is possible cadel maxim