Computer Cluster

Computer Cluster. Course at the University of Applied Sciences - FH München, Prof. Dr. Christian Vogt. Contents. TBD. Selected Literature. Gregory Pfister: In Search of Clusters, 2nd ed., Pearson 1998




Computer Cluster. Course at the University of Applied Sciences - FH München, Prof. Dr. Christian Vogt

Contents • TBD

Selected Literature • Gregory Pfister: In Search of Clusters, 2nd ed., Pearson 1998 • Documentation for the Windows Server 2008 Failover Cluster (on the Microsoft web pages) • Sven Ahnert: Virtuelle Maschinen mit VMware und Microsoft, 2nd ed., Addison-Wesley 2007 (the 3rd edition is announced for June 26, 2009).

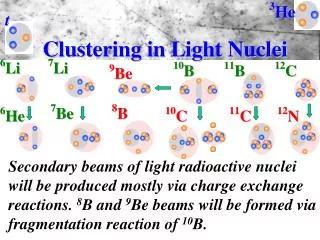

What is a Cluster? • Wikipedia says: A computer cluster is a group of linked computers, working together closely so that in many respects they form a single computer. • Gregory Pfister says: A cluster is a type of parallel or distributed system that: • consists of a collection of interconnected whole computers, • and is utilized as a single, unified computing resource.

Features (Goals) of Clusters • High Performance Computing • Load Balancing • High Availability • Scalability • Simplified System Management • Single System Image

Basic Types of Clusters • High Performance Computing (HPC) Clusters • Load Balancing Clusters (aka Server Farms) • High-Availability Clusters (aka Failover Clusters)

Selected HA Cluster Products (1) • VMScluster (DEC 1984, today: HP) • Shared-everything cluster with up to 96 nodes. • IBM HACMP (High Availability Cluster Multiprocessing, 1991) • Up to 32 nodes (IBM System p with AIX or Linux). • IBM Parallel Sysplex (1994) • Shared everything, up to 32 nodes (mainframes with z/OS). • Solaris Cluster, aka Sun Cluster • Up to 16 nodes.

Selected HA Cluster Products (2) • Heartbeat (HA Linux project, started in 1997) • No architectural limit for the number of nodes. • Red Hat Cluster Suite • Up to 128 nodes. • Uses a Distributed Lock Manager (DLM). • Windows Server 2008 Failover Cluster • Formerly: Microsoft Cluster Server (MSCS, since 1997). • Up to 16 nodes on x64 (8 nodes on x86). • Oracle Real Application Clusters (RAC) • Two or more computers, each running an instance of the Oracle Database, concurrently access a single database. • Up to 100 nodes.

Cluster with Virtual Machines (1) • One physical machine as hot standby for several physical machines. [Figure: physical / virtual / cluster]

Cluster with Virtual Machines (2) • Consolidation of several clusters. [Figure: physical / virtual / cluster]

Cluster with Virtual Machines (3) • Clustering hosts (failing over whole VMs). [Figure: physical / virtual / cluster]

iSCSI • Internet Small Computer Systems Interface • is a storage area network (SAN) protocol, • carries SCSI commands over IP networks (LAN, WAN, Internet), • is an alternative to Fibre Channel (FC), using an existing network infrastructure. • An iSCSI client is called an iSCSI Initiator. • An iSCSI server is called an iSCSI Target.

iSCSI Initiator • An iSCSI initiator initiates a SCSI session, i.e. sends a SCSI command to the target. • A Hardware Initiator (host bus adapter, HBA) • handles the iSCSI and TCP processing and Ethernet interrupts independently of the CPU. • A Software Initiator • runs as a memory-resident device driver, • uses an existing network card, • leaves all protocol handling to the main CPU.

iSCSI Target • An iSCSI target • waits for iSCSI initiators' commands, • provides the required input/output data transfers. • Hardware Target: A storage array (SAN) may offer its disks via the iSCSI protocol. • A Software Target: • offers (parts of) the local disks to iSCSI initiators, • uses an existing network card, • leaves all protocol handling to the main CPU.

Logical Unit Number (LUN) • A Logical Unit Number (LUN) • is the unit offered by iSCSI targets to iSCSI initiators, • represents an individually addressable SCSI device, • appears to an initiator like a locally attached device, • may physically reside on a non-SCSI device, and/or be part of a RAID set, • may restrict access to a single initiator, • may be shared between several initiators (leaving the handling of access conflicts to the file system or operating system, or to some cluster software). Attention: many iSCSI target solutions do not offer this functionality.
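To illustrate the last two points (exclusive versus shared LUNs), here is a minimal Python sketch of LUN masking as a software target might keep it. The `Lun` class, the `visible_luns` helper and the initiator names (IQNs) are hypothetical, chosen for illustration only; real targets store this mapping in their own configuration formats.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Lun:
    """A LUN as exported by a hypothetical software iSCSI target."""
    number: int
    size_gib: int
    # IQNs of the initiators allowed to see this LUN: one entry means
    # exclusive access, several entries mean the LUN is shared.
    allowed_initiators: set[str] = field(default_factory=set)

    @property
    def shared(self) -> bool:
        return len(self.allowed_initiators) > 1


def visible_luns(luns: list[Lun], initiator: str) -> list[int]:
    """LUN numbers that the given initiator may access (LUN masking)."""
    return [lun.number for lun in luns if initiator in lun.allowed_initiators]


# Hypothetical initiator names for two cluster nodes.
NODE1 = "iqn.2009-01.com.example:node1"
NODE2 = "iqn.2009-01.com.example:node2"

luns = [
    Lun(0, 20, {NODE1}),           # boot LUN, exclusive to node 1
    Lun(1, 100, {NODE1, NODE2}),   # shared cluster disk
]
print(visible_luns(luns, NODE2))   # [1]
```

Note that the sketch only decides who may see a LUN; nothing in it arbitrates concurrent writes to the shared LUN 1, which is exactly the job left to the file system, operating system or cluster software.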

CHAP Protocol • iSCSI • optionally uses the Challenge-Handshake Authentication Protocol (CHAP) for authentication of initiators to the target, • does not provide cryptographic protection for the data transferred. • CHAP • uses a three-way handshake, • bases the verification on a shared secret, which must be known to both the initiator and the target.
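The three-way handshake can be sketched in Python, following the MD5 variant of CHAP defined in RFC 1994: the response is the MD5 hash of the one-octet identifier, the shared secret and the challenge, concatenated in that order. The secret value below is a hypothetical example, and the iSCSI login-phase encoding of these values is not modelled.

```python
import hashlib
import hmac
import os


def chap_response(identifier: int, secret: bytes, challenge: bytes) -> bytes:
    """Response = MD5(identifier octet || shared secret || challenge), per RFC 1994."""
    return hashlib.md5(bytes([identifier]) + secret + challenge).digest()


# Step 1: the target sends an identifier and a random challenge.
identifier = 1
challenge = os.urandom(16)

# Step 2: the initiator answers with the hash; the secret itself never crosses the wire.
secret = b"example-shared-secret"   # hypothetical shared secret
response = chap_response(identifier, secret, challenge)

# Step 3: the target recomputes the hash with its own copy of the secret and compares.
expected = chap_response(identifier, secret, challenge)
print("authenticated:", hmac.compare_digest(response, expected))
```

Only the initiator's knowledge of the secret is verified; the SCSI data that follows still travels unencrypted.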

Preparing a Failover Cluster • In order to build a Windows Server 2008 Failover Cluster you need to: • Install the Failover Clustering feature (in Server Manager). • Connect networks and storage: • Public network • Heartbeat network • Storage network (FC or iSCSI, unless you use SAS) • Validate the hardware configuration (Cluster Validation Wizard in the Failover Cluster Management snap-in).

Preparing the Shared Storage • All disks on a shared storage bus are automatically placed in an offline state when first mapped to a cluster node. This allows storage to be simultaneously mapped to all nodes in a cluster even before the cluster is created. No longer do nodes have to be booted one at a time, disks prepared on one and then the node shut down, another node booted, the disk configuration verified, and so on.

The Cluster Validation Wizard • Run the Cluster Validation Wizard (in Failover Cluster Management). • Adjust your configuration until the wizard does not report any errors. • An error-free cluster validation is a prerequisite for obtaining Microsoft support for your cluster installation. • A full run of the wizard consists of the following tests: • System Configuration • Inventory • Network • Storage

Initial Creation of a Windows Server 2008 Failover Cluster • Use the Create Cluster Wizard (in Failover Cluster Management) to create the cluster. You will have to specify • which servers are to be part of the cluster, • a name for the cluster, • an IP address for the cluster. • Other parameters will be chosen automatically, and can be changed later.

Fencing • (Node) Fencing is the act of forcefully disabling a cluster node (or at least keeping it from doing disk I/O: Disk Fencing). • The decision when a node needs to be fenced is taken by the cluster software. • Some ways in which a node can be fenced are • by disabling its port(s) on a Fibre Channel switch, • by (remotely) powering down the node, • by using SCSI-3 Persistent Reservations.

SAN Fabric Fencing • Some Fibre Channel switches allow programs to fence a node by disabling the switch port(s) that it is connected to.

STONITH • "Shoot the other node in the head". • A special STONITH device (a network power switch) allows a cluster node to power down other cluster nodes. • Used, for example, in Heartbeat, the Linux HA project.

SCSI-3 Persistent Reservation • Allows multiple (registered) nodes to access a SCSI device. • Blocks other (unregistered) nodes from accessing the device. • Supports multiple paths from host to disk. • Reservations are persistent across SCSI bus resets and node reboots. • Uses reservations and registrations. • To eject another system's registration, a node issues a PREEMPT AND ABORT command.
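The interplay of registrations, reservations and preemption can be illustrated with a toy Python model. It is a simplified sketch, not the SCSI command set: it assumes the reservation type "Write Exclusive, Registrants Only" (every registered node may write), uses node names as reservation keys, and does not model persistence across resets or the aborting of the victim's outstanding commands.

```python
from __future__ import annotations


class PersistentReservationDisk:
    """Toy model of SCSI-3 persistent reservations, not the real command set.

    Assumes the reservation type "Write Exclusive, Registrants Only":
    every registered node may write, unregistered nodes are blocked.
    """

    def __init__(self) -> None:
        self.registrations: set[str] = set()   # registered reservation keys
        self.holder: str | None = None         # key holding the reservation

    def register(self, key: str) -> None:
        self.registrations.add(key)

    def reserve(self, key: str) -> bool:
        # Only a registered node may take the reservation, and only if it is free.
        if key in self.registrations and self.holder in (None, key):
            self.holder = key
            return True
        return False

    def can_write(self, key: str) -> bool:
        return key in self.registrations

    def preempt_and_abort(self, key: str, victim: str) -> bool:
        # A registered node removes another node's registration (fencing it)
        # and takes over the reservation if the victim held it.
        if key not in self.registrations or victim not in self.registrations:
            return False
        self.registrations.discard(victim)
        if self.holder == victim:
            self.holder = key
        return True


disk = PersistentReservationDisk()
disk.register("node1")
disk.register("node2")
disk.reserve("node1")                        # node1 owns the disk
disk.preempt_and_abort("node2", "node1")     # node2 fences node1
print(disk.holder, disk.can_write("node1"))  # node2 False
```

After the preemption, node1 can no longer write to the disk even if it is still running, which is what makes this a form of disk fencing.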

Fencing in Failover Cluster • Windows Server 2008 Failover Cluster uses SCSI-3 Persistent Reservations. • All shared storage solutions (e.g. iSCSI targets) used in the cluster must use SCSI-3 commands, and in particular support persistent reservations. (Many open source iSCSI targets do not fulfill this requirement, e.g. OpenFiler or the FreeNAS target.)

A Cluster Validation Error • The Cluster Validation Wizard may report the following error:

Cluster Partitioning (Split-Brain) • Cluster Partitioning (Split-Brain) is the situation when the cluster nodes break up into groups which can communicate within their groups, and with the shared storage, but not between groups. • Cluster partitioning can lead to serious problems, including data corruption on the shared disks.

Quorum Schemes • Cluster partitioning can be avoided by using a Quorum Scheme: • A group of nodes is only allowed to run as a cluster when it has quorum. • Quorum consists of a majority of votes. • Votes can be contributed by • Nodes • Disks • File Shares, each of which can provide one or more votes.
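The majority rule can be written as a one-line check. In this Python sketch the voter names and vote counts are hypothetical; it assumes that a disk's vote counts only for the group that actually owns the disk. Because two disjoint groups cannot both hold more than half of all votes, at most one partition is allowed to keep running.

```python
from __future__ import annotations


def has_quorum(votes: dict[str, int], reachable: set[str]) -> bool:
    """True if the reachable voters hold a strict majority of all configured votes."""
    total = sum(votes.values())
    mine = sum(v for voter, v in votes.items() if voter in reachable)
    return 2 * mine > total


# Hypothetical 4-node cluster plus one disk vote, split by a network failure.
votes = {"node1": 1, "node2": 1, "node3": 1, "node4": 1, "disk": 1}
left = {"node1", "node2", "disk"}    # this group still owns the shared disk
right = {"node3", "node4"}
print(has_quorum(votes, left), has_quorum(votes, right))   # True False
```

Without the disk vote, the same 2 : 2 split would leave neither half with quorum, so both halves would stop rather than risk corrupting the shared disks.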

Votes in Failover Cluster • In Windows Server 2008 Failover Cluster, votes can be contributed by • a node, • a disk (called the witness disk), • a file share, each of which provides exactly one vote. • A Witness Disk or File Share contains the cluster registry hive in the \Cluster directory. (The same information is also stored on each of the cluster nodes but may be out of date.)

Quorum Schemes in Windows Server 2008 Failover Cluster (1) • Windows Server 2008 Failover Cluster can use any of four different Quorum Schemes: • Node Majority • Recommended for a cluster with an odd number of nodes. • Node and Disk Majority • Recommended for a cluster with an even number of nodes.

Quorum Schemes in Windows Server 2008 Failover Cluster (2) • Node and File Share Majority • Recommended for a multi-site cluster with an even number of nodes. • No Majority: Disk Only • A group of nodes may run as a cluster if they have access to the witness disk. • The witness disk is a single point of failure. • Not recommended. (Only for backward compatibility with Windows Server 2003.)
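The odd/even recommendations follow from simple vote arithmetic. This Python sketch counts how many node failures each scheme tolerates, assuming one vote per node and per witness (as stated for Windows Server 2008) and assuming the witness itself stays available.

```python
def failures_tolerated(scheme: str, nodes: int) -> int:
    """Node failures a cluster survives under each quorum scheme.

    Assumes one vote per node and per witness, and that the witness itself
    stays available.
    """
    if scheme == "No Majority: Disk Only":
        return nodes - 1          # one node with access to the witness disk suffices
    if scheme == "Node Majority":
        witness_votes = 0
    elif scheme in ("Node and Disk Majority", "Node and File Share Majority"):
        witness_votes = 1
    else:
        raise ValueError(f"unknown scheme: {scheme}")
    total = nodes + witness_votes
    needed = total // 2 + 1                   # strict majority of all votes
    return nodes - (needed - witness_votes)   # nodes that may be lost


for n in (2, 3, 4, 5):
    print(n, failures_tolerated("Node Majority", n),
          failures_tolerated("Node and Disk Majority", n))
```

The loop prints `2 0 1`, `3 1 1`, `4 1 2` and `5 2 2`: a witness gains one tolerated failure for an even number of nodes but nothing for an odd number, matching the recommendations above.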

Failover Cluster Terminology • Resources • Groups • Services and Applications • Dependencies • Failover • Failback • Looks-Alive ("Basic resource health check", default interval: 5 sec.) • Is-Alive ("Thorough resource health check", default interval: 1 min.)
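The two health checks trade detection speed for cost. The following Python sketch simulates a resource monitor on a one-second clock using the default intervals from the slide. The check callables are hypothetical stand-ins for a resource's real checks, and the rule that a failed Looks-Alive immediately triggers an Is-Alive check is an assumption of this sketch.

```python
from typing import Callable, Optional

LOOKS_ALIVE_S = 5   # default Looks-Alive interval (basic check)
IS_ALIVE_S = 60     # default Is-Alive interval (thorough check)


def monitor(looks_alive: Callable[[int], bool],
            is_alive: Callable[[int], bool],
            duration_s: int) -> Optional[int]:
    """Return the second at which the resource is declared failed, or None."""
    for t in range(1, duration_s + 1):
        thorough_due = t % IS_ALIVE_S == 0
        if t % LOOKS_ALIVE_S == 0 and not looks_alive(t):
            thorough_due = True   # assumption: a failed basic check escalates
        if thorough_due and not is_alive(t):
            return t
    return None


# Hypothetical service that hangs at t = 42 s.
print(monitor(lambda t: t < 42, lambda t: t < 42, 120))   # 45: basic check notices
print(monitor(lambda t: True, lambda t: t < 42, 120))     # 60: only the thorough check notices
```

A fault visible to the cheap check is detected within about 5 seconds; a fault only the thorough check can see may go unnoticed for up to a minute.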

Services and Applications • DFS Namespace Server • DHCP Server • Distributed Transaction Coordinator (DTC) • File Server • Generic Application • Generic Script • Generic Service • Internet Storage Name Service (ISNS) Server • Message Queuing • Other Server • Print Server • Virtual Machine (Hyper-V) • WINS Server

Properties of Services and Applications • General: • Name • Preferred Owner(s) (must be specified if failback is desired) • Failover: • Period (Default: 6 hours): Number of hours in which the Failover Threshold must not be exceeded. • Threshold (Default: 2 [?, 2 for File Server]): Maximum number of times to attempt a restart or failover in the specified period. When this number is exceeded, the application is left in the failed state. • Failback: • Prevent failback (Default) • Allow failback • Immediately • Failback between (specify range of hours of the day)
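The Period/Threshold rule and the failback window can be sketched in Python. This is an illustration under stated assumptions, not the cluster service's implementation: it uses the slide's defaults (threshold 2, period 6 hours), treats the period as a sliding window, decides each failure independently (it does not keep the group failed once the threshold is hit), and assumes the end hour of the failback window is exclusive.

```python
from __future__ import annotations

from collections import deque


class FailoverPolicy:
    """Sketch of the Period/Threshold rule for one service or application."""

    def __init__(self, threshold: int = 2, period_hours: float = 6.0) -> None:
        self.threshold = threshold
        self.period_s = period_hours * 3600
        self.attempts: deque[float] = deque()   # times (s) of recent attempts

    def on_failure(self, now_s: float) -> str:
        # Drop attempts that have slid out of the period window.
        while self.attempts and now_s - self.attempts[0] >= self.period_s:
            self.attempts.popleft()
        if len(self.attempts) >= self.threshold:
            return "leave in failed state"
        self.attempts.append(now_s)
        return "restart or fail over"


def failback_allowed(hour: int, start: int, end: int) -> bool:
    """'Failback between start and end' (hours 0-23); the window may wrap midnight."""
    if start <= end:
        return start <= hour < end
    return hour >= start or hour < end


policy = FailoverPolicy()   # defaults from the slide: threshold 2, period 6 h
print([policy.on_failure(t * 3600) for t in (0, 1, 2)])
print(failback_allowed(23, 22, 6), failback_allowed(12, 22, 6))
```

The first line prints `['restart or fail over', 'restart or fail over', 'leave in failed state']`: the third failure within six hours exceeds the threshold. The second prints `True False`, since a nightly window from 22:00 to 06:00 covers 23:00 but not noon.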

Resource Types • In addition to all services and applications mentioned before: • File Share Quorum Witness • IP Address • IPv6 Address • IPv6 Tunnel Address • MSMQ Triggers • Network Name • NFS Share • Physical Disk • Volume Shadow Copy Service Task