Advanced Techniques in Dimension Reduction: Projection Pursuit, ICA, and NCA Explained

This article explores advanced techniques in dimension reduction, focusing on Projection Pursuit (PP), Independent Component Analysis (ICA), and Network Component Analysis (NCA). Projection pursuit identifies “interesting” directions based on objective functions, while ICA seeks latent variables that are statistically independent. NCA integrates prior biological knowledge into its sparse loading matrix, providing unique insights into the data structure. The interplay of these methods highlights their unique capabilities for clustering, visualization, and uncovering hidden structures in high-dimensional datasets.


Presentation Transcript

Dimension reduction (2): Projection pursuit, ICA, NCA, Partial Least Squares • Blais. “The role of the environment in synaptic plasticity…..” (1998) • Liao et al. PNAS. (2003) • http://www.cis.hut.fi/aapo/papers/NCS99web/node11.html • Barker & Raynes. J. Chemometrics (2013).

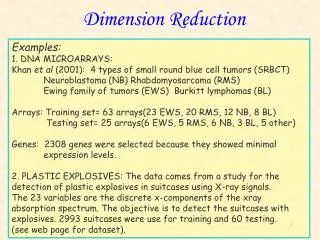

Projection pursuit A very broad term: finding the most “interesting” direction of projection. How the projection is done depends on the definition of “interesting”. If it is maximal variation, then PP leads to PCA. In a narrower sense: finding non-Gaussian projections. For most high-dimensional clouds, most low-dimensional projections are close to Gaussian, so the important information in the data lies in the directions for which the projected data are far from Gaussian.

Projection pursuit It boils down to objective functions – each kind of “interesting” has its own objective function(s) to maximize.

Projection pursuit Figure: PCA compared with projection pursuit using multi-modality as the objective.

Projection pursuit One objective function to measure multi-modality: It uses the first three moments of the distribution. It can help find clusters through visualization. To find w, the function is maximized over w by gradient ascent:

Projection pursuit PCA can be thought of as a case of PP, with the objective function $J(w) = \mathrm{Var}(w^\top x)$ maximized over unit vectors $w$. For the other PC directions, project the data onto the space orthogonal to the previously found PCs and repeat.
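As a rough illustration of PCA as a special case of PP, the sketch below takes the objective to be the variance of the projection w'x, maximizes it over unit vectors, and then removes the found direction before searching again. Power iteration is used here as a simple stand-in for a generic optimizer, and the function names are hypothetical.

```python
import numpy as np

def first_direction(X, n_iter=500, seed=0):
    """Unit vector w maximizing Var(w'x), found by power iteration."""
    rng = np.random.default_rng(seed)
    C = np.cov(X, rowvar=False)
    w = rng.standard_normal(X.shape[1])
    w /= np.linalg.norm(w)
    for _ in range(n_iter):
        w = C @ w                    # the gradient of w'Cw is 2Cw
        w /= np.linalg.norm(w)       # stay on the unit sphere
    return w

def pca_by_pursuit(X, k):
    """Find k directions one at a time, deflating the data after each."""
    X = X - X.mean(axis=0)
    directions = []
    for _ in range(k):
        w = first_direction(X)
        directions.append(w)
        X = X - np.outer(X @ w, w)   # project onto the space orthogonal to w
    return np.array(directions)
```

Up to sign, the rows returned by `pca_by_pursuit` should agree with the leading eigenvectors of the sample covariance matrix when the eigenvalues are well separated.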

Projection pursuit Some other objective functions (y is the RV generated by the projection w’x): http://www.riskglossary.com/link/kurtosis.htm The kurtosis as defined here, $E[(y-\mu)^4]/\sigma^4 - 3$, has value 0 for the normal distribution. Higher kurtosis: peaked and fat-tailed.
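A minimal sketch of projection pursuit with kurtosis as the objective, under several assumptions not stated in the slides: the data Z are already whitened (so that the excess kurtosis of y = w'z is E[y^4] - 3 for unit w), the absolute value of the kurtosis is what gets maximized, and the step size and iteration count are arbitrary. The function names are hypothetical.

```python
import numpy as np

def excess_kurtosis(y):
    """Sample excess kurtosis: 0 for a normal distribution."""
    y = y - y.mean()
    return np.mean(y ** 4) / np.mean(y ** 2) ** 2 - 3.0

def pursue_kurtosis(Z, w0, lr=0.1, n_iter=300):
    """Gradient ascent on |kurtosis| of Z @ w over unit vectors w (Z whitened)."""
    w = np.asarray(w0, dtype=float)
    w = w / np.linalg.norm(w)
    for _ in range(n_iter):
        y = Z @ w
        grad = 4.0 * (Z * (y ** 3)[:, None]).mean(axis=0)  # d/dw of E[y^4]
        grad -= (grad @ w) * w            # keep only the part tangent to the sphere
        sign = np.sign(np.mean(y ** 4) - 3.0)
        w = w + lr * sign * grad          # ascend |kurtosis|
        w /= np.linalg.norm(w)
    return w
```

On a whitened mixture of one uniform and one Gaussian source, the returned direction should line up with the uniform source, since that is the only projection that is far from Gaussian.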

Independent component analysis Again, another view of dimension reduction is factorization into latent variables. ICA finds a unique solution by requiring the factors to be statistically independent, rather than just uncorrelated. Lack of correlation only constrains the second-degree cross-moment, while statistical independence means that for any functions g1() and g2(), $E[g_1(y_1)\,g_2(y_2)] = E[g_1(y_1)]\,E[g_2(y_2)]$. For a multivariate Gaussian, uncorrelatedness = independence.
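The gap between "uncorrelated" and "independent" can be seen in a small simulation. The example is an assumption chosen for illustration: y1 is uniform on [-1, 1] and y2 = y1^2, so the pair is uncorrelated but clearly dependent. Taking g1(u) = u^2 and g2(u) = u, the expectation of the product does not factor.

```python
import numpy as np

def dependence_check(n=200_000, seed=0):
    rng = np.random.default_rng(seed)
    y1 = rng.uniform(-1.0, 1.0, n)
    y2 = y1 ** 2                     # a function of y1, yet uncorrelated with it
    corr = np.corrcoef(y1, y2)[0, 1]
    # Independence would need E[g1(y1) g2(y2)] = E[g1(y1)] E[g2(y2)] for all g1, g2.
    # With g1(u) = u**2 and g2(u) = u the two sides differ.
    gap = np.mean(y1 ** 2 * y2) - np.mean(y1 ** 2) * np.mean(y2)
    return corr, gap

corr, gap = dependence_check()
print(f"correlation = {corr:.4f}, E[g1 g2] - E[g1] E[g2] = {gap:.4f}")
```

The correlation comes out near zero while the gap stays clearly positive (its population value is 1/5 - 1/9).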

ICA A multivariate Gaussian is determined by its second moments alone. Thus, if the true hidden factors are Gaussian, they can be determined only up to a rotation. In ICA, the latent variables are assumed to be independent and non-Gaussian. The mixing matrix A (in the model x = As) must have full column rank.

Independent component analysis ICA is a special case of PP. The key is again that y is non-Gaussian. Several ways to measure non-Gaussianity: (1) Kurtosis (zero for a Gaussian RV, sensitive to outliers). (2) Entropy (a Gaussian RV has the largest entropy given the first and second moments). (3) Negentropy: $J(y) = H(y_{gauss}) - H(y)$, where $y_{gauss}$ is a Gaussian RV with the same covariance matrix as y.

ICA To measure statistical independence, use mutual information: $I(y_1, \dots, y_m) = \sum_{i=1}^{m} H(y_i) - H(y)$, the sum of the marginal entropies minus the overall entropy. It is non-negative, and zero if and only if the components are independent.
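For intuition, mutual information is easy to compute exactly when the variables are discrete. The sketch below assumes two discrete variables described by a joint probability table and uses natural logarithms; it is only an illustration of "sum of marginal entropies minus the overall entropy", not an estimator for continuous ICA outputs.

```python
import numpy as np

def entropy(p):
    """Shannon entropy (in nats) of a probability table."""
    p = np.asarray(p, dtype=float).ravel()
    p = p[p > 0]                       # 0 * log 0 is treated as 0
    return -np.sum(p * np.log(p))

def mutual_information(joint):
    """Marginal entropies minus joint entropy for a 2-D joint table."""
    joint = np.asarray(joint, dtype=float)
    joint = joint / joint.sum()
    return entropy(joint.sum(axis=1)) + entropy(joint.sum(axis=0)) - entropy(joint)

independent = np.outer([0.3, 0.7], [0.4, 0.6])   # joint = product of marginals
dependent = np.array([[0.5, 0.0], [0.0, 0.5]])   # the two variables always agree
print(mutual_information(independent), mutual_information(dependent))
```

The independent table gives zero (up to rounding), and the fully dependent table gives log 2.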

ICA The computation: there is no closed-form solution, hence gradient descent is used. Approximation to negentropy (for less intensive computation and better resistance to outliers): $J(y) \propto [E\{G(y)\} - E\{G(v)\}]^2$, where v is a standard Gaussian RV and G() is some non-quadratic function. Two commonly used G(): $G_1(u) = \frac{1}{a_1}\log\cosh(a_1 u)$ and $G_2(u) = -\exp(-u^2/2)$. When $G(x) = x^4$ this is kurtosis.
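A small sketch of the negentropy approximation, assuming the commonly used form J(y) ≈ (E{G(y)} - E{G(v)})^2 with G(u) = log cosh(u) and v a standard Gaussian. The choice of G, the standardization of y, and the use of Gauss-Hermite quadrature for the Gaussian expectation are choices made for this illustration.

```python
import numpy as np
from numpy.polynomial.hermite_e import hermegauss

def G(u):
    # log cosh(u), written so that large |u| does not overflow
    return np.logaddexp(u, -u) - np.log(2.0)

# E[G(v)] for v ~ N(0, 1), via quadrature with weight exp(-x^2 / 2)
_nodes, _weights = hermegauss(64)
EG_GAUSS = np.sum(_weights * G(_nodes)) / np.sqrt(2.0 * np.pi)

def negentropy_approx(y):
    """Squared difference between E[G(y)] and its Gaussian value, for standardized y."""
    y = np.asarray(y, dtype=float)
    y = (y - y.mean()) / y.std()
    return (np.mean(G(y)) - EG_GAUSS) ** 2

rng = np.random.default_rng(0)
print(negentropy_approx(rng.standard_normal(200_000)))   # close to zero
print(negentropy_approx(rng.uniform(-1, 1, 200_000)))    # clearly larger
```

Because G grows only linearly in |u|, a few extreme observations move this index much less than they move a fourth-moment measure.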

ICA FastICA: (1) Center the X vectors to mean zero. (2) Whiten the X vectors such that E(xx’)=I. This is done through eigenvalue decomposition. (3) Initialize the weight vector w. (4) Iterate until convergence: $w^{+} = E\{x\,g(w^\top x)\} - E\{g'(w^\top x)\}\,w$, then $w = w^{+}/\|w^{+}\|$. Here g() is the derivative of the non-quadratic function G().
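The FastICA steps can be sketched as follows. This is a minimal version under stated assumptions: g is taken to be tanh (the derivative of log cosh), several components are extracted one after another with a Gram-Schmidt deflation step that the slide does not describe, and the function names, tolerance, and iteration cap are hypothetical.

```python
import numpy as np

def whiten(X):
    """Center X and transform it so the sample covariance is the identity."""
    X = X - X.mean(axis=0)
    vals, vecs = np.linalg.eigh(np.cov(X, rowvar=False))
    return X @ vecs @ np.diag(vals ** -0.5) @ vecs.T

def fastica(X, n_components, n_iter=200, tol=1e-8, seed=0):
    rng = np.random.default_rng(seed)
    Z = whiten(X)
    W = np.zeros((n_components, Z.shape[1]))
    for k in range(n_components):
        w = rng.standard_normal(Z.shape[1])
        w /= np.linalg.norm(w)
        for _ in range(n_iter):
            y = Z @ w
            g, g_prime = np.tanh(y), 1.0 - np.tanh(y) ** 2
            # Fixed-point update: w+ = E{x g(w'x)} - E{g'(w'x)} w
            w_new = (Z * g[:, None]).mean(axis=0) - g_prime.mean() * w
            # Deflation (an added assumption): stay orthogonal to earlier components
            w_new -= W[:k].T @ (W[:k] @ w_new)
            w_new /= np.linalg.norm(w_new)
            converged = abs(abs(w_new @ w) - 1.0) < tol
            w = w_new
            if converged:
                break
        W[k] = w
    return Z @ W.T, W   # estimated sources and unmixing rows (on whitened data)
```

The recovered components match the true sources only up to sign and ordering, which is inherent to the ICA model.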

ICA Figure 14.28: Mixtures of independent uniform random variables. The upper left panel shows 500 realizations from the two independent uniform sources, the upper-right panel their mixed versions. The lower two panels show the PCA and ICA solutions, respectively.

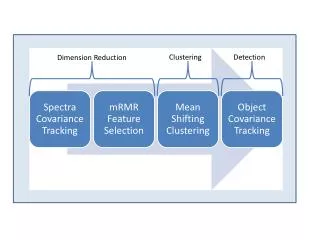

Network component analysis Besides dimension reduction and the hidden factor model, there is another way to understand a model like this: it can be understood as explaining the data by a bipartite network with a control layer and an output layer. Unlike PCA and ICA, NCA doesn’t assume a fully linked loading matrix. Rather, the matrix is sparse. The non-zero locations are pre-determined by biological knowledge about regulatory networks.

Network component analysis Motivation: Instead of blindly searching for a lower-dimensional space, a priori information is incorporated into the loading matrix.

NCA $X_{N \times P} = A_{N \times K} P_{K \times P} + E_{N \times P}$ Conditions for the solution to be unique: (1) A has full column rank; (2) when a column of A is removed, together with all rows corresponding to non-zero values in that column, the remaining matrix still has full column rank; (3) P has full row rank.
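The three uniqueness conditions translate directly into rank checks. The sketch below assumes numeric matrices are available (for example a candidate A with the prescribed zero pattern); the function name is hypothetical, and the check on P is skipped when P is not supplied.

```python
import numpy as np

def nca_identifiable(A, P=None):
    """Check the three rank conditions for a loading matrix A (N x K) and optional P."""
    A = np.asarray(A, dtype=float)
    n_rows, k = A.shape
    # (1) A has full column rank
    if np.linalg.matrix_rank(A) < k:
        return False
    # (2) drop column j and every row with a non-zero entry in column j;
    #     what remains must still have full column rank (k - 1)
    for j in range(k):
        keep = A[:, j] == 0
        reduced = np.delete(A[keep], j, axis=1)
        rank = np.linalg.matrix_rank(reduced) if reduced.size else 0
        if rank < k - 1:
            return False
    # (3) P has full row rank
    if P is not None:
        P = np.asarray(P, dtype=float)
        if np.linalg.matrix_rank(P) < P.shape[0]:
            return False
    return True

sparse_A = np.array([[1, 0], [1, 0], [0, 1], [0, 1]])
dense_A = np.array([[1, 1], [1, 2], [1, 3]])
print(nca_identifiable(sparse_A), nca_identifiable(dense_A))
```

A fully connected loading matrix fails condition (2), because removing any column also removes every row.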

NCA Fig. 2. A completely identifiable network (a) and an unidentifiable network (b). Although the two initial [A] matrices describing the network matrices have an identical number of constraints (zero entries), the network in b does not satisfy the identifiability conditions because of the connectivity pattern of R3. The edges in red are the differences between the two networks.

NCA Notice that both A and P are to be estimated, so the criteria of identifiability are in fact untestable. To compute NCA, minimize the square loss function $\min_{A,P} \|X - AP\|^2$ subject to $A \in Z_0$. Z0 is the topology constraint matrix, i.e. which positions of A are non-zero. It is based on prior knowledge. It is the network connectivity matrix.

NCA Solving NCA: This is a linear decomposition system which has the bi-convex property. It is solved by iteratively solving for A and P while fixing the other one. Both steps use least squares. Convergence is judged by the total least-square error. The total error is non-increasing in each step. Optimality is guaranteed if the three conditions for identifiability are satisfied. Otherwise a local optimum may be found.
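The alternating scheme can be sketched as below, assuming the square loss ||X - AP||^2 with A restricted to a known zero pattern Z0 (a boolean N x K array). The random initialization, fixed iteration count, and function name are assumptions of this sketch; A and P are also only determined up to a rescaling of each component, which is not normalized here.

```python
import numpy as np

def nca_als(X, Z0, n_iter=100, seed=0):
    """Alternate least-squares updates of P and of the allowed entries of A."""
    rng = np.random.default_rng(seed)
    Z0 = np.asarray(Z0, dtype=bool)
    n_rows, k = Z0.shape
    A = np.where(Z0, rng.standard_normal((n_rows, k)), 0.0)
    errors = []
    for _ in range(n_iter):
        # Step 1: fix A, solve for P by ordinary least squares
        P = np.linalg.lstsq(A, X, rcond=None)[0]
        # Step 2: fix P, solve each row of A using only its allowed positions
        for i in range(n_rows):
            idx = np.flatnonzero(Z0[i])
            A[i] = 0.0
            if idx.size:
                A[i, idx] = np.linalg.lstsq(P[idx].T, X[i], rcond=None)[0]
        errors.append(np.sum((X - A @ P) ** 2))
    return A, P, errors
```

Each step is an exact least-squares solve, so the recorded total error should never go up from one iteration to the next, which is what the convergence criterion relies on.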

PLS • Finding latent factors in X that can predict Y. • X is multi-dimensional; Y can be either a random variable or a random vector. • The model will look like: • where $T_j$ is a linear combination of X • PLS is suitable for handling the p >> N situation.

PLS Data: Goal:

PLS Solution: $a_{k+1}$ is the (k+1)th eigenvector of Alternatively, The PLS components minimize Can be solved by iterative regression.

PLS Example: PLS vs. PCA in regression: Y is related to X1
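A toy version of this comparison, with every number an assumption made for illustration: X1 has small variance and drives Y, while X2 has large variance and is unrelated to Y. The sketch uses the standard fact that, for a single response, the first PLS weight vector is proportional to X'y on centered data; the function names are hypothetical.

```python
import numpy as np

def first_pc(X):
    """Direction of maximal variance in X."""
    Xc = X - X.mean(axis=0)
    vals, vecs = np.linalg.eigh(np.cov(Xc, rowvar=False))
    return vecs[:, -1]               # eigh sorts eigenvalues in ascending order

def first_pls_weight(X, y):
    """Direction of maximal covariance with y (single-response PLS)."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    w = Xc.T @ yc
    return w / np.linalg.norm(w)

rng = np.random.default_rng(0)
n = 5000
X = np.column_stack([rng.standard_normal(n), 5.0 * rng.standard_normal(n)])
y = X[:, 0] + 0.5 * rng.standard_normal(n)   # Y depends on X1 only

pc = first_pc(X)
pls = first_pls_weight(X, y)
print("first PC:        ", np.round(pc, 3))
print("first PLS weight:", np.round(pls, 3))
```

The first PC loads almost entirely on the high-variance X2, while the first PLS weight loads mainly on X1, so a one-component regression would be expected to predict Y far better with PLS in this setting.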