PLATO Data Center: Purpose and Structure



PLATO Data Center: Purpose and Structure
Laurent Gizon (PDPM), Hamed Moradi (PDC Project Office)

The PDC is in charge of the validation, calibration, and analysis of the PLATO observations. It delivers the final PLATO science Data Products.

SGS Structure
• Mission Operations Center (MOC, flight-critical)
• Science Operations Center (SOC, mission-critical)
• PLATO Data Center (PDC, science-critical)
• Science Preparatory Activities (scientific specification of software)

[Diagram: PLATO Ground Segment. Recoverable labels: Operations Ground Segment (Mission Operations Centre, Ground Station Network); Science Ground Segment (Science Operations Centre, SOC; PLATO Data Center, PDC; PLATO Science Preparation Management, PSPM).]

The definition phase objectives of the SGS are to establish the technical (PDC) and scientific (PSPM) requirements baseline for the SGS and to develop the operations concept, architecture and interfaces.
The definition phase activities of the PDC and PSPM are organized according to the following guidelines:
• PSPM provides the scientific specifications of the software
• PDC translates the scientific specifications into technical specifications
• PDC implements the technical specifications
• PSPM checks that the PDC software is consistent with the initial scientific specifications. This validation by PSPM occurs within the PDC, as a normal part of the development QA process.

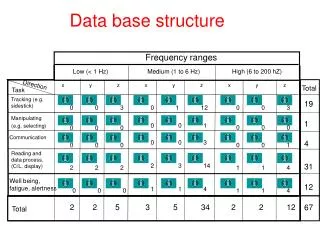

Data Levels
• Telemetry: Baseline: 109 Gb/day uncompressed (8.7 Mb/s compressed, during 3.5 hr each day).
• Level 0: Depacketized light curves, centroid curves, and selected imagettes (~1600), for each telescope (32+2).
• Level 1: Analysis of imagettes to validate and optimize performance of on-board treatment. Implementation of on-ground instrumental corrections, such as CCD corrections and jitter corrections. Then computation of average light curves and centroid curves for each star (science-ready).
• Level 2: PLATO science Data Products (next table). Final DP is a list of confirmed planetary systems, fully characterized by the transit curves, the stellar seismic parameters, and the follow-up observations. High scientific added value.
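The two telemetry figures on this slide can be cross-checked with simple arithmetic. The sketch below multiplies the quoted downlink rate by the quoted daily contact time, assuming decimal prefixes (1 Gb = 1000 Mb). The function name is hypothetical, and the slide does not say how the compression factor enters, so this is a consistency check on the numbers only.

```python
# Values quoted on the slide.
DOWNLINK_RATE_MBIT_S = 8.7    # compressed downlink rate, Mb/s
DOWNLINK_HOURS_PER_DAY = 3.5  # daily downlink window, hours


def daily_downlink_gbit(rate_mbit_s: float, hours: float) -> float:
    """Volume transferred in one daily window, in gigabits.

    Assumes decimal prefixes (1 Gb = 1000 Mb); this is an assumption,
    not something stated in the slides.
    """
    seconds = hours * 3600.0
    return rate_mbit_s * seconds / 1000.0


volume = daily_downlink_gbit(DOWNLINK_RATE_MBIT_S, DOWNLINK_HOURS_PER_DAY)
print(f"{volume:.1f} Gb per daily downlink window")
```

The result is close to the 109 Gb/day baseline, so the rate and window length quoted above are mutually consistent with that daily volume.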

Ancillary Observations
• Essential information for the success of the mission: input catalog, follow-up observations, etc.
• Support for on-board processing, on-ground calibration, and scientific data analysis
• Stellar properties: effective temperature, absolute luminosity, radius [Gaia], chemical abundances, v sin i, activity, properties specific to multiple stars.
• Follow-up observations to confirm planets (at several wavelengths when possible)
• Other relevant complementary observations: high-resolution spectra, astrometry, imaging, spectro-polarimetry, etc.
• The ancillary data support the processing activities and are accessed by the PDC via the main database.

[Diagram labels: ancillary observations (star catalogs, stellar parameters, follow-up observations, spectroscopy, radial velocities, interferometry, astrometry from Gaia); data products DP0, DP1, DP2, DP3, DP4, DP5, DP6; Exoplanet Analysis System.]

PDC Architecture
• Main Data Base centralizes DPs and ancillary data (PDC-DB @ MPS)
• PDC develops and implements code at ESA SOC to produce DP1
• Data Processing Centers (PDPCs) manufacture DP2-DP6
• Distribution of DPs and long-term archive under SOC responsibility

[Organization chart; recoverable labels:]
• PDC: L. Gizon, MPS
• Main database & system architecture: R. Burston, MPS
• Data treatment implementation: I. Pardowitz, MPS
• Data treatment algorithms: R. Samadi, LESIA
• Exoplanet Analysis System: N. Walton, IoA
• Stellar Analysis System: T. Appourchaux, IAS
• Ancillary database: R. Burston, MPS
• PLC Science Team + PDPM: science activities
• Other boxes and links: SOC, MOC, PLATO Instrument Manager, data access coordination
• SOC includes a processing center for the validation and calibration of the data
• ESA: overall coordination (oversight) of science data releases, data access and distribution
• PDC designs and implements software to be run at the SOC

PDC WBS
• 1 Central Data Base
• 5 Data Processing Centers

• WP32 Data Processing Algorithms (Talk by Samadi)
• WP35 Ancillary Data Management (Talk by Deleuil)
• WP36 Exoplanet Analysis System (Talk by Walton)
• WP37 Stellar Analysis System (Talk by Appourchaux)

WP31 System architecture and main database (Burston)
• System architecture, archives, database, system management
• Data flow design and management, export system, network
• Simulation of data stream

WP33 Data Processing Development (Pardowitz)
• Write and implement core-processing software that will run at the SOC
• Requires a good understanding of system interfaces with SOC and operational procedures
• For phase A, study jitter correction to prove feasibility

WP34 Input Catalog (Giommi)
• Implementation of the PLATO input catalogue (PIC), under Italian responsibility.
• This activity is related to the target and field characterization activity in the PSPM segment of the PMC.
• WP34 delivers the validated PIC to the PDC-DB

WP38 Data Analysis Support Tools (Gizon)
• PDC documentation management
• Tools to support the analysis of individual light curves and to provide feedback to L2 processing pipelines (exoplanet and stellar).
• PDPC-M is the place where consortium scientists inspect light curves, assess DP validity and update the ranking of planet candidates
• Search tools and VO activities
• Internal PDC web site
• In particular, the PDC web site makes follow-up (FU) information accessible to FU observers

Time table
• Regular meetings with ESA to specify the PDC-SOC interfaces
• End Feb 2011: 5th PDC Meeting in K-Lindau. Identify final problems. Invite PSPM Leaders.
• By March 2011: WP Leaders deliver reports to LG
• May 2011: PDC document delivered to PCL
• June 2011: Decision on PLATO selection
• End June 2011: Phase B1 meeting
• December 2011: End of phase B1
• November 2018: Launch of PLATO
• 3+2+1 years in space
• Several releases of DPs during and after the space mission
• PDC must remain operational up to ~3 yrs after the end of the space mission in order to confirm the last planets.

Data validation
• Validate onboard software:
  • Check onboard processing using a ground copy of the onboard software and the imagettes of ~1600 stars
  • Validate distortion matrix model, 2D sky background model, PSF model fits
  • Validate computation of masks and windows
• Validate onboard setup: fine tuning of the onboard software algorithm. For example, choose the number of parameters needed to describe the PSF. Especially during configuration mode.
• Monitor health of each telescope and assess quality of the data
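The first validation step, re-running the onboard processing on the ground from the downloaded imagettes, could be sketched as follows. The slides do not describe the onboard photometry algorithm, so the mask-weighted pixel sum used here is an assumption, and the function names, the tolerance, and the toy 3x3 imagette are all hypothetical.

```python
def mask_flux(imagette, mask):
    """Sum the imagette pixels weighted by a photometric mask.

    Both arguments are nested lists of the same shape. A weighted-mask
    sum is an assumed stand-in for the real onboard algorithm.
    """
    return sum(
        pixel * weight
        for pixel_row, mask_row in zip(imagette, mask)
        for pixel, weight in zip(pixel_row, mask_row)
    )


def validate_onboard_flux(imagette, mask, onboard_flux, rel_tol=1e-3):
    """Compare the onboard flux with the ground recomputation.

    Returns (ok, relative_difference). rel_tol is an illustrative
    threshold, not a mission requirement.
    """
    ground_flux = mask_flux(imagette, mask)
    rel_diff = abs(onboard_flux - ground_flux) / abs(ground_flux)
    return rel_diff <= rel_tol, rel_diff


# Toy 3x3 imagette and a cross-shaped mask (made-up numbers).
imagette = [[1, 2, 1], [2, 10, 2], [1, 2, 1]]
mask = [[0, 1, 0], [1, 1, 1], [0, 1, 0]]
ok, rel_diff = validate_onboard_flux(imagette, mask, onboard_flux=18.01)
print(ok, rel_diff)
```

A mismatch beyond the tolerance would flag either the onboard setup (mask, window, PSF parameters) or the ground copy for inspection.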

Data corrections
• Correction for jitter. Performed independently for each telescope; requires PSF knowledge, stellar catalog, and distortion matrix.
• Integration time correction, sampling time correction
• Statistical analysis over the 40 telescopes to identify cosmic ray hits, hot pixels, and possibly deficient telescopes
• Average light curves and centroid curves over all telescopes (weighted average). Compute error based on scatter.
• The ~1600 stars for which imagettes are available receive a more sophisticated treatment: PSF fits to improve photometry (contamination from neighboring sources taken into account).
• Imagettes are downloaded for all stars for which a serious planetary candidate has been identified.
• Long-term detrending probably moved to PDC
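The cross-telescope statistics and the weighted average can be illustrated with a minimal sketch for one star at one time stamp. The slides do not specify the outlier test or the weights, so the median/MAD clipping, the 5-sigma threshold, and the function name are assumptions chosen for illustration.

```python
from statistics import median


def combine_telescopes(fluxes, weights, clip=5.0):
    """Combine one star's flux at one time stamp across telescopes.

    Rejects outliers (e.g. cosmic ray hits) with a median/MAD test,
    then returns (weighted_mean, error_from_scatter, kept_indices).
    The clipping rule and threshold are illustrative assumptions.
    """
    med = median(fluxes)
    # Median absolute deviation, scaled to approximate a Gaussian sigma.
    sigma = 1.4826 * median(abs(f - med) for f in fluxes)
    # If sigma is zero there is no scatter to clip against: keep everything.
    keep = [
        i for i, f in enumerate(fluxes)
        if sigma == 0 or abs(f - med) <= clip * sigma
    ]
    wsum = sum(weights[i] for i in keep)
    mean = sum(weights[i] * fluxes[i] for i in keep) / wsum
    # Weighted scatter about the mean, converted to a standard error.
    # Exact for equal weights, an approximation otherwise.
    var = sum(weights[i] * (fluxes[i] - mean) ** 2 for i in keep) / wsum
    n = len(keep)
    err = (var / (n - 1)) ** 0.5 if n > 1 else float("nan")
    return mean, err, keep


# Made-up fluxes from six telescopes; the last one is hit by a cosmic ray.
fluxes = [100.0, 100.2, 99.8, 100.1, 99.9, 130.0]
weights = [1.0] * 6
mean, err, keep = combine_telescopes(fluxes, weights)
print(mean, err, keep)
```

Telescopes that are rejected repeatedly over many time stamps would be candidates for the "possibly deficient" category mentioned above.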

Data volumes
• Telemetry rate: 109 Gb/day uncompressed
• Over a 6 yr mission: 30 TB uncompressed
• The volume of archived L0, L1 and HK data is expected to be 10-50 times this amount (reformatting and calibration history), i.e. 300-1500 TB
• The volume of the science data products is likely to be negligible in comparison (although the complexity of the data may be high).
• Ancillary database: basic stellar observations and parameters, spectra, Gaia specific obs, etc. How big?
• The overall data volume should not exceed a few PB, which is not problematic.
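The volume estimates above follow from the telemetry rate by straightforward arithmetic, sketched here. The calculation assumes that "Gb" means gigabits, that 1 TB = 1000 GB, and that a year has 365.25 days; none of these conventions is stated in the slides, and the function name is hypothetical.

```python
TELEMETRY_GBIT_PER_DAY = 109.0  # from the slide
MISSION_YEARS = 6               # from the slide
DAYS_PER_YEAR = 365.25          # assumption


def mission_volume_tb(gbit_per_day, years, days_per_year=DAYS_PER_YEAR):
    """Total telemetry volume in terabytes.

    Assumes Gb = gigabits (8 bits per byte) and 1 TB = 1000 GB.
    """
    total_gbit = gbit_per_day * years * days_per_year
    total_gbyte = total_gbit / 8.0
    return total_gbyte / 1000.0


raw_tb = mission_volume_tb(TELEMETRY_GBIT_PER_DAY, MISSION_YEARS)
# Archive of L0, L1 and HK data: 10 to 50 times the raw volume.
archive_low_tb = 10 * raw_tb
archive_high_tb = 50 * raw_tb
print(f"raw: {raw_tb:.1f} TB, archive: {archive_low_tb:.0f}-{archive_high_tb:.0f} TB")
```

Under these assumptions the raw volume comes out just under 30 TB and the archive range just under 300-1500 TB, in line with the rounded figures on the slide and well below the few-PB ceiling.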