Statistical Learning

Chapter 20 of AIMA. KAIST CS570 lecture note. Based on AIMA slides, Jahwan Kim’s slides, and Duda, Hart & Stork’s slides. We view learning as a form of uncertain reasoning from observation.


Statistical Learning
• Chapter 20 of AIMA
• KAIST CS570 lecture note
• Based on AIMA slides, Jahwan Kim’s slides, and Duda, Hart & Stork’s slides

Statistical Learning
• We view learning as a form of uncertain reasoning from observation.

Outline
• Bayesian Learning
  • Bayesian inference
  • MAP and ML
  • Naïve Bayesian method
• Parameter Learning
  • Examples
  • Regression and LMS
• Learning Probability Distribution
  • Parametric method
  • Non-parametric method

Bayesian Learning 1
• View learning as Bayesian updating of a probability distribution over the hypothesis space.
• $H$ is the hypothesis variable, with possible values (hypotheses) $h_1, \dots, h_n$.
• Let $d = (d_1, \dots, d_N)$ be the observed data vectors.
• The observations are usually assumed to be i.i.d. (in these notes, always).
• Let $X$ denote the prediction.
• In Bayesian learning:
  • Compute the probability of each hypothesis given the data, and predict on that basis.
  • Predictions are made by using all hypotheses, not just a single best one.
  • Learning in the Bayesian setting is reduced to probabilistic inference.

Bayesian Learning 2
• The probability that the prediction is $X$ when the data $d$ is observed is $P(X \mid d) = \sum_i P(X \mid d, h_i)\, P(h_i \mid d) = \sum_i P(X \mid h_i)\, P(h_i \mid d)$.
• The prediction is a weighted average over the predictions of the individual hypotheses.
• Hypotheses are intermediaries between the data and the predictions.
• Requires computing $P(h_i \mid d)$ for all $i$. This is usually intractable.

Bayesian Learning: Basic Terms
• $P(h_i)$ is called the (hypothesis) prior.
  • We can embed knowledge by means of the prior.
  • It also controls the complexity of the model.
• $P(h_i \mid d)$ is called the posterior (or a posteriori) probability.
  • Using Bayes’ rule, $P(h_i \mid d) \propto P(d \mid h_i)\, P(h_i)$.
• $P(d \mid h_i)$ is called the likelihood of the data.
  • Under the i.i.d. assumption, $P(d \mid h_i) = \prod_j P(d_j \mid h_i)$.
• Let $h_{MAP}$ be the hypothesis for which the posterior probability $P(h_i \mid d)$ is maximal. It is called the maximum a posteriori (or MAP) hypothesis.

Candy Example
• Two flavors of candy, cherry and lime, wrapped in the same opaque wrapper (we cannot see inside).
• Sold in very large bags, of which there are known to be five kinds:
  • $h_1$: 100% cherry
  • $h_2$: 75% cherry + 25% lime
  • $h_3$: 50% cherry + 50% lime
  • $h_4$: 25% cherry + 75% lime
  • $h_5$: 100% lime
• Priors known: $P(h_1), \dots, P(h_5)$ are 0.1, 0.2, 0.4, 0.2, 0.1.
• Suppose that from a bag of candy we took $N$ pieces and all of them were lime (data $d_N$).
  • What kind of bag is it?
  • What flavor will the next candy be?

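Candy Example: Posterior Probability of Hypotheses
• $P(h_1 \mid d_N) \propto P(d_N \mid h_1)\, P(h_1) = 0$
• $P(h_2 \mid d_N) \propto P(d_N \mid h_2)\, P(h_2) = 0.2\,(0.25)^N$
• $P(h_3 \mid d_N) \propto P(d_N \mid h_3)\, P(h_3) = 0.4\,(0.5)^N$
• $P(h_4 \mid d_N) \propto P(d_N \mid h_4)\, P(h_4) = 0.2\,(0.75)^N$
• $P(h_5 \mid d_N) \propto P(d_N \mid h_5)\, P(h_5) = P(h_5) = 0.1$
• Normalize them by requiring them to sum to 1.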
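The candy posteriors and the resulting Bayesian prediction can be checked numerically. The following is a minimal sketch, assuming the priors and lime proportions from the candy example; the function names `candy_posterior` and `predict_lime` are illustrative and not part of the original slides.

```python
# Priors P(h1..h5) and per-hypothesis probability of drawing a lime,
# taken from the candy example.
PRIORS = [0.1, 0.2, 0.4, 0.2, 0.1]
P_LIME = [0.0, 0.25, 0.5, 0.75, 1.0]


def candy_posterior(n_limes):
    """Posterior P(h_i | d_N) after observing n_limes limes in a row."""
    # Unnormalized posterior: likelihood (i.i.d. draws) times prior.
    unnorm = [prior * (q ** n_limes) for prior, q in zip(PRIORS, P_LIME)]
    total = sum(unnorm)
    return [u / total for u in unnorm]


def predict_lime(n_limes):
    """Bayesian prediction P(next = lime | d_N) = sum_i P(lime | h_i) P(h_i | d_N)."""
    posterior = candy_posterior(n_limes)
    return sum(q * w for q, w in zip(P_LIME, posterior))


if __name__ == "__main__":
    for n in range(0, 11):
        post = ", ".join(f"{p:.3f}" for p in candy_posterior(n))
        print(f"N={n:2d}  posterior=[{post}]  P(next lime)={predict_lime(n):.3f}")
```

With no data the posterior equals the prior and the predicted probability of lime is 0.5; after one lime, the posterior is (0, 0.1, 0.4, 0.3, 0.2) and the prediction rises to 0.65.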

Maximum a Posteriori (MAP) Learning
• Since calculating the exact probability is often impractical, we approximate it with the MAP hypothesis: $P(X \mid d) \approx P(X \mid h_{MAP})$.
• Make the prediction with the most probable hypothesis.
• Summing over the hypothesis space is often intractable.
  • Instead of a large summation (or integration), an optimization problem can be solved.
• For a deterministic hypothesis, $P(d \mid h_i)$ is 1 if $h_i$ is consistent with the data and 0 otherwise, so MAP = simplest consistent hypothesis (cf. science).
• The true hypothesis eventually dominates the Bayesian prediction.

MAP Approximation: MDL Principle
• Since $P(h_i \mid d) \propto P(d \mid h_i)\, P(h_i)$, instead of maximizing $P(h_i \mid d)$ we may maximize $P(d \mid h_i)\, P(h_i)$.
• Equivalently, we may minimize $-\log\left[P(d \mid h_i)\, P(h_i)\right] = -\log P(d \mid h_i) - \log P(h_i)$.
• With base-2 logarithms, we can interpret this as choosing $h_i$ to minimize the number of bits required to encode the hypothesis $h_i$ and the data $d$ under that hypothesis.
• The principle of minimizing code length (under some predetermined coding scheme) is called the minimum description length (or MDL) principle.
• MDL is used in a wide range of practical machine learning applications.
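The equivalence between maximizing the posterior and minimizing a code length can be illustrated on the candy example. This is a sketch only: the helper names `description_length` and `map_hypothesis` are hypothetical, and the data are assumed to be $N$ limes in a row as in the earlier slides.

```python
import math

# Priors and lime proportions for h1..h5, from the candy example.
PRIORS = [0.1, 0.2, 0.4, 0.2, 0.1]
P_LIME = [0.0, 0.25, 0.5, 0.75, 1.0]


def description_length(prior, q, n_limes):
    """Bits to encode the hypothesis plus the data: -log2 P(d|h) - log2 P(h)."""
    if n_limes > 0 and q == 0.0:
        # The hypothesis assigns zero probability to the data: infinite cost.
        return math.inf
    data_bits = -n_limes * math.log2(q) if n_limes > 0 else 0.0
    hypothesis_bits = -math.log2(prior)
    return data_bits + hypothesis_bits


def map_hypothesis(n_limes):
    """Return (1-based index of h_MAP, its description length in bits)."""
    costs = [description_length(p, q, n_limes) for p, q in zip(PRIORS, P_LIME)]
    best = min(range(len(costs)), key=costs.__getitem__)
    return best + 1, costs[best]


if __name__ == "__main__":
    for n in range(0, 6):
        index, bits = map_hypothesis(n)
        print(f"N={n}: h_MAP = h{index} ({bits:.3f} bits)")
```

Under these assumptions the MAP hypothesis moves from $h_3$ (no data or one lime) to $h_4$ (two limes) to $h_5$ (three or more limes), because the cost of encoding the data under $h_3$ and $h_4$ grows with every additional lime.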

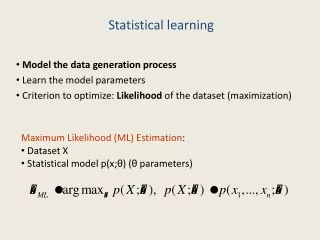

Maximum Likelihood Approximation
• Assume furthermore that the $P(h_i)$ are all equal, i.e., assume a uniform prior.
  • Reasonable when there is no reason to prefer one hypothesis over another a priori.
  • For a large data set, the prior becomes irrelevant.
• To obtain the MAP hypothesis, it then suffices to maximize $P(d \mid h_i)$, the likelihood.
  • The result is the maximum likelihood hypothesis $h_{ML}$.
• MAP with a uniform prior $\Leftrightarrow$ ML.
• ML is the standard statistical learning method.
  • Simply get the best fit to the data.

Naïve Bayes Method
• Attributes (components of the observed data) are assumed to be independent of one another given the class.
• Works well for about 2/3 of real-world problems, despite the naivety of this assumption.
• Goal: predict the class $C$, given the observed data $X_i = x_i$.
• By the independence assumption, $P(C \mid x_1, \dots, x_n) \propto P(C) \prod_i P(x_i \mid C)$.
• We choose the most likely class.
• Merits of NB:
  • Scales well: no search is required.
  • Robust against noisy data.
  • Gives probabilistic predictions.
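The naïve Bayes rule above can be sketched with simple counting. This is an illustrative sketch, not the lecture's implementation: the toy data set, the attribute values, and the names `train_nb` and `predict_nb` are all made up for the example, and probabilities are plain relative frequencies with no smoothing.

```python
from collections import Counter, defaultdict


def train_nb(rows, labels):
    """Estimate P(C) and P(x_i | C) by counting (maximum likelihood, no smoothing)."""
    class_counts = Counter(labels)
    # feat_counts[(c, i)][v] = number of class-c examples whose attribute i equals v
    feat_counts = defaultdict(Counter)
    for x, c in zip(rows, labels):
        for i, v in enumerate(x):
            feat_counts[(c, i)][v] += 1
    return class_counts, feat_counts


def predict_nb(model, x):
    """Return normalized P(C | x) proportional to P(C) * prod_i P(x_i | C)."""
    class_counts, feat_counts = model
    n = sum(class_counts.values())
    scores = {}
    for c, nc in class_counts.items():
        score = nc / n  # prior P(C)
        for i, v in enumerate(x):
            score *= feat_counts[(c, i)][v] / nc  # P(x_i | C)
        scores[c] = score
    total = sum(scores.values())
    if total == 0:
        return scores  # every class assigns zero probability to x
    return {c: s / total for c, s in scores.items()}


if __name__ == "__main__":
    # Hypothetical toy data: (wrapper color, shape) -> flavor
    rows = [("red", "round"), ("red", "round"), ("green", "round"),
            ("green", "round"), ("green", "long"), ("red", "long")]
    labels = ["cherry", "cherry", "cherry", "lime", "lime", "lime"]
    model = train_nb(rows, labels)
    posterior = predict_nb(model, ("green", "round"))
    print(posterior, "->", max(posterior, key=posterior.get))
```

Training is a single pass over the data, which is why no search is required. In practice a smoothing term is often added to the counts so that an unseen attribute value does not force a class probability to zero.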

Learning with Data: Parameter Learning
• Introduce a parametric probability model with parameter $\theta$.
• Then the hypotheses are $h_\theta$, i.e., the hypotheses are parameterized.
• In the simplest case, $\theta$ is a single scalar. In more complex cases, $\theta$ consists of many components.
• Using the data $d$, estimate the parameter $\theta$.

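ML Parameter Learning Examples: Discrete Case
• A bag of candy whose lime-cherry proportions are completely unknown.
  • In this case the hypotheses are parameterized by the probability $\theta$ of cherry.
  • With $c$ cherries and $\ell$ limes observed, $P(d \mid h_\theta) = \prod_j P(d_j \mid h_\theta) = \theta^{c} (1-\theta)^{\ell}$.
  • Find the $h_\theta$ that maximizes $P(d \mid h_\theta)$.
• Two wrappers, green and red, are selected according to some unknown conditional distribution, depending on the flavor.
  • The model has three parameters: $\theta = P(F = \text{cherry})$, $\theta_1 = P(W = \text{red} \mid F = \text{cherry})$, $\theta_2 = P(W = \text{red} \mid F = \text{lime})$.
  • With $r_c, g_c$ the numbers of red- and green-wrapped cherries and $r_\ell, g_\ell$ the numbers of red- and green-wrapped limes, $P(d \mid h_\Theta) = \theta^{c} (1-\theta)^{\ell}\; \theta_1^{r_c} (1-\theta_1)^{g_c}\; \theta_2^{r_\ell} (1-\theta_2)^{g_\ell}$.
  • Find the $h_\Theta$ that maximizes $P(d \mid h_\Theta)$.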
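For the single-parameter candy model the maximization can be carried out by hand. The derivation below is a sketch in which $c$ and $\ell$ denote the observed numbers of cherry and lime candies and $L$ denotes the log likelihood; these symbol names are chosen here for illustration.

```latex
\begin{align*}
L(\theta) &= \log P(d \mid h_\theta) = c \log \theta + \ell \log (1 - \theta) \\
\frac{dL}{d\theta} &= \frac{c}{\theta} - \frac{\ell}{1 - \theta} = 0
\quad\Longrightarrow\quad
\theta_{ML} = \frac{c}{c + \ell}
\end{align*}
```

The ML estimate is the observed fraction of cherries. Because the three-parameter likelihood factors into independent terms, the same argument applied to each factor gives $\theta_1 = r_c / (r_c + g_c)$ and $\theta_2 = r_\ell / (r_\ell + g_\ell)$, where $r$ and $g$ count red and green wrappers within each flavor.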

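ML Parameter Learning Example: Continuous Case, Single-Variable Gaussian
• Gaussian pdf on a single variable: $P(x) = \frac{1}{\sqrt{2\pi}\,\sigma} \exp\left(-\frac{(x-\mu)^2}{2\sigma^2}\right)$.
• Suppose $x_1, \dots, x_N$ are observed. Then the log likelihood is $L = \sum_{j=1}^{N} \log\left[\frac{1}{\sqrt{2\pi}\,\sigma} \exp\left(-\frac{(x_j-\mu)^2}{2\sigma^2}\right)\right] = N\left(-\log\sqrt{2\pi} - \log\sigma\right) - \sum_{j=1}^{N} \frac{(x_j-\mu)^2}{2\sigma^2}$.
• We want to find the $\mu$ and $\sigma$ that maximize this. Find where the gradient is zero.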

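ML Parameter Learning Example: Continuous Case, Single-Variable Gaussian (continued)
• Setting the derivatives of the log likelihood with respect to $\mu$ and $\sigma$ to zero and solving, we find $\mu = \frac{1}{N}\sum_{j} x_j$ and $\sigma = \sqrt{\frac{1}{N}\sum_{j} (x_j - \mu)^2}$.
• The ML estimates are the sample mean and the sample standard deviation, so ML agrees with common sense.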
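The closed-form Gaussian ML estimates are easy to check numerically. A minimal sketch follows, with $\mu$ and $\sigma$ denoting the mean and standard deviation of the Gaussian; the function names and the sample data are illustrative assumptions.

```python
import math


def gaussian_ml(xs):
    """ML estimates for a 1-D Gaussian: sample mean and (1/N) standard deviation."""
    n = len(xs)
    mu = sum(xs) / n
    sigma = math.sqrt(sum((x - mu) ** 2 for x in xs) / n)
    return mu, sigma


def log_likelihood(xs, mu, sigma):
    """Log likelihood of i.i.d. data under N(mu, sigma^2)."""
    n = len(xs)
    return (-n * math.log(math.sqrt(2 * math.pi) * sigma)
            - sum((x - mu) ** 2 for x in xs) / (2 * sigma ** 2))


if __name__ == "__main__":
    data = [1.0, 2.0, 3.0, 4.0]  # made-up observations
    mu, sigma = gaussian_ml(data)
    print(f"mu = {mu}, sigma = {sigma:.4f}")
    # Perturbing either parameter should not increase the log likelihood.
    print(log_likelihood(data, mu, sigma) >= log_likelihood(data, mu + 0.1, sigma))
    print(log_likelihood(data, mu, sigma) >= log_likelihood(data, mu, sigma * 1.1))
```

Note that the ML estimate of $\sigma$ divides by $N$, not $N-1$, so it differs slightly from the unbiased sample standard deviation on small data sets.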

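ML Parameter Learning Example: Continuous Case, Linear Regression
• $Y$ has a Gaussian distribution whose mean depends linearly on $X$ and whose standard deviation $\sigma$ is fixed.
• Maximizing $P(y \mid x) = \frac{1}{\sqrt{2\pi}\,\sigma} \exp\left(-\frac{(y - (\theta_1 x + \theta_2))^2}{2\sigma^2}\right)$ over the data
• = minimizing $E = \sum_{j=1}^{N} \left(y_j - (\theta_1 x_j + \theta_2)\right)^2$.
• This quantity is the sum of squared errors. Thus in this case, ML $\Leftrightarrow$ Least Mean-Square (LMS).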
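Minimizing the sum of squared errors for a straight line has a closed-form solution. The sketch below assumes the model mean is $\theta_1 x + \theta_2$ (slope $\theta_1$, intercept $\theta_2$); this parameterization, the function names, and the data are illustrative assumptions.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = theta1 * x + theta2 (ML under fixed-variance Gaussian noise)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    theta1 = sxy / sxx                   # slope
    theta2 = mean_y - theta1 * mean_x    # intercept
    return theta1, theta2


def sum_squared_errors(xs, ys, theta1, theta2):
    """E = sum_j (y_j - (theta1 * x_j + theta2))^2."""
    return sum((y - (theta1 * x + theta2)) ** 2 for x, y in zip(xs, ys))


if __name__ == "__main__":
    xs = [0.0, 1.0, 2.0, 3.0]
    ys = [1.1, 2.9, 5.2, 6.8]  # made-up noisy samples of roughly y = 2x + 1
    t1, t2 = fit_line(xs, ys)
    print(f"theta1 = {t1:.3f}, theta2 = {t2:.3f}, "
          f"E = {sum_squared_errors(xs, ys, t1, t2):.4f}")
```

The fixed standard deviation does not affect where the minimum lies, which is why it drops out of the fitting step.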

Bayesian Parameter Learning
• The ML approximation is deficient with small data sets.
  • E.g., the ML estimate after observing one cherry is 100% cherry.
• Bayesian parameter learning:
  • Place a hypothesis prior over the possible values of the parameters.
  • Update this distribution as data arrive.

Bayesian Learning of Parameter $\theta$
• The density becomes more peaked as the number of samples increases.
• Despite different prior distributions, the posterior densities are virtually identical given a large set of data.

Bayesian Parameter Learning Example: Beta Distribution (Candy Example Revisited)
• In the Bayesian view, $\theta$ is the value of a random variable $\Theta$.
• $P(\Theta)$ is a continuous distribution.
  • The uniform density is one candidate.
  • Another possibility is to use beta distributions.
• The beta distribution has two hyperparameters $a$ and $b$, and is given by $\mathrm{beta}[a,b](\theta) = \alpha\, \theta^{a-1} (1-\theta)^{b-1}$, where $\alpha$ is a normalizing constant.
  • Mean: $a/(a+b)$.
  • A larger $a$ suggests that $\theta$ is closer to 1 than to 0.
  • More peaked when $a+b$ is large, suggesting greater certainty about the value of $\Theta$.

Beta Distribution
• $\mathrm{beta}[a,b](\theta) = \alpha\, \theta^{a-1} (1-\theta)^{b-1}$

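Bayesian Parameter Learning Example: Property of the Beta Distribution
• If $\Theta$ has a prior $\mathrm{beta}[a,b]$, then the posterior distribution for $\Theta$ is also a beta distribution.
• $P(\theta \mid d = \text{cherry}) = \alpha\, P(d = \text{cherry} \mid \theta)\, P(\theta) = \alpha'\, \theta \cdot \mathrm{beta}[a,b](\theta) = \alpha'\, \theta \cdot \theta^{a-1} (1-\theta)^{b-1} = \alpha'\, \theta^{a} (1-\theta)^{b-1} = \mathrm{beta}[a+1,b](\theta)$
• The beta distribution is called the conjugate prior for the family of distributions for a Boolean variable.
• $a$ and $b$ act as virtual counts.
  • A prior $\mathrm{beta}[a,b]$ behaves as if we had started from the uniform prior $\mathrm{beta}[1,1]$ and seen $a-1$ cherry and $b-1$ lime candies.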
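Because the beta family is conjugate, Bayesian updating reduces to incrementing the hyperparameters. A minimal sketch, assuming $a$ counts cherries and $b$ counts limes as in the candy example; the function names are illustrative.

```python
def beta_update(a, b, observations):
    """Update beta[a, b] hyperparameters: +1 to a per cherry, +1 to b per lime."""
    for obs in observations:
        if obs == "cherry":
            a += 1
        elif obs == "lime":
            b += 1
        else:
            raise ValueError(f"unknown observation: {obs!r}")
    return a, b


def beta_mean(a, b):
    """Mean of beta[a, b], i.e. the predicted probability that the next candy is cherry."""
    return a / (a + b)


if __name__ == "__main__":
    a, b = 1, 1  # uniform prior beta[1, 1]
    a, b = beta_update(a, b, ["cherry"])
    # One cherry gives beta[2, 1]; the mean is 2/3 rather than the ML estimate of 1.0.
    print((a, b), beta_mean(a, b))
```

This shows how the prior tempers the small-sample problem of ML: a single cherry moves the estimate toward cherry without jumping to certainty.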

Density Estimation
• The commonly used parametric densities are unimodal (have a single local maximum), whereas many practical problems involve multimodal densities.
• Nonparametric procedures can be used with arbitrary distributions, without assuming that the forms of the underlying densities are known.
• There are two types of nonparametric methods:
  • Estimating the class-conditional density $p(x \mid \omega_j)$.
  • Bypassing density estimation and going directly to a posteriori probability estimation.

Density Estimation: Basic Idea
• The probability that a vector $x$ will fall in a region $R$ is $P = \int_R p(x')\, dx'$ (1)
• $P$ is a smoothed (or averaged) version of the density function $p(x)$. If we have a sample of size $n$, the probability that $k$ of the points fall in $R$ is $P_k = \binom{n}{k} P^k (1-P)^{n-k}$ (2)
• and the expected value for $k$ is $E(k) = nP$ (3)

Density Estimation: Basic Idea (continued)
• The ML estimate of $P$ is $\hat{P} = k/n$.
• Therefore, the ratio $k/n$ is a good estimate for the probability $P$ and hence for the density function $p$.
• If $p(x)$ is continuous and the region $R$ is so small that $p$ does not vary significantly within it, we can write $\int_R p(x')\, dx' \approx p(x)\, V$ (4), where $x$ is a point within $R$ and $V$ is the volume enclosed by $R$.
• Combining equations (1), (3) and (4) yields $p(x) \approx \frac{k/n}{V}$.

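Parzen Windows
• The Parzen-window approach to estimating densities assumes that the region $R_n$ is a $d$-dimensional hypercube with edge length $h_n$, so its volume is $V_n = h_n^d$.
• Define the window function $\varphi(u) = 1$ if $|u_j| \le 1/2$ for $j = 1, \dots, d$, and $\varphi(u) = 0$ otherwise.
• $\varphi((x - x_i)/h_n)$ is equal to unity if $x_i$ falls within the hypercube of volume $V_n$ centered at $x$, and equal to zero otherwise.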

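Parzen Windows (continued)
• The number of samples in this hypercube is $k_n = \sum_{i=1}^{n} \varphi\left(\frac{x - x_i}{h_n}\right)$.
• By substituting $k_n$ in equation (7), we obtain the following estimate: $p_n(x) = \frac{1}{n} \sum_{i=1}^{n} \frac{1}{V_n}\, \varphi\left(\frac{x - x_i}{h_n}\right)$.
• $p_n(x)$ estimates $p(x)$ as an average of functions of $x$ and the samples $x_i$ ($i = 1, \dots, n$). These functions $\varphi$ can be general.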
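The hypercube Parzen estimate amounts to counting the samples inside a cube centered at the query point and dividing by $nV$. The sketch below assumes points are given as equal-length tuples of floats; the names `hypercube_window` and `parzen_hypercube` and the sample data are illustrative.

```python
def hypercube_window(u):
    """Window function: 1 if every coordinate satisfies |u_j| <= 1/2, else 0."""
    return 1 if all(abs(uj) <= 0.5 for uj in u) else 0


def parzen_hypercube(x, samples, h):
    """Estimate p(x) as (k/n)/V with a d-dimensional hypercube of edge length h."""
    d = len(x)
    n = len(samples)
    volume = h ** d
    # k = number of samples falling inside the hypercube centered at x
    k = sum(
        hypercube_window([(a - b) / h for a, b in zip(x, xi)])
        for xi in samples
    )
    return k / (n * volume)


if __name__ == "__main__":
    samples = [(0.0, 0.0), (0.2, 0.1), (2.0, 2.0)]  # made-up 2-D samples
    # Two of the three samples lie in the unit square centered at the origin.
    print(parzen_hypercube((0.0, 0.0), samples, h=1.0))
```

The edge length $h$ controls the trade-off: a large cube averages over many samples and blurs the estimate, while a small one gives a spiky estimate that is zero wherever no sample happens to fall.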

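Illustration of Parzen Window
• The behavior of the Parzen-window method.
• Case where $p(x) \sim N(0,1)$.
• Let $\varphi(u) = \frac{1}{\sqrt{2\pi}} \exp(-u^2/2)$ and $h_n = h_1/\sqrt{n}$ ($n > 1$), where $h_1$ is a known parameter.
• Thus $p_n(x) = \frac{1}{n} \sum_{i=1}^{n} \frac{1}{h_n}\, \varphi\left(\frac{x - x_i}{h_n}\right)$ is an average of normal densities centered at the samples $x_i$.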
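The Gaussian-window estimate can be sketched in a few lines. This is an illustration under the stated assumptions of a one-dimensional problem, a standard normal window, and a width that shrinks as $h_1/\sqrt{n}$; the function names and the sample values are made up for the example.

```python
import math


def gaussian_window(u):
    """Standard normal density used as the window function."""
    return math.exp(-0.5 * u * u) / math.sqrt(2 * math.pi)


def parzen_gaussian(x, samples, h1=1.0):
    """1-D Parzen estimate with a Gaussian window and width h_n = h1 / sqrt(n)."""
    n = len(samples)
    h_n = h1 / math.sqrt(n)
    return sum(gaussian_window((x - xi) / h_n) for xi in samples) / (n * h_n)


if __name__ == "__main__":
    samples = [-1.0, 0.5, 2.0]  # made-up observations
    for x in (-2.0, -1.0, 0.0, 1.0, 2.0):
        print(f"p_n({x:+.1f}) = {parzen_gaussian(x, samples):.4f}")
```

Because each term is itself a normalized density, the estimate integrates to 1 for any choice of $h_1$; the parameter only controls how smooth the result is.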

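Numerical Results
• For $n = 1$ and $h_1 = 1$, the estimate is a single normal density centered on the first sample: $p_1(x) = \varphi(x - x_1) = \frac{1}{\sqrt{2\pi}} e^{-(x - x_1)^2/2}$, i.e. $N(x_1, 1)$.
• For $n = 10$ and $h = 0.1$, the contributions of the individual samples are clearly observable.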

Analogous results are also obtained in two dimensions, as illustrated.

Case where $p(x) = \lambda_1\, U(a,b) + \lambda_2\, T(c,d)$ (unknown density): a mixture of a uniform and a triangle density.

Summary
• Full Bayesian learning gives the best possible predictions but is intractable.
• MAP learning balances complexity with accuracy on the training data.
• The ML approximation assumes a uniform prior, which is acceptable for large data sets.
• Parameter estimation is often used.