System Combination

Explore methods like parse hybridization, constituent voting, naive Bayes, and parser switching to improve NLP parsing results. Experiment with bagging and boosting in parser training and evaluate their impact on parsing performance.

System Combination LING 572 Fei Xia 01/31/06

Papers • (Henderson and Brill, EMNLP-1999): Exploiting Diversity in NLP: Combining Parsers • (Henderson and Brill, ANLP-2000): Bagging and Boosting a Treebank Parser

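Task: combine f1, f2, …, fm into a single f

Paper #1: different learners ML1, ML2, …, MLm produce f1, f2, …, fm, which are combined into f

Paper #2 (bagging): the same learner ML produces f1, f2, …, fm, which are combined into f

Scenario: ML1, ML2, …, MLm produce f1, f2, …, fm, which are combined into f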

Three parsers • Collins (1997) • Charniak (1997) • Ratnaparkhi (1997)

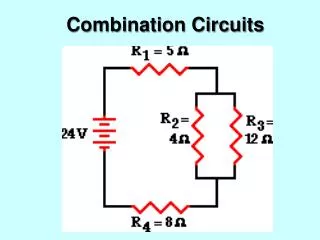

Major strategies • Parse hybridization: combine substructures of the input parses to produce a better parse. • Parser switching: for each x, f(x) is one of the fi(x)

Parse hybridization: Method 1 • Constituent voting: • Include a constituent if it appears in the output of a majority of the parsers. • It requires no training. • All parsers are treated equally.
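Constituent voting can be sketched in a few lines. This is a minimal illustration, assuming each parse is given as a set of (label, start, end) constituents; that encoding, the function name `constituent_voting`, and the toy parses are assumptions for the example, not taken from the slides.

```python
from collections import Counter

# A constituent is modeled as (label, start, end). This encoding is an
# assumption made for illustration only.
def constituent_voting(parses):
    """Keep every constituent proposed by a strict majority of the parsers."""
    votes = Counter()
    for constituents in parses:
        votes.update(set(constituents))  # each parser votes once per constituent
    threshold = len(parses) / 2
    return {c for c, n in votes.items() if n > threshold}

# Three toy parses of the same five-word sentence.
parses = [
    {("S", 0, 5), ("NP", 0, 2), ("VP", 2, 5), ("NP", 3, 5)},
    {("S", 0, 5), ("NP", 0, 2), ("VP", 2, 5), ("PP", 3, 5)},
    {("S", 0, 5), ("NP", 0, 1), ("VP", 2, 5), ("NP", 3, 5)},
]
hybrid = constituent_voting(parses)
```

In this toy input, the constituents proposed by only one parser, ("PP", 3, 5) and ("NP", 0, 1), are dropped, and no training step is involved.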

Parse hybridization: Method 2 • Naïve Bayes • Y=π(c) is a binary function that returns true when c should be included in the hypothesis. • Xi=Mi(c) is a binary function that returns true when parser i suggests c should be in the parse.
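One way to read the Naïve Bayes method is as a standard binary Naïve Bayes classifier over the parsers' votes: include c when P(Y=true)·∏ P(Xi | Y=true) exceeds P(Y=false)·∏ P(Xi | Y=false). The sketch below follows that reading. The function names, the add-one smoothing (`alpha`), and the toy training examples are assumptions for illustration; the slides do not specify them.

```python
from math import log

def train_naive_bayes(examples, num_parsers, alpha=1.0):
    """examples: list of (votes, in_gold); votes is a tuple of bools, one per parser.
    alpha is an add-alpha smoothing constant (an assumption, not from the slides)."""
    class_count = {True: 0, False: 0}
    # vote_count[y][i]: how often parser i voted True when the gold answer was y
    vote_count = {True: [0] * num_parsers, False: [0] * num_parsers}
    for votes, in_gold in examples:
        class_count[in_gold] += 1
        for i, v in enumerate(votes):
            vote_count[in_gold][i] += int(v)
    total = len(examples)
    prior = {y: (class_count[y] + alpha) / (total + 2 * alpha) for y in (True, False)}
    likelihood = {
        y: [(vote_count[y][i] + alpha) / (class_count[y] + 2 * alpha)
            for i in range(num_parsers)]
        for y in (True, False)
    }
    return prior, likelihood

def include_constituent(votes, prior, likelihood):
    """Return True when the 'include' class has the higher log posterior."""
    score = {}
    for y in (True, False):
        s = log(prior[y])
        for i, v in enumerate(votes):
            p = likelihood[y][i]  # P(parser i votes True | Y = y)
            s += log(p if v else 1.0 - p)
        score[y] = s
    return score[True] > score[False]

# Toy development data: (votes of three parsers, constituent is in the gold parse)
examples = [
    ((True, True, True), True),
    ((True, True, False), True),
    ((True, False, True), True),
    ((False, True, False), False),
    ((False, False, True), False),
    ((True, False, False), False),
]
prior, likelihood = train_naive_bayes(examples, num_parsers=3)
```

Unlike constituent voting, this classifier can weight parsers differently. With the toy data above, a constituent backed only by the second and third parsers is rejected even though it has a majority, because the first parser's vote is the most informative.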

Parse hybridization • If the number of votes required by constituent voting is greater than half of the parsers, the resulting structure has no crossing constituents. • What will happen if the input parsers disagree often?
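The no-crossing property follows because any two constituents that each receive more than half of the votes must share at least one parser, and that parser's own tree contains no crossing brackets. The sketch below illustrates the contrast with a threshold of exactly half. Spans are assumed to be half-open (start, end) pairs, and the helper names and toy span sets are hypothetical.

```python
from collections import Counter
from itertools import combinations

def crosses(a, b):
    """True if spans a and b overlap without one containing the other."""
    return a[0] < b[0] < a[1] < b[1] or b[0] < a[0] < b[1] < a[1]

def vote(span_sets, min_votes):
    """Keep spans proposed by at least min_votes parsers."""
    counts = Counter(s for spans in span_sets for s in set(spans))
    return {s for s, n in counts.items() if n >= min_votes}

def has_crossing(spans):
    return any(crosses(a, b) for a, b in combinations(spans, 2))

# Four toy parsers that split evenly on the internal bracketing.
span_sets = [
    {(0, 5), (0, 3)},
    {(0, 5), (0, 3)},
    {(0, 5), (2, 5)},
    {(0, 5), (2, 5)},
]
half = vote(span_sets, min_votes=2)      # exactly half of the parsers
majority = vote(span_sets, min_votes=3)  # more than half of the parsers
```

Here `half` contains both (0, 3) and (2, 5), which cross, while `majority` keeps only (0, 5). The same example shows the cost of frequent disagreement: the majority result is well formed but contains very few constituents.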

Parser switching: Method 1 • Similarity switching • Intuition: choose the parse that is most similar to the other parses. • Algorithm: • For each parse πi, create the constituent set Si. • Score each πi by how much Si overlaps with the constituent sets of the other parses. • Choose the parse with the highest score. • No training is required.
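The scoring formula itself is not preserved in this transcript. The sketch below assumes one natural choice, the total number of constituents a parse shares with each of the other parses (the sum of |Si ∩ Sj| over j ≠ i); treat that formula, the function name, and the toy constituent sets as assumptions.

```python
def similarity_switching(constituent_sets):
    """Return (index of the chosen parse, list of scores).

    Assumed score for parse i: sum over j != i of |S_i & S_j|.
    """
    scores = []
    for i, s_i in enumerate(constituent_sets):
        score = sum(len(s_i & s_j)
                    for j, s_j in enumerate(constituent_sets) if j != i)
        scores.append(score)
    # max() returns the first index on ties, so earlier parsers win ties here.
    best = max(range(len(scores)), key=scores.__getitem__)
    return best, scores

# Constituents are (label, start, end) tuples, an illustrative encoding.
constituent_sets = [
    {("S", 0, 5), ("NP", 0, 2), ("VP", 2, 5), ("NP", 3, 5)},
    {("S", 0, 5), ("NP", 0, 2), ("VP", 2, 5), ("PP", 3, 5)},
    {("S", 0, 5), ("NP", 0, 1), ("VP", 2, 5), ("NP", 3, 5)},
]
best, scores = similarity_switching(constituent_sets)
```

The output is always one of the input parses in full, which is what distinguishes switching from hybridization.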

Parser switching: Method 2 • Naïve Bayes

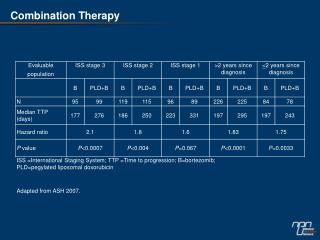

Experiments • Training data: WSJ except sections 22 and 23 • Development data: Section 23 (used for training Naïve Bayes) • Test data: Section 22

Robustness testing • 90.43, 90.74, 91.25, 91.25 • Add a 4th parser: F-measure about 67.6 • Performance remains the same except for constituent voting

Summary of 1st paper • Combining parsers produces good results: 89.67% → 91.25% • Different methods of combining: • Parse hybridization • Constituent voting • Naïve Bayes • Parser switching • Similarity switching • Naïve Bayes

Experiment settings • Parser: Collins’s Model 2 (1997) • Training data: sections 01-21 • Test data: Section 23

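Bagging • From the training data {(s,t)}, the same learner ML is trained on each bag to produce f1, f2, …, fm, which are combined into f • Combining method: constituent voting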
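The resampling step of bagging can be sketched as follows, assuming the standard bootstrap: each bag is drawn from the training data with replacement and has the same size as the original set. The names `make_bags`, `bagged_parsers`, and `toy_train` are hypothetical, and `toy_train` is only a stand-in for a real parser trainer.

```python
import random

def make_bags(training_data, num_bags, seed=0):
    """Draw num_bags bootstrap samples, each the size of the training data."""
    rng = random.Random(seed)
    n = len(training_data)
    return [[rng.choice(training_data) for _ in range(n)]
            for _ in range(num_bags)]

def bagged_parsers(training_data, train, num_bags, seed=0):
    """Train one model per bag with the same learner `train`."""
    return [train(bag) for bag in make_bags(training_data, num_bags, seed)]

def toy_train(bag):
    """Stand-in learner (hypothetical): memorises the (sentence, tree) pairs it saw."""
    seen = dict(bag)
    return lambda sentence: seen.get(sentence)

# Toy (sentence, tree) pairs standing in for treebank entries.
training_data = [(f"s{i}", f"t{i}") for i in range(10)]
bags = make_bags(training_data, num_bags=15)
parsers = bagged_parsers(training_data, toy_train, num_bags=15)
```

The outputs of the resulting models would then be merged per sentence with the combining method named on the slide, constituent voting.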

Experiment results • Baseline (no bagging): 88.63 • Initial (one bag): 88.38 • Final (15 bags): 89.17

Boosting • The training sample is reweighted at each round; the same learner ML is trained on each weighted sample to produce f1, f2, …, fT, which are combined into f
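The reweighting loop can be illustrated with a generic AdaBoost.M1-style update. This is an assumption for illustration only: the slides do not say which boosting variant or which per-sentence correctness criterion was used, and the binary `correct` flags below are a simplification. The names `weighted_sample` and `reweight` are hypothetical.

```python
import random

def weighted_sample(data, weights, seed=0):
    """Draw a sample of the same size as data, with probability proportional to weight."""
    rng = random.Random(seed)
    return rng.choices(data, weights=weights, k=len(data))

def reweight(weights, correct):
    """One AdaBoost.M1-style update (assumed variant).

    Weights of correctly handled examples are multiplied by
    beta = error / (1 - error), then all weights are renormalised.
    """
    total = sum(weights)
    error = sum(w for w, ok in zip(weights, correct) if not ok) / total
    if error == 0 or error >= 0.5:
        return list(weights), error  # AdaBoost.M1 stops in these cases
    beta = error / (1 - error)
    new = [w * beta if ok else w for w, ok in zip(weights, correct)]
    norm = sum(new)
    return [w / norm for w in new], error

data = ["s0", "s1", "s2", "s3"]
weights = [0.25, 0.25, 0.25, 0.25]
correct = [True, True, True, False]  # the current model fails only on s3
new_weights, error = reweight(weights, correct)
next_sample = weighted_sample(data, new_weights)
```

After the update, the one example the current model got wrong carries half of the total weight, so the next weighted sample concentrates on it.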

Boosting results • Boosting does not help: 88.63 → 88.84

Summary • Combining parsers produces good results: 89.67% → 91.25% • Bagging helps: 88.63% → 89.17% • Boosting does not help (in this case): 88.63% → 88.84%