Nonlinear Empirical Models

Nonlinear Empirical Models J. McLellan

Neural Network Models of Process Behaviour
• generally modeling input-output behaviour
• empirical models - no attempt to model physical structure
• estimated from plant data

Neural Networks
• structure motivated by physiological structure of the brain
• individual nodes or cells - “neurons”, sometimes called “perceptrons”
• neuron characteristics - notion of “firing” or threshold behaviour

Stages of Neural Network Model Development
• data collection - training set, validation set
• specification / initialization - structure of network, initial values
• “learning” or training - estimation of parameters
• validation - ability to predict a new data set collected under the same conditions

Data Collection
• expected range and point of operation
• size of input perturbation signal
• type of input perturbation signal
  - random input sequence?
  - number of levels (two or more?)
• validation data set

Model Structure
• numbers and types of nodes
  - input, “hidden”, output
• depends on type of neural network
  - e.g., Feedforward Neural Network
  - e.g., Recurrent Neural Network
• types of neuron functions - threshold behaviour
  - e.g., sigmoid function, ordinary differential equation

“Learning” (Training)
• estimation of network parameters - weights, thresholds and bias terms
• nonlinear optimization problem
• objective function - typically sum of squares of output prediction error
• optimization algorithm - gradient-based method or variation

Validation
• use estimated NN model to predict outputs for a new data set
• if prediction is unacceptable, “re-train” the NN model with modifications - e.g., number of neurons
• diagnostics
  - sum of squares of prediction error
  - R² - coefficient of determination
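
The two validation diagnostics listed above can be sketched in a few lines of plain Python. The function names and the sample numbers are illustrative assumptions, not taken from the slides.

```python
def sum_squared_error(y_true, y_pred):
    """Sum of squares of the prediction errors."""
    return sum((yt - yp) ** 2 for yt, yp in zip(y_true, y_pred))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SSE / total sum of squares."""
    mean_y = sum(y_true) / len(y_true)
    total_ss = sum((yt - mean_y) ** 2 for yt in y_true)
    return 1.0 - sum_squared_error(y_true, y_pred) / total_ss


# Hypothetical validation set: measured outputs and NN model predictions.
y_measured = [1.0, 2.0, 3.0, 4.0]
y_predicted = [1.1, 1.9, 3.2, 3.8]
sse = sum_squared_error(y_measured, y_predicted)
r2 = r_squared(y_measured, y_predicted)
```

Both numbers should be computed on the validation set, not the training set, since the point is to judge prediction of new data.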

Feedforward Neural Networks
• signals flow forward from input through hidden nodes to output - no internal feedback
• input nodes - receive external inputs (e.g., controls) and scale to [0,1] range
• hidden nodes - collect weighted sums of inputs from other nodes and act on the sum with a nonlinear function

Feedforward Neural Networks (FNN)
• output nodes - similar to hidden nodes BUT they produce signals leaving the network (outputs)
• FNN has one input layer, one output layer, and can have many hidden layers

FNN - Neuron Model
• ith neuron in layer l+1
• labelled quantities: threshold value, weight, state of neuron, activation function
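
One common way to write a neuron model with these labelled quantities is given below. This is a sketch under assumed notation: $x_j^{l}$ for the state (output) of neuron $j$ in layer $l$, $\theta_i^{l+1}$ for the threshold and $f$ for the activation function are symbols chosen here; only the weight notation $w_{ij}^{l+1}$ follows the parameter definitions in the slides.

$$
x_i^{l+1} = f\!\left( \sum_{j} w_{ij}^{l+1}\, x_j^{l} + \theta_i^{l+1} \right)
$$

With all inputs $x_j^{l}$ equal to zero the output reduces to $f(\theta_i^{l+1})$, which matches the description of the threshold as the term that fixes the function value when the inputs to the neuron are zero.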

FNN parameters
• weights $w_{ij}^{l+1}$ - weight on output from jth neuron in layer l entering neuron i in layer l+1
• threshold - determines value of function when inputs to neuron are zero
• bias - provision for additional constants to be added

FNN Activation Function
• typically sigmoidal function
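
As an illustration, the logistic function $1/(1+e^{-x})$ is one widely used sigmoidal activation; whether it is the exact form intended on the slide is an assumption. The sketch below evaluates it in a way that avoids overflow for large negative arguments.

```python
import math


def sigmoid(x):
    """Logistic sigmoid 1 / (1 + exp(-x)), written to avoid overflow."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)  # safe: x < 0, so exp(x) is at most 1
    return z / (1.0 + z)


# The output rises smoothly from 0 to 1, giving the "threshold" behaviour.
values = [sigmoid(x) for x in (-5.0, -1.0, 0.0, 1.0, 5.0)]
```

The output is bounded in (0, 1) and is steepest around zero, which is what produces the soft "firing" behaviour of a neuron.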

FNN Structure
[Figure: input layer, hidden layer, output layer]
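
The layered structure can be sketched as a forward pass in which each layer feeds the next. The network size, the weight values and the use of a logistic activation in every layer (including the output layer) are assumptions made for illustration only.

```python
import math


def sigmoid(x):
    """Logistic activation (assumed form of the sigmoidal function)."""
    return 1.0 / (1.0 + math.exp(-x))


def layer_output(inputs, weights, thresholds):
    """Each neuron applies the activation to its weighted input sum plus threshold."""
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + theta)
        for row, theta in zip(weights, thresholds)
    ]


def fnn_forward(inputs, layers):
    """Propagate signals forward through a list of (weights, thresholds) layers."""
    signal = inputs
    for weights, thresholds in layers:
        signal = layer_output(signal, weights, thresholds)
    return signal


# Hypothetical network: 2 inputs, 1 hidden layer with 2 nodes, 1 output.
hidden = ([[0.5, -0.3], [0.8, 0.2]], [0.1, -0.1])
output = ([[1.0, -1.0]], [0.0])
y = fnn_forward([0.2, 0.7], [hidden, output])
```

Each row of a weight matrix holds the weights entering one neuron, so adding hidden layers only means appending more `(weights, thresholds)` pairs to the list.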

Mathematical Basis
• approximation of functions
• e.g., Cybenko, 1989 - Mathematics of Control, Signals, and Systems
• approximation to arbitrary accuracy given a sufficiently large number of sigmoidal nodes

Training FNNs
• calculate sum of squares of output prediction error, E
• take current iterates of parameters, calculate forward through the network and calculate E
• update estimates of weights working backwards - “backpropagation”
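
A minimal sketch of these three steps for a network with one hidden layer is shown below. The linear output node, the step size, the network size and the toy data set (y = x²) are all assumptions chosen to keep the example small; this is plain batch gradient descent, not a tuned training routine.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def forward(x, W1, b1, W2, b2):
    """Return hidden-node outputs and the (linear) network output."""
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(W1, b1)]
    y = sum(w * hi for w, hi in zip(W2, h)) + b2
    return h, y


def sse(data, W1, b1, W2, b2):
    """Objective E: sum of squares of output prediction error."""
    return sum((forward(x, W1, b1, W2, b2)[1] - t) ** 2 for x, t in data)


def train_step(data, W1, b1, W2, b2, rate):
    """One gradient-descent update with gradients found by backpropagation."""
    gW1 = [[0.0] * len(row) for row in W1]
    gb1 = [0.0] * len(b1)
    gW2 = [0.0] * len(W2)
    gb2 = 0.0
    for x, t in data:
        h, y = forward(x, W1, b1, W2, b2)          # forward calculation
        d_out = 2.0 * (y - t)                      # dE/dy at the output node
        gb2 += d_out
        for i, hi in enumerate(h):
            gW2[i] += d_out * hi
            d_hid = d_out * W2[i] * hi * (1.0 - hi)  # error passed backwards
            gb1[i] += d_hid
            for j, xj in enumerate(x):
                gW1[i][j] += d_hid * xj
    W1 = [[w - rate * g for w, g in zip(row, grow)] for row, grow in zip(W1, gW1)]
    b1 = [b - rate * g for b, g in zip(b1, gb1)]
    W2 = [w - rate * g for w, g in zip(W2, gW2)]
    return W1, b1, W2, b2 - rate * gb2


random.seed(0)
n_hidden = 3
W1 = [[random.uniform(-0.5, 0.5)] for _ in range(n_hidden)]
b1 = [random.uniform(-0.5, 0.5) for _ in range(n_hidden)]
W2 = [random.uniform(-0.5, 0.5) for _ in range(n_hidden)]
b2 = 0.0
data = [([k / 10.0], (k / 10.0) ** 2) for k in range(11)]  # toy data, y = x^2

initial_error = sse(data, W1, b1, W2, b2)
for _ in range(500):
    W1, b1, W2, b2 = train_step(data, W1, b1, W2, b2, rate=0.005)
final_error = sse(data, W1, b1, W2, b2)
```

The factor `hi * (1.0 - hi)` is the derivative of the logistic function, which is why the output-node error can be propagated back to the hidden weights using only quantities already computed in the forward pass.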

Estimation
• typically using a gradient-based optimization method
• make adjustments proportional to the (negative) gradient of E with respect to each parameter
• issues - highly over-parameterized models - potential for singularity
• e.g., Levenberg-Marquardt algorithm
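
In symbols, a plain gradient step and a Levenberg-Marquardt step can be written as follows. The notation is assumed for illustration: $\theta$ collects all weights, thresholds and bias terms, $e_k = \hat{y}_k(\theta) - y_k$ is the prediction error for data point $k$, $J$ is the Jacobian with entries $J_{kp} = \partial \hat{y}_k / \partial \theta_p$, $\eta > 0$ is a step size and $\lambda \ge 0$ is a damping parameter.

$$
E(\theta) = \sum_{k} e_k^2, \qquad
\Delta\theta_{\text{gradient}} = -\eta\, \frac{\partial E}{\partial \theta}, \qquad
\Delta\theta_{\text{LM}} = -\left( J^{\mathsf T} J + \lambda I \right)^{-1} J^{\mathsf T} e
$$

In an over-parameterized network $J^{\mathsf T} J$ can be singular or nearly so; the $\lambda I$ term keeps the matrix invertible, which is one reason a Levenberg-Marquardt type method suits this problem.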

How to use FNN for modeling dynamic behaviour?
• structure of FNN suggests static model
• model dynamic behaviour as a nonlinear difference equation
• essentially a NARMAX model
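
In practice this means feeding the static network with lagged inputs and outputs and asking it to predict the next output. A minimal sketch of building such training pairs from recorded data follows; the function name, the lag convention and the sample data are assumptions.

```python
def build_regressors(u, y, n_u, n_y):
    """Pair lagged outputs and inputs with the next output.

    Each row is [y[k], ..., y[k-n_y+1], u[k], ..., u[k-n_u+1]];
    the matching target is y[k+1].
    """
    start = max(n_u, n_y) - 1
    rows, targets = [], []
    for k in range(start, len(y) - 1):
        past_y = [y[k - i] for i in range(n_y)]
        past_u = [u[k - i] for i in range(n_u)]
        rows.append(past_y + past_u)
        targets.append(y[k + 1])
    return rows, targets


# Hypothetical plant record: input sequence u and measured output y.
u = [0.0, 1.0, 0.0, 1.0, 1.0]
y = [0.0, 0.0, 0.5, 0.25, 0.6]
X, t = build_regressors(u, y, n_u=2, n_y=1)
```

Each row of `X` becomes one input vector for the network and the matching entry of `t` is the output it should reproduce, so a static FNN ends up representing a difference equation.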

Linear discrete time transfer function
• transfer function
• equivalent difference equation
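
As an illustration, a first-order discrete transfer function and its equivalent difference equation can be written as below. The coefficients $a$, $b_0$, $b_1$ are assumed symbols, and the choice of regressors ($y_k$, $u_k$, $u_{k-1}$ predicting $y_{k+1}$) is inferred from the signal labels of the first-order FNN example rather than stated in the slides.

$$
\frac{Y(z)}{U(z)} = \frac{b_0 z^{-1} + b_1 z^{-2}}{1 - a z^{-1}}
\quad\Longleftrightarrow\quad
y_{k+1} = a\, y_k + b_0\, u_k + b_1\, u_{k-1}
$$

Multiplying through by the denominator gives $y_k - a\,y_{k-1} = b_0 u_{k-1} + b_1 u_{k-2}$, and shifting the time index forward by one step yields the difference equation on the right.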

FNN Structure - 1st order linear example
[Figure: input layer, hidden layer, output layer; signals labelled $y_k$, $y_{k+1}$, $u_k$, $u_{k-1}$]

FNN model for 1st order linear example
• essentially modelling algebraic relationship between past and present inputs and outputs
• nonlinear activation function not required
• weights required - correspond to coefficients in discrete transfer function
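
A small check of this point: a single node with an identity (linear) activation, whose weights are set to the difference-equation coefficients, reproduces the linear model exactly. The model form $y_{k+1} = a\,y_k + b_0 u_k + b_1 u_{k-1}$ and the numerical values are assumptions for illustration.

```python
def simulate_difference_equation(u, a, b0, b1):
    """y[k+1] = a*y[k] + b0*u[k] + b1*u[k-1], starting from rest."""
    y = [0.0] * (len(u) + 1)
    for k in range(len(u)):
        u_prev = u[k - 1] if k > 0 else 0.0
        y[k + 1] = a * y[k] + b0 * u[k] + b1 * u_prev
    return y


def linear_neuron(inputs, weights, bias=0.0):
    """Single node with identity activation: just a weighted sum."""
    return sum(w * x for w, x in zip(weights, inputs)) + bias


a, b0, b1 = 0.8, 0.5, 0.2          # hypothetical transfer function coefficients
u = [1.0, 0.0, -1.0, 2.0, 0.5]
y = simulate_difference_equation(u, a, b0, b1)

weights = [a, b0, b1]              # weights equal the model coefficients
predictions = [
    linear_neuron([y[k], u[k], u[k - 1] if k > 0 else 0.0], weights)
    for k in range(len(u))
]
```

A sigmoidal activation would only distort this linear relationship, which is why it is not needed for the linear example.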

Applications of FNNs
• process modeling - bioreactors, pulp and paper
• nonlinear control
• data reconciliation
• fault detection
• some industrial applications - many academic (simulation) studies

“Typical dimensions”
• Dayal et al., 1994 - 3-state jacketed CSTR as a basis
• 700 data points in training set
• 6 inputs, 1 hidden layer with 6 nodes, 1 output

Advantages of Neural Net Models
• limited process knowledge required - but be careful (e.g., Dayal et al. paper)
• flexible - can model difficult relationships directly (e.g., inverse of a nonlinear control problem)

Disadvantages
• potential for large computational requirements - implications for real-time application
• highly over-parameterized
• limited insight into process structure
• amount of data required
• limited to range of data collection

Recurrent Neural Networks
• neurons contain differential equation model - 1st order linear + nonlinearity
• contain feedback and feedforward components
• can represent continuous dynamics
• e.g., You and Nikolaou, 1993

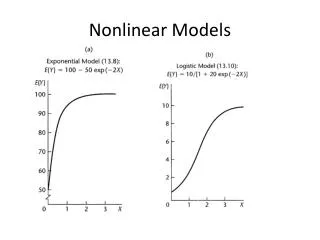

Nonlinear Empirical Model Representations
• Volterra Series (continuous and discrete)
• Nonlinear Auto-Regressive Moving Average with Exogenous Inputs (NARMAX)
• Cascade Models

Volterra Series Models
• higher-order convolution models
• continuous
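
For reference, the standard continuous-time Volterra series for a causal system, truncated after the second-order term, has the form below. The symbols $h_0$, $h_1$, $h_2$ for the kernels and $\tau$ for the integration variables are assumed notation.

$$
y(t) = h_0 + \int_{0}^{\infty} h_1(\tau)\, u(t-\tau)\, d\tau
+ \int_{0}^{\infty}\!\!\int_{0}^{\infty} h_2(\tau_1, \tau_2)\, u(t-\tau_1)\, u(t-\tau_2)\, d\tau_1\, d\tau_2 + \cdots
$$

The first integral is the ordinary linear convolution; each higher-order term is a convolution over products of the input at several past times.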

Volterra Series Model
• discrete time
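
A standard discrete-time counterpart replaces the integrals with sums over lagged inputs. The lag index starting at zero and the infinite upper limits are assumptions here; in practice the sums are truncated at a finite memory length.

$$
y_k = h_0 + \sum_{i=0}^{\infty} h_1(i)\, u_{k-i}
+ \sum_{i=0}^{\infty} \sum_{j=0}^{\infty} h_2(i, j)\, u_{k-i}\, u_{k-j} + \cdots
$$

The lower limit of zero expresses causality: the output at time $k$ depends only on present and past inputs.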

Volterra Series models
• can be estimated directly from data or derived from state space models
• causality - limits of sum or integration
• functions $h_i$ - referred to as the ith order kernel
• applications - typically second-order (e.g., Pearson et al., 1994 - binder)

NARMAX models
• nonlinear difference equation models
• typical form
• dependence on lagged y’s - autoregressive
• dependence on lagged u’s - moving average
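
A commonly quoted general NARMAX form is shown below. The lag orders $n_y$, $n_u$, $n_e$, the noise sequence $e_k$ and the nonlinear function $F$ are assumed notation; the slide's own "typical form" may omit the noise terms.

$$
y_k = F\!\left( y_{k-1}, \ldots, y_{k-n_y},\; u_{k-1}, \ldots, u_{k-n_u},\; e_{k-1}, \ldots, e_{k-n_e} \right) + e_k
$$

When $F$ is linear this reduces to the familiar ARMAX model; a feedforward neural network is one possible choice for $F$.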

NARMAX examples
• with products, cross-products
• 2nd order Volterra model
• as NARMAX model in u only, with second order terms
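
To make the last point concrete, a truncated second-order Volterra model is simply a polynomial in lagged inputs: linear terms plus products and cross-products. The sketch below evaluates it at one time step; the kernel values, the memory length and the convention that lags start at zero are assumptions.

```python
def volterra2(u, k, h0, h1, h2):
    """Second-order discrete Volterra output at time k.

    Memory length is len(h1); inputs before time 0 are taken as zero.
    """
    memory = len(h1)
    lagged = [u[k - i] if k - i >= 0 else 0.0 for i in range(memory)]
    linear = sum(h1[i] * lagged[i] for i in range(memory))
    quadratic = sum(
        h2[i][j] * lagged[i] * lagged[j]
        for i in range(memory)
        for j in range(memory)
    )
    return h0 + linear + quadratic


# Hypothetical kernels with a memory of two samples.
u = [1.0, 2.0, 3.0]
h1 = [0.5, 0.25]
h2 = [[0.1, 0.0], [0.0, 0.2]]
y2 = volterra2(u, 2, 0.0, h1, h2)
```

Because only lagged values of u appear, the model has no autoregressive part; it is a NARMAX model in u only.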

Nonlinear Cascade Models
• made from serial and parallel arrangements of static nonlinear and linear dynamic elements
• e.g., 1st order linear dynamic element fed into a “squaring” element
• obtain products of lagged inputs
• cf. second order Volterra term
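
The squaring example can be checked numerically. The sketch below assumes a first-order linear element of the form v[k] = a·v[k-1] + b·u[k-1] starting from rest (the coefficients and the input sequence are made up), squares its output, and compares the result with a double sum over products of lagged inputs.

```python
def first_order_linear(u, a, b):
    """v[k] = a*v[k-1] + b*u[k-1], starting from rest (v[0] = 0)."""
    v = [0.0] * len(u)
    for k in range(1, len(u)):
        v[k] = a * v[k - 1] + b * u[k - 1]
    return v


def cascade_square(u, a, b):
    """Linear dynamic element followed by a static squaring element."""
    return [vk ** 2 for vk in first_order_linear(u, a, b)]


def volterra_equivalent(u, a, b):
    """Same output as a double sum over products of lagged inputs.

    Kernel h2(i, j) = h(i) * h(j), where h(i) = b * a**(i - 1), i >= 1,
    is the impulse response of the linear element.
    """
    out = []
    for k in range(len(u)):
        total = 0.0
        for i in range(1, k + 1):
            for j in range(1, k + 1):
                total += (b * a ** (i - 1)) * (b * a ** (j - 1)) * u[k - i] * u[k - j]
        out.append(total)
    return out


u = [1.0, -0.5, 2.0, 0.0, 1.5]     # hypothetical input sequence
y_cascade = cascade_square(u, a=0.7, b=0.3)
y_volterra = volterra_equivalent(u, a=0.7, b=0.3)
```

The two sequences agree to rounding error, showing that this cascade is a second-order Volterra term whose kernel is the product of the linear element's impulse response with itself.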