Tier-1 Overview

Tier-1 Overview. Andrew Sansum 21 November 2007. Overview of Presentations. Morning Presentations Overview (Me) Not really overview – at request of Tony mainly MoU commitments CASTOR (Bonny) Storing the data and getting it to tape Grid Infrastructure (Derek Ross) Grid Services



Tier-1 Overview Andrew Sansum 21 November 2007

Overview of Presentations
• Morning Presentations
  • Overview (Me)
    • Not really an overview: at Tony's request, mainly MoU commitments
  • CASTOR (Bonny)
    • Storing the data and getting it to tape
  • Grid Infrastructure (Derek Ross)
    • Grid Services
    • dCache future
    • Grid Only Access
  • Fabric Talk (Martin Bly)
    • Procurements
    • Hardware infrastructure (inc. Local Network)
    • Operation
• Afternoon Presentations
  • Neil (RAL benefits)
  • Site Networking (Robin Tasker)
  • Machine Rooms (Graham Robinson)

What I’ll Cover
• Mainly going to cover MoU commitments
  • Response Times
  • Reliability
  • On-Call
  • Disaster planning
• Also cover staffing

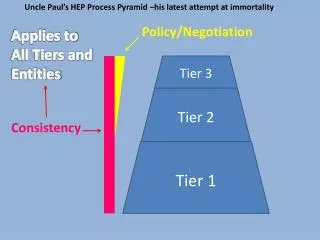

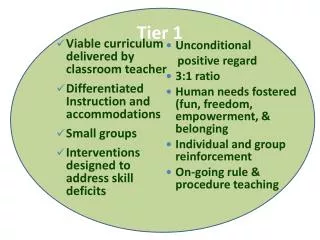

GRIDPP2 Team Organisation (organisation chart; the assignment of names to teams is not recoverable from the flattened text)
• Teams: Grid Services; Grid/exp Support; Fabric (H/W and OS); CASTOR SW/Robot
• Staff as listed on the chart: Ross, Condurache, Hodges, Klein (PPS), Vacancy, Bly, Wheeler, Vacancy, Thorne, White (OS support), Adams (HW support), Corney (GL), Strong (Service Manager), Folkes (HW Manager), deWitt, Jensen, Kruk, Ketley, Jackson (CASE), Prosser (Contractor) (Nominally 5.5 FTE)
• Project Management (Sansum/Gordon/(Kelsey)) (1.5 FTE)
• Database Support (0.5 FTE) (Brown)
• Machine Room operations (1.5 FTE)
• Networking Support (0.5 FTE)

Staff Evolution to GRIDPP3
• Level
  • GRIDPP2 (13.5 GRIDPP + 3.0 e-Science)
  • GRIDPP3 (17.0 GRIDPP + 3.4 e-Science)
• Main changes
  • Hardware repair effort increases from 1 to 2 FTE
  • New incident response team (2 FTE)
  • Extra CASTOR effort (0.5 FTE), but this is effort that has already been working on CASTOR unreported
  • Small changes elsewhere
• Main problem
  • We have injected 2 FTE of effort temporarily into CASTOR. The long-term GRIDPP3 plan funds less effort than current experience suggests we need.

WLCG/GRIDPP MoU Expectations
[1] Prime service hours are 08:00-18:00 during the working week of the centre, except public holidays.

Response Time
• Time to acknowledge fault ticket
• 12-48 hour response time outside prime shift
  • The on-call system should easily cover this, provided it is possible to classify problem tickets automatically by the level of service required.
• Cover during prime shift is more challenging (2-4 hours) but is already a routine task for the Admin on Duty
• To hit the availability target, response must be much faster (2 hours or less)
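The response-time rule above can be sketched as a small lookup. This is only an illustration: it uses the prime service hours from the MoU footnote (08:00-18:00 on working days) and the two ranges quoted on this slide, ignores public holidays, and the names `in_prime_shift` and `response_window_hours` are hypothetical rather than part of any Tier-1 tooling.

```python
from datetime import datetime, time

# Prime service hours as stated in the MoU footnote (assumed Monday to Friday).
PRIME_START = time(8, 0)
PRIME_END = time(18, 0)

# Response-time ranges quoted on the slide, in hours: (fastest, slowest).
RESPONSE_HOURS = {True: (2, 4), False: (12, 48)}


def in_prime_shift(ts: datetime) -> bool:
    """True if ts falls in 08:00-18:00 on a weekday (public holidays ignored)."""
    return ts.weekday() < 5 and PRIME_START <= ts.time() < PRIME_END


def response_window_hours(ts: datetime) -> tuple:
    """Return the (fastest, slowest) acknowledgement window for a ticket raised at ts."""
    return RESPONSE_HOURS[in_prime_shift(ts)]


if __name__ == "__main__":
    for raised in (datetime(2007, 11, 21, 10, 0), datetime(2007, 11, 24, 10, 0)):
        print(raised.isoformat(), response_window_hours(raised))
```

A real classifier would also need the per-service levels from the MoU table, which are not reproduced in this transcript.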

Reliability
• Have made good progress in the last 12 months
  • Prioritised issues affecting SAM test failures.
  • Introduced “issue tracking” and weekly reviews of outstanding issues.
  • Introduced resilience into trouble spots (but more still to do)
  • Moved services to hardware of appropriate capacity, separated services, etc.
  • Introduced new team role: “Admin on Duty”. Monitors farm operation, ticket progression and EGEE broadcast info.
• Best Tier-1 averaged over the last 3 months (other than CERN).

MoU Commitments (Availability)
• Really reliability (availability while scheduled up)
• Still tough: 97-99% service availability will be hard (1% is just 87 hours per year).
  • OPN reliability is predicted to be 98% without resilience; the site SJ5 connection is much better (Robin will discuss).
  • Most faults (75%) will fall outside normal working hours
  • Software components are still changing (e.g. CASTOR upgrades, WMS).
  • Many faults in 2008 will be “new”, only emerging as WLCG ramps up to full load.
  • Emergent faults can take a long time (days) to diagnose and fix
• To improve on current availability we will need to:
  • Improve automation
  • Speed up manual recovery process
  • Improve monitoring further
  • Provide on-call
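The "1% is just 87 hours per year" figure follows from simple arithmetic on a 365-day year. The sketch below works it through for the 97-99% range; the function name `downtime_budget_hours` is hypothetical, and it assumes the target is measured over all 8760 hours of the year rather than over scheduled uptime only.

```python
HOURS_PER_YEAR = 365 * 24  # 8760 hours, ignoring leap years


def downtime_budget_hours(availability_percent: float) -> float:
    """Hours per year a service may be down while still meeting the target."""
    return HOURS_PER_YEAR * (100.0 - availability_percent) / 100.0


if __name__ == "__main__":
    for target in (97, 98, 99):
        print(f"{target}% availability -> {downtime_budget_hours(target):.1f} hours/year")
```

At 99% the budget is 87.6 hours, roughly three and a half days in a year, so a single emergent fault that takes days to diagnose can consume most of it.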

On-Call
• On-call will be essential in order to meet response and availability targets.
• On-call project now running (Matt Hodges); target is to have on-call operational by March 2008.
• Automation, recovery and monitoring are all important parts of the on-call system. Avoid callouts by avoiding problems.
• May be possible to have some weekend on-call cover before March for some components.
• On-call will continue to evolve after March as we learn from experience.

Disaster Planning (I)
• Extreme end of the availability problem. A risk analysis exists, but it is aging and not fully developed.
• Highest impact risks:
  • Extended environment problem in machine room
    • Fire
    • Flood
    • Power failure
    • Cooling failure
  • Extended network failure
  • Major data loss through loss of CASTOR metadata
  • Major security incident (site or Tier-1)

Disaster Planning (II)
• Some disaster plan components exist
  • Disaster plan for machine room. Assuming equipment is undamaged, relocate and endeavour to sustain functions, but at much reduced capacity.
  • Datastore (ADS) disaster recovery plan developed and tested
  • Network plan exists
  • Individual Tier-1 systems have documented recovery processes and fire-safe backups, or can be instantiated from the kickstart server. Not all of these are simple, and not all are fully tested.
• Key missing components
  • National/Global services (RGMA/FTS/BDII/LFC/…). Address by distributing elsewhere. Probably feasible, and necessary (6 months).
  • CASTOR: all our data holdings depend on the integrity of the catalogue. Recovery from first principles has not been tested. Flagged as a priority area, but must be balanced against the need to make CASTOR work.
  • A second, independent Tier-1 build infrastructure to allow us to rebuild the Tier-1 at a new physical location. Would allow us to address major issues such as fire. Major project: priority?

Conclusions
• Made a lot of progress in many areas this year. Availability improving, hardware reliable, CASTOR working quite well and upgrades on track.
• Main challenges for 2008 (data taking)
  • Large hardware installations and almost immediate next procurement
  • CASTOR at full load
  • On-call and general MoU processes