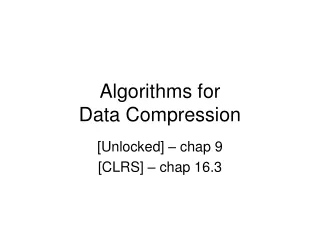

BWT-Based Compression Algorithms compress better than you have thought

Haim Kaplan, Shir Landau and Elad Verbin. Talk outline: history and results; a 10-minute introduction to Burrows-Wheeler based compression; our results (Part I: the local entropy; Part II: compression of integer strings).


Presentation Transcript

BWT-Based Compression Algorithms compress better than you have thought Haim Kaplan, Shir Landau and Elad Verbin

Talk Outline • History and Results • 10-minute introduction to Burrows-Wheeler based Compression. • Our results: • Part I: The Local Entropy • Part II: Compression of integer strings

History • SODA ’99: Manzini presents the first worst-case upper bounds on BWT-based compression: • And:

History • CPM ’03 + SODA ’04: Ferragina, Giancarlo, Manzini and Sciortino give Algorithm LeafCover which comes close to • However, experimental evidence shows that it doesn’t perform as well as BW0 (20-40% worse) • Why?

Our Results • We analyze only BW0. • We get: For every

Our Results • Sample values:

Our Results • We actually prove this bound vs. a stronger statistic: • (seems to be quite accurate) • Analysis through results which are of independent interest

Preliminaries • Want to define: • Present the BW0 algorithm

order-0 entropy Lower bound for compression without context information
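To make the order-0 statistic concrete, here is a minimal Python sketch of the empirical order-0 entropy, assuming the standard definition H_0(s) = Σ_c (n_c/n)·log2(n/n_c). The function name `order0_entropy` is illustrative and not taken from the talk.

```python
import math
from collections import Counter

def order0_entropy(s):
    """Empirical order-0 entropy of s, in bits per symbol."""
    n = len(s)
    if n == 0:
        return 0.0
    counts = Counter(s)
    # Each distinct symbol c with count n_c contributes (n_c / n) * log2(n / n_c).
    return sum((c / n) * math.log2(n / c) for c in counts.values())

# n * H_0(s) is the length, in bits, that a coder using no context aims for.
text = "mississippi"
print(len(text) * order0_entropy(text))
```

The function works for any sequence of hashable symbols, so it can be applied to integer strings as well as text.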

order-k entropy = Lower bound for compression with order-k contexts

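order-k entropy • mississippi: characters with context i: “mssp”; characters with context s: “isis”; characters with context p: “ip” (here the context of a character is the character that follows it)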
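The mississippi example can be reproduced with a short sketch. It assumes that the context of a character is the k characters that follow it (which is what the example strings suggest) and that n·H_k(s) is the sum of n·H_0 over the per-context groups. The helper names are illustrative, and the last k characters, which have no full context, are simply skipped.

```python
import math
from collections import Counter, defaultdict

def h0_bits(chars):
    """n * H_0 of a sequence: total bits for an ideal order-0 coder."""
    n = len(chars)
    return sum(c * math.log2(n / c) for c in Counter(chars).values())

def chars_by_context(s, k):
    """Group each character of s under the k characters that follow it."""
    groups = defaultdict(list)
    for i in range(len(s) - k):
        groups[s[i + 1:i + 1 + k]].append(s[i])
    return {ctx: "".join(chars) for ctx, chars in groups.items()}

def order_k_entropy_bits(s, k):
    """n * H_k(s): sum of the order-0 cost of every context group."""
    return sum(h0_bits(g) for g in chars_by_context(s, k).values())

groups = chars_by_context("mississippi", 1)
print(groups["i"], groups["s"], groups["p"])
print(order_k_entropy_bits("mississippi", 1))
```

With k = 0 every character falls into a single group, so the result reduces to n·H_0.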

Algorithm BW0 • BW0 = BWT + MTF + Arithmetic

BW0: Burrows-Wheeler Compression • Text in English (similar contexts -> similar character): mississippi • BWT -> text with spikes (close repetitions): ipssmpissii • Move-to-front -> integer string with small numbers: 0,0,0,0,0,2,4,3,0,1,0 • Arithmetic -> compressed text: 01000101010100010101010

The BWT • Invented by Burrows and Wheeler (’94) • Analogous to the Fourier transform (smooth!) [Fenwick] • String with context-regularity: mississippi -> BWT -> string with spikes (close repetitions): ipssmpissii

The BWT • Take the cyclic shifts of the text, sorted lexicographically; the output of the BWT is the last character of each shift, in that order • BWT sorts the characters by their context
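A minimal sketch of the transform as described: build all cyclic shifts, sort them, and read off the last column. The end-of-string sentinel `$` is an assumption of this sketch (it makes the transform invertible and must not occur in the text). The quadratic construction is for illustration only; practical implementations use suffix arrays.

```python
def bwt(text, sentinel="$"):
    """Burrows-Wheeler transform via explicitly sorted cyclic shifts."""
    if sentinel in text:
        raise ValueError("sentinel must not occur in the text")
    t = text + sentinel
    # All cyclic shifts of t, sorted lexicographically.
    rotations = sorted(t[i:] + t[:i] for i in range(len(t)))
    # The output is the last character of each sorted shift.
    return "".join(row[-1] for row in rotations)

out = bwt("mississippi")
print(out)                    # transform including the sentinel
print(out.replace("$", ""))   # same string with the sentinel dropped
```

Dropping the sentinel from the output for mississippi gives the string ipssmpissii used in the talk.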

The BWT • mississippi: characters with context i: “mssp”; characters with context s: “isis”; characters with context p: “ip” • BWT sorts the characters by their context

Manzini’s Observation • (Critical for Algorithm LeafCover) • BWT of the string = concatenation of order-k contexts
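This observation can be checked on a small input. The sketch below groups the sorted cyclic shifts by their first k characters and collects the BWT output of each group; concatenating the groups gives back the whole BWT. The `$` sentinel and the function name `bwt_segments` are assumptions of this sketch.

```python
from itertools import groupby

def bwt_segments(text, k, sentinel="$"):
    """Split the BWT output into one segment per length-k context."""
    t = text + sentinel
    rotations = sorted(t[i:] + t[:i] for i in range(len(t)))
    # Sorted shifts that share their first k characters are adjacent,
    # so each context owns a contiguous piece of the output.
    return [(ctx, "".join(row[-1] for row in rows))
            for ctx, rows in groupby(rotations, key=lambda r: r[:k])]

segments = bwt_segments("mississippi", 1)
for ctx, seg in segments:
    print(repr(ctx), seg)
print("".join(seg for _, seg in segments))
```

For mississippi with k = 1, the segment for context i is a permutation of “mssp”, the segment for s is a permutation of “isis”, and the segment for p is a permutation of “ip”.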

Move To Front • By Bentley, Sleator, Tarjan and Wei (’86) • String with spikes (close repetitions): ipssmpissii -> move-to-front -> integer string with small numbers: 0,0,0,0,0,2,4,3,0,1,0
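A minimal sketch of move-to-front and its inverse. The initial order of the list is an assumption (here: the distinct symbols in sorted order unless an alphabet is passed in). The exact output numbers depend on that choice, so they need not coincide with the illustrative integer string on the slide.

```python
def move_to_front(s, alphabet=None):
    """Replace each symbol by its current position in a recency list."""
    table = sorted(set(s)) if alphabet is None else list(alphabet)
    out = []
    for ch in s:
        idx = table.index(ch)
        out.append(idx)
        # Move the symbol just seen to the front of the list.
        table.insert(0, table.pop(idx))
    return out

def inverse_move_to_front(ranks, alphabet):
    """Undo move_to_front, given the same initial alphabet order."""
    table = list(alphabet)
    out = []
    for idx in ranks:
        ch = table.pop(idx)
        out.append(ch)
        table.insert(0, ch)
    return "".join(out)

ranks = move_to_front("ipssmpissii")
print(ranks)
print(inverse_move_to_front(ranks, sorted(set("ipssmpissii"))))
```

A symbol that repeats immediately is encoded as 0, and a symbol seen recently gets a small number, which is why text with close repetitions turns into mostly small integers.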

Move to Front • Example (Hebrew): שרה שרה שיר שמח (“Sara shara shir sameach”, “Sarah sang a happy song”)

After MTF • Now we have a string with small numbers: lots of 0s, many 1s, … • Skewed frequencies: Run Arithmetic! • (Figure: character frequencies)

Summary of BW0 • BW0 = BWT + MTF + Arithmetic
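The three stages can be chained in a few lines. This is a sketch under stated assumptions, not the implementation analyzed in the talk: the BWT uses a `$` sentinel, MTF starts from the sorted alphabet, and the arithmetic coder is replaced by its idealized output length n·H_0 of the MTF string (a real coder adds some overhead).

```python
import math
from collections import Counter

def bwt(text, sentinel="$"):
    """Last column of the sorted cyclic shifts of text + sentinel."""
    t = text + sentinel
    rotations = sorted(t[i:] + t[:i] for i in range(len(t)))
    return "".join(row[-1] for row in rotations)

def move_to_front(s):
    """Positions of each symbol in a recency list (sorted initial order)."""
    table = sorted(set(s))
    out = []
    for ch in s:
        idx = table.index(ch)
        out.append(idx)
        table.insert(0, table.pop(idx))
    return out

def order0_bits(seq):
    """n * H_0(seq): idealized output length of an order-0 coder, in bits."""
    n = len(seq)
    return sum(c * math.log2(n / c) for c in Counter(seq).values())

def bw0_estimated_bits(text):
    """Estimated BW0 output size: order-0 cost of MTF(BWT(text))."""
    return order0_bits(move_to_front(bwt(text)))

sample = "ab" * 50
print(order0_bits(sample), bw0_estimated_bits(sample))
```

On the highly regular sample, coding the characters directly with an order-0 model costs 100 bits, while the estimate after BWT and MTF is much smaller because almost all MTF values are 0.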

Summary of BW0 • bzip2: Achieves compression close to statistical coders, with running time close to gzip. • Here we analyze only BW0. • BW0 is 15% worse than bzip2. • Our upper bounds (roughly) apply to the better algorithms as well.

The Local Entropy • We have the MTF output: a string of small numbers • In a dream world, we could compress it to the sum of logs. • Define $LE(s) = \sum_i \log(\mathrm{MTF}(s)_i + 1)$

The Local Entropy • Let’s explore LE: • LE is a measure of local similarity: • LE(aaaaabbbbbbcccccddddd)≈0 (only the first occurrence of each new letter contributes)

The Local Entropy • Example: LE(abracadabra)= 3*log2+3*log3+1*log4+3*log5
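The abracadabra example can be recomputed with a short sketch. It assumes LE(s) is the sum of log2(r + 1) over the move-to-front values r, and that the MTF list initially holds the distinct symbols in order of first appearance; this initial order is the one under which the counts below match the slide's formula. Function names are illustrative.

```python
import math
from collections import Counter

def mtf_first_appearance(s):
    """Move-to-front with the list initialized in order of first appearance."""
    table = list(dict.fromkeys(s))  # distinct symbols, first-seen order
    out = []
    for ch in s:
        idx = table.index(ch)
        out.append(idx)
        table.insert(0, table.pop(idx))
    return out

def local_entropy(s):
    """Sum of log2(rank + 1) over the move-to-front ranks of s."""
    return sum(math.log2(r + 1) for r in mtf_first_appearance(s))

ranks = mtf_first_appearance("abracadabra")
print(ranks)
print(Counter(ranks))
print(local_entropy("abracadabra"))
```

Under these assumptions the ranks contain three 1s, three 2s, one 3 and three 4s, which gives 3·log 2 + 3·log 3 + 1·log 4 + 3·log 5. A string made of long runs, such as aaaaabbbbbbcccccddddd, pays only for the first occurrence of each new letter.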

The Local Entropy • We use two properties of LE: • BSTW: • Convexity:

Observation: Locality of MTF • MTF is sort of local: • Cutting the input string into parts doesn’t influence much: Only positions per part • So: a b a a a b a b

The Local Entropy • We use two properties of LE: • BSTW: • Convexity:

Worst case string for LE: ab…z ab…z ab…z

Worst case string for LE: ab…z ab…z ab…z • MTF: • LE:

Worst case string for LE: ab…z ab…z ab…z • MTF: • LE: • Entropy:

Worst case string for LE: ab…z ab…z ab…z • MTF: • LE: • Entropy: • This *always* holds

The Local Entropy • We use two properties of LE: • BSTW: • Convexity:

So, in the dream world we could compress up to • What about reality? – How close can we come to ?

What about reality? – How close can we come to ? • Problem: Compress an integer sequence close to its sum of logs,

Compressing an integer sequence • Problem: Compress an integer sequence s’ close to its sum of logs,

First Solution • Universal Encodings of Integers • Integer x -> • Encoding bits
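As one concrete universal encoding of integers, here is a sketch of the Elias gamma code. The transcript does not preserve which code the slide used, so the choice of gamma is an assumption. A positive integer x is written as ⌊log2 x⌋ zeros followed by the binary form of x, for 2⌊log2 x⌋ + 1 bits in total; since MTF values start at 0, the sketch encodes x + 1.

```python
def elias_gamma(x):
    """Elias gamma code of a positive integer, as a string of '0'/'1'."""
    if x < 1:
        raise ValueError("Elias gamma encodes integers >= 1")
    binary = bin(x)[2:]
    # The run of zeros announces how many bits follow the leading 1.
    return "0" * (len(binary) - 1) + binary

def elias_gamma_decode(bits):
    """Decode a concatenation of Elias gamma codewords."""
    out = []
    i = 0
    while i < len(bits):
        zeros = 0
        while bits[i] == "0":
            zeros += 1
            i += 1
        out.append(int(bits[i:i + zeros + 1], 2))
        i += zeros + 1
    return out

def encode_ranks(ranks):
    """Encode non-negative MTF values by shifting each one up by 1."""
    return "".join(elias_gamma(r + 1) for r in ranks)

bits = encode_ranks([0, 0, 2, 1, 0])
print(bits, [v - 1 for v in elias_gamma_decode(bits)])
```

The code length grows with twice the logarithm of the value, so the total is within a constant factor of the sum of logs, plus one bit per symbol.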

Better Solution • Doing some math, it turns out that Arithmetic compression is good. • Not only good: It is best!
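A small numeric comparison illustrates the point on one example; it is not a proof. The sketch computes, for an integer string, the sum of logs, the idealized order-0 (arithmetic) code length n·H_0, and the length of an Elias gamma encoding of each value plus one. The gamma code and the sample string are assumptions chosen for illustration.

```python
import math
from collections import Counter

def sum_of_logs(seq):
    """Sum of log2(x + 1) over a string of non-negative integers."""
    return sum(math.log2(x + 1) for x in seq)

def order0_bits(seq):
    """n * H_0(seq): idealized output length of an order-0 coder, in bits."""
    n = len(seq)
    return sum(c * math.log2(n / c) for c in Counter(seq).values())

def gamma_bits(seq):
    """Total Elias gamma length when each x is encoded as x + 1."""
    # The gamma code of y has 2 * floor(log2 y) + 1 = 2 * bit_length(y) - 1 bits.
    return sum(2 * (x + 1).bit_length() - 1 for x in seq)

# A mostly-zero string, typical of MTF output on text with long runs.
seq = [2] + [0] * 49 + [1, 2] + [0] * 49
print(sum_of_logs(seq), order0_bits(seq), gamma_bits(seq))
```

On this sample the fixed universal code spends at least one bit on every 0, while the order-0 coder adapts to the skewed frequencies and needs far fewer bits in total.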

The order0 math • Theorem: For any string s’ and any ,