Using Description-Based Methods for Provisioning Clusters

Using Description-Based Methods for Provisioning Clusters. Philip M. Papadopoulos San Diego Supercomputer Center University of California, San Diego. Computing Clusters. How I spent my Weekend Why should you care about about software configuration Description based configuration

Using Description-Based Methods for Provisioning Clusters

E N D

Presentation Transcript

Using Description-Based Methods for Provisioning Clusters Philip M. Papadopoulos San Diego Supercomputer Center University of California, San Diego

Computing Clusters • How I spent my Weekend • Why should you care about about software configuration • Description based configuration • Taking the administrator out of cluster administration • XML-based assembly instructions • What’s next

A Story from this last Weekend • Attempt a TOP 500 Run on a two fused 128 node PIII (1GHz, 1GB mem) clusters • 100 Mbit ethernet, Gigabit to frontend. • Myrinet 2000. 128 port switch on each cluster • Questions • What LINPACK performance could we get? • Would Rocks scale to 256 nodes? • Could we set up/teardown and run benchmarks in the allotted 48 hours? • Would we get any sleep?

Setup • Fri: Started 5:30pm. Built new frontend. Physical rewiring of myrinet, added ethernet switch. • Fri: Midnight. Solved some ethernet issues. Completely reinstalled all nodes. • Sat: 12:30a Went to sleep. • Sat: 6:30a. Woke up. Submitted first LINPACK runs (225 nodes) New Frontend 8 Cross Connects (Myrinet) 128 nodes (120 on Myrinet) 128 nodes (120 on Myrinet)

Mid-Experiment • Sat 10:30a. Fixed a failed disk, and a bad SDRAM. Started Runs on 240 nodes. Went shopping at 11:30a • 4:30p. Examined Output. Started ~6hrs of Runs • 11:30p. Examined more visits. Submitted final large runs. Went to sleep • 285 GFlops • 59.5% Peak • Over 22 hours of continuous computing 240 Dual PIII (1Ghz, 1GB) - Myrinet

Final Clean-up • Sun 9a. Submitted 256 node Ethernet HPL • Sun 11:00a. Added GigE to frontend. Started complete reinstall of all 256 nodes. • ~40 Minutes for Complete reinstall (return to original system state) • Did find some (fixable) issues at this scale • Too Many open database connections (fixing for next release) • DHCP client not resilient enough. Server was fine. • Sun 1:00p. Restored wiring, rebooted original frontends. Started reinstall tests. Some debug of installation issues uncovered at 256 nodes. • Sun 5:00p. Went home. Drank Beer.

Installation, Reboot, Performance 32 Node Re-Install • < 15 minutes to reinstall 32 node subcluster (rebuilt myri driver) • 2.3min for 128 node reboot Start Finsish Reboot Start HPL

Key Software Components • HPL + ATLAS. Univ. of Tennessee • The truckload of open source software that we all build on • Redhat linux distribution and installer • de facto standard • NPACI Rocks • Complete cluster-aware toolkit that extends a Redhat Linux distribution • (Inherent Belief that “simple and fast” can beat out “complex and fast”)

Why Should You Care about Cluster Configuration/Administration? • “We can barely make clusters work.” – Gordon Bell @ CCGCS02 • “No Real Breakthroughs in System Administration in the last 20 years” – P. Beckman @ CCGCS02 • System Administrators love to continuously twiddle knobs • Similar to a mechanic continually adjusting air/fuel mixture on your car • Or worse: Randomly unplugging/plugging spark plug wires • Turnkey/Automated systems remove the system admin from the equation • Sysadmin doesn’t like this. • This is good

Clusters aren’t homogeneous • Real clusters are more complex than a pile of identical computing nodes • Hardware divergence as cluster changes over time • Logical heterogeneity of specialized servers • IO servers, Job Submission, Specialized configs for apps, … • Garden-variety cluster owner shouldn’t have to fight the provisioning issues. • Get them to the point where they are fighting the hard application parallelization issues • They should be able to easily follow software/hardware trends • Moderate-sized (upto ~128 nodes) clusters are the “standard” grid endpoints • A non-uber administrator handles two 128 node Rocks clusters at SIO (260:1 admin to system ratio)

NPACI Rocks Toolkit – rocks.npaci.edu • Techniques and software for easy installation, management, monitoring and update of Linux clusters • A complete cluster-aware distribution and configuration system. • Installation • Bootable CD + floppy which contains all the packages and site configuration info to bring up an entire cluster • Management and update philosophies • Trivial to completely reinstall any (all) nodes. • Nodes are 100% automatically configured • RedHat Kickstart to define software/configuration of nodes • Software is packaged in a query-enabled format • Never try to figure out if node software is consistent • If you ever ask yourself this question, reinstall the node • Extensible, programmable infrastructure for all node types that make up a real cluster.

Tools Integrated • Standard cluster tools • MPICH, PVM, PBS, Maui (SSH, SSL -> Red Hat) • Rocks add ons • Myrinet support • GM device build (RPM), RPC-based port-reservation (usher-patron) • Mpi-launch (understands port reservation system) • Rocks-dist – distribution work horse • XML (programmable) Kickstart • eKV (console redirect to ethernet during install) • Automated mySQL database setup • Ganglia Monitoring (U.C. Berkeley and NPACI) • Stupid pet administration scripts • Other tools • PVFS • ATLAS BLAS, High Performance Linpack

Support for Myrinet • Myrinet device driver must be versioned to the exact kernel version (eg. SMP,options) running on a node • Source is compiled at reinstallation on every (Myrinet) node (adds 2 minutes installation) (a source RPM, by the way) • Device module is then installed (insmod). • GM_mapper run (add node to the network) • Myrinet ports are limited and must be identified with a particular rank in a parallel program • RPC-based reservation system for Myrinet ports • Client requests port reservation from desired nodes • Rank mapping file (gm.conf) created on-the-fly • No centralized service needed to track port allocation • MPI-launch hides all the details of this • HPL (LINPACK) comes pre-packaged for Myrinet • Build your Rocks cluster, see where it sits on the Top500

Key Ideas • No difference between OS and application software • OS installation is completely disposable • Unique state that is kept only at a node is bad • Creating unique state at the node is even worse • Software bits (packages) are separated from configuration • Diametrically opposite from “golden image” methods • Description-based configuration rather than image-based • Installed OS is “compiled” from a graph. • Inheritance of software configurations • Distribution • Configuration • Single step installation of updated software OS • Security patches pre-applied to the distribution not post-applied on the node

Rocks extends installation as a basic way to manage software on a cluster It becomes trivial to insure software consistency across a cluster

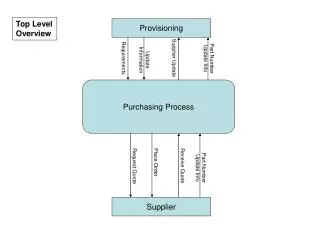

Rocks Disentangles Software Bits (distributions) and Configuration Collection of all possible software packages (AKA Distribution) Descriptive information to configure a node Kickstart file RPMs Appliances Compute Node IO Server Web Server

Managing Software Distributions Collection of all possible software packages (AKA Distribution) Descriptive information to configure a node Kickstart file RPMs Compute Node IO Server Web Server

Rocks-dist Repeatable process for creation of localized distributions • # rocks-dist mirror • Rocks mirror • Rocks 2.2 release • Rocks 2.2 updates • # rocks-dist dist • Create distribution • Rocks 2.2 release • Rocks 2.2 updates • Local software • Contributed software • This is the same procedure NPACI Rocks uses. • Organizations can customize Rocks for their site. • Iterate, extend as needed

Description-based Configuration Collection of all possible software packages (AKA Distribution) Descriptive information to configure a node Kickstart file RPMs Compute Node IO Server Web Server

Description-based Configuration • Infrastructure that “describes“ the roles of cluster nodes • Nodes are assembled using Red Hat's kickstart • Components are defined in XML files that completely describe and configure software subsystems • Software bits are in standard RPMs. • Kickstart files are built on-the-fly, tailored for each node through Database interaction VS.

What is a Kickstart file? Setup/Packages (20%) Package Configuration (80%) cdrom zerombr yes bootloader --location mbr --useLilo skipx auth --useshadow --enablemd5 clearpart --all part /boot --size 128 part swap --size 128 part / --size 4096 part /export --size 1 --grow lang en_US langsupport --default en_US keyboard us mouse genericps/2 timezone --utc GMT rootpw --iscrypted nrDG4Vb8OjjQ. text install reboot %packages @Base @Emacs @GNOME %post cat > /etc/nsswitch.conf << 'EOF' passwd: files shadow: files group: files hosts: files dns bootparams: files ethers: files EOF cat > /etc/ntp.conf << 'EOF' server ntp.ucsd.edu server 127.127.1.1 fudge 127.127.1.1 stratum 10 authenticate no driftfile /etc/ntp/drift EOF /bin/mkdir -p /etc/ntp cat > /etc/ntp/step-tickers << 'EOF' ntp.ucsd.edu EOF /usr/sbin/ntpdate ntp.ucsd.edu /sbin/hwclock --systohc Portable (ASCII), Not Programmable, O(30KB)

What are the Issues • Kickstart file is ASCII • There is some structure • Pre-configuration • Package list • Post-configuration • Not a “programmable” format • Most complicated section is post-configuration • Usually this is handcrafted • Really Want to be able to build sections of the kickstart file from pieces • Straightforward extension to new software, different OS

Focus on the notion of “appliances” How do you define the configuration of nodes with special attributes/capabilities

Assembly Graph of a Complete Cluster - “Complete” Appliances (compute, NFS, frontend, desktop, …) - Some key shared configuration nodes (slave-node, node, base)

Describing Appliances • Purple appliances all include “slave-node” • Or derived from slave-node • Small differences are readily apparent • Portal, NFS has “extra-nic”. Compute does not • Compute runs “pbs-mom”, NFS, Portal do not • Can compose some appliances • Compute-pvfs IsA compute and IsA pvfs-io

Architecture Dependencies • Focus only on the differences in architectures • logically, IA-64 compute node is identical to IA-32 • Architecture type is passed from the top of graph • Software bits (x86 vs. IA64) are managed in the distribution

Abstract Package Names, versions, architecture ssh-client Not ssh-client-2.1.5.i386.rpm Allow an administrator to encapsulate a logical subsystem Node-specific configuration is retrieved from our database IP Address Firewall policies Remote access policies … <?xml version="1.0" standalone="no"?> <!DOCTYPE kickstart SYSTEM "@KICKSTART_DTD@" [<!ENTITY ssh "openssh">]> <kickstart> <description> Enable SSH </description> <package>&ssh; </package> <package> &ssh;-clients</package> <package> &ssh;-server</package> <package> &ssh;-askpass</package> <!-- include XFree86 packages for xauth --> <package>XFree86</package> <package>XFree86-libs</package> <post> cat > /etc/ssh/ssh_config << 'EOF' <!-- default client setup --> Host * CheckHostIP no ForwardX11 yes ForwardAgent yes StrictHostKeyChecking no UsePrivilegedPort no FallBackToRsh no Protocol 1,2 EOF </post> </kickstart> XML Used to Describe Modules

Space-Time and HTTP Node Appliances Frontends/Servers DHCP IP + Kickstart URL Kickstart RQST Generate File kpp DB Request Package Serve Packages kgen Install Package • HTTP: • Kickstart URL (Generator) can be anywhere • Package Server can be (a different) anywhere Post Config Reboot

Subsystem Replacement is Easy • Binaries are in de facto standard package format (RPM) • XML module files (components) are very simple • Graph interconnection (global assembly instructions) is separate from configuration • Examples • Replace PBS with Sun Grid Engine • Upgrade version of OpenSSH or GCC • Turn on RSH (not recommended) • Purchase commercial compiler (recommended)

Reset • 100s of clusters have been built with Rocks on a wide variety of physical hardware • Installation/Customization is done in a straightforward programmatic way • Scaling is excellent • HTTP is used as a transport for reliability/performance • Configuration Server does not have to be in the cluster • Package Server does not have to be in the cluster • (Sounds grid-like)

What’s still missing? • Improved Monitoring • Monitoring Grids of Clusters • “Personal” cluster monitor • Straightforward Integration with Grid (Software) • Will use NMI (NSF Middleware Initiative) software as a basis (A Grid endpoint should no harder to setup than a cluster) • Any sort of real IO story • PVFS is a toy (no reliability) • NFS doesn’t scale properly (no stabilty) • MPI-IO is only good for people willing to retread large sections of code. (most users want read/write/open close). • Real parallel job control • MPD looks promising • …

Meta Cluster Node Meta Cluster Monitor Built on Ganglia

Integration with Grid Software • As part of NMI, SDSC is working on a package called gridconfig • http://rocks.npaci.edu/nmi/gridconfig/overview.html • Provide a higher level tool for creating and consistently managing configuration files for software components • Allows config data (eg path names, policy files, etc). To be shared among unrelated components • Does NOT require changes to config file formats • Globus GT2 has over a dozen configuration files • Over 100 configurable parameters • Many files share similar information • Will be part of NMI-R2

Some Gridconfig Details Top Level Components Globus Details (example)

Accepting Configuration • Parameters from previous screens stored in a simple schema (mySQL database is backend) • From these parameters, native format configuration files are written

Rocks Near Term • Version 2.3 in alpha now, Beta by end 1st week of October • RedHat 7.3 • Integration with Sun Grid Engine (SCS – Singapore) • Resource target for SCE (P. Uthayopas, KU, Thailand) • Bug fixes, additional software components • Some low-level Engineering changes on interaction with Redhat installer • Working toward NMI Ready (point release in Dec. early Jan).

Parting Thoughts • Why is grid software so hard to successfully deploy? • If large cluster configurations can be really thought of as disposable, shouldn’t virtual organizations be just as easily constructed? • http://rocks.npaci.edu • http://Ganglia.sourceforge.net • http://rocks.npaci.edu/nmi/gridconfig