Parallelizing Incremental Bayesian Segmentation (IBS)

Parallelizing Incremental Bayesian Segmentation (IBS) • Joseph Hastings and Sid Sen

Outline • Background on IBS • Code Overview • Parallelization Methods (Cilk, MPI) • Cilk Version • MPI Version • Summary of Results • Final Comments

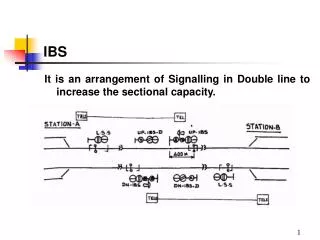

IBS • Incremental Bayesian Segmentation [1] is an on-line machine learning algorithm designed to segment time-series data into a set of distinct clusters • It models the time-series as the concatenation of processes, each generated by a distinct Markov chain, and attempts to find the most-likely break points between the processes

Training Process • During the training phase of the algorithm, IBS builds a set of Markov matrices that it believes are most likely to describe the set of processes responsible for generating the time series
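As a rough illustration of what a "Markov matrix" for a segment might hold, the sketch below counts symbol-to-symbol transitions in a categorical sequence. The function name `count_transitions`, the fixed alphabet size `NUM_STATES`, and the integer encoding of symbols are assumptions made for this example; the slides do not show how the original code represents its matrices.

```c
#include <stddef.h>
#include <string.h>

#define NUM_STATES 3  /* assumed alphabet size, for illustration only */

/* Fill counts[a][b] with the number of times symbol a is immediately
 * followed by symbol b in seq. Every symbol must lie in [0, NUM_STATES). */
void count_transitions(const int *seq, size_t len,
                       unsigned counts[NUM_STATES][NUM_STATES])
{
    memset(counts, 0, sizeof(unsigned) * NUM_STATES * NUM_STATES);
    for (size_t t = 0; t + 1 < len; t++) {
        counts[seq[t]][seq[t + 1]]++;
    }
}
```

Normalizing each row of such a count matrix would give estimated transition probabilities for the process that generated the segment.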

High-Level Control Flow • main() loops through the input file and runs break-point detection • For each segment: check_out_process() • For each existing matrix: compute_subsumed_marginal_likelihood() • check_out_process() then either adds the segment to the set of matrices or subsumes it into an existing matrix

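Parallelizable Computation • compute_subsumed_marginal_likelihood() • Each call depends only on a single matrix and the new segment, so calls for different matrices are independent of one another • Each call produces a single score • The index of the best score across all matrices must then be calculated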
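The serial form of this step can be sketched as a plain loop that scores every matrix and remembers the index of the best one. Everything named here is hypothetical: `Matrix`, `toy_score`, and `best_matrix_index` are illustration-only names, and `toy_score` is a simple integer overlap measure standing in for compute_subsumed_marginal_likelihood(), not the actual Bayesian score.

```c
#include <stddef.h>

#define NUM_STATES 2  /* assumed alphabet size, for illustration only */

typedef struct {
    unsigned counts[NUM_STATES][NUM_STATES];
} Matrix;

/* Hypothetical stand-in score: how many of the segment's transitions
 * the matrix has already seen. NOT the real marginal likelihood. */
long toy_score(const Matrix *m, const Matrix *seg)
{
    long s = 0;
    for (int a = 0; a < NUM_STATES; a++) {
        for (int b = 0; b < NUM_STATES; b++) {
            unsigned x = m->counts[a][b], y = seg->counts[a][b];
            s += (long)(x < y ? x : y);
        }
    }
    return s;
}

/* Return the index of the best-scoring matrix, or -1 if n == 0.
 * Ties go to the lowest index. Each iteration reads only mats[i] and
 * seg, which is what makes the loop body safe to run in parallel. */
int best_matrix_index(const Matrix *mats, size_t n, const Matrix *seg,
                      long *best_score_out)
{
    int best = -1;
    long best_score = 0;
    for (size_t i = 0; i < n; i++) {
        long s = toy_score(&mats[i], seg);
        if (best < 0 || s > best_score) {
            best = (int)i;
            best_score = s;
        }
    }
    if (best >= 0 && best_score_out != NULL) {
        *best_score_out = best_score;
    }
    return best;
}
```

Only the final comparison of scores needs coordination between workers; the scoring itself does not.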

MPI • Message Passing Interface: a library for inter-process communication • Provides useful collective communication routines, particularly MPI_Allreduce, which combines a value from every process using a reduction operation and delivers the result to all processes

Cilk • Originally developed by the Supercomputing Technologies Group at the MIT Laboratory for Computer Science • Cilk is a language for multithreaded parallel programming based on ANSI C that is very effective for exploiting highly asynchronous parallelism [3] (which can be difficult to write using message-passing interfaces like MPI)

Cilk • Specify the number of worker threads or “processors” to create when running a Cilk job • There is no one-to-one mapping of worker threads to processors, hence the quotes • Work-stealing algorithm • When a processor runs out of work, it asks another processor chosen at random for work to do • Cilk’s work-stealing scheduler executes any Cilk computation in nearly optimal time • A computation on $P$ processors executes in time $T_P \le T_1/P + O(T_\infty)$, where $T_1$ is the running time on one processor (the work) and $T_\infty$ is the running time on an unlimited number of processors (the critical-path length)
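To see what the scheduler bound implies, here is a worked example with made-up numbers; $T_1 = 120$ s and $T_\infty = 2$ s are assumptions chosen for easy arithmetic, not measurements from the IBS code. The last line uses the general fact that no schedule can beat the critical path, $T_P \ge T_\infty$.

```latex
\begin{align*}
T_P &\le \frac{T_1}{P} + O(T_\infty)
  && \text{(work-stealing bound)} \\
P = 4:\quad \frac{T_1}{P} &= \frac{120\ \text{s}}{4} = 30\ \text{s}
  && \text{(work term dominates } T_\infty = 2\ \text{s)} \\
P = 60:\quad \frac{T_1}{P} &= \frac{120\ \text{s}}{60} = 2\ \text{s}
  && \text{(work term now comparable to } T_\infty\text{)} \\
\frac{T_1}{T_P} &\le \frac{T_1}{T_\infty} = \frac{120}{2} = 60
  && \text{(speedup cap, since } T_P \ge T_\infty\text{)}
\end{align*}
```

Under these assumed numbers, adding processors helps almost linearly while $P$ is well below $T_1/T_\infty$, and stops helping once the critical path dominates.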

Code Modifications • Keywords: cilk, spawn, sync • Convert any methods that will be spawned or that will spawn other (Cilk) methods into Cilk methods • In our case: main(), check_out_process(), compute_subsumed_marginal_likelihood() • Main source of parallelism comes from subsuming current process with each existing process and choosing subsumption with the best score • spawn compute_subsumed_marginal_likelihood(proc, get(processes,i), copy_process_list(processes));

Code Modifications • When updating global_score, mutual exclusion must be enforced between worker threads • Cilk_lockvar score_lock; ... Cilk_lock(score_lock); ... Cilk_unlock(score_lock);
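The spawn-then-lock pattern described above can be sketched in portable C with POSIX threads, which is a swapped-in analogue and not Cilk: `pthread_create` plays the role of spawn, `pthread_join` the role of sync, and a `pthread_mutex_t` the role of `Cilk_lockvar`. The names `Task`, `score_worker`, `parallel_best_index`, and `MAX_WORKERS` are hypothetical, and precomputed scores stand in for the call to compute_subsumed_marginal_likelihood() that each worker would make in the real program.

```c
#include <limits.h>
#include <pthread.h>

#define MAX_WORKERS 16  /* assumed upper bound on matrices, for illustration */

/* Shared state, playing the role of global_score in the slides. */
static pthread_mutex_t score_lock = PTHREAD_MUTEX_INITIALIZER;
static long global_score;
static int  global_index;

typedef struct {
    int  index;  /* which matrix this worker handles */
    long score;  /* precomputed stand-in for the marginal likelihood */
} Task;

/* One worker per matrix: only the update of the shared best score
 * is done while holding the lock. */
static void *score_worker(void *arg)
{
    const Task *t = arg;

    pthread_mutex_lock(&score_lock);
    if (global_index < 0 || t->score > global_score ||
        (t->score == global_score && t->index < global_index)) {
        global_score = t->score;
        global_index = t->index;
    }
    pthread_mutex_unlock(&score_lock);
    return NULL;
}

/* Start one thread per score, wait for all of them, and return the
 * index of the best score (-1 on bad input or thread-creation failure). */
int parallel_best_index(const long *scores, int n)
{
    pthread_t threads[MAX_WORKERS];
    Task tasks[MAX_WORKERS];
    int created = 0;

    if (n <= 0 || n > MAX_WORKERS) return -1;

    global_score = LONG_MIN;
    global_index = -1;

    for (int i = 0; i < n; i++) {
        tasks[i].index = i;
        tasks[i].score = scores[i];
        if (pthread_create(&threads[i], NULL, score_worker, &tasks[i]) != 0)
            break;
        created++;
    }
    for (int i = 0; i < created; i++)
        pthread_join(threads[i], NULL);

    return created == n ? global_index : -1;
}
```

Breaking ties by index inside the critical section keeps the result independent of the order in which workers happen to acquire the lock.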

Cilk Results • Optimal performance was achieved using 2 processors (a trade-off between the overhead of Cilk and the parallelism available in the program)

Adaptive Parallelism • Real intelligence is in the Cilk runtime system, which handles load balancing, paging, and communication protocols between running worker threads • Currently have to specify the number of processors to run a Cilk job on • Goal is to eventually make the runtime system adaptively parallel by intelligently determining how many threads/processors to use • Fair and efficient allocation among all running Cilk jobs • Cilk Macroscheduler [4] uses steal rate of worker thread as a measure of its processor desire (if a Cilk job spends a substantial amount of its time stealing, then the job has more processors than it desires)

MPI Code Modifications • check_out_process() first broadcasts the segment using MPI_Bcast() • Each process loops over all matrices, but only performs subsumption if (i % np == rank), where np is the number of processes and rank is the process's own rank • Each process computes its local best score, and MPI_Allreduce() is used to reduce this information to the globally best score • Each process learns the index of the best matrix and performs the identical subsumption
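The round-robin split and the final reduction can be illustrated without an MPI installation by simulating the ranks in a serial loop. This is a sketch of the data flow only: `ScoreIndex`, `local_best`, `maxloc`, and `simulated_allreduce_best` are hypothetical names, and the precomputed `scores` array stands in for the per-matrix subsumption scores. In real MPI code, one plausible way to get both the best score and its index to every process is MPI_Allreduce with the MPI_DOUBLE_INT datatype and the MPI_MAXLOC operation; the slides do not say which operator the authors used.

```c
#include <float.h>

/* A score paired with the matrix index it came from. */
typedef struct {
    double score;
    int    index;  /* -1 means "this rank scored nothing" */
} ScoreIndex;

/* Work done by one rank: walk every matrix index, but only consider
 * those assigned to this rank by the test i % np == rank. */
ScoreIndex local_best(const double *scores, int n, int np, int rank)
{
    ScoreIndex best = { -DBL_MAX, -1 };
    for (int i = 0; i < n; i++) {
        if (i % np != rank) continue;
        if (best.index < 0 || scores[i] > best.score) {
            best.score = scores[i];
            best.index = i;
        }
    }
    return best;
}

/* MAXLOC-style combine: the larger score wins; ties go to the lower index. */
ScoreIndex maxloc(ScoreIndex a, ScoreIndex b)
{
    if (a.index < 0) return b;
    if (b.index < 0) return a;
    if (a.score > b.score) return a;
    if (b.score > a.score) return b;
    return a.index <= b.index ? a : b;
}

/* Serial stand-in for the reduction step: fold the local results of all
 * np simulated ranks into the single answer every rank would receive. */
ScoreIndex simulated_allreduce_best(const double *scores, int n, int np)
{
    ScoreIndex global = { -DBL_MAX, -1 };
    for (int rank = 0; rank < np; rank++) {
        global = maxloc(global, local_best(scores, n, np, rank));
    }
    return global;
}
```

Because every rank ends up with the same winning index, each one can apply the identical subsumption locally and the copies of the matrix set stay in sync without further communication.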

MPI Results • Big improvement from 1 to 2 processors; performance levels off for 3 or more

MPI vs. Cilk • The MPI version was much more complicated, involved more lines of code, and was much more difficult to debug • The Cilk version required thinking about mutual exclusion, which MPI avoids • The Cilk version required few code changes, but was conceptually more complicated to think about

References (Presentation) • [1] Paola Sebastiani and Marco Ramoni. Incremental Bayesian Segmentation of Categorical Temporal Data. 2000. • [2] Wenke Lee and Salvatore J. Stolfo. Data Mining Approaches for Intrusion Detection. 1998. • [3] Cilk 5.3.2 Reference Manual. Supercomputing Technologies Group, MIT Lab for Computer Science. November 9, 2001. Available online: http://supertech.lcs.mit.edu/manual-5.3.2.pdf. • [4] R. D. Blumofe, C. E. Leiserson, B. Song. Automatic Processor Allocation for Work-Stealing Jobs. (Work in progress)