RANDOM SAMPLING:

RANDOM SAMPLING:. Topic #2. Key Definitions Pertaining to Sampling. Population : the set of “units” (in survey research, usually either individuals or households ), that are to be studied, for example ( N = size of population): The U.S. voting age population [ N = ~ 200m]

RANDOM SAMPLING:

E N D

Presentation Transcript

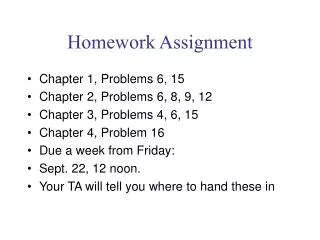

RANDOM SAMPLING: Topic #2

Key Definitions Pertaining to Sampling • Population: the set of “units” (in survey research, usually either individuals or households) that are to be studied, for example (N = size of population): • The U.S. voting age population [N = ~200m] • All people who are “expected” to vote in the upcoming election [N = ~130m] (pre-election tracking polls) • All U.S. “households” [N = ~100m] • All registered voters in Maryland [N = ~2.6m] • All Newsweek subscribers [N = ~1.5m] • All UMBC undergraduate students [N = ~10,000] • All cards in a deck of cards [N = 52] • Sample: any subset of units drawn from the population • Sample size = n • Sampling fraction = n / N • usually small, e.g., 1/100,000, but • the fraction can be larger (and, when sampling with replacement, can even be greater than 1)

Key Definitions Pertaining to Sampling (cont.) • (Simple) sampling frame: a list of every unit in the population or • more generally, a setup that allows a random sample to be drawn • Random (or Probability) Sample: a sample such that each unit in the population has a calculable, i.e., precise and known in advance, chance of appearing in the (“drawn”) sample, e.g., selected by lottery, • i.e., use a random mechanism to pick units out of the sampling frame. • Non-Random Sample: a sample selected in any non-random fashion, so that the probability that a unit is drawn into the sample cannot be calculated. • Call-in, voluntary response, interviewer selected, etc.

Key Definitions Pertaining to Sampling (cont.) • Simple Random Sample (SRS): a sample of size n such that every pair of units in the population has the same chance of appearing in the sample. • This implies that every possible sample of size n has the same chance of being the actual sample. • This also implies that every individual unit has the same chance of appearing in the sample, but some other kinds of random samples also have this property. • Systematic Random Sample: a random sample of size n drawn from a simple sampling frame, such that each of the first N/n (i.e., the inverse of the sampling fraction) units on the list has the same chance of being selected and every (N/n)th subsequent unit on the list is also selected. • This implies that every unit (but not every subset of n units) in the population has the same chance of being in the sample. • Multi-Stage Random Sample: a sample selected by random mechanisms in several stages, • most likely because it is impossible or impractical to acquire a list of all units in the population, • i.e., because no simple sampling frame is available.

Key Definitions Pertaining to Sampling (cont.) • (Population) Parameter: a characteristic of the population, e.g., the percent of the population that approves of the way that the President is handling his job. • For a given population at a given time, the value of a parameter is fixed but typically is unknown (which is why we may be interested in conducting a survey). • (Sample) Statistic: a characteristic of a sample, e.g., the percent of a sample that approves of the way that the President is handling his job. • The value of a sample statistic is known (for any particular sample) but it is not fixed; it varies from sample to sample. • A sample statistic is typically used to estimate the comparable population parameter.

Key Definitions Pertaining to Sampling (cont.) • Most population parameters and sample statistics we consider are percentages, e.g., • the percent of the population or sample who approve of the way the President is doing his job, or • the percent of the population or sample who intend to vote Republican in the upcoming election. • A sample statistic is unbiased if its expected value is equal to the corresponding population parameter. • This means that, as we take more and more samples from the same population, the average of all the sample statistics “converges” on (comes closer and closer to) the true population parameter.

Key Definitions Pertaining to Sampling (cont.) • The variation in sample statistics from sample to sample is called sampling error. • (Random) Sampling Error: the magnitude of the inherent variability of sample statistics (from sample to sample) • Public opinion polls and other surveys (for which the sample statistics are percentages) commonly report their sampling errors in terms of the margin of error associated with sample statistics. • This measure of sampling error is precisely defined and discussed below.

Sampling Error Demonstration • Consider the set of all cases in the ANES/SETUPS data for all years to be the population (N = 19,973). • Calculate some population parameter, e.g., PRESIDENTIAL APPROVAL (V29). • Run SPSS on V29 for the whole population (N = 19,973; adjusted/valid N = 17,485 after removing missing data). • Population parameter = 9333/17485 = 53.4% • SPSS allows us to take random samples of any size out of this population. • Say n = 1500 • For each such sample, calculate the corresponding sample statistic and see how it fluctuates/varies from sample to sample.

TABLE OF SAMPLING RESULTS • Population parameter = 58.5% (V29 Presidential Approval, 1972-2000) • Table shows sample statistics for 20 samples of each size

| Sample # | n = 15 | (Dev.) | n = 150 | (Dev.) | n = 1500 | (Dev.) |
|---|---|---|---|---|---|---|
| 1 | 56.3 | -2.2 | 61.0 | +2.5 | 60.9 | +2.4 |
| 2 | 58.1 | -0.4 | 61.9 | +3.4 | 57.3 | -1.2 |
| 3 | 61.8 | +3.3 | 61.2 | +2.7 | 59.0 | +0.5 |
| 4 | 61.4 | +2.9 | 63.3 | +4.8 | 57.5 | -1.0 |
| 5 | 90.2 | +31.7 | 59.9 | +1.4 | 58.7 | +0.2 |
| 6 | 39.8 | -18.7 | 60.3 | +1.8 | 60.5 | +2.0 |
| 7 | 60.2 | +1.7 | 58.5 | 0.0 | 59.1 | +0.6 |
| 8 | 64.1 | +5.6 | 54.2 | -4.3 | 57.5 | -1.0 |
| 9 | 56.0 | -2.5 | 49.4 | -9.1 | 59.9 | +1.4 |
| 10 | 76.5 | +18.0 | 60.1 | +1.6 | 58.8 | +0.3 |
| 11 | 40.2 | -18.3 | 61.5 | +3.0 | 58.2 | -0.3 |
| 12 | 57.8 | -0.7 | 53.4 | -5.1 | 58.8 | +0.3 |
| 13 | 76.2 | +17.7 | 47.9 | -10.6 | 58.2 | -0.3 |
| 14 | 59.8 | +1.3 | 58.2 | -0.3 | 57.5 | -1.0 |
| 15 | 61.4 | +2.9 | 60.5 | +2.0 | 58.5 | 0.0 |
| 16 | 56.5 | -2.0 | 49.6 | -8.9 | 58.0 | -0.5 |
| 17 | 68.2 | +9.7 | 53.0 | -5.5 | 58.7 | +0.2 |
| 18 | 55.5 | -3.0 | 50.8 | -7.7 | 56.6 | -1.9 |
| 19 | 58.4 | -0.1 | 56.3 | -2.2 | 57.0 | -1.5 |
| 20 | 45.7 | -12.8 | 58.8 | +0.3 | 59.5 | +1.0 |
| Mean | 60.2 | +1.7 | 57.0 | -1.5 | 58.5 | 0.0 |
| Mean Ab. Dev. | 7.8 | 7.8 | 3.9 | 3.9 | 0.9 | 0.9 |
| Standard Dev. | 11.7 | 11.7 | 4.8 | 4.8 | 1.1 | 1.1 |
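The demonstration above can be imitated without SPSS or the ANES/SETUPS file. The following is a minimal sketch, assuming a synthetic stand-in population of 17,485 cases in which 58.5% approve (the actual survey data are not used); the function names and the seed are illustrative only. It draws 20 samples of each size and reports how far, on average, the sample statistics land from the population parameter.

```python
import random

def approval_sample_stat(population, n, rng):
    """Percent approving in one simple random sample drawn without replacement."""
    sample = rng.sample(population, n)
    return 100.0 * sum(sample) / n

def mean_abs_deviation(population, n, trials, rng):
    """Average distance (in points) between sample statistics and the parameter."""
    parameter = 100.0 * sum(population) / len(population)
    stats = [approval_sample_stat(population, n, rng) for _ in range(trials)]
    return sum(abs(s - parameter) for s in stats) / trials

rng = random.Random(2)  # fixed seed so the run is repeatable

# Synthetic stand-in population (an assumption, not the real data):
# 17,485 valid cases, about 58.5% of them coded 1 = approve.
N = 17485
approvers = round(0.585 * N)
population = [1] * approvers + [0] * (N - approvers)

mad_by_n = {n: mean_abs_deviation(population, n, 20, rng) for n in (15, 150, 1500)}
for n, mad in mad_by_n.items():
    print(f"n = {n:4d}: mean absolute deviation = {mad:.1f} points")
```

The exact figures depend on the seed, but the deviations should shrink markedly as n grows, as in the table.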

Sampling (cont.) • Sampling is indispensable for many types of research, in particular public opinion and voting behavior research, because it is impossible, prohibitively expensive, and/or self-defeating to study every unit in (typically large) populations. • Non-random sampling gives no assurance of producing samples that are representative of the populations from which they are drawn. (Indeed, it often is not clear how to define the population from which many non-random samples are drawn, e.g., call-in polls.) • Random or probability sampling provides an expectation of producing representative samples, in the sense that random sampling statistics are unbiased (i.e., on average they equal true population parameters) and they are subject to a calculable (and controllable, by varying sample size and other factors) degree of sampling error.

Sampling (cont.) • More formally, most random sample statistics are • (approximately) normally distributed • with an average value equal to the corresponding population parameter, and • a variability (sampling error) that • is mainly a function of sample size n (as well as variability within the population sampled), and • can be calculated on the basis of the laws of probability. • When parameters and statistics are percentages, the magnitude of sampling error is commonly expressed in terms of a margin of error of ± X%. • The margin of error ± X% gives the magnitude of the 95% confidence interval for the sample statistic, which can be interpreted in the following way.

Margin of Error • Suppose that the Gallup Poll reports that the President’s current approval rating is 62%, subject to a margin of error of 3%. • This means: • Gallup drew one random sample (of size n = ~1500) that produced a sample statistic of 62%. • If [hypothetically] Gallup had taken a great many random samples of the same size n = 1500 from the same population at the same time, the different samples would have given varying sample statistics (approval ratings). • But 95% of these sample statistics would give approval ratings within 3 percentage points of the true population parameter (i.e., the Presidential approval rating we would get if [hypothetically] we took a complete and wholly successful census). • Put more practically (given that Gallup took just one sample), we can be 95% confident that the actual sample statistic of 62% lies within 3 percentage points of the true parameter; • Therefore, we are 95% confident that the President's “true” approval rating lies within the range of 62 ± 3%, i.e., from 59% to 65%.
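The "great many hypothetical samples" reading of the margin of error can be checked by simulation. This is a sketch under stated assumptions: the true approval rate is taken to be 62% (hypothetical; in practice it is unknown), and each respondent is an independent draw, which approximates sampling from a very large population. The function name `coverage` is illustrative.

```python
import random

def coverage(p, n, margin, trials, rng):
    """Share of simulated sample percentages within `margin` points of the true percentage."""
    hits = 0
    for _ in range(trials):
        # One simulated poll: count respondents who approve.
        approvals = sum(1 for _ in range(n) if rng.random() < p)
        stat = 100.0 * approvals / n
        if abs(stat - 100.0 * p) <= margin:
            hits += 1
    return hits / trials

rng = random.Random(0)  # fixed seed so the run is repeatable
share_within = coverage(p=0.62, n=1500, margin=3.0, trials=1000, rng=rng)
print(f"{share_within:.1%} of simulated samples fall within 3 points of the parameter")
```

With these inputs the share should come out at or above roughly 95%, since a margin of 3 points is slightly generous for n = 1500.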

Margin of Error (cont.) • But you should ask: how can Gallup say that its poll has a margin of error of 3%, when they actually took just one sample, not the repeated samples hypothetically referred to above? • The answer is that the margins of error of a random sample can be calculated mathematically, using the laws of probability (in the same way one can calculate the probability of being dealt a particular hand in a card game or of winning a lottery). • This is the sense in which the margin of error of random samples is calculable, but that of non-random samples is not calculable.

Theoretical Probabilities of Different Sample Statistics • Consider the following population: • a deck of cards with N = 52. • Of course, we know all the characteristics (parameters) of this population (e.g., the percent of cards in the deck that are red, clubs, aces, etc.). • But let’s consider what we expect will happen if we take repeated (very small) random samples out of this population and determine the corresponding sample statistic in each sample.

Example #1 • Let the population parameter of interest be the percent of cards in the deck that are red (which we know is 50%). • Now suppose we run the following sampling experiment. We see what will happen if we estimate the value of this parameter by drawing a random sample and using the corresponding sample statistic, i.e., the percent of cards in the sample that are red. • We take one or more random samples of size n by shuffling the deck, dealing out n cards, and observing them. • While we know that the sample statistic will vary from sample to sample, we can calculate how likely we are to get any specific sample statistic using the laws of probability. • For simplicity, suppose we take samples • of size just n = 2, and • that we sample “with replacement.”

Example #1 (cont.) • On any draw (following replacement on the second and any subsequent draws), the probability of getting a red card is .5 (since half the cards in the population are red) and the probability of getting a non-red (black) card is also .5 .

Example #2 • Let the population parameter of interest be the percent of cards in the deck that are diamonds (which we know is 25%). • On any draw (following replacement on the second or subsequent draws), the probability of getting a diamond is .25 (since a quarter of the cards in the population are diamonds) and the probability of getting a non-diamond (hearts, clubs, or spades) card is .75.

Examples #1 and #2 • The point of these examples is this: • Given any population with a given population parameter, when we draw a sample of a given size from the population, we can in principle calculate the probability of getting a particular sample statistic. • Don’t worry – you will not be asked to make such calculations from scratch. • Survey researchers do not make such calculations either. • A very simple formula can provide one such calculation to a very good approximation. • Alternatively, one can refer to tables (typically found at the back of statistics books).
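For samples drawn with replacement, the calculation behind Examples #1 and #2 is the binomial distribution. The sketch below (the function name is illustrative) lists every possible sample percentage for n = 2 together with its probability.

```python
from math import comb

def sample_stat_distribution(p, n):
    """Map each possible sample percentage to its probability (sampling with replacement)."""
    return {
        100.0 * k / n: comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n + 1)
    }

red = sample_stat_distribution(0.5, 2)        # Example #1: percent red
diamonds = sample_stat_distribution(0.25, 2)  # Example #2: percent diamonds

for label, dist in (("red", red), ("diamonds", diamonds)):
    for percent, prob in dist.items():
        print(f"{label}: sample statistic {percent:5.1f}% has probability {prob:.4f}")
```

For red cards the sample statistic is 0%, 50%, or 100% with probabilities .25, .5, and .25; for diamonds the probabilities are .5625, .375, and .0625. In both cases the expected value of the statistic equals the population parameter.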

The Inverse Square Root Law • Mathematical analysis shows that random sampling error is (as you would expect) inversely (or negatively) related to the size of the sample, • that is, smaller samples have larger sampling error, while larger samples have smaller error. • However, this is not a linear inverse relationship, • e.g., doubling sample size does not cut sampling error in half; • rather sampling error is inversely related to the square root of sample size. • For example, if a random sample of a given size has a margin of error of ± 6%, we can reduce this margin of error by increasing the sample size, but • we cannot do this by doubling the size of the sample; rather • we must take a sample four times as large to cut the margin of error in half (to ± 3%).

The Inverse Square Root Law (cont.) • In general, if Sample 1 and Sample 2 have sizes n1 and n2 respectively, and sampling errors e1 and e2 respectively, we have relationship (1), which is called the inverse square root law: • (1) e1 / e2 = √n2 / √n1 • Note however that (1) does not actually allow you to calculate the magnitude of sampling error associated with a sample of a given size.
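A short sketch of how the inverse square root law is used, assuming relationship (1) has the usual form e1 / e2 = √(n2 / n1). The function names and the starting sample size of 100 are illustrative, not from the slides.

```python
from math import sqrt

def scaled_error(e1, n1, n2):
    """Sampling error at size n2, given error e1 at size n1, using e1/e2 = sqrt(n2/n1)."""
    return e1 * sqrt(n1 / n2)

def size_for_error(e1, n1, e2):
    """Sample size needed to move from error e1 (at size n1) to a target error e2."""
    return n1 * (e1 / e2) ** 2

# Start from a hypothetical sample of 100 with a 6-point margin of error.
print(scaled_error(6.0, 100, 200))    # doubling n shrinks the error, but not by half
print(scaled_error(6.0, 100, 400))    # quadrupling n halves it
print(size_for_error(6.0, 100, 3.0))  # size required for a 3-point margin
```

Doubling the sample only brings the margin down to about 4.2 points; it takes four times the sample to reach 3 points.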

The Inverse Square Root Law (cont.) • For simple random samples and sample statistics that are percentages, statement (2) is approximately true: • (2) margin of error ≈ ± 100% / √n • Note that (2) allows you to calculate the actual margin of error associated with a sample of any size (where the sample statistic is a percentage). • Remember this margin of error is the 95% confidence interval.
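A sketch of formula (2), taken here to be the ± 100%/√n rule used elsewhere in these slides. For comparison it also computes the textbook normal-approximation margin, 1.96 standard errors of a proportion; that second formula is a standard result brought in for illustration and does not appear in the slides.

```python
from math import sqrt

def margin_of_error(n):
    """Approximate 95% margin of error, in percentage points: 100 / sqrt(n)."""
    return 100.0 / sqrt(n)

def margin_of_error_exact(n, p=0.5):
    """Normal-approximation margin: 1.96 standard errors of a proportion p."""
    return 100.0 * 1.96 * sqrt(p * (1 - p) / n)

for n in (15, 150, 1500):
    print(f"n = {n:4d}: rule of thumb ±{margin_of_error(n):.1f}, "
          f"1.96 SE at p = .5 ±{margin_of_error_exact(n):.1f}")
```

At p = .5 the two agree to within about 2%, and for parameters nearer 0% or 100% the 1.96 SE margin is smaller, which is why 100%/√n is best read as a maximum.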

Table: Maximum Sampling Error by Sample Size (Table 3.4, Weisberg et al., p. 74) • Note: Gallup and SRC (ANES) do not use simple random samples. • These figures [and also formula (2)] give maximum sampling errors that occur when the population is heterogeneous, i.e., the population parameter is not close to 0% or 100%.

Compare Column Deviations and ME • Margins of error from 100%/√n: ± 26% for n = 15, ± 8% for n = 150, and ± 2.6% for n = 1500. • Remember: 95% of all sample statistics should fall within the margin of error. • Compare these margins with the (Dev.) columns in the Table of Sampling Results above (population parameter = 58.5%, 20 samples of each size).

Sampling With and Without Replacement • Examples 1 and 2 assumed sampling with replacement; that is, we • shuffled the deck, drew out the first card, and observed whether it was red or diamond; • put the first card back in the deck (“replaced it”), shuffled the deck again, drew out a second card (possibly the same card as before), and observed it; • put the second card back in the deck, and continued in this manner until we had a sample of the desired size. • Note that, if we sample with replacement, we can draw a sample that is larger than the population (because cards may appear in the same sample multiple times).

Sampling With and Without Replacement (cont.) • However, the more natural way in which we might select a random sample of n cards is to shuffle the deck and then simply deal out n cards. • This is called sampling without replacement. • In this case, no card can appear more than once in the sample, and • we cannot draw a sample larger than n = 52 = N. • But the probability calculations become considerably more burdensome. • The probability of getting a red card on the first draw is .5, but • given that we get a red card on the first draw, the probability of getting a red card on the second draw is no longer .5 (= 26/52) but 25/51, and the probability of getting a black card on the second draw is no longer .5 (= 26/52) but 26/51. • But if the sampling fraction is very small, there is almost no difference between sampling with and without replacement.
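The two-card case can be worked out exactly with fractions. This sketch compares the probabilities of each possible sample (two reds, one of each color) under the two sampling methods; the variable names are illustrative.

```python
from fractions import Fraction

RED, N = 26, 52
BLACK = N - RED

# With replacement: the deck is restored before each draw.
p_two_reds_with = Fraction(RED, N) * Fraction(RED, N)
p_mixed_with = 2 * Fraction(RED, N) * Fraction(BLACK, N)

# Without replacement: the first card is gone before the second draw.
p_two_reds_without = Fraction(RED, N) * Fraction(RED - 1, N - 1)
p_mixed_without = 2 * Fraction(RED, N) * Fraction(BLACK, N - 1)

print("two reds:", p_two_reds_with, "vs", p_two_reds_without)
print("one red, one black:", p_mixed_with, "vs", p_mixed_without)
```

Without replacement the lopsided sample (two reds) becomes slightly less likely (25/102 instead of 1/4) and the balanced sample slightly more likely (26/51 instead of 1/2), which is the sense in which sampling error is a little smaller.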

Sampling With and Without Replacement (cont.) • In practice, survey researchers • sample without replacement, but • calculate the sampling error associated with their samples as if they were sampling with replacement because the latter calculations are much easier. • Moreover, sampling error resulting from sampling without replacement is always (at least slightly) smaller than that resulting from sampling with replacement. • To take an extreme example, a sample of size n = N • has zero sampling error if you sample without replacement (you have a complete census), but • has some sampling error if you sample with replacement. • Furthermore, survey research typically involves relatively small samples from huge populations (giving very small sampling fractions), in which case the two sampling methods are equivalent for all practical purposes.

Implications of the Inverse Square Root Law • Increasing sample size in order to reduce sampling error is subject to diminishing marginal returns. • Quite small samples have sampling errors that are manageable for many purposes. • Additional research resources are usually better invested in reducing other types of (non-sampling) errors. • Sample statistics for population subgroups have larger margins of error than those for the whole population. • For example, if a poll estimates the President's popularity in the public as a whole at 62% with a margin of error of about ± 3%, and the same poll estimates his popularity among men at (say) 60% (and women at 64%), the latter statistics are subject to a margin of error of about ± 4.2% (3% x √2 ≈ 3% x 1.41). • Likewise, the estimate of his popularity among African-Americans (about 10% of the population and sample) has a margin of error of about ± 9.5% (3% x √10 ≈ 3% x 3.16). • If research focuses importantly on such subgroups, it is desirable to use either (i) a larger than normal sample size or (ii) a stratified sample (with a higher sampling fraction in the smaller subgroup).
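The subgroup arithmetic follows directly from the inverse square root law: a subgroup that makes up a given share of the sample has a sample size of (share x n), so its margin is the overall margin divided by the square root of the share. A minimal sketch, with an illustrative function name:

```python
from math import sqrt

def subgroup_margin(overall_margin, share):
    """Margin of error for a subgroup making up `share` (0 to 1) of the sample."""
    return overall_margin / sqrt(share)

print(f"men or women (half the sample): ±{subgroup_margin(3.0, 0.5):.1f}")
print(f"a group that is 10% of the sample: ±{subgroup_margin(3.0, 0.1):.1f}")
```

With an overall margin of 3 points, this yields roughly 4.2 points for a half-sample subgroup and roughly 9.5 points for a one-tenth subgroup.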

A Counter-Intuitive Implication • Notice that this discussion (including both the 100%/√n formula and Weisberg’s Table 3.4) refers only to the sample size n and it makes no reference to the population size N (or to the sampling fraction n/N). • This is because (for the most part) sampling error depends on absolute sample size, and not on sample size relative to population size (i.e., the sampling fraction). • This is precisely true if samples are drawn with replacement, i.e., if it is theoretically possible for any given unit in the population to be drawn into the same sample two or more times. • Otherwise, i.e., if samples are drawn without replacement [which is the common practice], the statement is true for all practical purposes, provided the sampling fraction is fairly small, e.g., a sampling fraction of about 1/100 or less. • In survey research, of course, the sampling fraction is typically much smaller than this; • for the NES, on the order of 1/100,000.

Counter-Intuitive Implication (cont.) • If in fact we do draw a sample without replacement and with a high sampling fraction (e.g., 1/10), the only “problem” is that sampling error will be less than formula (2) and Table 3.4 indicate. • If the sampling fraction is 1 [i.e., n = N] and the sample is drawn without replacement, sampling error is zero [you have a census]. • If we sample with replacement, sample size can increase without limit and, in particular, can exceed population size. • An implication of this consideration is that, if a given margin of error is desired, a local survey requires a sample size almost as large as a national survey with the same margin of error. • Thus, in so far as costs are proportionate to sample size, good local surveys cost almost as much as national ones. • Only in the past decade or so have frequent good quality pre-election state polls been available. • Implication for identifying “battleground states”
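How much smaller the error is when sampling without replacement can be sketched with the finite population correction, √((N − n)/(N − 1)). That correction is a standard textbook result and is not given in these slides; it is applied here to the 100%/√n rule purely for illustration, and the function name is hypothetical.

```python
from math import sqrt

def margin_with_fpc(n, N):
    """100/sqrt(n) margin scaled by the finite population correction sqrt((N - n)/(N - 1))."""
    return (100.0 / sqrt(n)) * sqrt((N - n) / (N - 1))

print(f"national poll, n = 1500, N = 200m: ±{margin_with_fpc(1500, 200_000_000):.2f}")
print(f"state poll,    n = 1500, N = 2.6m: ±{margin_with_fpc(1500, 2_600_000):.2f}")
print(f"campus survey, n = 900,  N = 9000: ±{margin_with_fpc(900, 9000):.2f}")
print(f"whole deck,    n = 52,   N = 52:   ±{margin_with_fpc(52, 52):.2f}")
```

The national and state margins are essentially identical, the 1/10 sampling fraction trims the margin only modestly, and a complete census (n = N) has no sampling error at all.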

Note: there are about 11,000 kidney cancer deaths in the US each year, so about 1 person in every 30,000 dies of kidney cancer each year.

The Response Rate • Drawn sample: the units of the population (potential respondents in a survey) randomly drawn into the sample. • Completed sample: the units in the drawn sample from which data is successfully collected; i.e., • in survey research, the potential respondents who are successfully interviewed. • Completion (or response) rate: the size of the completed sample as a percent of drawn sample. • A low response rate has two problems: • it increases sampling error (based on the size of the completed sample), and • much more importantly, non-respondents are largely self-selected or otherwise not randomly selected from the drawn sample. • While the size of the completed sample is (we hope) a large fraction of the drawn sample, it is not [we know] a random sample of the drawn sample, and therefore • the completed sample is not a fully random sample of the population as a whole, which implies that • sample statistics may be biased in more or less unknown ways. • Practical implication: survey researchers should invest a lot of resources into trying to get the highest reasonably feasible response rate. • This is much better use of resources than drawing a larger sample to get a larger completed sample with no better response rate.

Example: A Random Sample of UMBC Students • Define the population precisely, e.g., full-time undergraduates [N = 9,000] • Acquire a sampling frame [list of all students] and assign a number to each unit in the population (each student). • Use a Table of Random Numbers or some other random mechanism to select a sample of the desired size (say n = 900): • Sampling fraction is 900/9,000 = 1/10. • Systematic random sample: • pick a random number between 1 and 10, and then • pick that student and every 10th student thereafter • Simple random sample: • with or without replacement? • Stratify the sample? • Observe [interview] students in sample [response rate < 100%] • Use sample statistics to estimate population parameter(s) of interest. • Calculate margin of error: • about ±100%/√900 = ±100%/30 = ±3.3% if SRS with replacement, but • a bit smaller if we sample without replacement, or • a bit larger if we use a systematic random sample.
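The selection step in this example can be sketched with Python's pseudo-random generator standing in for a Table of Random Numbers. Students are represented only by their frame numbers 1 to 9,000; the function names are illustrative, and the systematic version assumes N is an exact multiple of n.

```python
import random

def simple_random_sample(N, n, rng):
    """Draw n distinct unit numbers from 1..N; every subset of size n is equally likely."""
    return sorted(rng.sample(range(1, N + 1), n))

def systematic_sample(N, n, rng):
    """Pick a random start among the first N // n units, then every (N // n)th unit after it."""
    step = N // n
    start = rng.randint(1, step)  # randint includes both endpoints
    return list(range(start, N + 1, step))

rng = random.Random(42)  # fixed seed so the run is repeatable
srs = simple_random_sample(9000, 900, rng)
systematic = systematic_sample(9000, 900, rng)

print("SRS, first five units:", srs[:5])
print("Systematic, first five units:", systematic[:5])
```

Both procedures give every student a 1/10 chance of selection, but the systematic procedure can produce only 10 distinct samples, whereas the SRS can produce any subset of 900 students.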

How to Select Random Samples (See back of last page of Handout #2)

Problem: Often a Simple Sampling Frame is Not Available • ANES vs. British Election Studies: • The BES population is all “enrolled voters,” as opposed to the “voting age population” used by the ANES. • The BES therefore has a simple sampling frame available, • i.e., the UK list of all enrolled voters (which is both more inclusive and less duplicative than “voter registration” lists in U.S. states). • Thus BES can draw a simple random sample of this population. • The resulting sample is unclustered, but • since the UK is a small country and BES uses telephone interviews, this does not present a problem. • ANES samples the “voting age population” (VAP) from a geographically extensive area for personal interviews. • ANES must therefore use a (non-simple) multi-stage sampling method • that produces a clustered sample, • which facilitates personal interviewing.

Example of Two-Stage Sampling • Suppose we want a representative sample (n = 2000) of U.S. college students [N≈ 15,000,000]. • No simple sampling frame exists and it would be extremely burdensome to create one. • U.S. Department of Education can provide us with a list of all U.S. colleges and universities [N≈ 4000] • with [approximate] student enrollment for each. • We select a first-stage sample of institutions of size (say) n = 100, each institution having a probability of selection proportional to its size. • We then contact the Registrar’s Office at each of the 100 institutions to get a list of all students at each selected college. • We then use these lists as simple sampling frames to select 100 second-stage simple random samples of size (say) n = 20 students at each institution.

Example of Two-Stage Sampling (cont.) • Pooling the second-stage samples of students creates a representative national sample of college students of size n = 2000. • If some USDE enrollment figures turn out to be wrong, we can correct this by weighting the student cases unequally. • An important advantage (if we are using personal interviews to collect the data) is that this student sample is clustered, so • interviewers need to go to only 100 locations, not almost 2000. • Its sampling error is calculable and is somewhat greater than that for an SRS of the same size. • We can compensate for this by increasing the sample size a bit. • Suppose we • took an SRS of colleges at the first stage, and • used a uniform sampling fraction at the second stage. • This also would produce a representative (unbiased) sample, • but it would have a larger sampling error.
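Why the two-stage design yields a representative sample can be seen by multiplying the two selection probabilities. The sketch below assumes the first stage gives each institution a selection probability of exactly 100 x (its enrollment / total enrollment), which requires that no institution enroll more than 1/100 of all students; the function name and the two example enrollments are hypothetical.

```python
import math

def overall_selection_probability(enrollment, total_enrollment,
                                  institutions_drawn=100, students_per_institution=20):
    """Approximate chance that one student at an institution of the given size is sampled."""
    # Stage 1: institution chosen with probability proportional to its size.
    p_institution = institutions_drawn * enrollment / total_enrollment
    # Stage 2: simple random sample of a fixed number of students at that institution.
    p_student_given_institution = students_per_institution / enrollment
    return p_institution * p_student_given_institution

TOTAL = 15_000_000
small_college = overall_selection_probability(500, TOTAL)
large_university = overall_selection_probability(40_000, TOTAL)

print(f"student at a 500-student college:      {small_college:.6f}")
print(f"student at a 40,000-student university: {large_university:.6f}")
```

The enrollment cancels out, so every student has the same overall chance of selection, 2000/15,000,000. A student at a large university is more likely to have the institution chosen but correspondingly less likely to be picked once it is.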

Stratified Sampling • We might also stratify the sample by selecting separate samples of appropriate size from (for example) • community colleges [if included in population], • four-year colleges, and • universities, and/or • different regions of the country, • religious or other affiliations, etc. • Such stratification reduces sampling error a bit compared with non-stratified samples of the same size. • Stratification is especially useful if we want to compare two subgroups of unequal size (e.g., students at public vs. private institutions, white vs. non-white students, in-state vs. out-of-state students, etc.). • Stratify by subgroups and draw samples of equal size for each subgroup, with the result that statistics for each subgroup are subject to the same margin of error.
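The benefit of equal-size strata for subgroup comparisons can be illustrated with the 100%/√n rule. The 80/20 split between public and private students below is a hypothetical figure chosen for the illustration, as are the names.

```python
from math import sqrt

def margins_by_stratum(stratum_sizes):
    """Margin of error (100 / sqrt(n)) for each stratum's sample size."""
    return {name: 100.0 / sqrt(n) for name, n in stratum_sizes.items()}

# A total sample of 2000 allocated two ways across a hypothetical 80/20 population split.
proportional = margins_by_stratum({"public": 1600, "private": 400})
equal = margins_by_stratum({"public": 1000, "private": 1000})

print("proportional allocation:", proportional)
print("equal allocation:       ", equal)
```

Proportional allocation gives margins of 2.5 and 5.0 points, while equal allocation gives about 3.2 points for both groups. Estimates for the whole population then need the cases weighted back to the true proportions.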

ANES Multi-Stage Sampling • See Weisberg et al., pp. 49-53: • 1st Stage: stratified (by region) and weighted sample of about 120 primary sampling units (PSUs). • Metro areas and (clusters of) counties. • This sample of PSUs is used for a decade or more [see map, p. 51 ==>]. • ANES recruits and trains local interviewers in each PSU. • 2nd Stage: sample “blocks” within PSUs • 3rd Stage: sample houses within blocks • 4th Stage: sample of one adult in each house, • usually weighted by the number of persons of voting age in the household.

Non-Sampling Error • Error resulting from a low response rate (discussed earlier). • Non-coverage error: the sampling frame may not cover exactly the population of interest, and this may bias sample statistics a bit. • ANES non-coverage: • Alaska and Hawaii (until the 1990s) • Americans living abroad • institutionalized population, homeless, etc. • Measurement errors due to ambiguous, unclear, or otherwise poorly framed questions, poorly designed questionnaires, inappropriate interviewing circumstances, interviewer mistakes, etc. • Errors in data entry, coding, tabulation, or other aspects of data processing.

Non-Sampling Errors (cont.) • Note that all these are indeed non-sampling errors. • Data based on a complete census of the population (without sampling) would be subject to the same errors. • Once sample size reaches a reasonable size, extra resources are better devoted to increasing the response rate and reducing other kinds of non-sampling errors than to further increasing sample size. Herbert Weisberg, The New Science of Survey Research: The Total Survey Error Approach (2005)

How the Poll Was Conducted The latest New York Times/CBS News Poll is based on telephone interviews conducted Sept. 15 through Sept. 19, 2006 with 1,131 adults throughout the United States. Of these, 1,007 said they were registered to vote. [Response Rate?] The sample of telephone exchanges called was randomly selected by a computer from a complete list of more than 42,000 active residential exchanges across the country. The exchanges were chosen so as to assure that each region of the country was represented in proportion to its population [stratification]. Within each exchange, random digits were added to form a complete telephone number, thus permitting access to listed and unlisted numbers alike. Within each household, one adult was designated by a random procedure to be the respondent for the survey. The results have been weighted to take account of household size and number of telephone lines into the residence and to adjust for variation in the sample relating to geographic region, sex, race, marital status, age and education. In theory, in 19 cases out of 20, overall results based on such samples will differ by no more than three percentage points in either direction from what would have been obtained by seeking out all American adults. For smaller subgroups, the margin of sampling error is larger. Shifts in results between polls over time also have a larger sampling error. In addition to sampling error, the practical difficulties in conducting any survey of public opinion may introduce other sources of error into the poll. Variation in the wording and order of questions, for example, may lead to somewhat different results. Dr. Michael R. Kagay of Princeton, N.J., assisted The Times in its polling analysis. Complete questions and results are available at nytimes.com/polls.

Some Results from Supplementary Non-Political Questions

| Question | POLI Students | U.S. Adult Population* |
|---|---|---|
| Average Height (Men) | 70.0" | 69.3" |
| Average Height (Women) | 64.8" | 63.8" |
| Average Weight (Men) | 178 lbs | 190 lbs |
| Average Weight (Women) | 135 lbs | 163 lbs |
| Average # of Children | 2.82 | 2.05** |

* Census Bureau data based on large-scale surveys. ** Average number of children per woman.

Review • The Gallup Poll announces that, according to its most recent survey: • “62% of the voting age population approves of the way the President is handling his job in office.” • They also note that this survey has a margin of error of ± 3%. • What does this mean? • The Gallup organization is trying to estimate this population parameter: • the percent of the voting age population that approves of the way the President is handling his job in office. • The value of this population parameter is unknown. • That’s why Gallup is taking a survey. • Their survey produces a sample statistic of (approximately) 62%. • This is Gallup’s “best guess,” based on the data at hand, of the value of the unknown population parameter.

Review (cont.) • Their reported margin of error of ± 3% means this: • Gallup is 95% confident that the true population parameter lies in the interval 59%-65%. • Why does Gallup give a 95% confidence interval, rather than (say) a 90% or 99% confidence interval? • Only because it is a statistical convention to report 95% intervals. • What does Gallup mean when it says they are 95% confident that the true population parameter lies in this interval? • They mean that if (hypothetically) they were to take a great many samples of this type and size from this population with this parameter value, 95% of the statistics would be within 3 percentage points of the true population parameter.