Knowledge Representations

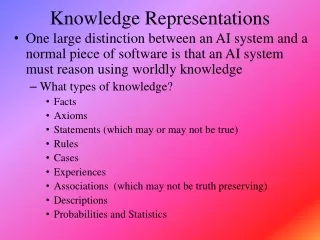

Presentation Transcript

Knowledge Representations • One large distinction between an AI system and a normal piece of software is that an AI system must reason using worldly knowledge • What types of knowledge? • Facts • Axioms • Statements (which may or may not be true) • Rules • Cases • Experiences • Associations (which may not be truth preserving) • Descriptions • Probabilities and Statistics

Types of Representations • Early systems used either • semantic networks or predicate calculus to represent knowledge • or used simple search spaces if the domain/problem had very limited amounts of knowledge (e.g., simple planning as in blocks world) • With the early expert systems in the 1970s, a significant shift took place to production systems, which combined representation and process (chaining) and even uncertainty handling (certainty factors) • Later, frames (an early version of OOP) were introduced • Problem-specific approaches were introduced, such as scripts and conceptual dependencies (CDs) for language representation • In the 1980s, there was a shift from rules to model-based approaches • Since the 1990s, Bayesian networks and hidden Markov models have become popular • First, we will take a brief look at some of the representations

Search Spaces • Given a problem expressed as a state space (whether explicitly or implicitly) • Formally, we define a search space as [N, A, S, GD] • N = set of nodes or states of a graph • A = set of arcs (edges) between nodes that correspond to the steps in the problem (the legal actions or operators) • S = a nonempty subset of N that represents start states • GD = a nonempty subset of N that represents goal states • Our problem becomes one of traversing the graph from a node in S to a node in GD • Example: • 3 missionaries and 3 cannibals are on one side of the river with a boat that can take at most 2 people across the river • how can we move the 3 missionaries and 3 cannibals across the river such that the cannibals never outnumber the missionaries on either side of the river (lest the cannibals start eating the missionaries)?

M/C Solution • We can represent a state as a 6-item tuple: (a, b, c, d, e, f) • a/b = number of missionaries/cannibals on left shore • c/d = number of missionaries/cannibals in boat • e/f = number of missionaries/cannibals on right shore • where a + b + c + d + e + f = 6 • a >= b (unless a = 0), c >= d (unless c = 0), and e >= f (unless e = 0) • Legal operations (moves) are • 0, 1, 2 missionaries get into boat • 0, 1, 2 missionaries get out of boat • 0, 1, 2 cannibals get into boat • 0, 1, 2 cannibals get out of boat • boat sails from left shore to right shore • boat sails from right shore to left shore
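The state-space formulation above can be sketched as a breadth-first search. This is only an illustrative sketch, not the slide's own encoding: it assumes a simplified state (missionaries on left, cannibals on left, boat on left?) that folds "get in, sail, get out" into a single move, and the names `is_safe`, `successors`, and `solve` are made up for this example.

```python
from collections import deque

TOTAL = 3  # number of missionaries, and also of cannibals
# (missionaries, cannibals) carried in one crossing; the boat holds 1 or 2 people
MOVES = [(1, 0), (2, 0), (0, 1), (0, 2), (1, 1)]

def is_safe(m_left, c_left):
    """Cannibals may not outnumber missionaries on either shore."""
    m_right, c_right = TOTAL - m_left, TOTAL - c_left
    left_ok = m_left == 0 or m_left >= c_left
    right_ok = m_right == 0 or m_right >= c_right
    return left_ok and right_ok

def successors(state):
    """Yield every legal state reachable with one boat crossing."""
    m_left, c_left, boat_on_left = state
    sign = -1 if boat_on_left else 1  # people leave the shore the boat is on
    for m, c in MOVES:
        nm, nc = m_left + sign * m, c_left + sign * c
        if 0 <= nm <= TOTAL and 0 <= nc <= TOTAL and is_safe(nm, nc):
            yield (nm, nc, not boat_on_left)

def solve(start=(3, 3, True), goal=(0, 0, False)):
    """Breadth-first search from the start state (S) to the goal state (GD)."""
    frontier = deque([start])
    parent = {start: None}
    while frontier:
        state = frontier.popleft()
        if state == goal:
            path = []
            while state is not None:
                path.append(state)
                state = parent[state]
            return path[::-1]
        for nxt in successors(state):
            if nxt not in parent:
                parent[nxt] = state
                frontier.append(nxt)
    return None

if __name__ == "__main__":
    for step in solve():
        print(step)
```

Because breadth-first search expands states level by level, the path it returns uses the fewest crossings possible under this encoding.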

Relationships • We often know stuff about objects (whether physical or abstract) • These objects have attributes (components, values) and/or relationships with other things • So, one way to represent knowledge is to enumerate the objects and describe them through their attributes and relationships • Common forms of such relationship representations are • semantic networks – a network consists of nodes which are objects and values, and edges (links/arcs) which are annotated to include how the nodes are related • predicate calculus – predicates are often relationships and arguments for the predicates are objects • frames – in essence, objects (from object-oriented programming) where attributes are the data members and the values are the specific values stored in those members – in some cases, they are pointers to other objects

Representations With Relationships Here, we see the same information being represented using two different representational techniques – a semantic network (above) and predicates (to the left)

Another Example: Blocks World • Here we see a real-world situation of three blocks and a predicate calculus representation for expressing this knowledge • We equip our system with rules, such as the rule below, to draw conclusions about and manipulate this blocks world • This rule says “if there does not exist a Y that is on X, then X is clear”

Semantic Networks • Collins and Quillian were the first to use semantic networks in AI, storing the objects and their relationships in the network • their intention was to represent English sentences • edges would typically be annotated with these descriptors or relations • isa – class/subclass • instance – the first object is an instance of the class • has – contains or has this as a physical property • can – has the ability to • made of, color, texture, etc. • A semantic network to represent the sentences “a canary can sing/fly”, “a canary is a bird/animal”, “a canary is a canary”, “a canary has skin”
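As a rough illustration of how such a network answers questions, the sketch below stores annotated edges in a dictionary and inherits properties along isa links. The placement of each property (for example, "fly" on bird and "skin" on animal) is an assumption, since the figure is not part of this transcript, and `lookup` is a hypothetical helper that assumes each node has at most one isa parent.

```python
# (node, relation) -> list of target nodes; the edge placement is assumed
network = {
    ("canary", "isa"): ["bird"],
    ("bird", "isa"): ["animal"],
    ("canary", "can"): ["sing"],
    ("bird", "can"): ["fly"],
    ("animal", "has"): ["skin"],
}

def lookup(node, relation):
    """Collect the values of `relation` for `node`, inheriting along isa links."""
    values = []
    while node is not None:
        values.extend(network.get((node, relation), []))
        parents = network.get((node, "isa"), [])
        node = parents[0] if parents else None  # single inheritance only
    return values

if __name__ == "__main__":
    print(lookup("canary", "can"))  # abilities stored on canary and on bird
    print(lookup("canary", "has"))  # property inherited from animal
```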

Representing Word Meanings • Quillian demonstrated how to use the semantic network to represent word meanings • each word would have one or more networks, with links that attach words to their definition “planes” • the word plant is represented as three planes, each of which has links to additional word planes

Frames • The semantic network requires a graph representation which may not be a very efficient use of memory • Another representation is the frame • the idea behind a frame was originally that it would represent a “frame of memory” – for instance, by capturing the objects and their attributes for a given situation or moment in time • a frame would contain slots where a slot could contain • identification information (including whether this frame is a subclass of another frame) • relationships to other frames • descriptors of this frame • procedural information on how to use this frame (code to be executed) • defaults for slots • instance information (or an identification of whether the frame represents a class or an instance)

Frame Example Here is a partial frame representing a hotel room The room contains a chair, bed, and phone where the bed contains a mattress and a bed frame (not shown)
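A frame with slots, defaults inherited from a parent frame, and attached procedures might be sketched as follows. The `Frame` class, the `walls` default, and the `describe` procedure are all hypothetical, chosen only to mirror the hotel-room description; the actual frame in the figure is not available in this transcript.

```python
class Frame:
    """A minimal frame: named slots plus an optional parent (isa) frame."""

    def __init__(self, name, isa=None, **slots):
        self.name = name
        self.isa = isa
        self.slots = slots

    def get(self, slot):
        """Look up a slot, falling back to parent frames for defaults."""
        frame = self
        while frame is not None:
            if slot in frame.slots:
                value = frame.slots[slot]
                # A callable slot plays the role of procedural information.
                return value(self) if callable(value) else value
            frame = frame.isa
        raise KeyError(slot)

# Hypothetical frames loosely following the hotel room example
room = Frame("room", walls=4)  # assumed default inherited by all rooms
bed = Frame("bed", contains=["mattress", "bed frame"])
hotel_room = Frame(
    "hotel room",
    isa=room,
    contains=["chair", bed, "phone"],
    describe=lambda f: f"{f.name} with {len(f.get('contains'))} items",
)

if __name__ == "__main__":
    print(hotel_room.get("walls"))     # default inherited from room
    print(hotel_room.get("describe"))  # result of the attached procedure
```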

Production Systems • A production system is • a set of rules (if-then or condition-action statements) • working memory • the current state of the problem solving, which includes new pieces of information created by previously applied rules • inference engine (the author calls this a “recognize-act” cycle) • forward-chaining, backward-chaining, a combination, or some other form of reasoning such as a sponsor-selector, or agenda-driven scheduler • conflict resolution strategy • when it comes to selecting a rule, there may be several applicable rules, which one should we select? the choice may be based on a conflict resolution strategy such as “first rule”, “most specific rule”, “most salient rule”, “rule with most actions”, “random”, etc

Chaining • The idea behind a production system’s reasoning is that rules will describe steps in the problem solving space where a rule might • be an operation in a game like a chess move • translate a piece of input data into an intermediate conclusion • piece together several intermediate conclusions into a specific conclusion • translate a goal into substeps • So a solution using a production system is a collection of rules that are chained together • forward chaining – reasoning from data to conclusions where working memory is sought for conditions that match the left-hand side of the given rules • backward chaining – reasoning from goals to operations where an initial goal is unfolded into the steps needed to solve that goal, that is, the process is one of subgoaling
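Forward chaining can be sketched in a few lines. This is a minimal sketch under simplifying assumptions: rules are (set of conditions, conclusion) pairs over plain symbols, conflict resolution is "first rule", and the example rules and facts are invented for illustration.

```python
def forward_chain(rules, facts):
    """Fire rules until no rule adds anything new to working memory."""
    memory = set(facts)
    fired = True
    while fired:
        fired = False
        for conditions, conclusion in rules:
            # A rule matches when all its conditions are in working memory.
            if conditions <= memory and conclusion not in memory:
                memory.add(conclusion)  # fire the first matching rule
                fired = True
                break                   # then restart the recognize-act cycle
    return memory

# Hypothetical rule base: A -> B, B and G -> E, E -> H
rules = [
    ({"A"}, "B"),
    ({"B", "G"}, "E"),
    ({"E"}, "H"),
]

if __name__ == "__main__":
    print(sorted(forward_chain(rules, {"A", "G"})))
```

Backward chaining would instead start from a goal such as H and recursively look for rules whose conclusion matches it, turning their conditions into subgoals.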

Example System: Water Jugs • Problem: given a 4-gallon jug (X) and a 3-gallon jug (Y), fill X with exactly 2 gallons of water • assume an infinite amount of water is available • Rules/operators (each right-hand side uses the values of X and Y from before the rule fires) • 1. If X = 0 then X = 4 (fill X) • 2. If Y = 0 then Y = 3 (fill Y) • 3. If X > 0 then X = 0 (empty X) • 4. If Y > 0 then Y = 0 (empty Y) • 5. If X + Y >= 3 and X > 0 then X = X – (3 – Y) and Y = 3 (fill Y from X) • 6. If X + Y >= 4 and Y > 0 then X = 4 and Y = Y – (4 – X) (fill X from Y) • 7. If X + Y <= 3 and X > 0 then X = 0 and Y = X + Y (empty X into Y) • 8. If X + Y <= 4 and Y > 0 then X = X + Y and Y = 0 (empty Y into X) • rule numbers used on the next slide
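The eight rules can be encoded directly as condition/action pairs and searched breadth-first. This is a sketch, not the slide's own solver: it assumes the state is the pair (X, Y), that each action computes the new state from the old values of X and Y, and that the search stops as soon as X = 2. The names `RULES` and `solve` are illustrative.

```python
from collections import deque

# (rule number, condition, action) over a state (x, y)
RULES = [
    (1, lambda x, y: x == 0, lambda x, y: (4, y)),                 # fill X
    (2, lambda x, y: y == 0, lambda x, y: (x, 3)),                 # fill Y
    (3, lambda x, y: x > 0, lambda x, y: (0, y)),                  # empty X
    (4, lambda x, y: y > 0, lambda x, y: (x, 0)),                  # empty Y
    (5, lambda x, y: x + y >= 3 and x > 0,
        lambda x, y: (x - (3 - y), 3)),                            # fill Y from X
    (6, lambda x, y: x + y >= 4 and y > 0,
        lambda x, y: (4, y - (4 - x))),                            # fill X from Y
    (7, lambda x, y: x + y <= 3 and x > 0, lambda x, y: (0, x + y)),  # empty X into Y
    (8, lambda x, y: x + y <= 4 and y > 0, lambda x, y: (x + y, 0)),  # empty Y into X
]

def solve(start=(0, 0)):
    """Return a shortest list of (rule number, resulting state) reaching X = 2."""
    frontier = deque([start])
    came_from = {start: None}  # state -> (previous state, rule number)
    while frontier:
        state = frontier.popleft()
        if state[0] == 2:
            steps = []
            while came_from[state] is not None:
                prev, rule_no = came_from[state]
                steps.append((rule_no, state))
                state = prev
            return steps[::-1]
        for rule_no, condition, action in RULES:
            if condition(*state):
                nxt = action(*state)
                if nxt not in came_from:
                    came_from[nxt] = (state, rule_no)
                    frontier.append(nxt)
    return None

if __name__ == "__main__":
    for rule_no, state in solve():
        print(f"rule {rule_no} -> {state}")
```

One six-step solution under these rules is 2, 8, 2, 6, 3, 8, passing through (0,3), (3,0), (3,3), (4,2), (0,2), and (2,0); the search may return a different sequence of the same length.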

Conflict Resolution Strategies • In a production system, what happens when more than one rule matches? • a conflict resolution strategy dictates how to select from between multiple matching rules • Simple conflict resolution strategies include • random • first match • most/least recently matched rule • rule which has matched for the longest/shortest number of cycles (refractoriness) • most salient rule (each rule is given a salience before you run the production system) • More complex resolution strategies might • select the rule with the most/least number of conditions (specificity/generality) • or most/least number of actions (biggest/smallest change to the state)

MYCIN • By the early 1970s, the production system approach was found to be more than adequate for constructing large scale expert systems • in 1971, researchers at Stanford began constructing MYCIN, a medical diagnostic system • it contained a very large rule base • it used backward chaining • to deal with the uncertainty of medical knowledge, it introduced certainty factors (sort of like probabilities) • in 1975, it was tested against medical experts and performed as well as or better than the doctors it was compared to • Sample rule: (defrule 52 if (site culture is blood) (gram organism is neg) (morphology organism is rod) (burn patient is serious) then .4 (identity organism is pseudomonas)) • In English: if the culture was taken from the patient’s blood and the gram of the organism is negative and the morphology of the organism is rods and the patient is a serious burn patient, then conclude that the identity of the organism is pseudomonas (.4 certainty)

MYCIN in Operation • Mycin’s process starts with “diagnose-and-treat” • repeat • identify all rules that can provide the conclusion currently sought • match right hand sides (that is, search for rules whose right hand sides match anything in working memory) • use conflict resolution to identify a single rule • fire that rule • find and remove a piece of knowledge which is no longer needed • find and modify a piece of knowledge now that more specific information is known • add a new subgoal (left-hand side conditions that need to be proved) • until the action done is added to working memory • Mycin would first identify the illness, possibly ordering more tests to be performed, and then given the illness, generate a treatment • Mycin consisted of about 600 rules

R1/XCON • Another success story is DEC’s R1 • later renamed XCON • This system would take customer orders and configure specific VAX computers for those orders including • completing the order if the order was incomplete • how the various components (drive and tape units, mother board(s), etc.) would be placed inside the mainframe cabinet • how the wiring would take place among the various components • R1 would perform forward chaining over about 10,000 rules • over a 6 year period, it configured some 80,000 orders with a 95-98% accuracy rating • ironically, whereas planning/design is viewed as a backward chaining task, R1 used forward chaining because, in this particular case, the problem is data driven, starting with user input of the computer system’s specifications • R1’s solutions were similar in quality to human solutions

R1 Sample Rules • Constraint rules • if device requires battery then select battery for device • if select battery for device then pick battery with voltage(battery) = voltage(device) • Configuration rules • if we are in the floor plan stage and there is space for a power supply and there is no power supply available then add a power supply to the order • if step is configuring, propose alternatives and there is an unconfigured device and no container was chosen and no other device that can hold it was chosen and selecting a container wasn’t proposed yet and no problems for selecting containers were identified then propose selecting a container • if the step is distributing a massbus device and there is a single port disk drive that has not been assigned to a massbus and there are no unassigned dual port disk drives and the number of devices that each massbus should support is known and there is a massbus that has been assigned at least one disk drive and that should support additional disk drives and the type of cable needed to connect the disk drive is known, then assign the disk drive to this massbus

Strong Slot-n-Filler Structures • To avoid the difficulties with Frames and Nets, Schank and Rieger offered two network-like representations that would have implied uses and built-in semantics: conceptual dependencies and scripts • the conceptual dependency was derived as a form of semantic network that would have specific types of links to be used for representing specific pieces of information in English sentences • the action of the sentence • the objects affected by the action or that brought about the action • modifiers of both actions and objects • they defined 11 primitive actions, called ACTs • every possible action can be categorized as one of these 11 • an ACT would form the center of the CD, with links attaching the objects and modifiers

Example CD • The sentence is “John ate the egg” • The INGEST act means to ingest an object (eat, drink, swallow) • the P above the double arrow indicates past tense • the INGEST action must have an object (the O indicates it was the object Egg) and a direction (the object went from John’s mouth to John’s insides) • we might infer that it was “an egg” instead of “the egg” as there is nothing specific to indicate which egg was eaten • we might also infer that John swallowed the egg whole as there is nothing to indicate that John chewed the egg!

The CD Theory ACTs • Is this list complete? • what actions are missing? • Could we reduce this list to make it more concise? • other researchers have developed other lists of primitive actions including just 3 – physical actions, mental actions and abstract actions

Complex Example • The sentence is “John prevented Mary from giving a book to Bill” • This sentence has two ACTs, DO and ATRANS • DO was not in the list of 11, but can be thought of as “caused to happen” • The c/ means a negative conditional; in this case it means that John caused this not to happen • The ATRANS is a giving relationship with the object being a Book and the action being from Mary to Bill – “Mary gave a book to Bill” • as with the previous example, there is no way of telling whether it is “a book” or “the book”

Scripts • The other structured representation developed by Schank (along with Abelson) is the script • a description of the typical actions that are involved in a typical situation • they defined a script for going to a restaurant • scripts provide an ability for default reasoning when information is not available that directly states that an action occurred • so we may assume, unless otherwise stated, that a diner at a restaurant was served food, that the diner paid for the food, and that the diner was served by a waiter/waitress • A script would contain • entry condition(s) and results (exit conditions) • actors (the people involved) • props (physical items at the location used by the actors) • scenes (individual events that take place) • The script would use the 11 ACTs from CD theory

Restaurant Script • The script does not contain atypical actions • although there are options such as whether the customer was pleased or not • There are multiple paths through the scenes to make for a robust script • what would a “going to the movies” script look like? would it have similar props, actors, scenes? how about “going to class”?

Knowledge Groups • One of the drawbacks of the knowledge representations demonstrated thus far is that all knowledge is grouped into a single, large collection of representations • the rules taken as a whole for instance don’t denote what rules should be used in what circumstance • Another approach is to divide the representations into logical groupings • this permits easier design, implementation, testing and debugging because you know what that particular group is supposed to do and what knowledge should go into it • it should be noted that by distributing the knowledge, we might use different problem solving agents for each set of knowledge so that the knowledge is stored using different representations

Knowledge Sources and Agents • Which leads us to the idea of having multiple problem solving agents • each agent is responsible for solving some specialized type of problem(s) and knows where to obtain its own input • each agent has its own knowledge sources, some internal, some external • since external agents may have their own forms of representation, the agent must know • how to find the proper agents • how to properly communicate with these other agents • how to interpret the information that it receives from these agents • how to recover from a situation where the expected agent(s) is/are not available

What is an Agent? • Agents are interactive problem solvers that have these properties • situated – the agent is part of the problem solving environment – it can obtain its own input from its environment and it can affect its environment through its output • autonomous – the agent operates independently of other agents and can control its own actions and internal states • flexible – the agent is both responsive and proactive – it can go out and find what it needs to solve its problem(s) • social – the agent can interact with other agents including humans • Some researchers also insist that agents have • mobility – have the ability to move from their current environment to a new environment (e.g., migrate to another processor) • delegation – hand off portions of the problem to other agents • cooperation – if multiple agents are tasked with the same problem, can their solutions be combined?

The Semantic Web • The WWW is a collection of data and knowledge in an unstructured format • Humans often can take knowledge from disparate sources and put together a coherent picture, can problem solving agents? • Agents on the semantic web all have their own capabilities and know where to look for knowledge • Whether a static source, or an agent that can provide the needed information through its own processing, or from a human • The common approach is to model the knowledge of a web site using an ontology • ontologies give agents the ability to translate the results of another agent, or the data provided from a website, into a version of knowledge that they can understand and use

Knowledge Acquisition and Modeling • Expert System construction used to be a trial-and-error sort of approach with the knowledge engineers • once they had knowledge from the experts, they would fill in their knowledge base and test it out • By the end of the 80s, it was discovered that creating an actual domain model was the way to go – build a model of the knowledge before implementing anything • A model might be • a dependency graph of what can cause what to happen • or an associational model which is a collection of malfunctions and the manifestations we would expect to see from those malfunctions • or a functional model where component parts are enumerated and described by function and behavior • The emphasis changed to knowledge acquisition tools (KADS) • domain experts enter their knowledge as a graphical model that contains the component parts of the item being diagnosed/designed, their functions, and rules for deciding how to diagnose or design each one

A NASA Example • Here is a model developed by NASA for a Livingston propulsion system for rockets • a reactive self-configuring autonomous system • knowledge modeled using propositional calc (instead of predicate calc – there are a finite number of elements, each will be modeled by its own proposition) • Helium is the fuel tank • Oxidizer is mixed to cause the fuel to burn • Acc is the accelerometer which, along with sensors in the valves, is used as input to control the system • Pyro valves are used as control – once they change state, they stay in that state – so they are used to change the flow of fuel when an error is detected, opening or closing a new pathway from tank to engine

Model (Architecture) for the System • The idea is that the configuration manager tries to keep the spacecraft moving but at the lowest cost configuration • Sensors feed into the ME (mode estimator) to determine if the system is functioning and in the lowest cost configuration • If not, the MR (mode reconfiguration) plans a new mode by determining what valves to open and close • Since this is a spacecraft, the output of the MR is a set of actions that cause valves to open or close directly • The high level planner generates a sequence of hardware configuration goals, such as the amount of propellant that should be used; it is the configuration manager that must translate these goals into actions

VT – an Elevator’s Design The design of an elevator can be used to generate a diagnostic system for elevator problems, or in VT’s case, a system that can design new elevators

Reasoning with Uncertainty • Representations generally represent knowledge as fact • However, often, knowledge and the use of the knowledge brings with it a degree of uncertainty • how can we represent and reason with uncertainty? • We find two forms of uncertainty • unsure input • unknown – do not know the answer so you have to say unknown • unclear – answer doesn’t fit question (e.g., not yes but 80% yes) • vague data – is a 100 degree temp a “high fever” or just “fever”? • ambiguous/noisy data – data may not be easily interpretable • non-truth preserving knowledge (most rules are associational, not truth preserving) • unlike “if you are a man then you are mortal”, a doctor might reason from symptoms to diseases • “all men are mortal” denotes a class/subclass relationship, which is truth preserving • but the symptom to disease reasoning is based on associations and is not guaranteed to be true

Certainty Factors • First used in the Mycin system, the idea is that we will attribute a measure of belief to any conclusion that we draw • CF(H | E) = MB(H | E) – MD(H | E) • certainty factor for hypothesis H given evidence E is the measure of belief we have for H minus measure of disbelief we have for H • CFs are applied to hypotheses that are drawn from rules • CFs can be combined as we associate a CF with each condition and each conclusion of each rule • To use CFs, we need • to annotate every rule with a CF value (this comes from the expert) • ways to combine CFs when we use AND, OR, → • Combining rules are straightforward: • for AND use min • for OR use max • for → use * (multiplication)

CF Example • Assume we have the following rules: • A → B (.7) • A → C (.4) • D → F (.6) • B AND G → E (.8) • C OR F → H (.5) • We know A, D and G are true (so each has a value of 1.0) • B is .7 (A is 1.0, the rule is true at .7, so B is true at 1.0 * .7 = .7) • C is .4 • F is .6 • B AND G is min(.7, 1.0) = .7 (G is 1.0, B is .7) • E is .7 * .8 = .56 • C OR F is max(.4, .6) = .6 • H is .6 * .5 = .30
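The arithmetic in this example can be reproduced with a short sketch. The helper names `cf_and`, `cf_or`, and `cf_rule` are made up here; they simply apply min for AND, max for OR, and multiplication for rule application, as described on the slides.

```python
def cf_and(*cfs):
    """AND of conditions: take the weakest certainty."""
    return min(cfs)

def cf_or(*cfs):
    """OR of conditions: take the strongest certainty."""
    return max(cfs)

def cf_rule(premise_cf, rule_cf):
    """Apply a rule: certainty of the premise times the rule's own CF."""
    return premise_cf * rule_cf

# Known facts
cf = {"A": 1.0, "D": 1.0, "G": 1.0}

cf["B"] = cf_rule(cf["A"], 0.7)                      # A -> B (.7)
cf["C"] = cf_rule(cf["A"], 0.4)                      # A -> C (.4)
cf["F"] = cf_rule(cf["D"], 0.6)                      # D -> F (.6)
cf["E"] = cf_rule(cf_and(cf["B"], cf["G"]), 0.8)     # B AND G -> E (.8)
cf["H"] = cf_rule(cf_or(cf["C"], cf["F"]), 0.5)      # C OR F -> H (.5)

if __name__ == "__main__":
    for name in "BCFEH":
        print(name, round(cf[name], 2))
```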

Continued • Another combining rule is needed when we can conclude the same hypothesis from two or more rules • we already used C OR F → H (.5) to conclude H with a CF of .30 • let’s assume that we also have the rule E → H (.5) • since E is .56, we have H at .56 * .5 = .28 • We now believe H at .30 and at .28, which is true? • the two rules both support H, so we want to draw a stronger conclusion in H since we have two independent means of support for H • We will use the formula CF1 + CF2 – CF1*CF2 • CF(H) = .30 + .28 – .30 * .28 = .496 • our belief in H has been strengthened through two different chains of logic
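A small sketch of this combining formula follows. It covers only the case shown on the slide, where both certainty factors are non-negative; `cf_combine` is a hypothetical name, and folding it over a list with `functools.reduce` is one assumed way to handle more than two supporting rules.

```python
from functools import reduce

def cf_combine(cf1, cf2):
    """Combine two non-negative CFs that support the same hypothesis."""
    return cf1 + cf2 - cf1 * cf2

def cf_combine_all(cfs):
    """Fold the pairwise formula over any number of supporting CFs."""
    return reduce(cf_combine, cfs)

if __name__ == "__main__":
    print(round(cf_combine(0.30, 0.28), 3))  # H supported by two rules
```

The result never exceeds 1.0 and is at least as large as either input, which matches the intuition that independent support strengthens a conclusion.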

Fuzzy Logic • Prior to CFs, Zadeh introduced fuzzy logic to introduce “shades of grey” into logic • other logics are two-valued, true or false only • Here, any proposition can take on a value in the interval [0, 1] • Being a logic, Zadeh introduced the algebra to support logical operators of AND, OR, NOT, → • X AND Y = min(X, Y) • X OR Y = max(X, Y) • NOT X = (1 – X) • X → Y = X * Y • Where the values of X, Y are determined by where they fall in the interval [0, 1]

Fuzzy Set Theory • Fuzzy sets are to normal sets what fuzzy logic is to logic • fuzzy set theory is based on fuzzy values from fuzzy logic but includes set operations instead of logic operations • The basis for fuzzy sets is defining a fuzzy membership function for a set • a fuzzy set is a set of items along with their membership values in the set, where the membership value defines how close that item is to being in that set • Example: the set tall might be denoted as • tall = { x | f(x) = 1.0 if x > 6’2”, .8 if x > 6’, .6 if x > 5’10”, .4 if x > 5’8”, .2 if x > 5’6”, 0 otherwise} • so we can say that a person is tall at .8 if they are 6’1”, or we can say that the set of tall people is {Anne/.2, Bill/1.0, Chuck/.6, Fred/.8, Sue/.6}
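The step membership function for tall can be written out directly. In this sketch heights are given in inches (an assumption made for convenience, so 6’2” is 74 and 5’6” is 66), and the function name `tall` is illustrative.

```python
def tall(height_in):
    """Membership in the fuzzy set 'tall' for a height in inches."""
    # (threshold in inches, membership value), checked from tallest down
    thresholds = [(74, 1.0), (72, 0.8), (70, 0.6), (68, 0.4), (66, 0.2)]
    for limit, degree in thresholds:
        if height_in > limit:
            return degree
    return 0.0

if __name__ == "__main__":
    print(tall(73))  # a person who is 6'1"
    print(tall(60))  # a person who is 5'0"
```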

Fuzzy Membership Function • Typically, a membership function is a continuous function (often represented in a graph form like above) • given a value y, the membership value for y is u(y), determined by tracing the curve and seeing where it falls on the u(x) axis • How do we define a membership function? • this is an open question

Using Fuzzy Logic/Sets • 1. fuzzify the input(s) using fuzzy membership functions • 2. apply fuzzy logic rules to draw conclusions • we use the previous rules for AND, OR, NOT, → • 3. if conclusions are supported by multiple rules, combine the conclusions • like CF, we need a combining function, this may be done by computing a “center of gravity” using calculus • 4. defuzzify conclusions to get specific conclusions • defuzzification requires translating a numeric value into an actionable item • Fuzzy logic is often applied to domains where we can easily derive fuzzy membership functions and have a few rules but not a lot • fuzzy logic begins to break down when we have more than a dozen or two rules

Example • We have an atmospheric controller which can increase or decrease the temperature of the air and can increase or decrease the fan based on these simple rules • if air is warm and dry, decrease the fan and increase the coolant • if air is warm and not dry, increase the fan • if air is hot and dry, increase the fan and increase the coolant slightly • if air is hot and not dry, increase the fan and coolant • if air is cold, turn off the fan and decrease the coolant • Our input obviously requires the air temperature and the humidity; the membership function for air temperature is shown to the right • if it is 60, it would be considered cold 0, warm 1, hot 0 • if it is 85, it would be cold 0, warm .3 and hot .7

Continued • Temperature = 85, humidity indicates dry .6 • hot .7, warm .3, cold 0, dry .6, not dry .4 (not dry = 1 – dry = 1 – .6) • Rule 1 has “warm and dry” • warm is .3, dry is .6, so “warm and dry” = min(.3, .6) = .3 • Rule 2 has “warm and not dry” • min(.3, .4) = .3 • Rule 3 has “hot and dry” • min(.7, .6) = .6 • Rule 4 has “hot and not dry” • min(.7, .4) = .4 • Rule 5 gives us 0 since cold is 0 • Our conclusions from the first four rules are to • decrease the fan and increase the coolant at levels of .3 • increase the fan at a level of .3 • increase the fan at .6 and increase the coolant slightly • increase the fan and the coolant at .4 • To combine our results, we might change the fan by –.3 + .3 + .6 + .4 = 1.0 and (assuming “increase slightly” means increase by ¼) increase the coolant by .3 + .6/4 + .4 = .85 • Finally, we defuzzify “increase the fan by 1.0” and “increase the coolant by .85” to actionable amounts
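The rule strengths for this input can be checked with a short sketch that applies min for AND and 1 – x for NOT to the five rules of the controller as stated on the previous slide. Only the firing strength of each rule is computed; the combination and defuzzification steps are left out because the slides do not fix a single method. The names `f_and`, `f_not`, and `strengths` are illustrative. Note that with dry at .6, “hot and dry” evaluates to min(.7, .6) = .6 and “hot and not dry” to min(.7, .4) = .4.

```python
def f_and(x, y):
    """Fuzzy AND: minimum of the two membership values."""
    return min(x, y)

def f_not(x):
    """Fuzzy NOT: complement of the membership value."""
    return 1 - x

# Fuzzified inputs for temperature = 85 and the given humidity
temp = {"cold": 0.0, "warm": 0.3, "hot": 0.7}
dry = 0.6
not_dry = f_not(dry)

# Firing strength of each rule's condition
strengths = {
    "warm and dry": f_and(temp["warm"], dry),
    "warm and not dry": f_and(temp["warm"], not_dry),
    "hot and dry": f_and(temp["hot"], dry),
    "hot and not dry": f_and(temp["hot"], not_dry),
    "cold": temp["cold"],
}

if __name__ == "__main__":
    for condition, value in strengths.items():
        print(f"{condition}: {value:.1f}")
```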

Using Fuzzy Logic • The most common applications for fuzzy logic are for controllers • devices that, based on input, make minor modifications to their settings – for instance • air conditioner controller that uses the current temperature, the desired temperature, and the number of open vents to determine how much to turn up or down the blower • camera aperture control (up/down, focus, negate a shaky hand) • a subway car for braking and acceleration • Fuzzy logic has been used for expert systems • but the systems tend to perform poorly when more than just a few rules are chained together • in our previous example, we just had 5 stand-alone rules • when we chain rules, the fuzzy values are multiplied (e.g., .5 from one rule * .3 from another rule * .4 from another rule, our result is .06)

Dempster-Shafer Theory • The D-S Theory goes beyond CF and Fuzzy Logic by providing us two values to indicate the utility of a hypothesis • belief – as before, like the CF or fuzzy membership value • plausibility – adds to our belief by determining if there is any evidence (belief) for opposing the hypothesis • We want to know if h is a reasonable hypothesis • we have evidence in favor of h giving us a belief of .7 • we have no evidence against h, this would imply that the plausibility is greater than the belief • p(h) = 1 – b(~h) = 1 (since we have no evidence against h, b(~h) = 0) • Consider two hypotheses, h1 and h2 where we have no evidence in favor of either, so b(h1) = b(h2) = .5 • we have evidence that suggests ~h2 is less believable than ~h1 so that b(~h2) = .3 and b(~h1) = .5 • h1 = [.5, .5] and h2 = [.5, .7] so h2 is more believable
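The [belief, plausibility] intervals in these examples follow from p(h) = 1 – b(~h). A tiny sketch, with `belief_interval` as a made-up helper name:

```python
def belief_interval(b_h, b_not_h):
    """Return (belief, plausibility) where plausibility = 1 - b(~h)."""
    return (b_h, 1 - b_not_h)

if __name__ == "__main__":
    h = belief_interval(0.7, 0.0)   # evidence for h, none against it
    h1 = belief_interval(0.5, 0.5)
    h2 = belief_interval(0.5, 0.3)
    for name, (bel, pl) in [("h", h), ("h1", h1), ("h2", h2)]:
        print(f"{name}: [{bel:.1f}, {pl:.1f}]")
```

A wider interval means less evidence against the hypothesis, which is why h2 comes out as more believable than h1 even though both have the same belief.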

Computing Multiple Beliefs • D-S theory gives us a way to compute the belief for any number of subsets of the hypotheses, and modify the beliefs as new evidence is introduced • the formula to compute belief (given below) is a bit complex • so we present an example to better understand it • but the basic idea is this: we have a belief value for how well some piece of evidence supports a group (subset) of hypotheses • we introduce new evidence and multiply the belief from the first with the belief in support of the new evidence for those hypotheses that are in the intersection of the two subsets • the denominator is used to normalize the computed beliefs, and is 1 unless some of the intersections are empty (null) subsets
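The idea described above corresponds to what is usually called Dempster's rule of combination. Since the slide's formula is not reproduced in this transcript, the sketch below uses the standard textbook form and should be read as an assumption about what was intended. The hypothesis names ("flu", "cold", "allergy") and belief values are invented purely for illustration.

```python
from itertools import product

def combine(m1, m2):
    """Combine two belief assignments (frozenset of hypotheses -> belief)."""
    combined = {}
    conflict = 0.0
    for (a, wa), (b, wb) in product(m1.items(), m2.items()):
        inter = a & b
        if inter:
            # Multiply beliefs and credit the intersection of the two subsets.
            combined[inter] = combined.get(inter, 0.0) + wa * wb
        else:
            # Belief that falls on the empty set is conflicting evidence.
            conflict += wa * wb
    if conflict >= 1.0:
        raise ValueError("the two pieces of evidence are totally conflicting")
    # Normalize; the denominator is 1 when no intersection is empty.
    return {s: w / (1.0 - conflict) for s, w in combined.items()}

# Hypothetical example: three hypotheses and two pieces of evidence
theta = frozenset({"flu", "cold", "allergy"})           # all hypotheses
m1 = {frozenset({"flu", "cold"}): 0.6, theta: 0.4}      # first evidence
m2 = {frozenset({"flu"}): 0.7, theta: 0.3}              # new evidence

if __name__ == "__main__":
    for subset, belief in combine(m1, m2).items():
        print(sorted(subset), round(belief, 2))
```

In this made-up example no intersection is empty, so the denominator is 1 and the combined beliefs are just the summed products.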