Neural heuristics for Problem Solving

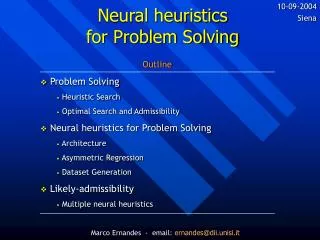

10-09-2004, Siena. Marco Ernandes - email: ernandes@dii.unisi.it

Outline
• Problem Solving
• Heuristic Search
• Optimal Search and Admissibility
• Neural heuristics for Problem Solving
  • Architecture
  • Asymmetric Regression
  • Dataset Generation
• Likely-admissibility
  • Multiple neural heuristics

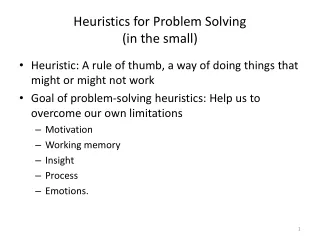

Problem Solving
• Problem solving (PS) is a decision-making process that aims to find a sequence of operators taking the agent from a given state to a goal state.
• The relevant notions are: states, the successor function (which defines the operators available at each state), the initial state, the goal state, and the problem space.
[Figure: a scrambled 15-puzzle configuration (initial state x0), the ordered goal configuration (g), and a path P between them]

Heuristic Search
• Search algorithm (best-first, greedy, …): defines a strategy for exploring the search tree.
• Heuristic information h(n): typically the estimated distance from node n (of the search tree) to the goal.
• Heuristic usage policy: how h(n) and g(n) are combined to obtain f(n).
[Figure: search tree rooted at x0 with a node n on a path P towards the goal g, annotated with g(n) and h(n)]

Optimal Heuristic Search
• Goal: retrieve the solution that minimizes the path cost C (the sum of all operator costs), i.e. C = C*.
• Requires:
  • an optimal search algorithm (A*, IDA*, BS*)
  • an admissible heuristic, h(n) ≤ h*(n) (e.g. Manhattan)
  • an admissible heuristic usage policy, f(n) = g(n) + h(n)
• Complexity:
  • Optimal solving: NP-hard for sliding-tile puzzles of arbitrary size.
  • Non-admissible search is polynomial.
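The three requirements above can be put together in a short sketch. It is only an illustration under stated assumptions: it uses the 8-puzzle instead of the 15-puzzle to stay small, and the names `manhattan`, `successors`, `ida_star` and the goal layout `GOAL` are choices made for this example, not taken from the slides.

```python
from typing import Callable, List, Optional, Tuple

State = Tuple[int, ...]  # tiles in row-major order, 0 is the blank
SIDE = 3                 # assumption: 8-puzzle, to keep the example small
GOAL: State = (1, 2, 3, 4, 5, 6, 7, 8, 0)


def manhattan(state: State) -> int:
    """Sum of row and column distances of every tile from its goal cell."""
    total = 0
    for index, tile in enumerate(state):
        if tile == 0:
            continue  # the blank is not counted, which keeps h admissible
        goal_index = tile - 1
        total += abs(index // SIDE - goal_index // SIDE)
        total += abs(index % SIDE - goal_index % SIDE)
    return total


def successors(state: State) -> List[State]:
    """States reachable by sliding one tile into the blank (unit cost each)."""
    blank = state.index(0)
    row, col = divmod(blank, SIDE)
    result = []
    for d_row, d_col in ((-1, 0), (1, 0), (0, -1), (0, 1)):
        new_row, new_col = row + d_row, col + d_col
        if 0 <= new_row < SIDE and 0 <= new_col < SIDE:
            target = new_row * SIDE + new_col
            tiles = list(state)
            tiles[blank], tiles[target] = tiles[target], tiles[blank]
            result.append(tuple(tiles))
    return result


def ida_star(start: State, h: Callable[[State], float]) -> Optional[List[State]]:
    """Iterative-deepening A* with the usage policy f(n) = g(n) + h(n)."""
    path = [start]

    def search(g: int, bound: float) -> float:
        node = path[-1]
        f = g + h(node)
        if f > bound:
            return f          # smallest f value that exceeded the bound
        if node == GOAL:
            return -1.0       # sentinel: solution found, stored in `path`
        minimum = float("inf")
        for child in successors(node):
            if child in path:
                continue      # skip states already on the current path
            path.append(child)
            result = search(g + 1, bound)
            if result == -1.0:
                return result
            minimum = min(minimum, result)
            path.pop()
        return minimum

    bound = h(start)
    while True:
        result = search(0, bound)
        if result == -1.0:
            return path
        if result == float("inf"):
            return None       # nothing left to expand
        bound = result
```

For instance, `ida_star((1, 2, 3, 4, 5, 6, 0, 7, 8), manhattan)` returns a path of three states ending in `GOAL`. Because `h` is passed in as a parameter, any other estimator (including a learned one) can be plugged in, although optimality is only guaranteed when that estimator is admissible.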

Our idea about admissible search
• The best performance in the literature comes from memory-based heuristics (Disjoint Pattern DBs, Korf & Taylor, 2002):
  • Offline phase: all possible subproblems are solved and the results are stored.
  • Online phase: a node is decomposed into subproblems and the database is queried.
• Our idea:
  • Memory-based heuristics have little future, because they only shift NP-completeness from time to space.
  • ANNs (as universal approximators) can provide effective non-memory-based, "nearly" admissible heuristics.

Neural heuristics
• We used standard MLP networks.
[Figure: a puzzle configuration is encoded as a binary vector (01001000010…) and fed to the network. OFFLINE PHASE: extraction of an example, error check, backpropagation (BP). ONLINE PHASE: request an estimation, heuristic evaluation h(n).]

– Neural heuristics – Outputs, Targets & Inputs
• This is a regression problem, so we used one linear output neuron (its value is modified a posteriori using information from Manhattan-like heuristics).
• Two possible targets:
  • A) "direct" target: o(x) = h*(x)
  • B) "gap" target: o(x) = h*(x) − hM(x) (which also takes advantage of Manhattan)
• Input coding:
  • we tried 3 different vector-valued codings
  • future work: represent configurations as graphs, to obtain learning that does not depend on the problem dimension (e.g. exploit what was learned on the 15-puzzle in the 24-puzzle).
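The two target definitions can be written down as a small sketch. The function names are hypothetical, and the clamp in `heuristic_from_gap` is an assumption of this example: the slides only say that the output is modified a posteriori using Manhattan-like information, without giving the rule.

```python
def direct_target(h_star: float) -> float:
    """Option A: the network learns the optimal cost h*(x) itself."""
    return h_star


def gap_target(h_star: float, h_manhattan: float) -> float:
    """Option B: the network learns only the gap h*(x) - hM(x)."""
    return h_star - h_manhattan


def heuristic_from_gap(network_output: float, h_manhattan: float) -> float:
    """Rebuild an estimate of h*(x) from a predicted gap.

    Assumption of this sketch: since hM(x) <= h*(x), a negative predicted
    gap cannot be right, so it is clamped to zero before adding hM(x).
    """
    return h_manhattan + max(0.0, network_output)
```

With the gap target the network only has to model what Manhattan misses, and the reconstructed estimate never falls below the Manhattan value.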

– Neural heuristics – Learning Algorithm
• Standard backpropagation, but with a coefficient of asymmetry w (0 < w < 1) introduced in the error function. This stresses admissibility:
  • Ed = (1 − w)(od − td) if (od − td) < 0
  • Ed = (1 + w)(od − td) if (od − td) > 0
• We used a dynamically decreasing w, so that underestimation is stressed while learning is still easy and the constraint is relaxed later on.
• A momentum term α = 0.8 helped to smooth learning.
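The asymmetric error above can be sketched in a few lines. The function `asymmetric_error` follows the two cases on the slide; the geometric schedule in `decaying_w` is purely hypothetical, since the slides say that w decreases during training but not how.

```python
def asymmetric_error(output: float, target: float, w: float) -> float:
    """Error signal Ed: underestimates are damped, overestimates amplified."""
    if not 0.0 < w < 1.0:
        raise ValueError("w must lie strictly between 0 and 1")
    difference = output - target
    if difference < 0:
        return (1.0 - w) * difference   # output below target: underestimate
    return (1.0 + w) * difference       # output above target: overestimate


def decaying_w(epoch: int, w_start: float = 0.9, decay: float = 0.99) -> float:
    """Hypothetical schedule: w shrinks geometrically with the epoch number."""
    return w_start * decay ** epoch
```

With w = 0.5, an output of 28 against a target of 30 gives an error of −1.0, while an output of 32 gives 3.0, so an overestimate of the same size is penalized three times as strongly.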

– Neural heuristics – Asymmetric Regression
• This is a general idea for backpropagation learning.
• It suits any regression problem where overestimates do more harm than underestimates (or vice versa).
• Machine learning of heuristics is an ideal application field.
• We believe that totally admissible neural heuristics are theoretically impossible, or at least impractical.
[Figure: symmetric error vs. asymmetric error]

– Neural heuristics – Dataset Generation
• Examples are configurations that have previously been solved optimally. This looks like a big problem, but …
• Few examples are sufficient for good learning. A few hundred are enough to search faster than with Manhattan. We used a training set of 25000 examples (about 500 million times smaller than the problem space).
• These examples are mainly "easy" ones: over 60% of the 15-puzzle examples have d < 30, whereas only 0.1% of random cases have d < 30 [see 15-puzzle search tree distribution].
• The whole process is fully parallelizable.
• Future work: auto-feed learning.

– Neural heuristics – Are sub-symbolic heuristics "online"?
• We believe so, even though there is an offline learning phase, for two reasons:
  • 1. Nodes visited during search are generally UNSEEN. This is much like what humans often do with learned heuristics: we do not retrieve a heuristic value from a database, we compute it using the inner rules that the heuristic provides.
  • 2. The learned heuristic should be dimension-independent: learning on small problems could be used for bigger problems (e.g. 8-puzzle → 15-puzzle). This is not possible with memory-based heuristics.

Likely-Admissible Search
• We relax the optimality requirement in a probabilistic sense (rather than in terms of solution quality, as ε-admissible search does).
• Why is this a better approach than ε-admissibility?
  • It can still retrieve TRULY OPTIMAL solutions.
  • It still changes the nature of the search complexity.
  • Search can rely on any heuristic, unlike ε-admissible search, which works only with heuristics already proven admissible.
  • Search can be combined more effectively with statistical machine learning techniques: using universal approximators, heuristics can be generated automatically.

– Likely-Admissible Search – A statistical framework
• One prerequisite: a prior statistical analysis of how often our h overestimates.
• P(h ≤ h*) shall be the probability that heuristic h underestimates h* for any given state x ∈ X.
• ph shall be the probability of optimally solving a problem using h and A*.
• To estimate optimality from admissibility: [Equation: ph as a function of P(h ≤ h*)]
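The equation for this step is not preserved in the transcript, so the following is only one plausible reading, under explicit assumptions: A* returns an optimal solution whenever h does not overestimate on the nodes of an optimal path, and the errors on those d nodes are treated as independent. Under these assumptions the probability of an optimal solution is at least the per-state probability raised to the power d. The names `optimality_lower_bound`, `p_under` and `depth` are hypothetical.

```python
def optimality_lower_bound(p_under: float, depth: int) -> float:
    """Lower bound on the probability of an optimal solution.

    p_under: probability that h does not overestimate h* on a given state.
    depth:   number of nodes d on an optimal solution path.
    Assumes independent errors along the path (an assumption of this sketch).
    """
    if not 0.0 <= p_under <= 1.0:
        raise ValueError("p_under must be a probability")
    if depth < 0:
        raise ValueError("depth must be non-negative")
    return p_under ** depth
```

Even a heuristic that underestimates on 99% of states gives a bound of only about 0.6 at depth 50, which shows how quickly the guarantee decays with solution length.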

– Likely-Admissible Search – Multiple Heuristics
• To enrich the heuristic information we can generate many heuristics and use them simultaneously, as: [equation]
• Thus: [equation]
• If, for simplicity, all j heuristics are assumed to have the same given P(h ≤ h*): [equation]
• j grows logarithmically with d and pH.
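The equations of this slide are missing from the transcript, so the sketch below is a reconstruction under stated assumptions rather than the author's formulas. It assumes that the heuristics are combined by taking their minimum, that their errors are independent, and that each one avoids overestimation with the same probability. The combined heuristic then overestimates only when all j do, and the number of heuristics needed for a target optimality pH at depth d follows by solving the resulting bound for j. All function names are hypothetical.

```python
import math
from typing import Any, Callable, Sequence


def combined_heuristic(heuristics: Sequence[Callable[[Any], float]], state: Any) -> float:
    """Assumed combination rule: the most conservative (smallest) estimate."""
    return min(h(state) for h in heuristics)


def p_underestimate_combined(p_under: float, j: int) -> float:
    """Probability that at least one of j independent heuristics does not overestimate."""
    return 1.0 - (1.0 - p_under) ** j


def heuristics_needed(p_under: float, depth: int, p_target: float) -> int:
    """Smallest j with (1 - (1 - p_under)**j) ** depth >= p_target."""
    if not (0.0 < p_under < 1.0 and 0.0 < p_target < 1.0 and depth > 0):
        raise ValueError("need 0 < p_under < 1, 0 < p_target < 1 and depth > 0")
    per_state = p_target ** (1.0 / depth)  # required per-state probability
    return math.ceil(math.log(1.0 - per_state) / math.log(1.0 - p_under))
```

With a per-heuristic probability of 0.9 and a target optimality of 0.9, three heuristics suffice at depth 50 and four at depth 500, which matches the logarithmic growth of j with d stated on the slide.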

– Likely-Admissible Search – Optimality prediction
• Unfortunately the last equation is very optimistic, since it assumes total error independence among the neural heuristics.
• For predictions we have to use [equation], which is:
  • extremely precise for optimality over 80%;
  • imprecise for low predictions.
• Predictions are much more accurate than those of ε-admissible search.

Experimental Results & Demo
• Compared to Manhattan:
  • IDA* with 1 ANN (optimality 30%): 1/1000 of the execution time, 1/15000 of the nodes visited.
  • IDA* with 2 ANN (optimality 50%): 1/500 of the time, 1/13000 of the nodes.
  • IDA* with 4 ANN-1 (optimality 90%): 1/70 of the time, 1/2800 of the nodes.
• Compared to DPDBs:
  • IDA* with 1 ANN (optimality 30%): between −17% and +13% nodes visited, between 1.4 and 3.5 times slower.
  • IDA* with 2 ANN (optimality 50%): −5% nodes visited, 5 times slower (but this could be parallelized completely!).
• Try the "demo" at: http://www.dii.unisi.it/~ernandes/samloyd/