Network Coding for Error Correction and Security


Network Coding for Error Correction and Security
Raymond W. Yeung, The Chinese University of Hong Kong

Outline
Introduction
Network Coding vs Algebraic Coding
Network Error Correction
Secure Network Coding
Applications of Random Network Coding in P2P
Concluding Remarks

A Network Coding Example: The Butterfly Network
[Figure: the butterfly network. Channels carry b1 or b2, except the bottleneck channel and the channels leaving it, which carry b1+b2.]

A Network Coding Example with Two Sources
[Figure: a network with two sources, one generating b1 and the other b2. Channels carry b1, b2, or b1+b2.]

Wireless/Satellite Application
[Figure: two users exchange b1 and b2 through a relay. At t = 1, b1 is sent; at t = 2, b2 is sent; at t = 3, b1+b2 is broadcast.]
50% saving for downlink bandwidth!
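The exchange in this slide can be sketched in a few lines. This is a minimal illustration, assuming single-bit messages and a relay that broadcasts the XOR of the two bits; the function and constant names are made up for this sketch.

```python
# Two-way exchange through a relay with XOR coding (illustrative sketch).

def relay_exchange(b1: int, b2: int):
    """Return (what user A decodes, what user B decodes)."""
    # t = 1: user A sends b1 to the relay. t = 2: user B sends b2.
    # t = 3: the relay broadcasts the single coded bit b1 + b2 (XOR).
    coded = b1 ^ b2
    a_decodes = coded ^ b1  # A already knows b1, so it recovers b2
    b_decodes = coded ^ b2  # B already knows b2, so it recovers b1
    return a_decodes, b_decodes

# Every pair of bits is exchanged correctly.
for b1 in (0, 1):
    for b2 in (0, 1):
        assert relay_exchange(b1, b2) == (b2, b1)

DOWNLINK_USES_FORWARDING = 2  # relay sends b1 and b2 separately
DOWNLINK_USES_CODING = 1      # relay sends b1 + b2 once
saving = 1 - DOWNLINK_USES_CODING / DOWNLINK_USES_FORWARDING
print(f"downlink saving: {saving:.0%}")
```

The saving is counted only on the downlink: both uplink transmissions are still needed.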

Two Themes of Network Coding
When there is 1 source to be multicast in a network, store-and-forward may fail to optimize bandwidth.
When there are 2 or more independent sources to be transmitted in a network (even for unicast), store-and-forward may fail to optimize bandwidth.
In short, information is NOT a commodity!

Model of a Point-to-Point Network
A network is represented by a graph G = (V,E) with node set V and edge (channel) set E.
A symbol from an alphabet F can be transmitted on each channel.
There can be multiple edges between a pair of nodes.

Single-Source Network Coding
The source node s generates an information vector x = (x1 x2 … xk) ∈ F^k.
What is the condition for a node t to be able to receive the information vector x?
Max-Flow Bound: If maxflow(t) < k, then node t cannot possibly receive x.

The Basic Results
If network coding is allowed, a node t can receive the information vector x iff maxflow(t) ≥ k, i.e., the max-flow bound can be achieved simultaneously by all such nodes t. (Ahlswede et al. 00)
Moreover, this can be achieved by linear network coding for a sufficiently large base field. (Li, Y and Cai, Koetter and Medard, 03)

Global Encoding Kernels of a Linear Network Code
Recall that x = (x1 x2 … xk) is the multicast message.
For each channel e, assign a column vector f_e such that the symbol sent on channel e is x · f_e. The vector f_e is called the global encoding kernel of channel e.
The global encoding kernel of a channel is analogous to a column in the generator matrix of a classical block code.
The global encoding kernel of an output channel at a node must be a linear combination of the global encoding kernels of the input channels.

An Example
k = 2, let x = (b1, b2)
[Figure: the butterfly network, with each channel labeled by the symbol it carries: b1, b2, or b1+b2.]
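As a rough check of this example, the sketch below writes down one global encoding kernel per channel of the butterfly network over GF(2) and confirms that each sink can tell all four messages apart. The node and channel names (a, b, c, d, t1, t2) are labels assumed for this sketch; they do not appear in the slides.

```python
from itertools import product

# Global encoding kernels f_e over GF(2); the symbol on channel e is x . f_e.
KERNELS = {
    "s->a": (1, 0), "s->b": (0, 1),
    "a->t1": (1, 0), "a->c": (1, 0),
    "b->c": (0, 1), "b->t2": (0, 1),
    "c->d": (1, 1),                    # bottleneck channel carries b1 + b2
    "d->t1": (1, 1), "d->t2": (1, 1),
}
SINK_INPUTS = {"t1": ("a->t1", "d->t1"), "t2": ("b->t2", "d->t2")}

def symbol(x, f):
    """Symbol sent on a channel with kernel f when the message is x."""
    return sum(xi * fi for xi, fi in zip(x, f)) % 2

def received(x, sink):
    """Vector of symbols arriving at a sink."""
    return tuple(symbol(x, KERNELS[e]) for e in SINK_INPUTS[sink])

def decodable(sink):
    """A sink decodes iff distinct messages give distinct received vectors."""
    outputs = {received(x, sink) for x in product((0, 1), repeat=2)}
    return len(outputs) == 4

# The kernel leaving node c is the sum of the kernels entering it.
kernel_sum = tuple((u + v) % 2 for u, v in zip(KERNELS["a->c"], KERNELS["b->c"]))
assert KERNELS["c->d"] == kernel_sum
print({sink: decodable(sink) for sink in SINK_INPUTS})
```

Each sink sees two linearly independent kernels, which is why both can recover (b1, b2).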

A Linear Multicast
A message of k symbols from a base field F is generated at the source node s.
A k-dimensional linear multicast has the following property: a non-source node t can decode the message correctly if and only if maxflow(t) ≥ k.
By the Max-flow bound, this is also a necessary condition for a node t to decode (for any given network code).
Thus the tightness of the Max-flow bound is achieved by a linear multicast, which always exists for sufficiently large base fields.

An (n,k) Code with d_min = d
Consider an (n,k) classical block code with minimum distance d.
Regard it as a network code on a combination network.
Since the (n,k) code can correct d-1 erasures, all the nodes at the bottom can decode.

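The Combination Network
[Figure: the combination network. The source node s has n outgoing channels; each node at the bottom receives n-d+1 of the n coded symbols.]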

For the nodes at the bottom, maxflow(t) = n-d+1.
By the Max-flow bound, k ≤ maxflow(t) = n-d+1, or d ≤ n-k+1, the Singleton bound.
Therefore, the Singleton bound is a special case of the Max-flow bound for network coding.
An MDS code is a classical block code that achieves tightness of the Singleton bound.
Since a linear multicast achieves tightness of the Max-flow bound, it is formally a network generalization of an MDS code.
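A small instance makes the argument concrete. The sketch below assumes the (3,2) single-parity-check code over GF(2), chosen here only as the simplest MDS example; it is not taken from the slides. Each bottom node of the combination network sees n-d+1 of the n coded symbols, and the code checks that every such node can decode.

```python
from itertools import combinations, product

N, K = 3, 2  # the (3,2) single-parity-check code over GF(2)

def encode(x):
    """Codeword (x1, x2, x1 + x2): one symbol per channel leaving s."""
    return (x[0], x[1], (x[0] + x[1]) % 2)

CODEBOOK = [encode(x) for x in product((0, 1), repeat=K)]

def min_distance(code):
    return min(sum(a != b for a, b in zip(u, v))
               for u, v in combinations(code, 2))

D = min_distance(CODEBOOK)

def bottom_node_decodes(positions):
    """A bottom node decodes iff its view separates all codewords."""
    views = {tuple(c[i] for i in positions) for c in CODEBOOK}
    return len(views) == len(CODEBOOK)

# One bottom node for every choice of N - D + 1 coded symbols.
ALL_DECODE = all(bottom_node_decodes(p)
                 for p in combinations(range(N), N - D + 1))

print("d =", D, "| Singleton limit n-k+1 =", N - K + 1,
      "| all bottom nodes decode:", ALL_DECODE)
```

Here d equals n-k+1, so the code meets the Singleton bound with equality, and k equals maxflow(t) = n-d+1 at every bottom node.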

Two Ramifications of Single-Source Network Coding
The starting point of classical coding theory and information-theoretic cryptography is the existence of a conduit through which we can transmit information from Point A to Point B without error.
Single-source network coding provides a new such conduit.
Therefore, we expect that both classical coding theory and information-theoretic cryptography can be extended to networks.

Point-to-Point Error Correction in a Network
Classical error-correcting codes are devised for point-to-point communications.
Such codes are applied to networks on a link-by-link basis.

[Figure: an intermediate node with a channel decoder on each input channel, followed by a network encoder and a channel encoder.]

A Motivation for Network Error Correction
Observation: Only the receiving nodes have to know the message transmitted; the intermediate nodes don’t.
In general, channel coding and network coding do not need to be separated.
Network Error Correction: Network error correction generalizes classical point-to-point error correction.

[Figure: the network codec.]

What Does Network Error Correction Do?
A distributed error-correcting scheme over the network.
Does not explicitly decode at intermediate nodes as in point-to-point error correction.
At a sink node t, if c errors can be corrected, it means that the transmitted message can be decoded correctly as long as the total number of errors, which can happen anywhere in the network, is at most c.

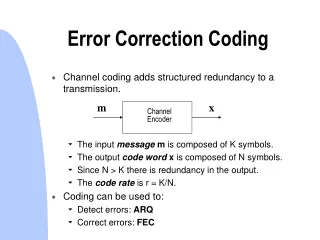

Classical Algebraic Coding
y = x + z, where y is the received vector, x is the codeword, and z is the error vector.
y, x, and z are all in the same space.

Minimum Distance: Classical Case
Hamming distance is the most natural distance measure.
For a code C, d_min = min d(v1,v2), where v1, v2 ∈ C and v1 ≠ v2.
If d_min = 2c+1, then C can
correct c errors,
detect 2c errors,
correct 2c erasures.
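The relation between d_min and the three capabilities can be tried on a toy code. The sketch below uses the length-5 binary repetition code, an example picked for this illustration rather than one from the slides, and assumes an odd d_min = 2c+1 as stated above.

```python
from itertools import combinations

def hamming_distance(u, v):
    """Number of positions in which u and v differ."""
    return sum(a != b for a, b in zip(u, v))

def min_distance(code):
    """Minimum Hamming distance over all pairs of distinct codewords."""
    return min(hamming_distance(u, v) for u, v in combinations(code, 2))

def capabilities(d_min):
    """Capabilities for d_min = 2c + 1 (odd d_min assumed)."""
    c = (d_min - 1) // 2
    return {"correct_errors": c, "detect_errors": 2 * c,
            "correct_erasures": 2 * c}

REPETITION_5 = ["00000", "11111"]
d = min_distance(REPETITION_5)
print(d, capabilities(d))
```

With d_min = 5 the code corrects 2 errors, detects 4 errors, or corrects 4 erasures.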

[Figure: sphere packing, with d_min marked.]

Coding Bounds: Classical Case
Upper bounds: Hamming bound, Singleton bound
Lower bound: Gilbert-Varshamov bound

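Network Coding
[Figure: the source node s transmits x into the network, errors z are added on the channels, and the sink nodes t, u, and v receive y_t, y_u, and y_v.]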

Input/Output Relation
The network code is specified by the local encoding kernels at each non-source node.
Fix a sink node t.
The codeword x, the error vector z, and the received vector y_t are all in different spaces.
In this tutorial, we consider only linear network codes. Then
y_t = x F_{s,t} + z F_t,
where F_{s,t} and F_t depend on t.
In the classical case, F_{s,t} and F_t are both the identity matrix.
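The input/output relation can be checked numerically on a small network. The sketch below simulates the butterfly network over GF(2) with an error symbol added on every channel, reads off the rows of F_{s,t} and F_t by sending unit vectors, and verifies the relation exhaustively. The channel numbering and all function names are assumptions made for this illustration.

```python
from itertools import product

# Butterfly network over GF(2). Channel indices (chosen for this sketch):
# 0: s->a  1: s->b  2: a->t1  3: a->c  4: b->c  5: b->t2  6: c->d  7: d->t1  8: d->t2
NUM_CHANNELS = 9
SOURCE_CHANNELS = (0, 1)  # x = (x1, x2) enters on these channels
# Local encoding: each channel sums (mod 2) the listed upstream channels.
UPSTREAM = {2: (0,), 3: (0,), 4: (1,), 5: (1,), 6: (3, 4), 7: (6,), 8: (6,)}
SINK_INPUTS = {"t1": (2, 7), "t2": (5, 8)}

def transmit(x, z, sink):
    """Received vector y_t(x, z); z[e] is the error added on channel e."""
    sym = [0] * NUM_CHANNELS
    for e in range(NUM_CHANNELS):  # indices are in topological order
        if e in SOURCE_CHANNELS:
            clean = x[SOURCE_CHANNELS.index(e)]
        else:
            clean = sum(sym[u] for u in UPSTREAM[e])
        sym[e] = (clean + z[e]) % 2
    return tuple(sym[e] for e in SINK_INPUTS[sink])

def unit(n, i):
    return tuple(int(j == i) for j in range(n))

def transfer_matrices(sink):
    """Rows of F_{s,t} and F_t, obtained by sending unit vectors."""
    zero_x, zero_z = (0, 0), (0,) * NUM_CHANNELS
    f_st = [transmit(unit(2, i), zero_z, sink) for i in range(2)]
    f_t = [transmit(zero_x, unit(NUM_CHANNELS, e), sink)
           for e in range(NUM_CHANNELS)]
    return f_st, f_t

def vec_mat(v, m):
    """Row vector times matrix over GF(2)."""
    return tuple(sum(v[i] * m[i][j] for i in range(len(v))) % 2
                 for j in range(len(m[0])))

def relation_holds(sink):
    """Check y_t = x F_{s,t} + z F_t for every x and every z."""
    f_st, f_t = transfer_matrices(sink)
    for x in product((0, 1), repeat=2):
        for z in product((0, 1), repeat=NUM_CHANNELS):
            rhs = tuple((a + b) % 2
                        for a, b in zip(vec_mat(x, f_st), vec_mat(z, f_t)))
            if transmit(x, z, sink) != rhs:
                return False
    return True

print(transfer_matrices("t1")[0], relation_holds("t1"), relation_holds("t2"))
```

Note that F_t has one row per channel of the network, while F_{s,t} has one row per channel leaving the source, so x, z, and y_t do live in different spaces.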

Distance Properties of Linear Network Codes (Yang, Y, Zhang 07)
The network Hamming distance can be defined for linear network codes.
Many concepts in algebraic coding based on the Hamming distance can be extended to network coding.

How to Measure the Distance between Two Codewords?
Fix both the network code and the codebook C, i.e., the set of all possible codewords transmitted into the network.
For a sink node t, y_t(x,z) = x F_{s,t} + z F_t.
For two codewords x1, x2 ∈ C, define their distance by
D_t^msg(x1,x2) = min_z w_H(z),
where the minimum is taken over all error vectors z such that y_t(x1,0) = y_t(x2,z), or y_t(x1,z) = y_t(x2,0).
Idea: D_t^msg(x1,x2) is the minimum Hamming weight of an error vector z that makes x1 and x2 indistinguishable at node t.
D_t^msg defines a metric on the input space of the linear network code.
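For a small network this distance can be found by brute force. The sketch below assumes the butterfly network over GF(2) with an error symbol on every channel, and searches error vectors in order of increasing Hamming weight until one makes the two codewords indistinguishable at the sink. It uses the first of the two conditions, y_t(x1,0) = y_t(x2,z). Channel numbering and names are assumptions of this sketch.

```python
from itertools import combinations

# Butterfly network over GF(2). Channel indices (chosen for this sketch):
# 0: s->a  1: s->b  2: a->t1  3: a->c  4: b->c  5: b->t2  6: c->d  7: d->t1  8: d->t2
NUM_CHANNELS = 9
SOURCE_CHANNELS = (0, 1)
UPSTREAM = {2: (0,), 3: (0,), 4: (1,), 5: (1,), 6: (3, 4), 7: (6,), 8: (6,)}
SINK_INPUTS = {"t1": (2, 7), "t2": (5, 8)}
ZERO_Z = (0,) * NUM_CHANNELS

def transmit(x, z, sink):
    """Received vector y_t(x, z); z[e] is the error added on channel e."""
    sym = [0] * NUM_CHANNELS
    for e in range(NUM_CHANNELS):  # indices are in topological order
        if e in SOURCE_CHANNELS:
            clean = x[SOURCE_CHANNELS.index(e)]
        else:
            clean = sum(sym[u] for u in UPSTREAM[e])
        sym[e] = (clean + z[e]) % 2
    return tuple(sym[e] for e in SINK_INPUTS[sink])

def msg_distance(x1, x2, sink):
    """Smallest weight of z with y_t(x1, 0) = y_t(x2, z)."""
    target = transmit(x1, ZERO_Z, sink)
    for weight in range(NUM_CHANNELS + 1):
        for support in combinations(range(NUM_CHANNELS), weight):
            # Over GF(2) an error vector is determined by its support.
            z = tuple(int(e in support) for e in range(NUM_CHANNELS))
            if transmit(x2, z, sink) == target:
                return weight
    raise ValueError("no error vector maps x2 onto x1 at this sink")

print(msg_distance((0, 0), (1, 0), "t1"), msg_distance((1, 1), (1, 1), "t2"))
```

In this network a single error already confuses any two distinct inputs at either sink, which is expected: the code sends two symbols over a max-flow of two, so there is no redundancy left for error correction.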

Minimum Distance for a Sink Node
For a sink node t, d_min,t = min D_t^msg(x1,x2), where the minimum is over x1, x2 ∈ C with x1 ≠ x2.
Each sink node has a different view of the codebook as each is associated with a different distance measure.
d_min,t is the minimum distance as seen by sink node t.
If the codebook C is linear, d_min,t has the following equivalent definition:
d_min,t = min { w_H(z) : z ∈ A_t },
where A_t = { z : y_t(x,z) = 0 for some nonzero x ∈ C }.
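The point that each sink sees its own minimum distance can be illustrated on a toy network. The sketch below is built on assumptions made only for this example: the source has three outgoing channels, each feeding a relay; sink t1 hears all three relays and sink t2 hears only two; the codebook is the repetition code {000, 111} over GF(2). It computes d_min,t both pairwise and through the linear-codebook form, taking the codeword x in that form to be nonzero.

```python
from itertools import combinations

# Channel indices (chosen for this sketch):
# 0,1,2: s->r0,r1,r2   3,4,5: r0,r1,r2->t1   6,7: r0,r1->t2
NUM_CHANNELS = 8
SOURCE_CHANNELS = (0, 1, 2)
UPSTREAM = {3: (0,), 4: (1,), 5: (2,), 6: (0,), 7: (1,)}
SINK_INPUTS = {"t1": (3, 4, 5), "t2": (6, 7)}
CODEBOOK = [(0, 0, 0), (1, 1, 1)]  # linear codebook in the input space
ZERO_Z = (0,) * NUM_CHANNELS

def transmit(x, z, sink):
    """Received vector y_t(x, z); z[e] is the error added on channel e."""
    sym = [0] * NUM_CHANNELS
    for e in range(NUM_CHANNELS):  # indices are in topological order
        if e in SOURCE_CHANNELS:
            clean = x[SOURCE_CHANNELS.index(e)]
        else:
            clean = sum(sym[u] for u in UPSTREAM[e])
        sym[e] = (clean + z[e]) % 2
    return tuple(sym[e] for e in SINK_INPUTS[sink])

def errors_by_weight():
    """Yield (weight, z) for all binary error vectors, lightest first."""
    for weight in range(NUM_CHANNELS + 1):
        for support in combinations(range(NUM_CHANNELS), weight):
            yield weight, tuple(int(e in support) for e in range(NUM_CHANNELS))

def msg_distance(x1, x2, sink):
    target = transmit(x1, ZERO_Z, sink)
    return next(w for w, z in errors_by_weight()
                if transmit(x2, z, sink) == target)

def d_min_pairwise(sink):
    return min(msg_distance(a, b, sink) for a, b in combinations(CODEBOOK, 2))

def d_min_linear(sink):
    """Lightest z that cancels some nonzero codeword at the sink."""
    zero_y = (0,) * len(SINK_INPUTS[sink])
    nonzero = [x for x in CODEBOOK if any(x)]
    return next(w for w, z in errors_by_weight()
                if any(transmit(x, z, sink) == zero_y for x in nonzero))

for sink in SINK_INPUTS:
    print(sink, d_min_pairwise(sink), d_min_linear(sink))
```

The same codebook has minimum distance 3 at t1 but only 2 at t2, and the two definitions agree at both sinks.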

Error Correction/Detection and Erasure Correction for a Linear Network Code
If d_min,t = 2c+1, then sink node t can
correct c errors,
detect 2c errors,
correct 2c erasures.
Some form of “sphere packing” is at work.
Much more complicated when the network code is nonlinear.

[Figure: sphere packing, with d_min marked.]

Remark on Error Detection
In network coding, some error patterns have no effect on the sink nodes.
These are “invisible” error patterns that cannot be (or do not need to be) detected.
Also called “Byzantine modification detection” (Ho et al, ISIT 04).

Remark on Erasure Correction
In classical algebraic coding, erasure correction has three equivalent interpretations:
(1) a symbol is erased means that it is not available;
(2) a symbol is erased means that the erasure symbol is received;
(3) the error locations are known.
In our context, erasure correction means that the locations of the errors are known by the sink nodes but not the intermediate nodes.

Coding Bounds for Network Codes
Cai & Y (02, 06) obtained the Hamming bound, the Singleton bound and the Gilbert-Varshamov bound for network codes.
These bounds are natural extensions of the bounds for algebraic codes.
Let the base field be GF(q), n = min_t maxflow(t), and d_min = min_t d_min,t.

Upper Bounds
Hamming bound: |C| ≤ q^n / Σ_{i=0}^{τ} binom(n,i) (q-1)^i, where τ = ⌊(d_min - 1)/2⌋.
Singleton bound: |C| ≤ q^{n - d_min + 1}.
The Singleton bound is asymptotically tight, i.e., when q is sufficiently large.

Refined Coding Bounds
Observation: Sink nodes with larger maximum flow can have better error correction capability.
For a given linear network code, refined Hamming bounds and Singleton bounds specific to the individual sink nodes can be obtained.

Refined Hamming Bound
A network code with rank(F_{s,t}) = m_t, codebook C, and d_min,t > 0 satisfies
|C| ≤ q^{m_t} / Σ_{i=0}^{τ_t} binom(m_t,i) (q-1)^i, where τ_t = ⌊(d_min,t - 1)/2⌋,
for all sink nodes t.

Refined Singleton Bound
A network code with rank(F_{s,t}) = m_t, codebook C, and d_min,t > 0 satisfies
|C| ≤ q^{m_t - d_min,t + 1}
for all sink nodes t.

Remark
Note that m_t ≤ maxflow(t) for all sink nodes t.
Thus the refined Hamming bounds imply the Hamming bound, and the refined Singleton bounds imply the Singleton bound.
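A numeric comparison shows how the per-sink bounds can be tighter. The sketch below assumes the usual forms of the two bounds, |C| ≤ q^m / Σ_{i=0}^{τ} binom(m,i)(q-1)^i with τ = ⌊(d-1)/2⌋ and |C| ≤ q^{m-d+1}, and uses made-up per-sink values with m_t taken equal to maxflow(t). None of the numbers come from the slides.

```python
from math import comb

def hamming_bound(q, m, d):
    """Largest integer |C| allowed by the Hamming-type bound (assumed form)."""
    tau = (d - 1) // 2
    return q**m // sum(comb(m, i) * (q - 1)**i for i in range(tau + 1))

def singleton_bound(q, m, d):
    """|C| allowed by the Singleton-type bound (assumed form)."""
    return q ** (m - d + 1)

Q = 5
# Hypothetical per-sink values (m_t, d_min,t), for illustration only.
SINKS = {"t1": (4, 2), "t2": (6, 5)}

# Refined bounds: every sink must be satisfied, so take the minimum.
refined_hamming = min(hamming_bound(Q, m, d) for m, d in SINKS.values())
refined_singleton = min(singleton_bound(Q, m, d) for m, d in SINKS.values())

# Unrefined bounds use n = min_t maxflow(t) and d_min = min_t d_min,t.
n = min(m for m, _ in SINKS.values())
d_min = min(d for _, d in SINKS.values())
plain_hamming = hamming_bound(Q, n, d_min)
plain_singleton = singleton_bound(Q, n, d_min)

print("Hamming:  ", refined_hamming, "<=", plain_hamming)
print("Singleton:", refined_singleton, "<=", plain_singleton)
```

In this made-up case the sink with the larger flow also has the larger distance, and it is that sink, not the bottleneck sink, that limits the codebook size.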

Tightness of the Refined Singleton Bounds
These bounds are shown to be asymptotically tight for linear network codes by construction, i.e., it is possible to construct a codebook that achieves tightness of the individual bound at every sink node t for any given linear network code, provided that q is sufficiently large.
This implies that for large base fields, only linear transformations need to be performed at the intermediate nodes! No decoding needed.

Construction of Network Codes that Achieve the Refined Singleton Bounds
Deterministic algorithms:
Alg1: Yang, Ngai and Y (ISIT 07)
Alg2: Matsumoto (IEICE, 07) obtained an algorithm based on robust network codes.
Alg3: Yang and Y (ITW, Bergen 07)
All these algorithms have almost the same complexity in terms of the field size requirement and time complexity.
These algorithms imply that when q is very large, network codes satisfying these bounds can be constructed randomly with high probability.

Gilbert Bound
Let n_s be the outgoing degree of source node s, and consider, for each sink node t, the d-ball about x with respect to the metric D_t^msg.
Given a network code, let |C|_max be the maximum possible size of the codebook such that d_min,t ≥ d_t for each sink node t.
The Gilbert bound is a lower bound on |C|_max in terms of q, n_s, and the sizes of these balls.