Autotuning sparse matrix kernels

560 likes | 749 Views

Autotuning sparse matrix kernels. Richard Vuduc Center for Applied Scientific Computing (CASC) Lawrence Livermore National Laboratory April 2, 2007. Riddle: Who am I?. I am a Big Repository Of useful And useless Facts alike. Why “autotune” sparse kernels?.

Autotuning sparse matrix kernels

E N D

Presentation Transcript

Autotuning sparse matrix kernels Richard Vuduc Center for Applied Scientific Computing (CASC) Lawrence Livermore National Laboratory April 2, 2007

Riddle: Who am I? I am a Big Repository Of useful And useless Facts alike. View from space

Why “autotune” sparse kernels? • Sparse kernels abound, but usually perform poorly • Physical & economic models, PageRank, … • Sparse matrix-vector multiply (SpMV) <= 10% peak, decreasing • Performance challenges • Indirect, irregular memory access • Low computational intensity vs. dense linear algebra • Depends on matrix (run-time) and machine • Claim: Need automatic performance tuning • 2 speedup from tuning, will increase • Manual tuning is difficult, getting harder • Tune target app, input, machine using automated experiments View from space

This talk: Key ideas and conclusions • OSKI tunes sparse kernels automatically • Off-line benchmarking + empirical run-time models • Exploit structure aggressively • SpMV: 4 speedups, 31% peak • Higher-level kernels: ATA·x, Ak·x, … • Application impact • Autotuning compiler? (very early-stage work) • Different talks: Other work in high-perf. computing (HPC) • Analytical and statistical performance modeling • Compiler-based domain-specific optimization • Parallel debugging using static and dynamic analysis View from space

Outline • A case for sparse kernel autotuning • Trends? • Why tune? • OSKI • Autotuning compiler • Summary and future directions A case for sparse kernel autotuning

Irregular, indirect: x[ind[…]] “Regularize?” Dominant cost: Compress? SpMV crash course:Compressed Sparse Row (CSR) storage • Matrix-vector multiply: y = A*x • for all A(i, j): y(i) = y(i) + A(i, j) * x(j) A case for sparse kernel autotuning

Experiment: How hard is SpMV tuning? • Exploit 88 blocks • Compress data • Unroll block multiplies • Regularize accesses • As rc , speed A case for sparse kernel autotuning

Mflop/s (31.1%) Best: 42 Reference Mflop/s (7.6%) Speedups on Itanium 2: The need for search A case for sparse kernel autotuning

SpMV Performance—raefsky3 A case for sparse kernel autotuning

SpMV Performance—raefsky3 A case for sparse kernel autotuning

Better, worse, or about the same? A case for sparse kernel autotuning

* Reference improves * * Best possible worsens slightly * Better, worse, or about the same?Itanium 2, 900 MHz 1.3 GHz A case for sparse kernel autotuning

* Reference worsens! * * Relative importance of tuning increases * Better, worse, or about the same?Power4 Power5 A case for sparse kernel autotuning

* Reference & best improve; relative speedup improves (~1.4 to 1.6) * * Best decreases from 11% to 9.6% of peak * Better, worse, or about the same?Pentium M Core 2 Duo (1-core) A case for sparse kernel autotuning

More complex structures in practice • Example: 33 blocking • Logical grid of 33 cells A case for sparse kernel autotuning

Extra work can improve efficiency! • Example: 33 blocking • Logical grid of 33 cells • Fill-in explicit zeros • Unroll 3x3 block multiplies • “Fill ratio” = 1.5 • On Pentium III: 1.5 • i.e., 2/3 time A case for sparse kernel autotuning

Trends: My predictions from 2003 • Need for “autotuning” will increase over time • So kindly approve my dissertation topic • Example: SpMV, 1987 to present • Untuned: 10% of peak or less, decreasing • Tuned: 2 speedup, increasing over time • Tuning is getting harder (qualitative) • More complex machines & workloads • Parallelism A case for sparse kernel autotuning

Trends in uniprocessor SpMV performance (Mflop/s), pre-2004 A case for sparse kernel autotuning

Trends in uniprocessor SpMV performance (fraction of peak) A case for sparse kernel autotuning

Summary: A case for sparse kernel autotuning • “Trends” for SpMV • 10% of peak, decreasing • 2x speedup, increasing • No guarantees with new generation systems • Manual tuning is “hard” • Sparse differs from dense • Not LINPACK! • Data structure overheads • Indirect, irregular memory access • Run-time dependence (i.e., matrix) A case for sparse kernel autotuning

Outline • A case for sparse kernel autotuning • OSKI • Tuning is hard—how to automate? • Autotuning compiler • Summary and future directions

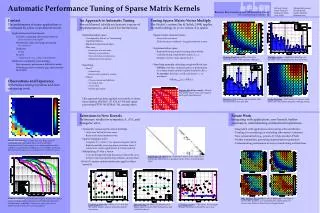

Basic approach to autotuning • High-level idea: Given kernel, machine… • Identify “space” of candidate implementations • Generate implementations in the space • Choose (search for) fastest by modeling and/or experiments • Example: Dense matrix multiply • Space = {Impl(R, C)}, where R x C is cache block size • Measure time to run Impl(R, C) for all R, C • Perform this expensive search once per machine • Successes: ATLAS/PHiPAC, FFTW, SPIRAL, … • SpMV: Depends on run-time input (matrix)! The view from space

OSKI: Optimized Sparse Kernel Interface • Autotuned kernels for user’s matrix & machine • a la PHiPAC/ATLAS, FFTW, SPIRAL, … • BLAS-style interface: mat-vec (SpMV), tri. solve (TrSV), … • Hides complexity of run-time tuning • Includes fast locality-aware kernels: ATA·x, Ak·x, … • Fast in practice • Standard SpMV < 10% peak, vs. up to 31% with OSKI • Up to 4 faster SpMV, 1.8 triangular solve, 4xATA·x, … • For “advanced” users & solver library writers • OSKI-PETSc; Trilinos (Heroux) • Adopted by ClearShape, Inc. for shipping product (2 speedup) OSKI

1. Build for Target Arch. 2. Benchmark Workload from program monitoring History Matrix Generated code variants Benchmark data 1. Evaluate Models Heuristic models 2. Select Data Struct. & Code To user: Matrix handle for kernel calls How OSKI tunes (Overview) Application Run-Time Library Install-Time (offline) OSKI

Heuristic model example: Select block size • Idea: Hybrid off-line / run-time model • Characterize machine with off-line benchmark • Precompute Mflops(r, c) using dense matrix for all r, c • Once per machine • Estimate matrix properties at run-time • Sample A to estimate Fill(r, c) • Run-time “search” • Select r, c to maximize Mflops(r, c) / Fill(r, c) • Run-time costs ~ 40 SpMVs • 80%+ = time to convert to new r c format OSKI

Accuracy of the Tuning Heuristics (1/4) DGEMV OSKI NOTE: “Fair” flops used (ops on explicit zeros not counted as “work”)

Accuracy of the Tuning Heuristics (2/4) DGEMV OSKI NOTE: “Fair” flops used (ops on explicit zeros not counted as “work”)

Tunable optimization techniques • Optimizations for SpMV • Register blocking (RB): up to 4 over CSR • Variable block splitting: 2.1 over CSR, 1.8 over RB • Diagonals: 2 over CSR • Reordering to create dense structure + splitting: 2 over CSR • Symmetry: 2.8 over CSR, 2.6 over RB • Cache blocking: 3 over CSR • Multiple vectors (SpMM): 7 over CSR • And combinations… • Sparse triangular solve • Hybrid sparse/dense data structure: 1.8 over CSR • Higher-level kernels • AAT·x or ATA·x: 4 over CSR, 1.8 over RB • A2·x: 2 over CSR, 1.5 over RB OSKI

Structural splitting for complex patterns • Idea: Split A = A1 + A2 + …, and tune Ai independently • Sample to detect “canonical” structures • Saves time and/or storage (avoid fill) The view from space

Example: Variable Block Row (Matrix #12) 2.1 over CSR 1.8 over RB The view from space

Example: Row-segmented diagonals 2 over CSR The view from space

Solve Tx = b for x, T triangular Raefsky4 (structural problem) + SuperLU + colmmd N=19779, nnz=12.6 M Dense trailing triangle: dim=2268, 20% of total nz Can be as high as 90+%! Dense sub-triangles for triangular solve The view from space

dot product “axpy” Cache optimizations for AAT·x • Idea: Interleave multiplication by A, AT • Combine with register optimizations: ai = r c block row The view from space

OSKI tunes for workloads • Bi-conjugate gradients - equal mix of A·x and AT·y • 31: A·x, AT·y = 1053, 343 Mflop/s 517 Mflop/s • 33: A·x, AT·y = 806, 826 Mflop/s 816 Mflop/s • Higher-level operation - (A·x, AT·y) kernel • 31: 757 Mflop/s • 33: 1400 Mflop/s • Workload tuning • Evaluate weighted sums of empirical models • Dynamic programming to evaluate alternatives OSKI

Matrix powers kernel • Idea: Serial sparse tiling (Strout, et al.) • Extend for arbitrary A, combine other techniques (e.g., blocking) • Compute dependences, layer new data structure on (B)CSR • Matrix powers (Ak·x) with data structure transformations • A2·x: up to 2 faster • New latency-tolerant solvers? (Hoemmen’s thesis at Berkeley) The view from space

t3 t1 y1 y2 y3 y5 t2 y4 t5 x1 x2 x3 x4 x5 t4 Example: A2·x • Let A be 55 tridiagonal • Consider y = A2·x • t = A·x, y = A·t • Nodes: vector elements • Edges: matrix elements aij a11 a11 a12 a12 The view from space

t3 t1 y1 y2 y3 y5 t2 y4 t5 x1 x2 x3 x4 x5 t4 Example: A2·x • Let A be 55 tridiagonal • Consider y = A2·x • t=A·x, y=A·t • Nodes: vector elements • Edges: matrix elements aij • Orange = everything needed to compute y1 • Reuse a11, a12 a11 a11 a12 a12 The view from space

t1 x4 y1 y2 y3 y4 y5 x5 t3 t4 t5 x1 x2 x3 t2 Example: A2·x • Let A be 55 tridiagonal • Consider y = A2·x • t=A·x, y=A·t • Nodes: vector elements • Edges: matrix elements aij • Orange = everything needed to compute y1 • Reuse a11, a12 • Grey = y2, y3 • Reuse a23, a33, a43 The view from space

Examples of OSKI’s early impact • Integrating into major linear solver libraries • PETSc • Trilinos – R&D100 (Heroux) • Early adopter: ClearShape, Inc. • Core product: lithography process simulator • 2 speedup on full simulation after using OSKI • Proof-of-concept: SLAC T3P accelerator design app • SpMV dominates execution time • Symmetry, 22 block structure • 2 speedups OSKI

Outline • A case for sparse kernel autotuning • OSKI • Autotuning compiler • Sparse kernels aren’t my bottleneck… • Summary and future directions

Strengths and limits of the library approach • Strengths • Isolates optimization in the library for portable performance • Exploits domain-specific information aggressively • Handles run-time tuning naturally • Limitations • “Generation Me”: What about my application? • Run-time tuning: run-time overheads • Limited context for optimization (w/o delayed evaluation) • Limited extensibility (fixed interfaces) An autotuning compiler

A framework for performance tuningSource: SciDAC Performance Engineering Research Institute (PERI) An autotuning compiler

OSKI’s place in the tuning framework An autotuning compiler

An empirical tuning framework using ROSE Empirical Tuning Framework using ROSE gprof, HPCtoolkit Open SpeedShop POET Search engine An autotuning compiler

What is ROSE? • Research: Optimize use of high-level abstractions • Lead: Dan Quinlan at LLNL • Target DOE apps • Study application-specific analysis and optimization • Extend traditional compiler optimizations to abstraction use • Performance portability via empirical tuning • Infrastructure: Tool for building source-to-source tools • Full compiler: basic analysis, loop optimizer, OpenMP • Support for C, C++; Fortran 90 in progress • Targets “non-compiler audience” • Open-source An autotuning compiler

A compiler-based autotuning framework • Guiding philosophy • Leverage external stand-alone components • Provide open components and tools for community • User or “system” profiles to collect data and/or analyses • In ROSE • Profile-based analysis to find targets • Extract (“outline”) targets • Make “benchmark” using checkpointing • Generate parameterized target (not yet automated) • Independent search engine performs search An autotuning compiler

Case study: Loop optimizations for SMG2000 • SMG2000, implements semi-coarsening multigrid on structured grids (ASC Purple benchmark) • Residual computation has an SpMV bottleneck • Loop below looks simple but non-trivial to extract for (si = 0; si < NS; ++si) for (k = 0; k < NZ; ++k) for (j = 0; j < NY; ++j) for (i = 0; i < NX; ++i) r[i + j*JR + k*KR] -= A[i + j*JA + k*KA + SA[si]] * x[i + j*JX + k*KX + Sx[si]] An autotuning compiler

Before transformation for (si = 0; si < NS; si++) /* Loop1 */ for (kk = 0; kk < NZ; kk++) { /* Loop2 */ for (jj = 0; jj < NY; jj++) { /* Loop3 */ for (ii = 0; ii < NX; ii++) { /* Loop4 */ r[ii + jj*Jr + kk*Kr] -= A[ii + jj*JA + kk*KA + SA[si]] * x[ii + jj*JA + kk*KA + SA[si]]; } /* Loop4 */ } /* Loop3 */ } /* Loop2 */ } /* Loop1 */ An autotuning compiler

After transformation, including interchange, unrolling, and prefetching for (kk = 0; kk < NZ; kk++) { /* Loop2 */ for (jj = 0; jj < NY; jj++) { /* Loop3 */ for (si = 0; si < NS; si++) { /* Loop1 */ double* rp = r + kk*Kr + jj*Jr; const double* Ap = A + kk*KA + jj*JA + SA[si]; const double* xp = x + kk*Kx + jj*Jx + Sx[si]; for (ii = 0; ii <= NX-3; ii += 3) { /* core Loop4 */ _mm_prefetch (Ap + PFD_A, _MM_HINT_NTA); _mm_prefetch (xp + PFD_X, _MM_HINT_NTA); rp[0] -= Ap[0] * xp[0]; rp[1] -= Ap[1] * xp[1]; rp[2] -= Ap[2] * xp[2]; rp += 3; Ap += 3; xp += 3; } /* core Loop4 */ for ( ; ii < NX; ii++) { /* fringe Loop4 */ rp[0] -= Ap[0] * xp[0]; rp++; Ap++; xp++; } /* fringe Loop4 */ } /* Loop1 */ } /* Loop3 */ } /* Loop2 */ An autotuning compiler