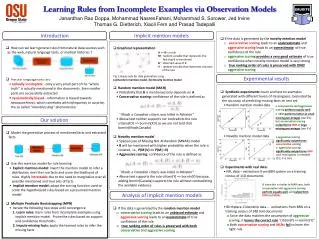

Learning Rules from Incomplete Examples via Observation Models

10 likes | 129 Views

This study explores effective methodologies for learning general rules from incomplete data, specifically focusing on the novel mention models. The research investigates how aggressive and conservative scoring affect the estimation of true confidence in rules. Notably, aggressive scoring provides a more reliable estimate of true confidence when the data is well-aligned with the novelty mention model. Through experiments with synthetic and real datasets, the paper examines the performance of various mention models and their implications for rule learning in contexts marked by data incompleteness.

Learning Rules from Incomplete Examples via Observation Models

E N D

Presentation Transcript

Learning Rules from Incomplete Examples via Observation Models Janardhan Rao Doppa, Mohammad NasresFahani, Mohammad S. Sorower, Jed Irvine Thomas G. Dietterich, Xiaoli Fern and Prasad Tadepalli • If the data is generated by the novelty mention model • conservative scoring leads to an underestimateand aggressive scoring leads to an overestimate of true confidence of the rule • aggressive scoring provides a very good estimate of true confidence when novelty mention model is very strong • true ranking order of rules is preserved with ONLY aggressive scoring Introduction Implicit mention models • How can we learn general rules from natural data sources such as the web, natural language texts, or medical histories ? • Natural language texts are • radically incomplete - only a very small part of the “whole truth” is actually mentioned in the documents. Even smaller parts are successfully extracted • systematically biased -information is biased towards newsworthiness, which correlates with infrequency or surprise, the so called “man bites dog” phenomenon • Graphical representation • Random mention model (MAR) • Probability that B is mentioned only depends on A • Conservative scoring: confidence of the rule is defined as • ``Khadr, a Canadian citizen, was killed in Pakistan“ • Above text neither supports nor contradicts the rule citizen(X,Y) => borinIn(X,Y) as we are not told that bornIn(Khadr,Canada) • Novelty mention model • Special case of Missing Not At Random (MNAR) model • B will be mentioned with higher probability when the rule is violated, i.e., P(M|V) >> P(M|¬V) • Aggressive scoring: confidence of the rule is defined as • ``Khadr, a Canadian citizen, was killed in Pakistan“ • Above text supports the rule citizen(Y) => bornIn(Y) because, adding bornIn(Canada) supports the rule without contradicting the available evidence A => B is a rule M : random variable that represents the fact that B is mentioned B’ : observed value of B V : random variable that represents violation of the rule Fig 1: Bayes nets for data generation using (a) Random mention model, (b) Novelty mention model Experimental results • Synthetic experiments: learn and test on examples generated with different levels of missingness. Evaluated by the accuracy of predicting missing facts on test set. • Random mention model data • Novelty mention model data • Experiments with real data: • NFL data – extractions from BBN system on a training corpus of 110 documents • Birthplace-Citizenship data -- extractions from BBN on a training corpus of 248 text documents • Since the data matches the assumption of aggressive scoring, it learns the correct rule “citizen(Y) => bornIn(Y)” • Both conservative scoring and MLNs fail to learn the right rule • conservative and aggressive • scoring perform equally well • SEMperforms better at small • missing percentages (see 0’s), but conservative scoringoutperformsSEM at large missing percentages (see O‘s) Our solution • Model the generative process of mentioned facts and extracted facts • Use this mention model for rule learning • Explicit mention model: invert the mention model to infer a distribution over the true facts and score the likelihood of rules. Highly intractable due to the need to marginalize over all possible mentioned and true sets of facts • Implicit mention model: adapt the scoring function used to score the hypothesized rules based on a presumed mention model • Multiple Predicate Bootstrapping (MPB) • Iterate the following two steps until convergence • Learn rules: learn rules from incomplete examples using implicit mention model. Prune the rules based on support and confidence thresholds. • Impute missing facts: apply the learned rules to infer the missing facts • aggressive scoringsignificantly outperformsconservativescoring • aggressive scoring significantly outperformsSEM until missingness is tolerable (0.2, 0.4, 0.6) • since this is similar to MAR case, both conservative and aggressive scoringperform equally well, and outperform SEM and MLNs Analysis of implicit mention models • If the data is generated by the random mention model • conservative scoringleads to an unbiased estimate and aggressive scoring leads to an overestimate of true confidence of the rule • true ranking order of rules is preserved with both conservative and aggressive scoring