Reinforcement Learning

Reinforcement Learning. 16 January 2009 RG Knowledge Based Systems Hans Kleine Büning. Outline. Motivation Applications Markov Decision Processes Q-learning Examples. How to program a robot to ride a bicycle?. Reinforcement Learning: The Idea.


Presentation Transcript

Reinforcement Learning 16 January 2009 RG Knowledge Based Systems Hans Kleine Büning

Outline • Motivation • Applications • Markov Decision Processes • Q-learning • Examples

How to program a robot to ride a bicycle?

Reinforcement Learning: The Idea • A way of programming agents by reward and punishment without specifying how the task is to be achieved

Learning to Ride a Bicycle [Figure: the environment supplies a state; the learner responds with an action]

Learning to Ride a Bicycle
States:
• Angle of handle bars
• Angular velocity of handle bars
• Angle of bicycle to vertical
• Angular velocity of bicycle to vertical
• Acceleration of angle of bicycle to vertical

Learning to Ride a Bicycle
Actions:
• Torque to be applied to the handle bars
• Displacement of the center of mass from the bicycle’s plane (in cm)

Reward: Is the angle of the bicycle to the vertical greater than 12°?
• Yes: Reward = -1
• No: Reward = 0
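The reward rule on this slide can be sketched as a small function. This is only an illustration: it assumes that the "yes" branch (the bicycle has tilted past 12°) is the one that yields -1, and that the tilt is measured symmetrically to either side. The names `FALL_ANGLE_DEG` and `bicycle_reward` are hypothetical.

```python
FALL_ANGLE_DEG = 12.0  # threshold from the slide


def bicycle_reward(tilt_angle_deg: float) -> int:
    """Return -1 if the bicycle has tilted past the threshold, otherwise 0.

    Assumption: the tilt counts in both directions, so the absolute value
    of the angle is compared against the threshold.
    """
    return -1 if abs(tilt_angle_deg) > FALL_ANGLE_DEG else 0
```

Note that the learner receives no information about how to balance; the only feedback is the penalty once the bicycle has tipped too far.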

Learning To Ride a Bicycle Reinforcement Learning

Reinforcement Learning: Applications
• Board Games
  • TD-Gammon program, based on reinforcement learning, has become a world-class backgammon player
• Mobile Robot Controlling
  • Learning to Drive a Bicycle
  • Navigation
  • Pole-balancing
  • Acrobot
• Sequential Process Controlling
  • Elevator Dispatching

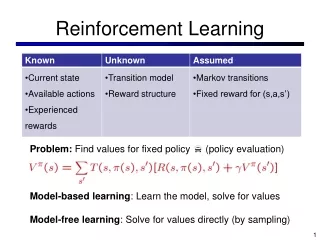

Key Features of Reinforcement Learning
• Learner is not told which actions to take
• Trial and error search
• Possibility of delayed reward:
  • Sacrifice of short-term gains for greater long-term gains
• Explore/Exploit trade-off
• Considers the whole problem of a goal-directed agent interacting with an uncertain environment
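One common way to handle the explore/exploit trade-off is epsilon-greedy action selection. The slides do not name a specific strategy, so this is a swapped-in illustration rather than the presenter's method; `epsilon_greedy`, `action_values`, and `epsilon` are hypothetical names.

```python
import random


def epsilon_greedy(action_values, epsilon, rng=random):
    """Choose an action index from a list of estimated action values.

    With probability epsilon a uniformly random action is tried (explore);
    otherwise the action with the highest current estimate is taken (exploit).
    """
    if rng.random() < epsilon:
        return rng.randrange(len(action_values))  # explore
    # exploit: index of the largest estimate (first one on ties)
    return max(range(len(action_values)), key=action_values.__getitem__)
```

With `epsilon = 0` the learner never explores and may stay stuck on an early, poor estimate; with `epsilon = 1` it never uses what it has learned.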

The Agent-Environment Interaction
• Agent and environment interact at discrete time steps: $t = 0, 1, 2, \ldots$
• Agent observes state at step $t$: $s_t \in S$
• produces action at step $t$: $a_t \in A$
• gets resulting reward: $r_{t+1} \in \mathbb{R}$
• and resulting next state: $s_{t+1} \in S$
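The interaction loop described on this slide can be sketched as follows. The environment `MatchingEnv` is a made-up toy (two states, reward 1 when the action equals the current state), and `run_episode` is a hypothetical helper; neither comes from the presentation.

```python
import random


class MatchingEnv:
    """Hypothetical toy environment with states {0, 1} and actions {0, 1}."""

    def __init__(self, seed=0):
        self.rng = random.Random(seed)
        self.state = 0

    def step(self, action):
        """Apply action a_t and return (r_{t+1}, s_{t+1})."""
        reward = 1.0 if action == self.state else 0.0
        self.state = self.rng.randrange(2)  # next state chosen at random
        return reward, self.state


def run_episode(env, policy, num_steps):
    """Run the loop: observe s_t, choose a_t, receive r_{t+1} and s_{t+1}."""
    state = env.state
    total_reward = 0.0
    trajectory = []
    for _ in range(num_steps):
        action = policy(state)
        reward, next_state = env.step(action)
        trajectory.append((state, action, reward, next_state))
        total_reward += reward
        state = next_state
    return trajectory, total_reward
```

For example, `run_episode(MatchingEnv(), lambda s: s, 5)` runs five steps with a policy that always matches the observed state.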

The Agent’s Goal
• Coarsely, the agent’s goal is to get as much reward as it can over the long run
• A policy $\pi$ is a mapping from states to actions: $\pi(s) = a$
• Reinforcement learning methods specify how the agent changes its policy as a result of experience

Nondeterministic Markov Decision Process [Figure: an action with three possible outcomes, with probabilities P = 0.8, P = 0.1, and P = 0.1]
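A nondeterministic transition with these probabilities can be sketched as below. The figure itself is not recoverable, so the interpretation is an assumption: the intended move succeeds with P = 0.8 and the agent slips to either perpendicular direction with P = 0.1 each, as in a typical grid world. All names are hypothetical.

```python
import random

# Assumed geometry: the direction 90 degrees to the left/right of each move.
LEFT_OF = {"up": "left", "left": "down", "down": "right", "right": "up"}
RIGHT_OF = {v: k for k, v in LEFT_OF.items()}


def transition_distribution(action):
    """Map each possible outcome direction to its probability."""
    return {action: 0.8, LEFT_OF[action]: 0.1, RIGHT_OF[action]: 0.1}


def sample_outcome(action, rng):
    """Draw the direction actually moved, given the intended action."""
    dist = transition_distribution(action)
    outcomes = list(dist)
    weights = [dist[o] for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]
```

The same action can therefore lead to different next states, which is why the agent has to reason about expected rather than guaranteed outcomes.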

Methods

| | Model (reward function and transition probabilities) is known | Model (reward function or transition probabilities) is unknown |
|---|---|---|
| Discrete states | Dynamic Programming | Reinforcement Learning, Monte Carlo Methods |
| Continuous states | Value Function Approximation + Dynamic Programming | Value Function Approximation + Reinforcement Learning |
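For the case of a known model with discrete states, value iteration is one standard dynamic programming method. The sketch below is an illustration under assumptions: the slides do not say which dynamic programming algorithm is meant, the discount factor `gamma` is not introduced in this section, and the three-state model `P` is made up.

```python
def value_iteration(P, gamma=0.9, tol=1e-8):
    """Compute state values for a known model.

    P[s][a] is a list of (probability, next_state, reward) triples.
    A state with no actions is treated as terminal with value 0.
    """
    V = {s: 0.0 for s in P}
    while True:
        delta = 0.0
        for s, actions in P.items():
            if not actions:  # terminal state keeps value 0
                continue
            # Best expected one-step reward plus discounted next-state value
            best = max(
                sum(p * (r + gamma * V[s2]) for p, s2, r in outcomes)
                for outcomes in actions.values()
            )
            delta = max(delta, abs(best - V[s]))
            V[s] = best
        if delta < tol:
            return V


# Hypothetical chain A -> B -> goal, with reward 1 on reaching the goal.
P = {
    "A": {"go": [(1.0, "B", 0.0)]},
    "B": {"go": [(1.0, "goal", 1.0)]},
    "goal": {},
}
V = value_iteration(P)
```

When the model is unknown, the transition triples in `P` are not available, and the values must instead be estimated from sampled experience.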