Cognitive Engineering PSYC 530 Decision Making




Cognitive Engineering PSYC 530 Decision Making Raja Parasuraman

Overview • Normative Models of Decision Making • Cognitive Factors • Heuristics and Biases • Enhancing Decision Making

Decision Making Errors • USS Vincennes incident—shot down Iranian commercial airliner • USS Stark incident—fired on by missiles from Iraqi fighter jets

Medical Decision Making Errors: Therac-25 Radiation Therapy Machine • The Therac-25 is a high-energy radiation machine, but it also provides many low-energy dosages across successive treatment sessions. • Two basic modes: • low-energy mode (electron beam of about 200 rads) • x-ray mode (full 25 million electron volt capacity). In this mode, a thick metal plate is inserted between the beam source and the patient to create an x-ray source. • Nurse intended to provide patient with electron mode, but hit the “x” key for x-ray mode. She realized her mistake, hit the “Up” key, then “Edit”, then typed “e” for electron mode, then “Enter”. • Patient received a fatal radiation dose: a corrupted electron beam at the full 25 million eV (a machine state never before encountered).

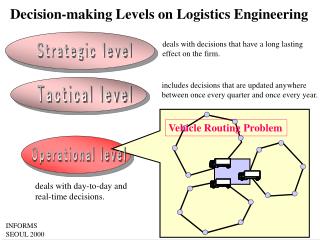

Characteristics of Decision Making • Uncertainty • Informational • Temporal • Spatial • Environmental • Risk • Low risk • High risk • Familiarity and expertise • Time • Tactical (high time pressure) • Strategic (low time pressure)

Decision Making Models • Normative • Signal detection theory • Expected value • Bayesian models • Multi-attribute theory • Information processing • Attention and working memory • Heuristics and biases • “Naturalistic” decision making • Recognition-primed decision making

Leading Decision Researchers • Rev. Thomas Bayes (1702-1761) • Ward Edwards (retired Bayesian) • Baruch Fischhoff, Carnegie Mellon University • Daniel Kahneman, Princeton University (Nobel laureate, 2002) • Amos Tversky (deceased) • Gary Klein

Expected Value (Subjective Expected Utility) • Assign costs and values to choice outcomes • Assign (subjective) probability to States of the World • Compute expected value (subjective expected utility) • Select choice with highest expected value (expected utility)

What is Utility? The Wheel of Fortune • Course grade and subjective utility: A = 1, A- = *, B+ = *, B = *, B- = *, C = *, F = 0 • Standard Gamble (wheel divided into regions v and 1-v) • Most desirable outcome if pointer falls above blue line (v) • Least desirable if it falls below blue line (1-v) • OR • Certain Outcome

Subjective Expected Utilities • List all choice outcomes in order from most to least favorable • Assign utilities for end points (e.g., 1 and 0) • Assign utilities for outcomes in between • Assign prior probabilities for each State of World (hypotheses) • From previous data (e.g., base rate of particular event) • From expert judgment

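The Decision Matrix: States of the World with prior probabilities and values

| Decision option | State of the World A [prior PA] | State of the World B [prior PB] | Expected value |
|---|---|---|---|
| 1 | V1A | V1B | EV1 = PA V1A + PB V1B |
| 2 | V2A | V2B | EV2 = PA V2A + PB V2B |

Further States of the World (C, …) add further columns.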

Computing Expected Value • Case 1: SOW A and B roughly equi-probable (PA = .4, PB = .6) • EV1 or SEU1 = PA V1A + PB V1B = .4(.7) + .6(0) = .28 + 0 = .28 • EV2 or SEU2 = PA V2A + PB V2B = .4(-.3) + .6(.3) = -.12 + .18 = .06 • Option 1 has the higher expected value • Case 2: SOW B more probable a priori (PA = .2, PB = .8) • EV1 or SEU1 = PA V1A + PB V1B = .2(.7) + .8(0) = .14 + 0 = .14 • EV2 or SEU2 = PA V2A + PB V2B = .2(-.3) + .8(.3) = -.06 + .24 = .18 • Option 2 has the higher expected value
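
The arithmetic on this slide can be checked with a short Python sketch. The function names `expected_values` and `best_option` and the constant `VALUES` are illustrative assumptions, not part of the lecture; the priors and values are the ones shown on the slide.

```python
def expected_values(priors, values):
    """Return the expected value (SEU) of each decision option.

    priors: prior probabilities of the states of the world, e.g. [PA, PB]
    values: one row per decision option, one value per state of the world
    """
    return [sum(p * v for p, v in zip(priors, row)) for row in values]


def best_option(priors, values):
    """Return (1-based index of the highest-EV option, list of all EVs)."""
    evs = expected_values(priors, values)
    return evs.index(max(evs)) + 1, evs


# Values from the slide: option 1 -> (V1A, V1B), option 2 -> (V2A, V2B)
VALUES = [[0.7, 0.0], [-0.3, 0.3]]

# Case 1: states A and B roughly equi-probable
case_equiprobable = best_option([0.4, 0.6], VALUES)
# Case 2: state B more probable a priori
case_b_more_probable = best_option([0.2, 0.8], VALUES)

print(case_equiprobable)
print(case_b_more_probable)
```

With the same value matrix, shifting the priors toward state B is enough to flip the preferred option from 1 to 2.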

Multiple Hypotheses and Decision Options • Prior probability vector x Value matrix = EV vector • Best decision option = Highest EV
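
For the two-state, two-option case used earlier, the product can be written out as follows. This is a sketch under an assumed layout: the value matrix $V$ has one row per decision option and one column per state of the world, and $\mathbf{p}$ is the column vector of priors; the bold symbols are notation introduced here, not taken from the slides.

```latex
\mathbf{EV} = V\,\mathbf{p}, \qquad
\begin{pmatrix} EV_1 \\ EV_2 \end{pmatrix}
=
\begin{pmatrix} V_{1A} & V_{1B} \\ V_{2A} & V_{2B} \end{pmatrix}
\begin{pmatrix} P_A \\ P_B \end{pmatrix},
\qquad
\text{best option} = \arg\max_i \, EV_i
```

Each row of the product reproduces the slide formulas, e.g. $EV_1 = P_A V_{1A} + P_B V_{1B}$; additional hypotheses add columns to $V$ and entries to $\mathbf{p}$.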

Objections to Normative Theories (e.g., SEU) • Computational: people are not calculators • However, this criticism is not necessarily valid, as the calculations are not necessarily conscious (cf. expert motor control) • Asymmetry of utility function • Cognitive/affective: asymmetry of risk taking and risk aversion • [Figure: theoretical vs. actual utility function, each plotting utility against value from loss to gain. Losses have more impact!]

Objections to Normative Theories (contd.) • Not another coin-tossing game….! Yes, but this one is on risk taking vs risk aversion • Option A: Guaranteed win of $100. EVA = 1($100) = $100 • Option B: 50% chance of winning $200, 50% chance of winning $0. EVB = .5($200) + .5($0) = $100 • Option C: Guaranteed loss of $100. EVC = 1(-$100) = -$100 • Option D: 50% chance of losing $200, 50% chance of losing $0. EVD = .5(-$200) + .5($0) = -$100 • Most people choose A over B even though the EVs are the same (risk averse) • The same majority choose D over C (risk taking)
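
A minimal Python sketch of the four gambles confirms that expected value alone cannot separate A from B or C from D. The names `expected_value`, `OPTIONS`, and `evs` are illustrative assumptions; the probabilities and payoffs are those on the slide.

```python
def expected_value(outcomes):
    """outcomes: list of (probability, payoff in dollars) pairs."""
    return sum(p * x for p, x in outcomes)


# The four options from the slide
OPTIONS = {
    "A": [(1.0, 100)],               # guaranteed win of $100
    "B": [(0.5, 200), (0.5, 0)],     # 50/50 chance of winning $200 or $0
    "C": [(1.0, -100)],              # guaranteed loss of $100
    "D": [(0.5, -200), (0.5, 0)],    # 50/50 chance of losing $200 or $0
}

evs = {name: expected_value(outcomes) for name, outcomes in OPTIONS.items()}
print(evs)
```

Because the expected values are equal within each pair, the observed preferences (A over B, D over C) have to come from something other than expected value, which is the point of the objection.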

Asymmetry in people’s choices may be related to framing of decision problem (Kahneman & Tversky, 1976) • A new virulent epidemic breaks out in a small town with a population of 10,000 people. An experimental vaccine is developed to combat the virus, but the treatment is not completely effective in all people. • Risk taking decision frame • Option A: If the vaccine is administered, 1,000 people will survive • (Option B: If nothing is done, none will survive) • Risk aversion decision frame • Option C: If the vaccine is administered, 9,000 people will die • (Option D: If nothing is done, none will survive)

Cognitive Factors in Decision Making • Limited cognitive capacities mean “satisficing” (Simon, 1956) rather than optimizing in decision making, which reduces cognitive effort • Perception: faulty estimates of cues (e.g., variance of a set of values) • Selective attention: information cues (a wrong focus may eliminate the correct hypothesis early in the decision making cycle) • Working memory: limits on updating hypotheses as new information arrives • Long-term memory: expectations of particular hypotheses

Selective Attention and Cue Integration • Missing cues • Cue overload • which cues to attend to? • how many cues to integrate over? • c1 + c2 + c3 + … + cN [No improvement for N>2] • Cue salience • Not differentially weighting cues (As if heuristic)

Heuristics • “Cognitive short-cuts” or “rules of thumb” • Often useful way of “bounding” a complex decision problem • Can be potentially misleading because • Factors that influence the decision problem may not affect the reduced decision space of the heuristic • “Extraneous” factors affecting the heuristic decision space influence the decision choice • [Figure: decision-relevant factors (e.g., prior probability) act on the decision problem space; extraneous factors (e.g., cue salience, recent memory) act on the heuristic; both lead to decision outcomes]

Heuristics and Biases • As if • Representativeness • Availability • Anchoring • Overconfidence bias • Confirmation bias • Hindsight bias

Representativeness • Extent to which a set of cues, symptoms, or evidence matches the set that is representative of the hypothesis stored in LTM • Patient matches 4 of 5 symptoms of disease X but only 3 of 5 symptoms of disease Y: use of the representativeness heuristic leads the physician to diagnose X even though X has a much lower prior probability than Y • If it looks like a duck, walks like a duck, quacks like a duck, then it must be a…..dodo!

Availability • The ease with which instances or occurrences of a hypothesis can be brought to mind • Frequent events are recalled more easily (OK, because frequent events are more probable) • But other factors influence memory, e.g., recency (a recent but irrelevant event) • Early and later events recalled better • [Figure: memory accuracy plotted against item position for events 1, 2, 3, …, N, showing the recency effect]

Bayes’ Theorem • Easy form: Odds in favor of hypothesis = Prior odds x Likelihood ratio of data • H1 = Hypothesis • H2 = Alternative hypothesis • D = Data (Diagnostic information) • P(H1) = Prior probability of hypothesis 1 • P(H2) = Prior probability of hypothesis 2 • P(H1|D) = Posterior probability of hypothesis 1 given data

$$\frac{P(H_1 \mid D)}{P(H_2 \mid D)} = \frac{P(H_1)}{P(H_2)} \times \frac{P(D \mid H_1)}{P(D \mid H_2)}$$

Bayes’ Theorem: Worked Example • H1 = Breast cancer • H2 = Normal • D = Positive mammography test • P(H1) = Population base rate of breast cancer = 1% = .01 • P(H2) = 1 - .01 = .99 • Prior odds = P(H1) / P(H2) = .01/.99 ≈ .01 (roughly a 1 in 100 chance) • P(D|H1) = Given cancer (H1), positive mammography test (D) 90% correct = .9 • P(D|H2) = 1 - .9 = .1 (positive test given no cancer) • Likelihood ratio = P(D|H1) / P(D|H2) = .9/.1 = 9 (9 to 1 chance) • Posterior odds of breast cancer = Prior odds x Likelihood ratio = .01 x 9 = .09, or approx. 1 in 10 chance • Most people misestimate the odds as closer to 9 to 1 than to 1 in 10 • Mis-estimation of base rates (representativeness or availability heuristics)
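
The mammography numbers can be run through the odds form of Bayes' theorem in a few lines of Python. This is a minimal sketch; the function names `posterior_odds` and `odds_to_probability` are assumptions introduced here, and the inputs are the slide's base rate (.01), hit rate (.9), and false-positive rate (.1).

```python
def posterior_odds(prior_h1, p_d_given_h1, p_d_given_h2):
    """Odds form of Bayes' theorem: posterior odds = prior odds x likelihood ratio."""
    prior_odds = prior_h1 / (1.0 - prior_h1)        # P(H1) / P(H2)
    likelihood_ratio = p_d_given_h1 / p_d_given_h2  # P(D|H1) / P(D|H2)
    return prior_odds * likelihood_ratio


def odds_to_probability(odds):
    """Convert odds in favor of H1 into a probability of H1."""
    return odds / (1.0 + odds)


odds = posterior_odds(prior_h1=0.01, p_d_given_h1=0.9, p_d_given_h2=0.1)
prob = odds_to_probability(odds)
print(f"posterior odds = {odds:.3f}, posterior probability = {prob:.3f}")
```

The posterior odds come out near .09, i.e. a posterior probability of roughly .08, far below the 9 to 1 that the likelihood ratio alone suggests.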

The Base Rate Fallacy in Action Department of Defense directive: All employees are to be screened using the most reliable technology for detection of deception and possible criminal behaviors. Following competitive bidding to the DOD, a company with a product is selected. The company claims that their technology is 95% accurate: that is, if an employee has engaged in criminal behavior, the test will detect deception 95% of the time; it will give a positive result for an honest person only 5% of the time. Sounds pretty good, doesn’t it? The system seems accurate……

The Base Rate Fallacy in Action (contd.) • Things would be fine if the base rate of deception or criminality was high, but that is not the case. A low base rate leads to inflated estimates of the accuracy of the system for detection. • Likelihood ratio of detection system: .95/.05 = 19 • What this means is that a positive test result is 19 times more likely to come from a person who tried to deceive the tester than from an honest person. • The problem is that the base rate of criminality is likely to be low: assume a base rate of 5%, or P(H1) = .05 • Then P(H2) = 1 - P(H1) = .95 • Then the prior odds = .05/.95 = 1/19 • So, by Bayes’ theorem, the posterior odds of deception given a positive test result = 19 x 1/19 = 1 (even odds)

Assume 10,000 DOD employees are screened • 5% base rate: 500 criminal, 9,500 honest • 95% accurate test result given criminal behavior; 5% positive results for honest employees

| Test result | Criminal | Honest | Total test outcomes |
|---|---|---|---|
| Positive | 475 | 475 | 950 |
| Negative | 25 | 9,025 | 9,050 |
| Total (State of the World) | 500 | 9,500 | 10,000 |

Probability of accurately detecting deception given a positive test result is only 475/950 = 50% (odds = 1), no better than tossing a coin!
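
The screening counts can be reproduced with a small Python sketch. The function name `screening_table` and its dictionary keys are assumptions made for illustration; exact fractions are used so the counts stay whole numbers. The inputs are the slide's figures: 10,000 employees, a 5% base rate, a 95% detection rate, and a 5% false-positive rate.

```python
from fractions import Fraction


def screening_table(n, base_rate, hit_rate, false_alarm_rate):
    """Split n screened people into the four cells of the test-outcome table."""
    criminal = n * base_rate
    honest = n - criminal
    true_pos = criminal * hit_rate           # criminal, positive test
    false_pos = honest * false_alarm_rate    # honest, positive test
    return {
        "true_pos": true_pos,
        "false_neg": criminal - true_pos,    # criminal, negative test
        "false_pos": false_pos,
        "true_neg": honest - false_pos,      # honest, negative test
    }


table = screening_table(
    n=10_000,
    base_rate=Fraction(5, 100),
    hit_rate=Fraction(95, 100),
    false_alarm_rate=Fraction(5, 100),
)

# Probability that a person is criminal given a positive test result
ppv = table["true_pos"] / (table["true_pos"] + table["false_pos"])
print({cell: int(count) for cell, count in table.items()}, float(ppv))
```

Raising `base_rate` in this sketch shows how quickly the value of a positive result improves once the condition being screened for is no longer rare.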

Enhancing Human Decision Making • Training • Practice does not “always make perfect” • Even expert decision makers exhibit biases and heuristics • Unambiguous, timely feedback • Proceduralization • Decision Aids (Automation)

Automation • Augmenting perception: e.g., pictorial representations of data • Selective attention: e.g., highlighting of relevant data sources • Working memory: e.g., keeping track of and aggregating evidence for hypotheses • Decision making: e.g., recommending decision options or courses of action (expert systems) • Caveat: Assumes automation assistance is reliable. Perfect reliability cannot be assured in many cases because of environmental unpredictability. Hence automation benefits may sometimes be offset by costs. These costs differ by stage of information processing: greater for decision making than for perception and attention? Stay tuned for a future lecture.