Discrete Probability Models

This material explores the concept of independence in probability through conditional probabilities, emphasizing its significance in statistical inference and decision-making. It discusses how two events A and B are independent when their joint probability equals the product of their individual probabilities. The text further explains the extension of this concept to multiple events, introducing mutual independence. Additionally, it illustrates the application of Bayesian inference, particularly in legal contexts, highlighting how prior probabilities can be revised in light of new evidence, such as DNA matches.


Presentation Transcript

Discrete Probability Models James B. Elsner Department of Geography, Florida State University Some material based on notes from a one-day short course on Bayesian modeling and prediction given by David Draper (http://www.ams.ucsc.edu/~draper)

Review: Conditional probabilities provide information about the relationships among events. In particular, the independence or non-independence of events is of general interest for statistical inference and decision making. Two events A and B are said to be independent if their joint probability is equal to the product of their marginal probabilities:

P(A and B) = P(A, B) = P(A) P(B)

In terms of conditional probabilities, under independence we can write

P(A|B) = P(A) and P(B|A) = P(B)

Intuitively, two events are independent when knowledge about one does not change the probability of the other.
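The product rule above can be checked by direct enumeration. The following is a minimal sketch, assuming a two-dice sample space that is not part of the original slides; the helper names `prob` and `is_independent` are illustrative only.

```python
from fractions import Fraction
from itertools import product

# Assumed sample space: the 36 equally likely outcomes of two fair dice.
outcomes = list(product(range(1, 7), repeat=2))

def prob(event):
    """Probability of an event, given as a predicate on one outcome."""
    favorable = sum(1 for o in outcomes if event(o))
    return Fraction(favorable, len(outcomes))

def is_independent(a, b):
    """True when P(A and B) equals P(A) * P(B)."""
    joint = prob(lambda o: a(o) and b(o))
    return joint == prob(a) * prob(b)

first_is_even = lambda o: o[0] % 2 == 0      # event A
second_is_six = lambda o: o[1] == 6          # event B
sum_is_twelve = lambda o: o[0] + o[1] == 12  # event D, depends on both dice

print(is_independent(first_is_even, second_is_six))  # True
print(is_independent(first_is_even, sum_is_twelve))  # False
```

Exact fractions are used so that the equality test is not affected by floating-point rounding.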

Sometimes the independence of two events A and B is conditional upon a third event (or assumption, or hypothesis) C. That is, P(A,B|C) = P(A|C)P(B|C), but P(A,B|~C) ≠ P(A|~C)P(B|~C), where ~ means “not”. For example, suppose that you are a marketing manager and that you are interested in C, the event that a rival firm will NOT introduce a new product to compete with your product. You consider the possibility that the rival firm is about to conduct an extensive market survey (event A) and about to hire a large number of new employees (event B). If C is true (the rival will not introduce a product), then you are willing to assume A and B are independent. If C is not true, then you feel that A and B are not independent.

The concept of independence can be extended to more than two events. The K events A1, A2, …, AK are said to be mutually independent if, for all possible combinations of events chosen from the set of K events, the joint probability of the combination is equal to the product of the respective individual, or marginal, probabilities. For example, the three events A1, A2, and A3 are mutually independent if P(A1,A2) = P(A1)P(A2), P(A1,A3) = P(A1)P(A3), P(A2,A3) = P(A2)P(A3), and P(A1,A2,A3) = P(A1)P(A2)P(A3). If the first three of these are satisfied but not the fourth, then the three events are said to be pairwise independent but not mutually independent.
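A standard textbook case of events that are pairwise but not mutually independent can be enumerated directly. This is a minimal sketch; the two-coin setup and the particular events A1, A2, A3 are assumptions chosen for illustration and do not come from the slides.

```python
from fractions import Fraction
from itertools import combinations, product

# Assumed sample space: two tosses of a fair coin.
outcomes = list(product("HT", repeat=2))

events = {
    "A1": lambda o: o[0] == "H",   # first toss is heads
    "A2": lambda o: o[1] == "H",   # second toss is heads
    "A3": lambda o: o[0] == o[1],  # both tosses show the same face
}

def prob(names):
    """Joint probability that every named event occurs."""
    favorable = sum(1 for o in outcomes if all(events[n](o) for n in names))
    return Fraction(favorable, len(outcomes))

def factorizes(names):
    """True when the joint probability equals the product of the marginals."""
    product_of_marginals = Fraction(1)
    for n in names:
        product_of_marginals *= prob([n])
    return prob(names) == product_of_marginals

pairwise = all(factorizes(pair) for pair in combinations(events, 2))
mutual = pairwise and factorizes(list(events))

print(pairwise)  # True
print(mutual)    # False
```

Each pair has joint probability 1/4, which matches the product of the marginals, but all three events occur together with probability 1/4 rather than 1/8.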

Bayes’ theorem is a convenient formula that gives the relationship among various conditional probabilities. If there are just two possible outcomes A and ~A, then we can write Bayes’ theorem as

P(A|B) = P(B|A) P(A) / [P(B|A)P(A) + P(B|~A)P(~A)],

where the denominator on the r.h.s. is the law of total probability applied to P(B):

P(B) = P(A,B) + P(~A,B) = P(B|A)P(A) + P(B|~A)P(~A)

This is the form of Bayes’ rule we used last week with our chess example for finding the probability of having played J given a win.

To illustrate, suppose that the probability that a student passes an exam is 0.80 if the student studies and 0.50 if the student does not study. Suppose that 60 percent of the students in a particular class study for a particular exam. If a student chosen randomly from the class passes the exam, what is the probability that he or she studied?

A = {studied}, B = {passes}

P(A|B) = P(B|A)P(A) / [P(B|A)P(A) + P(B|~A)P(~A)] = 0.80 (0.60) / [0.80 (0.60) + 0.50 (0.40)] = 0.706
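The exam calculation can be reproduced in a few lines. This is a minimal sketch of the two-outcome form of Bayes’ theorem; the function name `posterior` and its parameter names are illustrative, not from the slides.

```python
def posterior(prior_a, lik_b_given_a, lik_b_given_not_a):
    """P(A|B) for two outcomes A and ~A, via the law of total probability."""
    numerator = lik_b_given_a * prior_a
    total = numerator + lik_b_given_not_a * (1 - prior_a)  # this is P(B)
    return numerator / total

# A = {studied}, B = {passes}
p_studied_given_pass = posterior(prior_a=0.60,
                                 lik_b_given_a=0.80,
                                 lik_b_given_not_a=0.50)
print(round(p_studied_given_pass, 3))  # 0.706
```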

In the courtroom
• Bayesian inference can be used to coherently assess additional evidence of guilt in a court setting.
• Let G be the event that the defendant is guilty.
• Let E be the event that the defendant's DNA matches DNA found at the crime scene.
• Let p(E|G) be the probability of seeing event E assuming that the defendant is guilty. (Usually this would be taken to be unity.)
• Let p(G|E) be the probability that the defendant is guilty assuming the DNA match event E.
• Let p(G) be the probability that the defendant is guilty, based on the evidence other than the DNA match.
• Bayesian inference tells us that if we can assign a probability p(G) to the defendant's guilt before we take the DNA evidence into account, then we can revise this probability to the conditional probability p(G|E), since p(G|E) = p(G) p(E|G) / p(E).
• Suppose, on the basis of other evidence, a juror decides that there is a 30% chance that the defendant is guilty. Suppose also that the forensic evidence is that the probability that a person chosen at random would have DNA that matched that at the crime scene was 1 in a million, or 10^-6.
• The event E can occur in two ways. Either the defendant is guilty (with prior probability 0.3) and thus his DNA is present with probability 1, or he is innocent (with prior probability 0.7) and he is unlucky enough to be one of the 1 in a million matching people.
• Thus the juror could coherently revise his opinion to take into account the DNA evidence as follows:
• p(G|E) = (0.3 × 1.0) / (0.3 × 1.0 + 0.7 × 10^-6) = 0.99999766667.
• In the United Kingdom, Bayes' theorem was explained by an expert witness to the jury in the case of Regina versus Dennis John Adams. The case went to Appeal and the Court of Appeal gave their opinion that the use of Bayes' theorem was inappropriate for jurors.
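The juror's revision can also be written in odds form, where posterior odds equal prior odds times the likelihood ratio; this is algebraically the same as the formula for p(G|E) used in the slide. The sketch below is illustrative: the function name `posterior_guilt` and its default arguments are assumptions, with the numbers taken from the example.

```python
def posterior_guilt(prior_guilt, p_match_if_guilty=1.0, p_match_if_innocent=1e-6):
    """p(G|E) computed as posterior odds = prior odds * likelihood ratio."""
    prior_odds = prior_guilt / (1 - prior_guilt)
    likelihood_ratio = p_match_if_guilty / p_match_if_innocent
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1 + posterior_odds)

# Juror's prior of 0.3 and a 1-in-a-million random match probability.
print(f"{posterior_guilt(0.3):.8f}")  # 0.99999767
```

Calling the function with a different `prior_guilt` shows how strongly the result depends on the probability assigned before the DNA evidence is considered.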

Bayes’ theorem can be put into a much more general form. If the J events A1, A2, …, AJ are mutually exclusive (that is, no two events can both occur; if one of them occurs, none of the other J-1 can occur) and collectively exhaustive (that is, one of the events must occur; the J events exhaust all of the possible results), and B is another event (the “given” event), then

P(Aj|B) = P(B|Aj)P(Aj) / [P(B|A1)P(A1) + P(B|A2)P(A2) + … + P(B|AJ)P(AJ)]

Example: Suppose that the director of a pollution control board for a particular area is concerned about the possibility that a certain firm is polluting a river. The director considers three events: the firm is a heavy polluter (A1), a light polluter (A2), or neither (A3). Furthermore, he feels that the odds are 7 to 3 against the firm’s being a heavy polluter, so that P(A1)=0.3; the odds are even that the firm is a light polluter, so that P(A2)=0.5; and the odds are 4 to 1 in favor of the firm’s being a polluter, so that P(A3)=0.2. In order to obtain more information, the director takes a sample of river water 10 miles downstream from the firm in question. The water is visibly polluted and has a strong odor.

Since there are a few other possible sources of pollution for this river, the new information is not conclusive evidence that the firm in question is a polluter. However, the director judges that if the firm is a heavy polluter, the probability that the water would be so polluted is 0.9; if the firm is a light polluter, this probability is 0.6; and if the firm is not a polluter, the probability is only 0.3. Thus, P(B|A1)=0.9, P(B|A2)=0.6, and P(B|A3)=0.3, where B represents the information from the sample of river water.

Applying Bayes’ theorem, let

c = Total Probability = P(B|A1)P(A1) + P(B|A2)P(A2) + P(B|A3)P(A3) = 0.9(0.3) + 0.6(0.5) + 0.3(0.2) = 0.63

Then,

P(A1|B) = P(B|A1)P(A1)/c = 0.90(0.30)/0.63 = 0.429
P(A2|B) = P(B|A2)P(A2)/c = 0.60(0.50)/0.63 = 0.476
P(A3|B) = P(B|A3)P(A3)/c = 0.30(0.20)/0.63 = 0.095

Before the sample of river water is observed, the probabilities for “heavy polluter,” “light polluter,” and “no polluter” are 0.3, 0.5, and 0.2, respectively. After seeing the river, the probabilities change as described above.
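The pollution example generalizes to any set of mutually exclusive and collectively exhaustive hypotheses. The following is a minimal sketch; the function name `bayes_update` and the dictionary keys are illustrative labels for A1, A2, and A3.

```python
def bayes_update(priors, likelihoods):
    """Return (posteriors, c), where c is the total probability of the evidence."""
    joint = {h: likelihoods[h] * priors[h] for h in priors}
    c = sum(joint.values())
    posteriors = {h: j / c for h, j in joint.items()}
    return posteriors, c

priors = {"heavy": 0.3, "light": 0.5, "none": 0.2}        # P(A1), P(A2), P(A3)
likelihoods = {"heavy": 0.9, "light": 0.6, "none": 0.3}   # P(B|A1), P(B|A2), P(B|A3)

posteriors, c = bayes_update(priors, likelihoods)
print(round(c, 2))  # 0.63
for hypothesis, p in posteriors.items():
    print(f"{hypothesis}: {p:.3f}")
# heavy: 0.429
# light: 0.476
# none: 0.095
```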

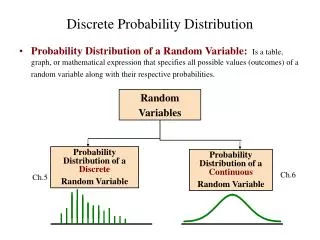

Thus far, Bayes’ theorem has been presented in terms of probabilities of various discrete events. The events were expressed in a qualitative fashion. It is often more convenient to quantify them by assigning numbers to the various events. When such numbers are assigned, the “function” that assigns a number to each elementary event is called a random variable. For instance, if a coin is to be tossed once, the two events are H and T, so one possible assignment of numbers is “1” for H and “0” for T. Sometimes the events in question already have some numerical values, in which case it is convenient to take these values as the values of a random variable. Examples include the number of hurricanes in a season, the sales of a particular product, and the number of vehicles passing over a bridge during a given time period. It is useful to think of a random variable as an uncertain quantity, since probability is a measure of uncertainty. The uncertainty involved in an uncertain quantity refers to uncertainty on the part of the decision maker.

For example, you might consider making a bet regarding the outcome of a football game played 20 years ago. The outcome of the game is clearly not uncertain, but because your memory is vague, it is uncertain to you, so you can consider it a random variable. As another example, a production manager is interested in the proportion of parts that are defective in a newly received shipment. Clearly this proportion is fixed and could be determined with certainty by inspecting the entire shipment. However, such an inspection might be too costly, so the proportion of defective parts should be treated as a random variable.

We make our next steps toward understanding Bayesian analysis and modeling by considering discrete random variables. Discrete random variables are random variables with a finite or countably infinite number of possible values. If the discrete random variable x can assume the J values x1, x2, …, xJ, then the J possible values, together with their probabilities P(x=x1), P(x=x2), …, P(x=xJ), comprise the probability distribution function of the random variable.

For example, if x represents the number of times heads occurs in two tosses of a fair coin, then the probability distribution function in tabular form is

| xi | P(x=xi) | P(x≤xi) |
|----|---------|---------|
| 0 | ¼ | ¼ |
| 1 | ½ | ¾ |
| 2 | ¼ | 1 |

In general, for a random variable with J possible values it is necessary for all J probabilities to be nonnegative and for their sum to equal 1. P(x≤xi) is called the cumulative distribution function (CDF).

Now Bayes’ theorem in terms of the conditional distribution of a random variable is

$$P(x=x_j \mid y) = \frac{P(y \mid x=x_j)\,P(x=x_j)}{\sum_{i=1}^{J} P(y \mid x=x_i)\,P(x=x_i)}$$

Here we see how Bayes’ rule can be used to revise the entire probability distribution, since we can apply it to x=x1, x=x2, …
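The two-toss table can be derived by enumerating the four equally likely outcomes and accumulating the probabilities. This is a minimal sketch; the variable names are illustrative.

```python
from fractions import Fraction
from itertools import accumulate, product

# All equally likely outcomes of two tosses of a fair coin.
tosses = list(product("HT", repeat=2))

values = [0, 1, 2]  # possible numbers of heads
pmf = [Fraction(sum(1 for t in tosses if t.count("H") == x), len(tosses))
       for x in values]
cdf = list(accumulate(pmf))  # running sums give the cumulative probabilities

for x, p, F in zip(values, pmf, cdf):
    print(x, p, F)
# 0 1/4 1/4
# 1 1/2 3/4
# 2 1/4 1
```

The probabilities are nonnegative and sum to 1, so the last CDF entry is exactly 1.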

When the uncertain quantities are obvious, this is written in shorter form
• $P(x_j \mid y_k) = P(y_k \mid x_j)\,P(x_j) \,/\, \sum_{i=1}^{J} P(y_k \mid x_i)\,P(x_i)$
• Statistical problems are generally formulated by defining some population of interest, say
  • The population of items produced by a manufacturing process.
  • The population of licensed drivers in Florida.
  • The population of students at FSU.
• Alternatively, the statistician might envision a process, sometimes called a stochastic process, or data-generating process, which generates the items of interest. For instance, if she is interested in the incoming telephone calls at a switchboard, she can consider these calls to be “generated” by some underlying process. For a Bayesian, this way of thinking can help.
• Whether thinking of the problem in terms of population or process (the terms are interchangeable), the statistician is interested in certain summary measures, or parameters, of the population or process.

Examples:
• The proportion of defective items produced by manufacturing.
• The average weight and height of a manufactured product.
• The proportion of drivers over 50 in FL.
• The mean and variance of the grade-point average of students at FSU.
• The mean rate at which hurricanes affect FL.
• Inferential statements concern one or more unknown parameters of a given population or data-generating process. Since Greek letters are usually used to represent such parameters, it is convenient to replace x with θ, so
• $P(\theta_j \mid y_k) = P(y_k \mid \theta_j)\,P(\theta_j) \,/\, \sum_{i=1}^{J} P(y_k \mid \theta_i)\,P(\theta_i)$
• The two important discrete probability models are the binomial and the Poisson.
• Hurricane activity: Bernoulli process/binomial distribution (handout).
• Predicting hurricanes: Poisson prior (handout).
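The parameter form of Bayes’ theorem can be sketched with a binomial likelihood over a small grid of candidate parameter values. Everything specific below is a hypothetical illustration rather than material from the handouts: the candidate values in `thetas`, the uniform `prior`, and the data `k` and `n` are all assumed.

```python
from math import comb

def binomial_pmf(k, n, theta):
    """Probability of k successes in n independent trials with success probability theta."""
    return comb(n, k) * theta**k * (1 - theta)**(n - k)

thetas = [0.1, 0.3, 0.5]      # hypothetical candidate values of the parameter
prior = [1 / 3, 1 / 3, 1 / 3] # hypothetical uniform prior over the candidates
k, n = 3, 10                  # hypothetical data: 3 successes in 10 trials

# Numerator of Bayes' theorem for each candidate, then normalize by their sum.
joint = [binomial_pmf(k, n, t) * p for t, p in zip(thetas, prior)]
evidence = sum(joint)
posterior = [j / evidence for j in joint]

for t, p in zip(thetas, posterior):
    print(f"theta={t}: {p:.3f}")
```

With these assumed inputs, the candidate closest to the observed proportion k/n receives the largest posterior probability.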