Minimum Information Inference

This talk by Naftali Tishby and Amir Globerson (ICNC, The Hebrew University) addresses the classification problem in machine learning. It contrasts generative and discriminative probabilistic models, with the goal of minimizing generalization error, and motivates learning from empirical means. It then introduces the Minimum Information (MinMI) principle: among all distributions consistent with the observed class-conditional expected values, choose the one with minimum mutual information between observations and classes. The talk covers empirical risk minimization, the MaxEnt approach, a game-theoretic motivation, a generalization bound, joint typicality, the MinMI algorithm, and experiments comparing MinMI with Naïve Bayes and a first-order log-linear model.



Presentation Transcript

Minimum Information Inference. Naftali Tishby, Amir Globerson. ICNC, CSE, The Hebrew University. TAU, Jan. 2, 2005

Talk outline • Classification with probabilistic models: Generative vs. Discriminative • The Minimum Information Principle • Generalization error bounds • Game theoretic motivation • Joint typicality • The MinMI algorithms • Empirical evaluations • Related extensions: SDR and IB

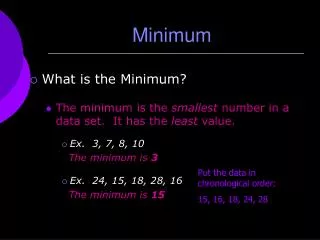

The Classification Problem • Learn how to classify (complex) observations X into (simple) classes Y • Given labeled examples (xi,yi) • Use them to construct a classifier y=g(x) • What is a good classifier? • Denote by p*(x,y) the true underlying law • Want to minimize the generalization error $e(g) = \Pr_{p^*}[g(X) \neq Y] = \sum_{x,y} p^*(x,y)\,\mathbb{1}[g(x) \neq y]$

Problem … The generalization error can't be computed directly, because p*(x,y) is unknown. [Figure: Truth p*(x,y); Observed sample (xi,yi), i=1…n; Learned classifier y=g(x).]

Choosing a classifier • Need to limit the search to some set of rules. If every rule is possible we will surely over-fit. Use a family $g_\theta(x)$ where $\theta$ is a parameter. • Would be nice if the true rule is in $g_\theta(x)$ • How do we choose $\theta$ in $g_\theta(x)$?

Common approach: Empirical Risk Minimization • A reasonable strategy. Find the classifier which minimizes the empirical (sample) error: $\hat\theta = \arg\min_\theta \frac{1}{n}\sum_{i=1}^{n} \mathbb{1}[g_\theta(x_i) \neq y_i]$ • Does not necessarily provide the best generalization, although theoretical bounds exist. • Computationally hard to minimize directly. Many works minimize upper bounds on the error. • Here we focus on a different strategy.
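A minimal sketch of empirical risk minimization, assuming a hypothetical one-dimensional family of threshold rules $g_\theta(x) = \mathbb{1}[x > \theta]$ with labels in {0, 1}. In one dimension the empirical error can be minimized by brute force over candidate thresholds. The names `empirical_error` and `erm_threshold` are illustrative, not from the talk.

```python
def empirical_error(theta, xs, ys):
    """Fraction of the sample misclassified by g_theta(x) = 1 if x > theta else 0."""
    mistakes = sum(1 for x, y in zip(xs, ys) if (1 if x > theta else 0) != y)
    return mistakes / len(xs)


def erm_threshold(xs, ys):
    """Return the candidate threshold with minimal empirical error.

    Candidates: one point below all samples, midpoints between consecutive
    distinct sorted x values, and one point above all samples.
    """
    pts = sorted(set(xs))
    candidates = [pts[0] - 1.0]
    candidates += [(lo + hi) / 2 for lo, hi in zip(pts, pts[1:])]
    candidates.append(pts[-1] + 1.0)
    return min(candidates, key=lambda t: empirical_error(t, xs, ys))


xs = [0.1, 0.4, 0.35, 0.8, 0.9, 0.6]
ys = [0, 0, 0, 1, 1, 1]
theta = erm_threshold(xs, ys)
print(theta, empirical_error(theta, xs, ys))
```

Brute force works here only because the family is one-dimensional; as the slide notes, minimizing the 0/1 error directly is hard in general.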

Probabilistic models for classification • Had we known p*(x,y), the optimal predictor would be $g(x) = \arg\max_y p^*(y|x)$ • But we don't know it. We can try to estimate it. Two general approaches: generative and discriminative.

Generative Models • Assume p(x|y) has some parametric form $p(x|y;\theta_y)$, e.g. a Gaussian. • Each y has a different set of parameters $\theta_y$ • How do we estimate $\theta_y$ and p(y)? Maximum Likelihood!

Generative Models - Estimation • Easy to see that p(y) should be set to the empirical frequency of the classes • The parameters $\theta_y$ are obtained by collecting all x values for class y and computing a maximum likelihood estimate.

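Example: Gaussians • Assume the class conditional distribution is Gaussian, $p(x|y) = \mathcal{N}(x;\mu_y,\sigma_y^2)$ • Then $\mu_y, \sigma_y^2$ are the empirical mean and variance of the samples in class y. [Figure: class conditional Gaussians for y=1 and y=2.]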
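A minimal sketch of the generative Gaussian classifier, assuming scalar x: p(y) is the class frequency, and $\mu_y, \sigma_y^2$ are the maximum likelihood mean and (1/n_y-normalized) variance of class y. Prediction applies the Bayes rule to the estimated model. The function names are illustrative.

```python
import math
from collections import defaultdict


def fit_gaussian_classes(xs, ys):
    """ML estimates per class: prior p(y), mean mu_y, variance var_y (divided by n_y)."""
    groups = defaultdict(list)
    for x, y in zip(xs, ys):
        groups[y].append(x)
    params = {}
    for y, vals in groups.items():
        mu = sum(vals) / len(vals)
        var = sum((v - mu) ** 2 for v in vals) / len(vals)  # needs >= 2 distinct values
        params[y] = (len(vals) / len(xs), mu, var)
    return params


def log_gaussian(x, mu, var):
    """log N(x; mu, var)."""
    return -0.5 * math.log(2 * math.pi * var) - (x - mu) ** 2 / (2 * var)


def predict(params, x):
    """Bayes rule on the fitted model: argmax_y log p(y) + log N(x; mu_y, var_y)."""
    return max(params, key=lambda y: math.log(params[y][0])
               + log_gaussian(x, params[y][1], params[y][2]))


xs = [1.0, 1.2, 0.8, 3.0, 3.4, 2.6]
ys = [1, 1, 1, 2, 2, 2]
params = fit_gaussian_classes(xs, ys)
print(params)
print(predict(params, 1.1), predict(params, 2.9))  # 1 2
```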

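Example: Naïve Bayes • Say X=[X1,…,Xn] is an n dimensional observation • Assume: $p(x|y) = \prod_{i=1}^{n} p(x_i|y)$ • Parameters are p(xi=k|y), calculated by counting how many times xi=k in class y. • These are empirical means of indicator functions: $\hat p(x_i=k|y) = \frac{1}{n_y}\sum_{j:\,y_j=y} \mathbb{1}[x_{j,i}=k]$, where $n_y$ is the number of samples in class y.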
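A minimal sketch of Naïve Bayes estimation by counting, assuming discrete feature values and no smoothing (so unseen value/class pairs get probability 0). Each p(x_i = k | y) is the class-y mean of the indicator 1[x_i = k]. The names `fit_naive_bayes` and `predict` are hypothetical.

```python
from collections import Counter


def fit_naive_bayes(X, ys):
    """Empirical p(y) and p(x_i = k | y) = class-y mean of the indicator 1[x_i = k]."""
    n = len(ys)
    class_counts = Counter(ys)
    prior = {y: c / n for y, c in class_counts.items()}
    counts = Counter()  # counts[(i, k, y)] = number of class-y samples with x_i = k
    for x, y in zip(X, ys):
        for i, k in enumerate(x):
            counts[(i, k, y)] += 1
    cond = {key: c / class_counts[key[2]] for key, c in counts.items()}
    return prior, cond


def predict(prior, cond, x):
    """argmax_y p(y) * prod_i p(x_i | y); unseen (i, k, y) triples get probability 0."""
    def score(y):
        s = prior[y]
        for i, k in enumerate(x):
            s *= cond.get((i, k, y), 0.0)
        return s
    return max(prior, key=score)


X = [(0, 1), (0, 0), (1, 1), (1, 1)]
ys = ["a", "a", "b", "b"]
prior, cond = fit_naive_bayes(X, ys)
print(prior)              # {'a': 0.5, 'b': 0.5}
print(cond[(1, 1, "a")])  # 0.5
print(predict(prior, cond, (0, 1)), predict(prior, cond, (1, 1)))  # a b
```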

Generative Classifiers: Advantages • Sometimes it makes sense to assume a generation process for p(x|y) (e.g. speech or DNA). • Estimation is easy: closed form solutions in many cases (through empirical means). • The parameters can be estimated with relatively high confidence from small samples (e.g. empirical mean and variance). See Ng and Jordan (2001). • Performance is not bad at all.

Discriminative Classifiers • But to classify, we need only p(y|x). Why not estimate it directly? Generative classifiers (implicitly) estimate p(x), which is neither needed nor known. • Assume a parametric form for p(y|x): $p(y|x;\theta)$

Discriminative Models - Estimation • Choose $\theta$ to maximize the conditional likelihood $\sum_{i=1}^{n} \log p(y_i|x_i;\theta)$ • Estimation is usually not in closed form and requires iterative maximization (gradient methods etc.).

Example: logistic regression • Assume p(x|y) are Gaussians with different means and the same variance. Then $p(y|x) = \frac{e^{a_y x + b_y}}{\sum_{y'} e^{a_{y'} x + b_{y'}}}$ • Goal is to estimate $a_y, b_y$ • This is called logistic regression, since the log-odds $\log\frac{p(y|x)}{p(y'|x)}$ is linear in x.
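A minimal sketch of estimating $a_y, b_y$ by plain gradient ascent on the conditional log-likelihood, assuming scalar x. The learning rate and step count are arbitrary illustrative choices; any gradient method would do. Function names are hypothetical.

```python
import math


def class_probs(a, b, x):
    """p(y|x) = exp(a_y x + b_y) / sum_y' exp(a_y' x + b_y'), computed stably."""
    scores = {y: a[y] * x + b[y] for y in a}
    m = max(scores.values())
    exps = {y: math.exp(s - m) for y, s in scores.items()}
    z = sum(exps.values())
    return {y: e / z for y, e in exps.items()}


def log_likelihood(a, b, xs, ys):
    """Conditional log-likelihood sum_i log p(y_i | x_i)."""
    return sum(math.log(class_probs(a, b, x)[y]) for x, y in zip(xs, ys))


def fit_logistic(xs, ys, lr=0.1, steps=2000):
    """Gradient ascent: d/da_y = sum_i (1[y_i = y] - p(y|x_i)) x_i; d/db_y drops the x_i."""
    classes = sorted(set(ys))
    a = {y: 0.0 for y in classes}
    b = {y: 0.0 for y in classes}
    for _ in range(steps):
        grad_a = {y: 0.0 for y in classes}
        grad_b = {y: 0.0 for y in classes}
        for x, yi in zip(xs, ys):
            p = class_probs(a, b, x)
            for y in classes:
                r = (1.0 if y == yi else 0.0) - p[y]
                grad_a[y] += r * x
                grad_b[y] += r
        for y in classes:
            a[y] += lr * grad_a[y] / len(xs)
            b[y] += lr * grad_b[y] / len(xs)
    return a, b


xs = [1.0, 1.2, 0.8, 1.9, 3.0, 3.4, 2.6, 2.1]
ys = [1, 1, 1, 1, 2, 2, 2, 2]
a, b = fit_logistic(xs, ys)
print(class_probs(a, b, 0.5), class_probs(a, b, 3.5))
```

Unlike the Gaussian generative fit, there is no closed form here: the parameters come out of the iterative loop.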

Discriminative Naïve Bayes • Assuming p(x|y) is in the Naïve Bayes class, the discriminative distribution is $p(y|x) = \frac{1}{Z(x)}\,\psi_0(y)\prod_{i=1}^{n}\psi_i(x_i,y)$ • Similar to Naïve Bayes, but the ψ(x,y) functions are not distributions. This is why we need the additional normalization Z. • Also called a conditional first order loglinear model.

Discriminative: Advantages • Estimates only the relevant distributions (important when X is very complex). • Often outperforms generative models for large enough samples (see Ng and Jordan, 2001). • Can be shown to minimize an upper bound on the classification error.

The best of both worlds… • Generative models (often) employ empirical means, which are easy and reliable to estimate. • But they model each class separately, so discrimination is poor. • We would like a discriminative approach based on empirical means.

Learning from Expected Values ("observables", in physics) • Assume we have some "interesting" observables $\phi(x)$ • And we are given their empirical means for the different classes Y, $a(y) = \frac{1}{n_y}\sum_{i:\,y_i=y}\phi(x_i)$, e.g. the first two class-conditional moments, $\phi(x) = (x, x^2)$ • How can we use this information to build a classifier? • Idea: Look for models which yield the observed expectations, but contain no other information.
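A minimal sketch of computing the class-conditional empirical means a(y) and the empirical p(y), assuming scalar x and the illustrative two-moment choice φ(x) = (x, x²). The function names are hypothetical.

```python
def phi(x):
    """Illustrative observables: the first two moments, phi(x) = (x, x^2)."""
    return (x, x * x)


def class_conditional_means(xs, ys):
    """Return a(y), the mean of phi(x_i) over samples with y_i = y, and the empirical p(y)."""
    sums, counts = {}, {}
    for x, y in zip(xs, ys):
        f = phi(x)
        if y not in sums:
            sums[y] = [0.0] * len(f)
            counts[y] = 0
        for j, v in enumerate(f):
            sums[y][j] += v
        counts[y] += 1
    a = {y: tuple(s / counts[y] for s in sums[y]) for y in sums}
    p_y = {y: c / len(xs) for y, c in counts.items()}
    return a, p_y


xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [1, 1, 1, 2, 2, 2]
a, p_y = class_conditional_means(xs, ys)
print(a)
print(p_y)  # {1: 0.5, 2: 0.5}
```

These few numbers per class are all the input the MinMI approach uses.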

The MaxEnt approach • The entropy H(X,Y) is a measure of uncertainty (and typicality!) • Find the distribution with the given empirical means and maximum joint entropy H(X,Y) (Jaynes 57, …) • "Least committed" to the observations, most typical. • Yields "nice" exponential forms: $p(x|y) = \frac{1}{Z(y)}\,e^{\phi(x)\cdot\psi(y)}$
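A minimal sketch of the MaxEnt solution for a single class, assuming a finite support and one observable φ(x) = x. With p(y) fixed, maximizing H(X,Y) decouples into one MaxEnt problem per class, whose solution has the exponential form above. Because the mean increases with ψ, bisection finds it. The support, targets and search interval are illustrative assumptions.

```python
import math


def maxent_with_mean(support, target_mean, lo=-50.0, hi=50.0, iters=200):
    """Max-entropy distribution on a finite support subject to E[X] = target_mean.

    The solution is p(x) = exp(psi * x) / Z(psi). E[X] increases with psi, so psi
    is found by bisection. Assumes min(support) < target_mean < max(support).
    """
    def dist(psi):
        m = max(psi * x for x in support)  # subtract the max for numerical stability
        w = [math.exp(psi * x - m) for x in support]
        z = sum(w)
        return [v / z for v in w]

    def mean(psi):
        return sum(p * x for p, x in zip(dist(psi), support))

    for _ in range(iters):
        mid = (lo + hi) / 2
        if mean(mid) < target_mean:
            lo = mid
        else:
            hi = mid
    psi = (lo + hi) / 2
    return psi, dist(psi)


psi, p = maxent_with_mean([0, 1, 2, 3], 1.5)    # symmetric target: uniform, psi ~ 0
psi2, p2 = maxent_with_mean([0, 1, 2, 3], 2.2)  # larger mean: psi > 0, mass tilts right
print(psi, p)
print(psi2, p2)
```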

Occam's Razor in Classification • Minimal assumptions about X and Y imply independence. • Because X behaves differently for different Y, they cannot be independent. • How can we quantify their level of dependence? [Figure: class conditionals p(x|y=1) and p(x|y=2) along the X axis, with means m1 and m2.]

Mutual Information (Shannon 48) • $I(X;Y) = \sum_{x,y} p(x,y)\log\frac{p(x,y)}{p(x)\,p(y)}$, the measure of the information shared by two variables • X and Y are independent iff I(X;Y)=0 • Bounds the classification error: $e_{\text{Bayes}} \le \frac{1}{2}\left(H(Y) - I(X;Y)\right)$ (Hellman and Raviv 1970). • Why not minimize it subject to the observation constraints?
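A minimal numeric check of the Hellman and Raviv bound on a small joint distribution of our own choosing (binary X and Y, logs in bits). It computes I(X;Y), the Bayes error of the rule argmax_y p(x,y), and the bound ½(H(Y) − I(X;Y)). The helper names are illustrative.

```python
import math


def marginals(joint):
    """Return p(x) and p(y) from a joint {(x, y): p}."""
    px, py = {}, {}
    for (x, y), p in joint.items():
        px[x] = px.get(x, 0.0) + p
        py[y] = py.get(y, 0.0) + p
    return px, py


def mutual_information(joint):
    """I(X;Y) = sum_{x,y} p(x,y) log2 [p(x,y) / (p(x) p(y))], in bits."""
    px, py = marginals(joint)
    return sum(p * math.log2(p / (px[x] * py[y])) for (x, y), p in joint.items() if p > 0)


def entropy(dist):
    """Shannon entropy in bits."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


def bayes_error(joint):
    """Error of the rule argmax_y p(x, y): sum_x [p(x) - max_y p(x, y)]."""
    px, _ = marginals(joint)
    best = {}
    for (x, _y), p in joint.items():
        best[x] = max(best.get(x, 0.0), p)
    return sum(px[x] - best[x] for x in px)


joint = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.1, (1, 1): 0.4}
_, py = marginals(joint)
info = mutual_information(joint)
err = bayes_error(joint)
bound = 0.5 * (entropy(py) - info)
print(info, err, bound)  # err stays below the Hellman-Raviv bound
```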

More on Mutual Information… • I(X;Y) is the unique functional (up to units) that quantifies the notion of information in X about Y in a covariant way. • Mutual information is the generating functional for both source coding (minimization) and channel coding (maximization). • Quantifies (in)dependence in a model-free way • Has a natural multivariate extension, I(X1,…,Xn).

MinMI: Problem Setting • Given a sample (x1,y1),…,(xn,yn) • For each y, calculate the empirical expected value a(y) of φ(X) • Calculate the empirical marginal p(y) • Find the minimum mutual information distribution with the given empirical expected values • The value of the minimum information is precisely the information in the observations!

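MinMI Formulation • The (convex) set of constraints $F(a) = \{p(x,y) : \langle\phi(X)\rangle_{p(x|y)} = a(y),\ p(y) = \hat p(y)\ \forall y\}$ • The information minimizing distribution $p_{MI} = \arg\min_{p \in F(a)} I_p(X;Y)$ • A convex problem. No local minima!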

$p_{MI}(x)$ versus $p(x)$ • The problem is convex given p(y), for any empirical means, without specifying p(x). • The minimization generates an auxiliary, sparse marginal $p_{MI}(x)$: "support alignments".

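Characterizing $p_{MI}$ • The solution form: $p_{MI}(x|y) = p_{MI}(x)\,e^{\phi(x)\cdot\psi(y)+\gamma(y)}$ • Where $\psi(y)$ are Lagrange multipliers and $\gamma(y)$ normalizes each class conditional • Via Bayes: $p_{MI}(y|x) = p(y)\,e^{\phi(x)\cdot\psi(y)+\gamma(y)}$ • Can be used for classification. But how do we find it?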

Careful… I cheated… • What if $p_{MI}(x)=0$? • Then there is no legal $p_{MI}(y|x)$… • But we can still define: $f_{MI}(y|x) = p(y)\,e^{\phi(x)\cdot\psi(y)+\gamma(y)}$ • Can show that it is subnormalized: $\sum_y f_{MI}(y|x) \le 1$ • And use $f_{MI}(y|x)$ for classification! • Solutions are actually very sparse: many $p_{MI}(x)$ are zero ("support assignments").

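A dual formulation • Using convex duality we can show that MinMI can be formulated as $\max_{\psi,\gamma} \sum_y p(y)\left[a(y)\cdot\psi(y) + \gamma(y)\right]$ subject to $\log\sum_y p(y)\,e^{\phi(x)\cdot\psi(y)+\gamma(y)} \le 0$ for all x • This is called a geometric program • The inequalities are strict for x such that $p_{MI}(x)=0$ • Avoids dealing with p(x) at all!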
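A minimal numeric check of the dual on a toy problem of our own: X ∈ {0,…,4}, φ(x) = x, p(y) = 1/2, class means a(1) = 1 and a(2) = 3. The multipliers below are a hand-derived dual solution for this toy problem (natural logs), assuming the dual objective Σ_y p(y)[a(y)ψ(y) + γ(y)] and the constraints log Σ_y p(y) exp(φ(x)ψ(y) + γ(y)) ≤ 0 for every x. The check shows the constraint is tight at x = 0 and x = 4 and strict elsewhere. It also shows that the dual value equals the mutual information of a sparse feasible p(x|y), so by weak duality that distribution attains the minimum.

```python
import math

# Hypothetical toy problem and hand-derived multipliers (nats).
p_y = {1: 0.5, 2: 0.5}
a = {1: 1.0, 2: 3.0}
psi = {1: -math.log(3) / 4, 2: math.log(3) / 4}
gamma = {1: math.log(1.5), 2: math.log(0.5)}


def dual_objective(p_y, a, psi, gamma):
    """sum_y p(y) [a(y) psi(y) + gamma(y)]."""
    return sum(p_y[y] * (a[y] * psi[y] + gamma[y]) for y in p_y)


def constraint(x, p_y, psi, gamma):
    """log sum_y p(y) exp(phi(x) psi(y) + gamma(y)) with phi(x) = x; feasible if <= 0."""
    return math.log(sum(p_y[y] * math.exp(x * psi[y] + gamma[y]) for y in p_y))


def mutual_information(p_y, cond):
    """I(X;Y) in nats for the joint p(y) p(x|y)."""
    p_x = {}
    for y in p_y:
        for x, p in cond[y].items():
            p_x[x] = p_x.get(x, 0.0) + p_y[y] * p
    return sum(p_y[y] * p * math.log(p / p_x[x])
               for y in p_y for x, p in cond[y].items() if p > 0)


# Two primal-feasible choices of p(x|y): both satisfy E[X|y] = a(y).
sparse = {1: {0: 0.75, 4: 0.25}, 2: {0: 0.25, 4: 0.75}}
other = {1: {0: 0.5, 2: 0.5}, 2: {2: 0.5, 4: 0.5}}

print([constraint(x, p_y, psi, gamma) for x in range(5)])
print(dual_objective(p_y, a, psi, gamma),
      mutual_information(p_y, sparse), mutual_information(p_y, other))
```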

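A generalization bound • If the estimated means are equal to their true expected values, we can show that the generalization error satisfies $e(f_{MI}) \le \langle -\log_2 f_{MI}(Y|X)\rangle_{p^*} = H(Y) - I[p_{MI}]$ (in bits). [Figure: the loss $-\log_2 f_{MI}(y|x)$ as a function of $f_{MI}(y|x)$, Y=1.]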
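The step behind a bound of this kind is that, for the argmax rule, the 0/1 loss never exceeds −log2 f(y|x) when f is subnormalized: if the true class is not chosen, f(y_true|x) ≤ f(y_hat|x) and the two sum to at most 1, so f(y_true|x) ≤ 1/2. A minimal randomized sanity check, assuming three classes and randomly drawn subnormalized f (our own illustration, not from the talk):

```python
import math
import random


def losses(f, y_true):
    """0/1 loss of the rule argmax_y f(y|x), and the log loss -log2 f(y_true|x)."""
    y_hat = max(f, key=f.get)
    zero_one = 0.0 if y_hat == y_true else 1.0
    log_loss = math.inf if f[y_true] == 0.0 else -math.log2(f[y_true])
    return zero_one, log_loss


print(losses({1: 0.3, 2: 0.6}, 1))  # misclassified: 0/1 loss 1.0, log loss about 1.74

# Random check: for any subnormalized f (total mass < 1), 0/1 loss <= log loss.
rng = random.Random(0)
checked = 0
for _ in range(10000):
    raw = [rng.random() + 1e-12 for _ in range(3)]
    scale = rng.random() / sum(raw)  # total mass in [0, 1)
    f = {y: r * scale for y, r in enumerate(raw)}
    zero_one, log_loss = losses(f, rng.randrange(3))
    assert zero_one <= log_loss
    checked += 1
```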

A Game Theoretic Interpretation • Among all distributions in F(a), why choose MinMI? • The MinMI classifier minimizes the worst-case loss over the class F(a) • The loss is an upper bound on the generalization error • So MinMI minimizes a worst-case upper bound

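MinMI and Joint Typicality • Given a sequence $x^n = (x_1,\dots,x_n)$, the probability that another, independently drawn sequence $y^n = (y_1,\dots,y_n)$ looks as if it were drawn together with $x^n$ from their joint distribution (i.e., is jointly typical with it) is asymptotically $2^{-nI(X;Y)}$ • This suggests Minimum Mutual Information (MinMI) as a general principle for joint (typical) inference.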

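I-Projections (Csiszár 75, Amari 82,…) • The I-projection of a distribution q(x) on a set F: $\tilde p = \arg\min_{p \in F} D_{KL}[p\,\|\,q]$ • For a set defined by linear constraints, $F = \{p : \langle\phi(X)\rangle_p = a\}$: $\tilde p(x) = \frac{q(x)\,e^{\phi(x)\cdot\psi}}{Z(\psi)}$ • Can be calculated using Generalized Iterative Scaling or gradient methods. Looks familiar?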
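A minimal sketch of an I-projection onto a single linear constraint on a finite set. Instead of Generalized Iterative Scaling it uses bisection on the scalar ψ, which suffices for one scalar feature because the projected mean increases with ψ. The prior q, feature and target are illustrative assumptions, and so are the names.

```python
import math


def i_projection(q, phi, a, iters=100):
    """I-projection of q(x) onto F = {p : E_p[phi(X)] = a} for one scalar feature.

    The minimizer of KL(p || q) over F is p(x) = q(x) exp(psi * phi(x)) / Z(psi).
    E_p[phi] increases with psi, so psi is found by bisection on [-50, 50].
    Assumes a lies strictly between min and max of phi on the support of q.
    """
    support = [x for x in q if q[x] > 0]

    def tilt(psi):
        m = max(psi * phi(x) for x in support)  # subtract the max for stability
        w = {x: q[x] * math.exp(psi * phi(x) - m) for x in support}
        z = sum(w.values())
        return {x: v / z for x, v in w.items()}

    lo, hi = -50.0, 50.0
    for _ in range(iters):
        mid = (lo + hi) / 2
        p = tilt(mid)
        if sum(p[x] * phi(x) for x in support) < a:
            lo = mid
        else:
            hi = mid
    return tilt((lo + hi) / 2)


def kl(p, q):
    """KL(p || q) in nats, for dicts over the same finite set."""
    return sum(pv * math.log(pv / q[x]) for x, pv in p.items() if pv > 0)


q = {0: 0.4, 1: 0.3, 2: 0.2, 3: 0.1}
p = i_projection(q, lambda x: x, 2.0)
print(sum(p[x] * x for x in p))            # close to 2.0
other = {0: 0.0, 1: 0.5, 2: 0.0, 3: 0.5}   # another member of F
print(kl(p, q) < kl(other, q))             # True
```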

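The MinMI Algorithm • Initialize $p_0(x)$ • Iterate: • For all y: set $p_{t+1}(x|y)$ to be the I-projection of $p_t(x)$ on $F_y = \{p(x) : \langle\phi(X)\rangle_p = a(y)\}$ • Marginalize: $p_{t+1}(x) = \sum_y p(y)\,p_{t+1}(x|y)$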
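A minimal sketch of this alternating scheme, assuming a finite X, a single scalar observable φ, and a uniform starting point $p_0(x)$. Each I-projection is computed by bisection on ψ rather than Generalized Iterative Scaling. The classifier uses the subnormalized $f_{MI}(y|x) = p(y)\,e^{\phi(x)\psi(y)+\gamma(y)}$, with γ(y) = −log Z_y taken from the last projection. The toy problem (X ∈ {0,…,4}, class means 1 and 3), the iteration counts and all function names are our own illustrations.

```python
import math


def i_projection(q, phi, a, iters=100):
    """Bisection I-projection of q onto {p : E_p[phi] = a}; returns (p, psi, log_z)."""
    support = [x for x in q if q[x] > 0]

    def tilt(psi):
        m = max(psi * phi(x) for x in support)
        w = {x: q[x] * math.exp(psi * phi(x) - m) for x in support}
        z = sum(w.values())
        return {x: v / z for x, v in w.items()}, m + math.log(z)  # log Z = m + log z

    lo, hi = -50.0, 50.0
    for _ in range(iters):
        mid = (lo + hi) / 2
        p, _ = tilt(mid)
        if sum(p[x] * phi(x) for x in support) < a:
            lo = mid
        else:
            hi = mid
    psi = (lo + hi) / 2
    p, log_z = tilt(psi)
    return p, psi, log_z


def minmi(support, phi, a, p_y, outer_iters=500):
    """Alternate I-projections of p_t(x) on each F_y with re-marginalization."""
    p_x = {x: 1.0 / len(support) for x in support}  # assumed start: uniform p_0(x)
    for _ in range(outer_iters):
        cond, params = {}, {}
        for y in p_y:
            cond[y], psi, log_z = i_projection(p_x, phi, a[y])
            params[y] = (psi, log_z)
        p_x = {x: sum(p_y[y] * cond[y].get(x, 0.0) for y in p_y) for x in support}
    return p_x, cond, params


def mutual_information_bits(p_y, cond, p_x):
    """I(X;Y) in bits for the joint p(y) p(x|y)."""
    return sum(p_y[y] * p * math.log2(p / p_x[x])
               for y in p_y for x, p in cond[y].items() if p > 0)


def f_mi(x, phi, p_y, params):
    """Subnormalized f_MI(y|x) = p(y) exp(psi(y) phi(x) + gamma(y)), gamma(y) = -log Z_y."""
    return {y: p_y[y] * math.exp(params[y][0] * phi(x) - params[y][1]) for y in p_y}


def phi(x):
    return float(x)


support = [0, 1, 2, 3, 4]
a = {1: 1.0, 2: 3.0}
p_y = {1: 0.5, 2: 0.5}
p_x, cond, params = minmi(support, phi, a, p_y)
print({x: round(v, 4) for x, v in p_x.items()})
print(mutual_information_bits(p_y, cond, p_x))
print(f_mi(0, phi, p_y, params), f_mi(4, phi, p_y, params))
```

On this toy problem the marginal should concentrate on the extreme points 0 and 4, which illustrates the sparse support of $p_{MI}(x)$.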

Example: Two moments • Observations are the class-conditional mean and variance. • The MaxEnt solution would make p(x|y) a Gaussian. • MinMI solutions are far from Gaussian and discriminate much better. [Figure: MaxEnt vs. MinMI class-conditional distributions.]

Example: Conditional Marginals • Recall that in Naïve Bayes we used the empirical means of the indicators $\mathbb{1}[X_i = k]$ • We can use these same means as the MinMI constraints.

Naïve Bayes Analogs [Figure: comparison with Naïve Bayes and the discriminative 1st order LogLinear model.]

Experiments • 12 UCI datasets, discrete features only. Used only singleton marginal constraints. • Compared to Naïve Bayes and the 1st order LogLinear model. • Note: Naïve Bayes and MinMI use exactly the same input. LogLinear regression also approximates p(x) and uses more information.

Related ideas • Extract the best observables using minimum MI: Sufficient Dimensionality Reduction (SDR) • Efficient representations of X with respect to Y: The Information Bottleneck approach. • Bounding the information in neural codes from very sparse statistics. • Statistical extension of Support Vector Machines.

Conclusions • MinMI outperforms the discriminative model for small sample sizes • It also outperforms the generative model. • Presented a method for inferring classifiers based on simple sample means. • Unlike generative models, it provides generalization guarantees.