Preference Elicitation in Single and Multiple User Settings


Darius Braziunas, Craig Boutilier, 2005 (Boutilier, Patrascu, Poupart, Schuurmans, 2003, 2005); Nathanael Hyafil, Craig Boutilier, 2006a, 2006b. Department of Computer Science, University of Toronto.


Overview • Preference Elicitation in A.I. • Single User Elicitation • Foundations of Local queries [BB-05] • Bayesian Elicitation [BB-05] • Regret-based Elicitation [BPPS-03,05] • Multi-agent Elicitation (Mechanism Design) • One-Shot Elicitation [HB-06b] • Sequential Mechanisms [HB-06a]

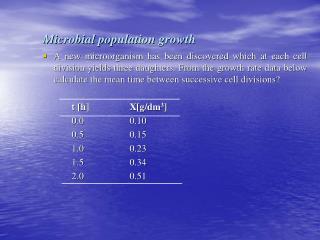

Preference Elicitation in AI Shopping for a Car: Luggage Capacity? Two Door? Cost? Engine Size? Color? Options?

The Preference Bottleneck • Preference elicitation: the process of determining a user’s preferences/utilities to the extent necessary to make a decision on her behalf • Why a bottleneck? • preferences vary widely • large (multiattribute) outcome spaces • quantitative utilities (the “numbers”) difficult to assess

Automated Preference Elicitation • The interesting questions: • decomposition of preferences • what preference info is relevant to the task at hand? • when is the elicitation effort worth the improvement it offers in terms of decision quality? • what decision criterion to use given partial utility info?

Overview • Preference Elicitation in A.I. • Constraint-based Optimization • Factored Utility Models • Types of Uncertainty • Types of Queries • Single User Elicitation • Multi-agent Elicitation (Mechanism Design)

Constraint-based Decision Problems • Constraint-based optimization (CBO): • outcomes over variables X = {X1 … Xn} • constraints C over X spell out feasible decisions • generally compact structure, e.g., X1 & X2 ⇒ ¬X3 • add a utility function u: Dom(X) → R • preferences over configurations

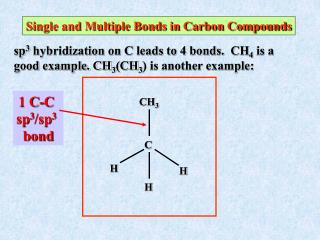

Constraint-based Decision Problems • Must express u compactly like C • generalized additive independence (GAI) • model proposed by Fishburn (1967) [and BG95] • nice generalization of additive linear models • given by graphical model capturing independence

Factored Utilities: GAI Models • Set of K factors fk over subset of vars X[k] • “local” utility for each local configuration • u(abc) = f1(a) + f2(b) + f3(bc) • [Fishburn67] u in this form exists iff lotteries p and q are equally preferred whenever p and q have the same marginals over each X[k] [Figure: graph over A, B, C with factor tables f1(A): 3, 1; f2(B): 3, 1; f3(BC): 12, 2, …]
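A minimal sketch of how a GAI utility is evaluated, assuming binary variables A, B, C. The values 3, 1, 3, 1, 12 and 2 echo the slide's factor tables, but which row each value belongs to is an assumption, and the remaining f3 entries and all names (`FACTORS`, `gai_utility`, `best_outcome`) are hypothetical.

```python
from itertools import product

# Each factor is (scope, table). Values 3/1, 3/1 and 12/2 echo the slide;
# their row assignment and the other two f3 entries are illustrative guesses.
FACTORS = [
    (("A",), {(True,): 3, (False,): 1}),
    (("B",), {(True,): 3, (False,): 1}),
    (("B", "C"), {(True, True): 12, (True, False): 2,
                  (False, True): 5, (False, False): 0}),
]
VARIABLES = ("A", "B", "C")


def gai_utility(outcome, factors=FACTORS):
    """u(x) = sum over factors k of f_k(x[k]), where x[k] is x restricted to the scope."""
    return sum(table[tuple(outcome[v] for v in scope)] for scope, table in factors)


def best_outcome(factors=FACTORS, variables=VARIABLES):
    """Brute-force optimum; only sensible for a handful of variables."""
    outcomes = [dict(zip(variables, values))
                for values in product([True, False], repeat=len(variables))]
    return max(outcomes, key=lambda x: gai_utility(x, factors))


abc = {"A": True, "B": True, "C": True}
print(gai_utility(abc))   # f1(a) + f2(b) + f3(bc)
print(best_outcome())
```

The brute-force search stands in for the IP or variable-elimination step mentioned in the deck; it enumerates every configuration and so does not scale.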

Optimization with GAI Models • Optimize using simple IP (or Var Elim, or…) • number of vars linear in size of GAI model [Figure: graph over A, B, C with factor tables f1(A): 3, 1; f2(B): 3, 1; f3(BC): 12, 2, …]

Difficulties in CBO • Utility elicitation: how do we assess individual user preferences? • need to elicit GAI model structure (independence) • need to elicit (constraints on) GAI parameters • need to make decisions with imprecise parameters

Strict Utility Function Uncertainty • User’s actual utility u unknown • Assume feasible set $F \subseteq U = [0,1]^n$ • allows for unquantified or “strict” uncertainty • e.g., F a set of linear constraints on GAI terms • u(red,2door,280hp) > 0.4 • u(red,2door,280hp) > u(blue,2door,280hp) • How should one make a decision? elicit info?

Strict Uncertainty Representation [Figure: utility function as a GAI network over L, P, N with interval-valued factor entries; f1(L): [7,11] and [2,5]; f2(L,N): [2,4] and [1,2]]

Bayesian Utility Function Uncertainty • User’s actual utility u unknown • Assume density P over $U = [0,1]^n$ • Given belief state P, EU of decision x is: $EU(x, P) = \int_U (p_x \cdot u)\, P(u)\, du$ • Decision making is easy, but elicitation harder? • must assess expected value of information in query

Bayesian Representation [Figure: utility function as a GAI network over L, P, N, with factors f1(L), f2(L,N), …]

Query Types • Comparison queries (is x preferred to x’?) • impose linear constraints on parameters • $\sum_k f_k(x[k]) > \sum_k f_k(x'[k])$ • Interpretation is straightforward
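To make the "linear constraint" reading concrete, here is a small sketch under assumed names: a hypothetical two-factor GAI structure over binary variables, one utility parameter per local configuration, and a helper that turns the answer "x is preferred to x'" into a coefficient vector c with c · w > 0.

```python
from itertools import product

# Hypothetical GAI structure: factor scopes over binary variables A, B, C.
SCOPES = [("A",), ("B", "C")]

# One utility parameter per (factor index, local configuration).
PARAMS = [(k, cfg) for k, scope in enumerate(SCOPES)
          for cfg in product([0, 1], repeat=len(scope))]
INDEX = {param: i for i, param in enumerate(PARAMS)}


def indicator(outcome):
    """0/1 vector phi(x) such that u(x) = phi(x) . w for parameter vector w."""
    phi = [0] * len(PARAMS)
    for k, scope in enumerate(SCOPES):
        phi[INDEX[(k, tuple(outcome[v] for v in scope))]] = 1
    return phi


def comparison_constraint(x, x_prime):
    """Coefficients c such that 'x preferred to x_prime' is the constraint c . w > 0."""
    return [a - b for a, b in zip(indicator(x), indicator(x_prime))]


x = {"A": 1, "B": 1, "C": 1}
x_prime = {"A": 0, "B": 1, "C": 1}
print(comparison_constraint(x, x_prime))
```

Parameters shared by both outcomes cancel, so the constraint only involves the factors on which x and x' differ.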

Query Types • Bound queries (is fk(x[k]) > v?) • response tightens bound on specific utility parameter • can be phrased as a local standard gamble query

Overview • Preference Elicitation in A.I. • Single User Elicitation • Foundations of Local queries • Bayesian Elicitation • Regret-based Elicitation • Multi-agent Elicitation (Mechanism Design) • One-Shot Elicitation • Sequential Mechanisms

Difficulties with Bound Queries • Bound queries focus on local factors • but values cannot be fixed without reference to others! • seemingly “different” local prefs correspond to same u • u(Color,Doors,Power) = u1(Color,Doors) + u2(Doors,Power) • u(red,2door,280hp) = u1(red,2door) + u2(2door,280hp) • u(red,4door,280hp) = u1(red,4door) + u2(4door,280hp) [Figure: example local values 10, 6, 1, 4, 9, 6, 3, 3]
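The non-uniqueness of local values can be illustrated with a short sketch. The numbers below are hypothetical (not the slide's figure values): any function g of the shared variable Doors can be added to u1 and subtracted from u2 without changing the global utility u.

```python
# Hypothetical local values for two factors sharing the variable Doors.
U1 = {("red", "2door"): 10, ("red", "4door"): 1}
U2 = {("2door", "280hp"): 6, ("4door", "280hp"): 4}

# Arbitrary shift that depends only on the shared variable Doors.
G = {"2door": -1, "4door": 2}


def shift_between_factors(u1, u2, g):
    """Move g(doors) from u2 into u1; the sum u1 + u2 is unchanged."""
    u1_shifted = {(c, d): v + g[d] for (c, d), v in u1.items()}
    u2_shifted = {(d, p): v - g[d] for (d, p), v in u2.items()}
    return u1_shifted, u2_shifted


def total(u1, u2, color, doors, power):
    """u(Color, Doors, Power) = u1(Color, Doors) + u2(Doors, Power)."""
    return u1[(color, doors)] + u2[(doors, power)]


V1, V2 = shift_between_factors(U1, U2, G)
for doors in ("2door", "4door"):
    print(doors, total(U1, U2, "red", doors, "280hp"), total(V1, V2, "red", doors, "280hp"))
```

Both decompositions give the same u for every outcome, which is why a bound query on a single local value has no meaning until the other factors are pinned down.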

Local Queries [BB05] • We wish to avoid queries on whole outcomes • can’t ask about purely local outcomes • but can condition on a subset of default values • Conditioning set C(f) for factor fi(Xi): • variables that share factors with Xi • setting default outcomes on C(f) renders Xi independent of remaining variables • enables local calibration of factor values

Local Standard Gamble Queries • Local std. gamble queries • use “best” and “worst” (anchor) local outcomes -- conditioned on default values of conditioning set • bound queries on other parameters relative to these • gives local value function v(x[i]) (e.g., v(ABC)) • Hence we can legitimately ask local queries • But local value functions are not enough: • must calibrate: requires global scaling

Global Scaling • Elicit utilities of anchor outcomes wrt global best and worst outcomes • the 2*m “best” and “worst” outcomes for each factor • these require global std gamble queries (note: same is true for pure additive models)

Bound Query Strategies • Identify conditioning sets Ci for each factor fi • Decide on “default” outcome • For each fi identify top and bottom anchors • e.g., the most and least preferred values of factor i • given default values of Ci • Queries available: • local std gambles: use anchors for each factor, C-sets • global std gambles: gives bounds on anchor utilities

Overview • Preference Elicitation in A.I. • Single User Elicitation • Foundations of Local queries • Bayesian Elicitation • Regret-based Elicitation • Multi-agent Elicitation (Mechanism Design) • One-Shot Elicitation • Sequential Mechanisms

Partial preference information: Bayesian uncertainty • Probability distribution p over utility functions • Maximize expected (expected) utility • MEU decision $x^* = \arg\max_x E_p[u(x)]$ • Consider: • elicitation costs • values of possible decisions • optimal tradeoffs between elicitation effort and improvement in decision quality

Query selection • At each step of elicitation process, we can • obtain more preference information • make or recommend a terminal decision

Bayesian approach: Myopic EVOI [Figure: tree rooted at MEU(p); queries q1, q2, …; responses r1,1, r1,2, r2,1, r2,2, …; leaves MEU(p1,1), MEU(p1,2), MEU(p2,1), MEU(p2,2)]

Expected value of information • $MEU(p) = E_p[u(x^*)]$ • Expected posterior utility: $EPU(q,p) = E_{r|q,p}[MEU(p_r)]$ • Expected value of information of query q: $EVOI(q) = EPU(q,p) - MEU(p)$ [Figure: tree rooted at MEU(p); queries q1, q2, …; responses r1,1, r1,2, r2,1, r2,2, …; leaves MEU(p1,1), MEU(p1,2), MEU(p2,1), MEU(p2,2)]
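A minimal sketch of myopic EVOI, under simplifying assumptions that are not from the slides: the belief p is a finite list of weighted utility hypotheses (rather than a continuous density), queries are yes/no, and responses are noise-free. All function and parameter names are hypothetical.

```python
def meu(belief, utilities):
    """MEU(p) = max over decisions x of E_p[u(x)].

    belief: list of probabilities, one per utility hypothesis.
    utilities: list of dicts mapping decision -> utility, one per hypothesis.
    """
    decisions = utilities[0].keys()
    return max(sum(p * u[d] for p, u in zip(belief, utilities)) for d in decisions)


def evoi(belief, utilities, answers_yes):
    """EVOI(q) = EPU(q, p) - MEU(p) for a binary query q.

    answers_yes[i] is True if hypothesis i would answer 'yes' to the query.
    """
    epu = 0.0
    for response in (True, False):
        mass = sum(p for p, a in zip(belief, answers_yes) if a == response)
        if mass == 0:
            continue  # this response cannot occur under the current belief
        posterior = [p / mass if a == response else 0.0
                     for p, a in zip(belief, answers_yes)]
        epu += mass * meu(posterior, utilities)
    return epu - meu(belief, utilities)


belief = [0.5, 0.5]
utilities = [{"x": 1.0, "y": 0.0}, {"x": 0.0, "y": 1.0}]
print(evoi(belief, utilities, [True, False]))  # query that separates the hypotheses
print(evoi(belief, utilities, [True, True]))   # query that tells us nothing
```

A query whose answer is the same under every hypothesis leaves the posterior equal to the prior, so its EVOI is zero; a query would be asked only if its EVOI exceeds its cost.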

Bayesian approach: Myopic EVOI • Ask query with highest EVOI − cost • [Chajewska et al ’00] • Global standard gamble queries (SGQ) “Is u(oi) > l?” • Multivariate Gaussian distributions over utilities • [Braziunas and Boutilier ’05] • Local SGQ over utility factors • Mixture-of-uniforms distributions over utilities

Local elicitation in GAI models [Braziunas and Boutilier ’05] • Local elicitation procedure • Bayesian uncertainty over local factors • Myopic EVOI query selection • Local comparison query “Is local value of factor setting xi greater than l?” • Binary comparison query • Requires yes/no response • query point l can be optimized analytically

Experiments • Car rental domain: 378 parameters [Boutilier et al. ’03] • 26 variables, 2-9 values each, 13 factors • 2 strategies • Semi-random query • Query factor and local configuration chosen at random • Query point set to the mean of local value function • EVOI query • Search through factors and local configurations • Query point optimized analytically

Experiments [Plot: percentage utility error (w.r.t. true max utility) vs. number of queries]

Bayesian Elicitation: Future Work • GAI structure elicitation and verification • Sequential EVOI • Noisy responses

Overview • Single User Elicitation • Foundations of Local queries • Bayesian Elicitation • Regret-based Elicitation • why MiniMax Regret (MMR) ? • Decision making with MMR • Elicitation with MMR • Multi-agent Elicitation (Mechanism Design)

Minimax Regret: Utility Uncertainty • Regret of x w.r.t. u: • Max regret of x w.r.t. F: • Decision with minimax regret w.r.t. F:
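For reference, a standard way to write these three quantities is sketched below. It is assumed here rather than quoted from the slides; X (the set of feasible decisions) and the names R, MR, MMR are notation introduced for this sketch, while x, u and F follow the deck.

```latex
\begin{align*}
R(x, u)  &= \max_{x' \in X} \; u(x') - u(x)
         && \text{regret of } x \text{ w.r.t. } u \\
MR(x, F) &= \max_{u \in F} \; R(x, u)
         && \text{max regret of } x \text{ w.r.t. } F \\
MMR(F)   &= \min_{x \in X} \; MR(x, F),
\qquad x^{*} = \arg\min_{x \in X} MR(x, F)
         && \text{minimax-regret decision w.r.t. } F
\end{align*}
```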

Why Minimax Regret?* • Appealing decision criterion for strict uncertainty • contrast maximin, etc. • not often used for utility uncertainty [BBB01,HS010] [Figure: which of x, x’ is better under each of u1 … u6]

Why Minimax Regret? • Minimizes regret in presence of adversary • provides a bound on worst-case loss • robustness in the face of utility function uncertainty • In contrast to Bayesian methods: • useful when priors not readily available • can be more tractable; see [CKP00/02, Bou02] • effective elicitation even if priors available [WB03]

Overview • Single User Elicitation • Foundations of Local queries • Bayesian Elicitation • Regret-based Elicitation • why MiniMax Regret (MMR) ? • Decision making with MMR • Elicitation with MMR • Multi-agent Elicitation (Mechanism Design)

Computing Max Regret • Max regret MR(x,F) computed as an IP • number of vars linear in GAI model size • number of (precomputed) constants (i.e., local regret terms) quadratic in GAI model size • $r(x[k], x'[k]) = u^{\uparrow}(x'[k]) - u^{\downarrow}(x[k])$
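A small sketch of the local regret terms, assuming each GAI parameter has an independent interval bound and reading r as "upper bound of x'[k] minus lower bound of x[k]", with r = 0 when the two local configurations coincide (the shared parameter cancels). Brute-force enumeration replaces the IP; the structure, bounds and names are hypothetical.

```python
from itertools import product

# Hypothetical GAI structure over binary variables A, B.
VARIABLES = ("A", "B")
SCOPES = [("A",), ("A", "B")]
# Hypothetical (lower, upper) bounds for every local configuration.
BOUNDS = [
    {(0,): (0.0, 1.0), (1,): (2.0, 3.0)},
    {(0, 0): (0.0, 0.5), (0, 1): (1.0, 4.0), (1, 0): (0.0, 1.0), (1, 1): (0.5, 1.5)},
]
OUTCOMES = [dict(zip(VARIABLES, values)) for values in product([0, 1], repeat=2)]


def local_regret(k, cfg, cfg_prime):
    """r(x[k], x'[k]): upper bound of x'[k] minus lower bound of x[k], or 0 if equal."""
    if cfg == cfg_prime:
        return 0.0
    return BOUNDS[k][cfg_prime][1] - BOUNDS[k][cfg][0]


def pairwise_max_regret(x, x_prime):
    """max over feasible u of u(x') - u(x), as a sum of local regret terms."""
    return sum(
        local_regret(k, tuple(x[v] for v in scope), tuple(x_prime[v] for v in scope))
        for k, scope in enumerate(SCOPES)
    )


def max_regret(x):
    """MR(x, F): the adversary picks the worst alternative x'."""
    return max(pairwise_max_regret(x, x_prime) for x_prime in OUTCOMES)


def minimax_regret_decision():
    """Decision minimizing max regret, by enumeration."""
    return min(OUTCOMES, key=max_regret)


best = minimax_regret_decision()
print(best, max_regret(best))
```

The number of distinct local regret terms is quadratic in the number of local configurations, which matches the slide's count of precomputed constants.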

Minimax Regret in Graphical Models • We convert minimax to min (standard trick) • obtain a MIP with one constraint per feasible config • linearly many vars (in utility model size) • Key question: can we avoid enumerating all x’ ?

Constraint Generation • Very few constraints will be active in solution • Iterative approach: • solve relaxed IP (using a subset of constraints) • solve for maximally violated constraint • if one exists, add it and repeat; otherwise terminate
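The iterative loop can be sketched as follows. This is an illustration under stated assumptions, not the authors' implementation: both the relaxed master problem and the "maximally violated constraint" subproblem are solved by enumeration instead of an IP/MIP, and `pairwise_regret(x, w)` is a hypothetical callable returning the worst-case utility loss of choosing x when the adversary picks w.

```python
def constraint_generation(outcomes, pairwise_regret, tol=1e-9):
    """Minimax regret via constraint generation over adversary choices (witnesses)."""
    witnesses = [outcomes[0]]  # start from an arbitrary single constraint
    while True:
        # Relaxed master: minimize regret against the generated witnesses only.
        x = min(outcomes, key=lambda c: max(pairwise_regret(c, w) for w in witnesses))
        lower = max(pairwise_regret(x, w) for w in witnesses)
        # Subproblem: the maximally violated constraint is the true
        # regret-maximizing witness for the current x.
        w_star = max(outcomes, key=lambda c: pairwise_regret(x, c))
        upper = pairwise_regret(x, w_star)
        if upper <= lower + tol:
            return x, upper, witnesses  # no violated constraint remains
        witnesses.append(w_star)


# Hypothetical regret table R[(x, x')] over three decisions.
R = {
    ("a", "a"): 0, ("a", "b"): 4, ("a", "c"): 1,
    ("b", "a"): 2, ("b", "b"): 0, ("b", "c"): 3,
    ("c", "a"): 1, ("c", "b"): 2, ("c", "c"): 0,
}
x_star, regret, used = constraint_generation(["a", "b", "c"], lambda x, w: R[(x, w)])
print(x_star, regret, used)
```

The relaxed value is a lower bound on minimax regret and the true max regret of the current x is an upper bound, so stopping early still yields a decision with a known regret bound.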

Constraint Generation Performance • Key properties: • aim: graphical structure permits practical solution • convergence (usually very fast, few constraints) • very nice anytime properties • considerable scope for approximation • produces solution x* as well as witness xw

Overview • Single User Elicitation • Foundations of Local queries • Bayesian Elicitation • Regret-based Elicitation • why MiniMax Regret (MMR) ? • Decision making with MMR • Elicitation with MMR • Multi-agent Elicitation (Mechanism Design)

Regret-based Elicitation [Boutilier, Patrascu, Poupart, Schuurmans IJCAI05; AIJ 06] • Minimax optimal solution may not be satisfactory • Improve quality by asking queries • new bounds on utility model parameters • Which queries to ask? • what will reduce regret most quickly? • myopically? sequentially? • Closed form solution seems infeasible • to date we’ve looked only at heuristic elicitation

Elicitation Strategies I • Halve Largest Gap (HLG) • ask if parameter with largest gap > midpoint • MMR(U) ≤ maxgap(U), hence n log(maxgap(U)/ε) queries needed to reduce regret to ε • bound is tight • like polyhedral-based conjoint analysis [THS03] [Figure: interval bounds on f1(a,b) and f2(b,c) parameters]
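A rough sketch of the HLG loop, assuming independent interval bounds per parameter and a simulated user who answers bound queries truthfully. The stopping rule here is "largest gap at most eps", a stand-in for a regret-based test; all names and numbers are hypothetical.

```python
def halve_largest_gap(bounds, answer, eps):
    """Repeatedly query the midpoint of the widest interval.

    bounds: dict mapping parameter -> [lower, upper], updated in place.
    answer(param, value): True if the user's true parameter exceeds value.
    Returns the tightened bounds and the number of queries asked.
    """
    queries = 0
    while True:
        param = max(bounds, key=lambda p: bounds[p][1] - bounds[p][0])
        lower, upper = bounds[param]
        if upper - lower <= eps:
            return bounds, queries  # every gap is now at most eps
        midpoint = (lower + upper) / 2
        if answer(param, midpoint):
            bounds[param][0] = midpoint  # "yes": raise the lower bound
        else:
            bounds[param][1] = midpoint  # "no": lower the upper bound
        queries += 1


# Simulated user with hypothetical true parameter values.
truth = {"f1(a,b)": 0.3, "f2(b,c)": 0.4}
bounds = {"f1(a,b)": [0.0, 1.0], "f2(b,c)": [0.0, 0.5]}
tight, asked = halve_largest_gap(bounds, lambda p, v: truth[p] > v, eps=0.25)
print(tight, asked)
```

Each query halves one interval, so a parameter whose initial gap is g needs about log2(g/eps) queries, which is where the n log(maxgap/ε) count on the slide comes from.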

Elicitation Strategies II • Current Solution (CS) • only ask about parameters of optimal solution x* or regret-maximizing witness xw • intuition: focus on parameters that contribute to regret • reducing u.b. on xw or increasing l.b. on x* helps • use early stopping to get regret bounds (CS-5sec) [Figure: interval bounds on f1(a,b) and f2(b,c) parameters]

Elicitation Strategies III • Optimistic-pessimistic (OP) • query largest-gap parameter in one of: • optimistic solution xo • pessimistic solution xp • Computation: • CS needs minimax optimization • OP needs standard optimization • HLG needs no optimization • Termination: • CS easy • Others ?

Results (Small Random) • 10 vars; < 5 vals • 10 factors, at most 3 vars • Avg 45 trials

Results (Car Rental, Unif) • 26 vars; 61 billion configs • 36 factors, at most 5 vars; 150 parameters • Avg 45 trials

Results (Real Estate, Unif) • 20 vars; 47 million configs • 29 factors, at most 5 vars; 100 parameters • Avg 45 trials
