Empowering Excel Users to Big Data Scientists


Explore challenges and solutions in handling Big Data, enabling interactive exploration, optimizing workflows, promoting collaboration, and managing data provenance. Can we transform a typical Excel/R user into an efficient Big Data Scientist?


Presentation Transcript

It’s Always Been Big Data…!
Minos Garofalakis, Technical University of Crete
http://www.softnet.tuc.gr/~minos/

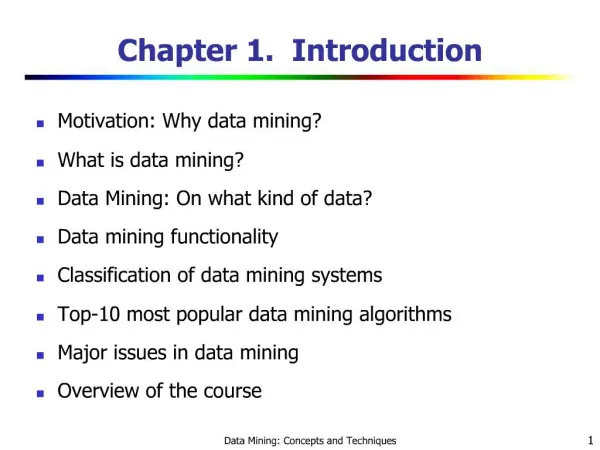

“Big” Depending on Context…
• Grows by Moore’s Law…
• 1st VLDB (1975): Big = millions of data points gathered by the US Census Bureau [Simonson, Alsbrooks, VLDB’75]
• Things have changed since then…
• In general, Big = data that cannot be handled using standalone, standard tools (on a desktop)
• Today, this means using Hadoop/MR clusters, Cloud DBMSs, Supercomputers, …

The Big Data Pipeline
• Several major pain points/challenges at each step
• Throwback to early batch computing of the 1960s!
• No direct manipulation, interactivity, fast response
• Processing is opaque, time consuming, costly
• Typically, using a series of remote VMs
• Different designs => VERY different temporal/financial implications

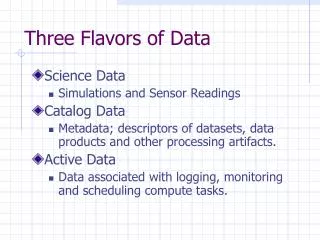

Data Analytics is Exploratory by Nature!
• Can we support interactive exploration and rapid iteration over Big Data?
• Mimic versatility of local file handling with tools like Excel and scripts (e.g., R)
• One approach: Small-footprint Synopses/Sketches for fast approximate answers and visualizations
• Sampling already used (in an ad-hoc manner)
• Much relevant work on approximate query processing (AQP) and streaming
• But, we must handle the Variety dimension
• Both in data types and classes of analytics tasks!
• Another important dimension: Distribution
• LIFT/LEADS/FERARI projects and BD3 Workshop (this Friday!)
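As a rough illustration of the sampling-based synopsis idea on this slide, the sketch below keeps a fixed-size uniform sample of a stream (classic reservoir sampling) and uses it to estimate a mean without holding the full data in memory. It is not taken from the talk; the function names `reservoir_sample` and `approx_mean`, the sample size, and the synthetic data are all assumptions made for the example.

```python
import random
from typing import Iterable, List


def reservoir_sample(stream: Iterable[float], k: int, seed: int = 0) -> List[float]:
    """Return a uniform random sample of up to k items from a stream.

    Hypothetical helper for illustration: one pass, O(k) memory,
    stream length need not be known in advance.
    """
    rng = random.Random(seed)
    sample: List[float] = []
    for i, x in enumerate(stream):
        if i < k:
            # Fill the reservoir with the first k items.
            sample.append(x)
        else:
            # Item i replaces a random slot with probability k / (i + 1).
            j = rng.randint(0, i)  # inclusive on both ends
            if j < k:
                sample[j] = x
    return sample


def approx_mean(sample: List[float]) -> float:
    """Estimate the stream mean from the sample (hypothetical helper)."""
    if not sample:
        raise ValueError("cannot estimate a mean from an empty sample")
    return sum(sample) / len(sample)


if __name__ == "__main__":
    # Synthetic stand-in for a large dataset; an assumption for the demo.
    data = range(100_000)
    synopsis = reservoir_sample(data, k=2_000)
    print("approximate mean:", approx_mean(synopsis))
    print("exact mean:      ", sum(data) / len(data))
```

The synopsis here is a few thousand values regardless of input size, which is what makes interactive, approximate answers plausible; other synopsis types (histograms, sketches) trade generality for tighter guarantees on specific queries.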

Optimization, Collaboration, Provenance
• Can we help users to plan/monitor the monetary and time implications of their design decisions?
• Again, this should be an interactive process
• Can we enable users to collaborate around Big Data?
• Share data sources, scripts, experiences, even data runs
• Work on collaborative mashups/visualization, CSCW
• Can we help users to explore and exploit the provenance and computation history of the data?
• “Institutional memory” on data sources and analyses
• Data synopses/approximation critical to all three…!
• May just be my personal bias speaking…

A Grand Challenge
Can we take a typical Excel/R user and empower them to become a Big Data Scientist?
• For non-data-savvy “citizen scientists”, lack of statistical sophistication is a key problem
• Can lead to poor decisions and results; more “play” than “science”
• Support for fast interactive exploration, workflow optimization, collaboration, and provenance is critical
• Relevant work exists in our community but still lots to be done…