Time Series Analysis

Time Series Analysis. What is Time Series Analysis? The analysis of data organized across units of time. Time series is a basic research design Data for one or more variables is collected for many observations at different time periods Usually regularly spaced May be either

Time Series Analysis

E N D

Presentation Transcript

Time Series Analysis • What is Time Series Analysis? • The analysis of data organized across units of time. • Time series is a basic research design • Data for one or more variables is collected for many observations at different time periods • Usually regularly spaced • May be either • univariate - one variable description • multivariate - causal explanation

Time Series vs. Cross Sectional Designs • It is usually contrasted to cross-sectional designs where the data is organized across a number of similar units • The data is collected at the same time for every observation • Thus: • A data set consisting of 50 states for the year 1998 is a cross-sectional design. • A data set consisting of data for Alabama for 1948 – 1998 is a time series design.

Why time series or cross sections? • Depends on your question • If you wish to explain why one state is different from another, use a cross-sectional design • If you wish to explain why a particular state has changed over time, use a time series design

Time-Series Cross-Sectional Designs • There are techniques for combining the two designs. • Due to concerns for autocorrelation, and estimation, we will examine this design later in the course

Conceptual reasons to consider time series models • Classic regression models assume that all causation is instantaneous. This is clearly suspect. • In addition, behaviors are dynamic - they evolve over time.

What is Time anyway? • Time may be a surrogate measure for other processes • i.e. maturation, • aging, • growth, • inflation, etc. • Many of the processes we are interested in are described in terms of their temporal behavior • Policy impact • Arms races, • growth and decay models, • compound interest or inflation • learning

Why time series? • My personal view is that Time Series models are theoretically fundamentally more important than cross-sectional models. • The models that we are really interested in are those that help us model how systems change across time - vis a vis what they look like at any given snapshot in time. • Statistical tools may often improve their degrees of freedom by using time series methods. (Sometimes this means larger n)

The Nature of Time Series Problems • Please note: Time series problems are theoretical one - they are not simply statistical artifacts. When you have a time series problem, it means some non-random process out there has not been accounted for. • And since there is usually something left out or not measurable, you usually have a time series problem!

A Basic Vocabulary for Time Series • Period • Cycle • Season • Stationarity • Trend • Drift

Periodicity • The Period • A Time Series design is simple to distinguish because of its period. The data set is comprised of measures taken at differing points in time. The unit of the analysis is the period. (i.e. daily, weekly, monthly, quarterly, annual, etc.) • Note that the period defines the discrete time interval over which the data measurement are taken.

Cycle • Uses classic trigonometric functions such as the sine and cosine functions to examine periodicity in the data. • This is the basis for Fourier Series and Spectral Analysis. • Used primarily in economics where they have data series measured over a long period of time with multiple regularly occurring and overlapping cycles. (Rarely used in Political Science, but try the commodity markets, with hog/beef/chicken cycles)

Cycles (cont.) • A simple cyclic or trigonometric function might look like this:

Cycles (cont.) • You could estimate a model like • But why would you? • What theory do you have that suggests that political data follow such trigonometric periodicity? • Are wars cyclic? Sunspots? • Would elections be cyclic?

Seasonality • Season • Sometimes, when a relationship or a data series has variation related to the unit of time, we often refer to this as seasonality. • (e.g. Christmas sales, January tax revenues.) • This most often occurs when we have discrete data. • Seasonality is thus the discrete data equivalent of the continuous data assumed by spectral analysis

Regression with Seasonal Effects • Estimating a regression model with seasonal behavior in the dependent variable is relatively easy: • Where S1 is a seasonal dummy. • S1 is coded 1 when the observation occurs during that season, and 0 otherwise.)

Estimating Seasonality • Like all dummy variable models, at least one Season (Category) must be excluded from the estimation • The intercept represents the mean of the excluded season(s). • Failure to exclude one of the seasonal dummies will result in: • A seasonal variable being dropped, or • biased estimation at best and in all likelihood error messages about singular matrices or extreme multicolinearity. • The slope coefficients represent the change from the intercept. • t-tests are tests of whether the seasons are different from the intercept, not just different from 0.

Estimating Seasonality • Estimating regression models with seasonality is a popular and valuable method in many circumstances. • (i.e. estimating tax revenues)

Stationarity • If a time series is stationary it means that the data fluctuates about a mean or constant level. • Many time series models assume equilibrium processes.

Non-Stationarity • Non-stationary data does not fluctuate about a mean. It may trend away or drift away from the mean

Trend • Trend indicates that the data increases or decreases regularly.

Drift • Drift means that the series ‘drifts’ away from its mean, but then drifts back at some later point.

Variance Stationarity • Variance Stationarity means that the variation in the data about the mean (or trend line) is constant across time. • Non-stationary variance would have higher variation at one end of the series or the other.

For instance Oxygen 18 isotope levels in Benthic Foraminifera

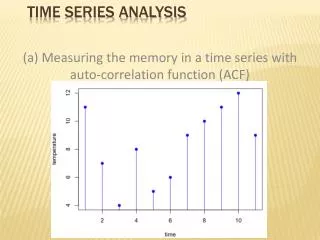

A Basic Vocabulary for Time Series • Random Process/ Stochastic Process • The data is completely random. It has no temporal regularity at all.

A Basic Vocabulary for Time Series • Trend • Means that the data increases or decreases over time. • Simplest form of time series analysis • Uses a variable as a counter {Xi = 1, 2, 3, .. n} and regresses the variable of interest on the counter. • This gives an estimate of the periodic increase/decrease in the variable (i.e. the monthly increase in GDP) • Problems occur for several reasons: • The first and last observations are the most statistically influential. • Very susceptible to problems of autocorrelation

Random- walk • If the data is generated by We call it a random-walk. • If B is equal to 0.0, the data is a pure random walk. • If B is non-zero, then the series drifts away from the mean for periods of time, but may return (hence often called drift, or drift non-stationarity).

Implications of a random walk • Random walks imply that memory is infinite. • Stocks are often said to follow random walks • And if so, they are largely unpredicatable!

Unit Root tests • A number of tests have emerged to test whether a data series is a Trend Stationary Process (TSP) or a Difference Stationary Process (DSP). Among them, the Dickey-Fuller test. (More on this in a few weeks) • The current literature seems to suggest that regression on differences is safer than regression on levels, due to the implications of TSP and DSP. We will return to this later.

More Unit Roots • Defining unit roots as • We can see that unit roots, a random walk, nonstationarity, and a stochastic trend can all be treated as the same thing. We can also see that if we difference a random walk, the resulting data is stationary.

Autocorrelated error • Also known as serial correlation • Detected via: • The Durbin Watson statistic • The Ljung-Box Q statistic ( a 2 statistic) • Note that Maddala suggests that this statistic is inappropriate. • Probably not too bad in small sample, low order processes. • Q does not have as much power as LM test. • The Portmanteau test • Lagrangian Multiplier test

Autocorrelated error (Cont.) • If autocorrelation is present, then the standard errors are underestimated, often by quite a bit, especially if there is a trend present. • Test for AC, and if present, use • the Cochran-Orcutt method • the Hildreth-Lu method • Durbin’s method • Method of first differences • Feasible Generalized Least squares • Prais-Winsten Estimator • Others!

Certain models are quite prone to autocorrelation problems • Distributed Lags • The effect of X on Y occurs over a longer period, • There are a number of Distributed Lag Models • Finite distributed lags • Polynomial lags • Geometric Lags • Almon lag • Infinite Distributed Lags • Koyck scheme

Lagged Endogenous variables • In addition, there are models which describe behavior as a function of both independent influences as well as the previous level of Y. • These models are often quite difficult to deal with. • The Durbin-Watson D is ineffective - use Durbin’s h

Some common models with lagged endogenous variables • Naive expectations • The Adaptive Expectations model • The Partial Adjustment model • Rational Expectations

Remedies for autocorrelation with lagged endogenous variables. • The 2SLSIV solution • regress Yt on all Xt’s, and Xt-1's. • Then take the Y-hats and use as an instrument for Lagged Y’s in the original model. • The Y-hats are guaranteed to have the autocorrelated component theoretically purged from the data series. • Have fun!

Non-linear estimation • Not all models are linear. • Models such as exponential growth are relatively tractable. • They can be estimated with OLS with the appropriate transformation • But a model like is somewhat more difficult to deal with.

Nonlinear Estimation (cont.) • The (1-c) parameter may be estimated as a B, but the t-test will not tell us if c is different from 0.0, but rather whether 1-c is different from 0.0. • Thus the greater the rate of decay, the worse the test. • Hence we wish to estimate the equation in its intractable form. • There may be analytic solutions or derivatives that may be employed, but conceptually the grid search will suffice for us to see how non-linear estimation works.

Intractable Non-linearity • Occasionally we have models that we cannot transform to linear ones. • For instance a logit model • Or an equilibrium system model

Intractable Non-linearity • Models such as these must be estimated by other means. • We do, however, keep the criteria of minimizing the squared error as our means of determining the best model

Estimating Non-linear models • All methods of non-linear estimation require an iterative search for the best fitting parameter values. • They differ in how they modify and search for those values that minimize the SSE.

Methods of Non-linear Estimation • There are several methods of selecting parameters • Grid search • Steepest descent • Marquardt’s algorithm

Grid search estimation • In a grid search estimation, we simply try out a set of parameters across a set of ranges and calculate the SSE. • We then ascertain where in the range (or at which end) the SSE was at a minimum. • We then repeat with either extending the range, or reducing the range and searching with smaller grid around the estimated SSE • Try the spreadsheet • Try this for homework!

The Backshift Operator • The backshift operator B refers to the previous value of a data series. • Thus • Note that this can extend over longer lags.

UNIVARIATE TIME SERIES • Autoregressive Processes • A simple Autoregressive model • This is an AR(1) process. • The level of a variable at time t is some proportion of its previous level at t-1. • This is called exponential decay (if is less than unity - 1.0)

Autoregressive Processes • An autoregressive process is one in which the current value is a function of its previous value, plus some additional random error. • 1st order autocorrelation in the residuals in regression analysis is the most frequently discussed example. • Keep in mind that with serial correlation we are talking about the residuals, not a variable. • An autoregressive process may be observed in the X’s, the Y or the residuals

Higher Order AR Processes • Autoregressive Processes of higher order do exist: • AR(2), ... AR(p) • In general be suspicious of anything higher than a 3rd order process: • Why should life be so abstractly complex? Autoregressive Processes • The general form using Backshift notation is: