Sorting

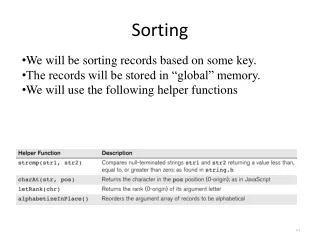

Sorting. Introduction. Assumptions Sorting an array of integers Entire sort can be done in main memory Straightforward algorithms are O(N 2 ) More complex algorithms are O(NlogN). Insertion Sort. Idea: Start at position 1 and move each element to the left until it is in the correct place

Sorting

E N D

Presentation Transcript

Introduction
• Assumptions
  • Sorting an array of integers
  • Entire sort can be done in main memory
• Straightforward algorithms are $O(N^2)$
• More complex algorithms are $O(N \log N)$

Insertion Sort
• Idea: Start at position 1 and move each element to the left until it is in the correct place
• At the start of iteration i, the leftmost i elements (positions 0 to i-1) are in sorted order

    for i = 1; i < a.size(); i++
        tmp = a[i]
        for j = i; j > 0 && tmp < a[j-1]; j--
            a[j] = a[j-1]
        a[j] = tmp

Insertion Sort – Example
• Input: 34 8 64 51 32 21
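
The idea and pass structure above can be sketched in Python as follows. This is a minimal illustration, assuming a Python list stands in for `a`; the function name `insertion_sort` and the per-pass snapshots are additions for tracing the example, not part of the slides.

```python
def insertion_sort(a):
    """Return a sorted copy of a plus a snapshot of the array after each pass."""
    a = list(a)                        # sort a copy, leave the input alone
    passes = []
    for i in range(1, len(a)):
        tmp = a[i]                     # element to insert into a[0..i-1]
        j = i
        # Shift larger elements of the sorted prefix one slot to the right
        while j > 0 and tmp < a[j - 1]:
            a[j] = a[j - 1]
            j -= 1
        a[j] = tmp                     # drop tmp into the gap
        passes.append(list(a))
    return a, passes


result, passes = insertion_sort([34, 8, 64, 51, 32, 21])
for p in passes:
    print(*p)
# 8 34 64 51 32 21
# 8 34 64 51 32 21
# 8 34 51 64 32 21
# 8 32 34 51 64 21
# 8 21 32 34 51 64
```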

Insertion Sort – Analysis
• Worst case: Each element is moved all the way to the left
• Example input?
• Running time in this case?

Insertion Sort – Analysis
• Running time in this case?
• The inner loop test executes p+1 times for each p = 1, …, N-1, so the total is
  $\sum_{i=2}^{N} i = 2 + 3 + 4 + \dots + N = \Theta(N^2)$
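
To check the worst-case count, the hypothetical helper below instruments the same loop and counts every evaluation of the inner-loop test. Feeding it reverse-sorted input, where each element moves all the way left, should reproduce $\sum_{i=2}^{N} i = N(N+1)/2 - 1$.

```python
def count_inner_tests(a):
    """Insertion sort that counts evaluations of the inner-loop test."""
    a = list(a)
    tests = 0
    for p in range(1, len(a)):
        tmp = a[p]
        j = p
        while True:
            tests += 1                           # one evaluation of the test
            if not (j > 0 and tmp < a[j - 1]):
                break
            a[j] = a[j - 1]
            j -= 1
        a[j] = tmp
    return tests


for n in range(2, 9):
    worst = list(range(n, 0, -1))                # reverse-sorted input
    print(n, count_inner_tests(worst), n * (n + 1) // 2 - 1)
```

For presorted input the test fails immediately on every pass, so the count drops to N-1.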

Sorting – Lower Bound
• Inversion: an ordered pair (i, j) where i < j but a[i] > a[j]
• The number of inversions equals the number of swaps (element moves) required by insertion sort
• Running time of insertion sort is $O(I + N)$, where I is the number of inversions
• We can calculate the average running time of insertion sort by computing the average number of inversions
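
A brute-force sketch that counts inversions directly and compares the result with the number of element moves insertion sort makes on the same input; both helper names are illustrative.

```python
from itertools import combinations


def count_inversions(a):
    """Count pairs (i, j) with i < j and a[i] > a[j] (brute force, O(N^2))."""
    return sum(1 for i, j in combinations(range(len(a)), 2) if a[i] > a[j])


def insertion_sort_moves(a):
    """Count element moves (adjacent swaps) made by insertion sort."""
    a = list(a)
    moves = 0
    for i in range(1, len(a)):
        tmp = a[i]
        j = i
        while j > 0 and tmp < a[j - 1]:
            a[j] = a[j - 1]                # each shift removes one inversion
            moves += 1
            j -= 1
        a[j] = tmp
    return moves


data = [34, 8, 64, 51, 32, 21]
print(count_inversions(data), insertion_sort_moves(data))  # prints: 9 9
```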

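Sorting – Lower Bound
• Theorem 7.1: The average number of inversions in an array of N distinct elements is $N(N-1)/4$
• Proof: Consider a list L and its reverse $L_r$. Each of the $N(N-1)/2$ pairs of elements is inverted in exactly one of L and $L_r$, so together they contain $N(N-1)/2$ inversions. Averaged over all lists, a list therefore has half this amount, $N(N-1)/4$.
• Hence insertion sort is quadratic, $\Theta(N^2)$, on average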
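
Theorem 7.1 can be spot-checked by exhaustive enumeration for small N. The sketch below averages the inversion count over all N! orderings of N distinct keys using exact fractions and compares it with $N(N-1)/4$; it is only a sanity check for tiny N, not a proof.

```python
from fractions import Fraction
from itertools import combinations, permutations


def count_inversions(a):
    """Count pairs (i, j) with i < j and a[i] > a[j]."""
    return sum(1 for i, j in combinations(range(len(a)), 2) if a[i] > a[j])


def average_inversions(n):
    """Exact average inversion count over all n! orderings of n distinct keys."""
    perms = list(permutations(range(n)))
    total = sum(count_inversions(p) for p in perms)
    return Fraction(total, len(perms))


for n in range(1, 7):
    print(n, average_inversions(n), Fraction(n * (n - 1), 4))
```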

Sorting – Lower Bound
• Theorem 7.2: Any algorithm that sorts by exchanging adjacent elements requires $\Omega(N^2)$ time on average
• Proof: The average number of inversions is $N(N-1)/4$. Each exchange of adjacent elements removes exactly one inversion, so $\Omega(N^2)$ swaps are required.

Shellsort
• Idea: Use an increment sequence $h_1, h_2, \dots, h_t$ with $h_1 = 1$. After the phase using increment $h_k$, $a[i] \le a[i + h_k]$ for every i (the array is $h_k$-sorted)

    for i = hk; i < a.size(); i++
        tmp = a[i]
        for j = i; j >= hk && tmp < a[j-hk]; j -= hk
            a[j] = a[j-hk]
        a[j] = tmp

Shellsort
• Conceptually, separate the array into $h_k$ columns and sort each column
• Example: 81 94 11 96 12 35 17 95 28 58 41 75 15
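
A sketch of the gapped insertion sort above, applied to the slide's example. The increment sequence 5, 3, 1 used here is an illustrative assumption (the slide does not fix one); `shellsort` returns a snapshot after each phase so the column sorting is visible.

```python
def shellsort(a, gaps):
    """Return a sorted copy of a and a snapshot after each h-sort phase.

    gaps should end with 1: the final 1-sort is a plain insertion sort,
    which guarantees the result is sorted.
    """
    a = list(a)
    snapshots = []
    for h in gaps:
        # Gapped insertion sort: afterwards a[i] <= a[i + h] for every valid i
        for i in range(h, len(a)):
            tmp = a[i]
            j = i
            while j >= h and tmp < a[j - h]:
                a[j] = a[j - h]
                j -= h
            a[j] = tmp
        snapshots.append(list(a))
    return a, snapshots


data = [81, 94, 11, 96, 12, 35, 17, 95, 28, 58, 41, 75, 15]
result, phases = shellsort(data, [5, 3, 1])
for h, snap in zip([5, 3, 1], phases):
    print(f"after {h}-sort:", *snap)
# after 5-sort: 35 17 11 28 12 41 75 15 96 58 81 94 95
# after 3-sort: 28 12 11 35 15 41 58 17 94 75 81 96 95
# after 1-sort: 11 12 15 17 28 35 41 58 75 81 94 95 96
```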

Shellsort: Analysis
• The increment sequence determines the running time
• Shell’s increments
  • $h_t = \lfloor N/2 \rfloor$ and $h_k = \lfloor h_{k+1}/2 \rfloor$
  • Worst case $\Theta(N^2)$
• Hibbard’s increments
  • $1, 3, 7, \dots, 2^k - 1$
  • Worst case $\Theta(N^{3/2})$
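
The two increment sequences can be generated as follows. The function names are hypothetical, and both return the increments largest first, in the order the passes use them.

```python
def shell_increments(n):
    """Shell's increments: h_t = floor(N/2), then h_k = floor(h_{k+1}/2) down to 1."""
    gaps = []
    h = n // 2
    while h > 0:
        gaps.append(h)
        h //= 2
    return gaps


def hibbard_increments(n):
    """Hibbard's increments 1, 3, 7, ..., 2^k - 1 below n, largest first."""
    gaps = []
    k = 1
    while 2 ** k - 1 < n:
        gaps.append(2 ** k - 1)
        k += 1
    return gaps[::-1]


print(shell_increments(100))    # [50, 25, 12, 6, 3, 1]
print(hibbard_increments(100))  # [63, 31, 15, 7, 3, 1]
```

Shell's increments can share factors (12, 6 and 3 are all multiples of 3, so the later passes partly redo work), whereas consecutive Hibbard increments have no common factors.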

Shellsort – Analysis
• Theorem 7.3: The worst-case running time of Shellsort, using Shell’s increments, is $\Theta(N^2)$.
• Proof – part 1: There exists some input that takes $\Omega(N^2)$ time. Choose N to be a power of 2, so all increments except the last are even. Give as input an array with the N/2 largest numbers in the even positions and the N/2 smallest numbers in the odd positions (positions are numbered from 1).

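Shellsort – Analysis
• Example: 1 9 2 10 3 11 4 12 5 13 6 14 7 15 8 16
• Because every increment except the last is even, the earlier passes never compare an odd position with an even one, so when the last pass begins the N/2 largest numbers are still in the even positions and the N/2 smallest in the odd positions
• At the last pass, the ith smallest number is in position 2i-1 and requires i-1 moves. Total number of moves:
  $\sum_{i=1}^{N/2} (i-1) = \frac{(N/2)(N/2-1)}{2} = \Omega(N^2)$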
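
The construction from the proof can be checked empirically. The sketch below builds the bad input for a power-of-2 N (the helper names `bad_input` and `moves_per_pass` are hypothetical) and counts element moves per pass with Shell's increments: every even-increment pass should make no moves, and the final pass should make $(N/2)(N/2-1)/2$.

```python
def shell_increments(n):
    """Shell's increments, largest first."""
    gaps, h = [], n // 2
    while h > 0:
        gaps.append(h)
        h //= 2
    return gaps


def moves_per_pass(a):
    """Run Shellsort with Shell's increments; count element moves in each pass."""
    a = list(a)
    counts = []
    for h in shell_increments(len(a)):
        moves = 0
        for i in range(h, len(a)):
            tmp = a[i]
            j = i
            while j >= h and tmp < a[j - h]:
                a[j] = a[j - h]
                moves += 1
                j -= h
            a[j] = tmp
        counts.append((h, moves))
    return counts


def bad_input(n):
    """N/2 smallest keys in odd positions, N/2 largest in even ones (1-based)."""
    half = n // 2
    return [v for k in range(1, half + 1) for v in (k, k + half)]


print(bad_input(16))                  # [1, 9, 2, 10, ..., 8, 16]
print(moves_per_pass(bad_input(16)))  # [(8, 0), (4, 0), (2, 0), (1, 28)]
```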

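Shellsort – Analysis
• Proof – part 2: A pass with increment $h_k$ performs $h_k$ insertion sorts of lists of about $N/h_k$ elements, so its cost is $O(h_k (N/h_k)^2) = O(N^2/h_k)$. Summing over all passes gives
  $O\left(\sum_{i=1}^{t} N^2/h_i\right) = O\left(N^2 \sum_{i=1}^{t} 1/h_i\right) = O(N^2)$,
  since Shell’s increments at least double from one to the next ($h_i \ge 2^{i-1}$), so $\sum_{i=1}^{t} 1/h_i < 2$.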

Heapsort
• Strategy
  • Apply the buildHeap function
  • Perform N deleteMin operations
  • Running time is $O(N \log N)$
• Problem: A simple implementation uses a second array, which needs N extra space

Heapsort
• Solution: After each deletion the heap shrinks by 1, so store the deleted element in the slot just vacated at the end of the array
• Problem: This results in an array sorted in decreasing order; use a max heap (deleteMax) instead
• Example: 97 53 59 26 41 58 31
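
A minimal in-place heapsort sketch following the strategy above: a max heap stored in a 0-based Python list, where each deleteMax places the maximum in the slot freed at the end of the shrinking heap. The helper names `percolate_down` and `build_max_heap` are illustrative.

```python
def percolate_down(a, i, n):
    """Restore the max-heap property for the subtree rooted at i in a[0:n]."""
    tmp = a[i]
    while 2 * i + 1 < n:
        child = 2 * i + 1                      # left child (0-based array)
        if child + 1 < n and a[child + 1] > a[child]:
            child += 1                         # pick the larger child
        if a[child] > tmp:
            a[i] = a[child]                    # move the hole down
            i = child
        else:
            break
    a[i] = tmp


def build_max_heap(a):
    """buildHeap: percolate down every non-leaf, last one first."""
    for i in range(len(a) // 2 - 1, -1, -1):
        percolate_down(a, i, len(a))


def heapsort(a):
    a = list(a)
    build_max_heap(a)
    for end in range(len(a) - 1, 0, -1):
        # deleteMax: move the max into the slot the shrinking heap frees up
        a[0], a[end] = a[end], a[0]
        percolate_down(a, 0, end)
    return a


print(heapsort([97, 53, 59, 26, 41, 58, 31]))  # [26, 31, 41, 53, 58, 59, 97]
```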

Mergesort
• Recursively sort elements 1 to N/2 and N/2+1 to N, then merge the results
• This is a classic divide-and-conquer algorithm
• Merging uses a temporary array, so it needs extra space

Mergesort – Algorithm
• Base case
  • List of 1 element: return
• Otherwise
  • Mergesort the left half
  • Mergesort the right half
  • Merge

Mergesort – Algorithm
• Merge

    leftPos = leftStart; rightPos = rightStart; tmpPos = tmpStart;
    while (leftPos <= leftEnd && rightPos <= rightEnd)
        if (a[leftPos] <= a[rightPos])
            tmpArray[tmpPos++] = a[leftPos++]
        else
            tmpArray[tmpPos++] = a[rightPos++]
    while (leftPos <= leftEnd)      //copy rest of left half
        tmpArray[tmpPos++] = a[leftPos++]
    while (rightPos <= rightEnd)    //copy rest of right half
        tmpArray[tmpPos++] = a[rightPos++]
    //copy tmpArray back into a[leftStart..rightEnd]

• Example: 24 13 26 1 2 27 38 15
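
A Python sketch of the recursive algorithm and the merge routine above, run on the slide's example. Sharing one temporary array across all merges is an implementation choice assumed here; the function names are illustrative.

```python
def merge(a, tmp, left_start, right_start, right_end):
    """Merge sorted runs a[left_start..right_start-1] and a[right_start..right_end]."""
    left_end = right_start - 1
    left_pos, right_pos, tmp_pos = left_start, right_start, left_start
    while left_pos <= left_end and right_pos <= right_end:
        if a[left_pos] <= a[right_pos]:      # <= keeps equal keys in order
            tmp[tmp_pos] = a[left_pos]
            left_pos += 1
        else:
            tmp[tmp_pos] = a[right_pos]
            right_pos += 1
        tmp_pos += 1
    while left_pos <= left_end:              # copy rest of left half
        tmp[tmp_pos] = a[left_pos]
        left_pos += 1
        tmp_pos += 1
    while right_pos <= right_end:            # copy rest of right half
        tmp[tmp_pos] = a[right_pos]
        right_pos += 1
        tmp_pos += 1
    a[left_start:right_end + 1] = tmp[left_start:right_end + 1]  # copy back


def mergesort(a):
    """Sort list a in place using one shared temporary array."""
    tmp = [None] * len(a)

    def sort(left, right):
        if left >= right:                    # base case: 0 or 1 element
            return
        center = (left + right) // 2
        sort(left, center)                   # mergesort left half
        sort(center + 1, right)              # mergesort right half
        merge(a, tmp, left, center + 1, right)

    sort(0, len(a) - 1)


data = [24, 13, 26, 1, 2, 27, 38, 15]
mergesort(data)
print(data)  # [1, 2, 13, 15, 24, 26, 27, 38]
```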

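Mergesort – Analysis
• $T(1) = 1$
• $T(N) = 2T(N/2) + N$; divide by N:
  $\frac{T(N)}{N} = \frac{T(N/2)}{N/2} + 1$
• Substitute N/2, N/4, … (assume N is a power of 2):
  $\frac{T(N/2)}{N/2} = \frac{T(N/4)}{N/4} + 1$
  $\frac{T(N/4)}{N/4} = \frac{T(N/8)}{N/8} + 1$
  ⋮
  $\frac{T(2)}{2} = \frac{T(1)}{1} + 1$
• Add the $\log N$ equations; the intermediate terms telescope:
  $\frac{T(N)}{N} = \frac{T(1)}{1} + \log N$
• Multiply by N:
  $T(N) = N + N \log N = O(N \log N)$
• Can also repeatedly substitute the recurrence on the right-hand side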
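
The closed form can be sanity-checked numerically for powers of 2, the case the telescoping argument assumes; `T` below simply evaluates the recurrence.

```python
def T(n):
    """Mergesort recurrence: T(1) = 1, T(N) = 2T(N/2) + N (N a power of 2)."""
    return 1 if n == 1 else 2 * T(n // 2) + n


for k in range(6):
    n = 2 ** k
    print(n, T(n), n + n * k)   # k = log2(N), so n + n*k is N + N log N
```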

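Quicksort
• Base case: Return if the number of elements in S is 0 or 1
• Pick any element v in S as the pivot
• Partition the remaining elements into two subgroups: the first holds elements smaller than v and the last holds elements larger than v (elements equal to v may go to either)
• Recursively quicksort the first subgroup and the last subgroup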

Quicksort
• Picking the pivot
  • Choose the first element
    • Bad for presorted data
  • Choose randomly
    • Random number generation can be expensive
  • Median-of-three
    • Choose the median of the left, right, and center elements
    • Good choice!

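Quicksort
• Partitioning
  • Move the pivot to the end (swap it with a[last])
  • i = start; j = last - 1
  • Advance i while a[i] < pivot; move j left while a[j] > pivot, never past start
  • If i < j, swap a[i] and a[j], move i right and j left by one, and repeat; otherwise stop
  • Swap a[last] and a[i] //move pivot into its final position
• Example: 13 81 92 43 65 31 57 26 75 0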
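
A sketch of quicksort with median-of-three pivot selection and the partitioning loop above. It is an illustrative in-place version with hypothetical helper names; it has no small-array cutoff, and both scans stop on keys equal to the pivot so that runs of equal keys still split evenly.

```python
def median_of_three_index(a, left, right):
    """Index of the median of a[left], a[center], a[right]."""
    center = (left + right) // 2
    trio = sorted([(a[left], left), (a[center], center), (a[right], right)])
    return trio[1][1]


def partition(a, left, right):
    """Partition a[left..right] around a median-of-three pivot; return its index."""
    p = median_of_three_index(a, left, right)
    a[p], a[right] = a[right], a[p]          # move pivot to the end
    pivot = a[right]
    i, j = left, right - 1
    while True:
        while a[i] < pivot:                  # stops at a[right] at the latest
            i += 1
        while j > left and a[j] > pivot:     # never moves past left
            j -= 1
        if i < j:
            a[i], a[j] = a[j], a[i]
            i += 1
            j -= 1
        else:
            break
    a[i], a[right] = a[right], a[i]          # move pivot into its final place
    return i


def quicksort(a, left=0, right=None):
    if right is None:
        right = len(a) - 1
    if left >= right:                        # 0 or 1 element
        return
    p = partition(a, left, right)
    quicksort(a, left, p - 1)                # first subgroup
    quicksort(a, p + 1, right)               # last subgroup


data = [13, 81, 92, 43, 65, 31, 57, 26, 75, 0]
quicksort(data)
print(data)  # [0, 13, 26, 31, 43, 57, 65, 75, 81, 92]
```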

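Quicksort – Analysis
• Quicksort relation
  • $T(N) = T(i) + T(N-i-1) + cN$, where i is the size of the first subgroup
• Worst case: the pivot is always the smallest element (the constant T(0) is absorbed into cN)
  $T(N) = T(N-1) + cN$
  $T(N-1) = T(N-2) + c(N-1)$
  $T(N-2) = T(N-3) + c(N-2)$
  ⋮
  $T(2) = T(1) + c \cdot 2$
• Add the previous equations; the intermediate terms cancel:
  $T(N) = T(1) + c\sum_{i=2}^{N} i = O(N^2)$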
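
A small experiment for the worst case, using a simplified, not-in-place quicksort (the hypothetical `comparisons_first_pivot`) that always takes the first element as pivot and charges one comparison per non-pivot element per partition. On presorted input every pivot is the smallest element, so the count follows T(N) = T(N-1) + (N-1) and totals N(N-1)/2.

```python
def comparisons_first_pivot(a):
    """Comparisons made by quicksort that always uses the first element as pivot.

    Partitioning n elements compares each of the other n - 1 against the pivot.
    """
    if len(a) <= 1:
        return 0
    pivot, rest = a[0], a[1:]
    smaller = [x for x in rest if x < pivot]
    larger = [x for x in rest if x >= pivot]
    return len(rest) + comparisons_first_pivot(smaller) + comparisons_first_pivot(larger)


n = 100
print(comparisons_first_pivot(list(range(n))), n * (n - 1) // 2)  # prints: 4950 4950
```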

Quicksort – Analysis
• Best case: the pivot is always the middle element
  $T(N) = 2T(N/2) + cN$
  • The same analysis as for mergesort gives $O(N \log N)$
• Average case (pg 278): $O(N \log N)$

Sorting – Lower Bound
• A sorting algorithm that uses only comparisons requires at least $\lceil \log(N!) \rceil$ comparisons in the worst case and at least $\log(N!)$ comparisons on average (logs are base 2)

Decision Tree
• [Figure: decision tree for sorting three elements a, b, c. The root holds all six orderings a<b<c, a<c<b, b<a<c, b<c<a, c<a<b, c<b<a; each internal node compares two elements (a<b, a<c, or b<c) and splits the remaining orderings; each leaf holds a single ordering.]

Sorting – Lower Bound
• Let T be a binary tree of depth d. Then T has at most $2^d$ leaves.
• A binary tree with L leaves must have depth at least $\lceil \log L \rceil$.
• Any sorting algorithm that uses only comparisons between elements requires at least $\lceil \log(N!) \rceil$ comparisons in the worst case.
  • Its decision tree has N! leaves because there are N! permutations of N elements

Sorting – Lower Bound
• Any sorting algorithm that uses only comparisons between elements requires $\Omega(N \log N)$ comparisons
• $\log(N!)$ comparisons are required, and
  $\log(N!) = \log(N(N-1)(N-2)\cdots(2)(1))$
  $= \log N + \log(N-1) + \log(N-2) + \dots + \log 1$
  $\ge \log N + \log(N-1) + \log(N-2) + \dots + \log(N/2)$
  $\ge \frac{N}{2}\log\frac{N}{2} = \frac{N}{2}\log N - \frac{N}{2}$
  $= \Omega(N \log N)$
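
To see how $\lceil \log(N!) \rceil$ compares with $N \log N$ for small N, the helper below computes the bound exactly with integer arithmetic, using the fact that $\lceil \log_2 m \rceil$ equals the bit length of $m - 1$ for $m \ge 1$. The function name is illustrative.

```python
import math


def comparison_lower_bound(n):
    """ceil(log2(n!)): minimum worst-case comparisons for any comparison sort."""
    # For an integer m >= 1, ceil(log2 m) == (m - 1).bit_length()
    return (math.factorial(n) - 1).bit_length()


for n in (3, 4, 5, 10, 100):
    print(n, comparison_lower_bound(n), round(n * math.log2(n), 1))
```

For N = 3 the bound is 3 comparisons, matching the depth of the three-element decision tree.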

Bucketsort
• A sorting algorithm that runs in $O(N)$ time. How? What is special about this algorithm?

    for i = 1 to N
        increment count[A_i]
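
A minimal sketch, assuming every key is an integer with $0 \le A_i < M$ for a known bound M (both the bound and the function name are assumptions here). It makes no key comparisons, which is why the $\Omega(N \log N)$ comparison lower bound does not apply, and it runs in $O(M + N)$ time.

```python
def bucket_sort(a, m):
    """Sort integers with 0 <= key < m by counting occurrences (no comparisons)."""
    count = [0] * m              # count[v] = how many times v occurs
    for x in a:
        count[x] += 1            # increment count[A_i]
    result = []
    for v in range(m):           # scan buckets in increasing key order
        result.extend([v] * count[v])
    return result


print(bucket_sort([3, 1, 4, 1, 5, 9, 2, 6], 10))  # [1, 1, 2, 3, 4, 5, 6, 9]
```

The scan over all M buckets is why this only pays off when M is O(N).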