Overview of Bayesian Classifiers for Data Classification

Learn about Bayesian classifiers, fundamental probabilities, conditional probability, and practical examples for classifying data into four classes. Understand how to calculate probabilities and apply the classifier to test data.

Classifier Clarifier Bayesian Classifiers

The Big Picture • Problem : classify data into one of four classes • Data : you select 3 features which are mutually exclusive. {Note : this is a really bad decision. Why? We’ll fix it later} • You generate feature vectors for the training data.

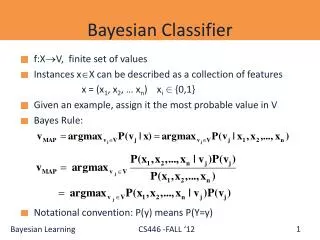

The Big Picture Cont. • Once you have the classifier, you will observe features in the testing vectors and based on those assign a class to the vector. • In other words, we want to find the class (Ci) given the feature observed (Xk). • We need to find a formula for P(Ci | Xk)

Fundamental Probabilities • P(C1) = .4, P(X1) = .44 • P(C2) = .16, P(X2) = .4 • P(C3) = .24, P(X3) = .16 • P(C4) = .20 • Sum of P(Ci) = 1 because all vectors are in one of the classes • Sum of P(Xi) = 1 because each vector has exactly one feature

Question • Are the features and the classes independent? • Definition of independent is : • P(a AND b) = P(a)*P(b) • Check : P(C1 AND X1) = 6/25 = .24 from table • But P(C1) * P(X1) = (.4)*(.44) = .176 • Since .24 ≠ .176, the features and classes are not independent
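The independence check on this slide can be reproduced in a few lines. This is a minimal sketch that only uses the three numbers quoted above; the variable names are illustrative and not part of the original presentation.

```python
import math

# Values quoted on the slides.
p_c1 = 0.40            # P(C1)
p_x1 = 0.44            # P(X1)
p_c1_and_x1 = 6 / 25   # P(C1 AND X1), read from the training table

# Under independence the joint probability would equal this product.
product = p_c1 * p_x1

independent = math.isclose(p_c1_and_x1, product, abs_tol=1e-9)

print(f"P(C1 AND X1)  = {p_c1_and_x1:.3f}")
print(f"P(C1) * P(X1) = {product:.3f}")
print("independent" if independent else "not independent")
```

A single failing pair is enough to rule out independence, which is why the slide checks only C1 and X1.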

So? Need Conditional Probability • P(C AND X) = P(C|X) * P(X) or • P(X AND C) = P(X|C) * P(C) • P(C AND X ) = P(X AND C) • P(C|X) * P(X) = P(X|C) *P(C) • P(C|X) = [P(X|C) * P(C)] /P(X) • Now we have what we need
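As a rough illustration of the final formula, the sketch below wraps it in a small function and evaluates it for C1 and X1. The function name `posterior` and its parameter names are hypothetical; the inputs P(X1|C1) = .6, P(C1) = .4, and P(X1) = .44 are the values used elsewhere in these slides.

```python
def posterior(likelihood: float, prior: float, evidence: float) -> float:
    """Bayes' rule: P(C|X) = P(X|C) * P(C) / P(X)."""
    if evidence <= 0:
        raise ValueError("P(X) must be positive")
    return likelihood * prior / evidence


# P(C1|X1) from P(X1|C1) = .6, P(C1) = .4, P(X1) = .44
p_c1_given_x1 = posterior(likelihood=0.6, prior=0.4, evidence=0.44)
print(f"P(C1|X1) = {p_c1_given_x1:.3f}")
```

The guard on `evidence` is a design choice for this sketch: a feature that never occurs in the training data has P(X) = 0, and the formula is undefined for it.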

The Classifier • For each feature, determine which class is most likely given that the feature was present. • Calculate the probability of being in each class based on that feature’s presence • Select the largest probability • Classify the sample • Note : we need the features to be mutually exclusive for this to work. We will fix this in a minute.

The probabilities • By the formula above, P(Ci|Xk) = P(Xk|Ci)*P(Ci) / P(Xk). For a given feature Xk the divisor P(Xk) is the same for every class, so comparing the numerators P(Xk|Ci)*P(Ci) is enough to pick the most likely class. • P(C1|X1) ∝ P(X1|C1)*P(C1) = (.6)*(.4) = .24 • P(C1|X2) ∝ P(X2|C1)*P(C1) = (.3)*(.4) = .12 • P(C1|X3) ∝ P(X3|C1)*P(C1) = (.1)*(.4) = .04 • P(C2|X1) ∝ P(X1|C2)*P(C2) = (0)*(.16) = 0 • P(C2|X2) ∝ P(X2|C2)*P(C2) = (.5)*(.16) = .08 • P(C2|X3) ∝ P(X3|C2)*P(C2) = (.5)*(.16) = .08 • P(C3|X1) ∝ P(X1|C3)*P(C3) = (.17)*(.24) ≈ .04 • P(C3|X2) ∝ P(X2|C3)*P(C3) = (.67)*(.24) ≈ .16 • P(C3|X3) ∝ P(X3|C3)*P(C3) = (.17)*(.24) ≈ .04 • P(C4|X1) ∝ P(X1|C4)*P(C4) = (.8)*(.2) = .16 • P(C4|X2) ∝ P(X2|C4)*P(C4) = (.2)*(.2) = .04 • P(C4|X3) ∝ P(X3|C4)*P(C4) = (0)*(.2) = 0
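The table above lists the products P(X|C)*P(C). One way to turn them into full conditional probabilities is to divide each by P(X), as the derived formula requires. The sketch below does that with the priors and likelihoods quoted on the slides; the dictionary layout and names are assumptions made for illustration, and the C3 likelihoods are the rounded slide values (.17, .67, .17), so the results are approximate.

```python
# Priors P(Ci) and likelihoods P(Xk|Ci) as quoted on the slides.
PRIORS = {"C1": 0.40, "C2": 0.16, "C3": 0.24, "C4": 0.20}
LIKELIHOODS = {
    "C1": {"X1": 0.60, "X2": 0.30, "X3": 0.10},
    "C2": {"X1": 0.00, "X2": 0.50, "X3": 0.50},
    "C3": {"X1": 0.17, "X2": 0.67, "X3": 0.17},  # rounded on the slide
    "C4": {"X1": 0.80, "X2": 0.20, "X3": 0.00},
}
FEATURES = ["X1", "X2", "X3"]

# Numerators of Bayes' rule: P(Xk|Ci) * P(Ci) = P(Ci AND Xk).
joint = {c: {x: LIKELIHOODS[c][x] * PRIORS[c] for x in FEATURES} for c in PRIORS}

# P(Xk) is the sum of the joint probabilities over all classes.
evidence = {x: sum(joint[c][x] for c in PRIORS) for x in FEATURES}

# Normalized posteriors P(Ci|Xk); each feature's column now sums to 1.
posterior = {c: {x: joint[c][x] / evidence[x] for x in FEATURES} for c in PRIORS}

for x in FEATURES:
    row = ", ".join(f"P({c}|{x}) = {posterior[c][x]:.2f}" for c in PRIORS)
    print(f"P({x}) = {evidence[x]:.2f}: {row}")
```

The recomputed P(X) values land close to the .44, .4, and .16 given under Fundamental Probabilities, and dividing by them changes the magnitudes but not which class scores highest for each feature.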

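Examples • The values below are the products P(Xk|Ci)*P(Ci); dividing by P(Xk) rescales every class equally for a given feature, so the largest product still identifies the most likely class. • Test vector 1 = 100 • P(C1|X1) ∝ .24 • P(C2|X1) ∝ 0 • P(C3|X1) ∝ .04 • P(C4|X1) ∝ .16 • Classify as C1 • Test vector 2 = 010 • P(C1|X2) ∝ .12 • P(C2|X2) ∝ .08 • P(C3|X2) ∝ .16 • P(C4|X2) ∝ .04 • Classify as C3 • Test vector 3 = 001 • P(C1|X3) ∝ .04 • P(C2|X3) ∝ .08 • P(C3|X3) ∝ .04 • P(C4|X3) ∝ 0 • Classify as C2 • You guessed it : we never classify anything as class 4, because C4 never has the largest value for any feature.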
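The three examples above can be sketched as a small lookup-and-argmax routine. This is only an illustration under the slides' assumption that exactly one feature is set per vector; the names `SCORES` and `classify` are hypothetical, and the table entries are the products listed on the previous slide.

```python
CLASSES = ["C1", "C2", "C3", "C4"]

# Rows are classes C1..C4, columns are features X1..X3.
# Entries are the products P(Xk|Ci) * P(Ci) from the slides.
SCORES = [
    [0.24, 0.12, 0.04],
    [0.00, 0.08, 0.08],
    [0.04, 0.16, 0.04],
    [0.16, 0.04, 0.00],
]


def classify(vector: str) -> str:
    """Return the class with the largest score for the single feature set in `vector`."""
    if len(vector) != 3 or not set(vector) <= {"0", "1"} or vector.count("1") != 1:
        raise ValueError("expected a 3-character vector with exactly one '1'")
    k = vector.index("1")                      # index of the observed feature
    column = [row[k] for row in SCORES]        # scores of every class for that feature
    best = max(range(len(CLASSES)), key=lambda i: column[i])
    return CLASSES[best]


predictions = {v: classify(v) for v in ("100", "010", "001")}
print(predictions)

# Classes that no possible test vector can ever be assigned to.
never_chosen = sorted(set(CLASSES) - set(predictions.values()))
print("never chosen:", never_chosen)
```

Because the three vectors cover every possible input under the one-feature assumption, the second print confirms the closing remark of the slide: no input is ever assigned to C4.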