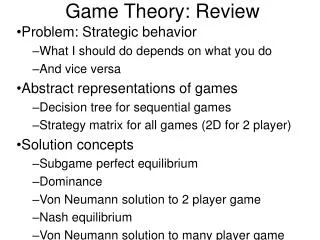

Game Theory: Review

210 likes | 601 Views

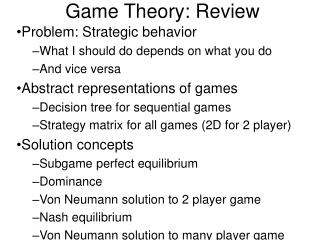

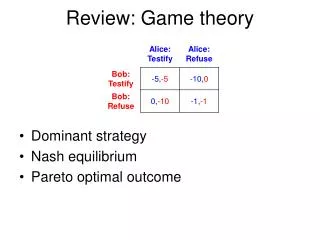

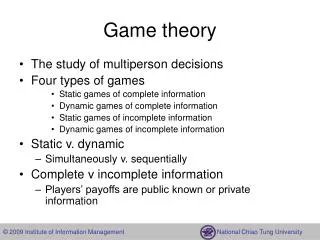

Game Theory: Review. Problem: Strategic behavior What I should do depends on what you do And vice versa Abstract representations of games Decision tree for sequential games Strategy matrix for all games (2D for 2 player) Solution concepts Subgame perfect equilibrium Dominance

Game Theory: Review

E N D

Presentation Transcript

• Problem: strategic behavior
• What I should do depends on what you do, and vice versa
• Abstract representations of games
• Decision tree for sequential games
• Strategy matrix for all games (2D for 2 players)
• Solution concepts
• Subgame perfect equilibrium
• Dominance
• Von Neumann solution to 2-player game
• Nash equilibrium
• Von Neumann solution to many-player game

Subgame perfect equilibrium
• Treat the final choice (subgame) as a decision theory problem
• The solution to which is obvious, so plug it in
• Continue right to left on the decision tree
• Assumes no way of committing, and no coalition formation
• In the real world, A might pay B not to take what would otherwise be his ideal choice, because that will change what C does in a way that benefits A
• One criminal bribing another to keep his mouth shut, for instance
• But it does provide a simple way of extending the decision theory approach to give an unambiguous answer, in at least some situations
• Consider our basketball player problem
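The right-to-left procedure above (backward induction) can be sketched in a few lines. This is a minimal illustration, not the lecture's own example: the tuple encoding of the tree, the function name `backward_induction`, and the entry game with its payoffs are all hypothetical. Ties are broken toward the first listed action, which is an assumption.

```python
def backward_induction(node):
    """Solve a finite game tree right to left; return (payoffs, equilibrium path).

    A node is either ("leaf", payoffs) or ("move", player_index, {action: subtree}).
    Ties go to the first action listed (an assumption, not part of the concept).
    """
    if node[0] == "leaf":
        return node[1], []
    _, player, options = node
    best_payoffs, best_path = None, None
    for action, subtree in options.items():
        # Solve the subgame first, then treat this choice as a plain decision problem.
        payoffs, path = backward_induction(subtree)
        if best_payoffs is None or payoffs[player] > best_payoffs[player]:
            best_payoffs, best_path = payoffs, [action] + path
    return best_payoffs, best_path


# Hypothetical entry game: player 0 (entrant) moves first, player 1 (incumbent) responds.
ENTRY_GAME = (
    "move", 0, {
        "stay out": ("leaf", (0, 10)),
        "enter": ("move", 1, {
            "fight": ("leaf", (-1, -1)),
            "accommodate": ("leaf", (3, 5)),
        }),
    },
)

if __name__ == "__main__":
    print(backward_induction(ENTRY_GAME))
```

The incumbent would like the entrant to believe he will fight, but once entry has happened fighting is worse for him, so the solution is "enter, accommodate". This is the "no way of committing" assumption at work: if the incumbent could bind himself to fight, the entrant would stay out.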

Dominant Strategy
• Each player has a best choice, whatever the other does
• Might not exist, in two senses:
• If I know you are doing X, I do Y, and if you know I am doing Y, you do X. Nash equilibrium. Driving on the right. The outcome may not be unique, but it is stable.
• If I know you are doing X, I do Y, and if you know I am doing Y, you don’t do X. Unstable. Scissors/paper/stone.
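The three cases (a dominant strategy, a stable but non-unique Nash equilibrium, and no stable pure outcome at all) can be checked mechanically on a payoff matrix. A minimal sketch, assuming pure strategies only and weak dominance; the function names and all payoff numbers are hypothetical, and the prisoner's dilemma matrix is an illustrative stand-in for the case where a dominant strategy exists.

```python
from itertools import product


def dominant_row(payoffs):
    """Index of a row that is a best reply to every column (weak dominance), else None."""
    n_rows, n_cols = len(payoffs), len(payoffs[0])
    for r in range(n_rows):
        if all(payoffs[r][c] >= max(payoffs[i][c] for i in range(n_rows)) for c in range(n_cols)):
            return r
    return None


def pure_nash_equilibria(row_payoffs, col_payoffs):
    """All cells where each player's choice is a best reply to the other's."""
    n_rows, n_cols = len(row_payoffs), len(row_payoffs[0])
    found = []
    for r, c in product(range(n_rows), range(n_cols)):
        row_ok = all(row_payoffs[r][c] >= row_payoffs[i][c] for i in range(n_rows))
        col_ok = all(col_payoffs[r][c] >= col_payoffs[r][j] for j in range(n_cols))
        if row_ok and col_ok:
            found.append((r, c))
    return found


# Driving: 0 = left, 1 = right; both get 1 if they match, 0 if they crash.
DRIVE = [[1, 0], [0, 1]]
# Scissors/paper/stone for the row player (0 = stone, 1 = paper, 2 = scissors);
# fixed sum, so the column player gets the negative.
SPS = [[0, -1, 1], [1, 0, -1], [-1, 1, 0]]
SPS_COL = [[-x for x in row] for row in SPS]
# Prisoner's dilemma, years in prison as negative payoffs (0 = stay silent, 1 = confess).
PD_ROW = [[-1, -10], [0, -5]]
PD_COL = [[-1, 0], [-10, -5]]

if __name__ == "__main__":
    print(dominant_row(PD_ROW), pure_nash_equilibria(PD_ROW, PD_COL))
    print(dominant_row(DRIVE), pure_nash_equilibria(DRIVE, DRIVE))
    print(dominant_row(SPS), pure_nash_equilibria(SPS, SPS_COL))
```

Confessing dominates in the prisoner's dilemma; driving has no dominant strategy but two stable equilibria (both left, both right); scissors/paper/stone has neither.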

Nash Equilibrium
• By freezing all the other players while you decide, we reduce the problem to decision theory for each player, given what the rest are doing
• We then look for a collection of choices that are consistent with each other, meaning that each person is doing the best he can for himself, given what everyone else is doing
• This assumes away all coalitions: it doesn’t allow for two or more people simultaneously shifting their strategies in a way that benefits them all
• Like my two escaping prisoners
• It ignores the problem of defining “freezing other players”
• Their alternatives partly depend on what you are doing
• So “freezing” really means “adjusting in a particular way”
• For instance, freezing price and varying quantity, or vice versa
• It also ignores the problem of how to get to that solution
• One could imagine a real-world situation where A adjusts to B and C, which changes B’s best strategy, so he adjusts, which changes C’s and A’s best strategies … forever …
• A lot of economics is like this: find the equilibrium, ignore the dynamics that get you there
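The "adjusts forever" worry can be made concrete with best-response dynamics: players take turns switching to their best reply to whatever the other is currently doing. A minimal sketch, simplified to two players; the function names, the alternating update order, and lowest-index tie-breaking are assumptions, and the two games are the driving and scissors/paper/stone examples from the dominant-strategy slide.

```python
def best_reply(payoffs):
    """Index of the best action given a list of payoffs; ties go to the lowest index."""
    return max(range(len(payoffs)), key=lambda i: payoffs[i])


def best_response_dynamics(row_payoffs, col_payoffs, start, max_rounds=50):
    """Row adjusts to column, then column adjusts to row, and so on.

    Returns ("converged", history) at a Nash equilibrium, ("cycles", history)
    if a previous state recurs, or ("undecided", history) after max_rounds.
    """
    r, c = start
    history = [(r, c)]
    for _ in range(max_rounds):
        new_r = best_reply([row_payoffs[i][c] for i in range(len(row_payoffs))])
        new_c = best_reply([col_payoffs[new_r][j] for j in range(len(col_payoffs[0]))])
        if (new_r, new_c) == (r, c):
            return "converged", history
        if (new_r, new_c) in history:
            history.append((new_r, new_c))
            return "cycles", history
        r, c = new_r, new_c
        history.append((r, c))
    return "undecided", history


DRIVE = [[1, 0], [0, 1]]                     # 0 = left, 1 = right
SPS = [[0, -1, 1], [1, 0, -1], [-1, 1, 0]]   # 0 = stone, 1 = paper, 2 = scissors
SPS_COL = [[-x for x in row] for row in SPS]

if __name__ == "__main__":
    print(best_response_dynamics(DRIVE, DRIVE, start=(0, 1)))
    print(best_response_dynamics(SPS, SPS_COL, start=(0, 0)))
```

Driving settles down after one adjustment; scissors/paper/stone goes around in a circle, which is the case where a pure-strategy equilibrium does not exist.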

Von Neumann Solution
• aka minimax aka saddlepoint aka ….?
• It tells each player how to figure out what to do:
• A value V
• A strategy for one player that guarantees winning at least V
• And a strategy for the other that guarantees losing at most V
• It describes the outcome if each follows those instructions
• But it applies only to two-person fixed-sum games
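For the smallest case, a 2×2 fixed-sum game, the value V and both guaranteeing strategies have a closed form: first check for a saddle point in pure strategies; if there is none, each player mixes so as to make the other indifferent between his two choices. A minimal sketch, assuming integer payoffs (so `fractions.Fraction` keeps the answer exact); the function name and the example matrices are hypothetical.

```python
from fractions import Fraction


def solve_2x2_zero_sum(a, b, c, d):
    """Minimax solution of a 2x2 fixed-sum game with integer payoffs.

    The row player receives [[a, b], [c, d]]; the column player receives the negative.
    Returns (V, p, q): p = probability row plays the first row, q = probability column
    plays the first column. p and q are None when a pure saddle point exists.
    """
    maximin = max(min(a, b), min(c, d))  # best the row player can guarantee with a pure strategy
    minimax = min(max(a, c), max(b, d))  # least the column player can hold him to with a pure strategy
    if maximin == minimax:
        return Fraction(maximin), None, None
    denom = a - b - c + d                # nonzero whenever there is no saddle point
    p = Fraction(d - c, denom)           # makes the column player indifferent between columns
    q = Fraction(d - b, denom)           # makes the row player indifferent between rows
    value = Fraction(a * d - b * c, denom)
    return value, p, q


if __name__ == "__main__":
    print(solve_2x2_zero_sum(1, -1, -1, 1))   # matching pennies
    print(solve_2x2_zero_sum(3, -1, -2, 4))   # no saddle point, V = 1
    print(solve_2x2_zero_sum(2, 3, 1, 4))     # saddle point at the top-left cell
```

In the second game, playing the first row with probability 3/5 guarantees the row player an expected 1 whatever the column player does, and the column player's 50/50 mix guarantees he loses no more than 1: the "at least V" and "at most V" of the slide.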

VN Solution to Many Player Game
• Outcome: how much each player ends up with
• Dominance: outcome A dominates B if there is some group of players, all of whom do better under A (end up with more), and who, by working together, can get A for themselves
• A solution is a set of outcomes none of which dominates another, such that every outcome not in the solution is dominated by one in the solution
• Consider, to get some flavor of this, three-player majority vote
• A dollar is to be divided among Ann, Bill and Charles by majority vote
• Ann and Bill propose (.5, .5, 0): they split the dollar, leaving Charles with nothing
• Charles proposes (.6, 0, .4). Ann and Charles both prefer it, so it beats the first proposal, but …
• Bill proposes (0, .5, .5), which beats that …
• And so around we go
• One solution is the set: (.5, .5, 0), (0, .5, .5), (.5, 0, .5)
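Both conditions in the definition of a solution can be checked by brute force for the three-player dollar. A minimal sketch, assuming shares are counted in whole cents so the arithmetic is exact and the set of possible outcomes is finite; the function names are hypothetical. Because any two voters form a majority, a coalition of two or more can always enforce the split it prefers.

```python
from itertools import combinations

# Outcomes are (Ann, Bill, Charles) shares of the dollar, in cents.
SOLUTION = [(50, 50, 0), (0, 50, 50), (50, 0, 50)]


def dominates(x, y):
    """x dominates y if some majority (two or more of the three) all get strictly more under x."""
    n = len(x)
    for size in range(n // 2 + 1, n + 1):
        for coalition in combinations(range(n), size):
            if all(x[i] > y[i] for i in coalition):
                return True
    return False


def all_splits(total=100):
    """Every division of the dollar into whole cents."""
    return [(a, b, total - a - b) for a in range(total + 1) for b in range(total + 1 - a)]


def is_stable_set(candidate):
    """(no member dominates another, every non-member is dominated by some member)."""
    internal = not any(dominates(x, y) for x in candidate for y in candidate)
    external = all(any(dominates(s, y) for s in candidate)
                   for y in all_splits() if y not in candidate)
    return internal, external


if __name__ == "__main__":
    print(dominates((60, 0, 40), (50, 50, 0)))   # Charles's proposal beats Ann and Bill's
    print(dominates((0, 50, 50), (60, 0, 40)))   # Bill's beats Charles's
    print(is_stable_set(SOLUTION))
```

The cycle of proposals shows up as a chain of dominance, while the three 50/50 splits pass both tests: none beats another, and every other split in whole cents is beaten by one of them.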

Schelling aka Focal Point
• Two people have to coordinate without communicating
• Either they can’t communicate (students trying to meet)
• Or they can’t believe what each other says (bargaining)
• They look for a unique outcome
• Because the alternative is choosing among many outcomes, which is worse than settling on the unique one
• While the bank robbers are haggling, the cops may show up
• But “unique outcome” is a fact not about nature but about how people think
• Which implies that:
• You might improve the outcome by how you frame the decision
• Coordination may break down across cultural boundaries, because people frame decisions differently
• Hence each thinks the other is unreasonably refusing the obvious compromise

Conclusion
• Game theory is helpful as a way of thinking
• “Since I know what his final choice will be, I should …”
• Commitment strategies
• Prisoner’s dilemmas and how to avoid them
• Mixed strategies, where you don’t want the opponent to know what you will do
• Nash equilibrium
• Schelling points
• But it doesn’t provide a rigorous answer either to the general question of what you should do in the context of strategic behavior, or to what people will do

Insurance
• Risk aversion
• I prefer a certainty of paying $1000 to a .001 chance of losing $1,000,000
• Why?
• Moral hazard
• Since my factory has fire insurance for 100% of its value, why should I waste money on a sprinkler system?
• Adverse selection
• Someone comes running into your office: “I want a million dollars in life insurance, right now”
• Do you sell it to him?
• Doesn’t the same problem exist for everyone who wants to buy?
• Buying signals that you think you are likely to collect, i.e. that you are a bad risk
• So price insurance assuming you are selling to bad risks
• Which means it’s a lousy deal for good risks
• So good risks don’t get insured, even if they would be willing to pay a price at which it is worth insuring them

Risk Aversion
• The standard explanation for insurance
• By pooling risks, we reduce risk
• I have a .001 chance of my $1,000,000 house burning down
• A million of us will have just about 1000 houses burn down each year
• For an average cost of $1000/person/year
• Why do I prefer to reduce the risk?
• The more money I have, the less each additional dollar is worth to me
• With $20,000/year, I buy very important things
• With $200,000/year, the last dollar goes for something much less important
• So I want to transfer money to futures where I have little, because my house burned down, from futures where I have lots, because it didn’t
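The pooling arithmetic can be made explicit: the expected cost per person does not change with the size of the pool, but the spread of each member's share shrinks with the square root of the number of members. A minimal sketch, assuming fires are independent (so the number of fires is binomial); the function name is hypothetical, and the numbers are the slide's.

```python
import math


def pooled_cost_per_person(n_households, p_fire, house_value):
    """Mean and standard deviation of each member's share of the pool's total losses.

    Assumes independent fires, so the number of fires is binomial(n, p).
    """
    mean_fires = n_households * p_fire
    sd_fires = math.sqrt(n_households * p_fire * (1 - p_fire))
    return mean_fires * house_value / n_households, sd_fires * house_value / n_households


if __name__ == "__main__":
    print(pooled_cost_per_person(1, 0.001, 1_000_000))          # going it alone
    print(pooled_cost_per_person(1_000_000, 0.001, 1_000_000))  # a million of us
```

Alone, my expected loss is $1000 a year with a standard deviation of roughly $31,600; in a pool of a million the expected cost is still $1000, but the standard deviation falls to about $32.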

“Risk Aversion” Is a Misleading Term
• Additional dollars are probably worth less to me the more I have
• It doesn’t follow that (say) additional years of life are
• Without the risky operation I live fifteen years
• If it succeeds I live thirty, but …
• Half the time the operation kills the patient
• So expected lifespan is fifteen years either way (.5 × 30)
• And I always wanted to have children
• So really “risk aversion in money”
• Aka “declining marginal utility of income”

Moral hazard
• I have a ten million dollar factory and am worried about fire
• If I can take a ten thousand dollar precaution that reduces the risk by one percentage point this year, I will (.01 × $10,000,000 = $100,000 > $10,000)
• But if the precaution costs a million, I won’t
• Now suppose I insure my factory for $9,000,000
• It is still worth taking a precaution that reduces the chance of fire by one percentage point
• But only if it costs less than …?
• Of course, the price of the insurance will take account of the fact that I can be expected to take fewer precautions:
• Before I was insured, the chance of the factory burning down was 5%
• So insurance should have cost me about $450,000/year, but …
• The insurance company knows that if insured I will be less careful
• Raising the probability to (say) 10%, and the price to $900,000
• There is a net loss here: precautions worth taking are not getting taken, because I pay for them but the gain goes mostly to the insurance company
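The slide's arithmetic can be written as three small functions: the expected loss a precaution avoids, the owner's decision rule, and the break-even premium. A minimal sketch using `fractions.Fraction` for exact probabilities; the function names are hypothetical, the premium ignores the insurer's overhead (a simplifying assumption), and the $50,000 precaution is a hypothetical example of one that is worth taking but stops being taken once the factory is insured.

```python
from fractions import Fraction


def expected_saving(exposed_value, prob_drop):
    """Expected loss avoided by a precaution that lowers the fire probability by prob_drop."""
    return exposed_value * prob_drop


def worth_taking(cost, exposed_value, prob_drop):
    """The owner takes a precaution only if it saves *him* more than it costs."""
    return cost < expected_saving(exposed_value, prob_drop)


def fair_premium(insured_amount, fire_prob):
    """Break-even premium for the insurer, ignoring its overhead (an assumption)."""
    return insured_amount * fire_prob


ONE_POINT = Fraction(1, 100)
FACTORY = 10_000_000
INSURED = 9_000_000

if __name__ == "__main__":
    print(worth_taking(10_000, FACTORY, ONE_POINT))              # uninsured, cheap precaution
    print(worth_taking(1_000_000, FACTORY, ONE_POINT))           # uninsured, expensive precaution
    print(worth_taking(50_000, FACTORY - INSURED, ONE_POINT))    # insured: only $1M still at risk
    print(fair_premium(INSURED, Fraction(5, 100)), fair_premium(INSURED, Fraction(10, 100)))
```

Once $9,000,000 is insured, only the uninsured $1,000,000 counts in the owner's calculation, so a precaution that cuts the fire probability by one point saves him just $10,000 in expected terms even though it still saves $100,000 in total. Precautions costing between those two figures are the net loss the slide describes.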

Solutions?
• Require precautions (signs in car repair shops saying no customers allowed in; mandated sprinkler systems)
• The insurance company gives you a lower rate if you take the precautions
• Only works for observable precautions
• Make insurance cover only fires not due to your failure to take precautions (again, if observable)
• Only insure for (say) 50% of value
• There are still precautions you should take and don’t
• But you take the ones that matter most, i.e. the ones where the benefit is at least twice the cost
• So moral hazard remains, but its cost is reduced
• Of course, you also now have more risk to bear
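The "benefit at least twice cost" rule follows from a one-line decision rule: the owner pays the whole cost of a precaution but keeps only his share of the benefit. A minimal sketch; the function name and the three precautions with their costs and benefits are hypothetical, and the extra risk the owner bears is not modeled.

```python
def precautions_taken(precautions, owner_share):
    """Names of precautions the owner takes when he bears owner_share of any loss.

    Each precaution is (name, cost, expected loss it prevents). He takes it when his
    share of the benefit is at least the cost, which he pays in full.
    """
    return [name for name, cost, benefit in precautions if owner_share * benefit >= cost]


# Hypothetical precautions: (name, cost, expected loss prevented)
PRECAUTIONS = [
    ("extinguishers", 1_000, 20_000),
    ("sprinklers", 40_000, 100_000),
    ("night watchman", 60_000, 100_000),
]

if __name__ == "__main__":
    for share in (1, 0.5, 0):  # uninsured, 50% coinsurance, full insurance
        print(share, precautions_taken(PRECAUTIONS, share))
```

All three are worth their cost, but at 50% coinsurance the watchman (benefit only 1.67 times cost) is dropped while the other two survive, and under full insurance none is taken.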

A Puzzle
• The value of a dollar changes a lot between $20,000/year and $200,000/year
• But very little between $200,000 and $200,100
• So why do people insure against small losses?
• Service contract on a washing machine
• Even a toaster!
• Medical insurance that covers routine checkups, filling cavities, and the like
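To see why small losses are a puzzle, compute how much more than the expected loss a risk-averse person would pay for full insurance. A minimal sketch, assuming logarithmic utility of wealth as a stand-in for "declining marginal utility of income" (a textbook choice, not something the slides specify); the function name and both wealth scenarios are hypothetical.

```python
import math


def risk_premium(wealth, loss, prob):
    """How much more than the expected loss a log-utility person would pay for full insurance."""
    expected_log = (1 - prob) * math.log(wealth) + prob * math.log(wealth - loss)
    certainty_equivalent = math.exp(expected_log)  # sure wealth with the same expected utility
    max_premium = wealth - certainty_equivalent
    return max_premium - prob * loss


if __name__ == "__main__":
    # Hypothetical: $1,100,000 of wealth, $1,000,000 of it in a house with a .001 chance of burning.
    print(round(risk_premium(1_100_000, 1_000_000, 0.001), 2))
    # Hypothetical: $200,000 of wealth, a $100 repair bill with a 10% chance.
    print(round(risk_premium(200_000, 100, 0.1), 4))
```

Under these assumptions the house owner would pay roughly $1,600 a year above the $1000 expected loss, while the washing-machine owner would pay only a fraction of a cent above the $10 expected loss. Declining marginal utility explains insuring the house but not the service contract.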

Is Moral Hazard a Bug or a Feature?
• Big company, many factories, they insure
• Why? They shouldn’t be risk averse, since they can spread the loss across their factories
• Consider the employee running one factory without insurance
• He can spend nothing and have a 3% chance of a fire
• Or spend $100,000 and have 1%, and make $100,000 less per year for the company
• Which is it in his interest to do?
• Insure the factory to transfer the cost to the insurance company
• Which then insists on a sprinkler system, makes other rules, and is more competent than the company at preventing the fire!

Put Incentive Where It Does the Most Good
• Insurance transfers loss from the owner to the insurance company
• Sometimes the owner is the one best able to prevent the loss
• In which case moral hazard is a cost of insurance, to be weighed against the risk-spreading gain
• Sometimes the insurance company is best able
• In which case “moral hazard” is the objective
• Sears knows more about getting their washing machines fixed than I do
• So I buy a service contract to transfer the decision to them
• Sometimes each party has precautions it is best at
• So coinsurance (say 50%) gives each an incentive to take those precautions that have a high payoff

Health Insurance
• If intended as risk spreading, there should be a large deductible
• So I pay for all minor things
• Giving me an incentive to keep costs down, since I am paying them
• But cover virtually 100% of rare, high-ticket items
• If my life is at stake, I want it
• But I don’t want to risk paying even 10% of a million-dollar procedure
• But maybe it’s intended to transfer to the insurance company the incentive to find me a good doctor and negotiate a good price
• Robin Hanson’s version:
• I decide how much my life is worth
• I buy that much life insurance, from a company that also makes and pays for my medical decisions
• And now has the right incentive to keep me alive
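The contrast between a large deductible and a percentage copayment shows up once a plan's out-of-pocket cost is written as a function of the year's medical bills. A minimal sketch; the function name, the $5,000 deductible, and the 10% coinsurance rate are hypothetical plan parameters chosen for illustration.

```python
def out_of_pocket(bill, deductible, coinsurance_pct=0):
    """What the patient pays on one year's bills under a simple deductible plan.

    The patient pays everything up to the deductible, then coinsurance_pct percent
    of the remainder.
    """
    below = min(bill, deductible)
    above = max(bill - deductible, 0)
    return below + above * coinsurance_pct / 100


if __name__ == "__main__":
    for bill in (300, 1_000_000):  # a routine checkup, a million-dollar procedure
        print(bill,
              out_of_pocket(bill, deductible=5_000),                 # large deductible, then 100% cover
              out_of_pocket(bill, deductible=0, coinsurance_pct=10)) # no deductible, 10% copay
```

With the deductible plan I pay the full $300 for the checkup, so I have a reason to shop around, but at most $5,000 for the procedure. The 10% plan is cheaper for the checkup ($30) but leaves me exposed to $100,000 on the procedure, which is the risk the slide says I don’t want.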

Adverse Selection
• The problem: the market for lemons
• Assumptions:
• Used car in good condition worth $10,000 to buyer, $8,000 to seller
• Lemon worth $5,000 to buyer, $4,000 to seller
• Half the cars are creampuffs, half lemons
• First try:
• Buyers figure the average used car is worth $7,500 to them and $6,000 to the seller, so they offer something in between
• What happens?
• What is the final result?
• How might you avoid this problem, which is due to asymmetric information?
• Make the information symmetric: inspect the car. Or …
• Transfer the risk to the party with the information: the seller insures the car
• What problems does the latter solution raise?
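The unraveling can be traced step by step using the slide's numbers. A minimal sketch, assuming buyers bid their full expected value for the cars still on offer (the top of the slide's "something in between" range; any bid below $8,000 leads to the same result) and that sellers withdraw whenever the bid is below their own valuation. The function name and the round-by-round procedure are illustrative, not the lecture's own model.

```python
from fractions import Fraction

# (value to buyer, value to seller) for each kind of car, from the slide's assumptions.
CARS = {"creampuff": (10_000, 8_000), "lemon": (5_000, 4_000)}
SHARE = {"creampuff": Fraction(1, 2), "lemon": Fraction(1, 2)}


def lemons_market(cars=CARS, share=SHARE, max_rounds=10):
    """Buyers bid the average buyer value of the cars still offered; owners whose own
    valuation exceeds the bid withdraw. Repeat until nothing changes.

    Returns (price, kinds of car still traded).
    """
    on_market = set(cars)
    price = None
    for _ in range(max_rounds):
        if not on_market:
            return None, set()
        weight = sum(share[k] for k in on_market)
        price = sum(share[k] * cars[k][0] for k in on_market) / weight
        staying = {k for k in on_market if cars[k][1] <= price}
        if staying == on_market:
            return price, on_market
        on_market = staying
    return price, on_market


if __name__ == "__main__":
    print(lemons_market())
```

At a bid of $7,500 the creampuff owners, who value their cars at $8,000, stop selling; buyers who realize that only lemons are left bid at most $5,000, and only lemons trade. Every creampuff sale that would have made both sides better off is lost.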

Plea Bargaining
• A student raised the following question:
• Suppose we include adverse selection in our analysis of plea bargaining
• What does the D.A. signal by offering a deal?
• What does the defendant signal by accepting?
• Which subset of defendants ends up going to trial?

Why Does Insurance Matter?
• Most of you won’t be working for insurance companies, or even negotiating contracts with them
• But the analysis of insurance will be important
• Almost any contract is in part insurance
• Do you pay salesmen by the month or by the sale?
• Is your house built for a fixed price, or cost-plus?
• Do you take the case for a fixed price, a contingency fee, or an hourly charge?