Sparse Kernel Machines

This text gives an overview of kernel methods, focusing on Support Vector Machines (SVM) and Relevance Vector Machines (RVM) in the context of supervised learning. It explains how these methods use kernel functions to work in high-dimensional feature spaces and how maximum margin classifiers mitigate overfitting. It also outlines applications and limitations of SVM and RVM, along with the principles of linear and nonlinear models for regression and classification.

Presentation Transcript

Christopher M. Bishop, Pattern Recognition and Machine Learning: Sparse Kernel Machines

Outline • Introduction to kernel methods • Support vector machines (SVM) • Relevance vector machines (RVM) • Applications • Conclusions

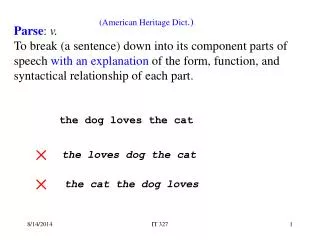

Supervised Learning • In machine learning, applications in which the training data comprise input vectors together with their corresponding target vectors are called supervised learning • Example training pairs (x, t): (1, 60, pass), (2, 53, fail), (3, 77, pass), (4, 34, fail), … • The learned function y(x) gives the output for an input x

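Classification [Figure: two classes (t = 1 and t = -1) in the (x1, x2) plane, separated by the decision boundary y = 0, with y > 0 on one side and y < 0 on the other]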

Regression [Figure: target t plotted against input x, with a new input x_new marked on the x axis]

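Linear Models • Linear models for regression and classification: $y(\mathbf{x}) = \mathbf{w}^\top \mathbf{x} + b$, where $\mathbf{w}$ is the model parameter and $\mathbf{x}$ is the input • If we apply feature extraction: $y(\mathbf{x}) = \mathbf{w}^\top \phi(\mathbf{x}) + b$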
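
A linear model with feature extraction can be sketched in a few lines. The polynomial feature map `phi` and the weight values below are illustrative assumptions, not taken from the slides; the point is only that the model stays linear in w while being nonlinear in x.

```python
def phi(x):
    """Hypothetical feature extraction: map a scalar x to (1, x, x^2)."""
    return [1.0, x, x * x]

def linear_model(w, features):
    """y = w . features, i.e. a model that is linear in the parameters w."""
    return sum(wi * fi for wi, fi in zip(w, features))

# Illustrative weights: y(x) = 1 - x^2, nonlinear in x but linear in w.
w = [1.0, 0.0, -1.0]
for x in (0.0, 1.0, 2.0):
    print(x, linear_model(w, phi(x)))
```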

Problems with Feature Space • Why feature extraction? Working in high dimensional feature spaces solves the problem of expressing complex functions • Problems: - there is a computational problem (working with very large vectors) - curse of dimensionality

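Kernel Methods (1) • Kernel function: an inner product in some feature space, $k(\mathbf{x}, \mathbf{x}') = \phi(\mathbf{x})^\top \phi(\mathbf{x}')$, which acts as a nonlinear similarity measure • Examples - polynomial: $k(\mathbf{x}, \mathbf{x}') = (\mathbf{x}^\top \mathbf{x}' + c)^M$ - Gaussian: $k(\mathbf{x}, \mathbf{x}') = \exp\left(-\|\mathbf{x} - \mathbf{x}'\|^2 / 2\sigma^2\right)$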
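
The two example kernels can be written down directly. This is a minimal sketch assuming the common textbook forms, (x·z + c)^M for the polynomial kernel and exp(-||x - z||² / 2σ²) for the Gaussian kernel; the parameter names `degree`, `c` and `sigma` are choices made here for illustration.

```python
import math

def polynomial_kernel(x, z, degree=2, c=1.0):
    """k(x, z) = (x . z + c) ** degree"""
    dot = sum(xi * zi for xi, zi in zip(x, z))
    return (dot + c) ** degree

def gaussian_kernel(x, z, sigma=1.0):
    """k(x, z) = exp(-||x - z||^2 / (2 * sigma^2))"""
    sq_dist = sum((xi - zi) ** 2 for xi, zi in zip(x, z))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))

x, z = [1.0, 2.0], [3.0, 0.5]
print(polynomial_kernel(x, z))  # similarity under the polynomial kernel
print(gaussian_kernel(x, z))    # similarity under the Gaussian kernel
print(gaussian_kernel(x, x))    # a point is maximally similar to itself
```

Both functions are symmetric in their arguments, and the Gaussian kernel decays toward zero as the two points move apart, which is what makes it usable as a similarity measure.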

Kernel Methods (2) • Many linear models can be reformulated using a “dual representation” in which the kernel functions arise naturally • The dual form requires only inner products between the input data
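
As one worked instance of a dual representation, consider regularized least-squares regression (an assumed example; the slide does not name a specific model). Setting the gradient of the error to zero shows that w is a linear combination of the feature vectors, after which the data enter only through the kernel:

```latex
\begin{align}
J(\mathbf{w}) &= \tfrac{1}{2}\sum_{n=1}^{N}\bigl(\mathbf{w}^\top\phi(\mathbf{x}_n) - t_n\bigr)^2
               + \tfrac{\lambda}{2}\,\mathbf{w}^\top\mathbf{w} \\
\nabla J = 0 \;\Rightarrow\; \mathbf{w} &= \sum_{n=1}^{N} a_n\,\phi(\mathbf{x}_n),
  \qquad a_n = -\tfrac{1}{\lambda}\bigl(\mathbf{w}^\top\phi(\mathbf{x}_n) - t_n\bigr) \\
\mathbf{a} &= (\mathbf{K} + \lambda\mathbf{I})^{-1}\mathbf{t},
  \qquad K_{nm} = k(\mathbf{x}_n, \mathbf{x}_m) = \phi(\mathbf{x}_n)^\top\phi(\mathbf{x}_m) \\
y(\mathbf{x}) &= \mathbf{w}^\top\phi(\mathbf{x}) = \sum_{n=1}^{N} a_n\,k(\mathbf{x}_n, \mathbf{x})
\end{align}
```

The feature map φ never has to be evaluated on its own: both the solution for a and the prediction y(x) depend on the data only through kernel values.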

Kernel Methods (3) • We can benefit from the kernel trick: - choosing a kernel function implicitly chooses φ, so there is no need to specify which features are being used - we save computation by not explicitly mapping the data to the feature space, and instead evaluating the inner product directly in the data space
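
The kernel trick can be checked numerically on a small case. The sketch below assumes the quadratic kernel k(x, z) = (x·z)² on 2-D inputs, for which one valid explicit feature map is φ(x) = (x1², √2·x1·x2, x2²); the kernel and the function names are illustrative choices, not something specified in the slides.

```python
import math

def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def phi(x):
    """Explicit feature map for the 2-D kernel k(x, z) = (x . z)^2."""
    x1, x2 = x
    return [x1 * x1, math.sqrt(2.0) * x1 * x2, x2 * x2]

def quadratic_kernel(x, z):
    """Same inner product, computed in the data space without calling phi."""
    return dot(x, z) ** 2

x, z = [1.0, 2.0], [3.0, -1.0]
explicit = dot(phi(x), phi(z))     # map to feature space, then take the inner product
implicit = quadratic_kernel(x, z)  # kernel trick: stay in the 2-D data space
print(explicit, implicit)
```

The two values agree up to floating-point rounding. For higher polynomial degrees or for the Gaussian kernel, the explicit feature space becomes very large or infinite-dimensional, while the kernel evaluation stays cheap.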

Kernel Methods (4) • Kernel methods exploit information about the inner products between data items • We can construct kernels indirectly by choosing a feature space mapping φ, or directly choose a valid kernel function • If a bad kernel function is chosen, it will map to a space with many irrelevant features, so we need some prior knowledge of the target

Kernel Methods (5) • Two basic modules for kernel methods: - a general-purpose learning model - a problem-specific kernel function

Kernel Methods (6) • Limitation: the kernel function k(xn, xm) must be evaluated for all pairs xn and xm of training points during training, and predictions for new data points involve every training point • A sparse kernel machine makes predictions using only a subset of the training data points

Outline • Introduction to kernel methods • Support vector machines (SVM) • Relevance vector machines (RVM) • Applications • Conclusions

Support Vector Machines (1) • Support Vector Machines are a system for efficiently training linear machines in kernel-induced feature spaces, while respecting the insights of generalization theory and exploiting optimization theory • Generalization theory describes how to control learning machines to prevent them from overfitting

Support Vector Machines (2) • To avoid overfitting, SVMs modify the error function to a “regularized form” $E(\mathbf{w}) = E_D(\mathbf{w}) + \lambda E_W(\mathbf{w})$, where the hyperparameter λ balances the trade-off between the data error $E_D$ and the regularization term $E_W$ • The aim of $E_W$ is to limit the estimated functions to smooth functions • As a side effect, SVMs obtain a sparse model
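
A regularized error of the form "data term plus λ times a penalty on w" can be sketched as follows. The specific choices here, a sum-of-squares data term and a quadratic penalty ½||w||², are assumptions made for illustration; the slide does not say which forms are used, and the SVM itself uses a different data term.

```python
def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def regularized_error(w, features, targets, lam):
    """E(w) = E_D(w) + lam * E_W(w), with assumed forms for E_D and E_W."""
    e_data = 0.5 * sum((dot(w, f) - t) ** 2 for f, t in zip(features, targets))
    e_weights = 0.5 * dot(w, w)  # penalizes large weights, favoring smooth functions
    return e_data + lam * e_weights

features = [[1.0, 0.0], [0.0, 1.0]]
targets = [1.0, 1.0]
w = [1.0, 0.0]
print(regularized_error(w, features, targets, lam=0.0))  # data term only
print(regularized_error(w, features, targets, lam=2.0))  # with the penalty added
```

Raising `lam` shifts the minimum toward smaller weights at the cost of a worse fit to the training data, which is the trade-off the hyperparameter controls.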

Support Vector Machines (3) Fig. 1 Architecture of SVM

SVM for Classification (1) • The mechanism to prevent overfitting in classification is “maximum margin classifiers” • SVM is fundamentally a two-class classifier

Maximum Margin Classifiers (1) • The aim of classification is to find a (D-1)-dimensional hyperplane that separates the data in a D-dimensional space • 2D example:

Maximum Margin Classifiers (2) [Figure: decision boundary with the margin and the support vectors on either side marked]

Maximum Margin Classifiers (3) [Figure: a boundary with a small margin compared with a boundary with a large margin]

Maximum Margin Classifiers (4) • Intuitively it is a “robust” solution - If we have made a small error in the location of the boundary, the maximum margin gives the least chance of causing a misclassification • The concept of maximum margin is usually justified using Vapnik’s statistical learning theory • Empirically it works well
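
The margin of a candidate boundary can be computed as the smallest distance from any training point to the hyperplane w·x + b = 0. The sketch below compares two hand-picked hyperplanes on a toy data set; the data and both weight vectors are invented for illustration, and no optimization is performed.

```python
import math

def margin(w, b, points, targets):
    """Smallest signed distance t_n * (w . x_n + b) / ||w|| over the data set."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return min(
        t * (sum(wi * xi for wi, xi in zip(w, x)) + b) / norm
        for x, t in zip(points, targets)
    )

points = [[1.0, 1.0], [2.0, 2.0], [-1.0, -1.0], [-2.0, -1.0]]
targets = [1, 1, -1, -1]

vertical = margin([1.0, 0.0], 0.0, points, targets)  # boundary x1 = 0
diagonal = margin([1.0, 1.0], 0.0, points, targets)  # boundary x1 + x2 = 0
print(vertical, diagonal)
```

Both boundaries classify every point correctly (the margin is positive), but the diagonal one leaves more room before a small shift of the boundary would misclassify a point. A maximum margin classifier searches over w and b for the boundary that makes this quantity as large as possible.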

SVM for Classification (2) • After the optimization process, we obtain the prediction model $y(\mathbf{x}) = \sum_{n=1}^{N} a_n t_n k(\mathbf{x}, \mathbf{x}_n) + b$, where $(\mathbf{x}_n, t_n)$ are the N training data • $a_n$ is zero for every point except the support vectors, so the model is sparse
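
Sparsity at prediction time can be illustrated with the kernel expansion y(x) = Σ a_n t_n k(x, x_n) + b. In the sketch below the coefficients `a` and the bias `b` are hypothetical values chosen by hand, not the result of SVM training; the point is only that training points with a_n = 0 never enter the sum.

```python
import math

def gaussian_kernel(x, z, sigma=1.0):
    sq_dist = sum((xi - zi) ** 2 for xi, zi in zip(x, z))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))

def svm_predict(x, train_x, train_t, a, b, kernel):
    """y(x) = sum_n a_n * t_n * k(x, x_n) + b, skipping points with a_n == 0."""
    return b + sum(
        a_n * t_n * kernel(x, x_n)
        for x_n, t_n, a_n in zip(train_x, train_t, a)
        if a_n != 0.0
    )

train_x = [[0.0, 0.0], [1.0, 1.0], [3.0, 3.0], [4.0, 4.0]]
train_t = [-1, -1, 1, 1]
a = [0.0, 1.0, 1.0, 0.0]  # hypothetical coefficients: only two support vectors
b = 0.0                   # hypothetical bias

support = [n for n, a_n in enumerate(a) if a_n != 0.0]
print(support)
print(svm_predict([0.5, 0.5], train_x, train_t, a, b, gaussian_kernel))
print(svm_predict([3.5, 3.5], train_x, train_t, a, b, gaussian_kernel))
```

A new input is classified by the sign of y(x). Here only two of the four training points are needed, so the other two could be discarded after training.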

SVM for Classification (3) Fig. 2 Data from two classes in two dimensions, showing contours of constant y(x) obtained from an SVM with a Gaussian kernel function

SVM for Classification (4) • For overlapping class distributions, SVMs allow some of the training points to be misclassified, at the cost of a penalty (the soft margin)
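
One common way to express the soft margin penalty, assumed here since the slide does not give the formula, is through slack variables ξ_n = max(0, 1 - t_n·y(x_n)) and the objective C·Σ ξ_n + ½||w||². The parameter name `C` and the sample outputs are illustrative.

```python
def slack(t, y):
    """Slack for one point: 0 if it is on the correct side of the margin,
    growing linearly as the point moves into the margin or across the boundary."""
    return max(0.0, 1.0 - t * y)

def soft_margin_objective(w, targets, outputs, C):
    """C * (sum of slacks) + 0.5 * ||w||^2."""
    penalty = C * sum(slack(t, y) for t, y in zip(targets, outputs))
    return penalty + 0.5 * sum(wi * wi for wi in w)

targets = [1, 1, -1]
outputs = [2.0, 0.5, 0.5]  # hypothetical model outputs y(x_n)
print([slack(t, y) for t, y in zip(targets, outputs)])
print(soft_margin_objective([1.0, 1.0], targets, outputs, C=2.0))
```

The first point lies beyond the margin and costs nothing, the second is correctly classified but inside the margin, and the third is misclassified and has slack greater than 1. A larger `C` penalizes violations more heavily and pushes the solution back toward a hard margin.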

SVM for Classification (5) • For multiclass problems, there are several methods that combine multiple two-class SVMs - one versus the rest - one versus one (requires more training time) Fig. 3 Problems in multiclass classification using multiple SVMs
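
The two combination schemes differ in how many binary problems they create. The helper names below are hypothetical, and taking the class with the largest output is only one common way to resolve one-versus-the-rest predictions.

```python
from itertools import combinations

def one_versus_rest_tasks(classes):
    """One binary problem per class: that class against all the others."""
    return [(c, [o for o in classes if o != c]) for c in classes]

def one_versus_one_tasks(classes):
    """One binary problem per unordered pair of classes."""
    return list(combinations(classes, 2))

def one_versus_rest_predict(scores):
    """Pick the class whose binary classifier gives the largest output."""
    return max(scores, key=scores.get)

classes = ["a", "b", "c", "d"]
print(len(one_versus_rest_tasks(classes)))  # K binary classifiers
print(len(one_versus_one_tasks(classes)))   # K(K-1)/2 binary classifiers
print(one_versus_rest_predict({"a": -0.3, "b": 0.8, "c": 0.1, "d": -1.2}))
```

With K classes, one versus one needs K(K-1)/2 classifiers instead of K, which is where the extra training time comes from. In both schemes the separately trained binary outputs are not calibrated against each other, so some regions of input space can receive ambiguous labels.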

SVM for Regression (1) • For regression problems, the mechanism to prevent overfitting is the “ε-insensitive error function”, which replaces the quadratic error function [Figure: quadratic error function compared with the ε-insensitive error function]

SVM for Regression (2) • Inside the ε-tube: no error • Outside the ε-tube: error = |y(x) - t| - ε Fig. 4 ε-tube
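
The ε-insensitive error is zero inside the tube and |y(x) - t| - ε outside it. A minimal sketch, with the quadratic error alongside for comparison (the function names and sample values are illustrative):

```python
def epsilon_insensitive(y, t, eps):
    """Zero inside the eps-tube, linear in the excess |y - t| - eps outside it."""
    return max(0.0, abs(y - t) - eps)

def quadratic(y, t):
    """Ordinary squared error, which is nonzero for any mismatch."""
    return (y - t) ** 2

t, eps = 1.0, 0.1
for y in (1.0, 1.05, 1.5, 0.2):
    print(y, epsilon_insensitive(y, t, eps), quadratic(y, t))
```

Predictions that fall within ε of the target contribute no error at all, so the corresponding training points do not influence the solution. Only points on or outside the tube end up as support vectors.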

SVM for Regression (3) • After the optimization process, we obtain the prediction model as a kernel expansion over the training points • The coefficients an are zero for every point except the support vectors, so the model is sparse

SVM for Regression (4) Fig. 5 Regression results. Support vectors lie on the boundary of the tube or outside the tube

Disadvantages • SVMs are not sparse enough, since the number of support vectors required typically grows linearly with the size of the training set • Predictions are not probabilistic • The error/margin trade-off parameters must be estimated by cross-validation, which is computationally wasteful • Kernel functions are limited • Extension to multiclass classification is problematic

Outline • Introduction to kernel methods • Support vector machines (SVM) • Relevance vector machines (RVM) • Applications • Conclusions

Relevance Vector Machines (1) • The relevance vector machine (RVM) is a Bayesian sparse kernel technique that shares many of the characteristics of the SVM whilst avoiding its principal limitations • RVMs are based on a Bayesian formulation and provide posterior probabilistic outputs, as well as much sparser solutions than SVMs

Relevance Vector Machines (2) • RVMs mirror the structure of the SVM and use a Bayesian treatment to remove its limitations • The kernel functions are simply treated as basis functions, rather than as dot products in some space

Bayesian Inference • Bayesian inference allows one to model uncertainty about the world and outcomes of interest by combining common-sense knowledge and observational evidence.

Relevance Vector Machines (3) • In the Bayesian framework, we use a prior distribution over w to avoid overfitting: $p(\mathbf{w} \mid \alpha) = \mathcal{N}(\mathbf{w} \mid \mathbf{0}, \alpha^{-1}\mathbf{I})$, where α is a hyperparameter that controls the model parameter w

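Relevance Vector Machines (4) • Goal: find the most probable α* and β* in order to compute the predictive distribution over $t_{\text{new}}$ for a new input $\mathbf{x}_{\text{new}}$, i.e. $p(t_{\text{new}} \mid \mathbf{x}_{\text{new}}, \mathbf{X}, \mathbf{t}, \alpha^*, \beta^*)$ • Maximize the likelihood function $p(\mathbf{t} \mid \mathbf{X}, \alpha, \beta)$ to obtain α* and β*, where X and t are the training data and their target values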

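Relevance Vector Machines (5) • RVMs use “automatic relevance determination” to achieve sparsity: $p(\mathbf{w} \mid \boldsymbol{\alpha}) = \prod_{m} \mathcal{N}(w_m \mid 0, \alpha_m^{-1})$, where $\alpha_m$ represents the precision of $w_m$ • In the procedure of finding $\alpha_m^*$, some $\alpha_m$ go to infinity, which drives the corresponding $w_m$ to zero • The training points whose weights remain nonzero are the relevance vectors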
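
For the regression case, the procedure for finding the hyperparameters can be written out as follows. This follows the standard treatment in Bishop's chapter; the symbols μ and Σ for the posterior mean and covariance, Φ for the design matrix, and γ_m are notation introduced here, and β is the noise precision.

```latex
\begin{align}
p(\mathbf{w} \mid \boldsymbol{\alpha}) &= \prod_{m} \mathcal{N}(w_m \mid 0, \alpha_m^{-1}) \\
p(\mathbf{w} \mid \mathbf{t}, \mathbf{X}, \boldsymbol{\alpha}, \beta)
  &= \mathcal{N}(\mathbf{w} \mid \boldsymbol{\mu}, \boldsymbol{\Sigma}), \qquad
  \boldsymbol{\mu} = \beta\,\boldsymbol{\Sigma}\boldsymbol{\Phi}^\top\mathbf{t}, \qquad
  \boldsymbol{\Sigma} = \bigl(\mathbf{A} + \beta\,\boldsymbol{\Phi}^\top\boldsymbol{\Phi}\bigr)^{-1},
  \quad \mathbf{A} = \mathrm{diag}(\alpha_m) \\
\gamma_m &= 1 - \alpha_m \Sigma_{mm} \\
\alpha_m^{\text{new}} &= \frac{\gamma_m}{\mu_m^2}, \qquad
(\beta^{\text{new}})^{-1} = \frac{\lVert \mathbf{t} - \boldsymbol{\Phi}\boldsymbol{\mu} \rVert^2}{N - \sum_m \gamma_m} \\
p(t_{\text{new}} \mid \mathbf{x}_{\text{new}}, \mathbf{X}, \mathbf{t}, \boldsymbol{\alpha}^*, \beta^*)
  &= \mathcal{N}\bigl(t_{\text{new}} \mid \boldsymbol{\mu}^\top\phi(\mathbf{x}_{\text{new}}),\, \sigma^2(\mathbf{x}_{\text{new}})\bigr), \qquad
\sigma^2(\mathbf{x}) = (\beta^*)^{-1} + \phi(\mathbf{x})^\top\boldsymbol{\Sigma}\,\phi(\mathbf{x})
\end{align}
```

The updates for α and β are iterated, recomputing μ and Σ each time, until convergence. A weight whose α_m grows without bound has a posterior concentrated at zero, so its basis function drops out of the model.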

Comparisons - Regression [Figure: SVM regression compared with RVM regression; the RVM panel shows one standard deviation of the predictive distribution]

Comparisons - Classification [Figure: SVM classification compared with RVM classification]

Comparisons • RVMs are much sparser and make probabilistic predictions • RVM gives better generalization in regression • SVM gives better generalization in classification • RVM training is computationally demanding

Outline • Introduction to kernel methods • Support vector machines (SVM) • Relevance vector machines (RVM) • Applications • Conclusions

Applications (1) • SVM for face detection

Applications (2) Marti Hearst, “Support Vector Machines”, 1998

Applications (3) • In feature-matching-based object tracking, SVMs are used to detect false feature matches Weiyu Zhu et al., “Tracking of Object with SVM Regression”, 2001

Applications (4) • Recovering 3D human poses with RVM A. Agarwal and B. Triggs, “3D Human Pose from Silhouettes by Relevance Vector Regression”, 2004

Outline • Introduction to kernel methods • Support vector machines (SVM) • Relevance vector machines (RVM) • Applications • Conclusions

Conclusions • The SVM is a learning machine based on kernel methods and generalization theory that can perform binary classification and real-valued function approximation • The RVM has the same model form as the SVM but provides probabilistic predictions and sparser solutions