Information Theory



Presentation Transcript

Information Theory The Work of Claude Shannon (1916-2001) and others

Introduction to Information Theory • Lectures taken from: • John R. Pierce: An Introduction to Information Theory: Symbols, Signals and Noise, New York: Dover Publications, 1980 [second edition], 2 copies on reserve in ISAT Library • Read Chapters 2 - 5

Information Theory • Theories, physical or mathematical, are generalizations or laws that explain a broad range of phenomena • Mathematical theories do not depend on the nature of objects. [e.g. Arithmetic: it applies to any objects.] • Mathematical theorists make assumptions and definitions, then draw out their implications in proofs and theorems, which may in turn call the assumptions and definitions into question

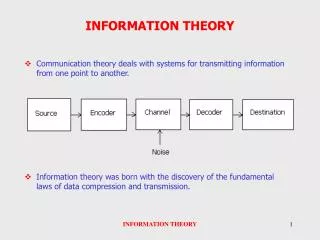

Information Theory • Communication Theory [the term Shannon used] tells how many bits of information can be sent per second over perfect and imperfect communications channels, in terms of abstract descriptions of the properties of these channels

Information Theory • Communication theory tells how to measure the rate at which a message source generates information • Communication theory tells how to encode messages efficiently for transmission over particular channels and tells us when we can avoid errors

Information Theory • The origins of information theory were in telegraphy and electrical communications: Thus it uses “discrete” mathematical theory [statistics] as well as “continuous” mathematical theory [wave equations and Fourier Analysis] • The term “Entropy” in information theory was adopted by analogy with the term used in statistical mechanics and physics.

Entropy in Information Theory • In physics, if a process is reversible, the entropy is constant. Energy can be converted from thermal to mechanical and back. • Irreversible processes result in an increase in entropy • Thus, entropy is also a measure of “order”: increase in entropy = decrease of order

Entropy • By analogy, if information is “disorderly”, there is less knowledge: disorder is equivalent to unpredictability [in physics, a lack of knowledge about the positions and velocities of particles]

Entropy • In which case does a message of a given length convey the most information? • A. I can only send one of 10 messages. • B. I can only send one of 1,000,000 messages. • In which state is there “more entropy”?
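The comparison between cases A and B can be worked through numerically. The sketch below assumes every message in each case is equally likely, which the slide does not state; under that assumption the information conveyed by one message is the base-2 logarithm of the number of possible messages. The function name is illustrative only.

```python
import math

def bits_for_equally_likely(num_messages: int) -> float:
    """Bits conveyed by picking one of num_messages equally likely messages."""
    return math.log2(num_messages)

# Case A: one of 10 messages. Case B: one of 1,000,000 messages.
case_a = bits_for_equally_likely(10)
case_b = bits_for_equally_likely(1_000_000)

print(f"A: {case_a:.2f} bits, B: {case_b:.2f} bits")
```

Under the equal-likelihood assumption, case B removes more uncertainty per message, so it is the state with more entropy.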

Entropy • Entropy = amount of information conveyed by a message from a source • “Information” in popular use means the amount of knowledge a message conveys; its “meaning” • “Information” in communication theory refers to the amount of uncertainty in a system that a message will get rid of.

Symbols and Signals • It makes a difference HOW you translate a message into electrical signals. • Morse/Vail instinctively knew that shorter codes for frequently used letters would speed up the transmission of messages • “Morse Code” could have been 15% faster by better research on letter frequencies.
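The benefit of giving shorter codes to frequent symbols can be illustrated with a small calculation. This is a sketch with a made-up four-symbol source: the probabilities and both code tables are hypothetical, and the variable-length code is a binary prefix code, not Morse code itself.

```python
# Hypothetical source: the probabilities below are invented for illustration.
probabilities = {"E": 0.5, "T": 0.25, "A": 0.125, "Q": 0.125}

# Every symbol gets the same length.
fixed_code = {"E": "00", "T": "01", "A": "10", "Q": "11"}

# Frequent symbols get shorter codewords (no codeword is a prefix of another).
variable_code = {"E": "0", "T": "10", "A": "110", "Q": "111"}

def average_length(code: dict, probs: dict) -> float:
    """Expected number of code digits per symbol."""
    return sum(probs[symbol] * len(code[symbol]) for symbol in probs)

fixed_avg = average_length(fixed_code, probabilities)
variable_avg = average_length(variable_code, probabilities)

print(fixed_avg, variable_avg)
```

For this invented source the variable-length code needs fewer digits per symbol on average, which is the same effect Morse and Vail exploited.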

Symbols and Signals • [Telegraphy] Discrete Mathematics [statistics] used where current shifts represent on/off choices or combinations of on/off choices. • [Telephony]. Continuous Mathematics [sine functions and Fourier Analysis] is used where complex wave forms encode information in terms of changing frequencies and amplitudes.

Speed of Transmission: Line Speed • A given circuit has a limit to the speed of successive current values that can be sent before individual symbols [current changes] interfere with one another and cannot be distinguished at the receiving end [“inter-symbol interference”]. This is the “Line Speed” • Different materials [coaxial cable, wire, optical fiber] have different line speeds, represented by K in the equations.

Transmission Speed • If more “symbols” can be used [different amplitudes or different frequencies], more than one message can be sent simultaneously, and thus transmission speed can be increased above line speed by effective coding, using more symbols: $W = K \log_2 m$ • If messages are composed of 2 “letters” and we send M messages simultaneously, then we need $2^M$ different current values to represent the combinations of M messages using two letters: $W = K \log_2 (2^M) = KM$
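The relation between line speed K, number of symbols m, and transmission speed W can be checked with a few lines of code. This is a minimal sketch: the function names and the line speed of 100 are illustrative assumptions, and the second function uses 2^M current values for M simultaneous two-letter messages.

```python
import math

def transmission_speed(line_speed: float, num_symbols: int) -> float:
    """W = K * log2(m) for line speed K and m distinguishable symbols."""
    return line_speed * math.log2(num_symbols)

def speed_for_simultaneous_messages(line_speed: float, num_messages: int) -> float:
    """M two-letter messages need 2**M current values, so W = K * M."""
    return transmission_speed(line_speed, 2 ** num_messages)

# With two symbols, the transmission speed equals the line speed.
print(transmission_speed(100, 2))

# Three simultaneous two-letter messages triple the transmission speed.
print(speed_for_simultaneous_messages(100, 3))
```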

Nyquist • Thus the speed of transmission, W, is proportional to the line speed [which is related to the number of successive current values per second you can send on the channel] AND to the number of different messages you can send simultaneously [which depends on how & what you code].

Transmission Speed • Attenuation and noise interference may make certain values unusable for coding.

Telegraphy/Telephony/Digital • In Telephony, messages are composed of a continuously varying wave form, which is a direct translation of a pressure wave into an electromagnetic wave. • Telegraphy codes could be sent simultaneously with voice, if we used frequencies [not amplitudes] and selected ones that were not confused with voice frequencies. • Fourier Analysis enables us to “separate out” the frequencies at the other end.

Fourier Analysis • If the transmission characteristics of a channel do not change with time, and its response to a sum of inputs is the sum of its responses to each input, it is a linear [time-invariant] circuit. • Linear Circuits may have attenuation [amplitude changes] or delay [phase shifts], but they do not have period/frequency changes. • Fourier showed that any complex wave form [quantity varying with time] could be expressed as a sum of sine waves of different frequencies. • Thus, a signal containing a combination of frequencies [some representing codes of dots and dashes, and some representing the frequencies of voice] can be de-composed at the receiver and decoded. [draw picture]
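The idea of de-composing a combined signal into its frequencies can be sketched with a direct discrete Fourier transform. The example below is illustrative only: the two tone frequencies (5 and 12 cycles per second), their amplitudes, the phase shift, and the detection threshold are assumptions chosen for the demonstration, and the transform is a plain textbook sum rather than an optimized FFT.

```python
import cmath
import math

def dft_magnitudes(samples):
    """Magnitude of each frequency bin from 0 up to half the sample count."""
    n = len(samples)
    magnitudes = []
    for k in range(n // 2 + 1):
        total = sum(
            samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)
        )
        magnitudes.append(abs(total) / n)
    return magnitudes

# One second of signal sampled 64 times, so bin k corresponds to k cycles/second.
sample_rate = 64
tone_a, tone_b = 5, 12  # hypothetical "code" and "voice" frequencies

signal = [
    math.sin(2 * math.pi * tone_a * t / sample_rate)
    + 0.5 * math.sin(2 * math.pi * tone_b * t / sample_rate + 0.7)
    for t in range(sample_rate)
]

magnitudes = dft_magnitudes(signal)

# Bins whose magnitude stands clearly above numerical noise.
present = [k for k, m in enumerate(magnitudes) if m > 0.1]
print(present)
```

The phase shift on the second tone changes the shape of the combined wave but not which frequencies are detected, which matches the point that linear circuits alter amplitude and phase but not frequency.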

Fourier Analysis • In digital communications, we “sample” the continuously varying wave and code each sample into binary digits representing the value of the wave at time t. We then send different frequencies to represent simultaneous messages of samples.
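Sampling and coding into binary digits can be sketched as follows. This is a minimal illustration under stated assumptions: the wave is assumed to stay within the range -1 to 1, and the sample rate of 8 per second and the 3 bits per sample are arbitrary choices, not values from the lecture.

```python
import math

def sample_and_encode(wave, sample_rate: int, duration: float, bits: int):
    """Sample wave(t) at regular intervals and code each value as a bit string.

    Assumes wave(t) stays within [-1, 1].
    """
    levels = 2 ** bits
    codes = []
    for i in range(int(sample_rate * duration)):
        t = i / sample_rate
        value = wave(t)
        # Map [-1, 1] onto the integer levels 0 .. levels - 1.
        level = min(levels - 1, int((value + 1) / 2 * levels))
        codes.append(format(level, f"0{bits}b"))
    return codes

# One cycle of a 1 Hz sine wave, 8 samples, 3 bits per sample.
codes = sample_and_encode(
    lambda t: math.sin(2 * math.pi * t), sample_rate=8, duration=1, bits=3
)
print(" ".join(codes))
```

More bits per sample give a finer approximation of the wave at the cost of more digits to transmit.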

Digital Communications • 001100101011100001101010100…. • This stream represents the values of a sound wave at intervals of 1/x seconds • 01011110000011110101011111010… • This stream represents numerical data in a data base • 00110010100010000110101010100… • This stream represents coded letters

Digital Communications • I represent the three messages simultaneously by a range of frequencies: • Stream 1: 0 0 1… • Stream 2: 0 1 0… • Stream 3: 0 0 1… • Taking one bit from each stream at each time step: “000” = f1, “010” = f2, “101” = f3 • How many frequencies do I need? $2^M$

Digital Communications • The resulting signal containing three simultaneous messages is a wave form changing continuously across these 8 frequencies f1, f2, f3, … • With Fourier analysis I can tell at any time what the three different streams are doing. • We know that the speed of transmission will vary with this “bandwidth”.
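The mapping from three simultaneous bit streams to one of 8 frequencies can be sketched in code. The streams below begin with the bits 001, 010 and 001, and the scheme of using the combined bits as a binary number to pick a frequency index is an assumption made for this illustration; the slides only label the frequencies in the order they are used.

```python
def frequency_indices(streams):
    """Take one bit from each stream per time step and combine them into an index."""
    indices = []
    for bits in zip(*streams):
        indices.append(int("".join(bits), 2))
    return indices

# First three bits of each of the three simultaneous messages.
streams = ["001", "010", "001"]

# M streams of two-valued symbols need 2**M distinct frequencies.
num_frequencies = 2 ** len(streams)

print(num_frequencies)
print(frequency_indices(streams))
```

At each time step the combinations are “000”, “010” and “101”, so three of the 8 available frequencies are used in turn.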

Digital Communications • Input: messages coded by several frequencies • Channel Distortion: Signals of different frequencies undergo different amplitude and phase shifts during transmission. • Output: same frequencies, but with different phases and amplitudes, thus wave has different shape. Fourier analysis can tell you what frequencies were sent, and thus what the three messages were. • In “distortionless circuits” shape of input is the same as the shape of the output.

Hartley • Given a “random selection of symbols” from a given set of symbols [a message source] • The “Information” in a message, H, is proportional to the bandwidth [allowable values] x “time of transmission”: $H = n \log s$ • n = # of symbols selected; s = # of different symbols possible [$2^M$ in the previous example]; log s = # of independent choices sent simultaneously [i.e. proportional to the speed of transmission]
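Hartley's measure can be computed directly. The sketch below fixes the logarithm at base 2 so that H comes out in bits, which is an assumption; Hartley's formula leaves the base open. The function name and the numbers are illustrative.

```python
import math

def hartley_information_bits(n: int, s: int) -> float:
    """H = n * log2(s): n symbols selected from s possible symbols."""
    return n * math.log2(s)

# 10 symbols, each chosen from 8 possibilities (8 = 2**3, three simultaneous streams).
print(hartley_information_bits(10, 8))

# Information adds up: 4 symbols then 6 symbols carry the same as 10 symbols.
print(hartley_information_bits(4, 8) + hartley_information_bits(6, 8))
```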

And now, time for something completely different! • Claude Shannon: encoding simultaneous messages from a known “ensemble” [i.e. bandwidth], so as to transmit them accurately and swiftly in the presence of noise. • Norbert Wiener: research on extracting signals of an “ensemble” from noise of a known type, to predict future values of the “ensemble” [tracking enemy planes].

Other names • Dennis Gabor’s theory of communication did not include noise • W.G. Tuller explored the theoretical limits on the rate of transmission of information

Mathematics of Information • Deterministic v. stochastic models. • How do we take advantage of the language [the probabilities that a message will contain certain things] to further compress and encode messages? • 0-order approximation of language: all 26 letters have equal probabilities • 1st-order approximation of English: we assign appropriate probabilities to letters
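The difference between the two approximations can be put in numbers. The sketch below compares bits per letter when all 26 letters are equally likely with bits per letter when letters get the relative frequencies found in a text. The sample sentence is a made-up stand-in, so its letter frequencies are not real English statistics, and the entropy formula used is the standard sum of -p log2 p over the letters.

```python
import math
from collections import Counter

def zero_order_bits(alphabet_size: int = 26) -> float:
    """Bits per letter when every letter is equally likely."""
    return math.log2(alphabet_size)

def first_order_bits(text: str) -> float:
    """Bits per letter using the letter frequencies observed in text."""
    letters = [c for c in text.lower() if "a" <= c <= "z"]
    counts = Counter(letters)
    total = len(letters)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())

# Hypothetical sample; a real estimate would need a large body of English text.
sample = "the quick brown fox jumps over the lazy dog and then the dog sleeps"

print(f"0-order: {zero_order_bits():.2f} bits per letter")
print(f"1st-order estimate: {first_order_bits(sample):.2f} bits per letter")
```

Because the letters are not equally frequent, the first-order figure is lower, which is the room a code can use to compress messages.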