Super computers Parallel Processing

Super computers Parallel Processing. By Lecturer: Aisha Dawood. Text book. "INTRODUCTION TO PARALLEL COMPUTING", by Ananth Grama , Anshul Gupta, George Karypis , and Vipin Kumar. Sequential computing .

Super computers Parallel Processing

E N D

Presentation Transcript

Super computersParallel Processing By Lecturer: Aisha Dawood

Text book • "INTRODUCTION TO PARALLEL COMPUTING", • by • AnanthGrama, Anshul Gupta, George Karypis, and VipinKumar.

Sequential computing • Sequential computer consists of a memory connected to a processor via a data path. • Bottleneck of the computational processing rate of a computer system. • This overhead led to parallelism. • Implicit parallelism and explicit parallelism.

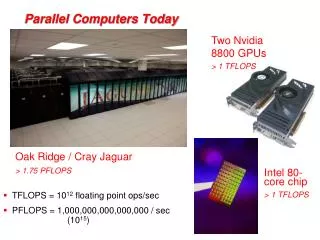

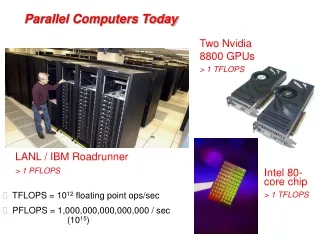

Motivating Parallelism • The computational power – Transistors to FLOPS. • Memory/Disk speed ( DRAM Latency – Memory Bandwidth – Caches). • Data communication ( Networks – undesirable Centralized approaches).

Parallel and Distributed Computing • Parallel computing (processing): • the use of two or more processors (computers), usually within a single system, working simultaneously to solve a single problem. • Distributed computing (processing): • any computing that involves multiple computers remote from each other that each have a role in a computation problem or information processing. • Parallel programming: • the human process of developing programs that express what computations should be executed in parallel.

Pipelining and Superscalar execution • Processors relies on pipelining to improve execution rates. • Pipelining by overlapping various stages in instruction execution (Fetch , Decode, Operand Fetch, Execute, Store). • i.e. (The assembly of a car taking 100 time units can be broken into 10 pipelines).

Pipelining and Superscalar execution • To increase the speed of a pipeline we would break the tasks into smaller subtasks thus lengthen the pipeline and increasing the overlap of instructions execution. • This enables faster clock rates since tasks are now smaller. • E.g. Pentium 4 which operates at 2.0 GHz has 20 stage pipelines. • The speed of a single pipeline limited by the largest atomic task in the pipeline. • Long instruction pipeline need effective techniques for predicting branches. • The penalty of a misprediction increases as the pipeline become deeper since a large number of instructions need to be flushed.

Superscalar execution • The ability of a processor to issue multiple instructions in the same cycle is referred to as Superscalar execution. • Architectures allow two issues per clock cycle is referred to as two-way superscalar.

Superscalar execution • Example 2.1

Superscalar execution • Load R1, @1000 and Load R2, @1008 are mutually independent. • Add R1, R2 and Store R1 , @2000 are dependent. • True data dependency : The result of instruction may be required for subsequent instructions, e.g. Load R1, @1000 and add R1, @1004

Superscalar execution • Dependencies must be resolved before issue of instructions, this has two implications: • Since the resolution is done at a runtime it must bee supported in hardware, the complexity can be high. • The parallelism in the instruction level is limited and is a function of coding technique. • You can extract more parallelism by reordering instructions Example 2.1 (i).

Superscalar execution • Consider two scheduling of two floating point operations on a dual issue machine with a single floating point unit the instructions can not be issued together since they are competing for a single processor resource this is called resource dependency. • In a conditional branched instruction since the branch destination only known at the execution time, scheduling such instruction leads to errors this dependency referred to as branch dependencies or procedural dependencies handled by rolling back.

Superscalar execution • Accurate branch prediction is critical for efficient superscalar execution. • The ability of a processor to detect and schedule concurrent instructions is critical to superscalar performance example 2.1 (iii) • In-order, out-of-order ( dynamic instruction issue). • Superscalar architectures the execution aspect of the program assuming the multiply add unit (example 2.1) vertical waste , horizontal waste.

Very Long Instruction Word Processors (VLIW) • The parallelism adopted by Superscalars is often limited by the instruction look-ahead. • In VLIW processors relies on the compiler to resolve dependencies and resource availabilities at compile time.

Very Long Instruction Word Processors (VLIW) • VLIW has advantages and disadvantages: • Since scheduling is done in software the decoding and instruction issue mechanisms is simpler. • Compilers are easier to optimize parallelism when compared to hardware issue unit. • Compilers do not have the dynamic program state available to make scheduling decisions. • Compilers allows the use of more static prediction schemas. • Other runtime situations such as stalls on data fetch because of cache misses are difficult to predict. • VLIW very sensitive to the compilers ability to detect dependencies. • Superscalar computers have limited parallelism compared to VLIW processors.

Limitation of memory system performance • The effective performance of a computer relies not just on the processor speed but also on the memory system ability to feed data to the processor. • A memory system takes in a request for a word and return a block of size b containing the requested word in l nanoseconds, l referred to as latency. • The rate at which data will be pumped to the processor determines the bandwidth. • I.e. Water hose.

Improving memory latency using Caches • One memory system innovation addresses the speed mismatch between the processor and DRAM. • Caches have low latency and high bandwidth storage. • Data reference satisfied by the cache is called cache hit ratio. • The computation rate of application at which data can be pumped into the CPU is referred to as memory bound. • The improvement of performance resulted from caches is based on the assumption of repeated references in a small time window this is called temporal locality.