Learning III

Learning III. Introduction to Artificial Intelligence, CS440/ECE448, Lecture 22. Next lecture: Roxana Girju, Natural Language Processing. New homework out today. Last lecture: identification trees. This lecture: neural networks (perceptrons). Reading: Chapter 20.


Learning III
Introduction to Artificial Intelligence, CS440/ECE448
Lecture 22
Next Lecture: Roxana Girju, Natural Language Processing
New Homework Out Today

Last lecture
• Identification trees
This lecture
• Neural networks: Perceptrons
Reading
• Chapter 20

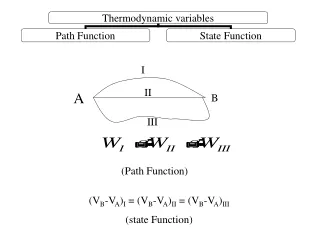

Sunburn data
There are 3 × 3 × 3 × 2 = 54 possible feature vectors.
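The count can be checked by enumerating every combination. This is a minimal sketch: the feature names and value sets below are assumptions read off the tree labels on the slides (three hair colors, three heights, three suit colors, lotion yes/no), not an official schema.

```python
from itertools import product

# Assumed value sets, taken from the branch labels that appear on the slides.
FEATURES = {
    "hair": ["blond", "brown", "red"],
    "height": ["short", "average", "tall"],
    "suit_color": ["red", "yellow", "blue"],
    "lotion": ["yes", "no"],
}

# One tuple per possible feature vector: the Cartesian product of the value sets.
feature_vectors = list(product(*FEATURES.values()))
print(len(feature_vectors))  # 54
```

The eight people in the data set cover only a small part of this space, which is why several different trees can be consistent with the data.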

An ID tree consistent with the data
Hair Color
• Blond → Lotion Used
  • No: Sarah, Annie (sunburned)
  • Yes: Dana, Katie (not sunburned)
• Red: Emily (sunburned)
• Brown: Alex, Pete, John (not sunburned)

Another consistent ID tree
[Figure: a larger identification tree rooted at Height. The Tall branch holds Dana and Pete; the Short and Average branches are split further by Hair Color and Suit Color tests before reaching leaves for Alex, Sarah, Annie, Emily, Katie and John.]

An idea
Select tests that divide people, as well as possible, into sets with homogeneous labels.
Hair Color
• Blond: Sarah, Annie, Dana, Katie
• Red: Emily
• Brown: Alex, Pete, John
Lotion Used
• No: Sarah, Annie, Emily, Pete, John
• Yes: Dana, Alex, Katie
Height
Suit Color

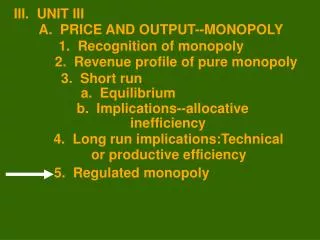

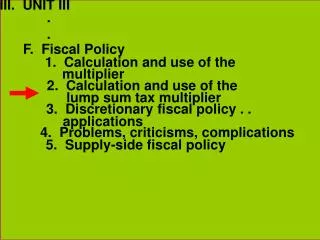

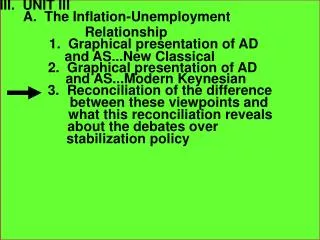

Problem:
• For practical problems, it is unlikely that any test will produce one completely homogeneous subset.
Solution:
• Minimize a measure of inhomogeneity or disorder.
• Available from information theory.

Information
• Let's say we have a question which has $n$ possible answers and call them $v_i$.
• Let's say that answer $v_i$ occurs with probability $P(v_i)$; then the information content (entropy), measured in bits, of knowing the answer is:

$$I(P(v_1), \ldots, P(v_n)) = -\sum_{i=1}^{n} P(v_i) \log_2 P(v_i)$$

• One bit of information is enough information to answer a yes or no question.
• E.g. consider flipping a fair coin: how much information do you have if you know which side comes up?

$$I(\tfrac{1}{2}, \tfrac{1}{2}) = -\left(\tfrac{1}{2} \log_2 \tfrac{1}{2} + \tfrac{1}{2} \log_2 \tfrac{1}{2}\right) = 1 \text{ bit}$$
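The coin example can be reproduced in a few lines. This is a sketch that assumes the standard entropy definition, minus the sum of P(v_i) log2 P(v_i) over all answers, which agrees with the coin computation on the slide; the function name `information` is illustrative.

```python
import math

def information(probabilities):
    """Entropy in bits of a distribution given as a list of probabilities."""
    # Answers with probability 0 are skipped: p * log2(p) tends to 0 as p -> 0.
    return sum(-p * math.log2(p) for p in probabilities if p > 0)

print(information([0.5, 0.5]))  # 1.0
```

A certain outcome, such as `information([1.0, 0.0])`, carries zero bits, and four equally likely answers carry two bits.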

Information at a node
• In our decision tree for a given feature (e.g. hair color), we have
  • $b$: a branch (one per possible value of the feature)
  • $N_b$: number of samples in branch $b$
  • $N_p$: number of samples in all branches
  • $N_{bc}$: number of samples in class $c$ in branch $b$
• Using frequencies as an estimate of the probabilities, we have

$$\text{Information} = \sum_{b} \frac{N_b}{N_p} \left( -\sum_{c} \frac{N_{bc}}{N_b} \log_2 \frac{N_{bc}}{N_b} \right)$$

• For a single branch, the information is simply

$$-\sum_{c} \frac{N_{bc}}{N_b} \log_2 \frac{N_{bc}}{N_b}$$

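What is the amount of information required for classification after we have used the hair test?
Hair Color (taking $0 \log_2 0 = 0$)
• Blond (Sarah, Annie, Dana, Katie): $-\tfrac{2}{4} \log_2 \tfrac{2}{4} - \tfrac{2}{4} \log_2 \tfrac{2}{4} = 1$
• Red (Emily): $-1 \log_2 1 - 0 \log_2 0 = 0$
• Brown (Alex, Pete, John): $-0 \log_2 0 - \tfrac{3}{3} \log_2 \tfrac{3}{3} = 0$

Information $= \tfrac{4}{8} \cdot 1 + \tfrac{1}{8} \cdot 0 + \tfrac{3}{8} \cdot 0 = 0.5$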
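The hair-test computation above can be reproduced from class counts alone. This is a sketch under the assumption that the information of a test is the sample-weighted average of the per-branch entropies, which is what the 4/8, 1/8, 3/8 weighting on the slide does; the function names are illustrative.

```python
import math

def branch_information(class_counts):
    """Entropy in bits of one branch, from its per-class sample counts (N_bc)."""
    n_b = sum(class_counts)
    # Empty classes are skipped, which implements the convention 0 * log2(0) = 0.
    return sum(-(n / n_b) * math.log2(n / n_b) for n in class_counts if n > 0)

def split_information(branches):
    """Weighted average of branch entropies; each weight is N_b / N_p."""
    n_p = sum(sum(counts) for counts in branches)
    return sum(sum(counts) / n_p * branch_information(counts) for counts in branches)

# [sunburned, not sunburned] counts for the blond, red and brown branches.
hair = [[2, 2], [1, 0], [0, 3]]
print(split_information(hair))  # 0.5
```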

Selecting top level feature
• Using the 8 samples we have so far, we get:

| Test | Information |
| --- | --- |
| Hair | 0.5 |
| Height | 0.69 |
| Suit Color | 0.94 |
| Lotion | 0.61 |

• Hair wins: least additional information needed for the rest of the classification.
• This is used to build the first level of the identification tree:

Hair Color
• Blond: Sarah, Annie, Dana, Katie
• Red: Emily
• Brown: Alex, Pete, John
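The selection step can be sketched for the two features whose per-person values are recoverable from the slides, hair color and lotion use. The sunburn labels below are an assumption derived from the classification rules that go with the final tree (blond without lotion and red hair burn; everyone else is OK). Height and suit color are left out because their per-person values are not listed in the text.

```python
import math
from collections import Counter, defaultdict

# name: (hair, lotion, sunburned); labels inferred from the slide's rules.
SAMPLES = {
    "Sarah": ("blond", "no", True),
    "Annie": ("blond", "no", True),
    "Dana": ("blond", "yes", False),
    "Katie": ("blond", "yes", False),
    "Emily": ("red", "no", True),
    "Alex": ("brown", "yes", False),
    "Pete": ("brown", "no", False),
    "John": ("brown", "no", False),
}
FEATURE_INDEX = {"hair": 0, "lotion": 1}

def entropy(labels):
    """Entropy in bits of a list of class labels."""
    total = len(labels)
    return sum(-(c / total) * math.log2(c / total) for c in Counter(labels).values())

def information_after_test(feature, samples):
    """Weighted average entropy of the branches produced by testing `feature`."""
    idx = FEATURE_INDEX[feature]
    branches = defaultdict(list)
    for row in samples.values():
        branches[row[idx]].append(row[2])
    n = len(samples)
    return sum(len(labels) / n * entropy(labels) for labels in branches.values())

scores = {f: information_after_test(f, SAMPLES) for f in FEATURE_INDEX}
best = min(scores, key=scores.get)  # the test leaving the least information wins
print({f: round(s, 2) for f, s in scores.items()}, best)
```

Restricting `SAMPLES` to the four blond people and scoring `"lotion"` again gives 0, which is why lotion is chosen at the second level.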

Selecting second level feature
Hair Color
• Blond: Sarah, Annie, Dana, Katie
• Red: Emily
• Brown: Alex, Pete, John

• Let's consider the remaining features for the blond branch (4 samples):

| Test | Information |
| --- | --- |
| Height | 0.5 |
| Suit Color | 1 |
| Lotion | 0 |

• Lotion wins: least additional information.

Thus we get to the tree we had arrived at earlier
Hair Color
• Blond → Lotion Used
  • No: Sarah, Annie (sunburned)
  • Yes: Dana, Katie (not sunburned)
• Red: Emily (sunburned)
• Brown: Alex, Pete, John (not sunburned)

Using the identification tree as a classification procedure
Hair Color
• Blond → Lotion Used
  • Yes: OK
  • No: Sunburn
• Red: Sunburn
• Brown: OK
Rules:
• If blond and uses lotion, then OK
• If blond and does not use lotion, then gets burned
• If red-haired, then gets burned
• If brown hair, then OK
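The four rules translate directly into a small function. This is a sketch: the function name, the string values and the boolean `uses_lotion` argument are illustrative choices, not part of the lecture.

```python
def classify(hair, uses_lotion):
    """Return 'sunburn' or 'ok' by following the four rules read off the tree."""
    if hair == "red":
        return "sunburn"
    if hair == "brown":
        return "ok"
    if hair == "blond":
        # Only the blond branch needs the second test.
        return "ok" if uses_lotion else "sunburn"
    raise ValueError(f"unknown hair color: {hair!r}")

print(classify("blond", uses_lotion=False))  # sunburn
```

Height and suit color never appear as arguments: the tree classifies all eight samples without them.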

Performance measurement
How do we know that h ≈ f?
• Use theorems of computational/statistical learning theory
• Try h on a new test set of examples (use same distribution over example space as training set)
Learning curve = % correct on test set as a function of training set size
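A learning curve can be sketched on toy data. Everything specific here is hypothetical and chosen only for illustration: the target function `f`, the one-dimensional inputs, the threshold learner and the training set sizes. The only part taken from the slide is the procedure: train on n examples, then measure the fraction correct on a separate test set drawn from the same distribution.

```python
import random

def accuracy(h, examples):
    """Fraction of (x, y) examples on which hypothesis h predicts y."""
    return sum(h(x) == y for x, y in examples) / len(examples)

def learn_threshold(examples):
    """Toy learner (hypothetical): put a threshold halfway between the classes."""
    negatives = [x for x, y in examples if not y]
    positives = [x for x, y in examples if y]
    low = max(negatives, default=0.0)
    high = min(positives, default=1.0)
    threshold = (low + high) / 2
    return lambda x: x > threshold

def learning_curve(learn, train, test, sizes):
    """Accuracy on the test set after training on the first n examples."""
    return [(n, accuracy(learn(train[:n]), test)) for n in sizes]

def f(x):
    """Hypothetical target function, used only to label the toy data."""
    return x > 0.5

rng = random.Random(0)

def draw(n):
    """Draw n labeled examples; train and test share this distribution."""
    return [(x, f(x)) for x in (rng.random() for _ in range(n))]

train, test = draw(200), draw(200)
curve = learning_curve(learn_threshold, train, test, sizes=[1, 5, 20, 100])
for n, acc in curve:
    print(f"{n:4d} training examples: {acc:.0%} correct")
```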

What & Why
• Artificial Neural Networks: a bottom-up attempt to model the functionality of the brain.
• Two main areas of activity:
  • Biological: Try to model biological neural systems.
  • Computational:
    • Artificial neural networks are biologically inspired but not necessarily biologically plausible.
    • So other terms may be used: Connectionism, Parallel Distributed Processing, Adaptive Systems Theory.
• Interests in neural networks differ according to profession.

Some Applications
NETTALK vs. DECTALK
DECTALK is a system developed by DEC which reads English characters and produces, with 95% accuracy, the correct pronunciation for an input word or text pattern.
• DECTALK is an expert system which took 20 years to finalize.
• It uses a list of pronunciation rules and a large dictionary of exceptions.
NETTALK, a neural network version of DECTALK, was constructed over one summer vacation.
• After 16 hours of training the system could read a 100-word sample text with 98% accuracy!
• When trained with 15,000 words it achieved 86% accuracy on a test set.
NETTALK is an example of one of the advantages of the neural network approach over the symbolic approach to Artificial Intelligence.
• It is difficult to simulate the learning process in a symbolic system; rules and exceptions must be known.
• On the other hand, neural systems exhibit learning very clearly; the network learns by example.

LeNet-5
Convolutional Neural Networks
Yann LeCun
http://yann.lecun.com/exdb/lenet/index.html

Human Brain & Connectionist Model
Consider humans:
• Neuron switching time: 0.001 second
• Number of neurons: $10^{10}$
• Connections per neuron: $10^{4}$
• Scene recognition time: 0.1 second
• 100 inference steps doesn't look like enough, which suggests massively parallel computation
Properties of ANNs:
• Many neuron-like switching units
• Many weighted interconnections among units
• Highly parallel, distributed process
• Emphasis on tuning weights automatically

Artificial neurons: Perceptrons
Linear threshold units
• $x_i$: inputs
• $w_i$: weights
• $\theta$: threshold
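A linear threshold unit can be sketched in a few lines. The slide text names only the inputs, weights and threshold, so the exact firing rule here is an assumption: the unit outputs 1 when the weighted sum strictly exceeds the threshold and 0 otherwise. The AND weights are hand-picked illustrative values.

```python
def perceptron_output(inputs, weights, threshold):
    """Linear threshold unit: 1 if the weighted sum exceeds the threshold, else 0."""
    activation = sum(w * x for w, x in zip(weights, inputs))
    return 1 if activation > threshold else 0

# Logical AND of two binary inputs: only 1 + 1 = 2 exceeds the threshold 1.5.
and_weights, and_threshold = [1.0, 1.0], 1.5
for x1 in (0, 1):
    for x2 in (0, 1):
        print(x1, x2, perceptron_output([x1, x2], and_weights, and_threshold))
```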

Artificial neurons: Perceptrons
The threshold can easily be forced to 0 by introducing an additional weight $w_0 = \theta$ attached to a constant input $x_0 = -1$.
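The equivalence can be checked numerically. This sketch assumes the unit fires when the weighted sum strictly exceeds the threshold; under that assumption, moving the threshold into an extra weight on a constant input of -1 turns the test "sum > threshold" into "augmented sum > 0". The function names and the example weights are illustrative.

```python
from itertools import product

def threshold_unit(inputs, weights, threshold):
    """Original form: compare the weighted sum against an explicit threshold."""
    return 1 if sum(w * x for w, x in zip(weights, inputs)) > threshold else 0

def zero_threshold_unit(inputs, weights, threshold):
    """Same unit with the threshold folded into w0, paired with input x0 = -1."""
    aug_inputs = [-1.0, *inputs]         # x0 = -1
    aug_weights = [threshold, *weights]  # w0 = threshold
    return 1 if sum(w * x for w, x in zip(aug_weights, aug_inputs)) > 0 else 0

weights, threshold = [1.0, 1.0], 1.5
agree = all(
    threshold_unit(x, weights, threshold) == zero_threshold_unit(x, weights, threshold)
    for x in product([0, 1], repeat=2)
)
print(agree)  # True
```

Folding the threshold into the weight vector means a learning rule only has to adjust weights, with no separate update for the threshold.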