Comprehensive Overview of Replicated Transactions and Consistency Protocols

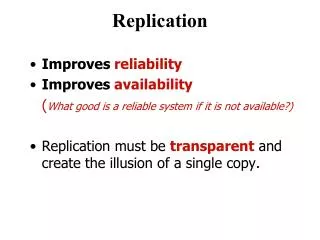

This document covers the mechanics of transactions on replicated data. It introduces one-copy serializability as the correctness criterion and shows how the two-phase commit protocol extends to replicated objects. It then describes primary copy replication, read-one/write-all replication, and available copies replication, detailing how clients interact with replicated data. Finally, it examines how replica manager (RM) failures and network partitions are handled, using local validation and quorum approaches to preserve consistency in distributed systems.


Presentation Transcript

Transactions on Replicated Data
[Figure: two clients with front ends run transactions T and U, issuing deposit(B,3) and getBalance(A) against replica managers that hold copies of objects A and B.]

One-Copy Serializability
• In a non-replicated system, transactions appear to be performed one at a time in some order. This is achieved by ensuring a serially equivalent interleaving of transaction operations.
• One-copy serializability: the effect of transactions performed by clients on replicated objects should be the same as if they had been performed one at a time on a single set of objects.

Two-Phase Commit Protocol for Replicated Objects
Two-level nested 2PC
• In the first phase, the coordinator sends the canCommit? request to the workers, each of which then passes it on to the other RMs and collects their replies before replying to the coordinator.
• In the second phase, the coordinator sends the doCommit or doAbort request, which is passed on to the members of the groups of RMs.
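
The two phases above can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: the class names `ReplicaManager` and `Worker`, the `vote`/`finish` methods, and the `two_phase_commit` function are all hypothetical, and the sketch assumes synchronous calls with no crashes, timeouts, or logging.

```python
from dataclasses import dataclass, field


@dataclass
class ReplicaManager:
    """A replica manager that votes on whether it can commit (hypothetical model)."""
    name: str
    can_commit: bool = True
    state: str = "active"

    def vote(self) -> bool:
        return self.can_commit

    def finish(self, commit: bool) -> None:
        self.state = "committed" if commit else "aborted"


@dataclass
class Worker(ReplicaManager):
    """A first-level participant that relays 2PC messages to its group of RMs."""
    group: list = field(default_factory=list)

    def vote(self) -> bool:
        # Phase 1: pass canCommit? on to the group and collect every reply
        # before answering the coordinator.
        replies = [rm.vote() for rm in self.group]
        return self.can_commit and all(replies)

    def finish(self, commit: bool) -> None:
        # Phase 2: apply the decision locally, then pass it on to the group.
        super().finish(commit)
        for rm in self.group:
            rm.finish(commit)


def two_phase_commit(workers) -> bool:
    """Run both phases from the coordinator and return the decision."""
    decision = all([w.vote() for w in workers])  # phase 1: canCommit?
    for w in workers:                            # phase 2: doCommit or doAbort
        w.finish(decision)
    return decision
```

A single RM that votes no anywhere in the second level is enough to abort the transaction at every worker and every group member.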

Primary Copy Replication
• For now, assume no crashes or failures.
• All client requests are directed to a single primary RM.
• Concurrency control is applied at the primary.
• To commit a transaction, the primary communicates with the backup RMs and then replies to the client.
• View-synchronous communication ⇒ one-copy serializability.
• Disadvantage? Performance: every request goes through the single primary.

Read-One/Write-All Replication
• FEs may communicate with any RM.
• Every write operation must be performed at all of the RMs, each of which sets a write lock on the object.
• A read operation can be performed at a single RM, which sets a read lock on the object.
• Consider pairs of operations of different transactions on the same object:
  • Any pair of write operations will require conflicting locks at all of the RMs.
  • A read operation and a write operation will require conflicting locks at a single RM.
• One-copy serializability is achieved.
• Disadvantage? Failures block the system.
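
The lock-conflict argument above can be made concrete with a small sketch. The names `ReplicaLocks`, `LockConflict`, `read_one`, and `write_all` are hypothetical. As a simplifying assumption, a conflicting request raises an exception where a real RM would make the transaction wait, and locks already taken before the conflict are not rolled back.

```python
class LockConflict(Exception):
    """Raised where a real RM would make the requesting transaction wait."""


class ReplicaLocks:
    """Lock table of one RM: many readers or one writer per object."""

    def __init__(self):
        self.readers = {}  # object -> set of transaction ids holding read locks
        self.writer = {}   # object -> transaction id holding the write lock

    def read_lock(self, txn, obj):
        holder = self.writer.get(obj)
        if holder not in (None, txn):
            raise LockConflict(f"{txn} cannot read {obj}: write-locked by {holder}")
        self.readers.setdefault(obj, set()).add(txn)

    def write_lock(self, txn, obj):
        holder = self.writer.get(obj)
        other_readers = self.readers.get(obj, set()) - {txn}
        if holder not in (None, txn) or other_readers:
            raise LockConflict(f"{txn} cannot write {obj}: lock held by another transaction")
        self.writer[obj] = txn


def read_one(rms, txn, obj, at=0):
    """A read sets a read lock at a single RM, chosen by index `at`."""
    rms[at].read_lock(txn, obj)


def write_all(rms, txn, obj):
    """A write sets a write lock at every RM."""
    for rm in rms:
        rm.write_lock(txn, obj)
```

Because a write visits every RM, it is guaranteed to meet any read lock on the same object, whichever single RM the reader happened to choose.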

Available Copies Replication
• A client's read request on an object may be performed by any available RM, but a client's update request must be performed by all available RMs in the group.
• As long as the set of available RMs does not change, local concurrency control achieves one-copy serializability in the same way as in read-one/write-all replication.
• This is not true if RMs fail and recover during conflicting transactions.

Available Copies Approach
[Figure: transaction T performs getBalance(A) then deposit(B,3); transaction U performs getBalance(B) then deposit(A,3). Object A is replicated at RMs X and Y; object B is replicated at RMs M, N and P.]

The Impact of RM Failure
• Assume that (i) RM X fails just after T has performed getBalance; and (ii) RM N fails just after U has performed getBalance. Both failures occur before any of the deposit()s.
• T's deposit will be performed at RMs M and P, and U's deposit will be performed at RM Y.
• The concurrency control on A at RM X does not prevent transaction U from updating A at RM Y.
• Solution: RM crashes and recoveries must be serialized with respect to transaction operations.

Local Validation (Using Our Example)
• From T's perspective:
  • T has read from an object at X ⇒ X must have failed after T's operation.
  • T observes the failure of N when it attempts to update object B ⇒ N's failure must be before T.
  • Order: N fails → T reads object A at X; T writes object B at M and P → T commits → X fails.
• From U's perspective:
  • Order: X fails → U reads object B at N; U writes object A at Y → U commits → N fails.
• At the time T commits, it checks that N is still unavailable and that X, M and P are still available. If so, T can commit.
• In the example, T cannot commit because X is no longer available. Less stringent rules may be needed to allow T to commit.
• Can be combined with 2PC.
• Even if T commits, U's validation fails because N has already failed.
• Local validation may fail if partitions occur in the network.
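
The commit-time check described above can be sketched as a set comparison. The function name `locally_valid` and its parameters are hypothetical, and the sketch assumes each transaction already knows which RMs it accessed and which RMs it observed as failed.

```python
def locally_valid(accessed, observed_failed, available_now):
    """Commit-time check for one transaction.

    accessed:        RMs the transaction read from or wrote to
    observed_failed: RMs the transaction found unavailable while running
    available_now:   RMs that are available at commit time
    """
    still_up = accessed <= available_now                # every RM it used is still up
    still_down = not (observed_failed & available_now)  # every RM it saw fail is still down
    return still_up and still_down


# State from the example after both failures: X and N are down.
available = {"M", "P", "Y"}

t_ok = locally_valid({"X", "M", "P"}, {"N"}, available)  # T used X, M, P and saw N fail
u_ok = locally_valid({"N", "Y"}, {"X"}, available)       # U used N, Y and saw X fail
```

Both checks fail here: T because X is down, U because N is down. Neither transaction commits, which is how the conflicting failure orders are kept out of the committed history.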

Network Partition
[Figure: a network partition separates two clients with front ends. Transactions T and U issue withdraw(B, 4) and deposit(B,3) against replica managers holding copies of B on opposite sides of the partition.]

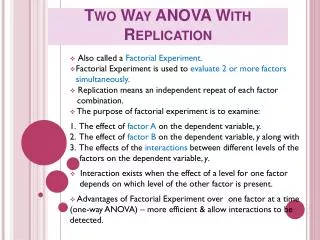

Dealing with Network Partitions
• During a partition, pairs of conflicting transactions may have been allowed to commit in different partitions. The only choice after the network has recovered is then to abort one of the transactions.
• Assumption: partitions heal eventually.
• Basic idea: allow operations to continue, and finalize transactions when partitions have healed.
• Quorum approaches are used to decide whether reads and writes are allowed.
• In the pessimistic quorum consensus approach, updates are allowed only in a partition that has the majority of RMs, and updates are propagated to the other RMs when the partition is repaired.

Static Quorums
• The decision about how many RMs should be involved in an operation on replicated data is called quorum selection.
• Quorum rules state that:
  • At least r replicas must be accessed for a read.
  • At least w replicas must be accessed for a write.
  • r + w > N, where N is the number of replicas.
  • w > N/2.
• Each object has a version number or a consistent timestamp.
• A static quorum predefines r and w, and is a pessimistic approach: if a partition occurs, updates are possible in at most one partition.
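
The quorum rules above translate directly into a few checks. In this sketch the function names are hypothetical, and replies are modelled as simple (version, value) pairs.

```python
def valid_static_quorum(n: int, r: int, w: int) -> bool:
    """Check the two quorum rules: r + w > N and w > N/2."""
    return 1 <= r <= n and 1 <= w <= n and r + w > n and 2 * w > n


def writable_partitions(partition_sizes, w):
    """Return the sizes of the partitions that can still assemble a write quorum."""
    return [size for size in partition_sizes if size >= w]


def read_latest(replies):
    """Pick the value with the highest version number among the replies.

    `replies` is a list of (version, value) pairs from at least r replicas.
    Since r + w > N, at least one reply comes from the latest write quorum.
    """
    return max(replies, key=lambda reply: reply[0])[1]
```

For example, with N = 5 the pair r = 3, w = 3 is valid, and a 3/2 split of the replicas leaves only the partition of size 3 able to write. The pair r = 1, w = 3 is rejected because a read could miss the latest write.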

Voting with Static Quorums
• A version of quorum selection where each replica has a number of votes. A quorum is reached by a majority of votes (N is the total number of votes).
• E.g., a cache replica may be given 0 votes.
• With r = w = 2:
  • access time for a write is 750 ms
  • access time for a read without the cache is 750 ms
  • access time for a read with the cache is 175 to 825 ms

| Replica | Votes | Access time | Version check | P(failure) |
|---|---|---|---|---|
| Cache | 0 | 100 ms | 0 ms | 0% |
| Rep1 | 1 | 750 ms | 75 ms | 1% |
| Rep2 | 1 | 750 ms | 75 ms | 1% |
| Rep3 | 1 | 750 ms | 75 ms | 1% |
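
One reading of the 175 to 825 ms range quoted above is that a cached read first pays for a version check at the voting replicas, then reads either the cache or a full replica. The slide does not spell this out, so the breakdown below is an assumption that happens to reproduce both endpoints; the constant and function names are hypothetical.

```python
VERSION_CHECK_MS = 75   # version check at a voting replica (table value)
CACHE_READ_MS = 100     # access time of the zero-vote cache (table value)
REPLICA_READ_MS = 750   # access time of a voting replica (table value)


def read_time_with_cache(cache_is_current: bool) -> int:
    """Assumed cost model: version check first, then read the cache or a replica."""
    data_read = CACHE_READ_MS if cache_is_current else REPLICA_READ_MS
    return VERSION_CHECK_MS + data_read


best_case = read_time_with_cache(cache_is_current=True)    # cache holds the latest version
worst_case = read_time_with_cache(cache_is_current=False)  # cache is stale
```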

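Quorum Consensus Examples
[Gifford]'s examples

| | Example 1 | Example 2 | Example 3 |
|---|---|---|---|
| Latency (milliseconds), Replica 1 | 75 | 75 | 75 |
| Latency (milliseconds), Replica 2 | 65 | 100 | 750 |
| Latency (milliseconds), Replica 3 | 65 | 750 | 750 |
| Voting configuration, Replica 1 | 1 | 2 | 1 |
| Voting configuration, Replica 2 | 0 | 1 | 1 |
| Voting configuration, Replica 3 | 0 | 1 | 1 |
| Quorum sizes, R | 1 | 2 | 1 |
| Quorum sizes, W | 1 | 3 | 3 |

Derived performance of file suite:

| | Example 1 | Example 2 | Example 3 |
|---|---|---|---|
| Read latency | 65 | 75 | 75 |
| Read blocking probability | 0.01 | 0.0002 | 0.000001 |
| Write latency | 75 | 100 | 750 |
| Write blocking probability | 0.01 | 0.0101 | 0.03 |

• Ex1: high R to W ratio; single RM on Replica 1; weak consistency.
• Ex2: moderate R to W ratio; accessed from local LAN of RM 1.
• Ex3: very high R to W ratio; all RMs equidistant.
• 0.01 failure probability per RM.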
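
The blocking probabilities and most of the latencies in Gifford's examples can be rederived from the votes, quorum sizes, and the 0.01 failure probability per RM. The sketch below assumes replicas fail independently and that an operation completes as soon as the fastest replicas together hold enough votes; both function names are hypothetical. It does not model reads served by zero-vote replicas, so it gives 75 ms rather than the tabulated 65 ms for the Example 1 read.

```python
from itertools import product


def blocking_probability(votes, quorum, p_fail=0.01):
    """Probability that the live replicas together hold fewer than `quorum` votes."""
    blocked = 0.0
    for up in product([True, False], repeat=len(votes)):
        prob = 1.0
        live_votes = 0
        for is_up, v in zip(up, votes):
            prob *= (1 - p_fail) if is_up else p_fail
            if is_up:
                live_votes += v
        if live_votes < quorum:
            blocked += prob
    return blocked


def quorum_latency(latencies, votes, quorum):
    """Latency of the slowest replica needed when contacting the fastest ones first."""
    gathered = 0
    for latency, v in sorted(zip(latencies, votes)):
        gathered += v
        if gathered >= quorum:
            return latency
    raise ValueError("quorum cannot be reached even with all replicas")


# Example 2: votes 2/1/1, R = 2, W = 3, latencies 75/100/750 ms.
ex2_read_block = blocking_probability((2, 1, 1), quorum=2)
ex2_write_block = blocking_probability((2, 1, 1), quorum=3)
ex2_write_latency = quorum_latency((75, 100, 750), (2, 1, 1), quorum=3)
```

Under these assumptions Example 2 yields a read blocking probability of about 0.0002 and a write blocking probability of about 0.0101, in line with the tabulated values.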

Optimistic Quorum Approaches
• An optimistic quorum selection allows writes to proceed in any partition.
• This might lead to write-write conflicts, which are detected when the partition is repaired.
• Any writes that violate one-copy serializability will then be aborted.
• Still improves performance because partition repair is not needed until commit time.
• An optimistic quorum is practical when:
  • Conflicting updates are rare.
  • Conflicts are always detectable.
  • Damage from conflicts can be easily confined.
  • Repair of damaged data is possible, or an update can be discarded without consequences.

View-based Quorum
• Quorum is based on views at any time.
• In a small partition, the group plays dead, hence no writes occur.
• In a large enough partition, inaccessible nodes are considered in the quorum as ghost processors.
• Once the partition is repaired, processors in the small partition know whom to contact for updates.

View-based Quorum - details
• We define a read and a write threshold:
  • Aw: minimum nodes in a view for write, e.g., Aw > N/2
  • Ar: minimum nodes in a view for read
  • E.g., Aw + Ar > N
• If the ordinary quorum cannot be reached for an operation, we take a straw poll, i.e., update views.
• In a large enough partition for read, Viewsize ≥ Ar.
• In a large enough partition for write, Viewsize ≥ Aw (inaccessible nodes are considered as ghosts).
• Views are per object, numbered sequentially, and only updated if necessary.
• The first update after partition repair forces restoration for nodes in the smaller partition.

Example: View-based Quorum
• Consider: N = 5, w = 5, r = 1, Aw = 3, Ar = 3

| Step | Node 1 | Node 2 | Node 3 | Node 4 | Node 5 |
|---|---|---|---|---|---|
| Initial state | V1.0 | V2.0 | V3.0 | V4.0 | V5.0 |
| Network is partitioned | V1.0 | V2.0 | V3.0 | V4.0 | V5.0 |
| Read is initiated, quorum is reached | V1.0 | V2.0 | V3.0 | V4.0 | V5.0 |
| Write is initiated, quorum not reached | V1.0 | V2.0 | V3.0 | V4.0 | V5.0 |
| P1 changes view, writes and updates views | V1.1 | V2.1 | V3.1 | V4.1 | V5.0 |

Example: View-based Quorum (cont'd)

| Step | Node 1 | Node 2 | Node 3 | Node 4 | Node 5 |
|---|---|---|---|---|---|
| P5 initiates write, no quorum, Aw not met, aborts | V1.1 | V2.1 | V3.1 | V4.1 | V5.0 |
| P5 initiates read, has quorum, reads stale data | V1.1 | V2.1 | V3.1 | V4.1 | V5.0 |
| Partition is repaired | V1.1 | V2.1 | V3.1 | V4.1 | V5.0 |
| P3 initiates write, notices repair | V1.1 | V2.1 | V3.1 | V4.1 | V5.0 |
| Views are updated to 1 + Max(views) | V1.2 | V2.2 | V3.2 | V4.2 | V5.2 |
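
The decisions in the view-based quorum example can be replayed with a small sketch. The function `attempt` and its return labels are hypothetical. The sketch only models the choice between proceeding, changing the view after a straw poll, and aborting. It assumes node 5 is the one cut off (the only node whose view stays at V5.0), and it does not model how the write itself is carried out in the new view.

```python
def attempt(quorum, view_threshold, view, reachable):
    """Decide the outcome of one operation under a view-based quorum.

    quorum:         ordinary quorum for the operation (r or w)
    view_threshold: minimum view size for the operation (Ar or Aw)
    view:           nodes in the initiator's current view
    reachable:      nodes the initiator can currently contact, itself included
    """
    if len(view & reachable) >= quorum:
        return "proceed", view
    # Ordinary quorum not reached: take a straw poll and try to update the view.
    if len(reachable) >= view_threshold:
        return "change view", frozenset(reachable)
    return "abort", view


ALL = frozenset({1, 2, 3, 4, 5})
MAJORITY = frozenset({1, 2, 3, 4})  # side of the partition containing P1
MINORITY = frozenset({5})           # P5 is cut off

p1_write = attempt(quorum=5, view_threshold=3, view=ALL, reachable=MAJORITY)
p5_write = attempt(quorum=5, view_threshold=3, view=ALL, reachable=MINORITY)
p5_read = attempt(quorum=1, view_threshold=3, view=ALL, reachable=MINORITY)
```

With N = 5, w = 5, r = 1 and Aw = 3, P1's write triggers a view change to the four reachable nodes, P5's write aborts, and P5's read still proceeds on its old view, which is why it can return stale data.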