Non-linear Dimensionality Reduction

Non-linear Dimensionality Reduction. CMPUT 466/551 Nilanjan Ray Prepared on materials from the book Non-linear dimensionality reduction By Lee and Verleysen , Springer, 2007. Agenda. What is dimensionality reduction? Linear methods Principal components analysis

Presentation Transcript

Non-linear Dimensionality Reduction • CMPUT 466/551 • Nilanjan Ray • Prepared on materials from the book Non-linear dimensionality reduction by Lee and Verleysen, Springer, 2007

Agenda • What is dimensionality reduction? • Linear methods • Principal components analysis • Metric multidimensional scaling (MDS) • Non-linear methods • Distance preserving • Topology preserving • Auto-encoders (Deep neural networks)

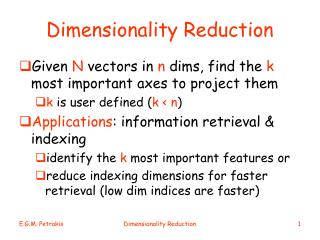

Dimensionality Reduction • Mapping d-dimensional data points y to p-dimensional vectors x; p < d. • Purposes • Visualization • Classification/regression • Most of the time we are only interested in the forward mapping from y to x. • The backward mapping is difficult in general. • If the forward and the backward mappings are linear, the method is called linear; otherwise it is called a non-linear dimensionality reduction technique.
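To make the forward and backward mappings concrete, here is a minimal NumPy sketch of the linear case using PCA. The function names (`fit_pca`, `forward`, `backward`) and the convention that data points are the columns of a d x N matrix are assumptions made for this illustration, not part of the slides.

```python
import numpy as np

def fit_pca(Y, p):
    """Fit a linear map on Y (d x N, columns are points).

    Returns the data mean (d x 1) and a d x p matrix W with orthonormal columns.
    """
    mean = Y.mean(axis=1, keepdims=True)
    # Left singular vectors of the centered data are the principal directions.
    U, _, _ = np.linalg.svd(Y - mean, full_matrices=False)
    return mean, U[:, :p]

def forward(Y, mean, W):
    """Forward mapping y -> x (d dimensions down to p)."""
    return W.T @ (Y - mean)

def backward(X, mean, W):
    """Backward mapping x -> y (p dimensions back up to d); also linear."""
    return W @ X + mean
```

Both mappings are matrix products plus a shift, which is what makes the method linear. When the data lie exactly in a p-dimensional affine subspace, `backward(forward(Y))` recovers `Y`; otherwise it returns the closest points in that subspace.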

Distance Preserving Methods • Let’s say the points yi are mapped to xi, i=1,2,…,N. • Distance preserving methods try to preserve pairwise distances, i.e., d(yi, yj) = d(xi, xj), or the pairwise dot products, <yi, yj> = <xi, xj>. • What is a distance? • Nondegeneracy: d(a, b) = 0 if and only if a = b • Triangular inequality: for any three points a, b, and c, d(a, b) ≤ d(c, a) + d(c, b) • The other two properties, nonnegativity and symmetry, follow from these two
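The claim that nonnegativity and symmetry follow from the two axioms can be checked with a short derivation. This sketch assumes the triangular inequality in the form $d(a, b) \le d(c, a) + d(c, b)$ for all points a, b, c, together with nondegeneracy ($d(a, a) = 0$):

```latex
\begin{align*}
\text{Nonnegativity (take } b = a\text{):}\quad
  & 0 = d(a, a) \le d(c, a) + d(c, a) = 2\, d(c, a)
    \;\Rightarrow\; d(c, a) \ge 0. \\
\text{Symmetry (take } c = b\text{):}\quad
  & d(a, b) \le d(b, a) + d(b, b) = d(b, a), \\
  & \text{and swapping } a \text{ and } b \text{ gives } d(b, a) \le d(a, b)
    \;\Rightarrow\; d(a, b) = d(b, a).
\end{align*}
```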

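Metric MDS • A multidimensional scaling (MDS) method is a linear generative model like PCA: $y = W x$, where the y’s are d-dimensional observed variables and the x’s are p-dimensional latent variables. • W is a $d \times p$ matrix with the property $W^T W = I_p$. • Let $Y = [y_1, \ldots, y_N]$ and $X = [x_1, \ldots, x_N]$. Then $Y^T Y = X^T W^T W X = X^T X$. So, dot products are preserved. • How about Euclidean distances? $\|y_i - y_j\|^2 = \langle y_i, y_i \rangle - 2 \langle y_i, y_j \rangle + \langle y_j, y_j \rangle = \langle x_i, x_i \rangle - 2 \langle x_i, x_j \rangle + \langle x_j, x_j \rangle = \|x_i - x_j\|^2$. So, Euclidean distances are preserved too!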

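Metric MDS Algorithm • Center the data matrix Y and compute the dot product matrix $S = Y^T Y$. • If the data matrix is not available and only the distance matrix D is available, do double centering to form the scalar product matrix: $S = -\frac{1}{2} \left(I - \frac{1}{N} \mathbf{1}\mathbf{1}^T\right) D^{(2)} \left(I - \frac{1}{N} \mathbf{1}\mathbf{1}^T\right)$, where $D^{(2)}$ holds the squared distances. • Compute the eigenvalue decomposition $S = U \Lambda U^T$. • Construct the p-dimensional representation as $X = I_{p \times N} \Lambda^{1/2} U^T$, i.e., keep the p largest eigenvalues and their eigenvectors. • Metric MDS is actually PCA and is a linear method.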
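The algorithm on this slide can be sketched in a few lines of NumPy. This is a minimal illustration, assuming data points are stored as the columns of Y (d x N); the function names `metric_mds`, `double_center` and `pairwise_dist` are made up for the example.

```python
import numpy as np

def pairwise_dist(X):
    """Euclidean distances between the columns of X."""
    diff = X[:, :, None] - X[:, None, :]
    return np.sqrt((diff ** 2).sum(axis=0))

def double_center(D):
    """Turn an N x N Euclidean distance matrix into a scalar product matrix."""
    N = D.shape[0]
    J = np.eye(N) - np.ones((N, N)) / N      # centering matrix
    return -0.5 * J @ (D ** 2) @ J

def embed_from_gram(S, p):
    """Keep the p largest eigenpairs of S and return X = Lambda^(1/2) U^T."""
    evals, U = np.linalg.eigh(S)             # eigh returns ascending eigenvalues
    order = np.argsort(evals)[::-1][:p]
    lam = np.clip(evals[order], 0.0, None)   # guard against tiny negative values
    return np.sqrt(lam)[:, None] * U[:, order].T

def metric_mds(Y, p):
    """Metric MDS of the columns of Y (d x N) into p dimensions."""
    Yc = Y - Y.mean(axis=1, keepdims=True)   # center the data
    return embed_from_gram(Yc.T @ Yc, p)
```

When only distances are available, `embed_from_gram(double_center(D), p)` gives the same embedding, since double centering recovers the scalar products of the centered data.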

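Sammon’s Nonlinear Mapping (NLM) • NLM minimizes the energy function: $E_{NLM} = \frac{1}{c} \sum_{i<j} \frac{(d_y(i,j) - d_x(i,j))^2}{d_y(i,j)}$, with $c = \sum_{i<j} d_y(i,j)$, where $d_y(i,j) = d(y_i, y_j)$ and $d_x(i,j) = d(x_i, x_j)$. • Start with initial x’s. • Update x’s by (quasi-Newton update): $x_{k,i} \leftarrow x_{k,i} - \alpha \frac{\partial E_{NLM} / \partial x_{k,i}}{\left| \partial^2 E_{NLM} / \partial x_{k,i}^2 \right|}$, where $x_{k,i}$ is the kth component of vector $x_i$ and $\alpha$ is a step size.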
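The following sketch evaluates Sammon's stress and minimizes it numerically. Note the swap: instead of the quasi-Newton update named on the slide, it uses plain gradient descent with a backtracking step size, which is simpler to verify. It also assumes all data points are distinct, so that no input distance is zero. All function names are illustrative.

```python
import numpy as np

def pairwise_dist(X):
    """Euclidean distances between the columns of X."""
    diff = X[:, :, None] - X[:, None, :]
    return np.sqrt((diff ** 2).sum(axis=0))

def sammon_stress(Dy, X):
    """Sammon's stress between input distances Dy and the embedding X (p x N)."""
    iu = np.triu_indices_from(Dy, k=1)       # each pair i < j once
    dy, dx = Dy[iu], pairwise_dist(X)[iu]
    return ((dy - dx) ** 2 / dy).sum() / dy.sum()

def sammon_gradient(Dy, X, eps=1e-12):
    """Gradient of the stress with respect to every coordinate of X."""
    Dx = pairwise_dist(X)
    c = Dy[np.triu_indices_from(Dy, k=1)].sum()
    coef = (Dy - Dx) / (Dy * Dx + eps)       # eps avoids 0/0 on the diagonal
    np.fill_diagonal(coef, 0.0)
    # Column i of G is sum_j coef[i, j] * (x_i - x_j).
    G = X * coef.sum(axis=1) - X @ coef.T
    return -2.0 / c * G

def sammon(Dy, X0, n_iter=200):
    """Gradient descent with backtracking (not the slide's quasi-Newton rule)."""
    X, step = X0.copy(), 1.0
    for _ in range(n_iter):
        E, G = sammon_stress(Dy, X), sammon_gradient(Dy, X)
        while step > 1e-12 and sammon_stress(Dy, X - step * G) >= E:
            step *= 0.5                      # shrink until the stress decreases
        if step <= 1e-12:
            break                            # no improving step found
        X = X - step * G
        step *= 2.0                          # try a bolder step next time
    return X
```

Because every accepted step lowers the stress, the returned embedding is never worse than the initial one, although it may be a local minimum.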

A Basic Issue with Metric Distance Preserving Methods • Geodesic distances (measured along the data manifold) seem to be better suited than straight-line Euclidean distances

ISOMAP • ISOMAP = MDS with graph distance • Needs to decide how the graph is constructed: who is the neighbor of whom • The K-closest rule or the ε-distance rule can build a graph
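A minimal sketch of the ISOMAP recipe, assuming the K-closest rule, distinct data points stored as columns of Y, and a connected neighborhood graph. Graph distances are computed with the Floyd-Warshall algorithm for brevity (cubic in N, so only suitable for small data sets); function names are illustrative.

```python
import numpy as np

def pairwise_dist(X):
    """Euclidean distances between the columns of X."""
    diff = X[:, :, None] - X[:, None, :]
    return np.sqrt((diff ** 2).sum(axis=0))

def knn_graph(D, K):
    """Weighted graph linking each point to its K closest points (symmetrized)."""
    N = D.shape[0]
    G = np.full((N, N), np.inf)              # inf means "no edge"
    np.fill_diagonal(G, 0.0)
    nn = np.argsort(D, axis=1)[:, 1:K + 1]   # column 0 is the point itself
    for i in range(N):
        G[i, nn[i]] = D[i, nn[i]]
        G[nn[i], i] = D[nn[i], i]
    return G

def graph_distances(G):
    """All-pairs shortest path lengths (Floyd-Warshall)."""
    D = G.copy()
    for k in range(D.shape[0]):
        D = np.minimum(D, D[:, k:k + 1] + D[k:k + 1, :])
    return D

def isomap(Y, p, K):
    """Metric MDS applied to graph distances instead of Euclidean distances."""
    Dg = graph_distances(knn_graph(pairwise_dist(Y), K))
    if not np.isfinite(Dg).all():
        raise ValueError("neighborhood graph is disconnected; increase K")
    N = Dg.shape[0]
    J = np.eye(N) - np.ones((N, N)) / N
    S = -0.5 * J @ (Dg ** 2) @ J             # double centering
    evals, U = np.linalg.eigh(S)
    order = np.argsort(evals)[::-1][:p]
    lam = np.clip(evals[order], 0.0, None)
    return np.sqrt(lam)[:, None] * U[:, order].T, Dg
```

For points sampled along a half circle of radius 1, the Euclidean distance between the two end points is 2, while the graph distance approaches the arc length π, so a one-dimensional embedding unrolls the curve.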

KPCA • Closely related to the MDS algorithm • KPCA using Gaussian kernel
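The relation to MDS shows up directly in code: kernel PCA replaces the scalar product matrix with a centered kernel matrix and then proceeds the same way. This is a minimal sketch; the Gaussian kernel parametrization $\exp(-\|y_i - y_j\|^2 / (2\sigma^2))$ and the function names are assumptions for the illustration.

```python
import numpy as np

def centered_gaussian_kernel(Y, sigma):
    """Gaussian kernel matrix of the columns of Y, centered in feature space."""
    sq = ((Y[:, :, None] - Y[:, None, :]) ** 2).sum(axis=0)
    K = np.exp(-sq / (2.0 * sigma ** 2))
    N = K.shape[0]
    J = np.eye(N) - np.ones((N, N)) / N
    return J @ K @ J                         # same double centering as in MDS

def kpca(Y, p, sigma):
    """Kernel PCA embedding (p x N) of the columns of Y."""
    Kc = centered_gaussian_kernel(Y, sigma)
    evals, U = np.linalg.eigh(Kc)
    order = np.argsort(evals)[::-1][:p]      # p largest eigenvalues
    lam = np.clip(evals[order], 0.0, None)
    return np.sqrt(lam)[:, None] * U[:, order].T
```

The kernel width `sigma` has to be chosen by the user; the slides do not give a value.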

Topology Preserving Techniques • Topology: neighborhood relationship • Topology preservation means two neighboring points in d dimensions should map to two neighboring points in p dimensions • Distance preservation is often too rigid; topology preservation techniques can sometimes stretch or shrink point clouds • More flexible, but algorithmically more complex

TP Techniques • Can be categorized broadly into • Methods with predefined topology • SOM (Kohonen’s self-organizing map) • Data driven lattice • LLE (locally linear embedding) • Isotop…

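Kohonen’s Self-Organizing Maps (SOM) • Step 1: Define a 2D lattice indexed by (l, k): l, k = 1,…,K. • Step 2: For a set of data vectors yi, i=1,2,…,N, find a set of prototypes m(l, k). Note that by this indexing (l, k), the prototypes are mapped to the 2D lattice. • Step 3: Iterate for each data vector yi: • Find the closest prototype (using Euclidean distance in the d-dimensional space): $(l^*, k^*) = \arg\min_{(l,k)} \|y_i - m(l, k)\|$ • Update prototypes: $m(l, k) \leftarrow m(l, k) + \alpha \, h(\|(l, k) - (l^*, k^*)\|) \, (y_i - m(l, k))$, where $\alpha$ is the learning rate and h is a neighborhood function on the lattice (prepared from [HTF] book)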

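Neighborhood Function for SOM • A hard threshold function: $h(t) = 1$ if $t \le r$, and $0$ otherwise, where t is a distance on the lattice and r is the distance threshold • Or, a soft threshold function, e.g. a Gaussian: $h(t) = \exp\left(-\frac{t^2}{2 r^2}\right)$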
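The SOM steps and the two kinds of neighborhood function can be sketched as follows. This is an illustration under several assumptions not stated on the slides: data points are columns of Y, prototypes are initialized from random data points, the learning rate and the threshold decay linearly, and the soft threshold is a Gaussian. Function and parameter names are made up for the example.

```python
import numpy as np

def hard_threshold(t, r):
    """1 for lattice nodes within distance r of the winner, 0 otherwise."""
    return (t <= r).astype(float)

def soft_threshold(t, r):
    """Gaussian neighborhood (one common soft choice, assumed here)."""
    return np.exp(-t ** 2 / (2.0 * r ** 2))

def train_som(Y, K, n_epochs=20, alpha0=0.5, r0=None, r_min=0.5,
              neighborhood=soft_threshold, seed=0):
    """Train a K x K SOM on the columns of Y (d x N); returns M of shape (K, K, d)."""
    rng = np.random.default_rng(seed)
    d, N = Y.shape
    r0 = K / 2.0 if r0 is None else r0
    # Prototypes m(l, k), initialized with randomly chosen data points.
    M = Y[:, rng.integers(0, N, size=K * K)].T.reshape(K, K, d).copy()
    ll, kk = np.meshgrid(np.arange(K), np.arange(K), indexing="ij")
    for epoch in range(n_epochs):
        frac = epoch / n_epochs
        alpha = alpha0 * (1.0 - frac)        # learning rate decreases
        r = r0 + (r_min - r0) * frac         # threshold decreases from r0 to r_min
        for i in rng.permutation(N):
            y = Y[:, i]
            dist = np.linalg.norm(M - y, axis=2)
            l_star, k_star = np.unravel_index(np.argmin(dist), dist.shape)
            # Distance of every lattice node to the winning node.
            t = np.sqrt((ll - l_star) ** 2 + (kk - k_star) ** 2)
            M += alpha * neighborhood(t, r)[:, :, None] * (y - M)
    return M

def quantization_error(Y, M):
    """Mean distance from each data point to its closest prototype."""
    P = M.reshape(-1, M.shape[2])
    dist = np.linalg.norm(Y.T[:, None, :] - P[None, :, :], axis=2)
    return dist.min(axis=1).mean()
```

Passing `neighborhood=hard_threshold` with a threshold below 1 updates only the winning prototype, which reduces the procedure to an online form of K-means.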

Remarks • SOM is actually a constrained K-means • Constrains K-means clusters on a smooth manifold • If only one neighbor (itself) is allowed => K-means • Learning rate and distance threshold usually decrease with training iterations • Mostly useful as a visualization tool: typically it cannot map to more than 3 dimensions • Convergence is hard to assess

Locally Linear Embedding • Data driven lattice, unlike SOM, which uses a predefined lattice • Topology preserving: it is based on conformal mapping, a transformation that preserves angles; LLE is invariant to rotation, translation and scaling • To some extent similar to preserving dot products • A data point yi is assumed to be a linear combination of its neighbors

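LLE Principle • Each data point y is a local linear combination: $y_i \approx \sum_{j \in N(i)} w_{ij} y_j$ • Neighborhood of yi, $N(i)$: determined by a graph • Constraints on wij: $\sum_j w_{ij} = 1$, and $w_{ij} = 0$ if $y_j$ is not a neighbor of $y_i$ • LLE first computes the matrix W by minimizing $E(W) = \sum_i \| y_i - \sum_j w_{ij} y_j \|^2$. • Then it assumes that in the low dimensions the same local linear combination holds: $x_i \approx \sum_j w_{ij} x_j$ • So, it minimizes $F(X) = \sum_i \| x_i - \sum_j w_{ij} x_j \|^2$ with respect to the x’s: this obtains the low dimensional mapping!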
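The two minimizations can be sketched with NumPy as follows. This is a minimal illustration, assuming a K-closest neighborhood graph, distinct data points stored as columns of Y, and a small regularization term `reg` on the local Gram matrix (a common numerical safeguard that is not on the slide). The minimization of F is solved through the bottom eigenvectors of $(I - W)^T (I - W)$, which fixes the scale of the embedding and excludes the trivial constant solution.

```python
import numpy as np

def pairwise_dist(X):
    """Euclidean distances between the columns of X."""
    diff = X[:, :, None] - X[:, None, :]
    return np.sqrt((diff ** 2).sum(axis=0))

def lle(Y, p, K, reg=1e-3):
    """Locally linear embedding of the columns of Y (d x N) into p dimensions.

    Returns the embedding X (p x N) and the weight matrix W (N x N).
    """
    d, N = Y.shape
    nbrs = np.argsort(pairwise_dist(Y), axis=1)[:, 1:K + 1]
    W = np.zeros((N, N))
    for i in range(N):
        # Step 1: minimize E over the weights of point i, with sum_j w_ij = 1.
        Z = Y[:, nbrs[i]] - Y[:, [i]]        # neighbors relative to y_i (d x K)
        C = Z.T @ Z                          # local Gram matrix (K x K)
        C += reg * np.trace(C) * np.eye(K)   # regularization (assumption)
        w = np.linalg.solve(C, np.ones(K))
        W[i, nbrs[i]] = w / w.sum()          # enforce the sum-to-one constraint
    # Step 2: minimize F over X with W fixed.
    A = np.eye(N) - W
    evals, V = np.linalg.eigh(A.T @ A)       # ascending eigenvalues
    # Skip the first eigenvector (eigenvalue ~0, constant), keep the next p.
    return V[:, 1:p + 1].T, W
```

The weights depend only on differences between a point and its neighbors and are normalized to sum to one, which is why they are unchanged by translating, rotating or uniformly rescaling the data.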

LLE Results Let’s visit: http://www.cs.toronto.edu/~roweis/lle/