Enhancing Search Results Diversity for Ambiguous Queries

Explore techniques to diversify search results for ambiguous queries, maximizing relevance for users. Discusses problem formulation, theoretical analysis, and metrics for measuring diversity with experiments.

Presentation Transcript

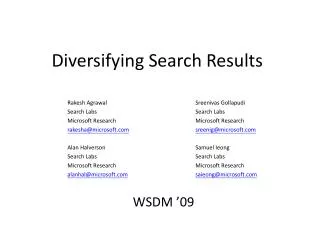

Diversifying Search Results. Rakesh Agrawal, Sreenivas Gollapudi, Alan Halverson, Samuel Ieong. Search Labs, Microsoft Research. WSDM, February 10, 2009.

Ambiguity and Diversification • Many queries are ambiguous • “Barcelona” (City? Football team? Movie?) • “Michael Jordan” (Michael I. Jordan? Michael J. Jordan?) • How best to answer ambiguous queries? • Use context, make suggestions, … • Under the premise of returning a single (ordered) set of results, how best to diversify the search results so that a user will find something useful?

Intuition behind Our Approach • Analyze click logs for classifying queries and docs • Maximize the probability that the average user will find a relevant document in the retrieved results • Use the analogy of marginal utility to determine whether to include more results from an already covered category

Outline • Problem formulation • Theoretical analysis • Metrics to measure diversity • Experiments

Assumptions • A taxonomy (categorization of intents) C • For each query q, P(c | q) denotes the distribution of intents, with $\sum_{c \in C} P(c \mid q) = 1$ • Quality assessment of documents at the intent level • For each doc d, V(d | q, c) denotes the probability that the doc satisfies intent c • Conditional independence: given q and c, documents satisfy the intent independently of one another • Users are interested in finding at least one satisfying document

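Problem Statement: Diversify(k) • Given a query q, a set of documents D, the distribution P(c | q), quality estimates V(d | q, c), and an integer k • Find a set of docs S ⊆ D with |S| = k that maximizes $P(S \mid q) = \sum_{c} P(c \mid q)\left(1 - \prod_{d \in S}\bigl(1 - V(d \mid q, c)\bigr)\right)$ • The outer sum accounts for multiple intents; the term in parentheses is the probability of finding at least one relevant doc for intent c • P(S | q) is interpreted as the probability that the set S is relevant to the query over all possible intents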
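The objective can be evaluated directly. The sketch below is a minimal Python reading of the formula. It assumes that intents and documents are identified by strings and that any (document, intent) pair without a quality estimate has V = 0. The function name `prob_set_relevant` and the document ids are hypothetical. The numbers reuse the intent distribution and quality values from the greedy-algorithm example in this deck.

```python
from math import prod


def prob_set_relevant(selected, intent_dist, quality):
    """P(S | q) = sum_c P(c | q) * (1 - prod_{d in S} (1 - V(d | q, c))).

    selected:    iterable of document ids (the set S)
    intent_dist: dict mapping intent c -> P(c | q)
    quality:     dict mapping (doc, intent) -> V(d | q, c); missing pairs are treated as 0
    """
    selected = list(selected)  # materialize once so it can be reused for every intent
    total = 0.0
    for c, p_c in intent_dist.items():
        # conditional independence: probability that no document in S satisfies intent c
        p_none = prod(1.0 - quality.get((d, c), 0.0) for d in selected)
        total += p_c * (1.0 - p_none)
    return total


if __name__ == "__main__":
    intents = {"R": 0.8, "B": 0.2}
    V = {("r1", "R"): 0.9, ("r2", "R"): 0.5, ("r3", "R"): 0.4,
         ("b1", "B"): 0.4, ("b2", "B"): 0.4}
    print(prob_set_relevant(["r1", "b1"], intents, V))  # one doc per intent
    print(prob_set_relevant(["r1", "r2"], intents, V))  # two docs for the dominant intent
```

With these numbers, covering both intents gives 0.72 + 0.08 = 0.80. Stacking two documents on the dominant intent gives 0.8 × 0.95 = 0.76. This is the diminishing-returns effect the objective is meant to capture.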

Discussion of Objective • Makes explicit use of the taxonomy • In contrast, similarity-based: [CG98], [CK06], [RKJ08] • Captures both diversification and doc relevance • In contrast, coverage-based: [Z+05], [C+08], [V+08] • Specific form of “loss minimization” [Z02], [ZL06] • “Diminishing returns” for docs w/ the same intent • Objective is order-independent • Assumes that all users read k results • May want to optimize $\sum_k P(k)\, P(S_k \mid q)$, where P(k) is the probability that a user reads k results and S_k is the top k of the ranked list

Properties of the Objective • Diversify(k) is NP-Hard • Reduction from Max-Cover • No single ordering that will optimize for all k • Can we make use of “diminishing returns”?

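A Greedy Algorithm • Keep U(c | q), the probability that intent c is not yet satisfied by the selected documents; initially U(c | q) = P(c | q) • At each step, add to S the document d ∈ D \ S with the largest $\sum_{c} g(d \mid q, c)$, where $g(d \mid q, c) = U(c \mid q)\, V(d \mid q, c)$, then set $U(c \mid q) \leftarrow \bigl(1 - V(d \mid q, c)\bigr)\, U(c \mid q)$ • Example: intent distribution P(R | q) = 0.8, P(B | q) = 0.2; three R documents with V(d | q, R) = 0.9, 0.5, 0.4 and two B documents with V(d | q, B) = 0.4, 0.4 • Step 1: g = 0.8 × 0.9 = 0.72, 0.40, 0.32 for the R docs and 0.2 × 0.4 = 0.08 for each B doc; pick the 0.9 R doc, so U(R | q) = 0.8 × 0.1 = 0.08 • Step 2: g = 0.04 and ≈ 0.03 for the remaining R docs and 0.08 for each B doc; pick a B doc, so U(B | q) = 0.2 × 0.6 = 0.12 • Step 3: g = 0.04 and ≈ 0.03 for the R docs and 0.12 × 0.4 ≈ 0.05 for the last B doc; pick it, so U(B | q) ≈ 0.07 • Actually produces an ordered set of results • Results are not proportional to the intent distribution • Results are not ordered by (raw) quality • Better results ⇒ less needs to be shown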
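The greedy procedure on this slide can be written in a few lines. This is a minimal sketch under the slide's own bookkeeping. U(c | q) starts at P(c | q). Each step picks the document with the largest Σ_c U(c | q) V(d | q, c). U is then multiplied by (1 − V). The function name `greedy_diversify`, the document ids, and the tie-breaking rule (the first candidate wins) are illustrative choices, not taken from the talk.

```python
def greedy_diversify(docs, intent_dist, quality, k):
    """Greedy selection for Diversify(k) using the U / g bookkeeping from the slide.

    docs:        candidate document ids (the set D), in a fixed order used for tie-breaking
    intent_dist: dict mapping intent c -> P(c | q)
    quality:     dict mapping (doc, intent) -> V(d | q, c); missing pairs are treated as 0
    Returns (selected documents in pick order, final U(c | q) values).
    """
    # U(c | q): probability that intent c is still unsatisfied by the documents picked so far
    u = dict(intent_dist)
    remaining = list(docs)
    selected = []
    while remaining and len(selected) < k:
        # marginal utility: sum over intents of g(d | q, c) = U(c | q) * V(d | q, c);
        # max() returns the first candidate among ties
        best = max(remaining,
                   key=lambda d: sum(u[c] * quality.get((d, c), 0.0) for c in u))
        selected.append(best)
        remaining.remove(best)
        # the picked document satisfies intent c with probability V, so discount U accordingly
        for c in u:
            u[c] *= 1.0 - quality.get((best, c), 0.0)
    return selected, u


if __name__ == "__main__":
    intents = {"R": 0.8, "B": 0.2}
    V = {("r1", "R"): 0.9, ("r2", "R"): 0.5, ("r3", "R"): 0.4,
         ("b1", "B"): 0.4, ("b2", "B"): 0.4}
    order, u = greedy_diversify(["r1", "r2", "r3", "b1", "b2"], intents, V, k=3)
    print(order)  # ['r1', 'b1', 'b2']
    print(u)
```

The resulting order matches the worked example. The strong R document comes first, then both B documents. This happens even though the 0.5-quality R document has higher raw quality than either B document.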

Formal Claims • Lemma 1: P(S | q) is submodular • Same intuition as diminishing returns • For sets of documents S ⊆ T and a document d ∉ T, $P(S \cup \{d\} \mid q) - P(S \mid q) \ge P(T \cup \{d\} \mid q) - P(T \mid q)$ • Theorem 1: The greedy solution is a (1 − 1/e)-approximation of the optimum • Consequence of Lemma 1 and [NWF78] • Theorem 2: The greedy solution is optimal when each document can satisfy only one category • Relative quality of docs does not change
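Both claims can be spot-checked numerically on a tiny instance. The sketch below reuses the example values from the greedy slide with hypothetical document ids. It checks the diminishing-returns inequality of Lemma 1 for every S ⊆ T and d ∉ T. It also compares greedy selection against exhaustive search over all k-subsets. Every document here satisfies a single category, so Theorem 2 predicts that the two agree. Exhaustive search is exponential and only usable at this toy scale, which is why the approximation guarantee matters. Greedy is written here in terms of marginal gains of P(S | q). Those gains equal Σ_c U(c | q) V(d | q, c), so this is the same procedure as the U / g formulation.

```python
from itertools import combinations
from math import prod


def prob_set_relevant(selected, intent_dist, quality):
    # P(S | q) = sum_c P(c | q) * (1 - prod_{d in S} (1 - V(d | q, c)))
    selected = list(selected)
    return sum(p * (1.0 - prod(1.0 - quality.get((d, c), 0.0) for d in selected))
               for c, p in intent_dist.items())


def marginal_gain(selected, d, intent_dist, quality):
    # P(S + {d} | q) - P(S | q)
    return (prob_set_relevant(list(selected) + [d], intent_dist, quality)
            - prob_set_relevant(selected, intent_dist, quality))


def greedy(docs, intent_dist, quality, k):
    # repeatedly add the document with the largest marginal gain in P(S | q)
    chosen = []
    for _ in range(min(k, len(docs))):
        rest = [d for d in docs if d not in chosen]
        chosen.append(max(rest, key=lambda d: marginal_gain(chosen, d, intent_dist, quality)))
    return chosen


def optimum(docs, intent_dist, quality, k):
    # exhaustive search over all k-subsets (exponential; toy sizes only)
    return max(combinations(docs, k), key=lambda s: prob_set_relevant(s, intent_dist, quality))


def diminishing_returns_holds(docs, intent_dist, quality, tol=1e-12):
    # Lemma 1: gain(S, d) >= gain(T, d) whenever S is a subset of T and d is not in T
    for t_size in range(len(docs)):
        for t in combinations(docs, t_size):
            for s_size in range(t_size + 1):
                for s in combinations(t, s_size):
                    for d in docs:
                        if d in t:
                            continue
                        if (marginal_gain(s, d, intent_dist, quality)
                                < marginal_gain(t, d, intent_dist, quality) - tol):
                            return False
    return True


if __name__ == "__main__":
    intents = {"R": 0.8, "B": 0.2}
    V = {("r1", "R"): 0.9, ("r2", "R"): 0.5, ("r3", "R"): 0.4,
         ("b1", "B"): 0.4, ("b2", "B"): 0.4}
    docs = ["r1", "r2", "r3", "b1", "b2"]
    print(diminishing_returns_holds(docs, intents, V))
    for k in range(1, len(docs) + 1):
        g = prob_set_relevant(greedy(docs, intents, V, k), intents, V)
        o = prob_set_relevant(optimum(docs, intents, V, k), intents, V)
        print(k, round(g, 4), round(o, 4))
```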

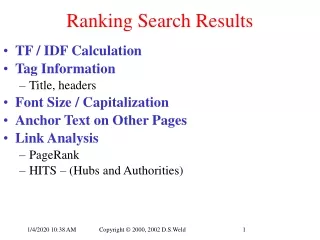

How to Measure Success? • Many metrics for relevance • Normalized discounted cumulative gain at k (NDCG@k) • Mean average precision at k (MAP@k) • Mean reciprocal rank (MRR) • Some metrics for diversity • Maximal marginal relevance (MMR) [CG98] • Nugget-based instantiation of NDCG [C+08] • Want a metric that can take into account both relevance and diversity [JK00]

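Generalizing Relevance Metrics • Take the expectation over the distribution of intents • Interpretation: how will the average user feel? • Consider NDCG@k • Classic: NDCG(S, k) • Intent-aware: $\text{NDCG-IA}(S, k) = \sum_{c} P(c \mid q)\, \text{NDCG}(S, k \mid c)$ • NDCG-IA depends on the intent distribution and the intent-specific NDCG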
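Here is a minimal sketch of the intent-aware generalization. It assumes graded judgments per (document, intent) pair and the gain f(rel) = 2^rel and discount(j) = 1 + log2(j) listed in the experiment details. It also assumes an ideal ranking built by sorting the grades of every candidate document for that intent, with unjudged documents given grade 0. The function names, grades, and document ids are hypothetical, and other normalization choices are possible.

```python
from math import log2


def dcg(grades, k):
    # gain f(rel) = 2 ** rel, discount(j) = 1 + log2(j) for rank j = 1, 2, ...
    return sum(2 ** g / (1 + log2(j)) for j, g in enumerate(grades[:k], start=1))


def ndcg(grades, pool, k):
    # normalize by the DCG of the best possible ordering of the candidate pool's grades
    ideal = dcg(sorted(pool, reverse=True), k)
    return dcg(grades, k) / ideal if ideal > 0 else 0.0


def ndcg_ia(ranking, candidates, intent_dist, grades_by_intent, k):
    """NDCG-IA(S, k) = sum_c P(c | q) * NDCG(S, k | c).

    ranking:          ranked document ids (a subset of candidates)
    candidates:       all candidate document ids, used to build the ideal ranking per intent
    grades_by_intent: dict intent -> {doc: grade}; unjudged docs get grade 0 (an assumption)
    """
    total = 0.0
    for c, p_c in intent_dist.items():
        judged = grades_by_intent.get(c, {})
        ranked = [judged.get(d, 0) for d in ranking]
        pool = [judged.get(d, 0) for d in candidates]
        total += p_c * ndcg(ranked, pool, k)
    return total


if __name__ == "__main__":
    intents = {"R": 0.8, "B": 0.2}
    grades = {"R": {"r1": 3, "r2": 1, "b1": 0}, "B": {"r1": 0, "r2": 0, "b1": 2}}
    cands = ["r1", "r2", "b1"]
    print(ndcg_ia(["r1", "b1", "r2"], cands, intents, grades, 3))
    print(ndcg_ia(["r2", "b1", "r1"], cands, intents, grades, 3))
```

Because each intent-specific NDCG is weighted by P(c | q), a ranking that serves the dominant intent well scores higher. The minority intent still contributes its share of the score.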

Setup • 10,000 queries randomly sampled from logs • Queries classified according to ODP (level 2) [F+08] • Keep only queries with at least two intents (~900) • Top 50 results from Live, Google, and Yahoo! • Documents are rated on a 5-point scale • >90% of docs have ratings • Docs without ratings are assigned a random grade drawn from the distribution of rated documents
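The slide does not spell out how the random grades are drawn. One simple reading, sketched below, samples each missing grade with probability proportional to how often that grade occurs among rated documents. The function name, the document ids, and the example grades are hypothetical. `random.Random(seed)` is used only to make runs reproducible.

```python
import random
from collections import Counter


def impute_missing_grades(ratings, docs, rng=None):
    """Fill in a grade for every unrated doc by sampling the empirical grade distribution.

    ratings: dict doc -> grade for the rated documents
    docs:    all documents that need a grade
    rng:     optional random.Random instance (pass a seeded one for reproducibility)
    """
    if not ratings:
        raise ValueError("need at least one rated document to estimate the grade distribution")
    if rng is None:
        rng = random.Random()
    counts = Counter(ratings.values())
    grades = list(counts)
    weights = [counts[g] for g in grades]  # sampling probability proportional to frequency
    filled = dict(ratings)
    for d in docs:
        if d not in filled:
            filled[d] = rng.choices(grades, weights=weights, k=1)[0]
    return filled


if __name__ == "__main__":
    rated = {"d1": 4, "d2": 4, "d3": 2, "d4": 0}
    print(impute_missing_grades(rated, ["d1", "d2", "d3", "d4", "d5"], random.Random(0)))
```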

Experiment Detail • Documents are classified using a Rocchio classifier • Assumes that each doc belongs to only one category • Quality scores of documents are estimated based on textual and link features of the webpage • Our approach is agnostic to how quality is determined • Can be interpreted as a re-ordering of search results that takes into account ambiguities in queries • Evaluation using generalized NDCG, MAP, and MRR • $f(\mathrm{rel}(d)) = 2^{\mathrm{rel}(d)}$; $\mathrm{discount}(j) = 1 + \log_2 j$ • Take P(c | q) as ground truth
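MAP and MRR generalize the same way as NDCG. Compute the metric per intent and average it under P(c | q), which is taken as ground truth here. The sketch below assumes binary relevance per intent, for example a grade at or above some threshold; the talk does not give the threshold. It passes the relevant set for each intent directly. It normalizes AP@k by min(#relevant, k), which is one common convention among several. All names and ids are hypothetical. Averaging these per-query values over all queries gives the "mean" in MRR and MAP.

```python
def reciprocal_rank(ranking, relevant, k):
    # 1 / rank of the first relevant document within the top k, else 0
    for j, d in enumerate(ranking[:k], start=1):
        if d in relevant:
            return 1.0 / j
    return 0.0


def average_precision(ranking, relevant, k):
    # sum of precision@j at each relevant rank j <= k, divided by min(#relevant, k)
    hits, total = 0, 0.0
    for j, d in enumerate(ranking[:k], start=1):
        if d in relevant:
            hits += 1
            total += hits / j
    denom = min(len(relevant), k)
    return total / denom if denom else 0.0


def intent_aware(metric, ranking, intent_dist, relevant_by_intent, k):
    # expectation of a per-intent metric over the intent distribution P(c | q)
    return sum(p_c * metric(ranking, relevant_by_intent.get(c, set()), k)
               for c, p_c in intent_dist.items())


if __name__ == "__main__":
    intents = {"R": 0.8, "B": 0.2}
    relevant = {"R": {"r1"}, "B": {"b1"}}
    ranking = ["r1", "b1", "r2"]
    print(intent_aware(reciprocal_rank, ranking, intents, relevant, 3))    # MRR-IA, one query
    print(intent_aware(average_precision, ranking, intents, relevant, 3))  # MAP-IA, one query
```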

Evaluation using Mechanical Turk • Created two types of HITs on Mechanical Turk • Query classification: workers are asked to choose among three interpretations • Document rating (under the given interpretation) • Two additional evaluations • MT classification + current ratings • MT classification + MT document ratings

Concluding Remarks • Theoretical approach to diversification, supported by empirical evaluation • What to show is a function of both the intent distribution and the quality of documents • Less is needed when quality is high • The approach offers additional flexibility • Not tied to any taxonomy • Can make use of context as well

Future Work • When is it right to diversify? • Users have certain expectations about the workings of a search engine • What is the best way to diversify? • Evaluate approaches beyond diversifying the retrieved results • Metrics that capture both relevance and diversity • Some preliminary work suggests that there will be certain trade-offs to make

Thanks {rakesha, sreenig, alanhal, sieong}@microsoft.com