Sentiment Analysis

Sentiment Analysis From Bing Liu and Moshe Koppel’s slides

Facts and Opinions • Two main types of textual information on the Web. • Facts and Opinions • Current search engines search for facts • Facts can be expressed with topic keywords. • Search engines do not search for opinions • Opinions are hard to express with a few keywords • What do people think of Motorola cell phones? • Current search ranking strategy is not appropriate for opinion retrieval/search.

Two types of evaluations • Direct Opinions: sentiment expressions on some objects, e.g., products, events, topics, persons. • E.g., “the picture quality of this camera is great” • Comparisons: relations expressing similarities or differences of more than one object. Usually expressing an ordering. • E.g., “car x is cheaper than car y.”

Opinion search (Liu, Web Data Mining book, 2007) • Can you search for opinions as conveniently as general Web search? • Whenever you need to make a decision, you may want some opinions from others • Wouldn’t it be nice if you could find them on a search system instantly, by issuing queries such as • Opinions: “Motorola cell phones” • Comparisons: “Motorola vs. Nokia” • Cannot be done yet! (but could be soon …)

Introduction • Two main types of textual information. • Facts and Opinions • Most current text information processing methods (e.g., web search, text mining) work with factual information. • Sentiment analysis or opinion mining: the computational study of opinions, sentiments and emotions expressed in text. • Why opinion mining now? Mainly because of the Web; huge volumes of opinionated text. Bing Liu, 5th Text Analytics Summit, Boston, June 1-2, 2009

Introduction – user-generated media • Importance of opinions: • Opinions are so important that whenever we need to make a decision, we want to hear others’ opinions. • In the past, • Individuals: opinions from friends and family • Businesses: surveys, focus groups, consultants … • Word-of-mouth on the Web • User-generated media: One can express opinions on anything in reviews, forums, discussion groups, blogs ... • Opinions on a global scale: No longer limited to: • Individuals: one’s circle of friends • Businesses: small-scale surveys, tiny focus groups, etc.

An Example Review • “I bought an iPhone a few days ago. It was such a nice phone. The touch screen was really cool. The voice quality was clear too. Although the battery life was not long, that is ok for me. However, my mother was mad with me as I did not tell her before I bought the phone. She also thought the phone was too expensive, and wanted me to return it to the shop. …” • What do we see? • Opinion holder, target of opinion, opinion

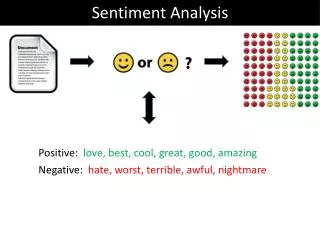

Sentiment Analysis Determine if a sentence/document expresses positive/negative/neutral sentiment towards some object

Some Applications • Review classification: Is a review positive or negative toward the movie? • Product review mining: What features of the ThinkPad T43 do customers like/dislike? • Tracking sentiments toward topics over time: Is anger increasing or cooling down? • Prediction (election outcomes, market trends): Will McCain or Obama win?

Challenges • If we are using a general search engine, how to indicate that we are looking for opinions? • How to find the parts in a document that contain opinion/review material? • Sentiment analysis of relevant text • Presenting the results in a meaningful way: • summary • negative/positive • aspectwise

Text categorization • Classify a set of documents as belonging to several categories according to their topics • Sentiment analysis • Classify a document or text as positive, negative, neutral, or assign 3-star etc. • Regression-like nature of strength of feeling is unique to sentiment analysis • Techniques should work across various domains

Level of Analysis We can inquire about sentiment at various linguistic levels: • Words – objective, positive, negative, neutral • Clauses – “going out of my mind” (go crazy) • Sentences – possibly multiple sentiments • Documents

Opinion mining tasks At the document (or review) level: • Task: sentiment classification of reviews • Classes: positive, negative, and neutral • Assumption: each document (or review) focuses on a single object (not true in many discussion posts) and contains opinion from a single opinion holder. At the sentence level: • Task 1: identifying subjective/opinionated sentences • Classes: objective and subjective (opinionated) • Task 2: sentiment classification of sentences • Classes: positive, negative and neutral. • Assumption: a sentence contains only one opinion; not true in many cases. • Otherwise we can also consider clauses or phrases.

Opinion Mining Tasks (cont.) At the feature level: • Task 1: Identify and extract object features that have been commented on by an opinion holder (e.g., a reviewer). • Task 2: Determine whether the opinions on the features are positive, negative or neutral. • Task 3: Group feature synonyms. • Produce a feature-based opinion summary of multiple reviews. Opinion holders: identifying holders is also useful, e.g., in news articles, but they are usually known in user-generated content, i.e., they are the authors of the posts.

Words • Adjectives • objective: red, metallic • positive: honest, important, mature, large, patient • negative: harmful, hypocritical, inefficient • subjective (but not positive or negative): curious, peculiar, odd, likely, probable

Words • Verbs • positive: praise, love • negative: blame, criticize • subjective: predict • Nouns • positive: pleasure, enjoyment • negative: pain, criticism • subjective: prediction, feeling

Clauses • Might flip word sentiment: “not good at all”, “not all good” • Might express sentiment not contained in any of the words: “convinced my watch had stopped”, “got up and walked out”

Sentences/Documents Might express multiple sentiments “The acting was great but the story was a bore” Problem even more severe at document level

Two Approaches to Classifying Documents • Bottom-Up • Assign sentiment to words • Derive clause sentiment from word sentiment • Derive document sentiment from clause sentiment • Top-Down • Get labeled documents • Use usual text categorization methods to learn models • Derive word/clause sentiment from models
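
The top-down route can be sketched with a small bag-of-words Naive Bayes classifier. This is only one possible "usual text categorization method"; the slides do not name a specific learner, and the training sentences, function names and the smoothing constant `alpha` below are illustrative assumptions. The last function shows how a word-level orientation might be read back out of the learned model.

```python
import math
from collections import Counter

def train_naive_bayes(labeled_docs, alpha=1.0):
    """Fit a bag-of-words Naive Bayes model on (text, label) pairs."""
    word_counts = {}        # label -> Counter of word frequencies
    doc_counts = Counter()  # label -> number of training documents
    vocab = set()
    for text, label in labeled_docs:
        tokens = text.lower().split()
        word_counts.setdefault(label, Counter()).update(tokens)
        doc_counts[label] += 1
        vocab.update(tokens)
    return {"word_counts": word_counts, "doc_counts": doc_counts,
            "vocab": vocab, "alpha": alpha}

def word_log_prob(model, word, label):
    """Add-alpha smoothed log p(word | label)."""
    counts = model["word_counts"][label]
    total = sum(counts.values()) + model["alpha"] * len(model["vocab"])
    return math.log((counts[word] + model["alpha"]) / total)

def classify(model, text):
    """Return the label with the highest posterior score for the text."""
    n_docs = sum(model["doc_counts"].values())
    scores = {}
    for label, n in model["doc_counts"].items():
        score = math.log(n / n_docs)
        for tok in text.lower().split():
            if tok in model["vocab"]:  # unseen words are skipped
                score += word_log_prob(model, tok, label)
        scores[label] = score
    return max(scores, key=scores.get)

def word_orientation(model, word):
    """Word sentiment derived from the model: log-odds of pos vs. neg."""
    return word_log_prob(model, word, "pos") - word_log_prob(model, word, "neg")

# Hypothetical toy training set, not data from the slides.
TRAIN = [
    ("great acting and a great story", "pos"),
    ("a fun and moving film", "pos"),
    ("the story was a bore", "neg"),
    ("terrible acting and a dull plot", "neg"),
]
model = train_naive_bayes(TRAIN)
print(classify(model, "great fun"))
print(word_orientation(model, "bore"))
```

A real system would need far more labeled data and a proper tokenizer; the point here is only the direction of inference, from document labels down to words.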

Some Special Issues Whose opinion? Opinion about what?

Some Special Issues Whose opinion? (Writer) (writer, Xirao-Nima, US) (writer, Xirao-Nima) “The US fears a spill-over’’, said Xirao-Nima, a professor of foreign affairs at the Central University for Nationalities.

Laptop Review I should say that I am a normal user and this laptop satisfied all my expectations, the screen size is perfect, its very light, powerful, bright, lighter, elegant, delicate... But the only think that I regret is the Battery life, barely 2 hours... some times less... it is too short... this laptop for a flight trip is not good companion... Even the short battery life I can say that I am very happy with my Laptop VAIO and I consider that I did the best decision. I am sure that I did the best decision buying the SONY VAIO

Word Sentiment • Let’s try something simple • Choose a few seeds with known sentiment • Mark synonyms of good seeds: good • Mark synonyms of bad seeds: bad • Iterate • Does not quite work: exceptional -> unusual -> weird
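
A minimal sketch of the seed-propagation idea and of the drift it suffers from. The synonym graph below is a hypothetical toy stand-in for a thesaurus, and `propagate` and `max_hops` are names invented for this illustration.

```python
from collections import deque

# Hypothetical toy synonym graph (word -> listed synonyms).
SYNONYMS = {
    "good": ["fine", "exceptional"],
    "exceptional": ["unusual"],
    "unusual": ["weird"],
    "bad": ["poor", "awful"],
}

def propagate(seeds, synonyms, max_hops):
    """Breadth-first labelling: a word inherits the label of the seed
    it is reached from, as long as it lies within max_hops synonym steps."""
    labels = dict(seeds)
    queue = deque((word, 0) for word in seeds)
    while queue:
        word, hops = queue.popleft()
        if hops == max_hops:
            continue
        for syn in synonyms.get(word, []):
            if syn not in labels:
                labels[syn] = labels[word]
                queue.append((syn, hops + 1))
    return labels

seeds = {"good": "good", "bad": "bad"}
labels = propagate(seeds, SYNONYMS, max_hops=3)
print(labels["weird"])  # "weird" ends up marked good after three hops
```

Capping the number of hops limits the drift but also limits coverage, which is one motivation for the corpus-based methods that follow.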

Better Idea: Hatzivassiloglou & McKeown 1997 1. Build training set: label all adj. with frequency > 20; test agreement with human annotators 2. Extract all conjoined adjectives, e.g., “nice and comfortable”, “nice and scenic”

Hatzivassiloglou & McKeown 1997 3. A supervised learning algorithm builds a graph of adjectives linked by the same or different semantic orientation [Figure: graph over the adjectives scenic, nice, terrible, painful, handsome, fun, expensive, comfortable]

Hatzivassiloglou & McKeown 1997 4. A clustering algorithm partitions the adjectives into two subsets [Figure: the adjectives slow, scenic, nice, terrible, handsome, painful, fun, expensive, comfortable split into two groups, one marked +]
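
To make steps 3 and 4 concrete, here is a deliberately simplified sketch: it takes links that are already labeled "same" or "different" orientation and splits the adjectives into two groups by walking the graph. This is a plain two-colouring, not the supervised link classifier or the clustering algorithm of the paper, and apart from "nice and comfortable" and "nice and scenic" the links are invented for illustration.

```python
from collections import deque

# (adjective, adjective, same_orientation). Mostly hypothetical links.
LINKS = [
    ("nice", "comfortable", True),
    ("nice", "scenic", True),
    ("nice", "terrible", False),
    ("terrible", "painful", True),
    ("handsome", "fun", True),
    ("fun", "nice", True),
    ("expensive", "comfortable", False),
]

def partition(links, start):
    """Split the adjectives connected to `start` into two groups so that
    'same' links stay inside a group and 'different' links cross groups."""
    graph = {}
    for a, b, same in links:
        graph.setdefault(a, []).append((b, same))
        graph.setdefault(b, []).append((a, same))
    side = {start: 0}
    queue = deque([start])
    while queue:
        word = queue.popleft()
        for other, same in graph[word]:
            if other not in side:
                side[other] = side[word] if same else 1 - side[word]
                queue.append(other)
            # Contradictory links are silently ignored in this sketch.
    group_a = {w for w, s in side.items() if s == 0}
    group_b = {w for w, s in side.items() if s == 1}
    return group_a, group_b

group_a, group_b = partition(LINKS, "nice")
print(sorted(group_a))
print(sorted(group_b))
```

The sketch only says which words belong together; deciding which of the two groups is the positive one needs extra information, such as a single adjective of known orientation.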

Even Better Idea: Turney 2001 Pointwise Mutual Information (Church and Hanks, 1989): $word_i$ is the presence of word $i$ in a given text window. Probabilities under independence: • $p(word_1, word_2) = p(word_1)\,p(word_2)$ • $p(word_1, \neg word_2) = p(word_1)\,p(\neg word_2)$ • $p(\neg word_1, word_2) = p(\neg word_1)\,p(word_2)$ • $p(\neg word_1, \neg word_2) = p(\neg word_1)\,p(\neg word_2)$
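
The PMI formula itself is not preserved in this transcript. The standard definition, stated here from the general literature rather than from the slide and with $x$ and $y$ standing for the two words, is:

```latex
\mathrm{PMI}(x, y) = \log_2 \frac{p(x, y)}{p(x)\, p(y)}
```

Under independence the numerator equals the denominator, so the ratio is 1 and its logarithm is 0.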

PMI • This quantity is • zero if x and y are independent, • positive if they are positively correlated, • negative if they are negatively correlated. • Positive values indicate that two words occur together more often than they would under independence. • In other words, if two words are highly associated, their PMI will be high.
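
As a rough illustration of these properties, the sketch below estimates PMI from text windows using the standard definition, log2 of p(x, y) / (p(x) p(y)). The windows are hypothetical toy data, probabilities are plain relative frequencies, and `pmi_table` is a name made up for this example.

```python
import math
from collections import Counter
from itertools import combinations

def pmi_table(windows):
    """Return a pmi(x, y) function estimated from a list of text windows.
    A word counts once per window, matching 'presence in a window'."""
    n = len(windows)
    word_count = Counter()
    pair_count = Counter()
    for window in windows:
        present = sorted(set(window))
        word_count.update(present)
        pair_count.update(combinations(present, 2))

    def pmi(x, y):
        joint = pair_count[tuple(sorted((x, y)))] / n
        if joint == 0:
            return float("-inf")  # the two words were never seen together
        return math.log2(joint / ((word_count[x] / n) * (word_count[y] / n)))

    return pmi

# Hypothetical toy windows.
associated = pmi_table([["great", "camera"], ["great", "camera"],
                        ["poor", "battery"], ["poor", "battery"]])
independent = pmi_table([["a", "b"], ["a"], ["b"], []])

print(associated("great", "camera"))   # 1.0: together more than chance
print(independent("a", "b"))           # 0.0: exactly as often as chance
print(associated("great", "battery"))  # -inf: never together
```

With real corpora the counts are sparse, so some form of smoothing is usually needed before taking the logarithm.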

Even Better Idea: Turney 2001 Pointwise Mutual Information (Church and Hanks, 1989): Semantic Orientation: Of the well-known positive and negative words, with which group does this new word have a stronger association? Read the paper PointwiseMutualInfo-Waterloo-2003.pdf for this and other measures.

Even Better Idea Turney 2001 Pointwise Mutual Information (Church and Hanks, 1989): Semantic Orientation: PMI-IR estimates PMI by issuing queries to a search engine
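
A hedged sketch of the PMI-IR idea: hit counts returned by a search engine stand in for probabilities. No real search API is called here; the `HITS` dictionary, the index size `TOTAL_PAGES`, the smoothing constant and the choice of "excellent" and "poor" as reference words are all assumptions made for the example.

```python
import math

TOTAL_PAGES = 1_000_000  # hypothetical size of the search index

# Hypothetical hit counts: single words, and (word, reference) pairs
# for pages where the two occur near each other.
HITS = {
    "excellent": 1000, "poor": 1000,
    "scenic": 200, "painful": 200,
    ("scenic", "excellent"): 40, ("scenic", "poor"): 5,
    ("painful", "excellent"): 4, ("painful", "poor"): 32,
}

def pmi_ir(word, reference, hits, total_pages=TOTAL_PAGES, smooth=0.01):
    """Estimate PMI(word, reference) from hit counts.
    `smooth` avoids log(0) when the pair is never found together."""
    joint = hits.get((word, reference), 0) + smooth
    return math.log2(joint * total_pages / (hits[word] * hits[reference]))

def orientation(word, hits, pos="excellent", neg="poor"):
    """Positive if the word associates more with `pos` than with `neg`."""
    return pmi_ir(word, pos, hits) - pmi_ir(word, neg, hits)

print(orientation("scenic", HITS) > 0)
print(orientation("painful", HITS) > 0)
```

Because the same word appears in both PMI terms, its own hit count and the index size cancel in the difference, so only the pair counts and the reference-word counts affect the result.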

PMI • In Turney, ACL 2002 this was used as a way of assessing the semantic orientation of words or phrases. • Specifically, the semantic orientation of x was defined as: • SO(x) = PMI(x,'excellent') − PMI(x,'poor') • In more detail, Turney interpreted "X=x and Y=y" as an event where two words x and y occur nearby in the same document, and "X=x" as an event where word x occurs in a document. • After some simplification, SO(x) can then be written as:
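
The expression is missing from this transcript. The form given in Turney (ACL 2002), reproduced here from that paper rather than from the slide, uses search-engine hit counts, where NEAR denotes co-occurrence within a small window:

```latex
\mathrm{SO}(x) = \log_2 \frac{\mathrm{hits}(x \ \mathrm{NEAR}\ \text{``excellent''}) \cdot \mathrm{hits}(\text{``poor''})}
                             {\mathrm{hits}(x \ \mathrm{NEAR}\ \text{``poor''}) \cdot \mathrm{hits}(\text{``excellent''})}
```

The hit count of $x$ itself and the total number of documents appear in both PMI terms and cancel when the two are subtracted.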

Resources • These -- and related -- methods have been used to generate sentiment dictionaries • Sentinet • General Enquirer • …

Bottom-Up: Words to Clauses Assume we know the “polarity” of a word Does its context flip its polarity?

Prior Polarity versus Contextual Polarity (Wilson et al 2005) • Prior polarity: out of context, positive or negative • wonderful: positive • horrid: negative • Contextual polarity: A word may appear in a phrase with a different polarity • “Cheers to Timothy Whitfield for the wonderfully horrid visuals.”
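
One very simple way to let context change a prior polarity is to flip it when a negation word appears shortly before. The sketch below does only that; the lexicon, the negator list and the two-token scope are assumptions for illustration, and it is not the method of Wilson et al.

```python
# Hypothetical prior-polarity lexicon: +1 positive, -1 negative.
PRIOR = {"good": 1, "wonderful": 1, "great": 1, "horrid": -1, "bad": -1}
NEGATORS = {"not", "never", "no"}

def clause_polarity(clause, scope=2):
    """Sum word polarities, flipping a word's prior polarity when a
    negator occurs within `scope` tokens before it."""
    tokens = clause.lower().split()
    total = 0
    for i, tok in enumerate(tokens):
        polarity = PRIOR.get(tok, 0)
        if polarity and any(t in NEGATORS for t in tokens[max(0, i - scope):i]):
            polarity = -polarity
        total += polarity
    return total

print(clause_polarity("good"))                       # 1
print(clause_polarity("not good at all"))            # -1
print(clause_polarity("wonderfully horrid visuals")) # -1
```

The last call shows the limits of such a rule: the sketch scores "wonderfully horrid visuals" as negative, although in the quoted sentence the phrase is praise.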

Target Object (Liu, Web Data Mining book, 2006) • Definition (object): An object o is a product, person, event, organization, or topic. o is represented as • a hierarchy of components, sub-components, and so on. • Each node represents a component and is associated with a set of attributes of the component. • An opinion can be expressed on any node or attribute of the node. • To simplify our discussion, we use the term features to represent both components (nodes) and attributes.

What is an Opinion? (Liu, a Ch. in NLP handbook) • An opinion is a quintuple $(o_j, f_{jk}, so_{ijkl}, h_i, t_l)$, where • $o_j$ is a target object. • $f_{jk}$ is a feature of the object $o_j$. • $so_{ijkl}$ is the sentiment value of the opinion of the opinion holder $h_i$ on feature $f_{jk}$ of object $o_j$ at time $t_l$. $so_{ijkl}$ is +ve, -ve, or neu, or a more granular rating. • $h_i$ is an opinion holder. • $t_l$ is the time when the opinion is expressed.
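
As a sketch of how the quintuple could be held as structured data, the record type below has one field per component. The class and field names are invented for this illustration, and the four records are a hand annotation of the iPhone example review, not the output of any system.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Opinion:
    target: str                 # o_j: the object the opinion is about
    feature: str                # f_jk: the feature of that object
    sentiment: str              # so_ijkl: "+ve", "-ve" or "neu"
    holder: str                 # h_i: who holds the opinion
    time: Optional[str] = None  # t_l: left empty, the review gives no date

# Hand-annotated quintuples for the example review (illustrative only).
REVIEW_OPINIONS = [
    Opinion("iPhone", "touch screen", "+ve", "author"),
    Opinion("iPhone", "voice quality", "+ve", "author"),
    Opinion("iPhone", "battery life", "-ve", "author"),
    Opinion("iPhone", "price", "-ve", "author's mother"),
]

holders = {op.holder for op in REVIEW_OPINIONS}
print(sorted(holders))
```

Even this short review needs two different holders, which is why the holder is part of the definition rather than assumed to be the author.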

Objective – structure the unstructured • Objective: Given an opinionated document, • Discover all quintuples $(o_j, f_{jk}, so_{ijkl}, h_i, t_l)$, i.e., mine the five corresponding pieces of information in each quintuple, • Or, solve some simpler problems • With the quintuples, • Unstructured Text → Structured Data • Traditional data and visualization tools can be used to slice, dice and visualize the results in all kinds of ways • Enable qualitative and quantitative analysis.

Sentiment Classification: doc-level (Pang and Lee, Survey, 2008) • Classify a document (e.g., a review) based on the overall sentiment expressed by the opinion holder • Classes: positive or negative • Assumption: each document focuses on a single object and contains opinions from a single opinion holder. • E.g., thumbs-up or thumbs-down? • “I bought an iPhone a few days ago. It was such a nice phone. The touch screen was really cool. The voice quality was clear too. Although the battery life was not long, that is ok for me. However, my mother was mad with me as I did not tell her before I bought the phone. She also thought the phone was too expensive, and wanted me to return it to the shop. …”

Subjectivity Analysis: sent.-level (Wiebe et al 2004) • Sentence-level sentiment analysis has two tasks: • Subjectivity classification: Subjective or objective. • Objective: e.g., I bought an iPhone a few days ago. • Subjective: e.g., It is such a nice phone. • Sentiment classification: For subjective sentences or clauses, classify positive or negative. • Positive: It is such a nice phone. • But (Liu, a Chapter in NLP handbook) • Subjective sentences ≠ +ve or –ve opinions • E.g., I think he came yesterday. • Objective sentences ≠ no opinion • Implies a –ve opinion: The phone broke in two days

Feature-Based Sentiment Analysis • Sentiment classification at the document and sentence (or clause) levels is not enough, • it does not tell what people like and/or dislike • A positive opinion on an object does not mean that the opinion holder likes everything. • A negative opinion on an object does not mean ….. • Objective (recall): Discovering all quintuples $(o_j, f_{jk}, so_{ijkl}, h_i, t_l)$ • With all quintuples, all kinds of analyses become possible.

Feature-Based Opinion Summary (Hu & Liu, KDD-2004) • “I bought an iPhone a few days ago. It was such a nice phone. The touch screen was really cool. The voice quality was clear too. Although the battery life was not long, that is ok for me. However, my mother was mad with me as I did not tell her before I bought the phone. She also thought the phone was too expensive, and wanted me to return it to the shop. …” …. • Feature Based Summary: • Feature1: Touch screen • Positive: 212 • The touch screen was really cool. • The touch screen was so easy to use and can do amazing things. … • Negative: 6 • The screen is easily scratched. • I have a lot of difficulty in removing finger marks from the touch screen. … • Feature2: battery life … • Note: We omit opinion holders
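
A minimal sketch of how such a summary could be assembled once each opinion sentence has already been assigned a feature and a polarity. That assignment is the hard part and is simply assumed here; the tuples below are hand-labeled examples taken from the slide, and `summarize` is a hypothetical helper name.

```python
from collections import defaultdict

def summarize(opinions):
    """Group (feature, sentiment, sentence) triples by feature and polarity."""
    summary = defaultdict(lambda: {"+ve": [], "-ve": []})
    for feature, sentiment, sentence in opinions:
        summary[feature][sentiment].append(sentence)
    return summary

# Hand-labeled triples (illustrative only).
OPINIONS = [
    ("touch screen", "+ve", "The touch screen was really cool."),
    ("touch screen", "+ve",
     "The touch screen was so easy to use and can do amazing things."),
    ("touch screen", "-ve", "The screen is easily scratched."),
    ("battery life", "-ve",
     "Although the battery life was not long, that is ok for me."),
]

summary = summarize(OPINIONS)
for feature, groups in summary.items():
    print(feature, "| positive:", len(groups["+ve"]),
          "| negative:", len(groups["-ve"]))
```

Keeping the supporting sentences alongside the counts lets a reader drill down from a number such as "Negative: 6" to the actual complaints.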

Visual Comparison (Liu et al. WWW-2005) • Summary of reviews of Cell Phone 1 • Comparison of reviews of Cell Phone 1 and Cell Phone 2 [Figure: bar charts of positive (+) and negative (_) opinions for the features Voice, Screen, Battery, Size, Weight]

Feat.-based opinion summary in Bing

Sentiment Analysis is Hard! • “This past Saturday, I bought a Nokia phone and my girlfriend bought a Motorola phone with Bluetooth. We called each other when we got home. The voice on my phone was not so clear, worse than my previous phone. The battery life was long. My girlfriend was quite happy with her phone. I wanted a phone with good sound quality. So my purchase was a real disappointment. I returned the phone yesterday.”

Sentiment Analysis is not Just ONE Problem • $(o_j, f_{jk}, so_{ijkl}, h_i, t_l)$, • $o_j$ - a target object: Named Entity Extraction (more) • $f_{jk}$ - a feature of $o_j$: Information Extraction • $so_{ijkl}$ is sentiment: Sentiment determination • $h_i$ is an opinion holder: Information/Data Extraction • $t_l$ is the time: Data Extraction • Co-reference resolution • Relation extraction • Synonym match (voice = sound quality) … • None of them is a solved problem!

Accuracy is Still an Issue! • Some commercial solutions give clients several example opinions in their reports. • Why not all? Accuracy could be the problem. • Accuracy: both • Precision: how accurate are the discovered opinions? • Recall: how much is left undiscovered? • Which sentence is better? (cordless phone review) • (1) The voice quality is great. • (2) I put the base in the kitchen, and I can hear clearly from the handset in the bedroom, which is very far.
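
The two measures can be made concrete with a small sketch that compares the opinions a system discovered against a hand-made reference set. The (feature, sentiment) pairs below are hypothetical, as is the function name.

```python
def precision_recall(discovered, gold):
    """Precision: share of discovered opinions that are correct.
    Recall: share of the reference opinions that were discovered."""
    discovered, gold = set(discovered), set(gold)
    correct = discovered & gold
    precision = len(correct) / len(discovered) if discovered else 0.0
    recall = len(correct) / len(gold) if gold else 0.0
    return precision, recall

# Hypothetical reference annotation and system output.
GOLD = {("voice", "+ve"), ("screen", "+ve"),
        ("battery", "-ve"), ("price", "-ve")}
DISCOVERED = {("voice", "+ve"), ("battery", "-ve"), ("size", "+ve")}

precision, recall = precision_recall(DISCOVERED, GOLD)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Here two of the three discovered opinions are correct, and two of the four reference opinions were found, so a report showing only a few hand-picked examples can hide both kinds of error.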

Easier and Harder Problems • Reviews are easier. • Objects/entities are given (almost), and little noise • Forum discussions and blogs are harder. • Objects are not given, and a large amount of noise • Determining sentiments seems to be easier. • Determining objects and their corresponding features is harder. • Combining them is even harder.

Manual to Automation • Ideally, we want an automated solution that can scale up. • Type an object name and then get +ve and –ve opinions in a summarized form. • Unfortunately, that will not happen any time soon. Manual --------------------|-------- Full Automation • Some creativity is needed to build a scalable and accurate solution.