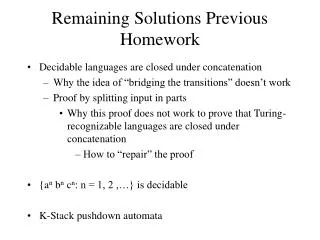

Remaining Solutions Previous Homework

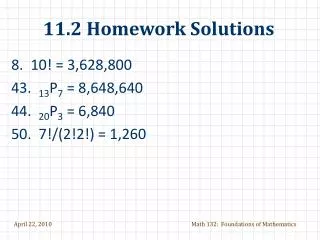

Remaining Solutions Previous Homework. Decidable languages are closed under concatenation Why the idea of “bridging the transitions” doesn’t work Proof by splitting input in parts Why this proof does not work to prove that Turing-recognizable languages are closed under concatenation


Presentation Transcript

Remaining Solutions Previous Homework • Decidable languages are closed under concatenation • Why the idea of “bridging the transitions” doesn’t work • Proof by splitting input in parts • Why this proof does not work to prove that Turing-recognizable languages are closed under concatenation • How to “repair” the proof • $\{a^n b^n c^n : n = 1, 2, \ldots\}$ is decidable • k-stack pushdown automata

Multi-tape Turing Machines • We add a fixed number of tapes [Figure: a control unit with head1 on Tape1 and head2 on Tape2; each tape holds cells a1, a2, …]

Multi-tape Turing Machines: Transitions • Transitions in a multi-tape Turing machine allow the heads to stay put where they are. So the general form of the transitions for a 2-tape Turing machine is: $(Q \times (\Sigma \times \Sigma)) \times (Q \times (\Sigma \times \Sigma) \times (\{L,R,S\} \times \{L,R,S\}))$ • For example, the transition ((q,(a,b)), (q’,(a,a),(L,S))) moves the head of the first tape to the left while keeping the head of the second tape where it was
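A minimal Python sketch of how one such transition could be applied, assuming a transition table keyed by the current state and the pair of symbols under the two heads. The names `step`, `make_tape` and `BLANK` are illustrative and do not come from the slides.

```python
from collections import defaultdict

BLANK = "_"  # assumed blank symbol; the slides do not name one


def make_tape(text):
    """Build a tape that returns BLANK for every cell never written."""
    tape = defaultdict(lambda: BLANK)
    for i, ch in enumerate(text):
        tape[i] = ch
    return tape


def step(state, tapes, heads, delta):
    """Apply one 2-tape transition in place; return the new state,
    or None if no transition applies."""
    read = (tapes[0][heads[0]], tapes[1][heads[1]])
    if (state, read) not in delta:
        return None
    new_state, write, moves = delta[(state, read)]
    for i in range(2):
        tapes[i][heads[i]] = write[i]
        # L moves left, R moves right, S keeps the head where it is
        heads[i] += {"L": -1, "R": 1, "S": 0}[moves[i]]
    return new_state


# The transition from the slide: ((q,(a,b)), (q',(a,a),(L,S)))
delta = {("q", ("a", "b")): ("q'", ("a", "a"), ("L", "S"))}
tapes = [make_tape("ba"), make_tape("b")]
heads = [1, 0]  # head 1 is on the 'a', head 2 is on the 'b'
new_state = step("q", tapes, heads, delta)
```

After the call, head 1 has moved one cell to the left and head 2 has stayed in place, while the cell under head 2 has been overwritten with `a`.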

Multi-tape Turing Machines vs Turing Machines • We can simulate a 2-tape Turing machine M2 in a Turing machine M: • We can represent the contents of the 2 tapes in the single tape by using special symbols • We can simulate one transition from M2 by constructing multiple transitions on M • We introduce several (but a fixed number of) new states into M [Figure: M2 with one tape holding a1 a2 … ai … and a second tape holding b1 b2 … bj …]

Using States to “Remember” Information • Configuration in a 2-tape Turing Machine M2: state s, Tape1 = a b a b, Tape2 = b b a • State in the Turing machine M: “s+b+1+a+2”, which represents: • M2 is in state s • The cell pointed to by the first head in M2 contains b • The cell pointed to by the second head in M2 contains an a

Using States to “Remember” Information (2) • State in the Turing machine M: “s+b+1+a+2” • How many states are there in M? $(\#\text{ states in } M_2) \times |\Sigma \cup \{\rightarrow, \leftarrow, S\}| \times |\Sigma \cup \{\rightarrow, \leftarrow, S\}|$ • Yes, we need a large number of states for M, but it is finite!

[Figure: a configuration of the 2-tape Turing machine M2 (state s, Tape1 = a b a b, Tape2 = b b a) and the equivalent configuration of the Turing machine M (state s+b+1+a+2), whose single tape holds both tapes delimited by the separator symbols 1, 2, 3, 4 and e.]

Simulating M2 with M • The alphabet of the Turing machine M extends the alphabet $\Sigma_2$ of M2 by adding the separator symbols 1, 2, 3, 4 and e, and two mark symbols, one for each head • We introduce more states for M, one for each 5-tuple $p+\sigma+1+\tau+2$, where p is a state in M2 and $\sigma+1+\tau+2$ indicates that the head of the first tape points to $\sigma$ and the second one to $\tau$ • We also need further states of a similar form for control purposes

Simulating transitions in M2 with M • At the beginning of each iteration of M2, the head of M starts at e and both M and M2 are in a state s • We traverse the whole tape to determine the state $p+\sigma+1+\tau+2$. Thus, the transition in M2 that is applicable must have the form $((p,(\sigma,\tau)), (q,(\rho_1,\rho_2),(\leftarrow,S)))$ in M2, which becomes $((p+\sigma+1+\tau+2, e), (q+\rho_1+{\leftarrow}+1+\rho_2+S+2, e, \rightarrow))$ in M [Figure: the tape of M while M is in state s+b+1+a+2.]

Simulating transitions in M2 with M (2) • To check if the transition $(q,(\sigma,\tau),\ldots)$ is applicable, we go forwards from the first cell • If the movement is $\rightarrow$ (or $\leftarrow$) we move the marker to the right (left) • To overwrite characters, M must first determine the correct position [Figure: the marker of head 1 moving to a neighboring cell.]

[Figure: successive contents of the tape of M during the simulation; the state of M changes from s to s+b+1.]

Multi-tape Turing Machines vs Turing Machines (final) • We conclude that 2-tape Turing machines can be simulated by Turing machines. Thus, they don’t add computational power! • Using a similar construction we can show that 3-tape Turing machines can be simulated by 2-tape Turing machines (and thus, by Turing machines). • Thus, k-tape Turing machines can be simulated by Turing machines

Implications • If we show that a function can be computed by a k-tape Turing machine, then the function is Turing-computable • In particular, if a language can be decided by a k-tape Turing machine, then the language is decidable • Example: Since we constructed a 2-tape TM that decides $L = \{a^n b^n : n = 0, 1, 2, \ldots\}$, L is decidable.
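The 2-tape machine for $\{a^n b^n\}$ mentioned in the example is not reproduced in this transcript. The sketch below shows one possible two-tape strategy in Python, under that assumption: copy the leading a's to the second tape, then erase one of them for every b. The function name `decides_anbn` is illustrative.

```python
def decides_anbn(w):
    """Two-tape style decision procedure for {a^n b^n : n >= 0}.

    The input string plays the role of tape 1 and the list `tape2`
    plays the role of tape 2. The procedure halts on every input,
    which is what makes it a decider rather than a recognizer.
    """
    tape2 = []  # second tape, used as a counter
    i = 0       # head position on tape 1

    # Phase 1: copy every leading 'a' to tape 2.
    while i < len(w) and w[i] == "a":
        tape2.append("a")
        i += 1

    # Phase 2: for each 'b', erase one 'a' from tape 2.
    while i < len(w) and w[i] == "b":
        if not tape2:
            return False  # more b's than a's
        tape2.pop()
        i += 1

    # Accept only if all input was consumed and tape 2 is empty.
    return i == len(w) and not tape2
```

Each head only ever moves in one direction per phase, so the number of steps is linear in the length of the input.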

Summary of Previous Class • There are languages that are not decidable • (we have not proved this yet) • Why not extend Turing machines just as we did with finite automata and pushdown automata? • First Try: multi-tape Turing machines • More convenient than Turing machines • But multi-tape Turing machines can be simulated with Turing machines • Therefore, anything we can do with a multi-tape Turing machine can be done with a Turing machine • On the bright side, we can use this to our advantage! • Second Try: k-stack pushdown automata • But k-stack pushdown automata are equivalent to Turing machines for k ≥ 2
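The construction behind the 2-stack equivalence is not shown in this transcript. A common intuition is that two stacks can hold a tape between them: one stack keeps the cells to the left of the head, the other keeps the cell under the head and everything to its right. The Python class below is a sketch of that idea only; `TwoStackTape` and its method names are hypothetical.

```python
class TwoStackTape:
    """A Turing machine tape represented by two stacks (a sketch)."""

    def __init__(self, text, blank="_"):
        self.blank = blank
        # Cells to the left of the head; the top is the nearest cell.
        self.left = []
        # The cell under the head is on top, followed by the cells
        # to its right. An empty input becomes a single blank cell.
        self.right = list(reversed(text)) or [blank]

    def read(self):
        return self.right[-1]

    def write(self, symbol):
        self.right[-1] = symbol

    def move_right(self):
        self.left.append(self.right.pop())
        if not self.right:
            self.right.append(self.blank)  # extend the tape with a blank

    def move_left(self):
        # Moving left past the first cell exposes a new blank cell.
        self.right.append(self.left.pop() if self.left else self.blank)

    def contents(self):
        return "".join(self.left) + "".join(reversed(self.right))
```

Every head movement is a pop from one stack followed by a push onto the other, which is exactly the kind of operation a pushdown automaton with two stacks can perform.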

Third Try: Multi-Head Turing Machines • We add a fixed number of heads for the same tape • As with multi-tape Turing machines, heads are allowed to stay put in the transitions [Figure: a control unit with head1, head2, …, headn on a single tape a1 a2 …]

Configuration in a 2-head Turing Machine: state s, Tape = a b a b • Can be represented in a 3-tape Turing Machine • And use states to remember the pointers: s+b+1+b+2

Configuration in a 2-head Turing Machine: state s, Tape = a b a b • Or could be represented directly in a Turing Machine [Figure: a single tape with the separator symbols 1, 2, 3 and e; the state goes from s+b+1 to s+b+1+b+2.]

Fourth Try: Nondeterministic Turing Machines • Reminder: When is a word accepted by a nondeterministic automaton? Given an NFA A, a string $w \in \Sigma^*$ is accepted by A if at least one of the configurations yielded by (s,w) is a configuration of the form (f,e) with f a favorable state • Possible computations: $(s,w) \vdash (p,w') \vdash \ldots \vdash (q,w'')$ with $w'' \neq e$; $(s,w) \vdash (p,w') \vdash \ldots \vdash (q,e)$ with $q \notin F$; …; $(s,w) \vdash (p,w') \vdash \ldots \vdash (f,e)$ • We will do something similar for nondeterministic TMs
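The acceptance condition for an NFA can be sketched in Python by tracking every state reachable after each input symbol. This sketch assumes an NFA without e-transitions, and the example automaton is hypothetical (it is not one from the slides).

```python
def nfa_accepts(delta, start, final, w):
    """Return True if at least one computation on w consumes the whole
    input and ends in a favorable (final) state."""
    current = {start}
    for ch in w:
        # Follow every possible transition from every current state.
        current = {q for p in current for q in delta.get((p, ch), set())}
    return bool(current & final)


# Hypothetical NFA accepting the strings over {a, b} that end in "ab".
delta = {
    ("s", "a"): {"s", "p"},  # nondeterministic choice on 'a'
    ("s", "b"): {"s"},
    ("p", "b"): {"f"},
}
```

A computation that gets stuck before the input is exhausted simply drops out of `current`, and a computation that ends in a non-final state does not count; the string is accepted as long as one computation reaches `f` with no input left.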

Deciding, Recognizing Languages with Nondeterministic Turing machines • Definition. A nondeterministic Turing machine NM decides a language L (L is said to be decidable) if: • 1. If $w \in L$ then: at least one possible computation terminates in an accepting configuration, and no computation ends in a rejecting state • 2. If $w \notin L$ then: no computation ends in an accepting state, and at least one possible computation terminates in a rejecting configuration • Definition. A nondeterministic Turing machine recognizes a language L if it meets condition 1 above (L is said to be Turing-recognizable)

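Example of Nondeterministic TM • Find a nondeterministic TM recognizing the language a*ab*b • Solution: [Figure: state diagram of the machine M_L with states p, q and q_accept over the symbols a and b, together with its computation tree on the input aab, starting at C0 = q0aab and passing through the configurations apab, aapb, aaqb and aabq to aabq_accept.]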

Nondeterministic TMs can be Simulated by TMs Theorem. If a nondeterministic Turing machine, NM, recognizes a language L, then there is a Turing machine, M, recognizing the language L Theorem. If a nondeterministic Turing machine, NM, decides a language L, then there is a Turing machine, M, deciding the language L

Nondeterministic TMs can be Simulated by TMs • Idea: Let w = hRS • Simulate all computations in NM by exploring them in breadth-first order • If $w \in L$, one of the $C_{ij}$ is an accepting configuration (h,…), which will also be found by M • A similar argument can be made for $w \notin L$ [Figure: the computation tree of NM with root C0 = q0hRS, first level C11, C12, C13, …, C1n and second level C21, C22, …; M visits the same configurations level by level.]
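The breadth-first idea can be sketched in Python as a queue of configurations. Everything in this sketch is an assumption made for illustration: the configuration format (state, tape, head position), the transition table for a*ab*b (the original state diagram is not recoverable from the transcript), and the `max_steps` cap, which only keeps the example from running forever. A real recognizer is allowed to run forever on inputs outside the language.

```python
from collections import deque

BLANK = "_"  # assumed blank symbol


def ntm_accepts(delta, start, accept, w, max_steps=10_000):
    """Explore the configurations of a nondeterministic TM breadth-first.

    Returns True as soon as an accepting configuration is found, and
    False if the tree is exhausted or the step cap is reached.
    """
    start_cfg = (start, tuple(w) or (BLANK,), 0)
    queue = deque([start_cfg])
    seen = {start_cfg}
    steps = 0
    while queue and steps < max_steps:
        state, tape, head = queue.popleft()
        steps += 1
        if state == accept:
            return True
        for new_state, write, move in delta.get((state, tape[head]), []):
            cells = list(tape)
            cells[head] = write
            new_head = head + {"L": -1, "R": 1, "S": 0}[move]
            if new_head < 0:
                continue  # assumed: moving off the left end kills the branch
            if new_head == len(cells):
                cells.append(BLANK)  # extend the tape to the right
            cfg = (new_state, tuple(cells), new_head)
            if cfg not in seen:
                seen.add(cfg)
                queue.append(cfg)
    return False


# Hypothetical nondeterministic TM for a*ab*b: guess which 'a' is the
# last one, then guess which 'b' is the last one.
delta = {
    ("q0", "a"): [("q0", "a", "R"), ("p", "a", "R")],
    ("p", "b"): [("p", "b", "R"), ("q", "b", "R")],
    ("q", BLANK): [("acc", BLANK, "S")],
}
```

Because the queue is processed first in, first out, every configuration at depth n is visited before any configuration at depth n + 1, so an accepting configuration at finite depth is always reached even if other branches never terminate.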

Context-Free Languages are Decidable • Let L be a context-free language • Let A be a pushdown automaton accepting L • We simulate the pushdown automaton A using a 2-tape nondeterministic Turing machine, M. The second tape is used for simulating the stack • The head of the first tape points to the next character to be processed and the head of the second tape points to the last element (top of the stack)

Context-Free Languages are Decidable • For each transition $((q, \sigma, \beta), (q', \gamma))$ in A, we construct several transitions in M doing the following steps: • 1. Check if the next character is $\sigma$ (check if the head of Tape 1 points to $\sigma$, and move the head to the right) • 2. Check if the top of the stack is $\beta$ (check if the head of Tape 2 points to $\beta$, write a blank, and move the head to the left) • 3. If 1 and 2 hold, “push” $\gamma$ on Tape 2 (move the head to the right and write $\gamma$)
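The three steps can be sketched in Python as a single function that applies one pushdown transition to the two-tape encoding. This is an illustration under simplifying assumptions: the input string stands for Tape 1, a list stands for Tape 2 with its last element under the head, and the popped and pushed strings are single symbols or empty. The name `apply_pda_transition` is hypothetical.

```python
def apply_pda_transition(transition, state, tape1, head1, tape2):
    """Try one PDA transition ((q, sigma, beta), (q2, gamma)).

    Returns (new_state, new_head1) and updates tape2 in place, or
    returns None and leaves tape2 untouched if the transition does
    not apply. An empty sigma reads no input; an empty beta pops
    nothing; an empty gamma pushes nothing.
    """
    (q, sigma, beta), (q2, gamma) = transition
    if state != q:
        return None
    # Step 1: check that the head of Tape 1 points to sigma.
    if sigma and (head1 >= len(tape1) or tape1[head1] != sigma):
        return None
    # Step 2: check that the head of Tape 2 points to beta.
    if beta and (not tape2 or tape2[-1] != beta):
        return None
    # Both checks hold: consume the input symbol, pop beta
    # (write a blank and move left), then push gamma.
    if sigma:
        head1 += 1
    if beta:
        tape2.pop()
    if gamma:
        tape2.append(gamma)
    return q2, head1
```

For example, with the hypothetical transitions `(("q", "a", ""), ("q", "A"))` and `(("q", "b", "A"), ("f", ""))`, reading `ab` first pushes `A` onto the stack tape and then pops it again.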

Possible Extensions of Turing Machines • Adding several tapes • Pushdown automata with 2 or more stacks • Adding several heads • Nondeterminism • Matrix: “no one can be told what the Matrix is, you have to see it for yourself” • None of these extensions add computational power to Turing machines [Figure: diagram with Turing Machines at the center, surrounded by the extensions above and the labels “Physical” and “Computational”.]

Homework for Friday • 1. Please provide a secret nickname, so I can post all grades on the web site • 2. Prove that the function z = x + y is Turing-computable. Assume that x, y and z are binary numbers. Hint: Use a 3-tape Turing machine as follows: Tape 1: x, Tape 2: y, Tape 3: z • 3. Suppose that a language L is enumerated by an enumerator Turing machine (we say that L is Turing-enumerable). Prove that L must be Turing-recognizable. Can we prove that L is decidable? Provide the proof or explain why not • 4. Problem 3.14 (note that there are 2 parts)