Unsupervised Learning Networks

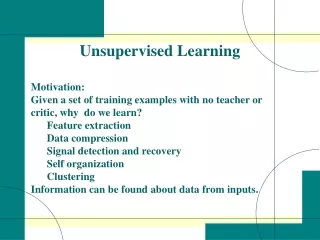

ELE571 Digital Neural Networks. Unsupervised learning Networks. Associative Memory Networks. Associative Memory Networks. Associative Memory Networks recalls the original undistorted pattern from a distorted or partially-missing pattern. feedforward type (one-shot-recovery)

Unsupervised learning Networks

E N D

Presentation Transcript

ELE571 Digital Neural Networks
Unsupervised Learning Networks
• Associative Memory Networks

Associative Memory Networks
An associative memory network recalls the original, undistorted pattern from a distorted or partially missing pattern.
• Feedforward type (one-shot recovery)
• Feedback type, e.g., the Hopfield network (iterative recovery)

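Associative Memory Model (feedforward type)
A nonlinear unit T (e.g., a threshold) maps the net input to the output pattern:
$$b^{(k)} = T\big[a^{(k)} W\big], \qquad W = \sum_m a^{(m)T} b^{(m)}$$
Because $a^{(k)}W = \sum_m \big(a^{(k)}a^{(m)T}\big)\, b^{(m)}$, the $m = k$ term dominates the net input when the stored input patterns are (nearly) orthogonal.
W may be (1) symmetric or not, and (2) square or not.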

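Example with two stored patterns:
$$a_1 = \begin{bmatrix}1&1&1&1&-1&1&1&1&1\end{bmatrix}, \qquad a_2 = \begin{bmatrix}1&-1&1&-1&1&-1&1&-1&1\end{bmatrix}, \qquad X = \begin{bmatrix} a_1 \\ a_2 \end{bmatrix}$$
The weight matrix is
$$W = X^T X = \begin{bmatrix}
2&0&2&0&0&0&2&0&2\\
0&2&0&2&-2&2&0&2&0\\
2&0&2&0&0&0&2&0&2\\
0&2&0&2&-2&2&0&2&0\\
0&-2&0&-2&2&-2&0&-2&0\\
0&2&0&2&-2&2&0&2&0\\
2&0&2&0&0&0&2&0&2\\
0&2&0&2&-2&2&0&2&0\\
2&0&2&0&0&0&2&0&2
\end{bmatrix}$$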
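A minimal NumPy sketch (our own translation; the course itself uses MATLAB) that rebuilds the weight matrix from the two stored patterns and performs one-shot recall with T taken to be an elementwise sign (ties mapped to +1). The helper name `recall` and the distorted probe (first element of a1 flipped) are illustrative choices, not from the slides.

```python
import numpy as np

# The two stored patterns from the slide, one per row of X.
a1 = np.array([1, 1, 1, 1, -1, 1, 1, 1, 1])
a2 = np.array([1, -1, 1, -1, 1, -1, 1, -1, 1])
X = np.vstack([a1, a2])

# Outer-product weights W = sum_m a(m)^T a(m) = X^T X (9 x 9).
W = X.T @ X

def recall(a, W):
    """One-shot feedforward recall: threshold the net input a W (ties -> +1)."""
    return np.where(a @ W >= 0, 1, -1)

# Hypothetical distorted probe: a1 with its first element flipped.
probe = a1.copy()
probe[0] = -probe[0]

print(W[0])               # first row of W, matches the slide
print(recall(probe, W))   # recovers a1
```

Recall succeeds here because the self-correlation a1·a1 = 9 dominates the crosstalk a1·a2 = -1; strongly correlated patterns would make the crosstalk term large enough to flip bits.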

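Associative Memory Model (feedback type)
The output $a_{new}$ is fed back as the next input $a_{old}$. W must be
• normalized
• Hermitian: $W = W^H$
e.g., $W = X^+X$, where $X^+$ is the pseudo-inverse of X.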
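A quick numerical check, reusing the two patterns from the feedforward example, that $W = X^+X$ is Hermitian and idempotent (a projection), while the unnormalized outer-product matrix $X^TX$ is Hermitian but not idempotent. Reading "normalized" as "idempotent" is our assumption; the helper names are hypothetical.

```python
import numpy as np

a1 = np.array([1, 1, 1, 1, -1, 1, 1, 1, 1], dtype=float)
a2 = np.array([1, -1, 1, -1, 1, -1, 1, -1, 1], dtype=float)
X = np.vstack([a1, a2])

W_hebb = X.T @ X                  # feedforward (outer-product) weights
W = np.linalg.pinv(X) @ X         # feedback weights W = X^+ X

def is_hermitian(M):
    """M equals its conjugate transpose."""
    return np.allclose(M, M.conj().T)

def is_idempotent(M):
    """A projection matrix satisfies M @ M == M."""
    return np.allclose(M @ M, M)

print(is_hermitian(W), is_idempotent(W))            # True True
print(is_hermitian(W_hebb), is_idempotent(W_hebb))  # True False
```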

Each iteration of AMM(W) comprises two substeps:
• (a) Projection of $a_{old}$ onto the W-plane: $a_{net} = W a_{old}$
• (b) Remapping of the net vector to the closest symbol vector: $a_{new} = T[a_{net}]$
The two substeps of one iteration can be summarized as a single procedure:
$$a_{new} = \arg\min_{a_{new} \in \mathcal{S}} \| a_{new} - W a_{old} \|$$
where $\mathcal{S}$ is the set of valid symbol vectors.
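A minimal sketch of the AMM(W) iteration, assuming binary ±1 symbols so that T is an elementwise sign (ties mapped to +1). For this alphabet the sign is exactly the arg-min over symbol vectors; the brute-force helper confirms that on a 9-element example. The names `T`, `nearest_symbol_bruteforce`, and `amm`, and the distorted probe, are hypothetical.

```python
import itertools
import numpy as np

def T(x):
    """Nearest +/-1 symbol vector via elementwise sign (ties -> +1)."""
    return np.where(x >= 0, 1.0, -1.0)

def nearest_symbol_bruteforce(x):
    """arg min over s in {-1,+1}^n of ||s - x||; only feasible for small n."""
    candidates = (np.array(c) for c in itertools.product([-1.0, 1.0], repeat=len(x)))
    return min(candidates, key=lambda s: np.linalg.norm(s - x))

def amm(W, a, max_iter=20):
    """Iterate a_new = T[W a_old] until a fixed point or max_iter is reached."""
    for _ in range(max_iter):
        a_net = W @ a        # (a) projection onto the W-plane
        a_new = T(a_net)     # (b) remap to the closest symbol vector
        if np.array_equal(a_new, a):
            break
        a = a_new
    return a

a1 = np.array([1, 1, 1, 1, -1, 1, 1, 1, 1], dtype=float)
a2 = np.array([1, -1, 1, -1, 1, -1, 1, -1, 1], dtype=float)
X = np.vstack([a1, a2])
W = np.linalg.pinv(X) @ X    # feedback weights W = X^+ X

probe = a1.copy()
probe[0] = -1.0              # hypothetical one-element distortion
print(amm(W, probe))         # converges to a1
```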

[Figure: geometry of one AMM iteration, showing the initial vector, the x-plane, symbol vectors, and a perfect attractor. The linear projection onto the x-plane corresponds to the g-update in DML, and the nonlinear resymbolization corresponds to the ŝ-update in DML; together they form the two steps of one AMM iteration.]
$a_{net}$ is the (least-squares-error) projection of $a_{old}$ onto the column subspace of W.
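A small numerical illustration of the least-squares claim, using hypothetical random data: with $W = X^+X$, the vector $W a_{old}$ coincides with the least-squares fit of $a_{old}$ by a combination of the rows of X (the column space of W for real X), and the residual is orthogonal to that subspace.

```python
import numpy as np

rng = np.random.default_rng(1)
X = rng.standard_normal((3, 8))       # hypothetical: 3 patterns of length 8
W = np.linalg.pinv(X) @ X             # projector onto the row space of X
a_old = rng.standard_normal(8)

a_net = W @ a_old

# Least-squares fit: min_y || X^T y - a_old ||
y, *_ = np.linalg.lstsq(X.T, a_old, rcond=None)
a_ls = X.T @ y

print(np.allclose(a_net, a_ls))              # True: same vector
print(np.allclose(W @ (a_old - a_net), 0))   # True: residual is orthogonal
```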

Common Assumptions on Signals for Associative Retrieval
Inherent properties of the signals (patterns) to be retrieved:
• Orthogonality
• Higher-order statistics
• Constant-modulus property
• FAE property
• Others

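Blind Recovery of MIMO System
[Figure: the source S passes through the channel H; noise ε is added to form the observation X, which the receiver combines with g.]
$$gX = gHS = vS = \hat{s} \quad \text{(neglecting the noise term)}$$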

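Blind Recovery of MIMO System
[Figure: p sources $s_1, \dots, s_i, \dots, s_p$ reach q sensors through channel coefficients $h_{11}, \dots, h_{qp}$; the sensor outputs are weighted by $g_1, \dots, g_q$ and summed.]
Goal: find g such that $v = gH = [0 \cdots 0\ 1\ 0 \cdots 0]$, so that $vS = s_j$.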

Assumptions on MIMO System
For deterministic H, …
Signal Recoverability
• H is PR (perfectly recoverable) if and only if H has full column rank, i.e., a left inverse $H^+$ with $H^+H = I$ exists.

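Examples for Flat MIMO
$$H = \begin{bmatrix}1&2\\1&3\\1&2\end{bmatrix} \ \text{(recoverable)}, \qquad H = \begin{bmatrix}1&2\\1&2\\1&2\end{bmatrix} \ \text{(non-recoverable)}$$
The first has full column rank (rank 2); the columns of the second are linearly dependent (rank 1).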
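The recoverability test can be checked directly with a matrix rank computation. The helper name `perfectly_recoverable` is hypothetical; it simply encodes the full-column-rank condition stated above.

```python
import numpy as np

H_rec = np.array([[1, 2], [1, 3], [1, 2]])   # recoverable example
H_non = np.array([[1, 2], [1, 2], [1, 2]])   # non-recoverable example

def perfectly_recoverable(H):
    """PR condition: H has full column rank."""
    return np.linalg.matrix_rank(H) == H.shape[1]

print(perfectly_recoverable(H_rec), perfectly_recoverable(H_non))   # True False
```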

If H is perfectly recoverable, e.g., $H = \begin{bmatrix}1&2\\1&3\\1&2\end{bmatrix}$:
• Parallel equalizer: $\hat{S} = H^+X = GX$
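A sketch of the parallel equalizer for the recoverable example. This is the non-blind case: H is assumed known, the ±1 source matrix is randomly generated, and the observations are noise-free. Each row of $G = H^+$ is also a single-source receiver g with $v = gH$ equal to a unit row vector, which is the goal stated for blind MIMO recovery.

```python
import numpy as np

H = np.array([[1.0, 2.0], [1.0, 3.0], [1.0, 2.0]])   # q = 3 sensors, p = 2 sources
rng = np.random.default_rng(0)
S = np.where(rng.standard_normal((2, 10)) >= 0, 1.0, -1.0)  # hypothetical +/-1 sources
X = H @ S                                            # noise-free observations

G = np.linalg.pinv(H)        # parallel equalizer G = H^+ (2 x 3)
S_hat = G @ X                # recovers all sources at once

g1 = G[0]                    # one row of G acts as a single-source receiver g
v = g1 @ H                   # combined response v = gH

print(np.allclose(G @ H, np.eye(2)))   # True: left inverse
print(np.allclose(S_hat, S))           # True: all sources recovered
print(np.allclose(v, [1.0, 0.0]))      # True: v selects source 1
```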

Example: Wireless MIMO System
[Figure: the same MIMO model, $gX = gHS = vS = \hat{s}$, with source symbols drawn from a signal constellation $C_i$; signal recovery via g.]

FAE Property: Finite-Alphabet Exclusiveness
Given $vs = \hat{s}$, $\hat{s}$ is a valid symbol for every valid symbol vector s if and only if $v = [0 \cdots 0\ {\pm 1}\ 0 \cdots 0]$.

Theorem (FAE Property): Suppose that $vS = b$. For the output b to be a valid symbol sequence for every valid symbol matrix S, it is necessary and sufficient that $v = E^{(k)}$, a row vector with ±1 in position k and 0 elsewhere. In other words, no other v can map valid symbols to a valid but different output symbol vector.
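A brute-force check of the theorem for p = 3, assuming the binary alphabet {-1, +1} and searching only a coarse grid of candidate v. The grid is an arbitrary choice, so this illustrates the claim rather than proving it; the helper name `fae_holds` is hypothetical.

```python
import itertools

def fae_holds(v):
    """True if v . s is +/-1 for every +/-1 vector s of the same length."""
    for s in itertools.product([-1, 1], repeat=len(v)):
        if abs(sum(vi * si for vi, si in zip(v, s))) != 1:
            return False
    return True

# Coarse grid of candidate combining vectors (arbitrary choice).
grid = [-1.0, -0.5, 0.0, 0.5, 1.0]
passing = [v for v in itertools.product(grid, repeat=3) if fae_holds(v)]
print(passing)   # only the six vectors of the form [0 .. 0 +/-1 0 .. 0]
```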

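Example:
$$S = \begin{bmatrix} -1&+1&+1&+1&+1&+1&\cdots\\ +1&+1&+1&-1&-1&+1&\cdots\\ -1&-1&+1&-1&+1&+1&\cdots \end{bmatrix}$$
FAE Property: $vS$ consists of valid symbols if and only if $v = [0 \cdots 0\ {\pm 1}\ 0 \cdots 0]$.
• If $v = [0 \cdots 0\ {\pm 1}\ 0 \cdots 0]$, vS is a row of S (possibly sign-flipped), so every entry is a valid symbol.
• If $v \neq [0 \cdots 0\ {\pm 1}\ 0 \cdots 0]$, vS contains at least one invalid symbol.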
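The two cases can be checked on the six columns of S shown above. The vector `v_bad` is a hypothetical mixing vector chosen for illustration, and `valid_symbols` is a hypothetical helper for the binary alphabet.

```python
import numpy as np

# The six displayed columns of S (3 sources).
S = np.array([[-1,  1, 1,  1,  1, 1],
              [ 1,  1, 1, -1, -1, 1],
              [-1, -1, 1, -1,  1, 1]], dtype=float)

def valid_symbols(row):
    """True if every entry belongs to the alphabet {-1, +1}."""
    return bool(np.all(np.isin(row, [-1.0, 1.0])))

v_good = np.array([0.0, -1.0, 0.0])   # of the form [0 .. 0 +/-1 0 .. 0]
v_bad = np.array([0.5, 0.5, 0.0])     # hypothetical mixing vector

print(valid_symbols(v_good @ S))   # True: -(row 2 of S)
print(valid_symbols(v_bad @ S))    # False: contains zeros
```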

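Blind-BLAST
$$gX = gHS = vS = \hat{s}$$
"EM":
• The E step determines the best guess of the membership function $z_j$.
• The M step determines the best parameters $\theta_n$, which maximize the likelihood function.
With a finite alphabet, the two steps alternate:
• E-step: $\hat{s} = T[gX]$ ($\hat{s}$ restricted to the finite alphabet)
• M-step: $\hat{g} = \hat{s}X^+$
• E-step: $\check{s} = T[\hat{g}X]$
• M-step: $\breve{g} = \check{s}X^+$, and so on.
Combined EM:
$$\check{s} = T[\hat{g}X] = T[\hat{s}X^+X] = T[\hat{s}W]$$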
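A minimal NumPy sketch of the alternating E/M iteration, under stated assumptions: BPSK symbols with T implemented as an elementwise sign (ties to +1), noise-free observations X = HS, and a start close to source 0 (five flipped symbols). The helper names `T` and `blind_blast_em` are hypothetical, and the sizes p = q = 5, N = 200 are borrowed from the MATLAB exercise. A random start, as in that exercise, is not guaranteed to land on a source.

```python
import numpy as np

def T(x):
    """Finite-alphabet decision for BPSK: nearest of {-1, +1} (ties -> +1)."""
    return np.where(x >= 0, 1.0, -1.0)

def blind_blast_em(X, s_init, max_iter=20):
    """Alternate M-step g = s X^+ and E-step s = T[g X] until s stops changing."""
    X_pinv = np.linalg.pinv(X)   # X^+, shape (N, q)
    s = T(s_init)
    for _ in range(max_iter):
        g = s @ X_pinv           # M-step: least-squares receiver for current s
        s_new = T(g @ X)         # E-step: re-symbolize the receiver output
        if np.array_equal(s_new, s):
            break
        s = s_new
    return s, g

# Hypothetical test data in the style of the MATLAB exercise (noise omitted).
rng = np.random.default_rng(0)
p, q, N = 5, 5, 200
S = T(rng.standard_normal((p, N)))
H = rng.standard_normal((q, p)) + np.eye(q)
X = H @ S

# Start from source 0 with its first five symbols flipped.
s0 = S[0].copy()
s0[:5] *= -1
s_hat, g_hat = blind_blast_em(X, s0)
print(np.array_equal(s_hat, S[0]))   # True: source 0 recovered

# Combined EM: T[(s X^+) X] equals T[s W] with W = X^+ X.
W = np.linalg.pinv(X) @ X
print(np.array_equal(T((s0 @ np.linalg.pinv(X)) @ X), T(s0 @ W)))   # True
```

The returned receiver also satisfies $gH \approx [1\ 0\ 0\ 0\ 0]$, i.e., $v = gH$ selects source 0.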

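Associative Memory Network with a threshold nonlinearity:
$$W = X^+X, \qquad \hat{s}_{new} = \mathrm{Sign}[\hat{s}_{old} W]$$
where $\hat{s}_{new}$ is fed back as $\hat{s}_{old}$ for the next iteration.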

[Figure: from the initial vector ŝ, the linear projection onto the gX-plane ($g = \hat{s}_{old}X^+$) is followed by the finite-alphabet (FA) nonlinear mapping to the nearest symbol vector, $\hat{s}' = T[\hat{s}W]$.]

Definition: Perfect Attractor of AMM(W)
A vector $a^*$ is a "perfect attractor" of AMM(W) if and only if
• $a^*$ is a symbol vector, and
• $a^* = W a^*$.
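A direct check of the definition, reusing the two 9-element patterns with $W = X^+X$ and a numerical tolerance (our choice) for the fixed-point test. Both stored patterns and their negatives are perfect attractors; the all-ones vector is a symbol vector but not a fixed point, since $W\mathbf{1} = 0.8a_1 + 0.2a_2$. The helper name `is_perfect_attractor` is hypothetical.

```python
import numpy as np

def is_perfect_attractor(a, W, tol=1e-9):
    """a is a +/-1 symbol vector and a fixed point of the linear map: a = W a."""
    is_symbol = np.all(np.isin(a, [-1.0, 1.0]))
    return bool(is_symbol and np.allclose(W @ a, a, atol=tol))

a1 = np.array([1, 1, 1, 1, -1, 1, 1, 1, 1], dtype=float)
a2 = np.array([1, -1, 1, -1, 1, -1, 1, -1, 1], dtype=float)
X = np.vstack([a1, a2])
W = np.linalg.pinv(X) @ X

ones = np.ones(9)
print(is_perfect_attractor(a1, W), is_perfect_attractor(-a2, W))   # True True
print(is_perfect_attractor(ones, W))                                # False
```

Although the all-ones vector is not a perfect attractor, one threshold step maps it onto one: the sign of $W\mathbf{1}$ equals $a_1$.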

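Proof sketch (FAE property):
Let $v = [f(1) \neq 0,\ f(2),\ \dots,\ f(p)]$ and $v' = [f(1) \neq 0,\ f(2),\ \dots,\ f(p-1),\ 0]$.
Let $\hat{u}_i = [u_i(1)\ u_i(2)\ \cdots\ u_i(p)]^T$, $\bar{u}_i = v\hat{u}_i$, and $\bar{u}'_i = v'\hat{u}_i$.
…
Thus $f(p) = 0$.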
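The slide's proof is only partly recoverable. One self-contained way to reach the same conclusion for the binary alphabet {±1} is sketched below; this is our own argument, not necessarily the route the slide takes.

$$\begin{aligned}
&\text{Take } u, u' \in \{\pm 1\}^p \text{ differing only in entry } k: \quad v u - v u' = 2 f(k)\, u(k). \\
&v u,\ v u' \in \{\pm 1\} \;\Rightarrow\; 2 f(k)\, u(k) \in \{0, \pm 2\} \;\Rightarrow\; f(k) \in \{0, \pm 1\} \ \text{ for every } k. \\
&\text{If } f(1) \neq 0 \text{ and } f(k) \neq 0 \text{ for some } k \neq 1, \text{ choose } u(m) = f(m) \text{ wherever } f(m) \neq 0: \\
&\qquad v u = \textstyle\sum_m |f(m)| \ge 2, \ \text{which is not a valid symbol.} \\
&\text{Hence } f(k) = 0 \text{ for all } k \neq 1 \text{ (in particular } f(p) = 0\text{), and } v = [\pm 1\ 0\ \cdots\ 0].
\end{aligned}$$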

MATLAB Exercise
Compare the two programs below and determine the difference in their performance. Why is there such a difference?

Program 1:
```matlab
p = zeros(1,100);
for j = 1:100
    S = sign(randn(5,200));          % 5 binary sources, 200 symbols each
    A = randn(5,5) + eye(5);         % random mixing matrix
    X = A*S + 0.01*randn(5,200);     % noisy observations
    s = sign(randn(200,1));          % random initial symbol vector
    W = X'*inv(X*X')*X;              % W = X^+ X
    for i = 1:20                     % AMM: s <- Sign[W s]
        sold = s;
        s = tanh(100*W*s);
        s = sign(s);
    end
    while norm(s - W*s) > 5.0        % far from a fixed point: restart
        s = sign(randn(200,1));
        for i = 1:20
            sold = s;
            s = tanh(100*W*s);
            s = sign(s);
        end
    end
    p(j) = max(abs(S*s));            % 200 means s = +/- one source row
end
hist(p)
```

Program 2:
```matlab
p = zeros(1,100);
for j = 1:100
    S = sign(randn(5,200));
    A = randn(5,5) + eye(5);
    X = A*S + 0.01*randn(5,200);
    s = sign(randn(200,1));
    W = X'*inv(X*X')*X;
    for i = 1:20                     % AMM iterations, no restart
        sold = s;
        s = tanh(100*W*s);
        s = sign(s);
    end
    p(j) = max(abs(S*s));
end
hist(p)
```