Enhancing User Interaction through Anthropomorphic Interface Metaphor

Explore the importance of empathy in user interface synthesis and analysis, gUI research objectives, data specification, implementation issues, and results of the prototype system with anthropomorphic interface metaphors.

Presentation Transcript

gUI: Specifying Complete User Interaction • Soft Computing Laboratory, Yonsei University • October 25, 2004

Contents • Computer human user interfaces – Synthesis • Value of empathy in synthesis • Computer human user interfaces – Analysis • gUI research objectives • The role of the anthropomorphic interface metaphor • gUI data specification issues • Implementation issues • Results of the prototype system • Conclusion

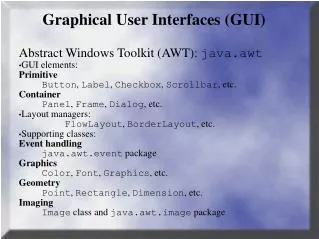

Computer Human User Interfaces – Synthesis • Humane CHIs (Computer Human Interfaces) • Embodied character agents (ECAs), Embodied conversational agents, virtual conversational characters, virtual humans, talking heads • Basic criteria for a standard markup language • Completeness, simplicity, consistency, intuitiveness, abstraction, usability, standardization, evaluation • VHML • An open specification available at the VHML web site • Two applications • MetaFace, an ECA • The Mentor systems, a dialogue management system

Value of Empathy in Synthesis • ECA with emotions or a personality: Increase the feeling of familiarity • Application areas with common anthropomorphic metaphors • Universities • E-commerce • Web guides • Traveling • Interactive games • Knowledge interactive companions

Computer Human User Interfaces – Analysis • “Aware”: The user does not know how to perform the task • Gestalt user interfaces (gUIs) • “gestalt”: An organized whole in which each part affects every other part • The mind organizes events and situations as a pattern (the “big picture”) • The entities • Similarity, proximity, closure, continuity, membership • Input: A combined “stimulus” of text, button clicks, and analyzed facial, vocal, and emotional gestures • Recognition: Translate the stimulus into the intent
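The input and recognition steps on this slide can be sketched as a small data structure plus a recognizer. This is a minimal illustration only, not the gUI implementation: the `Stimulus` fields, the `recognize_intent` function, the intent strings, and the toy rule ordering are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Stimulus:
    """One combined input event (hypothetical structure)."""
    text: str = ""                                      # typed text, if any
    button_clicks: List[str] = field(default_factory=list)
    facial_emotion: Optional[str] = None                # analyzed facial gesture
    vocal_emotion: Optional[str] = None                 # analyzed vocal gesture


def recognize_intent(stimulus: Stimulus) -> str:
    """Translate a combined stimulus into an intent label.

    Toy rule (an assumption): an analyzed emotion of "frustration"
    outranks the explicit input, then clicks, then text.
    """
    if "frustration" in (stimulus.facial_emotion, stimulus.vocal_emotion):
        return "needs_help"
    if stimulus.button_clicks:
        return "activate:" + stimulus.button_clicks[-1]
    if stimulus.text.strip():
        return "query:" + stimulus.text.strip()
    return "idle"


if __name__ == "__main__":
    print(recognize_intent(Stimulus(text="cheap flights",
                                    facial_emotion="frustration")))
```

The point of the sketch is that text, clicks, and analyzed emotion arrive as one object, so the recognizer can weigh them together rather than handling each channel separately.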

gUIs: Value of Empathy in Analysis • “more complete” encapsulation of human interaction • The empathic ability of an interface • The extra functionality acquired • An intuitive interface • Overlooked empathic state of the virtual character • Attend: Considering the thoughts and feelings of the user • Engage: Aligning actions, thoughts, and feelings with those of the user • Value: Expressing the value of the user’s interaction • Encourage: Expressing encouragement for further interaction • Parting: Suspending dialogue while the user performs other tasks • Available: Allowing the user to interrupt for interaction

gUI Research Objectives • Formal specification, development, implementation, evaluation, and standardization of a markup language are necessary to provide a stable, consistent base for both industrial use and future research into multi-modal Human-Computer interaction • MPEG-4: Solutions to facial and body animation at the low level • Require a unified standard architecture plus language to control/record the higher level human ECA interaction

The Role of the Anthropomorphic Interface Metaphor (1) • Anthropomorphic metaphor • To specify complete user interaction with an ECA • To use the analysis and synthesis of emotion and gesture • Desktop metaphor • The most predominant metaphor used in computer systems • The metaphor’s likeness to our perception of the world • “illusions” • Reduce cognitive overhead when users interact with an anthropomorphic interface • gUI applications become an extension of real life • Waste paper basket on the Macintosh desktop • The humanity of talking heads

The Role of the Anthropomorphic Interface Metaphor (2) • Anthropomorphic web applications • Bryan and Gerchman • Browsing: The leisurely navigation along an arbitrary path • Searching: Goal-oriented finding of specific information • A knowledgeable ECA (MetaFace) • Knows that you are getting frustrated in web searching • Offers assistance as a friend • Transcription • Representation, transformation, and storage of analyzed emotions and gestures • Real-time interaction and data representation • Efficient storage and communication of the data • Intelligent television application: handle real-time emotions and gestures to control the function of the TV

gUI Data Specification Issues (1) • “Affect recognition” • Proper gestalt understanding of the user requirements • “Non-verbal” behavior and “Non-verbal” communications web site (http://www3.usal.es/~nonverbal/paper.htm) • Temporal window • Differentiate short mixed emotions such as surprise before anger or fear • Detect more lengthy emotions such as gradual happiness as “understanding comes to someone” • Specification of the gUI input data format • At the lowest level: Efficient binary format • At the higher levels: a more structured format aiding in abstraction and cognitive understanding
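The temporal-window idea on this slide can be sketched as follows. This is a minimal illustration under stated assumptions, not the prototype's algorithm: the `EmotionSample` type, the `segment_emotions` function, and the 1.0-second threshold separating "short" from "lengthy" emotions are all hypothetical, and the samples are assumed to arrive sorted by time.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class EmotionSample:
    """One analyzed emotion reading (hypothetical structure)."""
    time_s: float   # seconds since the start of the interaction
    label: str      # e.g. "surprise", "anger", "happiness"


def _close(start: EmotionSample, end: EmotionSample,
           threshold_s: float) -> Tuple[str, float, float, str]:
    """Build one (label, start, end, kind) segment."""
    duration = end.time_s - start.time_s
    kind = "short" if duration < threshold_s else "lengthy"
    return (start.label, start.time_s, end.time_s, kind)


def segment_emotions(samples: List[EmotionSample],
                     short_threshold_s: float = 1.0
                     ) -> List[Tuple[str, float, float, str]]:
    """Group consecutive samples with the same label into segments.

    A segment shorter than short_threshold_s is tagged "short" (for
    example surprise before anger); anything else is tagged "lengthy".
    The threshold value is an illustrative assumption.
    """
    segments: List[Tuple[str, float, float, str]] = []
    if not samples:
        return segments
    start = prev = samples[0]
    for sample in samples[1:]:
        if sample.label != prev.label:
            segments.append(_close(start, prev, short_threshold_s))
            start = sample
        prev = sample
    segments.append(_close(start, prev, short_threshold_s))
    return segments


if __name__ == "__main__":
    readings = [EmotionSample(0.0, "surprise"), EmotionSample(0.2, "surprise"),
                EmotionSample(0.4, "anger"), EmotionSample(3.0, "anger")]
    for segment in segment_emotions(readings):
        print(segment)
```

Keeping the start and end times in each segment preserves the ordering information, so a brief surprise immediately followed by sustained anger stays distinguishable from anger alone.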

gUI Data Specification Issues (3) • Dynamic context transitions • Time-stamping of emotion and gestures: Synchronizing the resulting XML document and providing context • MPEG-4 face and body animation • Efficient communication standard • Emotion and gesture representation for real-time applications • Some problems in VHML data representation • Lack of a consistent set of emotions, and the ability to add more • Lack of ability to show change in different sets of emotions or just a single emotion • Lack of additional attributes for emotion besides intensity
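A time-stamped XML record of emotions and gestures, with attributes beyond intensity, might be produced along these lines. This is a sketch, not VHML or gUI syntax: the `stimulus`, `emotion`, and `gesture` element names, the `time_ms`, `intensity`, and `valence` attributes, and the `build_stimulus` function are all placeholders invented for the example. Only Python's standard `xml.etree.ElementTree` module is used.

```python
import xml.etree.ElementTree as ET


def build_stimulus(events):
    """Serialize time-stamped emotion and gesture events to an XML string.

    `events` is a list of dicts with the keys "kind" ("emotion" or
    "gesture"), "name", and "time_ms", plus any extra attributes such as
    "intensity". Every element and attribute name here is a placeholder,
    not part of any published specification.
    """
    root = ET.Element("stimulus")
    # Sorting by time stamp keeps the document in interaction order.
    for event in sorted(events, key=lambda e: e["time_ms"]):
        attributes = {key: str(value) for key, value in event.items()
                      if key != "kind"}
        ET.SubElement(root, event["kind"], attributes)
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    print(build_stimulus([
        {"kind": "gesture", "name": "nod", "time_ms": 900},
        {"kind": "emotion", "name": "surprise", "time_ms": 120,
         "intensity": 0.8, "valence": "negative"},
    ]))
```

Because attributes are copied from the event dictionary rather than fixed in advance, new emotions and new per-emotion attributes can be added without changing the serializer, which is the kind of extensibility the slide says VHML lacks.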

gUI Data Specification Issues (4) • A more realistic and extensible semantic structure • Gesture input

gUI Data Specification Issues (5) • Issues on specifying gestures • The huge diversity of human body gestures makes it hard to identify a base set for all analysis and synthesis applications • Each gesture carries its own associated information: there are no standard parameters for all gestures • Culturally dependent gestures • A less naïve format
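One way to accommodate gestures that have no common parameter set is an open-ended record per gesture. The sketch below is an assumption made for illustration, not the format the slides propose: the `Gesture` type, its `culture` and `params` fields, and the `describe` helper are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Gesture:
    """A recognized gesture with gesture-specific parameters (hypothetical)."""
    name: str                         # e.g. "nod", "point"
    time_ms: int                      # time stamp of the gesture
    culture: Optional[str] = None     # set only for culturally dependent gestures
    params: Dict[str, float] = field(default_factory=dict)  # free-form values


def describe(gesture: Gesture) -> str:
    """Render a gesture as a single readable line."""
    scope = gesture.culture if gesture.culture else "any culture"
    details = ", ".join(f"{key}={value}"
                        for key, value in sorted(gesture.params.items()))
    return (f"{gesture.name} @ {gesture.time_ms} ms ({scope}): "
            f"{details or 'no parameters'}")


if __name__ == "__main__":
    print(describe(Gesture("nod", 900, params={"repetitions": 2.0})))
    print(describe(Gesture("point", 1500, params={"angle_deg": 30.0,
                                                  "extension": 0.9})))
```

The free-form `params` mapping lets a nod record repetitions while a pointing gesture records an angle, at the cost of pushing validation of each gesture's parameters onto the consumer.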

Implementation Issues (1) • MetaFace framework • An example ECA used to test the underlying theories • Based on MPEG-4 and VHML • The ability to vocalize and visually display emotion and gesture • Dialogue management tool language (DMTL) • Evaluate tag: Infers the context

Conclusion • Challenges • The overlap of text input, gesture, and emotion as one coherent stimulus • Standardization of emotion and gesture representation • Complete user interaction with gestalt user interface • Use anthropomorphic metaphors • Reduce the conceptual load • Mentor and MetaFace research project