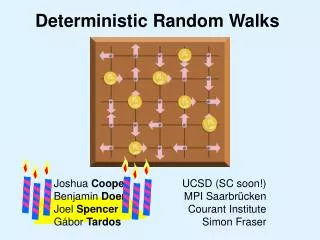

Benjamin Doerr




Introduction to Quasirandomness Benjamin Doerr Max-Planck-Institut für Informatik Saarbrücken

Randomness in Computer Science • Revolutionary Discovery (~1970): • Algorithms making random decisions (“randomized algorithms”) are extremely powerful. • Often, making all decisions at random suffices. • Such algorithms are • easy to find • easy to implement (“algorithm engineering”) • often easy to analyze. • Numerous applications: • Quicksort • primality testing • randomized rounding, ...

Randomness in Computer Science • Example: Exploring an unknown environment. • Environment: • Graph G = (V,E) • Task: Explore! • E.g.: “Is there a way from A to B?” • Simple randomized solution: • Do a random walk: Choose the next vertex to go to uniformly at random from your neighbors. • Analysis: For all graphs, the expected time to visit all vertices (“cover time”) is at most 2 |V| |E|.
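The random walk exploration above can be sketched in a few lines. This is a minimal illustration, not code from the talk: the 4-cycle example graph, the adjacency-list representation, and the names `random_walk_cover_time`, `cycle4`, and `bound` are all assumptions made for the example.

```python
import random

def random_walk_cover_time(adj, start, rng):
    """Number of steps a simple random walk needs to visit every vertex."""
    visited = {start}
    pos = start
    steps = 0
    while len(visited) < len(adj):
        pos = rng.choice(adj[pos])  # uniform choice among the neighbors
        visited.add(pos)
        steps += 1
    return steps

# Hypothetical example graph: a cycle on 4 vertices (adjacency lists).
cycle4 = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
num_edges = sum(len(nbrs) for nbrs in cycle4.values()) // 2
bound = 2 * len(cycle4) * num_edges  # the 2 |V| |E| bound quoted on the slide

rng = random.Random(0)  # fixed seed only so that runs are repeatable
times = [random_walk_cover_time(cycle4, 0, rng) for _ in range(1000)]
print("average cover time:", sum(times) / len(times), "bound:", bound)
```

The bound is a statement about the expectation, so single runs may exceed it on other graphs; averaging over many runs is the fair comparison.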

Beyond Randomness... • Pseudorandomness (not the topic of this talk): • Aim: Have something look completely random • Cope with the fact that true randomness is difficult to get. • Example: Pseudorandom numbers should look random regardless of the particular application. • Quasirandomness (topic of this talk): • Aim: Imitate a particular property of a random object. • Extract the good feature from the random object. • Example: Quasi-Monte-Carlo Methods (numerics) • Monte-Carlo Integration: Use random sample points. • QMC Integration: Use evenly distributed sample points.
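To make the Monte-Carlo versus QMC contrast concrete, here is a small one-dimensional sketch. The integrand x^2 on [0,1], the sample size, and the choice of the van der Corput sequence as the "evenly distributed" point set are assumptions for illustration; the talk does not name a particular sequence.

```python
import random

def van_der_corput(k, base=2):
    """k-th point of the van der Corput sequence: mirror the digits of k at the radix point."""
    q, denom = 0.0, 1.0
    while k:
        k, digit = divmod(k, base)
        denom *= base
        q += digit / denom
    return q

def mean_value(f, points):
    """Estimate the integral of f over [0, 1] by averaging f over the sample points."""
    return sum(f(x) for x in points) / len(points)

def f(x):
    return x * x  # exact integral over [0, 1] is 1/3

n = 1024
rng = random.Random(0)  # fixed seed only so that runs are repeatable
mc = mean_value(f, [rng.random() for _ in range(n)])          # random sample points
qmc = mean_value(f, [van_der_corput(k) for k in range(n)])    # evenly distributed points
print("MC error: ", abs(mc - 1 / 3))
print("QMC error:", abs(qmc - 1 / 3))
```

With n a power of two, the first n van der Corput points are exactly the multiples of 1/n, so the QMC estimate here only carries the small error of a regular grid.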

Outline of the Talk • Part 0: From Randomness to Quasirandomness [done] • Quasirandom: Imitate a particular aspect of randomness • Part 1: Quasirandom random walks • also called “Propp machine” or “rotor router model” • Part 2: Quasirandom rumor spreading • the right dose of randomness?

Reminder Quasirandomness • Imitate a particular property of a random object! • Why study this? • Understanding randomness (basic research): Is it truly the randomness that makes a random object useful, or just a particular property it usually has? • Learn from the random object and make it better (customize it to your application) without randomness! • Combine random and quasirandom elements: What is the right dose of randomness?

Part 1: Quasirandom Random Walks • Random Walk • How to make it quasirandom? • Three results: • Cover times • Discrepancies: Massive parallel walks • Internal diffusion limited aggregation (physics)

Random Walks • Rule: Move to a neighbor chosen at random


Quasirandom Random Walks • Rule: Follow the rotor. Rotor updates after each move.

Quasirandom Random Walks • Simple observation: If the random walk visits a vertex many times, then it leaves it to each neighbor approximately equally often • n visits to a vertex of constant degree d: n/d + O(n^{1/2}) moves to each neighbor. • Quasirandomness: Ensure that neighbors are served evenly! • Put a rotor on each vertex, pointing to one of its neighbors • The quasirandom walk moves in the rotor direction • After each step, the rotor turns and points to the next neighbor (following a given permutation of the neighbors)
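The rotor rule can be written down directly. The sketch below assumes that each vertex serves its neighbors in adjacency-list order and that all rotors initially point to the first list entry; both choices, the example graph, and the name `rotor_walk` are illustrative assumptions.

```python
from collections import Counter

def rotor_walk(adj, start, steps, rotors=None):
    """Follow the rotors for `steps` moves and return the list of visited vertices.

    rotors maps a vertex to the index of the neighbor its rotor points to
    (missing vertices default to index 0, an assumption of this sketch).
    """
    rotors = dict(rotors or {})
    pos = start
    path = [pos]
    for _ in range(steps):
        i = rotors.get(pos, 0)
        nxt = adj[pos][i]                      # move in the rotor direction
        rotors[pos] = (i + 1) % len(adj[pos])  # then turn the rotor to the next neighbor
        pos = nxt
        path.append(pos)
    return path

# Hypothetical example graph: a triangle 0-1-2 with a pendant vertex 3 attached to 1.
graph = {0: [1, 2], 1: [0, 2, 3], 2: [0, 1], 3: [1]}
path = rotor_walk(graph, 0, 50)
moves = Counter(zip(path, path[1:]))  # how often each directed edge was used
for u, nbrs in graph.items():
    print(u, [moves[(u, v)] for v in nbrs])
```

For every vertex, the printed counts differ by at most one: this is the "neighbors are served evenly" property, with the O(n^{1/2}) fluctuation of the random walk replaced by a constant.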

Quasirandom Random Walks • Other names • Propp machine (after Jim Propp) • Rotor router model • Deterministic random walk • Some freedom in the design • Initial rotor directions • Order in which the rotors serve the neighbors • Also: Alternative ways to ensure fairness in serving the neighbors • Fortunately: No real difference

Result 1: Cover Times • Cover time: How many steps does the (quasi)random walk need to visit all vertices? • Classical result [AKLLR’79]: For all graphs G=(V,E) and all vertices v, the expected time a random walk started in v needs to visit all vertices is at most 2 |E|(|V|-1). • Quasirandom: Same bound of 2|E|(|V|-1), independent of the particular set-up of the Propp machine. • Note: Same bound, but ‘sure’ instead of ‘expected’. • “Lesson to learn”: It is not randomness that yields the good cover time, but the fact that each vertex serves its neighbors evenly!
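Because the quasirandom walk is deterministic, the ‘sure’ bound can be checked exhaustively on small graphs. The following sketch enumerates all initial rotor directions and all start vertices; it assumes each vertex serves its neighbors in adjacency-list order, so only the initial directions vary. The example graphs and function names are hypothetical.

```python
from itertools import product

def rotor_cover_time(adj, start, rotors):
    """Steps until the rotor walk has visited every vertex (graph must be connected)."""
    rotors = dict(rotors)  # copy, so the caller's set-up is not modified
    visited = {start}
    pos, steps = start, 0
    while len(visited) < len(adj):
        i = rotors[pos]
        rotors[pos] = (i + 1) % len(adj[pos])
        pos = adj[pos][i]
        visited.add(pos)
        steps += 1
    return steps

def worst_rotor_cover_time(adj):
    """Maximum cover time over all start vertices and all initial rotor directions."""
    vertices = list(adj)
    worst = 0
    for setup in product(*(range(len(adj[v])) for v in vertices)):
        rotors = dict(zip(vertices, setup))
        for start in vertices:
            worst = max(worst, rotor_cover_time(adj, start, rotors))
    return worst

def slide_bound(adj):
    """The bound 2 |E| (|V| - 1) stated on the slide."""
    num_edges = sum(len(nbrs) for nbrs in adj.values()) // 2
    return 2 * num_edges * (len(adj) - 1)

# Hypothetical example graphs: a path on 3 vertices, and a triangle with a pendant vertex.
path3 = {0: [1], 1: [0, 2], 2: [1]}
pendant = {0: [1, 2], 1: [0, 2, 3], 2: [0, 1], 3: [1]}
for g in (path3, pendant):
    print("worst cover time:", worst_rotor_cover_time(g), "bound:", slide_bound(g))
```

Exhaustive enumeration grows with the product of the degrees, so this is only practical for tiny graphs; it is meant as a sanity check of the statement, not as evidence for it.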


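Example: Discrepancies on the Line • 19 chips start on one vertex; each row lists the occupied vertices from left to right.
Time 0 • Random Walk (Expectation): 19 • Quasirandom: 19 • Difference: 0
Time 1 • Random Walk (Expectation): 9.5, 9.5 • Quasirandom: 9, 10 • Difference: -0.5, 0.5
Time 2 • Random Walk (Expectation): 4.75, 9.5, 4.75 • Quasirandom: 5, 9, 5 • Difference: 0.25, -0.5, 0.25
Time 3 • Random Walk (Expectation): 2.375, 7.125, 7.125, 2.375 • Quasirandom: 2, 8, 6, 3 • Difference: -0.375, 0.875, -1.125, 0.625
• A discrepancy > 1! • But: You’ll never have more than 2.29 [Cooper, D, Spencer, Tardos]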
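The 19-chip example can be replayed with a short simulation. The initial rotor directions are not stated in the transcript; the rule used here (rotors on even positions point right, rotors on odd positions point left) is an assumption that happens to reproduce the quasirandom counts 2, 8, 6, 3 after three steps. All function names are illustrative.

```python
from collections import defaultdict
from fractions import Fraction

def initial_rotor(x):
    """Assumed initial rotor: +1 (right) on even positions, -1 (left) on odd ones."""
    return 1 if x % 2 == 0 else -1

def rotor_step(chips, rotors):
    """Move every chip one step; the chips on a vertex alternate between its two neighbors."""
    moved = defaultdict(int)
    for x, count in chips.items():
        d = rotors.get(x, initial_rotor(x))
        moved[x + d] += (count + 1) // 2  # chips 1, 3, 5, ... follow the rotor
        moved[x - d] += count // 2        # chips 2, 4, 6, ... go the other way
        if count % 2:                     # an odd number of chips leaves the rotor flipped
            d = -d
        rotors[x] = d
    return dict(moved)

def expected_step(mass):
    """Expected chip counts of the random walk: half of the mass goes each way."""
    moved = defaultdict(Fraction)
    for x, m in mass.items():
        moved[x - 1] += m / 2
        moved[x + 1] += m / 2
    return dict(moved)

chips, mass, rotors = {0: 19}, {0: Fraction(19)}, {}
for t in range(1, 4):
    chips, mass = rotor_step(chips, rotors), expected_step(mass)
    row = {x: (chips.get(x, 0), float(mass[x])) for x in sorted(mass)}
    print("time", t, row)  # position -> (quasirandom count, expected count)

discrepancy = max(abs(chips.get(x, 0) - mass[x]) for x in mass)
print("largest discrepancy at time 3:", float(discrepancy))
```

Exact fractions are used for the expectations so that the discrepancy is not blurred by floating-point rounding.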

Result 2: Discrepancies • Model: Many chips do a synchronized (quasi)random walk • Discrepancy: Difference between the number of chips on a vertex at a time in the quasirandom model and the expected number in the random model • Cooper, Spencer [CPC’06]: If the graph is an infinite d-dimensional grid, then (under some mild conditions) the discrepancies on all vertices at all times can be bounded by a constant (independent of everything!) • Note: Again, we compare ‘sure’ with ‘expected’

Result 3: Internal Diffusion Limited Aggregation • Model: • Start with an empty 2D grid. • Each round, insert a particle at ‘the center’ and let it do a (quasi)random walk until it finds an empty grid cell, which it then occupies. • What is the shape of the occupied cells after n rounds? • 100, 1600 and 25600 particles in the random walk model: [Moore, Machta]

Result 3: Internal Diffusion Limited Aggregation • Model: • Start with an empty 2D grid. • Each round, insert a particle at ‘the center’ and let it do a (quasi)random walk until it finds an empty grid cell, which it then occupies. • What is the shape of the occupied cells after n rounds? • With random walks: • Proof: outradius – inradius = O(n^{1/6}) [Lawler’95] • Experiment: outradius – inradius ≈ log2(n) [Moore, Machta’00] • With quasirandom walks: • Proof: outradius – inradius = O(n^{1/4+eps}) [Levine, Peres] • Experiment: outradius – inradius < 2
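The quasirandom version of the aggregation model is easy to simulate. In this sketch every grid cell carries a rotor that cycles through east, north, west, south, starting at east; that order and starting direction are assumptions, as are the function name and the particle count.

```python
import math

# Assumed rotor order on every cell: east, north, west, south.
DIRECTIONS = [(1, 0), (0, 1), (-1, 0), (0, -1)]

def rotor_aggregation(n):
    """Insert n particles at the origin; each follows the rotors to the first empty cell."""
    rotors = {}       # cell -> index into DIRECTIONS (default 0)
    occupied = set()
    for _ in range(n):
        pos = (0, 0)
        while pos in occupied:
            r = rotors.get(pos, 0)
            dx, dy = DIRECTIONS[r]
            rotors[pos] = (r + 1) % 4          # rotor turns after the move is chosen
            pos = (pos[0] + dx, pos[1] + dy)
        occupied.add(pos)
    return occupied

n = 500
cluster = rotor_aggregation(n)
out_radius = max(math.hypot(x, y) for x, y in cluster)
print("cells:", len(cluster))
print("outradius:", out_radius, "radius of a disc of area n:", math.sqrt(n / math.pi))
```

Rotor states persist from one particle to the next, which is what ties the walks together; resetting them for each particle would give a different process.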


Summary Quasirandom Walks • Model: • Follow the rotor and rotate it • Simulates: Balanced serving of neighbors • 3 particular results: Surprisingly good simulation (“or better”) of many random walk aspects. • Graph exploration in 2|E|(|V|-1) steps • Many chips: For grids, we have the same number of chips on each vertex at all times as expected in the random walk (apart from constant discrepancy) • IDLA: Almost perfect circle. • Try a quasirandom walk instead of a random one!

Part 2: Quasirandom Rumor Spreading • Classical: “Randomized Rumor Spreading” • protocol to distribute information in a network • How to make this quasirandom? • “The right dose of randomness”?

Randomized Rumor Spreading • Model (on a graph G): • Start: One node is informed • Each round, each informed node informs a neighbor chosen uniformly at random • Broadcast time T(G): Number of rounds necessary to inform all nodes (maximum taken over all starting nodes) • Round 0: Starting node is informed • Round 1: Starting node informs random node • Round 2: Each informed node informs a random node • Round 3: Each informed node informs a random node • Round 4: Each informed node informs a random node • Round 5: Let’s hope the remaining two get informed...

Randomized Rumor Spreading • Model (on a graph G): • Start: One node is informed • Each round, each informed node informs a neighbor chosen uniformly at random • Broadcast time T(G): Number of rounds necessary to inform all nodes (maximum taken over all starting nodes) • Application: • Broadcasting updates in distributed replicated databases • Properties: • simple • robust • self-organized

Randomized Rumor Spreading • Model (on a graph G): • Start: One node is informed • Each round, each informed node informs a neighbor chosen uniformly at random • Broadcast time T(G): Number of rounds necessary to inform all nodes (maximum taken over all starting nodes) • Results [n: Number of nodes]: • Easy: For all graphs G, T(G) ≥ log(n) • Complete graphs: T(K_n) = O(log(n)) w.h.p. • Hypercubes: T({0,1}^d) = O(log(n)) w.h.p. • Random graphs: T(G_{n,p}) = O(log(n)) w.h.p., p > (1+eps)log(n)/n [Frieze & Grimmett (1985), Feige, Peleg, Raghavan, Upfal (1990), Karp, Schindelhauer, Shenker, Vöcking (2000)]
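A round-by-round simulation of this model is short. The sketch below runs it on a complete graph; the graph size, the seed, and the names `complete_graph` and `push_broadcast_rounds` are assumptions for illustration. It also makes the easy lower bound visible: the number of informed nodes can at most double per round, so at least log2(n) rounds are needed.

```python
import math
import random

def complete_graph(n):
    """Adjacency lists of the complete graph on nodes 0 .. n-1."""
    return {v: [u for u in range(n) if u != v] for v in range(n)}

def push_broadcast_rounds(adj, start, rng):
    """Rounds until all nodes are informed when each informed node calls a random neighbor."""
    informed = {start}
    rounds = 0
    while len(informed) < len(adj):
        # All calls of a round happen simultaneously, based on who was informed before it.
        called = {rng.choice(adj[v]) for v in informed}
        informed |= called
        rounds += 1
    return rounds

n = 64
rng = random.Random(0)  # fixed seed only so that runs are repeatable
rounds = push_broadcast_rounds(complete_graph(n), 0, rng)
print("rounds:", rounds, "lower bound log2(n):", math.ceil(math.log2(n)))
```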

Deterministic Rumor Spreading? • As above except: • Each node has a list of its neighbors. • Informed nodes inform their neighbors in the order of this list (starting at the top if done). • Simulates 2 “neighbor fairness” properties: • Within few rounds, a vertex informs distinct neighbors only. • Within many rounds, each neighbor is informed equally often [clearly the less useful property]

Deterministic Rumor Spreading? • As above except: • Each node has a list of its neighbors. • Informed nodes inform their neighbors in the order of this list (starting at the top if done). • Problem: Might take long... • Here: n-1 rounds. • No hope for quasirandomness here? • Example on the complete graph with nodes 1 to 6, one list per node: • Node 1: 2 3 4 5 6 • Node 2: 3 4 5 6 1 • Node 3: 4 5 6 1 2 • Node 4: 5 6 1 2 3 • Node 5: 6 1 2 3 4 • Node 6: 1 2 3 4 5
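The bad example can be replayed in code. The sketch uses nodes 0 to n-1 instead of 1 to n and the same cyclic lists (node i lists i+1, i+2, ... modulo n); every node starts at the top of its list in the round after it becomes informed. The function names are illustrative.

```python
def cyclic_lists(n):
    """Node i lists its neighbors in the order i+1, i+2, ..., i-1 (modulo n)."""
    return {i: [(i + j) % n for j in range(1, n)] for i in range(n)}

def list_broadcast_rounds(lists, start):
    """Rounds until all nodes are informed when every node works down its list from the top."""
    informed_at = {start: 0}  # node -> round in which it became informed
    rounds = 0
    while len(informed_at) < len(lists):
        rounds += 1
        calls = []
        for v, t0 in informed_at.items():
            k = rounds - t0 - 1                  # number of calls v has made so far
            calls.append(lists[v][k % len(lists[v])])
        for u in calls:
            informed_at.setdefault(u, rounds)    # keep the earliest round only
    return rounds

print(list_broadcast_rounds(cyclic_lists(6), 0))  # prints 5, i.e. n - 1 rounds
```

In every round all informed nodes call the same, single uninformed node, so only one node is gained per round.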


Semi-Deterministic Rumor Spreading • As above except: • Each node has a list of its neighbors. • Informed nodes inform their neighbors in the order of this list, but start at a random position in the list • Results (1): The log(n) bounds for • complete graphs, • hypercubes, • random graphs G_{n,p}, p > (1+eps) log(n)/n still hold independent of the structure of the lists [2 pieces of good news: (a) results hold, (b) things can be analyzed] [D, Friedrich, Sauerwald]
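A sketch of the semi-deterministic protocol follows, run on the complete graph with cyclic neighbor lists (node i lists i+1, i+2, ... modulo n), which are bad for the fully deterministic variant. The only randomness is one random starting position per node, drawn when the node becomes informed. Graph size, seed, and function names are assumptions.

```python
import math
import random

def cyclic_lists(n):
    """Node i lists its neighbors in the order i+1, i+2, ..., i-1 (modulo n)."""
    return {i: [(i + j) % n for j in range(1, n)] for i in range(n)}

def quasirandom_broadcast_rounds(lists, start, rng):
    """Rounds until all nodes are informed; each node starts at a random list position."""
    next_index = {start: rng.randrange(len(lists[start]))}  # informed node -> next list position
    rounds = 0
    while len(next_index) < len(lists):
        rounds += 1
        calls = []
        for v, i in next_index.items():
            calls.append(lists[v][i])
            next_index[v] = (i + 1) % len(lists[v])  # continue cyclically through the list
        for u in calls:
            if u not in next_index:                  # newly informed: draw its random start
                next_index[u] = rng.randrange(len(lists[u]))
    return rounds

n = 32
rng = random.Random(0)  # fixed seed only so that runs are repeatable
rounds = quasirandom_broadcast_rounds(cyclic_lists(n), 0, rng)
print("rounds:", rounds, "log2(n):", math.ceil(math.log2(n)), "deterministic worst case:", n - 1)
```

Each node needs only one random number in total, compared with one per round in the fully randomized protocol.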

Semi-Deterministic Rumor Spreading • Results (2): • Random graphs G_{n,p}, p = (log(n)+log(log(n)))/n: • fully randomized: T(G_{n,p}) = Θ(log(n)^2) w.h.p. • semi-deterministic: T(G_{n,p}) = Θ(log(n)) w.h.p. • Complete k-regular trees: • fully randomized: T(G) = Θ(k log(n)) w.h.p. • semi-deterministic: T(G) = Θ(k log(n)/log(k)) w.p.1 • Algorithm Engineering Perspective: • need fewer random bits • easy to implement: Any implicitly existing permutation of the neighbors can be used for the lists

Outlook: The Right Dose of Randomness? • Broadcasting results also indicate that the right dose of randomness can be important! • Alternative to the classical “everything independent at random” • Maybe an interesting direction for future research? • Related: • Dependent randomization, e.g., dependent randomized rounding: • Gandhi, Khuller, Parthasarathy, Srinivasan (FOCS’01+02) • D. (STACS’06+07) • Randomized search heuristics: Combine random and other techniques, e.g., greedy • Example: Evolutionary algorithms • Mutation: Randomized • Selection: Greedy (survival of the fittest)

Summary • Quasirandomness: • Simulate a particular aspect of a random object • Surprising results: • Quasirandom walks • Quasirandom rumor spreading • For future research: • Good news: Quasirandomness can be analyzed (in spite of ‘nasty’ dependencies) • Many open problems • “What is the right dose of randomness?” Grazie!