Streamlining Distributed Data Analysis with CRAB in CMS Grid Environment


CRAB (CMS Remote Analysis Builder) is designed to facilitate data analysis by the CMS collaboration in a vast distributed environment. The CMS experiment at CERN's LHC is expected to produce around 2 PB of data annually. CRAB provides a user-friendly interface for researchers unfamiliar with grid infrastructure. It automates job creation, data discovery, resource management and output retrieval, so physicists can focus on analysis rather than technical hurdles, and it simplifies access to distributed datasets.

Streamlining Distributed Data Analysis with CRAB in CMS Grid Environment

E N D

Presentation Transcript

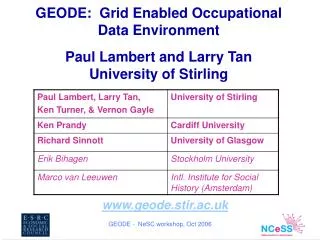

CRAB: a tool for CMS distributed analysis in grid environment
Federica Fanzago, INFN Padova

Introduction
CMS (Compact Muon Solenoid) is one of the four particle physics experiments that will collect data at the LHC (Large Hadron Collider) at CERN, starting in 2007. CMS will produce a large amount of data (events), and these data must be made available for analysis to physicists distributed worldwide.
• CMS will produce ~2 PB of events per year (assuming a startup luminosity of 2×10^33 cm^-2 s^-1)
• All events will be stored in files: O(10^6) files/year
• Files will be grouped into fileblocks: O(10^3) fileblocks/year
• Fileblocks will be grouped into datasets: O(10^3) datasets in total after 10 years of CMS, ranging from 0.1 to 100 TB each
A "bunch crossing" occurs every 25 ns, with 100 "triggers" per second; each triggered event is ~1 MB in size.
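The figures above are consistent with one another at the order-of-magnitude level. This minimal sketch assumes about 10^7 s of data taking per year, a conventional figure that the slide does not state. On that basis, 100 triggers/s × ~1 MB/event gives 100 MB/s, or roughly 1 PB of raw events per year, which is the same order as the quoted ~2 PB. Spreading ~2 PB over O(10^6) files gives files of order 2 GB. The slide does not break the ~2 PB total down further.

```python
# Back-of-envelope check of the data volumes quoted on the slide.
# ASSUMPTION (not on the slide): ~1e7 s of effective data taking per year,
# a conventional round figure for an accelerator "physics year".

TRIGGER_RATE_HZ = 100         # triggered events written per second (slide)
EVENT_SIZE_MB = 1.0           # ~1 MB per triggered event (slide)
LIVE_SECONDS_PER_YEAR = 1e7   # assumption, see above
FILES_PER_YEAR = 1e6          # O(10^6) files/year (slide)


def raw_volume_pb(rate_hz: float, event_mb: float, seconds: float) -> float:
    """Raw event volume in PB, using decimal units (1 PB = 1e9 MB)."""
    return rate_hz * event_mb * seconds / 1e9


def mean_file_size_gb(volume_pb: float, n_files: float) -> float:
    """Average file size in GB (1 PB = 1e6 GB) if volume_pb is spread over n_files."""
    return volume_pb * 1e6 / n_files


if __name__ == "__main__":
    rate_mb_s = TRIGGER_RATE_HZ * EVENT_SIZE_MB
    raw_pb = raw_volume_pb(TRIGGER_RATE_HZ, EVENT_SIZE_MB, LIVE_SECONDS_PER_YEAR)
    print(f"raw data rate: {rate_mb_s:.0f} MB/s")           # 100 MB/s
    print(f"raw volume per year: {raw_pb:.1f} PB")          # 1.0 PB
    print(f"mean file size (2 PB over 1e6 files): "
          f"{mean_file_size_gb(2.0, FILES_PER_YEAR):.1f} GB")  # 2.0 GB
```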

Issues and help
How should this huge quantity of data be managed, and where should it be stored? How can data access be assured for physicists of the CMS collaboration? How can enough computing power be provided for processing and data analysis? How can resource and data availability be ensured? How should local and global policies on data access and resources be defined?
CMS will use a distributed architecture based on grid infrastructure. Tools for accessing distributed data and resources are provided by WLCG (Worldwide LHC Computing Grid), which comes in two main flavours: LCG/gLite in Europe and OSG in the US.

CMS computing model
[Figure: the tiered CMS computing model. Tier 0: online system, offline farm (recorded data) and the CERN computer center; Tier 1: regional centers (Italy, Fermilab, France, ...); Tier 2: Tier2 centers; Tier 3: institute workstations (Institute A, Institute B)]
The CMS offline computing system is arranged in four tiers and is geographically distributed. Remote data are accessible via the grid.
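The four-tier arrangement forms a tree in which every site reports to one site a tier above it, up to Tier 0 at CERN. The sketch below models that structure with a simple parent map. Only the regional centers named in the figure are real labels; the Tier 2 and Tier 3 names and their attachments are hypothetical. The sketch models the hierarchy only and does not describe how data actually move or are accessed via the grid.

```python
from typing import Dict, List, Optional

# Simplified, hypothetical encoding of the tier hierarchy drawn in the figure:
# each site points to the site one tier above it (Tier 0 has no parent).
# "Tier2 Center A" and "Institute A" are illustrative names; which regional
# center a given Tier 2 hangs off is an assumption, not taken from the figure.
PARENT: Dict[str, Optional[str]] = {
    "CERN Computer Center": None,                         # Tier 0
    "Italy Regional Center": "CERN Computer Center",      # Tier 1
    "Fermilab Regional Center": "CERN Computer Center",   # Tier 1
    "France Regional Center": "CERN Computer Center",     # Tier 1
    "Tier2 Center A": "Italy Regional Center",            # Tier 2
    "Institute A": "Tier2 Center A",                      # Tier 3
}


def path_to_tier0(site: str) -> List[str]:
    """List the sites from `site` up to and including the Tier 0 center."""
    path = [site]
    parent = PARENT[site]
    while parent is not None:
        path.append(parent)
        parent = PARENT[parent]
    return path


def tier_of(site: str) -> int:
    """Tier number = number of hops from the site up to Tier 0."""
    return len(path_to_tier0(site)) - 1


def sites_in_tier(tier: int) -> List[str]:
    """All modelled sites at a given tier, sorted by name."""
    return sorted(s for s in PARENT if tier_of(s) == tier)
```

For example, `path_to_tier0("Institute A")` walks Institute A, Tier2 Center A, Italy Regional Center and CERN Computer Center, so `tier_of("Institute A")` is 3.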

Analysis: what happens in a local environment...
The user writes his own analysis code and configuration parameter card, starting from CMS-specific analysis software, and builds the executable and libraries. He applies the code to a given number of events, whose location is known, and splits the load over many jobs. Generally, though, he is allowed to access only local data. He writes wrapper scripts and uses a local batch system to exploit all the available computing power. This is comfortable as long as the data you are looking for are sitting right beside you. He then submits everything by hand and checks the status and overall progress. Finally, he collects all the output files and stores them somewhere.
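Splitting the load over many jobs amounts to cutting the requested event range into contiguous chunks. The sketch below is minimal and uses a hypothetical function name. It is driven by the same two quantities that CRAB's splitting uses: events per job and total events.

```python
from typing import List, Tuple


def split_events(total_events: int, events_per_job: int) -> List[Tuple[int, int]]:
    """Split `total_events` into jobs of at most `events_per_job` events.

    Returns (first_event, n_events) pairs with 0-based first-event indices.
    The last job takes whatever remains, so it may be smaller than the others.
    """
    if total_events < 0 or events_per_job <= 0:
        raise ValueError("need total_events >= 0 and events_per_job > 0")
    jobs = []
    first = 0
    while first < total_events:
        n = min(events_per_job, total_events - first)
        jobs.append((first, n))
        first += n
    return jobs
```

For example, `split_events(2500, 1000)` returns `[(0, 1000), (1000, 1000), (2000, 500)]`. Each pair then becomes the arguments of one batch (or grid) job.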

...and in a distributed grid environment
Distributed analysis is a more complex computing task, because it requires knowing which data are available, where the data are stored and how to access them, which resources are available and able to meet the analysis requirements, and the details of the grid and CMS infrastructure. But users don't want to deal with these kinds of problems: they want to analyze data "in a simple way", as in a local environment.

Distributed analysis chain...
To allow analysis in a distributed environment, the CMS collaboration is developing tools interfaced with grid services, which include:
• Installation of CMS software via the grid on remote resources
• Data transfer service: to move and manage a large flow of data among tiers
• Data validation system: to ensure data consistency
• Data location system: to keep track of the data available at each site and to allow data discovery. It is composed of a central database (RefDB), which knows what kind of data (datasets) have been produced at each tier, and a local database (PubDB) at each tier, with information about where the data are stored and their access protocol
• CRAB: CMS Remote Analysis Builder...
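The division of labour between the central and local databases can be sketched as a two-step lookup: first ask the central catalogue which sites have a dataset, then ask each site's catalogue where the data are and how to read them. The illustration below uses in-memory dictionaries. The dataset names, site names, paths and protocols are hypothetical. The real RefDB and PubDB were services whose query interfaces are not reproduced here.

```python
from typing import Dict, List, Tuple

# Hypothetical stand-in for the central catalogue (RefDB role):
# dataset -> sites where it has been produced.
REFDB: Dict[str, List[str]] = {
    "ttbar_sample": ["site_a", "site_b"],
    "higgs_sample": ["site_b", "site_c"],
}

# Hypothetical stand-ins for per-site catalogues (PubDB role):
# site -> dataset -> (storage location, access protocol).
PUBDBS: Dict[str, Dict[str, Tuple[str, str]]] = {
    "site_a": {"ttbar_sample": ("/storage/a/ttbar", "rfio")},
    "site_b": {"ttbar_sample": ("/pnfs/b/ttbar", "dcap"),
               "higgs_sample": ("/pnfs/b/higgs", "dcap")},
    # site_c has no local catalogue entry, so it is skipped below.
}


def discover(dataset: str) -> List[Tuple[str, str, str]]:
    """Return (site, location, protocol) for every site publishing `dataset`.

    Step 1: the central catalogue says which sites host the dataset.
    Step 2: each site's local catalogue says where the files are and how
    to access them. Sites that do not (yet) publish the dataset are skipped.
    """
    results = []
    for site in REFDB.get(dataset, []):
        entry = PUBDBS.get(site, {}).get(dataset)
        if entry is not None:
            location, protocol = entry
            results.append((site, location, protocol))
    return results
```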

...and CRAB's role
CRAB is a user-friendly tool that aims to make it easier for users with no knowledge of grid infrastructure to create, submit and manage analysis jobs in grid environments. It is written in Python and installed on the UI (User Interface, the grid user access point). Users develop their analysis code in an interactive environment and decide which data to analyse. They have to provide CRAB with the dataset name and number of events, the analysis code and parameter card, and the output files and handling policy. CRAB handles data discovery, resource availability, job creation and submission, status monitoring and output retrieval.
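The user-side inputs listed above can be captured in a single task description that is validated before anything is sent to the grid. This sketch assumes hypothetical field names and policy values, and it does not reproduce CRAB's actual configuration file format. The number of events per job is included because the job-splitting step needs it. The two output policies mirror the options CRAB offers for output handling: copying back to the UI or to a Storage Element.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical labels for the two output-handling options.
OUTPUT_POLICIES = ("return_to_ui", "copy_to_se")


@dataclass
class AnalysisRequest:
    """What the user hands over to CRAB (field names are hypothetical)."""
    dataset: str                  # dataset name
    total_events: int             # number of events to analyse
    events_per_job: int           # granularity of the job splitting
    executable: str               # user's analysis executable
    parameter_card: str           # configuration/parameter card
    output_files: List[str] = field(default_factory=list)
    output_policy: str = "return_to_ui"

    def validate(self) -> None:
        """Reject obviously incomplete requests before any grid interaction."""
        if not self.dataset:
            raise ValueError("dataset name is required")
        if self.total_events <= 0 or self.events_per_job <= 0:
            raise ValueError("event counts must be positive")
        if self.output_policy not in OUTPUT_POLICIES:
            raise ValueError(f"unknown output policy: {self.output_policy}")

    def n_jobs(self) -> int:
        """Number of jobs after splitting (ceiling division)."""
        return -(-self.total_events // self.events_per_job)
```

For instance, a request for 2500 events at 1000 events per job validates and yields `n_jobs() == 3`.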

How CRAB works
Job creation: crab -create N (or all)
• data discovery: sites storing the data are found by querying RefDB and the local PubDBs
• packaging of user code: creation of a tgz archive with the user code (bin, lib and data)
• wrapper script (sh) for the real user executable
• JDL file: the script that drives the real job towards the grid
• splitting: according to the user's request (number of events per job and in total)
Job submission: crab -submit N (or all) -c
• jobs are submitted to the Resource Broker using BOSS, the submission and tracking tool interfaced with CRAB
• jobs are sent to the sites that host the data
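For each job produced by the splitting, a JDL file tells the Resource Broker what to run and where it may run. This minimal sketch assumes a hypothetical wrapper name, tarball name and argument convention (job id, first event, number of events). The attribute names follow standard JDL: Executable, Arguments, StdOutput, StdError, InputSandbox, OutputSandbox and Requirements. The Requirements expression uses the Glue-schema attribute GlueCEInfoHostName to restrict matching to CEs at data-hosting sites. This is not the JDL that CRAB actually generates.

```python
from typing import Sequence


def jdl_list(items: Sequence[str]) -> str:
    """Render a JDL list literal, e.g. {"a", "b"}."""
    return "{" + ", ".join(f'"{item}"' for item in items) + "}"


def make_jdl(job_id: int, first_event: int, n_events: int,
             wrapper: str, tarball: str, outputs: Sequence[str],
             data_ces: Sequence[str]) -> str:
    """Build a minimal JDL for one job of a split task.

    Hypothetical conventions: the wrapper receives (job id, first event,
    number of events) as arguments and unpacks `tarball` (user bin/lib/data)
    on the worker node. Requirements only allow CEs at sites hosting the data.
    """
    if not data_ces:
        raise ValueError("no site hosts the requested data")
    stdout, stderr = f"job_{job_id}.out", f"job_{job_id}.err"
    # OR together one hostname condition per data-hosting CE.
    requirements = " || ".join(
        f'other.GlueCEInfoHostName == "{ce}"' for ce in data_ces)
    attributes = [
        f'Executable = "{wrapper}";',
        f'Arguments = "{job_id} {first_event} {n_events}";',
        f'StdOutput = "{stdout}";',
        f'StdError = "{stderr}";',
        f"InputSandbox = {jdl_list([wrapper, tarball])};",
        f"OutputSandbox = {jdl_list([stdout, stderr, *outputs])};",
        f"Requirements = {requirements};",
    ]
    return "[\n  " + "\n  ".join(attributes) + "\n]"


if __name__ == "__main__":
    print(make_jdl(2, 1000, 1000, "crab_wrapper.sh", "user_code.tgz",
                   ["histos.root"], ["ce01.site-a.example", "ce02.site-b.example"]))
```

Generating the site restriction from the data-discovery result keeps the "send jobs where the data are" rule in one place.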

How CRAB works (2)
Job monitoring: crab -status (n_of_job)
• the status of all submitted jobs is checked using BOSS
Job output management: crab -getoutput (n_of_job)
• depending on the user's request, CRAB can copy the output back to the UI...
• ...or copy it to a Storage Element
Job resubmission: crab -resubmit n_of_job
• if a job suffers a grid failure (Aborted or Cancelled status)
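The resubmission rule above is a simple filter over the job statuses reported by monitoring. This minimal sketch assumes that statuses arrive as a mapping from job number to status. Status names other than Aborted and Cancelled are hypothetical labels. The real query goes through BOSS, which is not modelled here.

```python
from collections import Counter
from typing import Dict, List

# Statuses treated as grid failures eligible for resubmission (per the slide).
RESUBMITTABLE = {"Aborted", "Cancelled"}


def summarize(statuses: Dict[int, str]) -> Counter:
    """Count jobs per status, e.g. for a one-line progress report."""
    return Counter(statuses.values())


def jobs_to_resubmit(statuses: Dict[int, str]) -> List[int]:
    """Job numbers whose status indicates a grid failure, in ascending order."""
    return sorted(job for job, status in statuses.items() if status in RESUBMITTABLE)
```

With statuses `{1: "Done", 2: "Aborted", 3: "Running", 4: "Cancelled"}`, only jobs 2 and 4 are selected for resubmission.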

CRAB experience
CRAB has been used by tens of users to access remote MC data for Physics TDR analysis: ~7000 datasets are available, for O(10^8) events in total (full MC production). Via CRAB, CMS users use two dedicated Resource Brokers (at CERN and at CNAF) that know all CMS sites. CRAB proves that CMS users are able to use the available grid services and that the full analysis chain works in a distributed environment!

CRAB usage
[Figures: top 20 CEs where CRAB jobs run; top 20 datasets/owners requested by users]
CRAB is currently used to analyse data for the CMS Physics TDR (being written now...). During the second half of last year, 40-50 users submitted more than 300,000 jobs to the grid using CRAB.
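To give a rough sense of scale, take the second half of the year as about 26 weeks. The totals above then correspond to the averages below. The slide gives the total as a lower bound ("more than 300,000"), so these averages are lower bounds too.

```latex
\frac{3\times10^{5}\ \text{jobs}}{26\ \text{weeks}} \approx 1.2\times10^{4}\ \text{jobs/week},
\qquad
\frac{3\times10^{5}\ \text{jobs}}{50\ \text{users}} = 6000\ \text{jobs/user},
\qquad
\frac{3\times10^{5}\ \text{jobs}}{40\ \text{users}} = 7500\ \text{jobs/user}
```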

CRAB future
• As the CMS analysis framework and grid middleware evolve, CRAB has to adapt to these changes so that it stays usable and users keep their remote data access:
  • new data discovery components (DBS, DLS) that will replace RefDB and PubDB
  • a new Event Data Model (as analysis framework)
  • gLite, the new middleware for grid computing
• Open issues to be resolved (the number of users and submitted jobs is increasing...):
  • job policies and priorities at VO level, for example:
    • for the next three weeks, Higgs group users have priority over other groups
    • tracker alignment jobs performed by user xxx must start immediately
  • bulk submission: handle 1000 jobs as a single task, with just one submission/status/...
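The two example policies can be read as a priority ordering over queued jobs. The sketch below uses Python's heapq, with hypothetical class, field and group names. The slide lists VO-level priorities as an open issue and does not say how CRAB or the VO would implement them.

```python
import heapq
import itertools
from dataclasses import dataclass
from typing import List, Optional, Set, Tuple


@dataclass(frozen=True)
class Job:
    job_id: str
    user: str
    group: str
    kind: str = "analysis"   # hypothetical job category label


class VOScheduler:
    """Hypothetical VO-level priority queue encoding the two example policies.

    Lower number = higher priority; ties are broken first-come, first-served.
    """

    def __init__(self, urgent_users: Set[str], favoured_groups: Set[str]):
        self.urgent_users = urgent_users         # e.g. the tracker-alignment user
        self.favoured_groups = favoured_groups   # e.g. {"higgs"} for three weeks
        self._heap: List[Tuple[int, int, Job]] = []
        self._counter = itertools.count()        # FIFO tie-breaker, never repeats

    def priority(self, job: Job) -> int:
        if job.kind == "alignment" and job.user in self.urgent_users:
            return 0   # must start immediately
        if job.group in self.favoured_groups:
            return 1   # favoured group goes ahead of everyone else
        return 2

    def submit(self, job: Job) -> None:
        heapq.heappush(self._heap, (self.priority(job), next(self._counter), job))

    def next_job(self) -> Optional[Job]:
        """Pop the highest-priority job, or None if the queue is empty."""
        return heapq.heappop(self._heap)[2] if self._heap else None
```

A time-limited policy such as "for the next three weeks" would be handled here by editing `favoured_groups` when the window opens and closes. Jobs already queued keep the priority computed when they were submitted.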

CRAB future (2)
• CRAB will be split into two different components to minimize the user effort needed to manage analysis jobs and obtain their results.
• Some user actions will be delegated to "not user dependent" services, which follow the jobs' evolution on the grid, get the results and return them to the user.
• The Me/MyFriend idea:
  • Me: the user's desktop (laptop or shell), where the working environment is and where the user can work interactively, for user operations such as job creation and job submission
  • MyFriend: a set of robust services running 24x7 to guarantee the execution of job tracking, resubmission and output retrieval
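The division of labour between Me and MyFriend can be sketched as a service object that takes over a list of submitted jobs. It polls them repeatedly and resubmits grid failures up to a retry limit. This is a minimal sketch. The class name follows the slide, but its methods, the retry limit, the status names and the injected query/resubmit callables are all hypothetical, since the slide does not describe how the services are built.

```python
from typing import Callable, Dict, List


class MyFriend:
    """Hypothetical 'MyFriend' service: keeps tracking jobs after 'Me' submits them.

    `query_status(job)` and `resubmit(job)` stand in for grid operations and
    are supplied by the caller; they are assumptions, not a real API.
    """
    FAILED = {"Aborted", "Cancelled"}

    def __init__(self, query_status: Callable[[int], str],
                 resubmit: Callable[[int], None], max_retries: int = 3):
        self.query_status = query_status
        self.resubmit = resubmit
        self.max_retries = max_retries
        self.pending: Dict[int, int] = {}   # job -> resubmissions used so far
        self.finished: List[int] = []       # jobs whose output can be retrieved
        self.given_up: List[int] = []       # jobs that exhausted their retries

    def track(self, jobs: List[int]) -> None:
        """Take over jobs that the user has already submitted."""
        for job in jobs:
            self.pending[job] = 0

    def poll(self) -> None:
        """One tracking cycle; a 24x7 service would repeat this periodically."""
        for job in list(self.pending):
            status = self.query_status(job)
            if status == "Done":
                self.finished.append(job)   # output retrieval would happen here
                del self.pending[job]
            elif status in self.FAILED:
                if self.pending[job] < self.max_retries:
                    self.pending[job] += 1
                    self.resubmit(job)
                else:
                    self.given_up.append(job)
                    del self.pending[job]
```

The user only calls `track` once. After that, progress is visible in `finished`, `pending` and `given_up` without the user having to stay logged in.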

Conclusion
CRAB was born in April 2005. A big effort has gone into understanding user needs and how best to use the services provided by the grid, and a lot of work has been done to make CRAB robust, flexible and reliable. Users appreciate the tool and are asking for further improvements. Many CMS collaborators have used CRAB to analyze remote data for the CMS Physics TDR, data that would otherwise not be accessible. CRAB is also used to continuously test the CMS tiers and to prove the robustness of the whole infrastructure. The use of CRAB proves that the complete computing chain for distributed analysis works for a generic CMS user!
http://cmsdoc.cern.ch/cms/ccs/wm/www/Crab/

Back-up slides

Statistics with CRAB (1)
[Figure: weekly rate of the CRAB-job flow from 10.07.05 to 22.01.06; number of jobs per week on LCG and OSG, in absolute terms and as %]

Statistics with CRAB (2)
[Figure: efficiency, i.e. the percentage of jobs that arrive at a WN (worker node) on the remote CE and run; panels: INFN CEs, all CEs]