CS435/535: Internet-Scale Applications

CS435/535: Internet-Scale Applications, Lecture 24: Service Backend Design: Storage/State Management: Local FS and Single Networked FS (4/20/2010)

Admin. • Please schedule two project meetings with instructors • Project report due: May 11

Recap: Data Center Trend • Mega data centers • Microsoft DC @ Chicago: 500,000+ servers • Virtualization at DC • 10 VMs per server -> 5 million addresses • Large-scale app provider • All-to-all internal communication • Multiple applications

Recap: Data Center Design • How to achieve high interconnect capacity? • How to design large L2 domain? • How to achieve application isolation?

Recap: Support for Backend Processing
• Programming model and workload/processing management
  • Means for rapidly installing a service's code on a server
  • MapReduce/Dryad, virtual machines, disk images
• Network infrastructure
  • Means for communicating with other servers, regardless of where they are in the data center(s)
  • Data center design
• Storage management
  • Means for a server to store and access data
  • Distributed storage systems

Outline • Admin. • Storage system

Context • Some example data that we may store at the backend servers: • Web pages, inverted index • Content videos • Google map • User profiles, documents, emails, calendars, photos • Facebook feed of a user • Inventory • Shopping cart • User/device presence state • …

Context • Storage design depends on what you store and what you want, e.g., • Access pattern: e.g., mostly read or mostly write • Item size and # of items • Soft state vs hard state • Availability vs reliability • … • Storage system design is still evolving rapidly • Focus on problems/basic concepts, and sketch of solutions

Outline • Admin. • Storage system • Review of design points in a local file system

Discussion/Review: Major Design Points of a Local File System

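Local FS: Name Space Management
• Many notions: name, directory, inode, block, file descriptor (bound to an inode in Unix)
• Impact of introducing the file descriptor?
  • Performance: the file name is looked up only once, at open time
  • Semantics under concurrency: the descriptor keeps referring to the same inode even if another process renames or unlinks the file name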
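A minimal Python sketch of the descriptor/inode binding, assuming a POSIX system (Linux or macOS) where renaming an open file is allowed. The helper name `rename_while_open` is hypothetical. The name "f1" is resolved to an inode once, at `os.open`; the later rename, standing in for another process, only changes the directory entry, so writes through the descriptor still reach the same file.

```python
import os
import tempfile


def rename_while_open():
    """Rename a file while a descriptor is open on it, then keep writing via the descriptor.

    Returns (old name still exists?, descriptor and new name share an inode?, final contents).
    """
    with tempfile.TemporaryDirectory() as d:
        old_path = os.path.join(d, "f1")
        new_path = os.path.join(d, "f1.renamed")
        with open(old_path, "wb") as f:
            f.write(b"hello")

        fd = os.open(old_path, os.O_WRONLY)   # name -> inode lookup happens here, once
        try:
            os.rename(old_path, new_path)     # stands in for a concurrent rename by another process
            same_inode = os.fstat(fd).st_ino == os.stat(new_path).st_ino
            os.lseek(fd, 0, os.SEEK_END)
            os.write(fd, b" world")           # still goes to the original inode
        finally:
            os.close(fd)

        with open(new_path, "rb") as f:
            contents = f.read()
        return os.path.exists(old_path), same_inode, contents


print(rename_while_open())  # (False, True, b'hello world')
```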

Local FS: “3 Write Problems”
• When and how are the effects of a write committed to disk?
  • Persistence vs. performance
  • Data structure/locality (write the data in place, or write a log)
• How to handle the semantics of a file system operation that requires multiple disk writes?
  • Crash recovery and FS consistency
  • E.g., adding a new block: mark the block as not free, and add the block # to the inode
  • E.g., creating a new file modifies multiple disk blocks (allocate a free inode, update the list of free inodes, record the name/inode pair as a directory entry, which may require allocating a new block, …)
• How (and whether) to handle concurrent writes?
  • Performance vs. consistency (lost updates or mixed data)
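One common answer to the multiple-disk-write question is journaling (write-ahead logging): log the new contents of every block the operation touches, write a single commit record, and only then update the blocks in place. The Python sketch below models this for the "add a new block" example. `ToyDisk`, `add_block_to_inode`, and `recover` are hypothetical names, and the "disk" is a dict whose writes are assumed durable and atomic per record; a crash is simulated by returning early.

```python
from dataclasses import dataclass, field


@dataclass
class ToyDisk:
    """Hypothetical disk: every write to blocks or log is assumed durable (survives a crash)."""
    blocks: dict = field(default_factory=dict)   # block number -> contents
    log: list = field(default_factory=list)      # on-disk redo journal


def add_block_to_inode(disk, inode_blk, free_map_blk, new_block, crash_at=None):
    """Two-block update (free map + inode) made all-or-nothing with a redo log.

    crash_at simulates a crash: "before_commit" or "after_commit".
    """
    updates = {
        free_map_blk: disk.blocks[free_map_blk] - {new_block},   # mark block as not free
        inode_blk: disk.blocks[inode_blk] + (new_block,),        # add block # to inode
    }
    disk.log.append(("update", updates))   # 1. log the new contents of both blocks
    if crash_at == "before_commit":
        return
    disk.log.append(("commit",))           # 2. one commit record decides the outcome
    if crash_at == "after_commit":
        return
    disk.blocks.update(updates)            # 3. checkpoint: write the blocks in place
    disk.log.clear()                       # 4. the journal entry is no longer needed


def recover(disk):
    """Run after a crash: redo committed updates, discard uncommitted ones."""
    pending = None
    for record in disk.log:
        if record[0] == "update":
            pending = record[1]
        elif record[0] == "commit" and pending is not None:
            disk.blocks.update(pending)    # redo writes fixed values, so replaying twice is harmless
            pending = None
    disk.log.clear()


# Inode (block 1) owns data block 10; blocks 11 and 12 are free (block 2 is the free map).
disk = ToyDisk(blocks={1: (10,), 2: frozenset({11, 12})})
add_block_to_inode(disk, inode_blk=1, free_map_blk=2, new_block=11, crash_at="after_commit")
recover(disk)
print(disk.blocks)   # {1: (10, 11), 2: frozenset({12})}
```

If the crash happens before the commit record is written, `recover` throws the logged update away, so neither block changes; either way the free map and the inode never disagree.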

Outline • Admin. • Storage system • Review of local file system (FS) • Networked FS with one server

Single-Server Networked Storage System • Application logic/servers generally -> a single storage server (e.g., DB server, file server) • Source: Brion Vibber, CTO, Wikipedia, 2008 slides

Example: NFSv2/3 Architecture [Figure: several NFS clients connected to one NFS server] • Design objectives • Compatible with local Unix FS semantics if possible • Acceptable performance

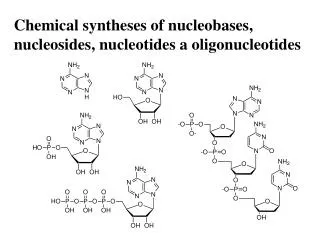

NFSv3 Server Procedures • LOOKUP • READ, WRITE • CREATE, RENAME, • MKDIR, RMDIR • GETATTR, SETATTR, LINK, • … http://tools.ietf.org/html/rfc1813

Example: NFS Messages for Reading a File • Why does NFS use file handles?

NFSv3 READ
• Input:
  struct READ3args { nfs_fh3 file; offset3 offset; count3 count; };
• Output:
  struct READ3resok { post_op_attr file_attributes; count3 count; bool eof; opaque data<>; };
• READ must specify an offset, whereas a local FS read() typically does not (the current offset is kept in the open-file state). Implication of removing the offset?

NFSv3 Design Decision: Stateless Server
• What is a file handle?
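To see why the stateless design works, here is a minimal Python model (all names hypothetical, not the NFS wire format). Every READ carries the file handle, offset, and count, as in READ3args, so the client rather than the server tracks the current offset, and a server restart loses no per-client state. The handle's fields (file system id, inode number, generation number) are an assumption about what a server might encode; to the client, an NFS file handle is opaque.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileHandle:
    """Hypothetical handle contents; the client treats a real NFS handle as opaque."""
    fsid: int
    inode: int
    generation: int   # lets the server reject a handle whose inode was freed and reused


class StatelessServer:
    def __init__(self, files):
        self.files = files   # inode -> (generation, contents); no per-client state at all

    def read(self, fh, offset, count):
        """Mirrors READ3args -> READ3resok: (file, offset, count) -> (data, eof)."""
        generation, contents = self.files[fh.inode]
        if generation != fh.generation:
            raise LookupError("stale file handle")
        data = contents[offset:offset + count]
        return data, offset + len(data) >= len(contents)


class Client:
    """The client, not the server, remembers the current offset."""

    def __init__(self, server, fh):
        self.server, self.fh, self.offset = server, fh, 0

    def read(self, count):
        data, _eof = self.server.read(self.fh, self.offset, count)
        self.offset += len(data)
        return data


files = {7: (1, b"abcdefgh")}
client = Client(StatelessServer(files), FileHandle(fsid=1, inode=7, generation=1))
print(client.read(3))                    # b'abc'
client.server = StatelessServer(files)   # server "reboots": nothing per-client was lost
print(client.read(3))                    # b'def'
```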

NFS WRITE
• Design question: the spectrum of persistence strategies for a client writing to the server
  • Do not write from client to server until close
    • Typically called close-to-open semantics; e.g., the Linux NFS client in kernels newer than 2.4.20 supports this mode
  • Write back (not persistent, asynchronous)
    • Sends data to the server but does not ask the server to sync it to disk
  • Write through (synchronous, persisted to disk)
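A toy Python model of the three client strategies, assuming whole-file writes and a server with a volatile cache in front of stable storage (all class and mode names are hypothetical). It shows which data survives a server crash that happens before the client closes the file. Only the flush-on-close half of close-to-open is modeled; real clients also revalidate cached data at open.

```python
class Server:
    """Toy file server with a volatile cache in front of stable storage."""

    def __init__(self):
        self.disk = {}    # stable storage: survives a crash
        self.cache = {}   # volatile buffer cache: lost on a crash

    def write(self, name, data, sync):
        if sync:                          # write through: persist before replying
            self.disk[name] = data
            self.cache.pop(name, None)
        else:                             # write back: reply before data is on disk
            self.cache[name] = data

    def read(self, name):
        return self.cache.get(name, self.disk.get(name))

    def crash(self):
        self.cache.clear()


class Client:
    MODES = ("close_to_open", "write_back", "write_through")

    def __init__(self, server, mode):
        if mode not in self.MODES:
            raise ValueError(mode)
        self.server, self.mode, self.dirty = server, mode, {}

    def write(self, name, data):
        if self.mode == "close_to_open":
            self.dirty[name] = data       # kept on the client; nothing sent yet
        else:
            self.server.write(name, data, sync=(self.mode == "write_through"))

    def close(self, name):
        if name in self.dirty:            # flush on close (assumed synchronous here)
            self.server.write(name, self.dirty.pop(name), sync=True)


for mode in Client.MODES:
    s = Server()
    c = Client(s, mode)
    c.write("f", b"v1")
    s.crash()                             # server crashes before the client closes "f"
    print(mode, s.read("f"))              # only write_through prints b'v1'
```

Close-to-open loses nothing at the server in this scenario only because nothing was sent yet; the data still lives on the client and would be lost if the client crashed instead.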

NFS Write Evolution
• NFSv2 (http://tools.ietf.org/html/rfc1094)
  struct writeargs { fhandle file; unsigned beginoffset; unsigned offset; unsigned totalcount; nfsdata data; };
  • Two client modes
    • Close-to-open
    • Synchronous write (write through)
• NFSv3: allows asynchronous writes

NFSv3 WRITE
• Input:
  enum stable_how { UNSTABLE = 0, DATA_SYNC = 1, FILE_SYNC = 2 };
  struct WRITE3args { nfs_fh3 file; offset3 offset; count3 count; stable_how stable; opaque data<>; };
• Output:
  struct WRITE3resok { wcc_data file_wcc; count3 count; stable_how committed; writeverf3 verf; };

NFSv3 Write
• NFSv3 asynchronous write
  • Typical client behavior
    • UNSTABLE writes (for fast writes)
    • COMMIT a group of writes later
  • Problem in a networked setting: the server may crash and lose previously written (uncommitted) data, but the client does not know (no fate sharing)
  • The server verifier (verf) is a cookie that allows a client to detect a server crash/reboot; verf can be the server start time
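A minimal Python sketch of UNSTABLE writes followed by COMMIT, with the verifier used to detect a server reboot. Assumptions: the verifier is a boot counter rather than the server start time, writes are keyed by offset in a single file, and the method names are hypothetical rather than the RFC 1813 procedure signatures. The client keeps every uncommitted write until a COMMIT returns the same verifier as the WRITEs it covers; a mismatch means the server may have lost them, so they are resent.

```python
class NfsServer:
    """Toy server: UNSTABLE writes sit in a volatile cache until COMMIT."""

    def __init__(self):
        self.disk, self.cache = {}, {}
        self.verf = 1                      # stands in for the server start time

    def write_unstable(self, offset, data):
        self.cache[offset] = data
        return self.verf

    def commit(self):
        self.disk.update(self.cache)
        self.cache.clear()
        return self.verf

    def reboot(self):
        self.cache.clear()                 # uncommitted data is lost ...
        self.verf += 1                     # ... and the verifier changes


class NfsClient:
    def __init__(self, server):
        self.server = server
        self.uncommitted = {}              # offset -> (data, verf returned by WRITE)

    def write(self, offset, data):
        verf = self.server.write_unstable(offset, data)
        self.uncommitted[offset] = (data, verf)   # keep a copy until a COMMIT covers it

    def commit(self):
        while True:
            verf = self.server.commit()
            if all(v == verf for _, v in self.uncommitted.values()):
                self.uncommitted.clear()   # every buffered write is now on stable storage
                return verf
            # Verifier changed: the server restarted after some UNSTABLE writes. Resend them.
            for off, (data, _) in list(self.uncommitted.items()):
                self.uncommitted[off] = (data, self.server.write_unstable(off, data))


server = NfsServer()
client = NfsClient(server)
client.write(0, b"aaaa")
client.write(4, b"bbbb")
server.reboot()            # crash between WRITE and COMMIT
client.commit()
print(server.disk)         # {0: b'aaaa', 4: b'bbbb'}
```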

NFSv3 Write
• WRITE returns wcc_data:
  struct wcc_data { pre_op_attr before; post_op_attr after; };
• Allows a client to detect changes made by others between its own writes, supporting concurrency detection
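A small Python sketch of how a client might use wcc_data: if the `before` attributes match what the client had cached, no other client modified the file in between, so the client can adopt `after` as its new cached attributes; otherwise it invalidates its cache. The `Attr` type with only `size` and a logical `mtime` counter is a simplification (assumption); in RFC 1813 the pre-operation attributes are size, mtime, and ctime.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Attr:
    size: int
    mtime: int   # simplification: a logical modification counter


@dataclass(frozen=True)
class WccData:
    before: Optional[Attr]   # like pre_op_attr: may be absent
    after: Optional[Attr]    # like post_op_attr: may be absent


def update_cached_attr(cached: Optional[Attr], wcc: WccData) -> Optional[Attr]:
    """Return the new cached attributes, or None to invalidate the client's cache."""
    if cached is not None and wcc.before is not None and wcc.before == cached:
        return wcc.after     # nobody else touched the file between our operations
    return None              # another client changed it, or we cannot tell


mine = Attr(size=100, mtime=5)
print(update_cached_attr(mine, WccData(Attr(100, 5), Attr(120, 6))))  # Attr(size=120, mtime=6)
print(update_cached_attr(mine, WccData(Attr(110, 6), Attr(130, 7))))  # None
```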

NFSv3 CREATE
• Input:
  enum createmode3 { UNCHECKED = 0, GUARDED = 1, EXCLUSIVE = 2 };
  union createhow3 switch (createmode3 mode) { case UNCHECKED: case GUARDED: sattr3 obj_attributes; case EXCLUSIVE: createverf3 verf; };
  struct CREATE3args { diropargs3 where; createhow3 how; };
• Output:
  struct CREATE3resok { post_op_fh3 obj; post_op_attr obj_attributes; wcc_data dir_wcc; };
• EXCLUSIVE means no duplicate
• What is verf? A combination of a client identifier, e.g., the client network address, and a unique number generated by the client, e.g., the RPC transaction identifier. The server creates the file and stores verf in stable storage before returning.
• Why verf?
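A Python sketch of how a server could use the EXCLUSIVE-mode verifier to make CREATE safe to retransmit. Assumptions: the hypothetical `make_verf` hashes the client address and XID into 8 bytes (the size of createverf3), the dict `stable` stands in for stable storage, and replies are simplified to the strings "OK" and "EXIST". A retransmitted CREATE carrying the same verf succeeds again instead of failing because the file now exists.

```python
import hashlib


def make_verf(client_addr: str, xid: int) -> bytes:
    """Hypothetical 8-byte createverf3 built from the client address and RPC XID."""
    return hashlib.sha256(f"{client_addr}/{xid}".encode()).digest()[:8]


class ExclusiveCreateDir:
    def __init__(self):
        self.stable = {}   # name -> verf stored with the file (stands in for stable storage)

    def create_exclusive(self, name: str, verf: bytes) -> str:
        stored = self.stable.get(name)
        if stored is None:
            self.stable[name] = verf   # create the file and persist verf before replying
            return "OK"
        if stored == verf:
            return "OK"                # our own CREATE retransmitted: repeat the success
        return "EXIST"                 # created by a different request


d = ExclusiveCreateDir()
v = make_verf("10.0.0.1", 42)
print(d.create_exclusive("f1", v))                          # OK
print(d.create_exclusive("f1", v))                          # OK (retry after a lost reply)
print(d.create_exclusive("f1", make_verf("10.0.0.2", 7)))   # EXIST
```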

NFS: Exactly-Once Semantics • An issue not present in a local FS is lost/repeated requests • A request from the client to the server can be lost, and the client retransmits the request • Retransmissions can cause issues with non-idempotent requests (examples?) • NFS tries to provide EOS using a reply cache • Cache entry indexed by source address + RPC transaction ID (XID)
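A minimal Python sketch of a reply cache keyed by (source address, XID), using RENAME as the non-idempotent example. The class name and reply strings are hypothetical. Without the cache, the retransmitted RENAME would re-execute, find that "a" no longer exists, and report an error even though the first attempt succeeded.

```python
class ReplyCacheServer:
    """Toy server whose only non-idempotent call is RENAME."""

    def __init__(self, names):
        self.names = set(names)
        self.reply_cache = {}            # (source address, XID) -> reply already sent

    def rename(self, src_addr, xid, old, new):
        key = (src_addr, xid)
        if key in self.reply_cache:      # retransmission: resend the reply, do not re-execute
            return self.reply_cache[key]
        if old in self.names:
            self.names.remove(old)
            self.names.add(new)
            reply = "OK"
        else:
            reply = "NOENT"              # what a naive re-execution of the retry would return
        self.reply_cache[key] = reply
        return reply


s = ReplyCacheServer({"a"})
print(s.rename("10.0.0.1", 100, "a", "b"))   # OK (suppose this reply is lost)
print(s.rename("10.0.0.1", 100, "a", "b"))   # OK (retransmission answered from the cache)
```

This cache lives only in memory and grows without bound; a real server bounds it, and after a server reboot it is empty, so a retransmission arriving then would be re-executed.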

EOS with Reply Cache • Problem with the reply cache: • True and complete EOS is not possible unless the server persists the reply cache in stable storage, but persisting it for each request is expensive • NFSv3 solution: for selected calls (e.g., CREATE in EXCLUSIVE mode), the client supplies a verifier (verf) that the server persists and uses to identify the request

Summary So Far: Issues Using One Network File Server • Design issues that do not arise in a local FS, or matter more than they do there • Stateless vs. stateful server • Lost/repeated requests and EOS • Asynchronous writes with server crash

Discussion • What do you want to remove from/add to NFSv3?

Exercise: How to Append in NFSv3?
• App 1: append data in buf1 to file “/d1/f1”:
  • Get the file handle for /d1/f1 (LOOKUP)
  • …
  • GETATTR to retrieve the file size, size
  • WRITE buf1 at file offset size
• App 2: append data in buf2 to file “/d1/f1”:
  • …
  • Get the file handle for /d1/f1 (LOOKUP)
  • …
  • GETATTR to retrieve the file size, size
  • WRITE buf2 at file offset size
• [Figure: the two sequences interleaved over time]
• What is the result?
• Solution: use a lock (at the server) to synchronize
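The append race can be replayed deterministically in Python. Each client is a generator that yields between steps, and a round-robin scheduler interleaves them so both GETATTRs happen before either WRITE. The lock is a hypothetical server-side lock (NFSv3 itself does not define one), held from before GETATTR until after WRITE; all names are illustrative.

```python
class FileServer:
    def __init__(self):
        self.data = bytearray()
        self.lock_owner = None

    def getattr_size(self):
        return len(self.data)

    def write(self, offset, buf):
        end = offset + len(buf)
        if len(self.data) < end:
            self.data.extend(b"\0" * (end - len(self.data)))
        self.data[offset:end] = buf

    def try_lock(self, owner):
        if self.lock_owner in (None, owner):
            self.lock_owner = owner
            return True
        return False

    def unlock(self, owner):
        if self.lock_owner == owner:
            self.lock_owner = None


def appender(server, name, buf, use_lock):
    """One client's append: [lock] -> GETATTR -> WRITE at size -> [unlock]."""
    if use_lock:
        while not server.try_lock(name):
            yield                      # blocked: let the other client run
    size = server.getattr_size()
    yield                              # the other client may run between GETATTR and WRITE
    server.write(size, buf)
    if use_lock:
        server.unlock(name)
    yield


def run(use_lock):
    server = FileServer()
    clients = [appender(server, "A", b"1111", use_lock),
               appender(server, "B", b"2222", use_lock)]
    while clients:                     # strict round-robin interleaving
        for c in list(clients):
            try:
                next(c)
            except StopIteration:
                clients.remove(c)
    return bytes(server.data)


print(run(use_lock=False))   # b'2222': both wrote at offset 0, A's append is lost
print(run(use_lock=True))    # b'11112222'
```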

NFSv4/4.1: From Stateless to Stateful
• Integrating locking into the NFS protocol necessarily makes it stateful.
• An NFSv4 server can grant a lock of any type:
  • opens,
  • byte-range locks,
  • delegations (recallable guarantees by the server to the client that other clients will not reference or modify a particular file until the delegation is returned), and
  • layouts
• The server assigns a unique stateid that represents a set of locks (often a single lock) for the same file, of the same type, and sharing the same ownership characteristics
• All stateids associated with a given client ID are associated with a common lease
• The server guarantees exclusive access to a client until the lease expires or the client holding the lease is notified
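A toy Python lock manager showing the core lease rule: a lock stays valid only while its holder's lease is unexpired, so the server can reclaim it from a silent client without contacting that client. Assumptions: time is passed in explicitly, each client has one lease covering all its locks, and acquiring a lock renews the lease (NFSv4 renews leases through client operations, but the exact rules are simplified here). The class and method names are hypothetical.

```python
class LeaseServer:
    """Toy lock manager: every lock a client holds is covered by that client's lease."""

    def __init__(self, lease_time):
        self.lease_time = lease_time
        self.lease_expiry = {}   # client_id -> time when its lease runs out
        self.locks = {}          # file -> client_id currently holding the lock

    def renew(self, client_id, now):
        self.lease_expiry[client_id] = now + self.lease_time

    def holder(self, file, now):
        owner = self.locks.get(file)
        if owner is not None and self.lease_expiry.get(owner, 0) <= now:
            del self.locks[file]   # lease expired: reclaim without contacting the client
            return None
        return owner

    def lock(self, client_id, file, now):
        owner = self.holder(file, now)
        if owner not in (None, client_id):
            return False           # someone else holds the lock under an unexpired lease
        self.renew(client_id, now) # assumption: acquiring a lock also renews the lease
        self.locks[file] = client_id
        return True


server = LeaseServer(lease_time=90)
print(server.lock("c1", "/d1/f1", now=0))     # True
print(server.lock("c2", "/d1/f1", now=50))    # False: c1's lease runs until 90
print(server.lock("c2", "/d1/f1", now=100))   # True: c1 went silent and its lease expired
```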

Discussion • Pros of using lease • Problems of using lease

Exercise: Design Question • CS signup calendar: http://cs-www.cs.yale.edu/calendars/signup/signup.cgi?event=kurose.02.04.10.ev

Exactly-Once Semantics
• Non-idempotent requests
  • RENAME
• Idempotent modifying requests
  • WRITE. Repeated execution of the same WRITE has the same effect as executing that WRITE a single time. Nevertheless, enforcing EOS for WRITEs and other idempotent modifying requests is necessary to avoid data corruption. Suppose a client sends WRITE A to a noncompliant server that does not enforce EOS, and receives no response, perhaps due to a network partition. The client reconnects to the server and re-sends WRITE A. Now the server has two outstanding instances of A. The server can be in a situation in which it executes and replies to the retry of A while the first A is still waiting in the server's internal I/O system for some resource. Upon receiving the reply to the second attempt of WRITE A, the client believes its WRITE is done, so it is free to send WRITE B, which overlaps the byte range of A. When the original A is dispatched from the server's I/O system and executed (so A is written a second time), what B wrote can be overwritten and thus corrupted.
• Idempotent non-modifying requests
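The WRITE A / WRITE B scenario can be replayed in Python. The hypothetical `WriteServer` can park a request in a pending queue to mimic the server's internal I/O system; with `enforce_eos=True` it executes each XID at most once, using a set of executed XIDs as a stand-in for a reply cache. The two runs differ only in whether the stale copy of A is executed again.

```python
class WriteServer:
    def __init__(self, enforce_eos):
        self.data = bytearray()
        self.enforce_eos = enforce_eos
        self.executed = set()   # XIDs already executed (stand-in for a reply cache)
        self.pending = []       # requests stuck in the server's internal I/O system

    def _execute(self, xid, offset, buf):
        if self.enforce_eos and xid in self.executed:
            return "OK (duplicate, not re-executed)"
        end = offset + len(buf)
        if len(self.data) < end:
            self.data.extend(b"\0" * (end - len(self.data)))
        self.data[offset:end] = buf
        self.executed.add(xid)
        return "OK"

    def receive(self, xid, offset, buf, delay=False):
        if delay:
            self.pending.append((xid, offset, buf))   # no reply is sent yet
            return None
        return self._execute(xid, offset, buf)

    def drain(self):
        while self.pending:
            self._execute(*self.pending.pop(0))


def scenario(enforce_eos):
    s = WriteServer(enforce_eos)
    s.receive(1, 0, b"AAAA", delay=True)   # original WRITE A gets stuck; client sees no reply
    s.receive(1, 0, b"AAAA")               # client retransmits A; the retry runs and replies
    s.receive(2, 2, b"BBBB")               # client now sends B, overlapping bytes 2-3 of A
    s.drain()                              # the stuck original A finally executes
    return bytes(s.data)


print(scenario(enforce_eos=False))   # b'AAAABB': B's bytes 2-3 were overwritten
print(scenario(enforce_eos=True))    # b'AABBBB'
```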