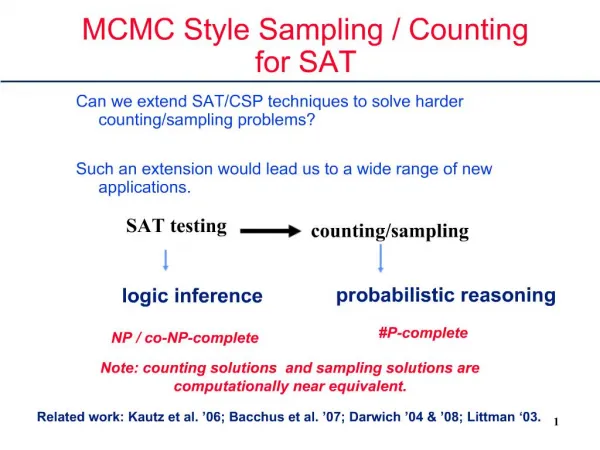

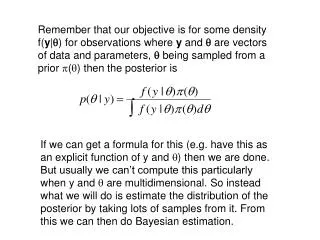

Understanding MCMC Techniques for Data Association Exploration

Gain insights into mixing MCMC chains for data association through log likelihood plots and sampling methods like Gibbs and Metropolis Hasting. Explore toy models, proposals distributions, and Gibbs sampling with augmented paths. Get hands-on experience with MATLAB scripts for live animations and parameter adjustments.

Understanding MCMC Techniques for Data Association Exploration

E N D

Presentation Transcript

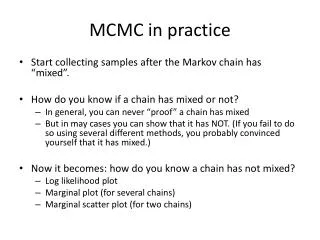

MCMC in practice • Start collecting samples after the Markov chain has “mixed”. • How do you know if a chain has mixed or not? • In general, you can never “proof” a chain has mixed • But in may cases you can show that it has NOT. (If you fail to do so using several different methods, you probably convinced yourself that it has mixed.) • Now it becomes: how do you know a chain has not mixed? • Log likelihood plot • Marginal plot (for several chains) • Marginal scatter plot (for two chains)

Initialized from a bad (non-informative) configuration Initialized from a “good” configuration (found by other methods) Mixing? Log likelihood Probably iterations iterations Log likelihood NO iterations iterations

Each dot is a statistic (e.g., P(X_1 = x_10)) • x-position is its estimation value from chain 1 • y-position is its estimation value from chain 2 Mixing? NO Probably

Toy Model for Data Association • Blue dots: variables, x_i (i=1,2,3,4) • Red dots: observations (values that we assign to variables) • What does the distribution look like? distance (A) (B) (C)

How do we sample from it? • Add one observation (such that Gibbs would work) • Two modes: • Gibbs • How does it traverse between the two modes? • Block Gibbs (block size = 2) • How do we sample? • Metropolis Hasting • Take larger steps using a proposal distribution. (We will come to details of this later.)

Try it yourself • Connect to clusters with graphics • Windows https://itservices.stanford.edu/service/unixcomputing/unix/moreX • MacOS or Linux ssh –x user@corn.stanford.edu • Copy the code to your own directory cp –r /afs/ir/class/cs228/mcmc ./ cdmcmc • Run Matlab and execute the following scripts VisualMCMC1(10000, 0.1, 0.05); % live animation of sampling % parameters: num of samples, sigma, pause time after each sample Plot1; % the first few lines of Plot1.m contain the parameters you may want to play around with

Proposal distributions for M-H • Back to the model with 4 observations • What will Gibbs do on this? • Proposal distribution 1 (flip two) • Randomly pick two variables, flip their assignments • What is the acceptance probability?

Proposal distributions for M-H • Proposal distribution 2 (augmented path) • 1. randomly pick one variable • 2. sample it pretending that all observations are available • 3. pick the variable whose assignment was taken (conflict), goto step 2 • 4. loop until step 2 creates no conflict • What is the acceptance probability?

Proposal distributions for M-H • Proposal distribution 3 (“smart” augmented path) • Same as the previous one except for the highlighted • 1. randomly pick one variable • 2. sample it pretending that all observations are available (excluding the current one) • 3. pick the variable whose assignment was taken (conflict), goto step 2 • 4. loop until step 2 creates no conflict • What is the acceptance probability?

Try it yourself • Run the following Matlab scripts: VisualMCMC2(10000, 0.7, 0.05); % live animation of sampling % parameters: num of samples, sigma, pause time after each sample Plot2; % the first few lines of Plot2.m contain the parameters you may want to play around with

Try to fix Gibbs with annealing • A skewed distribution The right blue dot is moved up a little bit such that a < b • How does the distribution look like? • What would you use for annealing? (A) Multiple shorter chains. (B) One longer chain (suppose that our computation resource cannot afford multiple long chains) a b

Try it yourself • Run the following Matlab scripts: VisualMCMC3(200, 0.06, 0.05, 50); % parameters: num of samples, sigma, pause time after each sample, num of chains to run • What you will see using default parameters: • Live animation of sampling for 5 chains with annealing, 5 chains without. • At the end of 200 samples: Estimate P(x1=1) using Gibbs without annealing: 0.664000 Estimate P(x1=1) using Gibbs with annealing: 0.876000 Estimate P(x1=1) using Metropolis Hasting: 0.909100 (numbers may vary in different runs) should be close to the true value