NUMA aware heap memory manager

NUMA aware heap memory manager. How to use our resources wisely in multi-thread and multi-processor systems. Michael Shteinbok, Shai Ben Nun. Supervisor: Dmitri Perelman.

Presentation Transcript

NUMA aware heap memory manager How to use our resources wisely in multi-thread and multi-processor systems Michael Shteinbok Shai Ben Nun Supervisor: Dmitri Perelman

What’s the problem? • Increasing the number of cores/processors might be expected to increase performance proportionally, but the gain is usually smaller, and sometimes adding cores even slows us down.

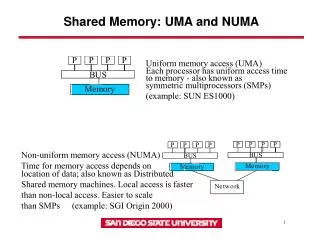

Ok, But Why? • Some physical issues can still cause a bottleneck, for example memory access and management. • UMA – Uniform Memory Access

Solution - NUMA • Non-Uniform Memory Access: each CPU has its own nearby memory with fast access. • A processor can access the other memory units, but with lower bandwidth (BW). • The NUMA API lets the programmer manage the memory.

How One Manages The Memory? • When we allocate and free, we are blind to the process in the background. For this need we have different library functions (glibc, TCMalloc, TCMalloc NUMA Aware). • The article addresses this management problem and offers the TCMalloc NUMA Aware heap manager http://developer.amd.com/Assets/NUMA_aware_heap_memory_manager_article_final.pdf

Performance Change • The previous solutions (more BW, more local access):

Project Goals • Improving the current TCMalloc NUMA Aware in scenarios where it loses performance • Managing the memory of different cores wisely and offering a new “read” function that will be faster (TBD)

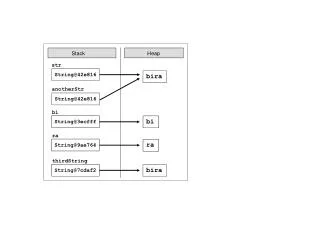

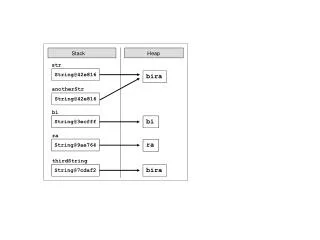

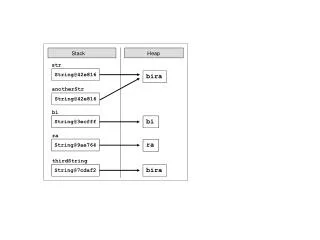

Problem In Current Solution • When a thread frees memory that was allocated by another thread (on another NUMA node), we can get a performance loss. [Diagram labels: Thread A, Thread B, X = Allocate, X = malloc, Thread A free pool, Free(X)]
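The cross-node free scenario can be illustrated with a toy model. This is only a sketch under stated assumptions, not TCMalloc code: `ToyNodePools`, `Block`, `free_to_caller_pool`, `free_to_home_pool`, and `is_remote` are hypothetical names, and the model assumes the loss comes from a freed block landing in the pool of the freeing thread's node, so that a later allocation on that node is served from remote memory. The slide does not state the mechanism, so this is one plausible reading.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <vector>

// Hypothetical toy model: a block remembers the NUMA node whose memory backs it.
struct Block {
    int home_node;
};

// One free pool per node. No real NUMA calls are made; nodes are just indices.
class ToyNodePools {
public:
    explicit ToyNodePools(int nodes) : pools_(static_cast<std::size_t>(nodes)) {}

    // Serve a thread running on `node`: reuse a pooled block if one exists,
    // otherwise create a fresh block homed on `node`.
    Block* allocate(int node) {
        auto& pool = pool_of(node);
        if (!pool.empty()) {
            Block* b = pool.back();
            pool.pop_back();
            return b;
        }
        storage_.push_back(Block{node});  // deque keeps element addresses stable
        return &storage_.back();
    }

    // Assumed problematic policy: the block goes to the freeing thread's pool,
    // even if it is backed by another node's memory.
    void free_to_caller_pool(Block* b, int freeing_node) {
        pool_of(freeing_node).push_back(b);
    }

    // Alternative policy: the block goes back to the pool of its home node.
    void free_to_home_pool(Block* b) {
        pool_of(b->home_node).push_back(b);
    }

private:
    std::vector<Block*>& pool_of(int node) {
        assert(node >= 0 && static_cast<std::size_t>(node) < pools_.size());
        return pools_[static_cast<std::size_t>(node)];
    }

    std::vector<std::vector<Block*>> pools_;
    std::deque<Block> storage_;
};

// True when a thread on `node` would be using memory backed by another node.
inline bool is_remote(const Block* b, int node) {
    return b->home_node != node;
}
```

In this model, allocating on node 0, freeing from node 1 with `free_to_caller_pool`, and then allocating on node 1 hands back a block for which `is_remote` is true. With `free_to_home_pool` the same sequence gives node 1 a fresh local block and leaves the original block available to node 0.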

Our Benchmark • Each couple of threads on a different NUMA node. • 8 quad-core processors => 16 couples in total. [Diagram labels: Allocator Thread, Alloc, Queue, Free Thread, Alloc, Rand List (5000), First-touch policy, Access Memory]
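One allocator/free couple from the benchmark might be sketched as follows. This is a minimal sketch, not the authors' benchmark code: `run_pair` and `PairResult` are hypothetical names, the queue is a plain mutex-protected `std::queue`, and the threads are not pinned to NUMA nodes (pinning would need a platform-specific API that is left out here). The allocator thread writes to each block right after allocating it, which under a first-touch policy is what would place the pages on the allocator's node.

```cpp
#include <cassert>
#include <condition_variable>
#include <cstddef>
#include <cstdlib>
#include <cstring>
#include <mutex>
#include <queue>
#include <thread>

// Hypothetical result type: how many blocks each side of the couple handled.
struct PairResult {
    std::size_t allocated = 0;
    std::size_t freed = 0;
};

// Runs one allocator thread and one free thread connected by a queue.
PairResult run_pair(std::size_t n_blocks, std::size_t block_size) {
    assert(block_size > 0);
    std::queue<void*> q;
    std::mutex m;
    std::condition_variable cv;
    bool done = false;
    PairResult r;

    std::thread allocator([&] {
        for (std::size_t i = 0; i < n_blocks; ++i) {
            void* p = std::malloc(block_size);
            if (p == nullptr) break;
            std::memset(p, 0xAB, block_size);  // first touch by the allocator
            {
                std::lock_guard<std::mutex> lock(m);
                q.push(p);
                ++r.allocated;
            }
            cv.notify_one();
        }
        {
            std::lock_guard<std::mutex> lock(m);
            done = true;
        }
        cv.notify_one();
    });

    std::thread freer([&] {
        for (;;) {
            std::unique_lock<std::mutex> lock(m);
            cv.wait(lock, [&] { return !q.empty() || done; });
            if (q.empty()) break;  // allocator finished and queue is drained
            void* p = q.front();
            q.pop();
            lock.unlock();
            std::free(p);  // freed by a different thread than the allocator
            ++r.freed;     // written only by this thread, read after join
        }
    });

    allocator.join();
    freer.join();
    return r;
}
```

A call such as `run_pair(5000, 64)` pushes 5000 blocks of 64 bytes through the queue. Both numbers are illustrative; the slide shows 5000 next to "Rand List", and using it as the block count here is an assumption.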

Achieving Project Goals Step By Step • Learn how TCMalloc works • Find scenarios that make the current TCMalloc lose performance • Sort the scenarios by how likely they are to occur in a real environment • Implement support for these scenarios • Make the new TCMalloc perform better!