Identifying Boundary of Different Classes of Objects

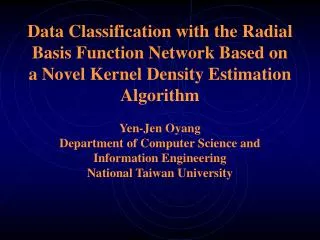

Data Classification with the Radial Basis Function Network Based on a Novel Kernel Density Estimation Algorithm Yen-Jen Oyang Department of Computer Science and Information Engineering National Taiwan University. Identifying Boundary of Different Classes of Objects. Boundary Identified.

Identifying Boundary of Different Classes of Objects

E N D

Presentation Transcript

Data Classification with the Radial Basis Function Network Based on a Novel Kernel Density Estimation AlgorithmYen-Jen OyangDepartment of Computer Science and Information EngineeringNational Taiwan University

The Proposed RBF Network Based Classifier • The proposed algorithm constructs one RBF network for approximating the probability density function of one class of objects. • Classification of a new object is conducted based on the likelihood function:

Rule Generated by the Proposed RBF(Radial Basis Function) Network Based Learning Algorithm Let and If then prediction=“O”. Otherwise prediction=“X”.

Problem Definition of Kernel Smoothing • Given the values of function at a set of samples . We want to find a set of symmetric kernel functions and the corresponding weights such that

Kernel Smoothing with the Spherical Gaussian Functions • Hartman et al. showed that a linear combination of spherical Gaussian functions can approximate any function with arbitrarily small error. • “Layered neural networks with Gaussian hidden units as universal approximations”, Neural Computation, Vol. 2, No. 2, 1990.

With the Gaussian kernel functions, we want to find such that

Problem Definition of Kernel Density Estimation • Assume that we are given a set of samples taken from a probability distribution in a d-dimensional vector space. The problem now is how to find a linear combination of kernel functions that approximate the probability density function of the distribution?

The value of the probability density function at a vector can be estimated as follows: where n is the total number of samples, is the distance between vector and its k-th nearest samples, and is the volume of a sphere with radius = in a d-dimensional vector space.

A 1-D Example of Kernel Smoothing with the Spherical Gaussian Functions

The Existing Approaches for Kernel Smoothing with Spherical Gaussian Functions • One conventional approach is to place one Gaussian function at each sample. As a result, the problem becomes how to find for each sample such that

The most widely-used objective is to minimize where are test samples and S is the set of training samples. • The conventional approach suffers high time complexity, approaching , due to the need to compute the inverse of a matrix.

M. Orr proposed a number of approaches to reduce the number of units in the hidden layer of the RBF network. • Beatson et. al. proposed O(nlogn) learning algorithms using polyharmonic spline functions.

An O(n) Algorithm for Kernel Smoothing • In the proposed learning algorithm, we assume uniform sampling. That is, samples are located at the crosses of an evenly-spaced grid in the d-dimensional vector space. Let denote the distance between two adjacent samples. • If the assumption of uniform sampling does not hold, then some sort of interpolation can be conducted to obtain the approximate function values at the crosses of the grid.

The Basic Idea of the O(n) Kernel Smoothing Algorithm • Under the assumption that the sampling density is sufficiently high, i.e. , we have the function values at a sample and its k nearest samples, , are virtually equal. That is, . • In other words, is virtually a constant function equal to in the proximity of

In the 1-D example, samples at located at , where i is an integer. • Under the assumption that , we have and • The issue now is to find appropriate and such that

Therefore, with , we can set and obtain for

In fact, it can be shown that with , is bounded by • Therefore, we have the following function approximator:

Generalization of the 1-D Kernel Smoothing Function • We can generalize the result by setting , where is a real number. • The table on the next page shows the bounds of with various values.

The Smoothing Effect • The kernel smoothing function is actually a weighted average of the sampled function values. Therefore, selecting a larger value implies that the smoothing effect will be more significant. • Our suggestion is set

An Example of the Smoothing Effect The smoothing effect Elimination of the smoothing effect with a compensation procedure

Compensation of the Smoothing Effect and Handling of Random Noises • Let denote the observed function value at sample , where is the random noise due to the sampling procedure. • The expected value of the random noise at each sample is 0.

According to the law of large numbers, for any real number , we have • Therefore, if then

The General Form of a Kernel Smoothing Function in the Multi-Dimensional Vector Space • Under the assumption that the sampling density is sufficiently high, i.e. , we have the function values at a sample and its k nearest samples, , are virtually equal. That is, .

As a result, we can expect that where are the weights and bandwidths of the Gaussian functions located at , respectively.

Since the influence of a Gaussian function decreases exponentially as the distance increases, we can set k to a value such that, for a vector in the proximity of sample , we have

Since we have our objective is to find and such that

Let Then, we have

Therefore, with , is virtually a constant function and • Accordingly, we want to set

Finally, by setting uniformly to , we obtain the following kernel smoothing function that approximates f(v):

Generally speaking, if we set uniformly to , we will obtain

Application in Data Classification • One of the applications of the RBF network is data classification. • However, recent development in data classification focuses on the support vector machines (SVM), due to accuracy concern. • In this lecture, we will describe a RBF network based data classifier that can delivers the same level of accuracy as the SVM and enjoys some advantages.

The Proposed RBF Network Based Classifier • The proposed algorithm constructs one RBF network for approximating the probability density function of one class of objects based on the kernel smoothing algorithm that we just presented.

The Proposed Kernel Density Estimation Algorithm for Data Classification • Classification of a new object is conducted based on the likelihood function:

Let us adopt the following estimation of the value of the probability density function at each training sample:

In the kernel smoothing problem, we set the bandwidth of each Gaussian function uniformly to , where is the distance between two adjacent training samples. • In the kernel density estimation problem, for each training sample, we need to determine the average distance between two adjacent training samples of the same class in the local region.

In the d-dimensional vector space, if the average distance between samples is , then the number of samples in a subspace of volume V is approximately equal to • Accordingly, we can estimate by

Accordingly, with the kernel smoothing function that we obtain earlier, we have the following approximate probability density function for class-m objects:

An interesting observation is that, regardless of the value of , we have . • If the observation holds generally, then

In the discussion above, is defined to be the distance between sample and its nearest training sample. • However, this definition depends on only one single sample and tends to be unreliable, if the data set is noisy. • We can replace with