Memory Management

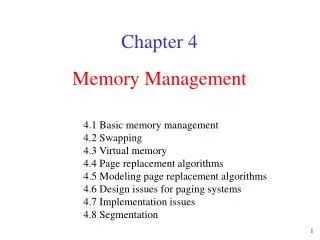

Memory Management. 4.1 Basic memory management 4.2 Swapping 4.3 Virtual memory 4.4 Page replacement algorithms 4.5 Modeling page replacement algorithms 4.6 Design issues for paging systems 4.7 Implementation issues 4.8 Segmentation. Chapter 4. Memory Management .

Memory Management

E N D

Presentation Transcript

Chapter 4: Memory Management • 4.1 Basic memory management • 4.2 Swapping • 4.3 Virtual memory • 4.4 Page replacement algorithms • 4.5 Modeling page replacement algorithms • 4.6 Design issues for paging systems • 4.7 Implementation issues • 4.8 Segmentation

Memory Management • Ideally programmers want memory that is • large • fast • nonvolatile • Memory hierarchy • small amount of fast, expensive memory – cache • some medium-speed, medium price main memory • gigabytes of slow, cheap disk storage • Memory manager handles the memory hierarchy

Basic Memory Management: Monoprogramming without Swapping or Paging • Three simple ways of organizing memory for an operating system with one user process

Multiprogramming with Fixed Partitions • Fixed memory partitions with separate input queues for each partition. Disadvantage: a large partition may sit empty while jobs wait in the queue for a small partition. • Fixed memory partitions with a single input queue

Memory Management • CPU utilization can be modeled by the formula CPU utilization = $1 - p^n$, where there are $n$ processes in memory and each process spends a fraction $p$ of its time waiting for I/O (the probability that all $n$ processes are waiting for I/O at the same time is $p^n$). • CPU utilization is a function of $n$, which is called the degree of multiprogramming. • A more accurate model can be constructed using queuing theory. • Example: A computer has 32 MB of memory. The OS takes 16 MB and each process takes 4 MB, so 4 processes fit. If each process waits for I/O 80 percent of the time, the CPU utilization is $1 - 0.8^4 \approx 59\%$, or roughly 60%. If 16 MB is added, 8 processes fit and the utilization rises to $1 - 0.8^8 \approx 83\%$.
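The utilization model above is easy to check numerically. The following is a minimal sketch, assuming equal-sized processes; the helper name `processes_in_memory` is hypothetical and only reproduces the arithmetic of the example.

```python
def cpu_utilization(p: float, n: int) -> float:
    """Fraction of time the CPU is busy when each of n processes
    waits for I/O a fraction p of the time (probabilistic model)."""
    return 1.0 - p ** n


def processes_in_memory(total_mb: int, os_mb: int, process_mb: int) -> int:
    """Number of equal-sized processes that fit after the OS takes its share
    (hypothetical helper, not part of the slides)."""
    return (total_mb - os_mb) // process_mb


if __name__ == "__main__":
    for total_mb in (32, 48):  # before and after adding 16 MB
        n = processes_in_memory(total_mb, os_mb=16, process_mb=4)
        print(f"{total_mb} MB: n = {n}, utilization = {cpu_utilization(0.8, n):.0%}")
```

With 80 percent I/O wait this gives about 59% for 4 processes and about 83% for 8, so doubling the degree of multiprogramming does not double the utilization.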

Modeling Multiprogramming • Figure: CPU utilization as a function of the number of processes in memory (the degree of multiprogramming)

Analysis of Multiprogramming System Performance • Arrival and work requirements of 4 jobs • CPU utilization for 1 to 4 jobs with 80% I/O wait • Sequence of events as jobs arrive and finish • Note: the numbers show the amount of CPU time jobs get in each interval

Relocation and Protection • Multiprogramming introduces two problems: relocation and protection. • Relocation: we cannot be sure where a program will be loaded in memory, so the address locations of variables and code routines cannot be absolute. • Protection: a program must be kept out of other processes' partitions. • Solution: use base and limit values (registers) • each address is added to the base value to map it to a physical address • an address larger than the limit value is an error
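The base and limit check can be sketched in a few lines. This is an illustration only: the names `to_physical` and `ProtectionError` are hypothetical, and the sketch assumes the limit holds the partition length, so valid program addresses run from 0 to limit - 1.

```python
class ProtectionError(Exception):
    """Raised when a program addresses memory outside its partition."""


def to_physical(address: int, base: int, limit: int) -> int:
    """Relocate a program-relative address using base and limit values.

    Assumption: `limit` is the partition length, so valid addresses
    are 0 .. limit - 1.
    """
    if not 0 <= address < limit:
        raise ProtectionError(f"address {address} is outside limit {limit}")
    return base + address  # relocation: add the base to every address
```

For a partition loaded at base 16384 with limit 4096, address 100 maps to 16484, while address 4096 is rejected.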

Swapping • Two approaches to overcome the limitation of memory: • Swapping brings each process into memory in its entirety, runs it for a while, and then puts it back on the disk. • Virtual memory allows programs to run even when they are only partially in main memory. • When swapping creates multiple holes in memory, memory compaction can combine them into one big hole by moving all the processes together toward one end of memory.

Swapping • Memory allocation changes as processes come into memory and leave memory • Shaded regions are unused memory

Swapping • Allocating space for growing data segment • Allocating space for growing stack & data segment

Memory Management with Bit Maps and Linked Lists • There are two ways to keep track of memory usage: bitmaps and free lists. • The problem with bitmaps is that finding a run of consecutive 0 bits in the map is a slow operation. • Four major algorithms can be used in memory management with linked lists (doubly linked lists): • First fit searches from the beginning of the list for the first hole that fits. • Next fit searches from the place where it left off last time for a hole that fits. • Best fit searches the entire list and takes the smallest hole that fits. • Worst fit searches the entire list and takes the largest available hole. • A fifth algorithm, quick fit, maintains separate lists for some of the more commonly requested sizes; holes of the same size are linked together.
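The four list-based strategies differ only in which hole they pick. The sketch below is illustrative, not an allocator: it assumes the free list is a plain Python list of `(start, length)` tuples and returns the index of the chosen hole, and all names are hypothetical.

```python
from typing import List, Optional, Tuple

Hole = Tuple[int, int]  # (start address, length); assumed representation


def first_fit(holes: List[Hole], size: int) -> Optional[int]:
    """Index of the first hole, scanning from the start, that is big enough."""
    for i, (_, length) in enumerate(holes):
        if length >= size:
            return i
    return None


def next_fit(holes: List[Hole], size: int, start_index: int) -> Optional[int]:
    """Like first fit, but the scan begins where the previous search stopped."""
    for step in range(len(holes)):
        i = (start_index + step) % len(holes)
        if holes[i][1] >= size:
            return i
    return None


def best_fit(holes: List[Hole], size: int) -> Optional[int]:
    """Index of the smallest hole that is still big enough."""
    fits = [i for i, (_, length) in enumerate(holes) if length >= size]
    return min(fits, key=lambda i: holes[i][1], default=None)


def worst_fit(holes: List[Hole], size: int) -> Optional[int]:
    """Index of the largest hole, provided it is big enough."""
    fits = [i for i, (_, length) in enumerate(holes) if length >= size]
    return max(fits, key=lambda i: holes[i][1], default=None)
```

For the holes `[(0, 5), (10, 8), (30, 3), (40, 12), (60, 7)]` and a request of size 6, first fit picks index 1, best fit index 4, worst fit index 3, and next fit starting at index 2 picks index 3.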

Memory Management with Bit Maps • Part of memory with 5 processes, 3 holes • tick marks show allocation units • shaded regions are free • Corresponding bit map • Same information as a list

Memory Management with Linked Lists • Figure: four neighbor combinations for the terminating process X

Virtual Memory • Problem: a program may be too large to fit in memory. • Solutions: • The programmer splits the program into pieces called overlays, which is too much work. • Virtual memory [Fotheringham 1961]: the OS keeps the parts of the program currently in use in memory and the rest on disk. • Paging is a technique used to implement virtual memory. • A virtual address is a program-generated address. • The MMU (memory management unit) translates a virtual address into a physical address.

Virtual Memory: Paging • Figure: the position and function of the MMU

Virtual Memory • Suppose the computer can generate 16-bit addresses (0 to 64K) but has only 32K of physical memory. A 64K program can be written, but it cannot be loaded into memory in its entirety. • The virtual address space is divided into (virtual) pages; the corresponding units in physical memory are (page) frames. • A Present/Absent bit keeps track of whether or not the page is mapped. • A reference to an unmapped page causes the CPU to trap to the OS. This trap is called a page fault. • The OS selects a little-used page frame, writes its contents back to disk, fetches the page just referenced into that frame, updates the map, and restarts the trapped instruction.
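The lookup described above can be sketched as follows. This is a toy model under stated assumptions: 4 KB pages, a page table held as a Python list in which `None` stands for a cleared Present/Absent bit, and a hypothetical mapping of 16 virtual pages onto 8 frames. The exception `PageFault` stands in for the trap to the OS.

```python
from typing import List, Optional

PAGE_SIZE = 4096  # assumption: 4 KB pages


class PageFault(Exception):
    """Stands in for the trap taken when an unmapped page is referenced."""

    def __init__(self, page: int) -> None:
        super().__init__(f"page {page} is not mapped")
        self.page = page


def translate(vaddr: int, page_table: List[Optional[int]]) -> int:
    """Map a virtual address to a physical address, or raise PageFault."""
    page, offset = divmod(vaddr, PAGE_SIZE)
    frame = page_table[page]
    if frame is None:  # Present/Absent bit is 0
        raise PageFault(page)
    return frame * PAGE_SIZE + offset


# Hypothetical mapping: 16 virtual pages, 8 page frames, None = absent.
PAGE_TABLE = [2, 1, 6, 0, 4, 3, None, None, None, 5, None, 7,
              None, None, None, None]
```

With this table, virtual address 8196 (page 2, offset 4) maps to frame 6 and physical address 24580, while address 32780 falls in page 8, which is absent, so the lookup raises `PageFault`.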

Paging • The relation between virtual addresses and physical memory addresses is given by the page table. • Where to keep the mapping information? In the page table.

Page Tables • Example: Virtual address = 4097 = 0001 000000000001 (4-bit virtual page number 0001, 12-bit offset 000000000001). • See Figure 4-11. • The purpose of the page table is to map virtual pages onto page frames; mathematically, the page table is a function from virtual page number to page frame number. • Page tables face two major issues: • Page tables may be extremely large. For example, with 32-bit addresses and a 4K page size, the offset takes 12 bits, leaving 20 bits for the virtual page number: 1 million entries! What can we do about it? Multilevel paging. • The mapping must be fast because it is done on every memory access!
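The two numbers on this slide, the split of address 4097 and the one million entries, follow directly from the bit widths. A small sketch, with hypothetical function names:

```python
from typing import Tuple


def split_address(vaddr: int, offset_bits: int = 12) -> Tuple[int, int]:
    """Return (virtual page number, offset) for the given offset width."""
    return vaddr >> offset_bits, vaddr & ((1 << offset_bits) - 1)


def flat_table_entries(address_bits: int, offset_bits: int) -> int:
    """Number of entries a single-level page table needs."""
    return 1 << (address_bits - offset_bits)


if __name__ == "__main__":
    print(split_address(4097))         # page number and offset of the example
    print(flat_table_entries(32, 12))  # 32-bit addresses, 4K pages
```

Address 4097 splits into page 1, offset 1, and a flat table for 32-bit addresses with 4K pages needs 2^20 = 1,048,576 entries.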

Page Tables • Figure: internal operation of the MMU with 16 4 KB pages

Two-Level Paging Example • A logical address (on a 32-bit machine with 4K page size) is divided into: • a page number consisting of 20 bits • a page offset consisting of 12 bits • Since the page table is itself paged, the page number is further divided into: • a 10-bit page number $p_1$ • a 10-bit page offset $p_2$ • Thus, a logical address has the layout | $p_1$ (10 bits) | $p_2$ (10 bits) | $d$ (12 bits) |, where $p_1$ and $p_2$ together form the page number and $d$ is the page offset. Here $p_1$ is an index into the outer page table, and $p_2$ is the displacement within the page of the outer page table.
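The 10/10/12 split and the resulting two-step lookup can be sketched as below. The nested-dictionary page table and the function names are assumptions made for illustration; a real MMU walks tables in physical memory.

```python
from typing import Dict, Tuple


def split_two_level(vaddr: int) -> Tuple[int, int, int]:
    """Split a 32-bit address into (p1, p2, d) with widths 10, 10 and 12 bits."""
    d = vaddr & 0xFFF            # low 12 bits: offset within the page
    p2 = (vaddr >> 12) & 0x3FF   # next 10 bits: index into the inner table
    p1 = (vaddr >> 22) & 0x3FF   # top 10 bits: index into the outer table
    return p1, p2, d


def walk(outer: Dict[int, Dict[int, int]], vaddr: int) -> int:
    """Translate vaddr through a sparse two-level table (hypothetical layout:
    outer[p1] is an inner table mapping p2 to a page frame number)."""
    p1, p2, d = split_two_level(vaddr)
    inner = outer.get(p1)
    if inner is None or p2 not in inner:
        raise LookupError(f"no mapping for p1={p1}, p2={p2}")
    return (inner[p2] << 12) | d
```

For example, address 0x00403004 splits into p1 = 1, p2 = 3, d = 4. With the one-entry table `{1: {3: 42}}` it translates to frame 42, physical address 172036. Only the inner tables that are actually used need to exist, which is the point of the multilevel scheme.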

Address-Translation Scheme • Figure: address-translation scheme for a two-level 32-bit paging architecture

Page Tables • Multilevel page tables reduce the table size: page tables that are not needed are not kept in memory. • See the diagram in Figure 4-12. • Top-level entries point to the second-level page tables: entry 0 = program text (4M code), entry 1 = program data (4M data), entry 1023 = stack (4M stack).

Page Tables • Figure: a 32-bit address with 2 page table fields • Figure: two-level page tables (top-level page table and second-level page tables)

Page Tables • Most operating systems allocate a page table for each process. • Simplest design: a single page table consisting of an array of fast hardware registers. When a process is loaded, the registers are loaded with its page table. • Advantage: simple • Disadvantage: expensive if the table is large, and loading the full page table at every context switch hurts performance. • Alternative: leave the page table in memory, with a single register pointing to the table • Advantage: a context switch is cheap • Disadvantage: one or more memory references are needed to read page table entries

Hierarchical Paging • Examples of page table design: • The PDP-11 uses one-level paging. • The Pentium II uses a two-level architecture. • The VAX architecture supports a variation of two-level paging (section + page + offset). • The SPARC architecture (with 32-bit addressing) supports a three-level paging scheme. • The 32-bit Motorola 68030 architecture supports a four-level paging scheme. • Further division could be made for a larger logical-address space. • However, for 64-bit architectures, hierarchical page tables are generally considered inappropriate because too many levels would be needed.

Page Tables • Figure: typical page table entry

Structure of a Page Table Entry • Page frame number: the frame that the virtual page maps to • Present/absent bit: 1/0 indicates a valid/invalid entry • Protection bits: what kinds of access are permitted • Modified (dirty) bit: set when the page is written to; a dirty page must be written back to disk before its frame is reused • Referenced bit: set when the page is referenced (helps decide which page to evict) • Caching disabled bit: turns off caching for the page. This matters for pages that map onto device registers rather than memory, where references to I/O must not be served from a cache.

TLB • Observation: most programs make a large number of references to a small number of pages. • Solution: equip computers with a small hardware device, called a Translation Lookaside Buffer (TLB) or associative memory, that maps virtual addresses to physical addresses without going through the page table. • Many modern RISC machines do TLB management in software. If the TLB is large enough to keep the miss rate low, software management of the TLB becomes acceptably efficient. • Methods to reduce TLB misses and the cost of a TLB miss: • Preload pages • Maintain a large TLB

TLBs (Translation Lookaside Buffers) • Figure: a TLB to speed up paging

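Effective Access Time • Associative lookup takes $\varepsilon$ time units. • Assume the memory cycle time is $t$ time units. • Hit ratio: the percentage of times that a page number is found in the associative memory; the ratio is related to the number of associative registers. • Hit ratio = $\alpha$ • Effective Access Time (EAT): $\mathrm{EAT} = \alpha(t + \varepsilon) + (1 - \alpha)(2t + \varepsilon) = \alpha t + \alpha\varepsilon + 2t + \varepsilon - 2\alpha t - \alpha\varepsilon = (2 - \alpha)t + \varepsilon$ • Example: with $\alpha = 0.8$, $\varepsilon = 20$ ns and $t = 100$ ns, $\mathrm{EAT} = 0.8 \times 120 + 0.2 \times (200 + 20) = 140$ ns.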
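As a quick check of the effective access time, the weighted average can be computed directly. This is a sketch with hypothetical parameter names: a hit costs one lookup plus one memory access, and a miss costs one lookup plus two memory accesses (page table, then data).

```python
def effective_access_time(hit_ratio: float, lookup_ns: float, memory_ns: float) -> float:
    """Average access time with a TLB: hits need one memory access,
    misses need two, and both pay the associative lookup."""
    hit_cost = memory_ns + lookup_ns
    miss_cost = 2 * memory_ns + lookup_ns
    return hit_ratio * hit_cost + (1 - hit_ratio) * miss_cost


if __name__ == "__main__":
    # Values from the example: 80% hits, 20 ns lookup, 100 ns memory cycle.
    print(effective_access_time(0.8, 20, 100))
```

For the example values the result is 140 ns (up to floating-point rounding), which matches the closed form (2 - hit ratio) x memory time + lookup time.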

Inverted Page Table • Usually, each process has a page table associated with it. One drawback of this method is that each page table may consist of millions of entries. • To solve this problem, an inverted page table can be used. It has one entry for each real (page) frame of memory. • Each entry consists of the virtual address of the page stored in that real memory location, with information about the process that owns that page. • Examples of systems using inverted page tables include the 64-bit UltraSPARC and the PowerPC.

Inverted Page Table • To illustrate this method, consider a simplified implementation in which each virtual address is a triple <process-id, page-number, offset>. • Each inverted page-table entry is a pair <process-id, page-number>. On a memory reference, the inverted page table is searched for a match. If a match is found, say at entry i, then the physical address <i, offset> is generated. Otherwise, an illegal address access has been attempted. • This scheme decreases the memory needed to store page tables, but it increases the time needed to search the table when a page reference occurs. • A hash table can be used to limit the search to one, or at most a few, page-table entries.
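The lookup in an inverted page table can be sketched as follows. This is a simplified model, not any particular machine's format: the class and method names are hypothetical, and a Python dictionary stands in for the hash table that limits the search.

```python
from typing import Dict, List, Optional, Tuple

Key = Tuple[int, int]  # <process-id, page-number>


class InvertedPageTable:
    """One entry per page frame; entry i records which page occupies frame i."""

    def __init__(self, num_frames: int) -> None:
        self.entries: List[Optional[Key]] = [None] * num_frames
        self.index: Dict[Key, int] = {}  # hash from <pid, page> to frame number

    def load(self, frame: int, pid: int, page: int) -> None:
        """Record that frame now holds <pid, page>, replacing any old occupant."""
        old = self.entries[frame]
        if old is not None:
            del self.index[old]
        stale = self.index.get((pid, page))
        if stale is not None:  # the page was resident in another frame
            self.entries[stale] = None
        self.entries[frame] = (pid, page)
        self.index[(pid, page)] = frame

    def translate(self, pid: int, page: int, offset: int) -> Tuple[int, int]:
        """Return the physical address <i, offset>, or fail on an illegal access."""
        frame = self.index.get((pid, page))
        if frame is None:
            raise LookupError(f"no frame holds page {page} of process {pid}")
        return frame, offset
```

The table size depends on the number of frames, not on the size of any virtual address space; without the `index` dictionary, `translate` would have to scan `entries` linearly.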

Inverted Page Tables • Figure: comparison of a traditional page table with an inverted page table

Page Replacement Algorithms • Page fault forces choice • which page must be removed • make room for incoming page • Modified page must first be saved • unmodified just overwritten • Better not to choose an often used page • will probably need to be brought back in soon • Applications: Memory, Cache, Web pages

Optimal Page Replacement Algorithm • Replace the page that will next be referenced farthest in the future • Optimal but impossible to implement, so it is only used for comparison • Estimate by logging page use on previous runs of the process, although this is impractical
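The optimal policy can only be simulated offline, because it needs the whole reference string in advance. The sketch below counts page faults under that assumption; the reference string used in the usage note is an illustrative one, not taken from the slides.

```python
from typing import List, Sequence


def optimal_faults(refs: Sequence[int], num_frames: int) -> int:
    """Count page faults when the evicted page is always the one
    whose next use lies farthest in the future."""
    frames: List[int] = []
    faults = 0
    for i, page in enumerate(refs):
        if page in frames:
            continue
        faults += 1
        if len(frames) < num_frames:
            frames.append(page)
            continue

        def next_use(resident: int) -> float:
            # Position of the next reference, or infinity if never used again.
            for j in range(i + 1, len(refs)):
                if refs[j] == resident:
                    return j
            return float("inf")

        victim = max(frames, key=next_use)
        frames[frames.index(victim)] = page
    return faults
```

On the string 7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1 with 3 frames this gives 9 faults, a lower bound that realizable algorithms can be compared against.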

Not Recently Used Page Replacement Algorithm • Each page has a Referenced bit (R) and a Modified bit (M). • R is set when the page is referenced (read or written); M is set when the page is modified (written to). • When a process starts, both R and M are set to 0 for all its pages. • Periodically (on each clock interval, e.g. every 20 msec), the R bit is cleared (R = 0). • Pages are classified: • Class 0: not referenced, not modified (00) • Class 1: not referenced, modified (01) • Class 2: referenced, not modified (10) • Class 3: referenced, modified (11) • NRU removes a page at random from the lowest-numbered non-empty class
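The class number is simply the two bits read as a binary number, 2R + M. A minimal sketch of victim selection, assuming the pages are given as a dictionary from a page name to its `(R, M)` pair (a hypothetical representation):

```python
import random
from typing import Dict, Tuple


def nru_class(r: int, m: int) -> int:
    """Class 0..3 from the Referenced and Modified bits."""
    return 2 * r + m


def nru_victim(pages: Dict[str, Tuple[int, int]], rng=random) -> str:
    """Pick a random page from the lowest-numbered non-empty class."""
    lowest = min(nru_class(r, m) for r, m in pages.values())
    candidates = [name for name, (r, m) in pages.items()
                  if nru_class(r, m) == lowest]
    return rng.choice(candidates)
```

With pages `{"A": (1, 1), "B": (0, 1), "C": (1, 0), "D": (0, 1)}` the lowest non-empty class is 1, so the victim is either B or D.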

FIFO Page Replacement Algorithm • Maintain a linked list of all pages • in order they came into memory with the oldest page at the front of the list. • Page at beginning of list is replaced • Advantage: easy to implement • Disadvantage • page in memory the longest (perhaps often used) may be evicted
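FIFO is short enough to simulate directly. The sketch below counts page faults for a given reference string, using a `collections.deque` as the list of pages in load order. The function name and the reference string in the usage note are illustrative assumptions.

```python
from collections import deque
from typing import Deque, Sequence, Set


def fifo_faults(refs: Sequence[int], num_frames: int) -> int:
    """Count page faults when the page loaded longest ago is always evicted."""
    order: Deque[int] = deque()   # oldest page at the left end
    resident: Set[int] = set()
    faults = 0
    for page in refs:
        if page in resident:
            continue              # a hit does not change the FIFO order
        faults += 1
        if len(order) == num_frames:
            resident.remove(order.popleft())
        order.append(page)
        resident.add(page)
    return faults
```

On the string 7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1 with 3 frames this gives 15 faults. Several of them come from evicting page 0 shortly before it is needed again, which is the disadvantage noted above.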

Second Chance Page Replacement Algorithm • Inspect the R bit of the oldest page: • if R = 0, evict the page • if R = 1, set R = 0 and put the page at the end (back) of the list; the page is then treated like a newly loaded page. • Clock replacement algorithm: a different implementation of second chance

Second Chance Page Replacement Algorithm • Operation of second chance • pages sorted in FIFO order • page list if a fault occurs at time 20 and A has its R bit set (numbers above pages are loading times)
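The eviction step of second chance can be sketched as a loop over the FIFO list. The representation is an assumption made for illustration: a `collections.deque` of `[name, R]` pairs with the oldest page at the left, and a four-page list in the usage note rather than the one in the figure.

```python
from collections import deque
from typing import Deque, List


def second_chance_evict(pages: Deque[List]) -> str:
    """Remove and return the name of the page to evict.

    `pages` holds [name, r_bit] pairs, oldest first. A page with R = 1
    has its bit cleared and is moved to the back, as if newly loaded.
    """
    if not pages:
        raise ValueError("no pages to evict")
    while True:
        name, r_bit = pages.popleft()
        if r_bit == 0:
            return name
        pages.append([name, 0])  # second chance: clear R, requeue at the back
```

Starting from `deque([["A", 1], ["B", 0], ["C", 1], ["D", 0]])`, page A is spared and moved to the back with R cleared, and B is evicted. If every page has R = 1, one full pass clears all the bits and the algorithm degenerates to pure FIFO.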

Least Recently Used (LRU) • Assume pages used recently will be used again soon • throw out the page that has been unused for the longest time • Software solution: must keep a linked list of pages • most recently used at front, least recently used at rear • this list must be updated on every memory reference. Too expensive! • Hardware solution: • Equip the hardware with a 64-bit counter that is incremented after each instruction. • The counter value is stored in the page table entry of the page that was just referenced. • Choose the page with the lowest counter value • Periodically zero the counter • Problem: the page table is larger.

Least Recently Used (LRU) • Hardware solution: • Maintain a matrix of n x n bits for a machine with n page frames. • When page frame K is referenced: (i) Set row K to all 1s. (ii) Set column K to all 0s. • The row whose binary value is smallest is the LRU page.

Simulating LRU in Software LRU using a matrix – pages referenced in order 0,1,2,3,2,1,0,3,2,3
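The matrix method can be replayed in software for the reference order given above. This is a sketch with hypothetical function names, using a list of lists as the n x n bit matrix.

```python
from typing import List, Sequence


def lru_matrix(refs: Sequence[int], n: int) -> List[List[int]]:
    """Replay references on an n x n bit matrix: on a reference to
    frame k, set row k to all 1s, then set column k to all 0s."""
    matrix = [[0] * n for _ in range(n)]
    for k in refs:
        matrix[k] = [1] * n
        for row in matrix:
            row[k] = 0
    return matrix


def lru_frame(matrix: List[List[int]]) -> int:
    """Frame whose row, read as a binary number, is smallest."""
    values = [int("".join(str(bit) for bit in row), 2) for row in matrix]
    return values.index(min(values))


if __name__ == "__main__":
    final = lru_matrix([0, 1, 2, 3, 2, 1, 0, 3, 2, 3], n=4)
    for row in final:
        print(row)
    print("LRU frame:", lru_frame(final))
```

After the ten references the rows are 0100, 0000, 1100 and 1110, so frame 1, last touched at the sixth reference, is the least recently used.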