Univariate Time Series

Concerned with time series properties of a single series • Denote $y_t$ to be the observed value in period t • Observations run from 1 to T • Very likely that observations at different points in time are correlated, as economic time series change only slowly

Stationarity • Properties of estimators depend on whether series is stationary or not • Time series $y_t$ is stationary if its probability density function does not depend on time, i.e. joint pdf of $(y_s, y_{s+1}, y_{s+2}, \dots, y_{s+t})$ does not depend on s • Implies: • $E(y_t)$ does not depend on t • $Var(y_t)$ does not depend on t • $Cov(y_t, y_{t+s})$ depends on s and not t

Weak Stationarity • A series has weak stationarity if first and second moments do not depend on t • Stationarity implies weak stationarity (provided the first two moments exist) but converse not necessarily true • In most cases you will see, the converse will also hold • I will focus on weak stationarity when ‘proving’ stationarity

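Simplest Stationary Process • $y_t = \alpha_0 + \varepsilon_t$ • Where $\varepsilon_t$ is ‘white noise’ – iid with mean 0 and variance $\sigma^2$ • Simple to check that: • $E(y_t) = \alpha_0$ • $Var(y_t) = \sigma^2$ • $Cov(y_t, y_{t-s}) = 0$ for $s \neq 0$ • Implies $y_t$ is ‘white noise’ about its mean – unlikely for most economic time series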

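When is AR(1) stationary? • AR(1) process: $y_t = \alpha_0 + \alpha_1 y_{t-1} + \varepsilon_t$ • Take expectations: $E(y_t) = \alpha_0 + \alpha_1 E(y_{t-1})$ • If stationary then $E(y_t) = E(y_{t-1})$, so can write this as: $E(y_t) = \alpha_0/(1-\alpha_1)$ • Only ‘makes sense’ if $\alpha_1 < 1$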

Look at Variance • $Var(y_t) = \alpha_1^2 Var(y_{t-1}) + \sigma^2$ • If stationary then: $Var(y_t) = \sigma^2/(1-\alpha_1^2)$ • Only ‘makes sense’ if $|\alpha_1| < 1$ – this is the condition for stationarity of AR(1) process • If $\alpha_1 = 1$ then this is random walk – without drift if $\alpha_0 = 0$, with drift otherwise – variance grows over time
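The stationary mean and variance of an AR(1) can be checked by simulation. The following is a minimal sketch, assuming Gaussian white noise and illustrative parameter values (α0 = 1, α1 = 0.5, σ = 1) that are not taken from the slides; `simulate_ar1`, `ar1_mean` and `ar1_variance` are hypothetical helper names.

```python
import random
import statistics


def simulate_ar1(alpha0, alpha1, sigma, T, seed=0, burn_in=500):
    """Simulate y_t = alpha0 + alpha1 * y_{t-1} + eps_t with Gaussian white noise."""
    rng = random.Random(seed)
    # Start at the stationary mean when it exists, otherwise at zero.
    y = alpha0 / (1 - alpha1) if abs(alpha1) < 1 else 0.0
    path = []
    for t in range(T + burn_in):
        y = alpha0 + alpha1 * y + rng.gauss(0.0, sigma)
        if t >= burn_in:  # discard the burn-in draws
            path.append(y)
    return path


def ar1_mean(alpha0, alpha1):
    """Stationary mean alpha0 / (1 - alpha1)."""
    return alpha0 / (1 - alpha1)


def ar1_variance(alpha1, sigma):
    """Stationary variance sigma^2 / (1 - alpha1^2)."""
    return sigma ** 2 / (1 - alpha1 ** 2)


y = simulate_ar1(alpha0=1.0, alpha1=0.5, sigma=1.0, T=50_000)
print("sample mean    ", statistics.fmean(y), "theory", ar1_mean(1.0, 0.5))
print("sample variance", statistics.pvariance(y), "theory", ar1_variance(0.5, 1.0))
```

With a long simulated series the sample moments should sit close to the theoretical values; with α1 near 1 the agreement becomes much slower.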

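What about covariances? • Define deviation from mean: $\tilde{y}_t = y_t - \alpha_0/(1-\alpha_1)$ • Then can write model as: $\tilde{y}_t = \alpha_1 \tilde{y}_{t-1} + \varepsilon_t$ • So $Cov(y_t, y_{t-1}) = E(\tilde{y}_t \tilde{y}_{t-1}) = \alpha_1 Var(y_t)$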

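Higher-Order Covariances • $Cov(y_t, y_{t-2}) = \alpha_1 Cov(y_{t-1}, y_{t-2}) = \alpha_1^2 Var(y_t)$ • Or, in terms of correlation coefficient: $Corr(y_t, y_{t-2}) = \alpha_1^2$ • General rule is: $Corr(y_t, y_{t-s}) = \alpha_1^s$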
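The geometric decay of the AR(1) autocorrelations can be compared with sample autocorrelations from a simulated series. This is a sketch under assumed values (α0 = 0, α1 = 0.7, σ = 1, chosen only for illustration); `sample_autocorr` is a hypothetical helper.

```python
import random


def sample_autocorr(y, s):
    """Sample autocorrelation of y at lag s."""
    n = len(y)
    mean = sum(y) / n
    denom = sum((v - mean) ** 2 for v in y)
    num = sum((y[t] - mean) * (y[t - s] - mean) for t in range(s, n))
    return num / denom


# Simulate an AR(1) with alpha0 = 0 and Gaussian white noise.
rng = random.Random(1)
alpha1 = 0.7
y, prev = [], 0.0
for _ in range(20_000):
    prev = alpha1 * prev + rng.gauss(0.0, 1.0)
    y.append(prev)

# Compare the sample value with alpha1 ** s at the first few lags.
for s in range(1, 5):
    print(s, round(sample_autocorr(y, s), 3), round(alpha1 ** s, 3))
```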

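More General Auto-Regressive Processes • AR(p) can be written as: $y_t = \alpha_0 + \alpha_1 y_{t-1} + \dots + \alpha_p y_{t-p} + \varepsilon_t$ • This is stationary if roots of p-th order polynomial are all inside unit circle: $z^p - \alpha_1 z^{p-1} - \dots - \alpha_p = 0$ • Necessary condition is: $\sum_{i=1}^{p} \alpha_i < 1$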

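Why is this condition necessary? • Think of taking expectations: $E(y_t) = \alpha_0 + \sum_{i=1}^{p} \alpha_i E(y_{t-i})$ • If stationary, expectations must all be equal: $E(y_t) = \alpha_0 / (1 - \sum_{i=1}^{p} \alpha_i)$ • Only ‘makes sense’ if coefficients sum to <1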
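The root condition for an AR(p) can be checked numerically. The sketch below finds the roots of $z^p - \alpha_1 z^{p-1} - \dots - \alpha_p$ with a Durand-Kerner iteration (a root-finding choice made here to stay within the standard library, not something the slides prescribe). The function names and the example coefficients are hypothetical.

```python
def ar_characteristic_roots(alphas, iterations=500):
    """Roots of z^p - a_1 z^(p-1) - ... - a_p, found by Durand-Kerner iteration."""
    p = len(alphas)
    coeffs = [1.0] + [-a for a in alphas]  # monic polynomial, highest power first

    def poly(z):
        value = 0j
        for c in coeffs:
            value = value * z + c  # Horner's rule
        return value

    # Standard distinct complex starting points.
    roots = [(0.4 + 0.9j) ** k for k in range(p)]
    for _ in range(iterations):
        for i in range(p):
            denom = 1 + 0j
            for j in range(p):
                if j != i:
                    denom *= roots[i] - roots[j]
            roots[i] -= poly(roots[i]) / denom
    return roots


def is_stationary(alphas):
    """True if every root lies strictly inside the unit circle."""
    return max(abs(r) for r in ar_characteristic_roots(alphas)) < 1.0


examples = {
    "AR(2) 0.5, 0.3": [0.5, 0.3],   # coefficients sum to 0.8
    "AR(2) 0.7, 0.5": [0.7, 0.5],   # coefficients sum to 1.2
    "AR(2) 0.0, -1.2": [0.0, -1.2], # coefficients sum to -1.2
}
for name, alphas in examples.items():
    print(name, "sum =", round(sum(alphas), 2), "stationary:", is_stationary(alphas))
```

The last example has coefficients summing to less than 1 yet fails the root condition, which illustrates why the sum condition is necessary but not sufficient.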

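Moving-Average Processes • Most common alternative to an AR process – MA(1) can be written as: $y_t = \theta_0 + \varepsilon_t + \theta_1 \varepsilon_{t-1}$ • MA process will always be stationary – can see weak stationarity from: $E(y_t) = \theta_0$

Stationarity of MA Process • $Var(y_t) = (1 + \theta_1^2)\sigma^2$ • $Cov(y_t, y_{t-1}) = \theta_1 \sigma^2$ • And further covariances are zero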

MA(q) Process • Will always be stationary • Covariances between two observations zero if more than q periods apart
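The cut-off in the MA(q) autocovariances can be computed directly from the coefficients. This is a small sketch in which the weight on the current shock is normalised to 1; `ma_autocovariance` is a hypothetical helper and the coefficient values are illustrative.

```python
def ma_autocovariance(thetas, sigma2, s):
    """Cov(y_t, y_{t-s}) for y_t = eps_t + theta_1 eps_{t-1} + ... + theta_q eps_{t-q}."""
    weights = [1.0] + list(thetas)  # weight on eps_t is 1
    s = abs(s)
    if s >= len(weights):
        return 0.0  # observations more than q periods apart are uncorrelated
    return sigma2 * sum(weights[j] * weights[j + s] for j in range(len(weights) - s))


# MA(1) with theta_1 = 0.5 and sigma^2 = 1
for lag in range(4):
    print(lag, ma_autocovariance([0.5], 1.0, lag))
```

None of these values depends on t, which is the weak stationarity property; the constant term of the process does not affect the covariances and is omitted.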

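Relationship between AR and MA Processes • Might seem unrelated but connection between the two • Consider AR(1) with $\alpha_0 = 0$: $y_t = \alpha_1 y_{t-1} + \varepsilon_t$ • Substitute for $y_{t-1}$ to give: $y_t = \alpha_1^2 y_{t-2} + \varepsilon_t + \alpha_1 \varepsilon_{t-1}$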

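Do Repeated Substitution… • $y_t = \sum_{j=0}^{s-1} \alpha_1^j \varepsilon_{t-j} + \alpha_1^s y_{t-s}$ • AR(1) can be written as an MA(∞) with geometrically declining weights • Need stationarity, $|\alpha_1| < 1$, for final term to disappear as $s \to \infty$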
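The repeated substitution can be verified numerically: an AR(1) built by recursion from a zero initial value equals the geometrically weighted sum of past shocks. This is a sketch with an illustrative α1 = 0.8 and α0 = 0; `ma_representation` is a hypothetical helper.

```python
import random

rng = random.Random(2)
alpha1 = 0.8
eps = [rng.gauss(0.0, 1.0) for _ in range(200)]

# AR(1) by recursion, starting from an initial value of zero (alpha0 = 0).
y_rec, prev = [], 0.0
for e in eps:
    prev = alpha1 * prev + e
    y_rec.append(prev)


def ma_representation(eps, alpha1, t, n_terms):
    """Sum of alpha1^j * eps_{t-j} over the first n_terms lags available at t."""
    return sum(alpha1 ** j * eps[t - j] for j in range(min(n_terms, t + 1)))


full = ma_representation(eps, alpha1, 199, 200)      # all available shocks
truncated = ma_representation(eps, alpha1, 199, 50)  # first 50 weights only
print(y_rec[199], full, truncated)
```

Because the weights decline geometrically, truncating the sum after a moderate number of lags changes the value very little when $|\alpha_1| < 1$.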

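A quicker way to get this…the lag operator • Define the lag operator: $L y_t = y_{t-1}$ • Can write AR(1) as: $(1 - \alpha_1 L) y_t = \varepsilon_t$, i.e. $y_t = \varepsilon_t / (1 - \alpha_1 L)$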

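Should recognize $1/(1 - \alpha_1 L)$ as sum of geometric series so… • $y_t = (1 + \alpha_1 L + \alpha_1^2 L^2 + \dots)\varepsilon_t = \sum_{j=0}^{\infty} \alpha_1^j \varepsilon_{t-j}$ • Which is the same as we had by repeated substitution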

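For a general AR(p) process • $\alpha(L) y_t = \varepsilon_t$ where $\alpha(L) = 1 - \alpha_1 L - \dots - \alpha_p L^p$ • If $\alpha(L)$ invertible we have: $y_t = \alpha(L)^{-1} \varepsilon_t$ • So invertible AR(p) can be written as particular MA(∞)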

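From an MA to an AR Process • Can use lag operator to write MA(q) as: $y_t = \theta(L)\varepsilon_t$ where $\theta(L) = 1 + \theta_1 L + \dots + \theta_q L^q$ • If $\theta(L)$ invertible: $\theta(L)^{-1} y_t = \varepsilon_t$ • So MA(q) can be written as particular AR(∞)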

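ARMA Processes • Time series might have both AR and MA components • ARMA(p,q) can be written as: $y_t = \alpha_0 + \sum_{i=1}^{p} \alpha_i y_{t-i} + \varepsilon_t + \sum_{j=1}^{q} \theta_j \varepsilon_{t-j}$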

Estimation of AR Models • Simple-minded approach would be to run regression of y on p lags of y and use OLS estimates • Let's consider properties of this estimator – simplest to start with AR(1) • Assume $y_0$ is available

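The OLS estimator of the AR Coefficient • $\hat{\alpha}_1 = \alpha_1 + \frac{\sum_{t=1}^{T} (y_{t-1} - \bar{y}_{-1})\varepsilon_t}{\sum_{t=1}^{T} (y_{t-1} - \bar{y}_{-1})^2}$, where $\bar{y}_{-1}$ is the sample mean of the lagged values • Want to answer questions about bias, consistency, variance etc • Have to regard ‘regressor’ as stochastic as lagged value of dependent variable

Bias in OLS Estimate • Can’t derive explicit expression for bias • But OLS estimate is biased and bias is negative • Different from case where x independent of every ε • Easiest way to see the problem is consider expectation of numerator in second term: $E[\sum_t (y_{t-1} - \bar{y}_{-1})\varepsilon_t] = \sum_t E(y_{t-1}\varepsilon_t) - E(\bar{y}_{-1} \sum_t \varepsilon_t)$

First part has expectation zero but second part can be written as: $E(\bar{y}_{-1} \sum_t \varepsilon_t) = \frac{1}{T}\sum_s \sum_t E(y_{s-1}\varepsilon_t)$ • These terms are not zero when $s-1 \geq t$ as $y_t$ can be written as function of lagged $\varepsilon_t$ • All these correlations are positive (for $\alpha_1 > 0$) so this will be positive and, as it enters with a minus sign, bias will be negative • This bias often called Hurwicz bias – can be sizeable in small samples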

But… • Hurwicz bias goes to zero as T→∞ • Can show that OLS estimate is consistent • What about asymptotic distribution of OLS estimator? • Depends on whether $y_t$ is stationary or not
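The small-sample bias and its disappearance as T grows can be illustrated with a Monte Carlo experiment. This is a sketch under assumed settings (α0 = 0, α1 = 0.5, σ = 1, Gaussian shocks, sample sizes 25 and 400), none of which come from the slides; the function names are hypothetical.

```python
import random


def ols_ar1_slope(y):
    """OLS slope from regressing y_t on a constant and y_{t-1}."""
    x, z = y[:-1], y[1:]
    n = len(x)
    x_bar = sum(x) / n
    z_bar = sum(z) / n
    sxx = sum((a - x_bar) ** 2 for a in x)
    sxz = sum((a - x_bar) * (b - z_bar) for a, b in zip(x, z))
    return sxz / sxx


def mean_ols_estimate(alpha1, T, reps, seed):
    """Average OLS estimate of alpha1 over many simulated AR(1) samples."""
    rng = random.Random(seed)
    total = 0.0
    for rep in range(reps):
        # y_0 is drawn from the stationary distribution (alpha0 = 0, sigma = 1).
        y = [rng.gauss(0.0, (1 - alpha1 ** 2) ** -0.5)]
        for t in range(T):
            y.append(alpha1 * y[-1] + rng.gauss(0.0, 1.0))
        total += ols_ar1_slope(y)
    return total / reps


small_sample = mean_ols_estimate(alpha1=0.5, T=25, reps=2000, seed=3)
large_sample = mean_ols_estimate(alpha1=0.5, T=400, reps=500, seed=3)
print("true value 0.5, mean estimate with T=25: ", round(small_sample, 3))
print("true value 0.5, mean estimate with T=400:", round(large_sample, 3))
```

The average estimate with the short sample should lie noticeably below the true value, while the long sample should be close to it.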

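If time series is stationary • OLS estimator is asymptotically normal with usual formulae for asymptotic variance e.g. for AR(1): $\sqrt{T}(\hat{\alpha}_1 - \alpha_1) \to N(0, \sigma^2 / Var(y_t))$ • Can write this as: $\sqrt{T}(\hat{\alpha}_1 - \alpha_1) \to N(0, 1 - \alpha_1^2)$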

The Initial Conditions Problem • Estimating AR(p) model by OLS does not use all the information contained in first p observations • Loss of efficiency from this • Number of methods for using this information – will describe ML method for AR(1)

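ML Estimation of AR(1) Process • Need to write down likelihood function – probability of outcome given parameters • Can always factorize joint density as: $f(y_0, y_1, \dots, y_T) = f(y_0) \prod_{t=1}^{T} f(y_t \mid y_{t-1}, \dots, y_0)$ • With AR(1) only first lag is any use: $f(y_0, y_1, \dots, y_T) = f(y_0) \prod_{t=1}^{T} f(y_t \mid y_{t-1})$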

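Assume $\varepsilon_t$ has normal distribution • Then $y_t$, $t \geq 1$, is normally distributed conditional on $y_{t-1}$ with mean $\alpha_0 + \alpha_1 y_{t-1}$ and variance $\sigma^2$ • $y_0$ is normally distributed with mean $\alpha_0/(1-\alpha_1)$ and variance $\sigma^2/(1-\alpha_1^2)$ • Hence log-likelihood function can be written as: $\log L = -\frac{T}{2}\log(2\pi\sigma^2) - \frac{1}{2\sigma^2}\sum_{t=1}^{T}(y_t - \alpha_0 - \alpha_1 y_{t-1})^2 - \frac{1}{2}\log\left(\frac{2\pi\sigma^2}{1-\alpha_1^2}\right) - \frac{1-\alpha_1^2}{2\sigma^2}\left(y_0 - \frac{\alpha_0}{1-\alpha_1}\right)^2$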

Comparison of ML and OLS Estimators • Maximization of first part leads to OLS estimate – you should know this • Initial condition will cause some deviation from OLS estimate • Effect likely to be small if T reasonably large
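The exact Gaussian likelihood of a stationary AR(1) splits into a conditional part (which OLS maximizes) and an initial-condition part. The function below is a minimal sketch of that decomposition; `ar1_exact_loglik` is a hypothetical name and the data values are made up for illustration.

```python
import math


def ar1_exact_loglik(y, alpha0, alpha1, sigma2):
    """Exact Gaussian log-likelihood of a stationary AR(1); y[0] is y_0."""
    if abs(alpha1) >= 1 or sigma2 <= 0:
        return float("-inf")  # the stationary distribution of y_0 does not exist
    T = len(y) - 1
    # Conditional part: y_t given y_{t-1} is N(alpha0 + alpha1 * y_{t-1}, sigma2).
    ssr = sum((y[t] - alpha0 - alpha1 * y[t - 1]) ** 2 for t in range(1, T + 1))
    conditional = -0.5 * T * math.log(2 * math.pi * sigma2) - ssr / (2 * sigma2)
    # Initial condition: y_0 is N(alpha0 / (1 - alpha1), sigma2 / (1 - alpha1^2)).
    mean0 = alpha0 / (1 - alpha1)
    var0 = sigma2 / (1 - alpha1 ** 2)
    initial = -0.5 * math.log(2 * math.pi * var0) - (y[0] - mean0) ** 2 / (2 * var0)
    return conditional + initial


data = [2.3, 1.9, 2.4, 2.8, 2.1, 1.7, 2.0]  # illustrative values only
print(ar1_exact_loglik(data, alpha0=1.0, alpha1=0.5, sigma2=1.0))
```

In practice this function would be passed to a numerical optimizer; the initial-condition term is a single observation's contribution, so its influence shrinks as T grows.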

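Estimation of MA Processes • MA(1) process looks simple but estimation surprisingly complicated • To do it ‘properly’ requires Kalman Filter • ‘Dirty’ Method assumes $\varepsilon_0 = 0$ • Then repeated iteration leads to: $\varepsilon_t = y_t - \theta_0 - \theta_1 \varepsilon_{t-1} = \sum_{j=0}^{t-1} (-\theta_1)^j (y_{t-j} - \theta_0)$

Can then end up with… • $\log L = -\frac{T}{2}\log(2\pi\sigma^2) - \frac{1}{2\sigma^2}\sum_{t=1}^{T} \varepsilon_t(\theta_0, \theta_1)^2$ • And maximize with respect to parameters • Packages like STATA, EVIEWS have modules for estimating MA processes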
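The ‘dirty’ method can be sketched as a conditional sum-of-squares estimator: build the residuals recursively from a zero initial shock, then pick the parameter that minimizes their sum of squares (equivalent to maximizing the Gaussian likelihood once σ² is concentrated out). The grid search, the restriction to |θ1| < 1, the simulated data and all function names below are assumptions made for illustration.

```python
import random


def css_residuals(y, theta0, theta1):
    """Residuals from eps_t = y_t - theta0 - theta1 * eps_{t-1}, starting at eps_0 = 0."""
    eps_prev = 0.0
    residuals = []
    for obs in y:
        eps_prev = obs - theta0 - theta1 * eps_prev
        residuals.append(eps_prev)
    return residuals


def css_estimate_theta1(y, theta0=0.0):
    """Grid search over theta1 in (-1, 1) minimizing the sum of squared residuals."""
    grid = [k / 100 for k in range(-95, 96)]
    return min(grid, key=lambda th: sum(e * e for e in css_residuals(y, theta0, th)))


# Simulate an MA(1) with theta0 = 0 and theta1 = 0.5.
rng = random.Random(4)
shocks = [rng.gauss(0.0, 1.0) for _ in range(2001)]
y = [shocks[t] + 0.5 * shocks[t - 1] for t in range(1, 2001)]

theta1_hat = css_estimate_theta1(y)
print("estimated theta1:", theta1_hat)
```

A grid search is used only to keep the sketch dependency-free; a numerical optimizer would be the usual choice.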

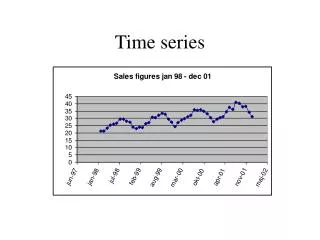

Deterministic Trends • Restriction to stationary processes very limiting as many economic time series have clear trends in them • But results can be modified if trend is deterministic, as series is then stationary about the trend

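Non-Stationary Series • Will focus attention on random walk: $y_t = \alpha_0 + y_{t-1} + \varepsilon_t$ • Note that conditional on some initial value $y_0$ we have: $y_t = y_0 + \alpha_0 t + \sum_{i=1}^{t} \varepsilon_i$ • $E(y_t \mid y_0) = y_0 + \alpha_0 t$ • $Var(y_t \mid y_0) = t\sigma^2$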

Terminologies… • These formulae should make clear non-stationarity of random walk • Different terminologies used to describe non-stationary series: • $y_t$ is a random walk • $y_t$ has a stochastic trend • $y_t$ is I(1) – integrated of order 1, i.e. $\Delta y_t$ is stationary • $y_t$ has a unit root • All mean the same
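The growth of the random walk's variance with t can be seen by simulating many paths from the same starting value and comparing the spread of their end points. This is a sketch with illustrative settings (no drift, σ = 1, 4000 paths); `random_walk_endpoints` is a hypothetical helper.

```python
import random
import statistics


def random_walk_endpoints(t, n_paths, sigma=1.0, drift=0.0, y0=0.0, seed=5):
    """Value of y_t on each of n_paths simulated random walks started at y0."""
    rng = random.Random(seed)
    ends = []
    for _path in range(n_paths):
        y = y0
        for _step in range(t):
            y += drift + rng.gauss(0.0, sigma)
        ends.append(y)
    return ends


var_10 = statistics.pvariance(random_walk_endpoints(t=10, n_paths=4000))
var_40 = statistics.pvariance(random_walk_endpoints(t=40, n_paths=4000))
print("variance across paths at t=10:", round(var_10, 2))
print("variance across paths at t=40:", round(var_40, 2))
```

With σ = 1 the two variances should be close to 10 and 40 respectively, so the second moment depends on t and the series cannot be weakly stationary.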

Problems Caused by Unit Roots - Bias • Autoregressive Coefficients biased towards zero • Same problem as for stationary AR process – but problem bigger • But bias goes to zero as T→∞ so consistent

Problems Caused by Unit Roots – Non-Normal Asymptotic Distribution • Asymptotic distribution of OLS estimator is • Non-normal • Shifted to the left of true value (1) • Long left tail • Convergence relates to: • Cannot assume t-statistic has normal distribution in large samples

Testing for a Unit Root – the Basics • Interested in $H_0: \alpha_1 = 1$ against $H_1: \alpha_1 < 1$ • Use t-statistic but do not use normal tables for confidence intervals etc • Have to use Dickey-Fuller Tables – called the Dickey-Fuller Test

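Implementing the Dickey-Fuller Test • Subtract $y_{t-1}$ from both sides of AR(1) to get: $\Delta y_t = \alpha_0 + \beta_1 y_{t-1} + \varepsilon_t$ with $\beta_1 = \alpha_1 - 1$ • Want to test $H_0: \beta_1 = 0$ against $H_1: \beta_1 < 0$ • Estimate by OLS and form t-statistic in usual way • But use t-statistic in different way: • Interested in one-tail test • Distribution not ‘normal’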

Critical Values for the Dickey-Fuller Test • Typically larger in absolute value (more negative) than for normal distribution (long left tail) • Critical values differ according to: • The sample size (typically small sample critical values are based on the assumption of normally distributed errors) • Whether a constant is included in the regression or not • Whether a trend is included in the regression or not • The order of the AR process that is being estimated • Reflects fact that distribution of t-statistic varies with all these things • Most common cases have been worked out and tabulated – often embedded in packages like STATA, EVIEWS
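The Dickey-Fuller regression itself is an ordinary OLS regression of the first difference on a constant and the lagged level; only the reference distribution changes. The sketch below computes the t-statistic by hand for two simulated series. The simulated data, the function names, and the use of roughly -2.86 as the large-sample 5% critical value for the constant-only case are assumptions for illustration; exact critical values should be taken from tables or a statistics package.

```python
import math
import random


def dickey_fuller_t(y):
    """t-statistic on beta1 in the regression dy_t = beta0 + beta1 * y_{t-1} + e_t."""
    x = y[:-1]
    dy = [y[t] - y[t - 1] for t in range(1, len(y))]
    n = len(x)
    x_bar = sum(x) / n
    dy_bar = sum(dy) / n
    sxx = sum((a - x_bar) ** 2 for a in x)
    beta1 = sum((a - x_bar) * (d - dy_bar) for a, d in zip(x, dy)) / sxx
    beta0 = dy_bar - beta1 * x_bar
    ssr = sum((d - beta0 - beta1 * a) ** 2 for a, d in zip(x, dy))
    std_err = math.sqrt(ssr / (n - 2) / sxx)
    return beta1 / std_err


def simulate_ar1(alpha1, T, seed):
    """AR(1) with alpha0 = 0 and standard normal shocks, starting at y_0 = 0."""
    rng = random.Random(seed)
    y = [0.0]
    for _ in range(T):
        y.append(alpha1 * y[-1] + rng.gauss(0.0, 1.0))
    return y


APPROX_5PCT_CRITICAL = -2.86  # approximate large-sample value, constant, no trend

t_stationary = dickey_fuller_t(simulate_ar1(0.5, 500, seed=6))
t_random_walk = dickey_fuller_t(simulate_ar1(1.0, 500, seed=6))
for name, t_stat in [("stationary AR(1)", t_stationary), ("random walk", t_random_walk)]:
    print(name, round(t_stat, 2), "reject unit root:", t_stat < APPROX_5PCT_CRITICAL)
```

The test is one-tailed: the unit root is rejected only when the t-statistic falls below the (negative) critical value.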

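The Augmented Dickey-Fuller Test • Critical values for unit root test depend on order of AR process • DF test for AR(p) often called ADF Test • Model and hypothesis is: $y_t = \alpha_0 + \sum_{i=1}^{p} \alpha_i y_{t-i} + \varepsilon_t$, with $H_0: \sum_{i=1}^{p} \alpha_i = 1$ against $H_1: \sum_{i=1}^{p} \alpha_i < 1$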

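The easiest way to implement • Can always re-write AR(p) process as: $\Delta y_t = \alpha_0 + \beta_1 y_{t-1} + \sum_{j=1}^{p-1} \gamma_j \Delta y_{t-j} + \varepsilon_t$ with $\beta_1 = \sum_{i=1}^{p} \alpha_i - 1$ • i.e. regression of $\Delta y_t$ on (p-1) lags of $\Delta y_t$ and $y_{t-1}$ • Test hypothesis that coefficient on $y_{t-1}$ is zero against alternative it is less than zero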

ARIMA Processes • One implication of above is that AR(p) process with a unit root can always be written as AR(p-1) process in differences • Such processes are often called auto-regressive integrated moving average processes ARIMA(p,d,q) where the d refers to how many times the data is differenced before it is an ARMA(p,q)

Some Caution about Unit Root Tests • Unit root test has null hypothesis that series is non-stationary • This is because null of stationarity not well-defined • Failing to reject hypothesis of unit root implies data consistent with non-stationarity – but may also be consistent with stationarity • Economic theory may often provide guidance

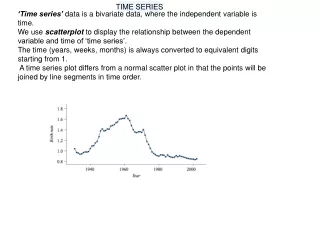

Structural Breaks • Suppose series looks like: