Chap 2. SIMPLE LINEAR REGRESSION MODEL

by Bambang Juanda


Definition of Model
• Problem Formulation Model
• Model: an abstraction of reality in the form of a mathematical equation
• Econometric model: a statistical model that includes an error term

$Y = f(X_1, X_2, \ldots, X_p) + \text{error}$ (2.1)
actual data = estimate + residual
data = systematic term + non-systematic term
$\hat{Y} = f(X_1, X_2, \ldots, X_p)$ (2.2)

Description of Error:
• Measurement error and proxies for the dependent variable Y and the explanatory variables $X_1, X_2, \ldots, X_p$.
• Wrong assumption about the functional form.
• Omitted variables.
• Unpredictable effects.

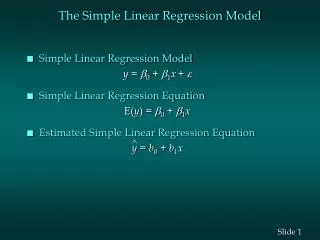

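Simple Linear Regression Model
• Relation between 2 variables as a function that is linear in the parameters

Population Regression Model: $Y_i = \beta_0 + \beta_1 X_i + \varepsilon_i$
• $\beta_0$: intercept
• $\beta_1$: slope
• $\varepsilon_i$: random error
• $Y$: response (dependent) variable
• $X$: explanatory (independent) variable

Sample Regression Model: $Y_i = b_0 + b_1 X_i + e_i$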

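Population Regression Model
[Figure: the population regression line $\mu_{Y/X_i} = \beta_0 + \beta_1 X_i$ plotted against X, with observed values $Y_i = \beta_0 + \beta_1 X_i + \varepsilon_i$ scattered around it; $\varepsilon_i$ is the random error, the vertical distance from an observed value to the line.]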

Simple Linear Regression Equation (Example)
Square footage and annual sales ($000) for a sample of 7 grocery stores

Store   Square Footage   Annual Sales ($000)
1       1,726            3,681
2       1,542            3,395
3       2,816            6,653
4       5,555            9,543
5       1,292            3,318
6       2,208            5,563
7       1,313            3,760

Sample Linear Regression Model
$\hat{Y}_i = b_0 + b_1 X_i$
• $\hat{Y}_i$ = estimated Y for the ith observation
• $X_i$ = value of X for the ith observation
• $b_0$ = estimate of the intercept $\beta_0$; the average Y when X = 0
• $b_1$ = estimate of the slope $\beta_1$; the average difference in Y when X differs by 1 unit

The “Best” Straight Line Equation

Predictor   Coef     SE Coef   T      P
Constant    1636.4   451.5     3.62   0.015
X           1.4866   0.1650    9.01   0.000

S = 611.752   R-Sq = 94.2%   R-Sq(adj) = 93.0%

Analysis of Variance
Source           DF   SS         MS         F       P
Regression       1    30380456   30380456   81.18   0.000
Residual Error   5    1871200    374240
Total            6    32251656

The “Best” Straight Line Equation
$\hat{Y}_i = 1636.415 + 1.487 X_i$
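The fitted line can be checked by hand from the seven observations. The sketch below uses the usual least-squares formulas $b_1 = \sum (X_i - \bar{X})(Y_i - \bar{Y}) / \sum (X_i - \bar{X})^2$ and $b_0 = \bar{Y} - b_1 \bar{X}$. The function name `fit_simple_ols` is only illustrative and does not come from the slides.

```python
# Square footage (X) and annual sales in $000 (Y) for the 7 grocery stores.
X = [1726, 1542, 2816, 5555, 1292, 2208, 1313]
Y = [3681, 3395, 6653, 9543, 3318, 5563, 3760]


def fit_simple_ols(x, y):
    """Return (b0, b1), the least-squares intercept and slope."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    # Sum of cross-products and sum of squares around the means.
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sxy / sxx
    b0 = y_bar - b1 * x_bar
    return b0, b1


b0, b1 = fit_simple_ols(X, Y)
print(f"Y_hat = {b0:.3f} + {b1:.3f} X")
```

Up to rounding, the printed coefficients should agree with the regression output for the grocery stores.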

Interpretation of Coefficients
$\hat{Y}_i = 1636.415 + 1.487 X_i$
• Interpretation of the slope value 1.487 (‘general’): for an increase of 1 unit in X, the estimated Y increases by 1.487 units.
• The ‘precise’ interpretation: the average difference in annual sales between stores whose areas differ by 1 square foot is $1,487 (1.487 × $1,000, since sales are recorded in $000).
• The implication of the estimated slope (under certain assumptions): when the size of a store increases by 1 square foot, the model predicts that expected sales increase by $1,487 per year.

Assumptions of the Linear Regression Model
• Normality of error
• Homoscedasticity of error
• Independence of error

Variance of Error around Regression Line
[Figure: error densities f(e) drawn around the regression line at two values of X, $X_1$ and $X_2$; axes X and Y.]

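Properties of OLS Estimator: $b_i \sim N(\beta_i; \sigma^2_{b_i})$
[Figure: sampling distribution of $b_i$, centered at $\beta_i$; its spread is measured by the estimated standard error of $b_i$.]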

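Inference of Slope: t-test
• t-test for the population slope: is there a linear relationship between X and Y?
• Statistical hypotheses
• $H_0: \beta_1 = 0$ (X cannot explain Y)
• $H_1: \beta_1 \neq 0$ (X can explain Y)
• Test statistic: $t = \dfrac{b_1 - \beta_1}{S_{b_1}}$, where $S_{b_1} = \dfrac{S}{\sqrt{\sum (X_i - \bar{X})^2}}$, S is the standard error of the estimate, and df = n − 2. Under $H_0$ this reduces to $t = b_1 / S_{b_1}$.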

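Inference of Slope: Example of t-test
• $H_0: \beta_1 = 0$; $H_1: \beta_1 \neq 0$
• $\alpha = .05$, df = 7 − 2 = 5
• Critical values: ±2.5706
• t-test statistic: $t = b_1 / S_{b_1} = 1.4866 / 0.1650 = 9.01$
• Decision: Reject $H_0$, since 9.01 > 2.5706
• Conclusion: There is a linear relationship. The bigger the store size, the larger its sales.
[Figure: t distribution with df = 5; rejection regions of area .025 in each tail, beyond −2.5706 and 2.5706.]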
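The t-test for the slope can be reproduced from the raw data. The following is a minimal sketch that assumes the standard error of the slope is computed as $S / \sqrt{\sum (X_i - \bar{X})^2}$, with S the square root of the residual mean square. The critical value 2.5706 is copied from the slide rather than computed, and the variable names are illustrative.

```python
import math

# Square footage (X) and annual sales in $000 (Y) for the 7 grocery stores.
X = [1726, 1542, 2816, 5555, 1292, 2208, 1313]
Y = [3681, 3395, 6653, 9543, 3318, 5563, 3760]
T_CRIT = 2.5706  # two-sided critical value for alpha = .05, df = 5 (from the slide)

n = len(X)
x_bar = sum(X) / n
y_bar = sum(Y) / n
sxx = sum((x - x_bar) ** 2 for x in X)
b1 = sum((x - x_bar) * (y - y_bar) for x, y in zip(X, Y)) / sxx
b0 = y_bar - b1 * x_bar

# Residual (error) sum of squares and standard error of the estimate.
ess = sum((y - (b0 + b1 * x)) ** 2 for x, y in zip(X, Y))
s = math.sqrt(ess / (n - 2))

# Standard error of the slope and the t statistic under H0: beta1 = 0.
s_b1 = s / math.sqrt(sxx)
t_stat = b1 / s_b1
reject_h0 = abs(t_stat) > T_CRIT

print(f"S_b1 = {s_b1:.4f}, t = {t_stat:.2f}, reject H0: {reject_h0}")
```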

Confidence Interval of Slope
$b_1 \pm t_{n-2} S_{b_1}$
[Figure: Excel output for the grocery stores problem.]
We estimate with 95% confidence that the slope lies between 1.062 and 1.911. (This confidence interval excludes the value 0.)
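As a quick arithmetic check, the interval can be rebuilt from the data. In this sketch the t value 2.5706 (df = 5, 95% confidence) is taken from the slides as a constant, and the helper name `slope_confidence_interval` is hypothetical.

```python
import math

# Square footage (X) and annual sales in $000 (Y) for the 7 grocery stores.
X = [1726, 1542, 2816, 5555, 1292, 2208, 1313]
Y = [3681, 3395, 6653, 9543, 3318, 5563, 3760]
T_CRIT = 2.5706  # t value for df = n - 2 = 5 at 95% confidence (from the slides)


def slope_confidence_interval(x, y, t_crit):
    """Return (lower, upper) for b1 +/- t * S_b1."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    b0 = y_bar - b1 * x_bar
    ess = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))
    s_b1 = math.sqrt(ess / (n - 2)) / math.sqrt(sxx)
    return b1 - t_crit * s_b1, b1 + t_crit * s_b1


lower, upper = slope_confidence_interval(X, Y, T_CRIT)
print(f"95% CI for the slope: ({lower:.3f}, {upper:.3f})")
```

Because the interval does not contain 0, it leads to the same decision as the two-sided t-test at $\alpha = .05$.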

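Level of significance, $\alpha$, and rejection region
$b_1 \sim N(\beta_1; \sigma^2_{b_1})$
• $H_0: \beta_1 \geq k$ vs $H_1: \beta_1 < k$: rejection region of area $\alpha$ in the left tail, below the critical point
• $H_0: \beta_1 \leq k$ vs $H_1: \beta_1 > k$: rejection region of area $\alpha$ in the right tail, above the critical point
• $H_0: \beta_1 = k$ vs $H_1: \beta_1 \neq k$: rejection regions of area $\alpha/2$ in each tail
[Figure: three t distributions centered at 0 with the rejection regions shaded.]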

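Assumptions of the Linear Regression Model:
(i) $\varepsilon_i$ are normally, independently and identically distributed for i = 1, ..., n: $\varepsilon_i \sim N(0; \sigma^2)$
• Independence: $\mathrm{Cov}(\varepsilon_t, \varepsilon_s) = E(\varepsilon_t \varepsilon_s) = 0$ for t ≠ s.
• Homoscedasticity: $\mathrm{Var}(\varepsilon_i) = E(\varepsilon_i^2) = \sigma^2$.
(ii) X is a fixed variable.
• OLS estimates of $\beta_i$ are Best Linear Unbiased Estimators, and normally distributed.
• The estimated average Y for a certain $X_i$ is normally distributed: $\hat{\mu}_{Y/X_i} \sim N(\beta_0 + \beta_1 X_i; \sigma^2_{\hat{\mu}_{Y/X_i}})$
• The estimated individual Y for a certain $X_i$ equals its estimated average and is also normally distributed, but with a higher variance: $\hat{Y}/X_i \sim N(\beta_0 + \beta_1 X_i; \sigma^2_{\hat{Y}_i})$
[Figure: normal densities of $\varepsilon_i$ (centered at 0), $\hat{\mu}_{Y/X_i}$ and $\hat{Y}/X_i$ (both centered at $\beta_0 + \beta_1 X_i$).]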
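The unbiasedness of the OLS slope under these assumptions can be illustrated by simulation. The sketch below keeps X fixed at the seven store sizes and repeatedly draws normal, independent, homoscedastic errors. The "true" values `BETA0`, `BETA1` and `SIGMA` are hypothetical choices made for the illustration, not estimates from the slides, and the theoretical standard deviation $\sigma / \sqrt{\sum (X_i - \bar{X})^2}$ is the standard result for a fixed-X design.

```python
import math
import random
import statistics

# X is held fixed at the observed store sizes (assumption ii).
X = [1726, 1542, 2816, 5555, 1292, 2208, 1313]

# Hypothetical population parameters, chosen only for this illustration.
BETA0, BETA1, SIGMA = 1600.0, 1.5, 600.0

random.seed(0)  # make the simulation reproducible
n = len(X)
x_bar = sum(X) / n
sxx = sum((x - x_bar) ** 2 for x in X)


def simulate_slope():
    """Draw one sample of Y under assumption (i) and return the OLS slope."""
    y = [BETA0 + BETA1 * x + random.gauss(0, SIGMA) for x in X]
    y_bar = sum(y) / n
    return sum((x - x_bar) * (yi - y_bar) for x, yi in zip(X, y)) / sxx


slopes = [simulate_slope() for _ in range(2000)]
mean_b1 = statistics.mean(slopes)
sd_b1 = statistics.stdev(slopes)
theory_sd = SIGMA / math.sqrt(sxx)

print(f"mean of b1 = {mean_b1:.4f} (true slope {BETA1})")
print(f"sd of b1   = {sd_b1:.4f} (theoretical {theory_sd:.4f})")
```

The average of the simulated slopes should sit close to the chosen `BETA1`, and their spread close to the theoretical standard deviation.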

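Estimated Interval of Forecast Values
Confidence interval of $\mu_{Y/X}$, the average Y for a certain $X_i$:
$\hat{Y}_i \pm t_{n-2} \, S \sqrt{\dfrac{1}{n} + \dfrac{(X_i - \bar{X})^2}{\sum_j (X_j - \bar{X})^2}}$
• The interval varies according to the distance of $X_i$ from the average $\bar{X}$.
• S is the estimated standard error (the standard error of the estimate).
• $t_{n-2}$ is the t value from the table with df = n − 2.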

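Estimated Interval of Forecast Values
Confidence interval of an individual $Y_i$ for a certain $X_i$:
$\hat{Y}_i \pm t_{n-2} \, S \sqrt{1 + \dfrac{1}{n} + \dfrac{(X_i - \bar{X})^2}{\sum_j (X_j - \bar{X})^2}}$
The addition of 1 under the square root makes this interval wider than the confidence interval of the average Y, $\mu_{Y/X}$.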
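Both forecast intervals can be sketched in a few lines. The example assumes the usual formulas, in which the half-width is $t_{n-2}\,S\sqrt{1/n + (X_0 - \bar{X})^2 / \sum (X_i - \bar{X})^2}$ for the average Y and the same expression with 1 added under the square root for an individual Y. The store size `X0 = 4000` square feet is a hypothetical value chosen for illustration, and 2.5706 is the t value quoted on the slides for df = 5.

```python
import math

# Square footage (X) and annual sales in $000 (Y) for the 7 grocery stores.
X = [1726, 1542, 2816, 5555, 1292, 2208, 1313]
Y = [3681, 3395, 6653, 9543, 3318, 5563, 3760]
T_CRIT = 2.5706  # t value for df = 5, 95% confidence (from the slides)

n = len(X)
x_bar = sum(X) / n
y_bar = sum(Y) / n
sxx = sum((x - x_bar) ** 2 for x in X)
b1 = sum((x - x_bar) * (y - y_bar) for x, y in zip(X, Y)) / sxx
b0 = y_bar - b1 * x_bar
ess = sum((y - (b0 + b1 * x)) ** 2 for x, y in zip(X, Y))
s = math.sqrt(ess / (n - 2))  # standard error of the estimate


def forecast_intervals(x0):
    """Return (y_hat, interval for the average Y, interval for an individual Y)."""
    y_hat = b0 + b1 * x0
    distance_term = 1 / n + (x0 - x_bar) ** 2 / sxx
    half_mean = T_CRIT * s * math.sqrt(distance_term)
    half_indiv = T_CRIT * s * math.sqrt(1 + distance_term)
    return (
        y_hat,
        (y_hat - half_mean, y_hat + half_mean),
        (y_hat - half_indiv, y_hat + half_indiv),
    )


X0 = 4000  # hypothetical store size in square feet
y_hat, ci_mean, ci_indiv = forecast_intervals(X0)
print(f"Y_hat at X = {X0}: {y_hat:.1f}")
print(f"interval for average Y:    ({ci_mean[0]:.1f}, {ci_mean[1]:.1f})")
print(f"interval for individual Y: ({ci_indiv[0]:.1f}, {ci_indiv[1]:.1f})")
```

Calling `forecast_intervals` at several values of X shows both intervals at their narrowest when X equals $\bar{X}$ and widening as X moves away from it.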

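Estimated Interval of Forecast Values for Different X Values
[Figure: the fitted line $\hat{Y}_i = b_0 + b_1 X_i$ with two bands around it, the confidence interval for the average Y and the wider confidence interval for an individual $Y_i$; both bands are narrowest at $\bar{X}$ and widen as the chosen $X_i$ moves away from it.]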

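ANOVA: Analysis of Variance
Can the variance of Y be explained by (variable X in) the model?
$Y_i = b_0 + b_1 X_i + e_i$
$Y_i = (\bar{Y} - b_1 \bar{X}) + b_1 X_i + e_i$
$(Y_i - \bar{Y}) = b_1 (X_i - \bar{X}) + e_i$
$(Y_i - \bar{Y})^2 = \{ b_1 (X_i - \bar{X}) + e_i \}^2$
$\sum (Y_i - \bar{Y})^2 = \sum \{ b_1 (X_i - \bar{X}) + e_i \}^2$
$\sum (Y_i - \bar{Y})^2 = b_1^2 \sum (X_i - \bar{X})^2 + \sum e_i^2$
(the cross term vanishes because the OLS residuals satisfy $\sum (X_i - \bar{X}) e_i = 0$)
TSS = RSS + ESS
Here TSS is the total sum of squares, RSS the regression sum of squares and ESS the error sum of squares.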

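Measure of Variance: Sum of Squares
• $TSS = \sum (Y_i - \bar{Y})^2$
• $RSS = \sum (\hat{Y}_i - \bar{Y})^2$
• $ESS = \sum (Y_i - \hat{Y}_i)^2$
[Figure: at a given $X_i$, the distance from $Y_i$ to $\bar{Y}$ is split by the fitted line $\hat{Y}_i = b_0 + b_1 X_i$ into a regression part ($\hat{Y}_i - \bar{Y}$) and an error part ($Y_i - \hat{Y}_i$).]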
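The three sums of squares can be computed directly from the grocery store data to confirm that they add up. This is a minimal sketch using the slide's naming, where RSS is the regression sum of squares and ESS the error sum of squares. The ratio RSS/TSS is the R-Sq value reported in the regression output.

```python
# Square footage (X) and annual sales in $000 (Y) for the 7 grocery stores.
X = [1726, 1542, 2816, 5555, 1292, 2208, 1313]
Y = [3681, 3395, 6653, 9543, 3318, 5563, 3760]

n = len(X)
x_bar = sum(X) / n
y_bar = sum(Y) / n
sxx = sum((x - x_bar) ** 2 for x in X)
b1 = sum((x - x_bar) * (y - y_bar) for x, y in zip(X, Y)) / sxx
b0 = y_bar - b1 * x_bar
y_hat = [b0 + b1 * x for x in X]

tss = sum((y - y_bar) ** 2 for y in Y)              # total
rss = sum((yh - y_bar) ** 2 for yh in y_hat)        # regression (explained)
ess = sum((y - yh) ** 2 for y, yh in zip(Y, y_hat)) # error (unexplained)
r_squared = rss / tss

print(f"TSS = {tss:.0f}, RSS = {rss:.0f}, ESS = {ess:.0f}")
print(f"RSS + ESS = {rss + ess:.0f}, R-Sq = {r_squared:.1%}")
```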

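Table of ANOVA For Simple Linear Regression Model

Source       DF      SS                                      MS                  F
Regression   1       $RSS = \sum (\hat{Y}_i - \bar{Y})^2$    MSR = RSS / 1       MSR / MSE
Error        n − 2   $ESS = \sum (Y_i - \hat{Y}_i)^2$        MSE = ESS / (n − 2)
Total        n − 1   $TSS = \sum (Y_i - \bar{Y})^2$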

Inference of the Model: F-test
Can the model explain the variance of Y?
• Statistical hypotheses
• $H_0: \beta_1 = 0$ (the model cannot explain)
• $H_1: \beta_1 \neq 0$ (the model can explain)
• Test statistic: $F = MSR / MSE \sim F(p, n - 1 - p)$, where p is the number of independent variables
• At $\alpha = 0.05$ the critical value of F(1, 5) is 6.61

Analysis of Variance
Source           DF   SS         MS         F       P
Regression       1    30380456   30380456   81.18   0.000
Residual Error   5    1871200    374240
Total            6    32251656

Since F = 81.18 > 6.61, $H_0$ is rejected: the model can explain the variance of Y.
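The F statistic follows from the sums of squares in a few steps. The sketch below takes the critical value 6.61 for F(1, 5) from the slide as a constant. It also checks a standard property of simple linear regression that the slides do not state explicitly: with one explanatory variable, F equals the square of the slope's t statistic.

```python
import math

# Square footage (X) and annual sales in $000 (Y) for the 7 grocery stores.
X = [1726, 1542, 2816, 5555, 1292, 2208, 1313]
Y = [3681, 3395, 6653, 9543, 3318, 5563, 3760]
F_CRIT = 6.61  # critical value of F(1, 5) at alpha = 0.05 (from the slide)
P = 1          # number of independent variables

n = len(X)
x_bar = sum(X) / n
y_bar = sum(Y) / n
sxx = sum((x - x_bar) ** 2 for x in X)
b1 = sum((x - x_bar) * (y - y_bar) for x, y in zip(X, Y)) / sxx
b0 = y_bar - b1 * x_bar
y_hat = [b0 + b1 * x for x in X]

rss = sum((yh - y_bar) ** 2 for yh in y_hat)         # regression sum of squares
ess = sum((y - yh) ** 2 for y, yh in zip(Y, y_hat))  # error sum of squares

msr = rss / P            # mean square for regression
mse = ess / (n - 1 - P)  # mean square for error
f_stat = msr / mse
reject_h0 = f_stat > F_CRIT

# In simple regression, F is the square of the slope's t statistic.
t_stat = b1 / (math.sqrt(mse) / math.sqrt(sxx))

print(f"F = {f_stat:.2f}, t^2 = {t_stat ** 2:.2f}, reject H0: {reject_h0}")
```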

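Residual Analysis for Linearity
[Figure: two plots of residuals e against X. A curved pattern indicates the relationship is not linear; a patternless scatter indicates linearity (✓).]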

Residual Analysis for Homoscedasticity
Using Standardized Residuals (SR)
[Figure: two plots of SR against X. A spread that changes with X indicates heteroscedasticity; a constant spread indicates homoscedasticity (✓).]

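Residual Analysis for Independence of e
[Figure: two plots of SR against X. A systematic pattern indicates the errors are not independent; a patternless scatter indicates independence (✓).]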
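The residual plots above need the residuals and their standardized versions. The slides do not define SR, so the sketch below assumes one common definition, $SR_i = e_i / (S\sqrt{1 - h_i})$ with $h_i = 1/n + (X_i - \bar{X})^2 / \sum (X_j - \bar{X})^2$. Other definitions exist, and software packages may differ. The list names are illustrative.

```python
import math

# Square footage (X) and annual sales in $000 (Y) for the 7 grocery stores.
X = [1726, 1542, 2816, 5555, 1292, 2208, 1313]
Y = [3681, 3395, 6653, 9543, 3318, 5563, 3760]

n = len(X)
x_bar = sum(X) / n
y_bar = sum(Y) / n
sxx = sum((x - x_bar) ** 2 for x in X)
b1 = sum((x - x_bar) * (y - y_bar) for x, y in zip(X, Y)) / sxx
b0 = y_bar - b1 * x_bar

# Raw residuals e_i = Y_i - Y_hat_i.
residuals = [y - (b0 + b1 * x) for x, y in zip(X, Y)]
s = math.sqrt(sum(e ** 2 for e in residuals) / (n - 2))

# h_i measures how far X_i lies from the mean of X (assumed definition).
leverages = [1 / n + (x - x_bar) ** 2 / sxx for x in X]

# Standardized residuals under the assumed definition.
std_residuals = [e / (s * math.sqrt(1 - h)) for e, h in zip(residuals, leverages)]

# Listing the values ordered by X mirrors reading a residual plot left to right.
for x, e, sr in sorted(zip(X, residuals, std_residuals)):
    print(f"X = {x:5d}  e = {e:9.1f}  SR = {sr:6.2f}")
```

With only seven observations, any visible pattern in these values is weak evidence at best. The plots become more informative with larger samples.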