Optimizing General Compiler Optimization

This paper presents a systematic approach to tuning compiler optimization settings that uses orthogonal arrays to explore a reduced search space. Compilers such as GCC offer so many optimization options that an exhaustive search over their combinations is impractical. The proposed method first identifies subsets of options that interact positively, then merges subsets that do not negatively affect each other. This keeps the number of experiments small, and the resulting setting outperforms the standard optimization levels (-O1, -O2, -O3) on almost all SPECint95 benchmarks.

Optimizing General Compiler Optimization
M. Haneda, P.M.W. Knijnenburg, and H.A.G. Wijshoff

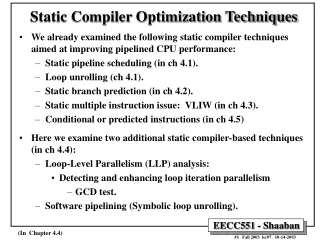

Problem: Optimizing optimizations
• A compiler usually has many optimization settings (e.g., peephole, delayed-branch)
• gcc 3.3 has 54 optimization options
• gcc 4 has over 100 possible settings
• Very little is known about how these options affect each other
• Compiler writers typically provide switches that bundle many optimization options together
  • gcc -O1, -O2, -O3

…but can we do better?
• It is possible to outperform these predefined optimization settings, but doing so requires extensive knowledge of both the code and the available optimization options
• How do we define a single set of options that works well for a wide variety of programs?

Motivation for this paper
• There are too many optimization settings for an exhaustive search to be affordable
  • gcc 3: 2^50 different combinations!
• We want a systematic method that finds the best settings while searching only a reduced space
• Ideally, this should require minimal knowledge of what the options actually do

Big Idea
• Find the largest subsets of compiler options that interact positively with each other
• Combine these subsets, provided that they do not negatively affect each other
• Select the final compiler setting from the resulting combined sets

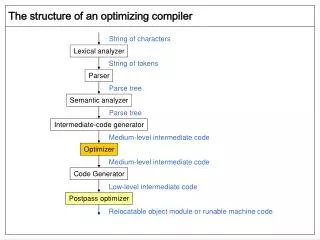

Full vs. Fractional Factorial Design
• Full factorial design: explores the entire search space, i.e., every possible combination
  • Given k options, this requires 2^k experiments
• Fractional factorial design: explores a reduced search space that is representative of the full search space
  • This can be done using orthogonal arrays
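
To make the distinction concrete, here is a minimal Python sketch that enumerates a full factorial design and one classic fractional design: the half-fraction in which the last option is set to the parity (XOR) of all the others. The function names are illustrative, and this is not claimed to be the design the authors used; it only shows how a fraction can keep every option balanced while running fewer experiments.

```python
from itertools import product


def full_factorial(k):
    """All 2**k on/off settings of k options (1 = on, 0 = off)."""
    return list(product((0, 1), repeat=k))


def half_fraction(k):
    """A 2**(k-1)-run half-fraction: the last option is the parity of the
    first k-1 (the defining relation I = AB...K in +/-1 coding)."""
    return [base + (sum(base) % 2,) for base in product((0, 1), repeat=k - 1)]


def is_balanced(design):
    """True if every option is on in exactly half of the runs."""
    runs = len(design)
    return all(2 * sum(run[i] for run in design) == runs
               for i in range(len(design[0])))


for k in (4, 8, 12):
    # Prints: k, full-factorial runs, half-fraction runs, balance check
    print(k, len(full_factorial(k)), len(half_fraction(k)),
          is_balanced(half_fraction(k)))
```

A half-fraction still grows as 2^(k-1), so on its own it does not make 54 options tractable; two-level orthogonal arrays cut the number of runs much further, to on the order of k.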

Orthogonal Arrays
• An orthogonal array is a matrix of 0s and 1s
• The rows are the experiments to be performed (one compiler setting each)
• The columns are the factors the experiments analyze (one optimization option each; 1 = on, 0 = off)
• Every option is turned on in exactly half of the experiments
• Among the experiments in which a given option is on, every other option is still on in exactly half of them (and likewise among those in which it is off)
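
One standard way to build a two-level orthogonal array with these properties uses the parity of a bitwise AND (the construction behind Sylvester-type Hadamard matrices). The Python sketch below builds an array with 2^m rows and 2^m - 1 columns and checks both balance properties listed above. The slides do not say which array the authors used, so the construction, the helper names, and the choice of keeping 54 columns (one per gcc 3.3 option) are assumptions for illustration.

```python
from itertools import combinations


def orthogonal_array(m):
    """Two-level orthogonal array with 2**m rows and 2**m - 1 columns.

    Entry (x, c) is the parity of the bits shared by row index x and the
    nonzero column label c, i.e. the GF(2) dot product of x and c.
    """
    return [[bin(x & c).count("1") % 2 for c in range(1, 2 ** m)]
            for x in range(2 ** m)]


def has_strength_two(oa):
    """Check the two balance properties of a 0/1 matrix."""
    n, k = len(oa), len(oa[0])
    # Each option is on in exactly half of the experiments.
    if any(2 * sum(row[i] for row in oa) != n for i in range(k)):
        return False
    # For every pair of options, each of the four on/off
    # combinations occurs in exactly a quarter of the experiments.
    for i, j in combinations(range(k), 2):
        counts = [0, 0, 0, 0]
        for row in oa:
            counts[2 * row[i] + row[j]] += 1
        if any(4 * c != n for c in counts):
            return False
    return True


oa = orthogonal_array(6)            # 64 experiments x 63 columns
# Hypothetical mapping: keep 54 columns, one per gcc 3.3 option.
# Dropping columns never breaks the pairwise balance property.
design = [row[:54] for row in oa]
print(len(design), len(design[0]), has_strength_two(design))  # 64 54 True
```

Under these assumptions, 64 compile-and-run experiments stand in for the 2^54 of a full factorial design.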

Algorithm – Step 1
• Goal: find maximal subsets of positively interacting options
• Step 1.1: Find the options that give the best overall improvement
  • For each single optimization option i, compute the average speedup over all experiments in the search space in which i is turned on
  • Select the M options with the highest average improvement
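
Step 1.1 can be sketched in Python as a per-column average over the rows of an orthogonal array. The speedups here come from `toy_speedup`, a hypothetical stand-in for compiling and timing a benchmark; real measurements would replace it, and the helper names are illustrative. Because the array is balanced, each option's average is not skewed by which other options happen to be on alongside it.

```python
def orthogonal_array(bits):
    """Two-level strength-2 orthogonal array: 2**bits rows, 2**bits - 1 columns."""
    return [[bin(x & c).count("1") % 2 for c in range(1, 2 ** bits)]
            for x in range(2 ** bits)]


def average_speedup_when_on(oa, speedups):
    """For each option i, the mean speedup over experiments with option i on."""
    averages = []
    for i in range(len(oa[0])):
        on = [s for row, s in zip(oa, speedups) if row[i] == 1]
        averages.append(sum(on) / len(on))
    return averages


def top_m_options(oa, speedups, m):
    """Indices of the M options with the highest average speedup."""
    averages = average_speedup_when_on(oa, speedups)
    ranked = sorted(range(len(averages)), key=lambda i: averages[i], reverse=True)
    return ranked[:m]


def toy_speedup(row):
    """Hypothetical measurement: option 0 helps a lot, option 2 a little,
    option 4 hurts, and the remaining options have no effect."""
    return 1.0 + 0.05 * row[0] + 0.03 * row[2] - 0.02 * row[4]


oa = orthogonal_array(3)                     # 8 experiments, 7 options
speedups = [toy_speedup(row) for row in oa]
print(top_m_options(oa, speedups, 2))        # [0, 2]
```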

Algorithm – Step 1 (cont.)
• Step 1.2: Iteratively add new options to the sets obtained so far, to get maximal sets of mutually reinforcing optimizations
  • Example: if using options A and B together performs better than using A alone, add B
  • If using {A, B} and C together performs better than {A, B} alone, add C to {A, B}
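
A minimal Python sketch of Step 1.2 as greedy set growth follows. It assumes each option selected in Step 1.1 seeds one set, and that `evaluate` is a hypothetical callback returning the speedup of a setting with exactly the given options on; the toy model below is invented for illustration.

```python
def grow(seed, options, evaluate):
    """Greedily extend {seed}: add an option whenever it improves the score
    of the current set, and repeat until no single addition helps."""
    current = frozenset([seed])
    best = evaluate(current)
    improved = True
    while improved:
        improved = False
        for option in options:
            if option in current:
                continue
            candidate = current | {option}
            score = evaluate(candidate)
            if score > best:
                current, best = candidate, score
                improved = True
    return current


def toy_evaluate(options):
    """Hypothetical speedup model: 0, 1 and 3 help on their own,
    2 only helps together with 1, and 3 interferes with 0."""
    score = 1.0 + 0.04 * (0 in options) + 0.02 * (1 in options) + 0.01 * (3 in options)
    if 1 in options and 2 in options:
        score += 0.03
    if 0 in options and 3 in options:
        score -= 0.05
    return score


for seed in (0, 3):
    print(seed, sorted(grow(seed, range(5), toy_evaluate)))
# 0 [0, 1, 2]
# 3 [1, 2, 3]
```

Option 2 is only picked up after option 1 is in the set, which is the kind of positive interaction Step 1.2 is meant to capture; seed 3 never absorbs option 0 because the two interfere.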

Algorithm – Step 2
• Try to combine the sets obtained so far, provided that they do not negatively influence each other
• This maximizes the number of options turned on in each set
• Example:
  • If {A, B, C} and {D, E} do not counteract each other, combine them into {A, B, C, D, E}
  • Otherwise, keep them separate
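
Step 2 can be sketched as repeated pairwise merging. The slides do not define "do not negatively influence each other" precisely; this Python sketch assumes it means the union scores at least as well as the better of the two sets alone. `evaluate` and its toy model are hypothetical, and the letters mirror the example above.

```python
def compatible(a, b, evaluate):
    """Assumed test: the union scores at least as well as the better part."""
    return evaluate(a | b) >= max(evaluate(a), evaluate(b))


def combine(sets, evaluate):
    """Repeatedly merge any compatible pair until no pair can be merged."""
    sets = [frozenset(s) for s in sets]
    merged = True
    while merged:
        merged = False
        for i in range(len(sets)):
            for j in range(i + 1, len(sets)):
                if compatible(sets[i], sets[j], evaluate):
                    union = sets[i] | sets[j]
                    sets = [s for k, s in enumerate(sets) if k not in (i, j)]
                    sets.append(union)
                    merged = True
                    break
            if merged:
                break
    return sets


def toy_evaluate(options):
    """Hypothetical speedup model: A-E each add 2%, F adds 3% on its own
    but costs 10% when combined with A."""
    score = 1.0 + 0.02 * len(options & {"A", "B", "C", "D", "E"})
    score += 0.03 * ("F" in options)
    if "A" in options and "F" in options:
        score -= 0.10
    return score


result = combine([{"A", "B", "C"}, {"D", "E"}, {"F"}], toy_evaluate)
print(sorted(sorted(s) for s in result))
# [['A', 'B', 'C', 'D', 'E'], ['F']]
```

Greedy merging like this can depend on the order in which pairs are tried: had {D, E} been merged with {F} first, {A, B, C} would have stayed separate.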

Algorithm – Step 3
• From the sets produced by Step 2, select the one with the best overall improvement
• According to this methodology, that set is the ideal combination of optimization settings
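
Step 3 reduces to an argmax over the candidate sets. In this Python sketch, "overall improvement" is assumed to be the arithmetic mean of per-benchmark improvements; the slides do not specify the aggregate, and the benchmark names and gains are made up for illustration.

```python
from statistics import mean


def overall_improvement(options, benchmarks, evaluate):
    """Assumed metric: arithmetic mean of the per-benchmark improvements."""
    return mean([evaluate(options, bench) for bench in benchmarks])


def select_best(candidates, benchmarks, evaluate):
    """Return the candidate set with the highest overall improvement."""
    return max(candidates,
               key=lambda options: overall_improvement(options, benchmarks, evaluate))


# Hypothetical per-option improvements (%) on two made-up benchmarks.
TOY_GAINS = {
    "bench_a": {"A": 5.0, "B": 2.0, "F": 4.0},
    "bench_b": {"A": 1.0, "B": 3.0, "F": 6.0},
}


def toy_evaluate(options, bench):
    """Hypothetical stand-in for measuring one setting on one benchmark."""
    return sum(TOY_GAINS[bench].get(o, 0.0) for o in options)


candidates = [frozenset({"A", "B"}), frozenset({"F"})]
best = select_best(candidates, list(TOY_GAINS), toy_evaluate)
print(sorted(best))  # ['A', 'B']  (mean 5.5% vs. 5.0% for {'F'})
```

A geometric mean of speedup ratios is a common alternative aggregate and could rank candidates differently.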

Comparing results
• The compiler setting obtained by this methodology outperforms -O1, -O2, and -O3 on almost all SPECint95 benchmarks
  • -O3 performs better on li (39.2% vs. 38.4%)
  • The new setting delivers the best performance for perl (18.4% vs. 10.5%)

Conclusion
• The paper introduced a systematic way of combining compiler optimization settings
• It uses a reduced search space constructed as an orthogonal array
• It requires no knowledge of what the individual options do
• It is independent of the target architecture
• It can be applied to a wide variety of applications

Future work
• Use the same methodology to find a good optimization setting for a particular application domain
• Apply the methodology to newer versions of gcc, such as gcc 4.0.1