Comprehensive Overview of Reasoning Systems in Artificial Intelligence

Explore reasoning under uncertainty and knowledge representation in AI, including logic, non-monotonic logic, probability, and fuzzy logic. Learn about truth maintenance systems and their types like justification-based TMS and assumption-based TMS with examples. Understand the implementation of Circumscription and Default logic. Gain insights into JTMS and ATMS with examples illustrating their functionality. Discover how probability is applied to represent the likelihood of events occurring. Deep dive into various scenarios and calculations to grasp the essence of reasoning systems in AI.

Presentation Transcript

Uncertain Knowledge Representation CPSC 386 Artificial Intelligence Ellen Walker Hiram College

Reasoning Under Uncertainty • We have no way of getting complete information, e.g. limited sensors • We don’t have time to wait for complete information, e.g. driving • We can’t know the result of an action until after having done it, e.g. rolling a die • There are too many unlikely events to consider, e.g. “I will drive home, unless my car breaks down, or unless a natural disaster destroys the roads …”

But… • A decision must be made! • No intelligent system can afford to consider all eventualities, wait until all the data is in and complete, or try all possibilities to see what happens

So… • We must be able to reason about the likelihood of an event • We must be able to consider partial information as part of larger decisions • But • We are lazy (too many options to list, most of them unlikely) • We are ignorant • No complete theory to work from • Not all possible observations have been made

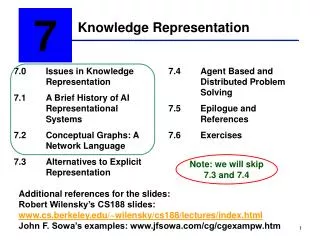

Quick Overview of Reasoning Systems • Logic • True or false, nothing in between. No uncertainty • Non-monotonic logic • True or false, but new information can change it. • Probability • Degree of belief, but in the end it’s either true or false • Fuzzy • Degree of truth, allows overlapping of true and false states

Examples • Logic • Rain is precipitation • Non-monotonic • It is currently raining • Probability • It will rain tomorrow (70% chance) • Fuzzy • It is raining (.5 hard / .8 very hard / .2 a little)

Non-monotonic Logic • Once true (or false) does not mean always true (or false) • As information arrives, truth values can change (Penelope is a bird, penguin, magic penguin) • Implementations (you are not responsible for details) • Circumscription • Bird(x) and not abnormal(x) -> flies(x) • We can assume not abnormal(x) unless we know abnormal(x) • Default logic • “x is assumed true provided x does not conflict with anything we already know”

Truth Maintenance Systems • These systems allow truth values to be changed during reasoning (belief revision) • When we retract a fact, we must also retract any other fact that was derived from it • Penelope is a bird. (can fly) • Penelope is a penguin. (cannot fly) • Penelope is magical. (can fly) • Retract (Penelope is magical). (cannot fly) • Retract (Penelope is a penguin). (can fly)
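The Penelope sequence above can be sketched in a few lines of Python. This is only an illustration of belief revision, not a full truth maintenance system. The function name `can_fly`, the fact strings, and the priority ordering (magical overrides penguin, penguin overrides bird) are assumptions read off the answers given on the slide.

```python
def can_fly(facts):
    """Derive whether Penelope can fly from the current set of facts.

    More specific facts override more general ones:
    magical > penguin > bird (an assumed ordering for this sketch).
    """
    if "magical" in facts:
        return True
    if "penguin" in facts:
        return False
    return "bird" in facts


facts = set()
history = []

# Assert facts one at a time, re-deriving the conclusion after each change.
for fact in ["bird", "penguin", "magical"]:
    facts.add(fact)
    history.append(can_fly(facts))

# Retract facts in the order used on the slide.
for fact in ["magical", "penguin"]:
    facts.discard(fact)
    history.append(can_fly(facts))

print(history)  # [True, False, True, False, True]
```

Because the conclusion is re-derived from the remaining facts after every change, retracting a fact automatically withdraws whatever depended on it.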

Types of TMS • Justification-based TMS • For each fact, track its justification (facts and rules from which it was derived) • When a fact is retracted, retract all facts that have justifications leading back to that fact, unless they have independent justifications. • Each sentence labeled IN or OUT • Assumption-based TMS • Represent all possible states simultaneously • When a fact is retracted, change state sets • For each fact, use list of assumptions that make that fact true; each world state is a set of assumptions.

TMS Example (Quine & Ullian 1978) • Abbot, Babbit & Cabot are murder suspects • Abbot’s alibi: At hotel (register) • Babbit’s alibi: Visiting brother-in-law (testimony) • Cabot’s alibi: Watching ski race • Who committed the murder? • New Evidence comes in… • TV video shows Cabot at the ski race • Now, who committed the murder?

JTMS Example • Each belief has justifications (+ and –) • We mark each fact as IN or OUT • Suspect Abbot (IN) is supported by: + Beneficiary Abbot (IN), – Alibi Abbot (OUT)

Revised Justification • Suspect Abbot (OUT) is supported by: + Beneficiary Abbot (IN), – Alibi Abbot (IN) • Alibi Abbot (IN) is supported by: + Far Away (IN), – Forged (OUT), + Registered (IN)
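A minimal sketch of how IN/OUT labels could be computed for this network, assuming that "+" marks a supporter that must be IN and "–" marks one that must be OUT. The function `label` and its data layout are hypothetical, invented for this example, and the sketch assumes the dependency network has no cycles.

```python
def label(premises, justifications):
    """Return the set of nodes labeled IN.

    premises: set of node names that are IN without further support.
    justifications: dict mapping node -> list of (in_list, out_list) pairs.
    A node is IN if it is a premise, or if some justification has every
    in_list node IN and every out_list node OUT.
    """
    memo = {}

    def is_in(node):
        if node not in memo:
            memo[node] = node in premises or any(
                all(is_in(n) for n in in_list)
                and not any(is_in(n) for n in out_list)
                for in_list, out_list in justifications.get(node, [])
            )
        return memo[node]

    nodes = set(premises) | set(justifications)
    return {n for n in nodes if is_in(n)}


justifications = {
    "Suspect Abbot": [(["Beneficiary Abbot"], ["Alibi Abbot"])],
    "Alibi Abbot": [(["Registered", "Far Away"], ["Forged"])],
}

# Before the hotel evidence: only the beneficiary fact is known.
before = label({"Beneficiary Abbot"}, justifications)

# After the evidence: the register entry and the distance are known.
after = label({"Beneficiary Abbot", "Registered", "Far Away"}, justifications)

print("Suspect Abbot" in before)  # True
print("Suspect Abbot" in after)   # False
```

Adding the alibi evidence flips Alibi Abbot to IN, which in turn flips Suspect Abbot to OUT, matching the two slides.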

ATMS Example (Partial) • List all possible assumptions (e.g. A1: register was forged, A2: register was not forged) • Consider all possible facts • (e.g. Abbot was at hotel.) • For each fact, determine all possible sets of assumptions that would make it valid • E.g. Abbot was at hotel (all sets that include A2 but not A1)

JTMS vs. ATMS • JTMS is sequential • With each new fact, update the current set of beliefs • ATMS is “pre-compiled” • Determine the correct set of beliefs for each fact in advance • When you have actual facts, find the set of beliefs that is consistent with all of them (intersection of sets for each fact)
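The "pre-compiled" idea can be sketched as follows. This is a toy illustration rather than a real ATMS: the variable names (`environments`, `labels`, `observed`) are assumptions for this example, and only the two assumptions named on the earlier slide are used.

```python
from itertools import combinations

# A1: register was forged, A2: register was not forged
assumptions = ["A1", "A2"]

# Every subset of the assumptions is a candidate world state (environment).
environments = [
    frozenset(c)
    for r in range(len(assumptions) + 1)
    for c in combinations(assumptions, r)
]

# In advance, label each fact with the environments in which it holds.
labels = {
    "Abbot was at hotel": {
        e for e in environments if "A2" in e and "A1" not in e
    },
}

# Given the actual facts, intersect their labels to find the
# environments consistent with all of them.
observed = ["Abbot was at hotel"]
consistent = set(environments)
for fact in observed:
    consistent &= labels[fact]

print(consistent)  # {frozenset({'A2'})}
```

With more facts, each one would contribute its own label set, and the intersection would narrow the consistent world states further.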

Probability • The likelihood of an event, estimated as the fraction of observations in which the event occurred • E.g. • I have a bag containing red & black balls • I pull 8 balls from the bag (replacing the ball each time) • 6 are red and 2 are black • So we estimate that 75% of balls are red, 25% are black • The probability of the next ball being red is 75%

More examples • There are 52 cards in a deck, 4 suits (2 red, 2 black) • What is the probability of picking a red card? • (26 red cards) / (52 cards) = 50% • What is the probability of picking 2 red cards? • 50% for the first card • (25 red cards) / (51 cards) for the second • Multiply for total result (26*25) / (52*51) = 25/102, about 24.5%
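The two-card arithmetic above can be checked with exact fractions. The variable names are chosen for this sketch only.

```python
from fractions import Fraction

p_first_red = Fraction(26, 52)               # 26 red cards out of 52
p_second_red_given_first = Fraction(25, 51)  # one red card already removed

# Multiply the two steps to get the probability of drawing two reds.
p_two_red = p_first_red * p_second_red_given_first

print(p_two_red)                   # 25/102
print(round(float(p_two_red), 4))  # 0.2451
```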

Basic Probability Notation • Proposition • an assertion like “the card is red” • Random variable • Describes an event we want to know the outcome of, like “ColorofCardPicked” • Domain is set of values such as {red, black} • Unconditional (prior) probability P(A) • In the absence of other information • Conditional probability P(A | B) • Based on specific prior knowledge

Some important equations • P(true) = 1; P(false) = 0 • 0 <= P(a) <= 1 • All probabilities between 0 and 1, inclusive • P(a v b) = P(a) + P(b) – P(a ^ b) • We can derive others • P(a v ~a) = 1 • P(a ^ ~a) = 0 • P(~a) + P(a) = 1
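These identities can be checked by counting over a small sample space. The sketch below uses a uniform draw from a 52-card deck; the helper `prob` and the events `is_red` and `is_face` are assumptions introduced for illustration.

```python
from fractions import Fraction
from itertools import product

ranks = range(1, 14)  # 1 = ace, 11-13 = jack, queen, king
suits = ["hearts", "diamonds", "clubs", "spades"]
deck = list(product(ranks, suits))


def prob(event):
    """Probability of an event (a predicate on cards) under a uniform draw."""
    return Fraction(sum(1 for card in deck if event(card)), len(deck))


def is_red(card):
    return card[1] in ("hearts", "diamonds")


def is_face(card):
    return card[0] >= 11


# P(a v b) = P(a) + P(b) - P(a ^ b)
p_or = prob(lambda c: is_red(c) or is_face(c))
p_sum = prob(is_red) + prob(is_face) - prob(lambda c: is_red(c) and is_face(c))
print(p_or, p_sum)  # 8/13 8/13

# P(~a) + P(a) = 1
print(prob(is_red) + prob(lambda c: not is_red(c)))  # 1
```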

Conditional & unconditional Probabilities in example • Unconditional • P(Color2ndCard = red) = 50% • With no other knowledge • Conditional • P(Color2ndCard = red | Color1stCard = red) = 25/51 • Knowing the first card was red gives more information (a lower likelihood of the 2nd card being red) • The bar is read “given”

Computing Conditional Probabilities • P(A | B) = P(A ^ B) / P(B) • The probability that the 2nd card is red given the first card was red is (the probability that both cards are red) / (probability that 1st card is red) • P(CarWontStart | NoGas) = P(CarWontStart ^ NoGas) / P(NoGas) • P(NoGas | CarWontStart) = P(CarWontStart ^ NoGas) / P(CarWontStart)
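The card version of this formula can be verified by brute-force counting. This is a sketch: the deck is reduced to colors only, and all names are chosen for this example.

```python
from fractions import Fraction
from itertools import permutations

# 26 red and 26 black cards; only color matters here.
deck = ["red"] * 26 + ["black"] * 26

# All ordered draws of two distinct cards (identified by deck position).
pairs = list(permutations(range(len(deck)), 2))

both_red = sum(1 for i, j in pairs if deck[i] == "red" and deck[j] == "red")
first_red = sum(1 for i, j in pairs if deck[i] == "red")

p_both_red = Fraction(both_red, len(pairs))    # P(A ^ B)
p_first_red = Fraction(first_red, len(pairs))  # P(B)

# P(A | B) = P(A ^ B) / P(B)
p_second_given_first = p_both_red / p_first_red
print(p_second_given_first)  # 25/51
```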

Product Rule and Independence • P(A^B) = P(A|B) * P(B) • (just an algebraic manipulation) • Two events are independent if P(A|B) = P(A) • E.g. 2 consecutive coin flips are independent • If events are independent, we can multiply their probabilities • P(A^B) = P(A)*P(B) when A and B are independent
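The coin-flip claim can be checked by enumerating the four equally likely outcomes of two flips. The helper `prob` is an assumption for this sketch.

```python
from fractions import Fraction
from itertools import product

outcomes = list(product("HT", repeat=2))  # (first flip, second flip)


def prob(event):
    """Probability of an event over the four equally likely outcomes."""
    return Fraction(sum(1 for o in outcomes if event(o)), len(outcomes))


p_a = prob(lambda o: o[0] == "H")       # A: first flip is heads
p_b = prob(lambda o: o[1] == "H")       # B: second flip is heads
p_ab = prob(lambda o: o == ("H", "H"))  # A ^ B: both flips are heads

print(p_ab / p_b == p_a)  # True: P(A|B) = P(A)
print(p_ab == p_a * p_b)  # True: P(A^B) = P(A)*P(B)
```

By contrast, the two card draws in the earlier example are not independent, since 25/51 differs from 1/2.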

Back to Conditional Probabilities • P(CarWontStart | NoGas) • This predicts a symptom based on an underlying cause • These can be generated empirically • (Drain N gas tanks, see how many cars start) • P(NoGas | CarWontStart) • This is a good example of diagnosis. We have a symptom and want to predict the cause • We usually can’t measure these directly

Bayes’ Rule • P(A^B) = P(A|B) * P(B) • = P(B|A) * P(A) • So • P(A|B) = P(B|A) * P(A) / P(B) • This allows us to compute diagnostic probabilities from causal probabilities and prior probabilities!
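Bayes' rule is a one-line computation. The sketch below applies it to the card example from the earlier slides, computing the probability that the first card was red given that the second card is red. The function name `bayes` and its argument names are assumptions for this example.

```python
from fractions import Fraction


def bayes(p_b_given_a, p_a, p_b):
    """Return P(A|B) = P(B|A) * P(A) / P(B)."""
    if p_b == 0:
        raise ValueError("P(B) must be nonzero")
    return p_b_given_a * p_a / p_b


# A: first card is red, B: second card is red.
# P(B|A) = 25/51, P(A) = 1/2, P(B) = 1/2 (from the card slides).
p_first_given_second = bayes(Fraction(25, 51), Fraction(1, 2), Fraction(1, 2))
print(p_first_given_second)  # 25/51
```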

Bayes’ rule for diagnosis • P(measles) = 0.1 • P(chickenpox) = 0.4 • P(allergy) = 0.6 • P(spots | measles) = 1.0 • P(spots | chickenpox) = 0.5 • P(spots | allergy) = 0.2 • (assume the diseases are mutually exclusive) • What is P(measles | spots)?

P(spots) • P(spots) was not given. • We can estimate it with the following (unlikely) assumptions • The three listed diseases are mutually exclusive; no one will have two or more • There are no other causes or co-factors for spots, i.e. P(spots | none-of-the-above) = 0 • Then we can say that: • P(spots) = P(spots ^ measles) + P(spots ^ chickenpox) + P(spots ^ allergy) = 0.1 + 0.2 + 0.12 = 0.42
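With P(spots) in hand, the diagnosis question can be answered numerically. The sketch below reproduces the slide's arithmetic under its stated assumptions; the dictionary names are chosen for this example. Note that the priors as given sum to 1.1, which is not possible if no one can have two or more of the conditions, so the result is best read as an illustration of the calculation.

```python
# Numbers taken from the slide.
priors = {"measles": 0.1, "chickenpox": 0.4, "allergy": 0.6}
likelihoods = {"measles": 1.0, "chickenpox": 0.5, "allergy": 0.2}  # P(spots | d)

# P(spots) = sum over diseases of P(spots | d) * P(d)
p_spots = sum(likelihoods[d] * priors[d] for d in priors)

# Bayes' rule: P(d | spots) = P(spots | d) * P(d) / P(spots)
posteriors = {d: likelihoods[d] * priors[d] / p_spots for d in priors}

print(round(p_spots, 2))                # 0.42
print(round(posteriors["measles"], 3))  # 0.238
```

Under these assumptions P(measles | spots) = 0.1 / 0.42, or about 0.24.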

Combining Evidence • Multiple sources of evidence leading to the same conclusion: ache → flu ← fever • Chain of evidence leading to a conclusion: thermometer reading → fever → flu