Ch10 Machine Learning: Symbol-Based

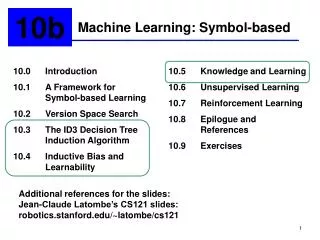

Ch10 Machine Learning: Symbol-Based. Dr. Bernard Chen Ph.D. University of Central Arkansas Spring 2011. Machine Learning Outline. The book presents four chapters on machine learning, reflecting four approaches to the problem: Symbol-Based, Connectionist, Genetic/Evolutionary, Stochastic.


Presentation Transcript

Ch10 Machine Learning: Symbol-Based Dr. Bernard Chen Ph.D. University of Central Arkansas Spring 2011

Machine Learning Outline • The book presents four chapters on machine learning, reflecting four approaches to the problem: • Symbol-Based • Connectionist • Genetic/Evolutionary • Stochastic

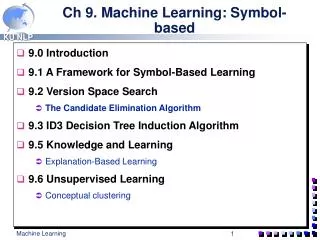

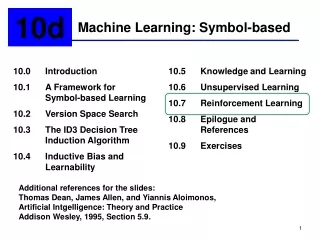

Ch.10 Outline • A framework for Symbol-Based Learning • ID3 Decision Tree • Unsupervised Learning

The Framework Example • Data • The representation: • Size(small)^color(red)^shape(round) • Size(large)^color(red)^shape(round)

The Framework Example • A set of operations: starting from • Size(small)^color(red)^shape(round) • replacing a single constant with a variable produces the generalizations: • Size(X)^color(red)^shape(round) • Size(small)^color(X)^shape(round) • Size(small)^color(red)^shape(X)
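The generalization operation on this slide can be sketched in a few lines of Python. This is only an illustration: the dictionary representation and the names `generalize_one` and `show` are assumptions made for the example, not part of the original slides.

```python
def generalize_one(concept, var="X"):
    """Return every concept obtained by replacing exactly one constant with a variable."""
    results = []
    for prop in concept:
        general = dict(concept)   # copy, so the original concept is left unchanged
        general[prop] = var       # replace this one constant with the variable
        results.append(general)
    return results


def show(concept):
    """Render a concept in the slide's notation, e.g. Size(small)^color(red)^shape(round)."""
    return "^".join(f"{prop}({value})" for prop, value in concept.items())


ball = {"Size": "small", "color": "red", "shape": "round"}
generalizations = [show(g) for g in generalize_one(ball)]
for line in generalizations:
    print(line)
```

Each generalization covers strictly more instances than the original description, which is why repeated application of such operations spans the concept space that the learner has to search.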

The Framework Example • The concept space • The learner must search this space to find the desired concept. • The complexity of this concept space is a primary measure of the difficulty of a learning problem.

The Framework Example • Heuristic search: given the first positive instance • Size(small)^color(red)^shape(round) • the learner makes that example a candidate “ball” concept; this concept correctly classifies the only positive instance seen so far • If the algorithm is then given a second positive instance • Size(large)^color(red)^shape(round) • the learner may generalize the candidate “ball” concept to • Size(Y)^color(red)^shape(round)
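A minimal sketch of this generalization step, assuming concepts and instances are dictionaries and that any value starting with an uppercase letter is a variable. The function names and the pool of variable names are hypothetical and chosen only for illustration.

```python
def is_var(value):
    # Assumed convention for this sketch: variables start with an uppercase letter.
    return value[0].isupper()


def covers(concept, instance):
    """A concept covers an instance if every constant in the concept matches it."""
    return all(is_var(v) or v == instance[p] for p, v in concept.items())


def generalize_to_cover(concept, instance, var_names=("Y", "Z", "W")):
    """Replace each constant that conflicts with the new positive instance by a variable."""
    names = iter(var_names)  # enough fresh names for the three properties used here
    generalized = {}
    for prop, value in concept.items():
        if is_var(value) or value == instance[prop]:
            generalized[prop] = value        # already compatible, keep as is
        else:
            generalized[prop] = next(names)  # conflict: generalize this property
    return generalized


candidate = {"Size": "small", "color": "red", "shape": "round"}
second_positive = {"Size": "large", "color": "red", "shape": "round"}

generalized = generalize_to_cover(candidate, second_positive)
print(generalized)
```

Only the property on which the two positive instances disagree is generalized, so the new candidate is the most specific description that still covers both examples.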

Learning process • The training data is a series of positive and negative examples of the concept: examples of blocks world structures that fit the category, along with near misses. • The latter are instances that almost belong to the category but fail on one property or relation

Learning process • This approach was proposed by Patrick Winston (1975) • The program performs a hill-climbing search on the concept space, guided by the training data • Because the program does not backtrack, its performance is highly sensitive to the order of the training examples • A bad order can lead the program to dead ends in the search space

Ch.10 Outline • A framework for Symbol-Based Learning • ID3 Decision Tree • Unsupervised Learning

ID3 Decision Tree • ID3, like candidate elimination, induces concepts from examples • It is particularly interesting for • Its representation of learned knowledge • Its approach to the management of complexity • Its heuristic for selecting candidate concepts • Its potential for handling noisy data

ID3 Decision Tree • The previous table can be represented as the following decision tree:

ID3 Decision Tree • In a decision tree, each internal node represents a test on some property • Each possible value of that property corresponds to a branch of the tree • Leaf nodes represent classifications, such as low or moderate risk

ID3 Decision Tree • A simplified decision tree for credit risk management

ID3 Decision Tree • ID3 constructs decision trees in a top-down fashion. • ID3 selects a property to test at the current node of the tree and uses this test to partition the set of examples • The algorithm recursively constructs a sub-tree for each partition • This continues until all members of the partition are in the same class
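The top-down recursion can be sketched as follows. This is a simplified illustration, not ID3 itself: the property-selection heuristic is passed in as the `choose` argument so that only the partitioning and recursion are shown, and the four training examples are hypothetical toy data, not the credit table from the slides.

```python
from collections import Counter


def build_tree(examples, properties, choose):
    """examples: list of (features_dict, label) pairs. Returns a nested dict or a class label."""
    labels = [label for _, label in examples]
    if len(set(labels)) == 1:
        return labels[0]                              # partition is pure: make a leaf
    if not properties:
        return Counter(labels).most_common(1)[0][0]   # nothing left to test: majority class
    prop = choose(examples, properties)               # select the property to test here
    remaining = [p for p in properties if p != prop]
    partitions = {}
    for features, label in examples:                  # partition the examples by value
        partitions.setdefault(features[prop], []).append((features, label))
    return {prop: {value: build_tree(subset, remaining, choose)
                   for value, subset in partitions.items()}}


# Hypothetical toy data, for illustration only.
toy_examples = [
    ({"income": "low", "debt": "high"}, "high"),
    ({"income": "low", "debt": "low"}, "high"),
    ({"income": "high", "debt": "high"}, "moderate"),
    ({"income": "high", "debt": "low"}, "low"),
]

# Placeholder heuristic: always test the first remaining property.
tree = build_tree(toy_examples, ["income", "debt"], lambda ex, props: props[0])
print(tree)
```

In ID3 the `choose` function would be the information-gain heuristic; keeping it as a parameter makes it clear that the recursion itself does not depend on how the property is picked.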

ID3 Decision Tree • For example, ID3 selects income as the root property for the first step

ID3 Decision Tree • How to select the first node (and the following nodes)? • ID3 measures the information gained by making each property the root of the current subtree • It picks the property that provides the greatest information gain

ID3 Decision Tree • If we assume that all the examples in the table occur with equal probability, then: • P(risk is high)=6/14 • P(risk is moderate)=3/14 • P(risk is low)=5/14

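ID3 Decision Tree • Based on the information measure $I[M] = \sum_{i} -p(m_i)\log_2 p(m_i)$, the information content of the table is: • $I[6,3,5] = -\frac{6}{14}\log_2\frac{6}{14} - \frac{3}{14}\log_2\frac{3}{14} - \frac{5}{14}\log_2\frac{5}{14} = 1.531$ bits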

ID3 Decision Tree • The information gain from income is: • Gain(income) = I[6,3,5] - E[income] = 1.531 - 0.564 = 0.967 • Similarly, • Gain(credit history) = 0.266 • Gain(debt) = 0.063 • Gain(collateral) = 0.206

ID3 Decision Tree • Since income provides the greatest information gain, ID3 will select it as the root of the tree
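The arithmetic behind these figures can be checked with a short sketch. The class counts [6, 3, 5] come from the slides. The per-income breakdown in `income_partitions` is an assumption: the table itself is not reproduced in this transcript, so the counts below were chosen only to be consistent with the class totals and with E[income] = 0.564. The function names are likewise illustrative.

```python
from math import log2


def info(counts):
    """Information (entropy) in bits of a class distribution given as raw counts."""
    total = sum(counts)
    return -sum(c / total * log2(c / total) for c in counts if c > 0)


def expected_info(partitions):
    """Weighted average of info() over the partitions induced by one property."""
    total = sum(sum(p) for p in partitions)
    return sum(sum(p) / total * info(p) for p in partitions)


table_info = info([6, 3, 5])  # high, moderate, low risk (from the slides)

# ASSUMPTION: [high, moderate, low] counts for each of three income brackets.
# Not taken from the slides; chosen to agree with E[income] = 0.564.
income_partitions = [[4, 0, 0], [2, 2, 0], [0, 1, 5]]

e_income = expected_info(income_partitions)
gain_income = table_info - e_income

print(round(table_info, 3), round(e_income, 3), round(gain_income, 3))
```

Computed at full precision the gain comes out marginally below the slide's 0.967, because the slide subtracts two values that were already rounded to three decimals.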

Attribute Selection Measure: Information Gain (ID3/C4.5) • Select the attribute with the highest information gain • Let $p_i$ be the probability that an arbitrary tuple in $D$ belongs to class $C_i$, estimated by $|C_{i,D}|/|D|$ • Expected information (entropy) needed to classify a tuple in $D$: $Info(D) = -\sum_{i=1}^{m} p_i \log_2(p_i)$

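Attribute Selection Measure: Information Gain (ID3/C4.5) • Information needed (after using $A$ to split $D$ into $v$ partitions) to classify $D$: $Info_A(D) = \sum_{j=1}^{v} \frac{|D_j|}{|D|} \times Info(D_j)$ • Information gained by branching on attribute $A$: $Gain(A) = Info(D) - Info_A(D)$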

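Decision Tree Example • Info(Tenured) = I(3,3) = -3/6 log2(3/6) - 3/6 log2(3/6) = 1 • Base-2 logarithms can be computed by change of base, e.g. log2(12) = log(12)/log(2) = 1.07918/0.30103 ≈ 3.584958 • How to calculate log2: http://www.ehow.com/how_5144933_calculate-log.html • Convenient tool: http://web2.0calc.com/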

Decision Tree Example • Info_RANK(Tenured) = 3/6 I(1,2) + 2/6 I(1,1) + 1/6 I(1,0) = 3/6 (0.918) + 2/6 (1) + 1/6 (0) = 0.79 • 3/6 I(1,2) means “Assistant Prof” has 3 out of 6 samples, with 1 yes and 2 no’s. • 2/6 I(1,1) means “Associate Prof” has 2 out of 6 samples, with 1 yes and 1 no. • 1/6 I(1,0) means “Professor” has 1 out of 6 samples, with 1 yes and 0 no’s.

Decision Tree Example • Info_YEARS(Tenured) = 1/6 I(1,0) + 2/6 I(0,2) + 1/6 I(0,1) + 2/6 I(2,0) = 0 • 1/6 I(1,0) means “years=2” has 1 out of 6 samples, with 1 yes and 0 no’s. • 2/6 I(0,2) means “years=3” has 2 out of 6 samples, with 0 yes’s and 2 no’s. • 1/6 I(0,1) means “years=6” has 1 out of 6 samples, with 0 yes’s and 1 no. • 2/6 I(2,0) means “years=7” has 2 out of 6 samples, with 2 yes’s and 0 no’s.
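The tenure example can be reproduced from the (yes, no) counts quoted on the slides and then used to pick the attribute with the highest gain. The helper names `I` and `split_info` and the dictionary layout are assumptions made for this sketch.

```python
from math import log2


def I(*counts):
    """Entropy in bits of a class distribution given as counts, e.g. I(1, 2)."""
    total = sum(counts)
    return -sum(c / total * log2(c / total) for c in counts if c > 0)


def split_info(partitions):
    """Expected information after a split; partitions is a list of (yes, no) counts."""
    total = sum(yes + no for yes, no in partitions)
    return sum((yes + no) / total * I(yes, no) for yes, no in partitions)


info_tenured = I(3, 3)

# (yes, no) counts per attribute value, as listed on the slides.
splits = {
    "rank": [(1, 2), (1, 1), (1, 0)],            # Assistant Prof, Associate Prof, Professor
    "years": [(1, 0), (0, 2), (0, 1), (2, 0)],   # years = 2, 3, 6, 7
}

gains = {attr: info_tenured - split_info(parts) for attr, parts in splits.items()}
best = max(gains, key=gains.get)

print({attr: round(g, 2) for attr, g in gains.items()}, best)
```

Every value of years yields a pure partition, so Info_YEARS is 0 and the gain equals the full 1 bit of Info(Tenured), which is why years is selected over rank here.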

Ch.10 Outline • A framework for Symbol-Based Learning • ID3 Decision Tree • Unsupervised Learning

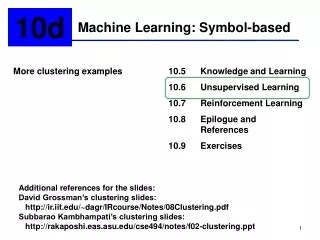

Unsupervised Learning • The learning algorithms discussed so far implement forms of supervised learning • They assume the existence of a teacher, some fitness measure, or other external method of classifying training instances • Unsupervised learning eliminates the teacher and requires that learners form and evaluate concepts on their own

Unsupervised Learning • Science is perhaps the best example of unsupervised learning in humans • Scientists do not have the benefit of a teacher. • Instead, they propose hypotheses to explain observations.

Unsupervised Learning • The clustering problem starts with (1) a collection of unclassified objects and (2) a means for measuring the similarity of objects • The goal is to organize the objects into classes that meet some standard of quality, such as maximizing the similarity of objects in the same class

Unsupervised Learning • Numeric taxonomy is one of the oldest approaches to the clustering problem • A reasonable similarity metric treats each object as a point in n-dimensional space • The similarity of two objects is measured by the Euclidean distance between them in this space: the smaller the distance, the more similar the objects

Unsupervised Learning • Using this similarity metric, a common clustering algorithm builds clusters in a bottom-up fashion, also known as agglomerative clustering: • Examine all pairs of objects, select the pair with the highest degree of similarity, and make that pair a cluster • Define the features of the cluster as some function (such as the average) of the features of the component members, then replace the component objects with this cluster definition • Repeat this process on the collection of objects until all objects have been reduced to a single cluster
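The three steps above can be sketched as follows, assuming numeric feature vectors and Euclidean distance as the (inverse) similarity measure. As a simplification, a merged cluster is described by the plain average of the two descriptions being merged rather than by an average over all of its members. The sample points are hypothetical.

```python
from itertools import combinations
from math import dist


def agglomerate(points):
    """Bottom-up clustering. Returns a nested tuple whose leaves are point indices."""
    # Each cluster is a pair: (feature vector describing it, tree of member indices).
    clusters = [(tuple(p), i) for i, p in enumerate(points)]
    while len(clusters) > 1:
        # Step 1: pick the most similar pair, i.e. the pair at the smallest distance.
        a, b = min(combinations(clusters, 2),
                   key=lambda pair: dist(pair[0][0], pair[1][0]))
        # Step 2: describe the new cluster by the average of the two descriptions
        # and replace the two components with it.
        merged = tuple((x + y) / 2 for x, y in zip(a[0], b[0]))
        clusters = [c for c in clusters if c is not a and c is not b]
        clusters.append((merged, (a[1], b[1])))
        # Step 3: repeat until a single cluster remains.
    return clusters[0][1]


# Hypothetical 2-D points, for illustration only.
points = [(0, 0), (0, 1), (5, 5), (5, 6), (10, 0)]
tree = agglomerate(points)
print(tree)
```

The nested tuple records the order of the merges: every pair of parentheses corresponds to one internal node of the resulting tree, and the integers are the original objects.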

Unsupervised Learning • The result of this algorithm is a binary tree whose leaf nodes are instances and whose internal nodes are clusters of increasing size • We may also extend this algorithm to objects represented as sets of symbolic features.

Unsupervised Learning • Object1={small, red, rubber, ball} • Object2={small, blue, rubber, ball} • Object3={large, black, wooden, ball} • A metric based on the proportion of shared features would compute the similarity values: • Similarity(object1, object2) = 3/4 • Similarity(object1, object3) = 1/4
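A minimal sketch of such a metric, assuming every object is a set with the same number of features and that similarity is the fraction of features two objects share. The function name is chosen for illustration.

```python
def similarity(a, b):
    """Fraction of features shared by two objects with the same number of features."""
    return len(a & b) / len(a)


object1 = {"small", "red", "rubber", "ball"}
object2 = {"small", "blue", "rubber", "ball"}
object3 = {"large", "black", "wooden", "ball"}

print(similarity(object1, object2))  # shares small, rubber, ball
print(similarity(object1, object3))  # shares only ball
```

With this measure in place of Euclidean distance, the same bottom-up procedure applies: the pair with the highest similarity is merged first.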

Partitioning Algorithms: Basic Concept • Given a k, find a partition of k clusters that optimizes the chosen partitioning criterion • Global optimal: exhaustively enumerate all partitions • Heuristic methods: k-means and k-medoids algorithms • k-means (MacQueen’67): Each cluster is represented by the center of the cluster • k-medoids or PAM (Partition around medoids) (Kaufman & Rousseeuw’87): Each cluster is represented by one of the objects in the cluster

The K-Means Clustering Method • Given k, the k-means algorithm is implemented in four steps: • Step 1: Partition the objects into k nonempty subsets • Step 2: Compute seed points as the centroids of the clusters of the current partition (the centroid is the center, i.e., mean point, of the cluster) • Step 3: Assign each object to the cluster with the nearest seed point • Step 4: Go back to Step 2; stop when no assignment changes
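The four steps can be sketched as follows. Several details are assumptions made for this example: the initial partition is a simple round-robin split, distance is Euclidean, a cluster that becomes empty keeps its previous seed, and the sample points are hypothetical. The sketch also assumes there are at least k points.

```python
from math import dist


def centroid(members):
    """Mean point of a non-empty list of equal-length tuples."""
    return tuple(sum(coords) / len(members) for coords in zip(*members))


def k_means(points, k, max_iter=100):
    # Step 1: partition the objects into k nonempty subsets (round-robin, an assumption).
    assignment = [i % k for i in range(len(points))]
    seeds = [None] * k
    for _ in range(max_iter):
        # Step 2: compute the seed points as centroids of the current clusters.
        for j in range(k):
            members = [p for p, a in zip(points, assignment) if a == j]
            if members:  # an emptied cluster keeps its old seed
                seeds[j] = centroid(members)
        # Step 3: assign each object to the cluster with the nearest seed point.
        new_assignment = [min(range(k), key=lambda j: dist(p, seeds[j]))
                          for p in points]
        # Step 4: stop when no assignment changes, otherwise go back to Step 2.
        if new_assignment == assignment:
            break
        assignment = new_assignment
    return assignment, seeds


# Hypothetical 2-D points forming two visually separate groups.
points = [(1, 1), (1, 2), (2, 1), (8, 8), (9, 8), (8, 9)]
assignment, seeds = k_means(points, k=2)
print(assignment, seeds)
```

The result depends on the initial partition; a different starting split can converge to a different local optimum, which is why k-means is listed among the heuristic methods rather than the globally optimal ones.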